Poldem – Protest Dataset 30 European Countries


Recommended publications

Political Ideas and Movements That Created the Modern World

Written with the A2 component of the GCE Government and Politics A level in mind, this book is a comprehensive introduction to the political ideas and movements that created the modern world. Underpinned by the work of major thinkers such as Hobbes, Locke, Marx, Mill, Weber and others, the first half of the book looks at core political concepts including the state and sovereignty, the nation, democracy, representation and legitimacy, freedom, equality and rights, obligation and citizenship. The role of ideology in modern politics and society is also discussed. The second half of the book addresses established ideologies such as Conservatism, Liberalism, Socialism, Marxism and Nationalism, before moving on to more recent movements such as Environmentalism and Ecologism, Fascism, and Feminism. The subject is covered in a clear, accessible style, including a number of student-friendly features, such as chapter summaries, key points to consider, definitions and tips for further sources of information. There is a definite need for a text of this kind. It will be invaluable for students of Government and Politics on introductory courses, whether they be A level candidates or undergraduates. Kevin Harrison is a lecturer in politics and history at Manchester College of Arts and Technology. He is also an associate lecturer in social sciences with the Open University. He has written articles on politics and history and is joint author, with Tony Boyd, of The British Constitution: Evolution or Revolution? Tony Boyd was formerly head of General Studies at Xaverian VI Form College, Manchester, where he taught politics and history.

French Underground Raves of the Nineties. Aesthetic Politics of Affect and Autonomy Jean-Christophe Sevin

French underground raves of the nineties. Aesthetic politics of affect and autonomy. Jean-Christophe Sevin. To cite this version: Jean-Christophe Sevin. French underground raves of the nineties. Aesthetic politics of affect and autonomy. Political Aesthetics: Culture, Critique and the Everyday, Arundhati Virmani, pp.71-86, 2016, 978-0-415-72884-3. HAL Id: halshs-01954321, https://halshs.archives-ouvertes.fr/halshs-01954321. Submitted on 13 Dec 2018. HAL is a multi-disciplinary open access archive for the deposit and dissemination of scientific research documents, whether they are published or not. The documents may come from teaching and research institutions in France or abroad, or from public or private research centers. In Arundhati Virmani (ed.), Political Aesthetics: Culture, Critique and the Everyday, London, Routledge, 2016, p.71-86. The emergence of techno music – commonly used in France as electronic dance music – in the early 1990s is inseparable from rave parties as a form of spatiotemporal deployment. It signifies that the live diffusion via a sound system powerful enough to diffuse not only its volume but also its sound frequencies spectrum, including infrabass, is an integral part of the techno experience. In other words listening on domestic equipment is not a sufficient condition to experience this music.

Technics, Precarity and Exodus in Rave Culture

Dancecult: Journal of Electronic Dance Music Culture, vol 1 no 2. Technics, Precarity and Exodus in Rave Culture. Tobias C. van Veen, McGill University. Abstract: Without a doubt, the question of rave culture’s politics – or lack thereof – has polarized debate concerning the cultural, social and political value of rave culture not only within electronic dance music culture (EDMC) studies, but in disciplines that look to various manifestations of subculture and counterculture for political innovation. It is time for the groundwork of this debate to be rethought. Ask not what rave culture’s politics can do for you; nor even what you can do for it. Rather, ask what the unexamined account of politics has ever done for anyone; then question all that rave culture has interrogated – from its embodied and technological practices to its production of ecstatic and collective subjectivities – and begin to trace how it has complicated the very question of the political, the communal and the ethical. This complication begins with the dissolution of the boundaries of labour and leisure and the always-already co-optation of culture. To the negation of ethics, community and politics, this tracing calls for the hauntology of technics, precarity and exodus. And it ends with a list of impossible demands demonstrating the parallax gap of rave culture’s politics. Keywords: exodus, precarity, technics, multitude, workplay. He [Randy] predicted the [rave] parties will eventually disappear under the combined pressure of police, city and fire officials. “In the next year and a half it’s going to vanish”, he said. “Then, when they think it’s gone, it will come back, becoming more underground again”.

Bulgaria – the Difficult “Return to Europe”

European Democracy in Action. Bulgaria – The Difficult “Return to Europe”. Tamara Buschek. May 2007. Against the background of the EU accession of Bulgaria on 1st January 2007 and the first Bulgarian elections for the European Parliament on 20th May 2007, Tamara Buschek takes a closer look at Bulgaria’s uneven political and economic transition – at its difficult “return to Europe”. Buschek graduated from Graz University (Austria) in 2003 with a Masters in Law [magistra juris] after finishing her studies in European and international law. After gaining a grant from the Chamber of Commerce in 2000 to complete an internship at the Austrian Embassy in London, she carried out research for her dissertation in criminal law – “The Prevention of Sexual Child Abuse – Austria/Great Britain” – in 2001 at the London School of Economics. She studied European and administrative law in Paris from 2001 to 2002 as part of an Erasmus year. She is quadrilingual (German, Bulgarian, English and French). Table of Contents: Introduction; I – Background Information; 1.1 Hopes and Fears; 1.2 Ethnic Minorities; 1.3 Economic Facts; II – Political Situation – a difficult path towards stability; 2.1 The transition from 1989 till 2001; 2.1.1 The legislative elections of 1990 and the first; 2.3 The current governmental coalition, 2005-2007 and the presidential election in 2006; III – The first European Parliament elections, 20 May 2007; Conclusion; Annex; Annex 1: Key facts; Annex 2: Economic Profile.

How Transnational Party Alliances Influence National Parties' Policies

Zurich Open Repository and Archive, University of Zurich, Main Library, Strickhofstrasse 39, CH-8057 Zurich, www.zora.uzh.ch. Year: 2021. How Transnational party alliances influence national parties’ policies. Senninger, Roman; Bischof, Daniel; Ezrow, Lawrence. Abstract: Previous research reports that parties in established European democracies learn from and emulate the successful election strategies of foreign incumbents, i.e., successful parties are influential abroad. We theorize that—in addition to incumbency (or success)—exchange takes place through transnational party alliances in the European Union. Relying on party manifesto data and spatial econometric analyses, we show that belonging to the same European Parliament (EP) party group enhances learning and emulation processes between national political parties. Estimated short- and long-term effects are approximately two and three times greater when foreign incumbents are in the same EP party group compared to other foreign incumbents. Our results have implications for our understanding of how transnational party groups influence national parties’ policy positions. DOI: https://doi.org/10.1017/psrm.2020.55. Posted at the Zurich Open Repository and Archive, University of Zurich. ZORA URL: https://doi.org/10.5167/uzh-196868. Journal Article, Accepted Version. The following work is licensed under a Creative Commons: Attribution-NonCommercial-NoDerivatives 4.0 International (CC BY-NC-ND 4.0) License. Originally published at: Senninger, Roman; Bischof, Daniel; Ezrow, Lawrence (2021). How Transnational party alliances influence national parties’ policies. Political Science Research and Methods: Epub ahead of print.

Compass: The Direction for the Democratic Left

Compass: The Direction for the Democratic Left. Mapping the Centre Ground, by Peter Kellner. “This is a good time to think afresh about the way we do politics. The decline of the old ideologies has made many of the old Left-Right arguments redundant. A bold project to design a positive version of the Centre could fill the void.” Compass publications are intended to create real debate and discussion around the key issues facing the democratic left - however the views expressed in this publication are not a statement of Compass policy. All three leaders of Britain’s main political parties agree on one thing: elections are won and lost on the centre ground. Tony Blair insists that Labour has won the last three elections as a centre party, and would return to the wilderness were it to revert to left-wing policies. David Cameron says with equal fervour that the Conservatives must embrace the Centre if they are to return to power. Sir Menzies Campbell says that the Liberal Democrats occupy the centre ground out of principle, not electoral calculation, and he has nothing to fear from his rivals invading his space. What are we to make of all this? It is sometimes said that when any proposition commands such broad agreement, it is probably wrong. Does the shared obsession of all three party leaders count as a bad, consensual error – or are they right to compete for the same location on the left-right axis? This article is an attempt to answer that question, via an excursion down memory lane, a search for clear definitions and some speculation about the future of political debate.

Codebook InDivEU – Party Preferences

Codebook InDivEU – party preferences. European University Institute, Robert Schuman Centre for Advanced Studies, December 2020. Introduction: The “InDivEU – party preferences” dataset provides data on the positions of more than 400 parties from 28 countries (all 27 member states of the European Union in 2020, plus the United Kingdom) on questions of (differentiated) European integration. The dataset comprises a selection of party positions taken from two existing datasets: (1) The EU Profiler/euandi Trend File, which contains party positions for three rounds of European Parliament elections (2009, 2014, and 2019). Party positions were determined in an iterative process of party self-placement and expert judgement. For more information: https://cadmus.eui.eu/handle/1814/65944 (2) The Chapel Hill Expert Survey, which contains party positions for the national elections most closely corresponding to the European Parliament elections of 2009, 2014, and 2019. Party positions were determined by expert judgement. For more information: https://www.chesdata.eu/ Three additional party positions, related to DI-specific questions, are included in the dataset. These positions were determined by experts involved in the 2019 edition of euandi after the elections took place. The inclusion of party positions in the “InDivEU – party preferences” dataset is limited to the following issues: general questions about the EU; questions about EU policy; questions about differentiated integration; questions about party ideology. How to Cite: When using the ‘InDivEU – Party Preferences’ dataset, please cite all of the following three articles: 1. Reiljan, Andres, Frederico Ferreira da Silva, Lorenzo Cicchi, Diego Garzia, Alexander H.

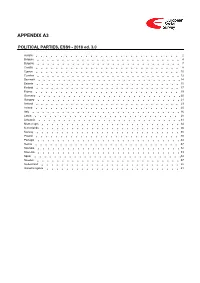

ESS9 Appendix A3 Political Parties Ed

Appendix A3: Political Parties, ESS9 - 2018, ed. 3.0. Countries covered: Austria, Belgium, Bulgaria, Croatia, Cyprus, Czechia, Denmark, Estonia, Finland, France, Germany, Hungary, Iceland, Ireland, Italy, Latvia, Lithuania, Montenegro, Netherlands, Norway, Poland, Portugal, Serbia, Slovakia, Slovenia, Spain, Sweden, Switzerland, United Kingdom. Version notes, ESS9 Appendix A3 Political Parties: ESS9 edition 3.0 (published 10.12.20): Changes from previous edition: Additional countries: Denmark, Iceland. ESS9 edition 2.0 (published 15.06.20): Changes from previous edition: Additional countries: Croatia, Latvia, Lithuania, Montenegro, Portugal, Slovakia, Spain, Sweden. Austria. 1. Political parties. Language used in data file: German. Year of last election: 2017. Official party names, English names/translation, and size in last election: 1. Sozialdemokratische Partei Österreichs (SPÖ) - Social Democratic Party of Austria - 26.9 %. 2. Österreichische Volkspartei (ÖVP) - Austrian People's Party - 31.5 %. 3. Freiheitliche Partei Österreichs (FPÖ) - Freedom Party of Austria - 26.0 %. 4. Liste Peter Pilz (PILZ) - PILZ - 4.4 %. 5. Die Grünen – Die Grüne Alternative (Grüne) - The Greens – The Green Alternative - 3.8 %. 6. Kommunistische Partei Österreichs (KPÖ) - Communist Party of Austria - 0.8 %. 7. NEOS – Das Neue Österreich und Liberales Forum (NEOS) - NEOS – The New Austria and Liberal Forum - 5.3 %. 8. G!LT - Verein zur Förderung der Offenen Demokratie (GILT) - My Vote Counts! - 1.0 %. Description of political parties listed above: 1. The Social Democratic Party (Sozialdemokratische Partei Österreichs, or SPÖ) is a social democratic/center-left political party that was founded in 1888 as the Social Democratic Worker's Party (Sozialdemokratische Arbeiterpartei, or SDAP), when Victor Adler managed to unite the various opposing factions.

Travel and Drug Use in Europe: a Short Review 1

ISSN 1725-5767. Thematic papers. Travel and drug use in Europe: a short review. emcdda.europa.eu. Contents: 1. Introduction; 2. Travelling and using drugs (Young people; Problem drug users); 3. Examples of drug-related destinations; 4. Prevalence of drug use among young travellers (Young holidaymakers in Europe; Young backpackers; Young clubbers and partygoers); 5. Risks associated with drug use while travelling (Health risks; Risk related to personal safety; Legal risks; Risks related to injecting drug use; Risks to local communities); 6. Potential for prevention interventions; 7. Conclusions; Acknowledgements; References. 1. Introduction. Recent decades have seen a growth in travel and tourism abroad because of cheap air fares and holiday packages. This has been accompanied by a relaxation of border controls, especially within parts of Europe participating in the Schengen Agreement. As some people may be more inclined to use illicit substances during holiday periods and some may even choose to travel to destinations that are associated with drug use — a phenomenon sometimes referred to as ‘drug tourism’ — this means that from a European drug policy perspective the issue of drug use and travel has become more important. This Thematic paper examines travellers and drug use, with a focus on Europeans travelling within Europe, although some other relevant destinations are also included.

Equality, Freedom, and Democracy

OUP CORRECTED AUTOPAGE PROOFS – FINAL, 16/09/20, SPi. Equality, Freedom, and Democracy: Europe After the Great Recession. By Leonardo Morlino, with Daniela Piana, Mario Quaranta, Francesco Raniolo, Cecilia Emma Sottilotta, and Claudius Wagemann. Great Clarendon Street, Oxford, OX2 6DP, United Kingdom. Oxford University Press is a department of the University of Oxford. It furthers the University’s objective of excellence in research, scholarship, and education by publishing worldwide. Oxford is a registered trade mark of Oxford University Press in the UK and in certain other countries. © Leonardo Morlino 2020. Some rights reserved. Chapter 2 © Leonardo Morlino, Claudius Wagemann, and Francesco Raniolo 2020. Chapter 3 © Leonardo Morlino and Daniela Piana 2020. Chapter 4 © Leonardo Morlino, Mario Quaranta, and Francesco Raniolo 2020. Chapter 5 © Leonardo Morlino and Francesco Raniolo 2020. Chapter 6 © Leonardo Morlino and Daniela Piana 2020. Chapter 7 © Leonardo Morlino, Daniela Piana, and Cecilia Sottilotta 2020. The moral rights of the authors have been asserted. First Edition published in 2020. Impression: 1. Some rights reserved. No part of this publication may be reproduced, stored in a retrieval system, or transmitted, in any form or by any means, for commercial purposes, without the prior permission in writing of Oxford University Press, or as expressly permitted by law, by licence or under terms agreed with the appropriate reprographics rights organization. This is an open access publication, available online and distributed under the terms of a Creative Commons Attribution – Non Commercial – No Derivatives 4.0 International licence (CC BY-NC-ND 4.0), a copy of which is available at http://creativecommons.org/licenses/by-nc-nd/4.0/.

Terror Fried Brains

wake up! wake up! it’s yer sunny side up. Summer Solstice 2003. Free/Donation. Issue 411/412.

TERROR FRIED BRAINS. “Every ten years or so, the United States needs to pick up some small crappy little country and throw it against the wall, just to show the world we mean business.” - Michael Ledden, holder of the ‘Freedom Chair’ at the American Enterprise Institute. Last week a poll revealed that a third of Americans believe that weapons of mass destruction have been found in Iraq, while 22 per cent reckon Iraq actually used them! Even before the war, half of those polled said the Iraq regime was responsible for September 11th. But no one’s found any weapons of mass destruction let alone used them (well apart from the Americans

Poached Rights. Switch on the news and you hear about more terrorists being arrested. But who are they? In December, five Turks and a Briton were charged under the Terrorism Act 2000 for supporting the Turkish Revolutionary People’s Liberation Party-Front, or DHKP-C. They were nicked because DHKP-C has been banned by the UK government and the six arrestees were supposedly “facilitating the retention or control of terrorist property.” Guns? Bombs? Er, no, the people arrested were simply in possession of magazines, videos and posters that supported the

CRAP ARREST OF THE WEEK. For reading the paper! An American anti-war prisoner serving time for protesting at a military base was put into solitary confinement for eight days after he got sent and distributed anti-war articles from such radical publications as the New York Times, Readers’ Digest, Newsweek, and The Guardian! Better not send him this week’s SchNEWS then. www.naplesnews.com/03/06/florida/d939395a.htm

handcuffed at gunpoint and driven to Govan Police Station.

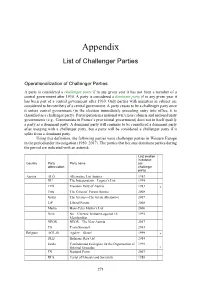

Challenger Party List

Appendix: List of Challenger Parties. Operationalization of Challenger Parties. A party is considered a challenger party if in any given year it has not been a member of a central government after 1930. A party is considered a dominant party if in any given year it has been part of a central government after 1930. Only parties with ministers in cabinet are considered to be members of a central government. A party ceases to be a challenger party once it enters central government (in the election immediately preceding entry into office, it is classified as a challenger party). Participation in national war/crisis cabinets and national unity governments (e.g., Communists in France’s provisional government) does not in itself qualify a party as a dominant party. A dominant party will continue to be considered a dominant party after merging with a challenger party, but a party will be considered a challenger party if it splits from a dominant party. Using this definition, the following parties were challenger parties in Western Europe in the period under investigation (1950–2017). The parties that became dominant parties during the period are indicated with an asterisk.

| Country | Party abbreviation | Party name | Last election in dataset (as challenger party) |
| --- | --- | --- | --- |
| Austria | ALÖ | Alternative List Austria | 1983 |
| | DU | The Independents—Lugner’s List | 1999 |
| | FPÖ | Freedom Party of Austria | 1983 * |
| | Fritz | The Citizens’ Forum Austria | 2008 |
| | Grüne | The Greens—The Green Alternative | 2017 |
| | LiF | Liberal Forum | 2008 |
| | Martin | Hans-Peter Martin’s List | 2006 |
| | Nein | No—Citizens’ Initiative against | |