A Practical Guide to Low-Power Design

Total Pages: 16

File Type: pdf, Size: 1020Kb

Recommended publications

BUK9609-55A N-Channel TrenchMOS Logic Level FET

Important notice Dear Customer, On 7 February 2017 the former NXP Standard Product business became a new company with the tradename Nexperia. Nexperia is an industry leading supplier of Discrete, Logic and PowerMOS semiconductors with its focus on the automotive, industrial, computing, consumer and wearable application markets. In data sheets and application notes which still contain NXP or Philips Semiconductors references, use the references to Nexperia, as shown below. Instead of http://www.nxp.com, http://www.philips.com/ or http://www.semiconductors.philips.com/, use http://www.nexperia.com Instead of [email protected] or [email protected], use [email protected] (email) Replace the copyright notice at the bottom of each page or elsewhere in the document, depending on the version, as shown below: - © NXP N.V. (year). All rights reserved or © Koninklijke Philips Electronics N.V. (year). All rights reserved Should be replaced with: - © Nexperia B.V. (year). All rights reserved. If you have any questions related to the data sheet, please contact our nearest sales office via e-mail or telephone (details via [email protected]). Thank you for your cooperation and understanding, Kind regards, Team Nexperia BUK9609-55A D2PAK N-channel TrenchMOS logic level FET Rev. 02, 3 February 2011 Product data sheet 1. Product profile 1.1 General description Logic level N-channel enhancement mode Field-Effect Transistor (FET) in a plastic package using TrenchMOS technology. This product has been designed and qualified to the appropriate AEC standard for use in automotive critical applications. 1.2 Features and benefits • AEC Q101 compliant • Suitable for logic level gate drive sources • Low conduction losses due to low on-state resistance • Suitable for thermally demanding environments due to 175 °C rating 1.3 Applications • 12 V and 24 V loads • Motors, lamps and solenoids • Automotive and general purpose power switching 1.4 Quick reference data Table 1. -

KE02 Sub-Family Data Sheet

NXP Semiconductors Document Number MKE02P64M40SF0 Data Sheet: Technical Data Rev. 6, 12/2019 MKE02P64M40SF0 KE02 Sub-Family Data Sheet Supports the following: MKE02Z16VLC4(R), MKE02Z32VLC4(R), MKE02Z64VLC4(R), MKE02Z16VLD4(R), MKE02Z32VLD4(R), MKE02Z64VLD4(R), MKE02Z32VLH4(R), MKE02Z64VLH4(R), MKE02Z32VQH4(R), MKE02Z64VQH4(R), MKE02Z16VFM4(R), MKE02Z32VFM4(R), and MKE02Z64VFM4(R) Key features • Operating characteristics – Voltage range: 2.7 to 5.5 V – Flash write voltage range: 2.7 to 5.5 V – Temperature range (ambient): -40 to 105°C • Performance – Up to 40 MHz Arm® Cortex-M0+ core and up to 20 MHz bus clock – Single cycle 32-bit x 32-bit multiplier – Single cycle I/O access port • Memories and memory interfaces – Up to 64 KB flash – Up to 256 B EEPROM – Up to 4 KB RAM • Clocks – Oscillator (OSC) - supports 32.768 kHz crystal or 4 MHz to 20 MHz crystal or ceramic resonator; choice of low power or high gain oscillators – Internal clock source (ICS) - internal FLL with internal or external reference, 31.25 kHz pre-trimmed internal reference for 32 MHz system clock (able to be trimmed for up to 40 MHz system clock) – Internal 1 kHz low-power oscillator (LPO) • System peripherals – Power management module (PMC) with three power modes: Run, Wait, Stop – Low-voltage detection (LVD) with reset or interrupt, selectable trip points – Watchdog with independent clock source (WDOG) – Programmable cyclic redundancy check module (CRC) – Serial wire debug interface (SWD) – Bit manipulation engine (BME) • Security and integrity modules – 64-bit unique identification (ID) number per chip • Human-machine interface – Up to 57 general-purpose input/output (GPIO) – Two up to 8-bit keyboard interrupt modules (KBI) – External interrupt (IRQ) • Analog modules – One up to 16-channel 12-bit SAR ADC, operation in Stop mode, optional hardware trigger (ADC) – Two analog comparators containing a 6-bit DAC and programmable reference input (ACMP) NXP reserves the right to change the production detail specifications as may be required to permit improvements in the design of its products. -

PMN27XPE 20 V, Single P-Channel Trench MOSFET

PMN27XPE 20 V, single P-channel Trench MOSFET 20 September 2012 Product data sheet 1. Product profile 1.1 General description P-channel enhancement mode Field-Effect Transistor (FET) in a small SOT457 (SC-74) Surface-Mounted Device (SMD) plastic package using Trench MOSFET technology. 1.2 Features and benefits • Fast switching • Trench MOSFET technology • 2 kV ESD protection 1.3 Applications • Relay driver • High-speed line driver • High-side loadswitch • Switching circuits 1.4 Quick reference data Table 1. -

i.MX RT1160 Crossover Processors Data Sheet for Consumer Products

NXP Semiconductors Document Number: IMXRT1160CEC Data Sheet: Technical Data Rev. 0, 04/2021 MIMXRT1166DVM6A MIMXRT1165DVM6A i.MX RT1160 Crossover Processors Data Sheet for Consumer Products Package Information: Plastic Package 289-pin MAPBGA, 14 x 14 mm, 0.8 mm pitch Ordering Information: See Table 1 on page 6

1 i.MX RT1160 introduction The i.MX RT1160 is a new high-end processor of i.MX RT family, which features NXP’s advanced implementation of a high performance Arm Cortex®-M7 core operating at speeds up to 600 MHz and a power efficient Cortex®-M4 core up to 240 MHz. The i.MX RT1160 processor has 1 MB on-chip RAM in total, including a 768 KB RAM which can be flexibly configured as TCM (512 KB RAM shared with M7 TCM and 256 KB RAM shared with M4 TCM) or general-purpose on-chip RAM. The i.MX RT1160 integrates advanced power management module with DCDC and LDO regulators that reduce complexity of

Contents: 1. i.MX RT1160 introduction; 1.1. Features; 1.2. Ordering information; 1.3. Package marking information; 2. Architectural overview; 2.1. Block diagram; 3. Modules list; 3.1. Special signal considerations; 3.2. Recommended connections for unused analog interfaces; 4. Electrical characteristics; 4.1. Chip-level conditions; 4.2. System power and clocks; 4.3. I/O parameters; 4.4. System modules; 4.5. External memory interface; 4.6. Display and graphics; 4.7. Audio; 4.8. Analog; 4.9. -

AN5079, QorIQ P1 Series to T1 Series Migration Guide

NXP Semiconductors Document Number: AN5079 Application Note Rev. 0, 07/2017 QorIQ P1 Series to T1 Series Migration Guide

Contents: 1 About this document; 2 Introduction; 3 Feature-set comparison; 4 PowerPC e500v2 vs e5500 core; 5 Ethernet controller; 6 POR sequence; 7 DDR memory controller; 8 Local bus - eLBC vs IFC; 9 USB; 10 eSDHC; 11 Related documentation; 12 Revision history

1 About this document This document provides a summary of the significant differences between QorIQ P1 series and T1 series devices. The QorIQ P1 series devices discussed in this document are P1010, P1020, and P1022. These are compared with QorIQ T1 series devices including T1024, T1014, T1023, T1013, T1040, T1020, T1042, and T1022. Use this document as a recommended resource for migrating products from P1 series to T1 series devices.

2 Introduction QorIQ P1 series devices combine single or dual e500v2 Power Architecture® core offering excellent combinations of protocol -

PSMN3R7-30YLC N-Channel 30 V 3.95 mΩ Logic Level MOSFET in LFPAK Using NextPower Technology

PSMN3R7-30YLC LFPAK N-channel 30 V 3.95 mΩ logic level MOSFET in LFPAK using NextPower technology Rev. 01, 2 May 2011 Product data sheet 1. Product profile 1.1 General description Logic level enhancement mode N-channel MOSFET in LFPAK package. This product is designed and qualified for use in a wide range of industrial, communications and domestic equipment. 1.2 Features and benefits • High reliability Power SO8 package, qualified to 175°C • Low parasitic inductance and resistance • Optimised for 4.5V Gate drive utilising NextPower Superjunction technology • Ultra low QG, QGD, and QOSS for high system efficiencies at low and high loads 1.3 Applications • DC-to-DC converters • Server power supplies • Load switching • Sync rectifier • Power OR-ing 1.4 Quick reference data Table 1. -

Terms of Use Nexperia.Com 2017

NEXPERIA TERMS OF USE NEXPERIA.COM 2017 nexperia.com

Acceptance of Terms of Use: This Web Site is owned by NEXPERIA B.V. having its registered office at Jonkerbosplein 52, 6534 AB Nijmegen. The Web Site is intended for the lawful use of NEXPERIA B.V. and/or its subsidiaries (‘NEXPERIA’) customers, employees and members of the general public who are over the age of 13. By accessing and using this Web Site you agree to be bound by the following Terms of Use and all terms and conditions contained and/or referenced herein or any additional terms and conditions set forth on this Web Site and all such terms shall be deemed accepted by you. If you do NOT agree to all these Terms of Use, you should NOT access or use this Web Site. If you do not agree to any additional specific terms which apply to particular Content (as defined below) or to particular transactions concluded through this Web Site, then you should NOT access or use the part of the Web Site which contains such Content or through which such transactions may be concluded and you should not use such Content or conclude such transactions.

[...] inconsistent with these Terms of Use, such specific terms will supersede these Terms of Use. NEXPERIA reserves the right to make changes or updates with respect to or in the Content of the Web Site or the format thereof at any time without notice. NEXPERIA reserves the right to terminate or restrict access to the Web Site for any reason whatsoever at its sole discretion. Although care has been taken to ensure the accuracy of the information on this Web Site, financial and product information included, NEXPERIA assumes no responsibility therefor. -

IEEE Standard for Design and Verification of Low-Power, Energy-Aware Electronic Systems

IEEE Standard for Design and Verification of Low-Power, Energy-Aware Electronic Systems. IEEE Computer Society. Sponsored by the Design Automation Standards Committee. IEEE, 3 Park Avenue, New York, NY 10016-5997, USA. IEEE Std 1801™-2015 (Revision of IEEE Std 1801-2013). Authorized licensed use limited to: Universidad Nacional Autonoma De Mexico (UNAM). Downloaded on January 16,2018 at 01:18:13 UTC from IEEE Xplore. Restrictions apply. Approved 5 December 2015, IEEE-SA Standards Board.

Grateful acknowledgment is made to the following for permission to use source material: Accellera Systems Initiative: Unified Power Format (UPF) Standard, Version 1.0. Cadence Design Systems, Inc.: Library Cell Modeling Guide Using CPF; Hierarchical Power Intent Modeling Guide Using CPF. Silicon Integration Initiative, Inc.: Si2 Common Power Format Specification, Version 2.1.

Abstract: A method is provided for specifying power intent for an electronic design, for use in verification of the structure and behavior of the design in the context of a given power-management architecture, and for driving implementation of that power-management architecture. The method supports incremental refinement of power-intent specifications required for IP-based design flows.

Keywords: bottom-up implementation, buffers, energy-aware design, IEEE 1801™, interface specification, IP reuse, isolation, level-shifting, power domains, power intent, power modeling, power states, successive refinement, supply states, repeaters, retention, Unified Power Format (UPF) • The Institute of Electrical and Electronics Engineers, Inc. -

An Effective and Efficient Methodology for SoC Power Management Through UPF

An effective and efficient methodology for SoC power management through UPF. By Renduchinthala Anusha. Under the supervision of Dr. Sujay Deb, Mr. Akhilesh Chandra Mishra, and Mr. Rangarajan Ramanujam. Indraprastha Institute of Information Technology Delhi, June 2016. ©Indraprastha Institute of Information Technology (IIITD), New Delhi 2016. Submitted in partial fulfilment of the requirements for the degree of Master of Technology in Electronics & Communication Engineering with specialization in VLSI & Embedded Systems to Indraprastha Institute of Information Technology Delhi, June 2016.

Abstract: With technology scaling and increasing chip complexity, chip power consumption has been rising and power architectures are becoming more complicated. Many power management techniques, such as power gating, multi-voltage, and multi-threshold design, are applied to reduce the power dissipation of devices. UPF is an IEEE 1801 standard format for describing the power architecture, also called power intent, including power network connectivity and power reduction methods. It enables verification of power intent at early phases of the design cycle. The UPF should be consistent with the design at all stages of the design cycle, and it should be updated according to the modifications made in the design. In the conventional UPF flow through the design cycle, a few practical challenges arise. Many bugs are not detected at earlier phases, which might lead to the wrong implementation of power intent. In addition, parallel development of power intent for complex designs, limitations of the UPF standard in describing a few power intent components effectively, and the time-consuming conventional UPF flow hinder efficient UPF development and management. -

BUK954R4-80E N-Channel TrenchMOS Logic Level FET

BUK954R4-80E N-channel TrenchMOS logic level FET 11 September 2012 Product data sheet 1. Product profile 1.1 General description Logic level N-channel MOSFET in a SOT78 package using TrenchMOS technology. This product has been designed and qualified to AEC Q101 standard for use in high performance automotive applications. 1.2 Features and benefits • AEC Q101 compliant • Repetitive avalanche rated • Suitable for thermally demanding environments due to 175 °C rating • True logic level gate with VGS(th) rating of greater than 0.5V at 175 °C 1.3 Applications • 12V, 24V and 48V Automotive systems • Motors, lamps and solenoid control • Start-Stop micro-hybrid applications • Transmission control • Ultra high performance power switching 1.4 Quick reference data Table 1. -

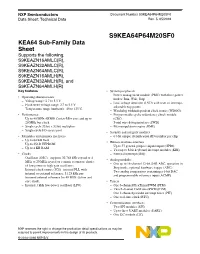

S9KEA64P64M20SF0, KEA64 Sub-Family Data Sheet

NXP Semiconductors Document Number S9KEA64P64M20SF0 Data Sheet: Technical Data Rev. 5, 05/2016 S9KEA64P64M20SF0 KEA64 Sub-Family Data Sheet Supports the following: S9KEAZN16AMLC(R), S9KEAZN32AMLC(R), S9KEAZN64AMLC(R), S9KEAZN16AMLH(R), S9KEAZN32AMLH(R), and S9KEAZN64AMLH(R) Key features • Operating characteristics – Voltage range: 2.7 to 5.5 V – Flash write voltage range: 2.7 to 5.5 V – Temperature range (ambient): -40 to 125°C • Performance – Up to 40 MHz ARM® Cortex-M0+ core and up to 20 MHz bus clock – Single cycle 32-bit x 32-bit multiplier – Single cycle I/O access port • Memories and memory interfaces – Up to 64 KB flash – Up to 256 B EEPROM – Up to 4 KB RAM • Clocks – Oscillator (OSC) - supports 32.768 kHz crystal or 4 MHz to 20 MHz crystal or ceramic resonator; choice of low power or high gain oscillators – Internal clock source (ICS) - internal FLL with internal or external reference, 31.25 kHz pre-trimmed internal reference for 40 MHz system and core clock. • System peripherals – Power management module (PMC) with three power modes: Run, Wait, Stop – Low-voltage detection (LVD) with reset or interrupt, selectable trip points – Watchdog with independent clock source (WDOG) – Programmable cyclic redundancy check module (CRC) – Serial wire debug interface (SWD) – Bit manipulation engine (BME) • Security and integrity modules – 64-bit unique identification (ID) number per chip • Human-machine interface – Up to 57 general-purpose input/output (GPIO) – Two up to 8-bit keyboard interrupt modules (KBI) – External interrupt (IRQ) • Analog modules – One up to 16-channel 12-bit SAR ADC, operation in Stop mode, optional hardware trigger (ADC) – Two analog comparators containing a 6-bit DAC and programmable reference input (ACMP) -

Mask Set Errata for Mask XN33G

NXP Semiconductors MWCT1xxx_XN33G Mask Set Errata Rev. March 2019 Mask Set Errata for Mask XN33G This report applies to mask XN33G for these products: • MWCT1xxxA3

Table 1. Revision History. Revision: Mar 2019. Changes: Public release revision.

e9432: eFlexPWM: Fractional delay block may power up with an output of 1 instead of 0.

Description: Any of the eFlexPWM outputs may be set to 1 upon powering up the fractional delay block. Because there is no reset signal to the flops in the fractional delay block to force a specific reset state, the output must be cleared by creating a pulse on the PWM. This issue only occurs when using the fractional delay block and only lasts until the first time that PWM channel transitions.

Workaround: After powering up the fractional delay block of the eFlexPWM by setting FRCTRL[FRAC_PU] and waiting the required power up time, program the VAL2-5 registers in all submodules to create a PWM pulse (>0% duty cycle) and run for at least one PWM period to clear the state of the registers in the analog block. This can be done prior to enabling the PWM outputs so that external circuitry is not affected.

How to Reach Us: Home Page: nxp.com Web Support: Information in this document is provided solely to enable system and software implementers to use NXP products. There are no express or implied copyright licenses granted hereunder to design or fabricate any integrated circuits based on the information in this document. NXP reserves the right to make changes without further notice to any products herein.