Chapter 2. Vectors and Vector Spaces

Total Page:16

File Type:pdf, Size:1020Kb


Recommended publications

-

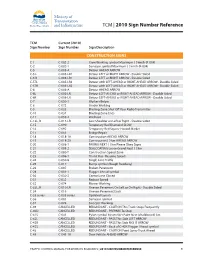

2010 Sign Number Reference for Traffic Control Manual

TCM | 2010 Sign Number Reference

CONSTRUCTION SIGNS

| TCM Sign Number | Current (2010) Sign Number | Sign Description |
| --- | --- | --- |
| C-1 | C-002-2 | Crew Working symbol Maximum ( ) km/h (R-004) |
| C-2 | C-002-1 | Surveyor symbol Maximum ( ) km/h (R-004) |
| C-5 | C-005-A | Detour AHEAD ARROW |
| C-5 L | C-005-LR1 | Detour LEFT or RIGHT ARROW - Double Sided |
| C-5 R | C-005-LR1 | Detour LEFT or RIGHT ARROW - Double Sided |
| C-5TL | C-005-LR2 | Detour with LEFT-AHEAD or RIGHT-AHEAD ARROW - Double Sided |
| C-5TR | C-005-LR2 | Detour with LEFT-AHEAD or RIGHT-AHEAD ARROW - Double Sided |
| C-6 | C-006-A | Detour AHEAD ARROW |
| C-6L | C-006-LR | Detour LEFT-AHEAD or RIGHT-AHEAD ARROW - Double Sided |
| C-6R | C-006-LR | Detour LEFT-AHEAD or RIGHT-AHEAD ARROW - Double Sided |
| C-7 | C-050-1 | Workers Below |
| C-8 | C-072 | Grader Working |
| C-9 | C-033 | Blasting Zone Shut Off Your Radio Transmitter |
| C-10 | C-034 | Blasting Zone Ends |
| C-11 | C-059-2 | Washout |
| C-13L, R | C-013-LR | Low Shoulder on Left or Right - Double Sided |
| C-15 | C-090 | Temporary Red Diamond SLOW |
| C-16 | C-092 | Temporary Red Square Hazard Marker |
| C-17 | C-051 | Bridge Repair |
| C-18 | C-018-1A | Construction AHEAD ARROW |
| C-19 | C-018-2A | Construction ( ) km AHEAD ARROW |
| C-20 | C-008-1 | PAVING NEXT ( ) km Please Obey Signs |
| C-21 | C-008-2 | SEALCOATING Loose Gravel Next ( ) km |
| C-22 | C-080-T | Construction Speed Zone |
| C-23 | C-086-1 | Thank You - Resume Speed |
| C-24 | C-030-8 | Single Lane Traffic |
| C-25 | C-017 | Bump symbol (Rough Roadway) |
| C-26 | C-007 | Broken Pavement |
| C-28 | C-001-1 | Flagger Ahead symbol |
| C-30 | C-030-2 | Centre Lane Closed |
| C-31 | C-032 | Reduce Speed |
| C-32 | C-074 | Mower Working |
| C-33L, R | C-010-LR | Uneven Pavement On Left or On Right - Double Sided |

C-34 -

Glossary of Linear Algebra Terms

INNER PRODUCT SPACES AND THE GRAM-SCHMIDT PROCESS. A. HAVENS. 1. The Dot Product and Orthogonality. 1.1. Review of the Dot Product. We first recall the notion of the dot product, which gives us a familiar example of an inner product structure on the real vector spaces $\mathbb{R}^n$. This product is connected to the Euclidean geometry of $\mathbb{R}^n$ via lengths and angles measured in $\mathbb{R}^n$. Later, we will introduce inner product spaces in general, and use their structure to define general notions of length and angle on other vector spaces. Definition 1.1. The dot product of real $n$-vectors in the Euclidean vector space $\mathbb{R}^n$ is the scalar product $\cdot : \mathbb{R}^n \times \mathbb{R}^n \to \mathbb{R}$ given by the rule $$(\mathbf{u}, \mathbf{v}) = \left( \sum_{i=1}^{n} u_i \mathbf{e}_i,\ \sum_{i=1}^{n} v_i \mathbf{e}_i \right) \mapsto \sum_{i=1}^{n} u_i v_i.$$ Here $\mathcal{B}_S := (\mathbf{e}_1, \dots, \mathbf{e}_n)$ is the standard basis of $\mathbb{R}^n$. With respect to our conventions on basis and matrix multiplication, we may also express the dot product as the matrix-vector product $$\mathbf{u}^t \mathbf{v} = \begin{bmatrix} u_1 & \cdots & u_n \end{bmatrix} \begin{bmatrix} v_1 \\ \vdots \\ v_n \end{bmatrix}.$$ It is a good exercise to verify the following proposition. Proposition 1.1. Let $\mathbf{u}, \mathbf{v}, \mathbf{w} \in \mathbb{R}^n$ be any real $n$-vectors, and $s, t \in \mathbb{R}$ be any scalars. The Euclidean dot product $(\mathbf{u}, \mathbf{v}) \mapsto \mathbf{u} \cdot \mathbf{v}$ satisfies the following properties. (i) The dot product is symmetric: $\mathbf{u} \cdot \mathbf{v} = \mathbf{v} \cdot \mathbf{u}$. (ii) The dot product is bilinear: • $(s\mathbf{u}) \cdot \mathbf{v} = s(\mathbf{u} \cdot \mathbf{v}) = \mathbf{u} \cdot (s\mathbf{v})$, • $(\mathbf{u} + \mathbf{v}) \cdot \mathbf{w} = \mathbf{u} \cdot \mathbf{w} + \mathbf{v} \cdot \mathbf{w}$. -
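The symmetry and bilinearity properties in the excerpt above can be spot-checked numerically. The following is only a minimal sketch in plain Python, not part of the quoted notes; the helper name `dot` and the sample vectors `u`, `v`, `w` and scalar `s` are illustrative assumptions, and checking one example is of course not a proof.

```python
def dot(u, v):
    """Euclidean dot product: the sum of componentwise products."""
    if len(u) != len(v):
        raise ValueError("vectors must have the same length")
    return sum(ui * vi for ui, vi in zip(u, v))


# Illustrative sample data (assumed, not taken from the source).
u = [1, 2, 3]
v = [4, -5, 6]
w = [0, 1, -2]
s = 3

# (i) Symmetry: u . v == v . u
symmetric = dot(u, v) == dot(v, u)

# (ii) Bilinearity, first bullet: (su) . v == s(u . v) == u . (sv)
homogeneous = (
    dot([s * ui for ui in u], v)
    == s * dot(u, v)
    == dot(u, [s * vi for vi in v])
)

# (ii) Bilinearity, second bullet: (u + v) . w == u . w + v . w
additive = dot([ui + vi for ui, vi in zip(u, v)], w) == dot(u, w) + dot(v, w)

print(dot(u, v), symmetric, homogeneous, additive)
```

Integer entries are used so that the equality checks are exact; with floating-point entries a tolerance would be needed.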

Calculus Terminology

AP Calculus BC Calculus Terminology: Absolute Convergence; Asymptote; Continued Sum; Absolute Maximum; Average Rate of Change; Continuous Function; Absolute Minimum; Average Value of a Function; Continuously Differentiable Function; Absolutely Convergent; Axis of Rotation; Converge; Acceleration; Boundary Value Problem; Converge Absolutely; Alternating Series; Bounded Function; Converge Conditionally; Alternating Series Remainder; Bounded Sequence; Convergence Tests; Alternating Series Test; Bounds of Integration; Convergent Sequence; Analytic Methods; Calculus; Convergent Series; Annulus; Cartesian Form; Critical Number; Antiderivative of a Function; Cavalieri’s Principle; Critical Point; Approximation by Differentials; Center of Mass Formula; Critical Value; Arc Length of a Curve; Centroid; Curly d; Area below a Curve; Chain Rule; Curve; Area between Curves; Comparison Test; Curve Sketching; Area of an Ellipse; Concave; Cusp; Area of a Parabolic Segment; Concave Down; Cylindrical Shell Method; Area under a Curve; Concave Up; Decreasing Function; Area Using Parametric Equations; Conditional Convergence; Definite Integral; Area Using Polar Coordinates; Constant Term; Definite Integral Rules; Degenerate; Divergent Series; Function Operations; Del Operator; e; Fundamental Theorem of Calculus; Deleted Neighborhood; Ellipsoid; GLB; Derivative; End Behavior; Global Maximum; Derivative of a Power Series; Essential Discontinuity; Global Minimum; Derivative Rules; Explicit Differentiation; Golden Spiral; Difference Quotient; Explicit Function; Graphic Methods; Differentiable; Exponential Decay; Greatest Lower Bound; Differential -

Rotation Matrix - Wikipedia, the Free Encyclopedia

Rotation matrix. From Wikipedia, the free encyclopedia. In linear algebra, a rotation matrix is a matrix that is used to perform a rotation in Euclidean space. For example, the matrix $$R = \begin{bmatrix} \cos\theta & -\sin\theta \\ \sin\theta & \cos\theta \end{bmatrix}$$ rotates points in the xy-Cartesian plane counterclockwise through an angle θ about the origin of the Cartesian coordinate system. To perform the rotation, the position of each point must be represented by a column vector v, containing the coordinates of the point. A rotated vector is obtained by using the matrix multiplication Rv (see below for details). In two and three dimensions, rotation matrices are among the simplest algebraic descriptions of rotations, and are used extensively for computations in geometry, physics, and computer graphics. Though most applications involve rotations in two or three dimensions, rotation matrices can be defined for n-dimensional space. Rotation matrices are always square, with real entries. Algebraically, a rotation matrix in n dimensions is an n × n special orthogonal matrix, i.e. an orthogonal matrix whose determinant is 1: $R^T = R^{-1}$, $\det R = 1$. The set of all rotation matrices forms a group, known as the rotation group or the special orthogonal group. It is a subset of the orthogonal group, which includes reflections and consists of all orthogonal matrices with determinant 1 or -1, and of the special linear group, which includes all volume-preserving transformations and consists of matrices with determinant 1. Contents: 1 Rotations in two dimensions; 1.1 Non-standard orientation -
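As a hedged illustration of the excerpt above, the sketch below builds the standard 2 × 2 counterclockwise rotation matrix with entries cos θ, −sin θ, sin θ, cos θ, applies it to a column vector, and checks the two defining properties of a special orthogonal matrix. The function names (`rotation_matrix`, `apply`, `det2`, `transpose_times`) are assumptions made for this example, not an API from the article.

```python
import math


def rotation_matrix(theta):
    """2x2 matrix rotating the plane counterclockwise by theta radians."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s], [s, c]]


def apply(R, v):
    """Matrix-vector product Rv for a 2x2 matrix and a 2-vector."""
    return [R[0][0] * v[0] + R[0][1] * v[1],
            R[1][0] * v[0] + R[1][1] * v[1]]


def det2(R):
    """Determinant of a 2x2 matrix."""
    return R[0][0] * R[1][1] - R[0][1] * R[1][0]


def transpose_times(R):
    """Compute R^T R, which should be the identity for an orthogonal R."""
    return [[sum(R[k][i] * R[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]


R = rotation_matrix(math.pi / 2)   # quarter turn
rotated = apply(R, [1.0, 0.0])     # the x unit vector lands on the y axis

print(rotated)             # approximately [0.0, 1.0], up to rounding
print(det2(R))             # approximately 1.0
print(transpose_times(R))  # approximately the identity matrix
```

Because the entries come from floating-point `cos` and `sin`, the results agree with the exact values only up to rounding error, so comparisons should use a small tolerance.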

1 Sets and Set Notation. Definition 1 (Naive Definition of a Set)

LINEAR ALGEBRA MATH 2700.006 SPRING 2013 (COHEN) LECTURE NOTES. 1 Sets and Set Notation. Definition 1 (Naive Definition of a Set). A set is any collection of objects, called the elements of that set. We will most often name sets using capital letters, like A, B, X, Y, etc., while the elements of a set will usually be given lower-case letters, like x, y, z, v, etc. Two sets X and Y are called equal if X and Y consist of exactly the same elements. In this case we write X = Y. Example 1 (Examples of Sets). (1) Let X be the collection of all integers greater than or equal to 5 and strictly less than 10. Then X is a set, and we may write: X = {5, 6, 7, 8, 9}. The above notation is an example of a set being described explicitly, i.e. just by listing out all of its elements. The set brackets {· · ·} indicate that we are talking about a set and not a number, sequence, or other mathematical object. (2) Let E be the set of all even natural numbers. We may write: E = {0, 2, 4, 6, 8, ...}. This is an example of an explicitly described set with infinitely many elements. The ellipsis (...) in the above notation is used somewhat informally, but in this case its meaning, that we should "continue counting forever," is clear from the context. (3) Let Y be the collection of all real numbers greater than or equal to 5 and strictly less than 10. Recalling notation from previous math courses, we may write: Y = [5, 10). This is an example of using interval notation to describe a set. -
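The three example sets above can be mirrored in code, which makes the difference between a finite listing, an infinite listing, and an interval concrete. This is a minimal sketch under stated assumptions: the names `X`, `even_naturals`, and `in_Y` are chosen here for illustration, the infinite set E is modeled as a lazy generator, and the interval Y as a membership test, since neither can be stored element by element.

```python
from itertools import count, islice

# (1) X = {5, 6, 7, 8, 9}: a finite set listed explicitly.
X = set(range(5, 10))


# (2) E = {0, 2, 4, 6, 8, ...}: infinitely many elements, so we generate
# them lazily instead of building the whole set.
def even_naturals():
    return (n for n in count(0) if n % 2 == 0)


# (3) Y = [5, 10): uncountably many reals, so we only test membership.
def in_Y(x):
    return 5 <= x < 10


first_five_evens = list(islice(even_naturals(), 5))

print(X == {9, 8, 7, 6, 5})    # set equality ignores listing order
print(first_five_evens)
print(in_Y(5), in_Y(9.99), in_Y(10))
```

The first check echoes the definition of set equality in the excerpt: two sets are equal when they consist of exactly the same elements, regardless of the order in which they are written.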

THE NUMBER of ⊕-SIGN TYPES Jian-Yi Shi Department Of

THE NUMBER OF ⊕-SIGN TYPES. Jian-yi Shi, Department of Mathematics, East China Normal University, Shanghai, 200062, P.R.C. In [6], the author introduced the concept of a sign type indexed by the set $\{(i, j) \mid 1 \le i, j \le n,\ i \ne j\}$ for the description of the cells in the affine Weyl group of type $\widetilde{A}_\ell$ (in the sense of Kazhdan and Lusztig [3]). Later, the author generalized it by defining a sign type indexed by any root system [8]. Since then, sign types have played an increasingly important role in the study of the cells of any irreducible affine Weyl group [1],[2],[5],[8],[9],[10],[12]. Thus it is worthwhile to study sign types themselves. One task is to enumerate various kinds of sign types. In [8], the author verified Carter’s conjecture on the number of all sign types indexed by any irreducible root system. In the present paper, we consider a special kind of sign types, called ⊕-sign types. We show that these sign types are in one-to-one correspondence with the increasing subsets of the related positive root system as a partially ordered set. Thus we get the number of all the ⊕-sign types indexed by any irreducible positive root system $\Phi^+$ by enumerating all the increasing subsets of $\Phi^+$. Key words and phrases. ⊕-sign types, increasing subsets, Young diagram. Supported by the National Science Foundation of China and by the Science Foundation of the University Doctorial Program of CNEC. Typeset by AMS-TeX. -

Sign of the Derivative TEACHER NOTES MATH NSPIRED

Sign of the Derivative TEACHER NOTES MATH NSPIRED. Math Objectives: Students will make a connection between the sign of the derivative (positive/negative/zero) and the increasing or decreasing nature of the graph. Activity Type: Student Exploration. About the Lesson: Students will move a point on a function and observe the changes in the sign of the derivative. Based on observations, students will answer questions on a worksheet. In the second part of the investigation, students will move the point and see a trace of the derivative to connect the positive or negative sign of the derivative with the location on the coordinate plane. Directions: Grab the point on the function and move it along the curve. Observe the relationship between the sign of the derivative and the direction of the graph. TI-Nspire™ Technology Skills: Download a TI-Nspire document; open a document; move between pages; grab and drag a point. Tech Tips: Make sure the font size on your TI-Nspire handheld is set to Medium. You can hide the function entry line by pressing /G. Lesson Materials: Student Activity Sign_of_the_Derivative_Student.pdf, Sign_of_the_Derivative_Student.doc; TI-Nspire document Sign_of_the_Derivative.tns. Visit www.mathnspired.com for lesson updates. ©2010 DANIEL R. ILARIA, PH.D. Used with permission. Discussion Points and Possible Answers. PART I: Move to page 1.3. Tech Tips: The sign of the derivative will indicate negative when the function is decreasing and positive when the function is increasing. The screen will also indicate a zero derivative. Students will need to edit the function on the screen in order to investigate other functions. -
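The relationship the activity asks students to observe can also be explored off the handheld. The sketch below is an assumption-laden stand-in, not the TI-Nspire document itself: it estimates the derivative with a central difference and reports its sign at a few points of the sample function f(x) = x², which is chosen here purely for illustration, as are the names `derivative` and `sign_label`.

```python
def derivative(f, x, h=1e-6):
    """Central-difference estimate of f'(x)."""
    return (f(x + h) - f(x - h)) / (2 * h)


def sign_label(f, x, tol=1e-6):
    """Describe the sign of f'(x) and what it says about the graph."""
    d = derivative(f, x)
    if d > tol:
        return "positive (increasing)"
    if d < -tol:
        return "negative (decreasing)"
    return "zero"


def f(x):
    # Sample function for the sketch; swap in any other function to explore.
    return x ** 2


for x in (-1.0, 0.0, 1.0):
    print(x, sign_label(f, x))
```

The tolerance `tol` is a design choice: a numerical estimate is rarely exactly zero, so values within `tol` of zero are reported as a zero derivative.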

A Tutorial on Euler Angles and Quaternions

A Tutorial on Euler Angles and Quaternions. Moti Ben-Ari, Department of Science Teaching, Weizmann Institute of Science. http://www.weizmann.ac.il/sci-tea/benari/ Version 2.0.1 © 2014–17 by Moti Ben-Ari. This work is licensed under the Creative Commons Attribution-ShareAlike 3.0 Unported License. To view a copy of this license, visit http://creativecommons.org/licenses/by-sa/3.0/ or send a letter to Creative Commons, 444 Castro Street, Suite 900, Mountain View, California, 94041, USA. Chapter 1 Introduction. You are sitting in an airplane at night, watching a movie displayed on the screen attached to the seat in front of you. The airplane gently banks to the left. You may feel the slight acceleration, but you won’t see any change in the position of the movie screen. Both you and the screen are in the same frame of reference, so unless you stand up or make another move, the position and orientation of the screen relative to your position and orientation won’t change. The same is not true with respect to your position and orientation relative to the frame of reference of the earth. The airplane is moving forward at a very high speed and the bank changes your orientation with respect to the earth. The transformation of coordinates (position and orientation) from one frame of reference to another is a fundamental operation in several areas: flight control of aircraft and rockets, movement of manipulators in robotics, and computer graphics. This tutorial introduces the mathematics of rotations using two formalisms: (1) Euler angles are the angles of rotation of a three-dimensional coordinate frame. -

Operations with Integers Sponsored by the Center for Teaching and Learning at UIS

Operations with Integers. Sponsored by The Center for Teaching and Learning at UIS. What are integers? Integers are zero and all the positive and negative whole numbers. When you first begin to work with integers, imagine a tremendously large line that extends infinitely left and right. Now, directly in front of you is a spot on that line we will call the center and label it zero. To the left are all the negative numbers and to the right all the positive. -10 -9 -8 -7 -6 -5 -4 -3 -2 -1 0 1 2 3 4 5 6 7 8 9 10. Adding Integers. Integers with the Same Sign: Adding integers that have the same sign is pretty straightforward. Add the two numbers together and maintain the sign. Example: Both numbers are positive, so we add the numbers together and the sum remains positive. Example: Both addends are negative, so we add them together and maintain the negative sign. Integers with Different Signs: When adding integers with different signs, ignore the signs at first and subtract the smaller number from the larger. The final sum will maintain the sign of the larger addend. Example: Since we are adding two numbers with different signs, ignore the signs and we are left with 3 and 8. The number 3 is smaller than 8, so we subtract 3 from 8, which gives us 5. Of the two addends, 8 was the larger and was positive, so the final sum will be positive. Thus, our final sum is 5. Example: In this example, when we ignore the signs, the number 50 is greater than 18. -
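The two addition rules described above can be written out step by step. The sketch below is one possible rendering, with the function name `add_by_sign_rules` invented for this example; it deliberately avoids using `+` on the signed numbers themselves so that each rule is visible. The sample call with -3 and 8 echoes the worked example in the text, while the call with -50 and 18 assumes a choice of signs that the truncated excerpt does not state.

```python
def add_by_sign_rules(a, b):
    """Add two integers using the same-sign / different-sign rules."""
    # Zero has no sign, so adding it leaves the other number unchanged.
    if a == 0:
        return b
    if b == 0:
        return a

    if (a > 0) == (b > 0):
        # Same sign: add the magnitudes and keep the shared sign.
        magnitude = abs(a) + abs(b)
        sign = 1 if a > 0 else -1
    else:
        # Different signs: subtract the smaller magnitude from the larger
        # and keep the sign of the addend with the larger magnitude.
        larger, smaller = (a, b) if abs(a) >= abs(b) else (b, a)
        magnitude = abs(larger) - abs(smaller)
        sign = 1 if larger > 0 else -1

    return sign * magnitude


print(add_by_sign_rules(-3, 8))    # the text's example: 3 and 8, with 8 positive
print(add_by_sign_rules(-50, 18))  # signs assumed here for illustration
print(add_by_sign_rules(-4, -6))   # same sign: magnitudes add, sign is kept
```

When the two magnitudes are equal and the signs differ, the magnitude is zero, so the result is zero whichever sign is picked.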

On the Expression of Higher Mathematics in American Sign Language John Tabak None, [email protected]

Journal of Interpretation, Volume 25, Issue 1, Article 10, 2016. On the Expression of Higher Mathematics in American Sign Language. John Tabak, none, [email protected]. Follow this and additional works at: http://digitalcommons.unf.edu/joi. Part of the Accessibility Commons, Applied Mathematics Commons, Bilingual, Multilingual, and Multicultural Education Commons, and the Mathematics Commons. Suggested Citation: Tabak, John (2016) "On the Expression of Higher Mathematics in American Sign Language," Journal of Interpretation: Vol. 25: Iss. 1, Article 10. Available at: http://digitalcommons.unf.edu/joi/vol25/iss1/10. This Article is brought to you for free and open access by UNF Digital Commons. It has been accepted for inclusion in Journal of Interpretation by an authorized editor of the JOI, on behalf of the Registry of Interpreters for the Deaf (RID). For more information, please contact [email protected]. © All Rights Reserved. ABSTRACT: The grammar and vocabulary of higher mathematics are different from the grammar and vocabulary of conversational English and conversational American Sign Language (ASL). Consequently, mathematical language presents interpreters with a unique set of challenges. This article characterizes those aspects of mathematical grammar that are peculiar to the subject. (A discussion of mathematical vocabulary and its expression in ASL can be found elsewhere (Tabak, 2014).) An increased awareness of the grammar of mathematical language will prove useful to those interpreters for the deaf and deaf mathematics professionals seeking to express higher mathematics in ASL. In this article one will, for the first time, find a model of mathematical language created for interpreters that identifies those aspects of the language that must be retained in any accurate interpretation. -

Vector Space Theory

Vector Space Theory. A course for second year students by Robert Howlett, typesetting by TeX. Copyright University of Sydney, School of Mathematics and Statistics. Contents:
Chapter 1: Preliminaries. §1a Logic and common sense; §1b Sets and functions; §1c Relations; §1d Fields.
Chapter 2: Matrices, row vectors and column vectors. §2a Matrix operations; §2b Simultaneous equations; §2c Partial pivoting; §2d Elementary matrices; §2e Determinants; §2f Introduction to eigenvalues.
Chapter 3: Introduction to vector spaces. §3a Linearity; §3b Vector axioms; §3c Trivial consequences of the axioms; §3d Subspaces; §3e Linear combinations.
Chapter 4: The structure of abstract vector spaces. §4a Preliminary lemmas; §4b Basis theorems; §4c The Replacement Lemma; §4d Two properties of linear transformations; §4e Coordinates relative to a basis.
Chapter 5: Inner Product Spaces. §5a The inner product axioms; §5b Orthogonal projection; §5c Orthogonal and unitary transformations; §5d Quadratic forms.
Chapter 6: Relationships between spaces. §6a Isomorphism; §6b Direct sums; §6c Quotient spaces; §6d The dual space.
Chapter 7: Matrices and Linear Transformations. §7a The matrix of a linear transformation; §7b Multiplication of transformations and matrices; §7c The Main Theorem on Linear Transformations; §7d Rank and nullity of matrices.
Chapter 8: Permutations and determinants. §8a Permutations; §8b Determinants -

Definition of a Vector Space

CHAPTER 4 Vector Spaces. 5. Verify the commutative law of addition for vectors in $\mathbb{R}^4$. 6. Verify the associative law of addition for vectors in $\mathbb{R}^4$. 7. Verify properties (4.1.5)–(4.1.8) for vectors in $\mathbb{R}^3$. 8. Show with examples that if x is a vector in the first quadrant of $\mathbb{R}^2$ (i.e., both coordinates of x are positive) and y is a vector in the third quadrant of $\mathbb{R}^2$ (i.e., both coordinates of y are negative), then the sum x + y could occur in any of the four quadrants. 4.2 Definition of a Vector Space. In the previous section, we showed how the set $\mathbb{R}^n$ of all ordered n-tuples of real numbers, together with the addition and scalar multiplication operations defined on it, has the same algebraic properties as the familiar algebra of geometric vectors. We now push this abstraction one step further and introduce the idea of a vector space. Such an abstraction will enable us to develop a mathematical framework for studying a broad class of linear problems, such as systems of linear equations, linear differential equations, and systems of linear differential equations, which have far-reaching applications in all areas of applied mathematics, science, and engineering. Let V be a nonempty set. For our purposes, it is useful to call the elements of V vectors and use the usual vector notation u, v, ..., to denote these elements. For example, if V is the set of all 2 × 2 matrices, then the vectors in V are 2 × 2 matrices, whereas if V is the set of all positive integers, then the vectors in V are positive integers.
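Exercises 5 and 8 above lend themselves to a quick numerical experiment. The sketch below is one hedged way to set it up, with the helper names `add` and `quadrant` and all sample vectors chosen here as assumptions: it checks commutativity of addition on one pair of vectors in R⁴, then fixes a third-quadrant vector y and picks four first-quadrant vectors x whose sums x + y land in each of the four quadrants. A single numerical check illustrates the commutative law but does not replace the general verification the exercise asks for.

```python
def add(x, y):
    """Componentwise addition of two vectors of equal length."""
    return tuple(xi + yi for xi, yi in zip(x, y))


def quadrant(p):
    """Quadrant number (1-4) of a point in the plane, or None on an axis."""
    x, y = p
    if x > 0 and y > 0:
        return 1
    if x < 0 and y > 0:
        return 2
    if x < 0 and y < 0:
        return 3
    if x > 0 and y < 0:
        return 4
    return None


# Exercise 5 (spot check): addition in R^4 is commutative for this pair.
a = (1, -2, 3, 0)
b = (4, 5, -6, 7)
commutes = add(a, b) == add(b, a)

# Exercise 8: y is in the third quadrant; every x below is in the first.
y = (-2, -2)
first_quadrant_vectors = [(3, 3), (1, 3), (1, 1), (3, 1)]
landing_quadrants = [quadrant(add(x, y)) for x in first_quadrant_vectors]

print(commutes)
print(landing_quadrants)
```

The sums are (1, 1), (-1, 1), (-1, -1), and (1, -1), one in each quadrant, which is the kind of example exercise 8 calls for.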