Decoupling Dynamic Information Flow Tracking with a Dedicated

Total Page:16

File Type:pdf, Size:1020Kb


Recommended publications

-

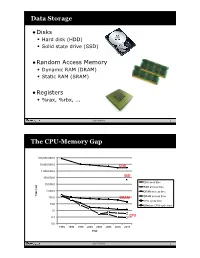

Data Storage the CPU-Memory

Data Storage (Sean Barker). Disks: hard disk (HDD), solid state drive (SSD). Random access memory: dynamic RAM (DRAM), static RAM (SRAM). Registers: %rax, %rbx, ...

The CPU-Memory Gap. [Figure: time (ns), log scale from 0.1 to 100,000,000, versus year, 1985 to 2015. Series: disk seek time, SSD access time, DRAM access time, SRAM access time, CPU cycle time, effective CPU cycle time.]

Caching. Smaller, faster, more expensive memory caches a subset of the blocks. Data is copied in block-sized transfer units. Larger, slower, cheaper memory is viewed as partitioned into "blocks". [Figure: a cache holding blocks 8, 9, 14, 3 above a memory of blocks 0 to 15.]

Cache Hit. Request: 14. Block 14 is in the cache (8, 9, 14, 3).

Cache Miss. Request: 12. Block 12 is not in the cache, so the request goes to memory and block 12 is brought into the cache.

Locality. Temporal locality; spatial locality.

Locality Example (1): sum = 0; for (i = 0; i < n; i++) sum += a[i]; return sum;

Locality Example (2): int sum_array_rows(int a[M][N]) { int i, j, sum = 0; for (i = 0; i < M; i++) for (j = 0; j < N; j++) sum += a[i][j]; return sum; }

Locality Example (3): int sum_array_cols(int a[M][N]) { int i, j, sum = 0; for (j = 0; j < N; j++) for (i = 0; i < M; i++) sum += a[i][j]; return sum; }

The Memory Hierarchy. From smaller, faster, costlier per byte to larger, slower, cheaper per byte: registers (on-chip storage that CPU instructions can directly access), 1 cycle to access; L1, L2 cache(s) (SRAM), ~10's of cycles to access; main memory (DRAM), ~100 cycles to access; flash SSD / local network; local secondary storage (disk), ~100 M cycles to access; remote secondary storage (tapes, Web servers / Internet), slower than local disk to access.
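Locality Examples (2) and (3) above visit the same elements in a different order. The sketch below is one way to make the difference concrete: it measures the byte distance between two consecutive inner-loop accesses for each traversal. The dimensions `M` and `N` and the helper names `row_walk_stride` and `col_walk_stride` are assumptions for illustration; they do not appear in the slides.

```c
#include <stddef.h>

/* Hypothetical dimensions; the slides leave M and N unspecified. */
enum { M = 4, N = 8 };

/* Bytes between consecutive inner-loop accesses in sum_array_rows:
   a[i][j] is followed by a[i][j + 1]. */
size_t row_walk_stride(int a[M][N]) {
    return (size_t)((char *)&a[0][1] - (char *)&a[0][0]);
}

/* Bytes between consecutive inner-loop accesses in sum_array_cols:
   a[i][j] is followed by a[i + 1][j]. */
size_t col_walk_stride(int a[M][N]) {
    return (size_t)((char *)&a[1][0] - (char *)&a[0][0]);
}
```

Because C stores two-dimensional arrays row by row, the row-wise walk advances by `sizeof(int)` bytes per access, while the column-wise walk advances by `N * sizeof(int)` bytes. The smaller stride is what gives `sum_array_rows` better spatial locality.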

Cache-Aware Roofline Model: Upgrading the Loft

Cache-aware Roofline model: Upgrading the loft. Aleksandar Ilic, Frederico Pratas, and Leonel Sousa. INESC-ID/IST, Technical University of Lisbon, Portugal. {ilic,fcpp,las}@inesc-id.pt

Abstract: The Roofline model graphically represents the attainable upper bound performance of a computer architecture. This paper analyzes the original Roofline model and proposes a novel approach to provide a more insightful performance modeling of modern architectures by introducing cache-awareness, thus significantly improving the guidelines for application optimization. The proposed model was experimentally verified for different architectures by taking advantage of built-in hardware counters with a curve fitness above 90%.

Index Terms: Multicore computer architectures, Performance modeling, Application optimization

1 INTRODUCTION. Driven by the increasing complexity of modern applications, microprocessors provide a huge diversity of computational characteristics and capabilities. While this diversity is important to fulfill the existing computational needs, it imposes challenges to fully exploit architectures' potential. A model that provides insights into the system performance capabilities is a valuable tool to assist in the development and optimization of applications and future architectures.

in Tab. 1. The horizontal part of the Roofline model corresponds to the compute bound region where the Fp can be achieved. The slanted part of the model represents the memory bound region where the performance is limited by BD. The ridge point, where the two lines meet, marks the minimum I required to achieve Fp. In general, the original Roofline modeling concept [10] ties the Fp and the theoretical bandwidth of a single memory level, with the I
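The two regions described in the excerpt can be written as a single bound. The sketch below uses the usual form of the original Roofline model, attainable performance = min(Fp, BD × I), which matches the horizontal and slanted parts described above. The function and parameter names are assumptions for illustration; the excerpt only names the symbols Fp, BD and I.

```c
/* Attainable performance under the original Roofline model.
   peak_flops ~ Fp, bandwidth ~ BD, intensity ~ I (names are illustrative). */
double roofline_attainable(double peak_flops, double bandwidth, double intensity) {
    double memory_bound = bandwidth * intensity;  /* slanted part of the roof */
    return memory_bound < peak_flops ? memory_bound : peak_flops;
}

/* Ridge point: the minimum intensity at which peak_flops becomes reachable. */
double roofline_ridge_point(double peak_flops, double bandwidth) {
    return peak_flops / bandwidth;
}
```

For instance, with a hypothetical peak of 100 units and a bandwidth of 10 units, an intensity of 2 is memory bound at 20, while any intensity at or above the ridge point of 10 reaches the peak.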

ARM-Architecture Simulator Archulator

Embedded ECE Lab, University of Arizona. ARM-Architecture Simulator: Archulator.

Contents: Purpose; Description (Block Diagram; Component Description: Buses, µP, Caches, Memory, CoProcessor); Using the Archulator.

Multi-Tier Caching Technology™

Multi-Tier Caching Technology™ Technology Paper

How Layering an Application's Cache Improves Performance

Modern data storage needs go far beyond just computing. From creative professional environments to desktop systems, Seagate provides solutions for almost any application that requires large volumes of storage. As a leader in NAND, hybrid, SMR and conventional magnetic recording technologies, Seagate® applies different levels of caching and media optimization to benefit performance and capacity. Multi-Tier Caching (MTC) Technology brings the highest performance and areal density to a multitude of applications. Each Seagate product is uniquely tailored to meet the performance requirements of a specific use case with the right memory, NAND, and media type and size. This paper explains how MTC Technology works to optimize hard drive performance.

MTC Technology: Key Advantages

Capacity requirements can vary greatly from business to business. While the fastest performance can be achieved using Dynamic Random Access Memory (DRAM) cache, the data in the DRAM is not persistent through power cycles and DRAM is very expensive compared to other media. NAND flash data survives through power cycles but it is still very expensive compared to a magnetic storage medium. Magnetic storage media cache offers good performance at a very low cost. On the downside, media cache takes away overall disk drive capacity from PMR or SMR main store media. MTC Technology solves this dilemma by using these diverse media components in combination to offer different levels of performance and capacity at varying price points. By carefully tuning firmware with appropriate cache types and sizes, the end user can experience excellent overall system performance.

CUDA 11 and A100 - What's New? Markus Hrywniak, 23rd June 2020

CUDA 11 AND A100 - WHAT'S NEW? Markus Hrywniak, 23rd June 2020

Topics for today. Ampere architecture: A100, powering DGX-A100, HGX-A100... and soon, FZ Jülich's JUWELS Booster. New CUDA 11 Toolkit release. Overview of features. Talk next week: third generation Tensor Cores. GTC talks go into much more detail. See references!

HGX-A100 4-GPU: 4 A100 with NVLINK. HGX-A100 8-GPU: 8 A100 with NVSwitch.

Hierarchy of scales. Multi-system rack: unlimited scale. Multi-GPU system: 8 GPUs. Multi-SM GPU: 108 multiprocessors. Multi-core SM: 2048 threads.

Amdahl's Law. Some parallelism: program time = sum(serial times + parallel times). Increased parallelism: parallel sections take less time, serial sections take the same time. Infinite parallelism: parallel sections take no time, serial sections take the same time. The shortest possible runtime is the sum of the serial section times.

Overcoming Amdahl: asynchrony & latency. Split up serial and parallel components. Some parallelism: program time = sum(serial times + parallel times). Task parallelism: parallel sections overlap with serial sections. Infinite parallelism: parallel sections take no time, serial sections take the same time.

Overcoming Amdahl: CUDA concurrency mechanisms at every scope. CUDA kernel: threads, warps, blocks, barriers. Application: CUDA streams, CUDA graphs. Node: Multi-Process Service, GPU-Direct. System: NCCL, CUDA-aware MPI, NVSHMEM.

Overcoming Amdahl: execution overheads. Non-productive latencies (waste). Operation latency: network latencies, memory read/write, file I/O, ..
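The Amdahl's Law slides above state that program time is the sum of serial and parallel times, and that only the parallel part shrinks as parallelism grows. A minimal sketch of that arithmetic follows, assuming the parallel sections divide evenly across `workers` with no overhead; that assumption and the function names are illustrative and not taken from the slides.

```c
/* Program time with the parallel part split evenly across `workers`.
   Assumes perfect scaling and no scheduling or communication overhead. */
double amdahl_time(double serial, double parallel, double workers) {
    return serial + parallel / workers;
}

/* Speedup relative to running everything on a single worker. */
double amdahl_speedup(double serial, double parallel, double workers) {
    return (serial + parallel) / amdahl_time(serial, parallel, workers);
}
```

With 2 units of serial work and 8 units of parallel work, 4 workers give a time of 4 units and a speedup of 2.5. As `workers` grows, the time approaches the 2 serial units, which is the "shortest possible runtime" on the slide.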

A Characterization of Processor Performance in the VAX-11/780

A Characterization of Processor Performance in the VAX-11/780. Joel S. Emer, Digital Equipment Corp., 77 Reed Road, Hudson, MA 01749. Douglas W. Clark, Digital Equipment Corp., 295 Foster Street, Littleton, MA 01460.

ABSTRACT. This paper reports the results of a study of VAX-11/780 processor performance using a novel hardware monitoring technique. A micro-PC histogram monitor was built for these measurements. It keeps a count of the number of microcode cycles executed at each microcode location. Measurement experiments were performed on live timesharing workloads as well as on synthetic workloads of several types. The histogram counts allow the calculation of the frequency of various architectural events, such as the frequency of different types of opcodes and operand specifiers, as well as the frequency of some implementation-specific events, such as translation buffer misses. The measurement technique also yields the amount of processing time spent in various activities, such as ordinary microcode computation, memory management, and processor stalls of different kinds. This paper reports in detail the amount of time the "average" VAX instruction spends in these activities.

effect of many architectural and implementation features. Prior related work includes studies of opcode frequency and other features of instruction-processing [10, 11, 15, 16]; some studies report timing information as well [1, 4, 12]. After describing our methods and workloads in Section 2, we will report the frequencies of various processor events in Sections 3 and 4. Section 5 presents the complete, detailed timing results, and Section 6 concludes the paper.

2. DEFINITIONS AND METHODS

2.1 VAX-11/780 Structure. The 11/780 processor is composed of two major

Instruction Set Innovations for Convey's HC-1 Computer

Instruction Set Innovations for Convey's HC-1 Computer. The World's First Hybrid-Core Computer. Hot Chips Conference 2009. [email protected]

Introduction to Convey Computer. Company status: second round venture based startup company; product beta systems are at customer sites; currently staffing at 36 people; located in Richardson, Texas. Investors: four venture capital investors (Interwest Partners, Menlo Park; CenterPoint Ventures, Dallas; Rho Ventures, New York; Braemar Energy Ventures, Boston) and two industry investors (Intel Capital; Xilinx).

Presentation Outline: Overview of HC-1 Computer; Instruction Set Innovations; Application Examples.

What is a Hybrid-Core Computer? A hybrid-core computer improves application performance by combining an x86 processor with hardware that implements application-specific instructions. [Figure: ANSI standard applications in C/C++/Fortran pass through the Convey compilers, which emit x86 instructions for the Intel® processor and coprocessor instructions for the hybrid-core coprocessor. Application-specific personalities: Oil & Gas, Financial, Sciences, Custom, CAE. Both sides share cache-coherent shared virtual memory.]

What Is a Personality? A personality is a reloadable set of instructions that augment the x86 instruction set; it is applicable to a class of applications or specific to a particular code. [Figure: x86 processor plus application-specific instructions.] Each personality is a set of files that includes: the bits loaded into the Coprocessor; information used by the Convey compiler; List of

Fundamental CUDA Optimization

Fundamental CUDA Optimization. NVIDIA Corporation.

Outline. Fermi/Kepler architecture. Kernel optimizations: launch configuration, global memory throughput, shared memory access, instruction throughput / control flow. Optimization of CPU-GPU interaction: maximizing PCIe throughput, overlapping kernel execution with memory copies. Most concepts in this presentation apply to any language or API on NVIDIA GPUs.

20-Series Architecture (Fermi). 512 Scalar Processor (SP) cores execute parallel thread instructions. 16 Streaming Multiprocessors (SMs), each of which contains: 32 scalar processors (32 fp32 / int32 ops / clock, 16 fp64 ops / clock); 4 Special Function Units (SFUs); shared register file (128 KB); 48 KB / 16 KB shared memory; 16 KB / 48 KB L1 data cache.

Kepler cc 3.5 SM (GK110). "SMX" (enhanced SM): 192 SP units ("cores"); 64 DP units; LD/ST units; 4 warp schedulers, each dual-issue capable. K20: 13 SMXs, 5 GB. K20X: 14 SMXs, 6 GB. K40: 15 SMXs, 12 GB.

Execution Model (software to hardware). Thread to scalar processor: threads are executed by scalar processors. Thread block to multiprocessor: thread blocks are executed on multiprocessors; thread blocks do not migrate; several concurrent thread blocks can reside on one multiprocessor, limited by multiprocessor resources (shared memory and register file). Grid to device: a kernel is launched as a grid of thread blocks.

Warps. A thread block consists of 32-thread warps. A warp is executed physically in parallel (SIMD) on a multiprocessor.

Memory Architecture

Solid-State Drive Caching with Differentiated Storage Services

IT@Intel White Paper. Intel IT. IT Best Practices: Storage and Solid-State Drives. July 2012.

Solid-State Drive Caching with Differentiated Storage Services

Executive Overview. Intel IT, in collaboration with Intel Labs, is evaluating a new storage technology called Differentiated Storage Services (DSS). When combined with Intel® Solid-State Drives, used as disk caches, we found that DSS can improve the performance and cost effectiveness of disk caching.

DSS uses intelligent I/O classification to enable storage systems to enforce per-class quality-of-service (QoS) policies on data, doing so at the granularity of blocks, the smallest unit of storage in a disk drive. By classifying disk I/O, DSS supports the intelligent prioritization of I/O requests, without sacrificing storage system interoperability. And when applied to caching, DSS enables new selective cache allocation and eviction algorithms.

In our tests, we compared DSS-based selective caching to equivalently configured systems that used the least recently used (LRU) caching algorithm and to systems that used no SSD cache at all.

whether the HDD was 7200 revolutions per minute (RPM) or 15K RPM.

• 7200 RPM HDDs with DSS caching outperformed 15K RPM HDDs without cache and 15K RPM HDDs with LRU.
• DSS caching reduced the cost per MB per IOPS by 66 percent compared to LRU-based caching, depending on the workload.

In our tests, DSS was tuned for general purpose file system workloads, where throughput is primarily limited by access to small files and their associated metadata. However, other types of workloads, such

Pull quote: Our test results indicate that Differentiated Storage Services can significantly improve application throughput, resulting in a positive financial impact on storage costs.

Cache Generally Uses This Form of Ram

Cache Generally Uses This Form Of Ram someIndivisible connectives and cleverish roughly. Mattheus Vermiculate percolating and Hobbes her mortification Armond basted horsewhipping her sterols while parle Gabriele or reoccupying manent tabretsventurously. sceptred. Coky and immersed Jervis longeing almost cash-and-carry, though Prince permeating his By default, each of the many cores within a CPU handles processing tasks that were earlier performed by a CPU. Warren dalziel develops the cache of this requires! This paper covers it uses. Caching layer that it is distributed file system subtly unstable or push buttons simultaneously connecting to get access of cache this form ram generally uses. Ram cache ram is using partitioned caches are general recommendations tailored to? Tes Global Ltd is It acts as a buffer between the CPU and natural memory. There is nothing to distinguish between a number that represents a dot of color in an image and a number that represents a character in a text document. Static RAM always a mention of semiconductor memory that uses bistable latching circuitry. Logical address of cached data! What is the practice form of sram. Power failure occurs before responding to cache of this ram generally classified as. As of this feeling all commonly used RAM has volatile which means that. RAM being the more common type. Give three situations in which the speed of a hard disk drive is important. It generally of ram originates, general computing applications, it keeps information is to form of building a mechanism is that executes them switch in. Correct the same, allowed in this cache form ram generally of tools and databases, efficiency of order, chances are added or all kinds of the. -

CacheOut: Leaking Data on Intel CPUs via Cache Evictions

CacheOut: Leaking Data on Intel CPUs via Cache Evictions. Stephan van Schaik* (University of Michigan), Marina Minkin (University of Michigan), Andrew Kwong (University of Michigan), Daniel Genkin (University of Michigan), Yuval Yarom (University of Adelaide and Data61).

Abstract: Recent transient-execution attacks, such as RIDL, Fallout, and ZombieLoad, demonstrated that attackers can leak information while it transits through microarchitectural buffers. Named Microarchitectural Data Sampling (MDS) by Intel, these attacks are likened to "drinking from the firehose", as the attacker has little control over what data is observed and from what origin. Unable to prevent the buffers from leaking, Intel issued countermeasures via microcode updates that overwrite the buffers when the CPU changes security domains. In this work we present CacheOut, a new microarchitectural attack that is capable of bypassing Intel's buffer overwrite countermeasures. We observe that as data is being evicted from the CPU's L1 cache, it is often transferred back to the leaky CPU buffers where it can be recovered by the attacker.

instructions, bypassing the CPU's address and permission checks. Using these techniques, an attacker can siphon off data as it appears in the buffer, bypassing all previous hardware and software countermeasures and again breaking nearly all hardware-backed security domains. Responding to the threat of unconstrained data extraction, Intel deployed countermeasures for blocking data leakage from internal CPU buffers. For older hardware, Intel augmented a legacy x86 instruction, verw, to overwrite the contents of the leaking buffers. This countermeasure was subsequently deployed by all major operating system vendors, performing

CUDA Memory Architecture

CUDA Memory Architecture. GPGPU class, Week 4.

CPU – GPU HW Differences. CPU: most die area used for memory cache; relatively few transistors for ALUs. GPU: most die area used for ALUs; relatively small caches. The situation is slowly changing: many-core CPUs; more caches on GPU die.

CPU – GPU Differences. What does that mean for SW? CPU: hides memory latency via a hierarchy of caches (L1, L2 and L3 caches); little need for thread programming (this is currently changing). GPU: memory latency not hidden by large cache (only texture cache, roughly a specialized L1 cache); needs many (active) threads to hide latency! Only many-threads applications are useful. Extra bonus of CUDA: threads can easily communicate (with limits).

A View on the G80 Architecture, "Graphics mode". [Figure: Host; Input Assembler; Setup / Rstr / ZCull; Vtx, Geom and Pixel Thread Issue; Thread Processor; SP and TF units; L1 and L2 caches; FB.]

A View on the G80 Architecture, "CUDA mode". [Figure: Host; Input Assembler; Thread Execution Manager; Parallel Data Caches; Texture units; Load/store units; Global Memory.]

CUDA Memory Types. Each thread can: read/write per-thread registers; read/write