Cache Generally Uses This Form of Ram

Total Page:16

File Type:pdf, Size:1020Kb

Load more

Recommended publications

-

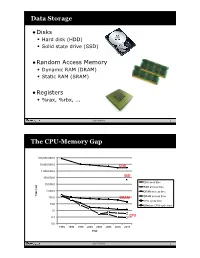

Data Storage the CPU-Memory

Data Storage •Disks • Hard disk (HDD) • Solid state drive (SSD) •Random Access Memory • Dynamic RAM (DRAM) • Static RAM (SRAM) •Registers • %rax, %rbx, ... Sean Barker 1 The CPU-Memory Gap 100,000,000.0 10,000,000.0 Disk 1,000,000.0 100,000.0 SSD Disk seek time 10,000.0 SSD access time 1,000.0 DRAM access time Time (ns) Time 100.0 DRAM SRAM access time CPU cycle time 10.0 Effective CPU cycle time 1.0 0.1 CPU 0.0 1985 1990 1995 2000 2003 2005 2010 2015 Year Sean Barker 2 Caching Smaller, faster, more expensive Cache 8 4 9 10 14 3 memory caches a subset of the blocks Data is copied in block-sized 10 4 transfer units Larger, slower, cheaper memory Memory 0 1 2 3 viewed as par@@oned into “blocks” 4 5 6 7 8 9 10 11 12 13 14 15 Sean Barker 3 Cache Hit Request: 14 Cache 8 9 14 3 Memory 0 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 Sean Barker 4 Cache Miss Request: 12 Cache 8 12 9 14 3 12 Request: 12 Memory 0 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 Sean Barker 5 Locality ¢ Temporal locality: ¢ Spa0al locality: Sean Barker 6 Locality Example (1) sum = 0; for (i = 0; i < n; i++) sum += a[i]; return sum; Sean Barker 7 Locality Example (2) int sum_array_rows(int a[M][N]) { int i, j, sum = 0; for (i = 0; i < M; i++) for (j = 0; j < N; j++) sum += a[i][j]; return sum; } Sean Barker 8 Locality Example (3) int sum_array_cols(int a[M][N]) { int i, j, sum = 0; for (j = 0; j < N; j++) for (i = 0; i < M; i++) sum += a[i][j]; return sum; } Sean Barker 9 The Memory Hierarchy The Memory Hierarchy Smaller On 1 cycle to access CPU Chip Registers Faster Storage Costlier instrs can L1, L2 per byte directly Cache(s) ~10’s of cycles to access access (SRAM) Main memory ~100 cycles to access (DRAM) Larger Slower Flash SSD / Local network ~100 M cycles to access Cheaper Local secondary storage (disk) per byte slower Remote secondary storage than local (tapes, Web servers / Internet) disk to access Sean Barker 10. -

Secondary Memory

Secondary Memory This type of memory is also known as external memory or non-volatile. It is slower than main memory. These are used for storing data/Information permanently. CPU directly does not access these memories instead they are accessed via input-output routines. Contents of secondary memories are first transferred to main memory, and then CPU can access it. For example : Hard disk, CD-ROM, DVD etc. Electronic data is a sequence of bits. This data can either reside in : • Primary storage - main memory (RAM), relatively small, fast access, expensive (cost per MB), volatile (go away when power goes off) • Secondary storage - disks, tape, large amounts of data, slower access, cheap (cost per MB), persistent (remain even when power is off) Data storage has expanded from text and numeric files to include digital music files, photographic files, video files, and much more. These new types of files require secondary storage devices with much greater capacity than floppy disks. 1 Primary storage ( or main memory or internal memory) , often referred to simply as memory , is the only directly accessible to the CPU. Primary memory can be divided into volatile and nonvolatile memories. Primary storage (Main Memory ) has three main functions: 1-It stored all or part of the program that being executed. 2-It also holds data that are being used by the program. 3-It also stored the operating system programs that manage the operation of the computer. Limitation of Primary storage 1. Limited capacity- because the cost per bit of storage is high. 2. Volatile – data stored in it is lost when the electric power is turned off Or interrupted. -

Computer Organization and Architecture Designing for Performance Ninth Edition

COMPUTER ORGANIZATION AND ARCHITECTURE DESIGNING FOR PERFORMANCE NINTH EDITION William Stallings Boston Columbus Indianapolis New York San Francisco Upper Saddle River Amsterdam Cape Town Dubai London Madrid Milan Munich Paris Montréal Toronto Delhi Mexico City São Paulo Sydney Hong Kong Seoul Singapore Taipei Tokyo Editorial Director: Marcia Horton Designer: Bruce Kenselaar Executive Editor: Tracy Dunkelberger Manager, Visual Research: Karen Sanatar Associate Editor: Carole Snyder Manager, Rights and Permissions: Mike Joyce Director of Marketing: Patrice Jones Text Permission Coordinator: Jen Roach Marketing Manager: Yez Alayan Cover Art: Charles Bowman/Robert Harding Marketing Coordinator: Kathryn Ferranti Lead Media Project Manager: Daniel Sandin Marketing Assistant: Emma Snider Full-Service Project Management: Shiny Rajesh/ Director of Production: Vince O’Brien Integra Software Services Pvt. Ltd. Managing Editor: Jeff Holcomb Composition: Integra Software Services Pvt. Ltd. Production Project Manager: Kayla Smith-Tarbox Printer/Binder: Edward Brothers Production Editor: Pat Brown Cover Printer: Lehigh-Phoenix Color/Hagerstown Manufacturing Buyer: Pat Brown Text Font: Times Ten-Roman Creative Director: Jayne Conte Credits: Figure 2.14: reprinted with permission from The Computer Language Company, Inc. Figure 17.10: Buyya, Rajkumar, High-Performance Cluster Computing: Architectures and Systems, Vol I, 1st edition, ©1999. Reprinted and Electronically reproduced by permission of Pearson Education, Inc. Upper Saddle River, New Jersey, Figure 17.11: Reprinted with permission from Ethernet Alliance. Credits and acknowledgments borrowed from other sources and reproduced, with permission, in this textbook appear on the appropriate page within text. Copyright © 2013, 2010, 2006 by Pearson Education, Inc., publishing as Prentice Hall. All rights reserved. Manufactured in the United States of America. -

CSCI 120 Introduction to Computation Bits... and Pieces (Draft)

CSCI 120 Introduction to Computation Bits... and pieces (draft) Saad Mneimneh Visiting Professor Hunter College of CUNY 1 Yes No Yes No... I am a Bit You may recall from the previous lecture that the use of electro mechanical relays, and in subsequent years, diodes and transistor, made it possible to con- struct more advanced computers, e.g. ENIAC. This is accredited to the fact that these devices could function as on/off switches. On one hand, they create the ability to encode logic into the circuits of the computer. This means that the computer can perform different tasks under different conditions, i.e. the notion of a program. For instance, one could encode the logic if A OR B then C. On the other hand, these devices allow the engineers to worry less about the values that could possibly arise in the system: the switch is either on or off. It cannot be anything in between. Therefore, this means that any errors due to fluctuation in voltage levels are greatly reduced. It would be enough to simply distinguish between high voltage and low voltage. This brings us to the question of Analog versus Digital. In simple terms, a digital system encodes information using a number of de- vices that have discrete states (e.g. on/off switches). An analog system encodes information using a device that have continuous states (e.g. measurement in an electric circuit). To build an intuition for digital versus analog, consider the problem of en- coding a number using buckets of water. One possibility is to use two kinds of buckets, full and empty. -

Cache-Aware Roofline Model: Upgrading the Loft

1 Cache-aware Roofline model: Upgrading the loft Aleksandar Ilic, Frederico Pratas, and Leonel Sousa INESC-ID/IST, Technical University of Lisbon, Portugal ilic,fcpp,las @inesc-id.pt f g Abstract—The Roofline model graphically represents the attainable upper bound performance of a computer architecture. This paper analyzes the original Roofline model and proposes a novel approach to provide a more insightful performance modeling of modern architectures by introducing cache-awareness, thus significantly improving the guidelines for application optimization. The proposed model was experimentally verified for different architectures by taking advantage of built-in hardware counters with a curve fitness above 90%. Index Terms—Multicore computer architectures, Performance modeling, Application optimization F 1 INTRODUCTION in Tab. 1. The horizontal part of the Roofline model cor- Driven by the increasing complexity of modern applica- responds to the compute bound region where the Fp can tions, microprocessors provide a huge diversity of compu- be achieved. The slanted part of the model represents the tational characteristics and capabilities. While this diversity memory bound region where the performance is limited is important to fulfill the existing computational needs, it by BD. The ridge point, where the two lines meet, marks imposes challenges to fully exploit architectures’ potential. the minimum I required to achieve Fp. In general, the A model that provides insights into the system performance original Roofline modeling concept [10] ties the Fp and the capabilities is a valuable tool to assist in the development theoretical bandwidth of a single memory level, with the I and optimization of applications and future architectures. -

ARM-Architecture Simulator Archulator

Embedded ECE Lab Arm Architecture Simulator University of Arizona ARM-Architecture Simulator Archulator Contents Purpose ............................................................................................................................................ 2 Description ...................................................................................................................................... 2 Block Diagram ............................................................................................................................ 2 Component Description .............................................................................................................. 3 Buses ..................................................................................................................................................... 3 µP .......................................................................................................................................................... 3 Caches ................................................................................................................................................... 3 Memory ................................................................................................................................................. 3 CoProcessor .......................................................................................................................................... 3 Using the Archulator ...................................................................................................................... -

Chapter 6 : Memory System

Computer Organization and Architecture Chapter 6 : Memory System Chapter – 6 Memory System 6.1 Microcomputer Memory Memory is an essential component of the microcomputer system. It stores binary instructions and datum for the microcomputer. The memory is the place where the computer holds current programs and data that are in use. None technology is optimal in satisfying the memory requirements for a computer system. Computer memory exhibits perhaps the widest range of type, technology, organization, performance and cost of any feature of a computer system. The memory unit that communicates directly with the CPU is called main memory. Devices that provide backup storage are called auxiliary memory or secondary memory. 6.2 Characteristics of memory systems The memory system can be characterised with their Location, Capacity, Unit of transfer, Access method, Performance, Physical type, Physical characteristics, Organisation. Location • Processor memory: The memory like registers is included within the processor and termed as processor memory. • Internal memory: It is often termed as main memory and resides within the CPU. • External memory: It consists of peripheral storage devices such as disk and magnetic tape that are accessible to processor via i/o controllers. Capacity • Word size: Capacity is expressed in terms of words or bytes. — The natural unit of organisation • Number of words: Common word lengths are 8, 16, 32 bits etc. — or Bytes Unit of Transfer • Internal: For internal memory, the unit of transfer is equal to the number of data lines into and out of the memory module. • External: For external memory, they are transferred in block which is larger than a word. -

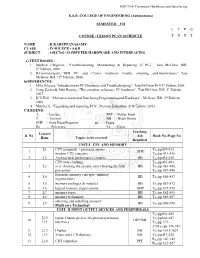

Computer Hardware and Interfacing KSR

16EC764/ Computer Hardware and Interfacing K.S.R. COLLEGE OF ENGINEERING (Autonomous) SEMESTER – VII L T P C COURSE / LESSON PLAN SCHEDULE 3 0 0 3 NAME : K.KARUPPANASAMY CLASS : IV-B.E ECE - A&B SUBJECT : 16EC764 / COMPUTER HARDWARE AND INTERFACING a) TEXT BOOKS : 1. Stephen J.Bigelow, “Troubleshooting, Maintaining & Repairing of PCs”, Tata McGraw Hill, 5th Edition, 2008. 2. B.Govindarajulu, “IBM PC and Clones hardware trouble shooting and maintenance”, Tata McGraw Hill, 12th Edition, 2008. b)REFERENCES: 1. Mike Meyers,“Introduction to PC Hardware and Troubleshooting”, Tata McGraw Hill,1st Edition,2005. 2. Craig Zacker& John Rourke, “The complete reference: PC hardware”, Tata McGraw Hill, 1st Edition, 2007. 3. D.V.Hall, “Microprocessorsand InterfacingProgrammingand Hardware”, McGraw Hill, 2nd Edition, 2006. 4. Mueller.S, “Upgrading and repairing PCS”, Pearson Education, 21th Edition, 2013. C)LEGEND: L - Lecture PPT - Power Point T - Tutorial BB - Black Board OHP - Over Head Projector pp - Pages Rx - Reference Ex - Extra Teaching Lecture S. No Aid Book No./Page No Hour Topics to be covered Required UNIT-I CPU AND MEMORY L1 CPU essentials - processor modes , T /pp429-431 1 OHP X1 modern CPU concepts TX1/pp 431-436 2 L2 Architectural performance features BB TX1/pp436-439 CPU over clocking , TX1/pp481-483, 3 L3 over clocking the system ,over clocking the Intel BB TX1/pp 483-486, processors TX1/pp 487-490 Essential memory concepts -memory 4 L4 BB T /pp 856-857 organizations X1 5 L5 memory packages & modules BB TX1/pp 857-872 6 L6 logical memory -

Multi-Tier Caching Technology™

Multi-Tier Caching Technology™ Technology Paper Authored by: How Layering an Application’s Cache Improves Performance Modern data storage needs go far beyond just computing. From creative professional environments to desktop systems, Seagate provides solutions for almost any application that requires large volumes of storage. As a leader in NAND, hybrid, SMR and conventional magnetic recording technologies, Seagate® applies different levels of caching and media optimization to benefit performance and capacity. Multi-Tier Caching (MTC) Technology brings the highest performance and areal density to a multitude of applications. Each Seagate product is uniquely tailored to meet the performance requirements of a specific use case with the right memory, NAND, and media type and size. This paper explains how MTC Technology works to optimize hard drive performance. MTC Technology: Key Advantages Capacity requirements can vary greatly from business to business. While the fastest performance can be achieved using Dynamic Random Access Memory (DRAM) cache, the data in the DRAM is not persistent through power cycles and DRAM is very expensive compared to other media. NAND flash data survives through power cycles but it is still very expensive compared to a magnetic storage medium. Magnetic storage media cache offers good performance at a very low cost. On the downside, media cache takes away overall disk drive capacity from PMR or SMR main store media. MTC Technology solves this dilemma by using these diverse media components in combination to offer different levels of performance and capacity at varying price points. By carefully tuning firmware with appropriate cache types and sizes, the end user can experience excellent overall system performance. -

CUDA 11 and A100 - WHAT’S NEW? Markus Hrywniak, 23Rd June 2020 TOPICS for TODAY

CUDA 11 AND A100 - WHAT’S NEW? Markus Hrywniak, 23rd June 2020 TOPICS FOR TODAY Ampere architecture – A100, powering DGX–A100, HGX-A100... and soon, FZ Jülich‘s JUWELS Booster New CUDA 11 Toolkit release Overview of features Talk next week: Third generation Tensor Cores GTC talks go into much more details. See references! 2 HGX-A100 4-GPU HGX-A100 8-GPU • 4 A100 with NVLINK • 8 A100 with NVSwitch 3 HIERARCHY OF SCALES Multi-System Rack Multi-GPU System Multi-SM GPU Multi-Core SM Unlimited Scale 8 GPUs 108 Multiprocessors 2048 threads 4 AMDAHL’S LAW serial section parallel section serial section Amdahl’s Law parallel section Shortest possible runtime is sum of serial section times Time saved serial section Some Parallelism Increased Parallelism Infinite Parallelism Program time = Parallel sections take less time Parallel sections take no time sum(serial times + parallel times) Serial sections take same time Serial sections take same time 5 OVERCOMING AMDAHL: ASYNCHRONY & LATENCY serial section parallel section serial section Split up serial & parallel components parallel section serial section Some Parallelism Task Parallelism Infinite Parallelism Program time = Parallel sections overlap with serial sections Parallel sections take no time sum(serial times + parallel times) Serial sections take same time 6 OVERCOMING AMDAHL: ASYNCHRONY & LATENCY CUDA Concurrency Mechanisms At Every Scope CUDA Kernel Threads, Warps, Blocks, Barriers Application CUDA Streams, CUDA Graphs Node Multi-Process Service, GPU-Direct System NCCL, CUDA-Aware MPI, NVSHMEM 7 OVERCOMING AMDAHL: ASYNCHRONY & LATENCY Execution Overheads Non-productive latencies (waste) Operation Latency Network latencies Memory read/write File I/O .. -

BES Scientific and Facility Highlights/Accomplishments FY 1997

Thirteen Years of Basic Energy Sciences Accomplishments Provided below are vignettes of some significant Basic Energy Sciences (BES) program accomplishments from FY 1997 through FY 2009. These brief accounts appear in the BES sections of the President’s FY 1999 through FY 2011 Budget Requests to Congress, respectively. The selected program highlights are representative of the broad range of studies supported in the BES program. Selected FY 2009 Scientific Highlights/Accomplishments Materials Sciences and Engineering Subprogram . Encoding Information at Sub-Atomic Scales. Using state-of-the-art nanoscience instruments and novel techniques, scientists have set a record for the smallest writing, forming letters with features that are one third of a billionth of a meter or 0.3 nanometers. This sub-atomic writing was achieved by using a scanning tunnelling microscope (STM) to precisely position carbon monoxide molecules into a desired pattern on a copper surface. The electrons that move around on the copper surface act as waves that interfere with the carbon monoxide molecules and with each other, forming an interference pattern that depends on the positions of the molecules. By altering the arrangement of the molecules, specific electron interference patterns are created, thereby encoding information for later retrieval. In addition, several data sets can be stored in a single molecular arrangement by using multiple electron energies, one of the variables possible with the STM. The same STM technology can then be used to read the data that has been stored. Because the information is stored in the electron interference pattern, rather than in the individual carbon monoxide molecules or surface copper atoms, the storage density is not limited by the size of an atom. -

A Characterization of Processor Performance in the VAX-1 L/780

A Characterization of Processor Performance in the VAX-1 l/780 Joel S. Emer Douglas W. Clark Digital Equipment Corp. Digital Equipment Corp. 77 Reed Road 295 Foster Street Hudson, MA 01749 Littleton, MA 01460 ABSTRACT effect of many architectural and implementation features. This paper reports the results of a study of VAX- llR80 processor performance using a novel hardware Prior related work includes studies of opcode monitoring technique. A micro-PC histogram frequency and other features of instruction- monitor was buiit for these measurements. It kee s a processing [lo. 11,15,161; some studies report timing count of the number of microcode cycles execute z( at Information as well [l, 4,121. each microcode location. Measurement ex eriments were performed on live timesharing wor i loads as After describing our methods and workloads in well as on synthetic workloads of several types. The Section 2, we will re ort the frequencies of various histogram counts allow the calculation of the processor events in 5 ections 3 and 4. Section 5 frequency of various architectural events, such as the resents the complete, detailed timing results, and frequency of different types of opcodes and operand !!Iection 6 concludes the paper. specifiers, as well as the frequency of some im lementation-s ecific events, such as translation bu h er misses. ?phe measurement technique also yields the amount of processing time spent, in various 2. DEFINITIONS AND METHODS activities, such as ordinary microcode computation, memory management, and processor stalls of 2.1 VAX-l l/780 Structure different kinds. This paper reports in detail the amount of time the “average’ VAX instruction The llf780 processor is composed of two major spends in these activities.