Cacheout: Leaking Data on Intel Cpus Via Cache Evictions

Total Page:16

File Type:pdf, Size:1020Kb

Load more

Recommended publications

-

SPP 2019.09.0 Component Release Notes

SPP 2019.09.0 Component Release Notes BIOS - System ROM Driver - Chipset Driver - Network Driver - Storage Driver - Storage Controller Driver - Storage Fibre Channel and Fibre Channel Over Ethernet Driver - System Driver - System Management Driver - Video Firmware - Blade Infrastructure Firmware - Lights-Out Management Firmware - Network Firmware - NVDIMM Firmware - PCIe NVMe Storage Disk Firmware - Power Management Firmware - SAS Storage Disk Firmware - SATA Storage Disk Firmware - Storage Controller Firmware - Storage Fibre Channel Firmware - System Firmware (Entitlement Required) - Storage Controller Software - Lights-Out Management Software - Management Software - Network Software - Storage Controller Software - Storage Fibre Channel Software - Storage Fibre Channel HBA Software - System Management BIOS - System ROM Top Online ROM Flash Component for Linux - HPE ProLiant DL380 Gen9/DL360 Gen9 (P89) Servers Version: 2.74_07-21-2019 (Optional) Filename: RPMS/i386/firmware-system-p89-2.74_2019_07_21-1.1.i386.rpm Important Note! Important Notes: None Deliverable Name: HPE ProLiant DL360/DL380 Gen9 System ROM - P89 Release Version: 2.74_07-21-2019 Last Recommended or Critical Revision: 2.72_03-25-2019 Previous Revision: 2.72_03-25-2019 Firmware Dependencies: None Enhancements/New Features: This revision of the System ROM includes the latest revision of the Intel microcode which provides mitigation for an Intel sighting where the system may experience a machine check after updating to the latest System ROM which contained a fix for an Intel TSX (Transactional Synchronizations Extensions) sightings. The previous microcode was first introduced in the v2.70 System ROM. This issue only impacts systems configured with Intel Xeon v4 Series processors. This issue is not unique to HPE servers. Problems Fixed: Addressed an extremely rare issue where a system booting to VMware may experience a PSOD in legacy boot mode. -

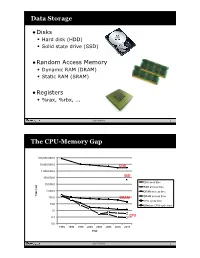

Data Storage the CPU-Memory

Data Storage •Disks • Hard disk (HDD) • Solid state drive (SSD) •Random Access Memory • Dynamic RAM (DRAM) • Static RAM (SRAM) •Registers • %rax, %rbx, ... Sean Barker 1 The CPU-Memory Gap 100,000,000.0 10,000,000.0 Disk 1,000,000.0 100,000.0 SSD Disk seek time 10,000.0 SSD access time 1,000.0 DRAM access time Time (ns) Time 100.0 DRAM SRAM access time CPU cycle time 10.0 Effective CPU cycle time 1.0 0.1 CPU 0.0 1985 1990 1995 2000 2003 2005 2010 2015 Year Sean Barker 2 Caching Smaller, faster, more expensive Cache 8 4 9 10 14 3 memory caches a subset of the blocks Data is copied in block-sized 10 4 transfer units Larger, slower, cheaper memory Memory 0 1 2 3 viewed as par@@oned into “blocks” 4 5 6 7 8 9 10 11 12 13 14 15 Sean Barker 3 Cache Hit Request: 14 Cache 8 9 14 3 Memory 0 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 Sean Barker 4 Cache Miss Request: 12 Cache 8 12 9 14 3 12 Request: 12 Memory 0 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 Sean Barker 5 Locality ¢ Temporal locality: ¢ Spa0al locality: Sean Barker 6 Locality Example (1) sum = 0; for (i = 0; i < n; i++) sum += a[i]; return sum; Sean Barker 7 Locality Example (2) int sum_array_rows(int a[M][N]) { int i, j, sum = 0; for (i = 0; i < M; i++) for (j = 0; j < N; j++) sum += a[i][j]; return sum; } Sean Barker 8 Locality Example (3) int sum_array_cols(int a[M][N]) { int i, j, sum = 0; for (j = 0; j < N; j++) for (i = 0; i < M; i++) sum += a[i][j]; return sum; } Sean Barker 9 The Memory Hierarchy The Memory Hierarchy Smaller On 1 cycle to access CPU Chip Registers Faster Storage Costlier instrs can L1, L2 per byte directly Cache(s) ~10’s of cycles to access access (SRAM) Main memory ~100 cycles to access (DRAM) Larger Slower Flash SSD / Local network ~100 M cycles to access Cheaper Local secondary storage (disk) per byte slower Remote secondary storage than local (tapes, Web servers / Internet) disk to access Sean Barker 10. -

Microcode Revision Guidance August 31, 2019 MCU Recommendations

microcode revision guidance August 31, 2019 MCU Recommendations Section 1 – Planned microcode updates • Provides details on Intel microcode updates currently planned or available and corresponding to Intel-SA-00233 published June 18, 2019. • Changes from prior revision(s) will be highlighted in yellow. Section 2 – No planned microcode updates • Products for which Intel does not plan to release microcode updates. This includes products previously identified as such. LEGEND: Production Status: • Planned – Intel is planning on releasing a MCU at a future date. • Beta – Intel has released this production signed MCU under NDA for all customers to validate. • Production – Intel has completed all validation and is authorizing customers to use this MCU in a production environment. -

SDG Adhoc Reporting

VMware Deliverable Release Notes This document does not apply to HPE Superdome servers. For information on HPE Superdome, see the following links: HPE Integrity Superdome X HPE Superdome Flex Information on HPE Synergy supported VMware ESXi OS releases, HPE ESXi Custom Images and HPE Synergy Custom SPPs is available at: VMware OS Support Tool for HPE Synergy Information on HPE Synergy Software Releases is available at: HPE Synergy Software Releases - Overview SPP 2021.04.0 Release Notes for VMware vSphere 6.5 BIOS (Login Required) - System ROM Driver - Network Driver - Storage Controller Firmware - Network Firmware - NVDIMM Firmware - Storage Controller Firmware - Storage Fibre Channel Software - Management Software - Storage Controller Software - Storage Fibre Channel Software - System Management BIOS (Login Required) - System ROM Top ROM Flash Firmware Package - HPE Apollo 2000 Gen10/HPE ProLiant XL170r/XL190r Gen10 (U38) Servers Version: 2.42_01-23-2021 (Recommended) Filename: U38_2.42_01_23_2021.fwpkg Important Note! Important Notes: None Deliverable Name: HPE Apollo 2000 Gen10/HPE ProLiant XL170r/XL190r Gen10 System ROM - U38 Release Version: 2.42_01-23-2021 Last Recommended or Critical Revision: 2.42_01-23-2021 Previous Revision: 2.40_10-26-2020 Firmware Dependencies: None Enhancements/New Features: Updated the support for Fast Fault Tolerant Memory Mode (ADDDC) to improve system uptime. Added support to the BIOS/Platform Configuration (RBSU) Time Zones to add Dublin/London (UTC+1). This support also requires the latest version of iLO Firmware, version 2.40 or later. Problems Fixed: This revision of the System ROM includes the latest revision of the Intel microcode which provides a fix for a potential machine check exception under heavy stress with short loops of instructions. -

Class-Action Lawsuit

Case 3:20-cv-00863-SI Document 1 Filed 05/29/20 Page 1 of 279 Steve D. Larson, OSB No. 863540 Email: [email protected] Jennifer S. Wagner, OSB No. 024470 Email: [email protected] STOLL STOLL BERNE LOKTING & SHLACHTER P.C. 209 SW Oak Street, Suite 500 Portland, Oregon 97204 Telephone: (503) 227-1600 Attorneys for Plaintiffs [Additional Counsel Listed on Signature Page.] UNITED STATES DISTRICT COURT DISTRICT OF OREGON PORTLAND DIVISION BLUE PEAK HOSTING, LLC, PAMELA Case No. GREEN, TITI RICAFORT, MARGARITE SIMPSON, and MICHAEL NELSON, on behalf of CLASS ACTION ALLEGATION themselves and all others similarly situated, COMPLAINT Plaintiffs, DEMAND FOR JURY TRIAL v. INTEL CORPORATION, a Delaware corporation, Defendant. CLASS ACTION ALLEGATION COMPLAINT Case 3:20-cv-00863-SI Document 1 Filed 05/29/20 Page 2 of 279 Plaintiffs Blue Peak Hosting, LLC, Pamela Green, Titi Ricafort, Margarite Sampson, and Michael Nelson, individually and on behalf of the members of the Class defined below, allege the following against Defendant Intel Corporation (“Intel” or “the Company”), based upon personal knowledge with respect to themselves and on information and belief derived from, among other things, the investigation of counsel and review of public documents as to all other matters. INTRODUCTION 1. Despite Intel’s intentional concealment of specific design choices that it long knew rendered its central processing units (“CPUs” or “processors”) unsecure, it was only in January 2018 that it was first revealed to the public that Intel’s CPUs have significant security vulnerabilities that gave unauthorized program instructions access to protected data. 2. A CPU is the “brain” in every computer and mobile device and processes all of the essential applications, including the handling of confidential information such as passwords and encryption keys. -

NEC V. Intel: a Guide to Using "Clean Room" Procedures As Evidence, 10 Computer L.J

The John Marshall Journal of Information Technology & Privacy Law Volume 10 Issue 4 Computer/Law Journal - Winter 1990 Article 1 Winter 1990 NEC v. Intel: A Guide to Using "Clean Room" Procedures as Evidence, 10 Computer L.J. 453 (1990) David S. Elkins Follow this and additional works at: https://repository.law.uic.edu/jitpl Part of the Computer Law Commons, Internet Law Commons, Privacy Law Commons, and the Science and Technology Law Commons Recommended Citation David S. Elkins, NEC v. Intel: A Guide to Using "Clean Room" Procedures as Evidence, 10 Computer L.J. 453 (1990) https://repository.law.uic.edu/jitpl/vol10/iss4/1 This Article is brought to you for free and open access by UIC Law Open Access Repository. It has been accepted for inclusion in The John Marshall Journal of Information Technology & Privacy Law by an authorized administrator of UIC Law Open Access Repository. For more information, please contact [email protected]. NEC V. INTEL: A GUIDE TO USING "CLEAN ROOM" PROCEDURES AS EVIDENCE DAVID S. ELKINS* I. INTRODUCTION The recent United States District Court decision in NEC Corp. v. Intel Corp.' has made a significant imprint on the field of copyright law in two respects. The case marks the first time that computer microcode 2 has been held copyrightable,3 and the first time that "clean room" procedures have been used as evidence in an infringement ac- tion.4 Simply put, clean room procedures comprise a method of creating a certain type of technology without the possibility of influence from outside sources. These procedures may be necessary in situations where the mere creation of the technology gives rise to an inference of copy- * J.D., King Hall School of Law, University of California, Davis, 1990; A.B., Univer- sity of California, Berkeley, 1986. -

Cache-Aware Roofline Model: Upgrading the Loft

1 Cache-aware Roofline model: Upgrading the loft Aleksandar Ilic, Frederico Pratas, and Leonel Sousa INESC-ID/IST, Technical University of Lisbon, Portugal ilic,fcpp,las @inesc-id.pt f g Abstract—The Roofline model graphically represents the attainable upper bound performance of a computer architecture. This paper analyzes the original Roofline model and proposes a novel approach to provide a more insightful performance modeling of modern architectures by introducing cache-awareness, thus significantly improving the guidelines for application optimization. The proposed model was experimentally verified for different architectures by taking advantage of built-in hardware counters with a curve fitness above 90%. Index Terms—Multicore computer architectures, Performance modeling, Application optimization F 1 INTRODUCTION in Tab. 1. The horizontal part of the Roofline model cor- Driven by the increasing complexity of modern applica- responds to the compute bound region where the Fp can tions, microprocessors provide a huge diversity of compu- be achieved. The slanted part of the model represents the tational characteristics and capabilities. While this diversity memory bound region where the performance is limited is important to fulfill the existing computational needs, it by BD. The ridge point, where the two lines meet, marks imposes challenges to fully exploit architectures’ potential. the minimum I required to achieve Fp. In general, the A model that provides insights into the system performance original Roofline modeling concept [10] ties the Fp and the capabilities is a valuable tool to assist in the development theoretical bandwidth of a single memory level, with the I and optimization of applications and future architectures. -

ARM-Architecture Simulator Archulator

Embedded ECE Lab Arm Architecture Simulator University of Arizona ARM-Architecture Simulator Archulator Contents Purpose ............................................................................................................................................ 2 Description ...................................................................................................................................... 2 Block Diagram ............................................................................................................................ 2 Component Description .............................................................................................................. 3 Buses ..................................................................................................................................................... 3 µP .......................................................................................................................................................... 3 Caches ................................................................................................................................................... 3 Memory ................................................................................................................................................. 3 CoProcessor .......................................................................................................................................... 3 Using the Archulator ...................................................................................................................... -

Accelerate Hybrid Cloud AI Workloads Solution Brief

Solution Brief Data Center | Hybrid Cloud Accelerate Hybrid Cloud AI Workloads Ease your journey to hybrid/multicloud with a reference architecture for Intel® technology and VMware Cloud Foundation Executive Summary To remain competitive in today’s world, organizations need a modern data center. Companies using these data centers must accelerate their product development, compete more successfully at a lower cost, and Solution Benefits reduce their downtime and maintenance overhead. Technology must move and change with the times—solutions for hosting applications and Intel’s VMware Cloud Foundation services must innovate and change as well. reference architecture takes advantage of Intel® compute, Companies with older and outdated data centers will want to meet these memory, storage, and networking challenges by upgrading to hybrid cloud solutions, where the data center innovations to help enable can easily and seamlessly interface between on‑premises and cloud software-defined data centers and systems. Based on VMware Cloud Foundation with VMware Tanzu, Intel hybrid/multicloudOptional adoption. partner logo goes here addressed these requirements by offering a hybrid/multicloud reference • Fast AI inference. AI workloads architecture—available in a Base and Plus configuration—that is easily can benefit from innovations deployable and manageable for virtual machines (VMs) and containers. from Intel such as Intel® DL Boost. • Flexibility and portability. VMware Cloud Foundation helps enable enterprises to run their workloads where it makes most sense, whether that’s Private Public on‑premises, in a public cloud, Cloud Cloud or in several clouds at once. VMware Cloud Foundation VMware VMware VMware VMware vSphere vRealize Suite vSA S‑T VMware SDDC Manager Figure 1. -

Beyond MOV ADD XOR – the Unusual and Unexpected

Beyond MOV ADD XOR the unusual and unexpected in x86 Mateusz "j00ru" Jurczyk, Gynvael Coldwind CONFidence 2013, Kraków Who • Mateusz Jurczyk o Information Security Engineer @ Google o http://j00ru.vexillium.org/ o @j00ru • Gynvael Coldwind o Information Security Engineer @ Google o http://gynvael.coldwind.pl/ o @gynvael Agenda • Getting you up to speed with new x86 research. • Highlighting interesting facts and tricks. • Both x86 and x86-64 discussed. Security relevance • Local vulnerabilities in CPU ↔ OS integration. • Subtle CPU-specific information disclosure. • Exploit mitigations on CPU level. • Loosely related considerations and quirks. x86 - introduction not required • Intel first ships 8086 in 1978 o 16-bit extension of the 8-bit 8085. • Only 80386 and later are used today. o first shipped in 1985 o fully 32-bit architecture o designed with security in mind . code and i/o privilege levels . memory protection . segmentation x86 - produced by... Intel, AMD, VIA - yeah, we all know these. • Chips and Technologies - left market after failed 386 compatible chip failed to boot the Windows operating system. • NEC - sold early Intel architecture compatibles such as NEC V20 and NEC V30; product line transitioned to NEC internal architecture http://www.cpu-collection.de/ x86 - other manufacturers Eastern Bloc KM1810BM86 (USSR) http://www.cpu-collection.de/ x86 - other manufacturers Transmeta, Rise Technology, IDT, National Semiconductor, Cyrix, NexGen, Chips and Technologies, IBM, UMC, DM&P Electronics, ZF Micro, Zet IA-32, RDC Semiconductors, Nvidia, ALi, SiS, GlobalFoundries, TSMC, Fujitsu, SGS-Thomson, Texas Instruments, ... (via Wikipedia) At first, a simple architecture... At first, a simple architecture... x86 bursted with new functions • No eXecute bit (W^X, DEP) o completely redefined exploit development, together with ASLR • Supervisor Mode Execution Prevention • RDRAND instruction o cryptographically secure prng • Related: TPM, VT-d, IOMMU Overall.. -

Multi-Tier Caching Technology™

Multi-Tier Caching Technology™ Technology Paper Authored by: How Layering an Application’s Cache Improves Performance Modern data storage needs go far beyond just computing. From creative professional environments to desktop systems, Seagate provides solutions for almost any application that requires large volumes of storage. As a leader in NAND, hybrid, SMR and conventional magnetic recording technologies, Seagate® applies different levels of caching and media optimization to benefit performance and capacity. Multi-Tier Caching (MTC) Technology brings the highest performance and areal density to a multitude of applications. Each Seagate product is uniquely tailored to meet the performance requirements of a specific use case with the right memory, NAND, and media type and size. This paper explains how MTC Technology works to optimize hard drive performance. MTC Technology: Key Advantages Capacity requirements can vary greatly from business to business. While the fastest performance can be achieved using Dynamic Random Access Memory (DRAM) cache, the data in the DRAM is not persistent through power cycles and DRAM is very expensive compared to other media. NAND flash data survives through power cycles but it is still very expensive compared to a magnetic storage medium. Magnetic storage media cache offers good performance at a very low cost. On the downside, media cache takes away overall disk drive capacity from PMR or SMR main store media. MTC Technology solves this dilemma by using these diverse media components in combination to offer different levels of performance and capacity at varying price points. By carefully tuning firmware with appropriate cache types and sizes, the end user can experience excellent overall system performance. -

CUDA 11 and A100 - WHAT’S NEW? Markus Hrywniak, 23Rd June 2020 TOPICS for TODAY

CUDA 11 AND A100 - WHAT’S NEW? Markus Hrywniak, 23rd June 2020 TOPICS FOR TODAY Ampere architecture – A100, powering DGX–A100, HGX-A100... and soon, FZ Jülich‘s JUWELS Booster New CUDA 11 Toolkit release Overview of features Talk next week: Third generation Tensor Cores GTC talks go into much more details. See references! 2 HGX-A100 4-GPU HGX-A100 8-GPU • 4 A100 with NVLINK • 8 A100 with NVSwitch 3 HIERARCHY OF SCALES Multi-System Rack Multi-GPU System Multi-SM GPU Multi-Core SM Unlimited Scale 8 GPUs 108 Multiprocessors 2048 threads 4 AMDAHL’S LAW serial section parallel section serial section Amdahl’s Law parallel section Shortest possible runtime is sum of serial section times Time saved serial section Some Parallelism Increased Parallelism Infinite Parallelism Program time = Parallel sections take less time Parallel sections take no time sum(serial times + parallel times) Serial sections take same time Serial sections take same time 5 OVERCOMING AMDAHL: ASYNCHRONY & LATENCY serial section parallel section serial section Split up serial & parallel components parallel section serial section Some Parallelism Task Parallelism Infinite Parallelism Program time = Parallel sections overlap with serial sections Parallel sections take no time sum(serial times + parallel times) Serial sections take same time 6 OVERCOMING AMDAHL: ASYNCHRONY & LATENCY CUDA Concurrency Mechanisms At Every Scope CUDA Kernel Threads, Warps, Blocks, Barriers Application CUDA Streams, CUDA Graphs Node Multi-Process Service, GPU-Direct System NCCL, CUDA-Aware MPI, NVSHMEM 7 OVERCOMING AMDAHL: ASYNCHRONY & LATENCY Execution Overheads Non-productive latencies (waste) Operation Latency Network latencies Memory read/write File I/O ..