CUDA OPTIMIZATION TIPS, TRICKS and TECHNIQUES Stephen Jones, GTC 2017 the Art of Doing More with Less

Total Page:16

File Type:pdf, Size:1020Kb

Load more

Recommended publications

-

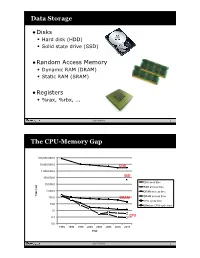

Data Storage the CPU-Memory

Data Storage •Disks • Hard disk (HDD) • Solid state drive (SSD) •Random Access Memory • Dynamic RAM (DRAM) • Static RAM (SRAM) •Registers • %rax, %rbx, ... Sean Barker 1 The CPU-Memory Gap 100,000,000.0 10,000,000.0 Disk 1,000,000.0 100,000.0 SSD Disk seek time 10,000.0 SSD access time 1,000.0 DRAM access time Time (ns) Time 100.0 DRAM SRAM access time CPU cycle time 10.0 Effective CPU cycle time 1.0 0.1 CPU 0.0 1985 1990 1995 2000 2003 2005 2010 2015 Year Sean Barker 2 Caching Smaller, faster, more expensive Cache 8 4 9 10 14 3 memory caches a subset of the blocks Data is copied in block-sized 10 4 transfer units Larger, slower, cheaper memory Memory 0 1 2 3 viewed as par@@oned into “blocks” 4 5 6 7 8 9 10 11 12 13 14 15 Sean Barker 3 Cache Hit Request: 14 Cache 8 9 14 3 Memory 0 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 Sean Barker 4 Cache Miss Request: 12 Cache 8 12 9 14 3 12 Request: 12 Memory 0 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 Sean Barker 5 Locality ¢ Temporal locality: ¢ Spa0al locality: Sean Barker 6 Locality Example (1) sum = 0; for (i = 0; i < n; i++) sum += a[i]; return sum; Sean Barker 7 Locality Example (2) int sum_array_rows(int a[M][N]) { int i, j, sum = 0; for (i = 0; i < M; i++) for (j = 0; j < N; j++) sum += a[i][j]; return sum; } Sean Barker 8 Locality Example (3) int sum_array_cols(int a[M][N]) { int i, j, sum = 0; for (j = 0; j < N; j++) for (i = 0; i < M; i++) sum += a[i][j]; return sum; } Sean Barker 9 The Memory Hierarchy The Memory Hierarchy Smaller On 1 cycle to access CPU Chip Registers Faster Storage Costlier instrs can L1, L2 per byte directly Cache(s) ~10’s of cycles to access access (SRAM) Main memory ~100 cycles to access (DRAM) Larger Slower Flash SSD / Local network ~100 M cycles to access Cheaper Local secondary storage (disk) per byte slower Remote secondary storage than local (tapes, Web servers / Internet) disk to access Sean Barker 10. -

2.5 Classification of Parallel Computers

52 // Architectures 2.5 Classification of Parallel Computers 2.5 Classification of Parallel Computers 2.5.1 Granularity In parallel computing, granularity means the amount of computation in relation to communication or synchronisation Periods of computation are typically separated from periods of communication by synchronization events. • fine level (same operations with different data) ◦ vector processors ◦ instruction level parallelism ◦ fine-grain parallelism: – Relatively small amounts of computational work are done between communication events – Low computation to communication ratio – Facilitates load balancing 53 // Architectures 2.5 Classification of Parallel Computers – Implies high communication overhead and less opportunity for per- formance enhancement – If granularity is too fine it is possible that the overhead required for communications and synchronization between tasks takes longer than the computation. • operation level (different operations simultaneously) • problem level (independent subtasks) ◦ coarse-grain parallelism: – Relatively large amounts of computational work are done between communication/synchronization events – High computation to communication ratio – Implies more opportunity for performance increase – Harder to load balance efficiently 54 // Architectures 2.5 Classification of Parallel Computers 2.5.2 Hardware: Pipelining (was used in supercomputers, e.g. Cray-1) In N elements in pipeline and for 8 element L clock cycles =) for calculation it would take L + N cycles; without pipeline L ∗ N cycles Example of good code for pipelineing: §doi =1 ,k ¤ z ( i ) =x ( i ) +y ( i ) end do ¦ 55 // Architectures 2.5 Classification of Parallel Computers Vector processors, fast vector operations (operations on arrays). Previous example good also for vector processor (vector addition) , but, e.g. recursion – hard to optimise for vector processors Example: IntelMMX – simple vector processor. -

Chapter 5 Multiprocessors and Thread-Level Parallelism

Computer Architecture A Quantitative Approach, Fifth Edition Chapter 5 Multiprocessors and Thread-Level Parallelism Copyright © 2012, Elsevier Inc. All rights reserved. 1 Contents 1. Introduction 2. Centralized SMA – shared memory architecture 3. Performance of SMA 4. DMA – distributed memory architecture 5. Synchronization 6. Models of Consistency Copyright © 2012, Elsevier Inc. All rights reserved. 2 1. Introduction. Why multiprocessors? Need for more computing power Data intensive applications Utility computing requires powerful processors Several ways to increase processor performance Increased clock rate limited ability Architectural ILP, CPI – increasingly more difficult Multi-processor, multi-core systems more feasible based on current technologies Advantages of multiprocessors and multi-core Replication rather than unique design. Copyright © 2012, Elsevier Inc. All rights reserved. 3 Introduction Multiprocessor types Symmetric multiprocessors (SMP) Share single memory with uniform memory access/latency (UMA) Small number of cores Distributed shared memory (DSM) Memory distributed among processors. Non-uniform memory access/latency (NUMA) Processors connected via direct (switched) and non-direct (multi- hop) interconnection networks Copyright © 2012, Elsevier Inc. All rights reserved. 4 Important ideas Technology drives the solutions. Multi-cores have altered the game!! Thread-level parallelism (TLP) vs ILP. Computing and communication deeply intertwined. Write serialization exploits broadcast communication -

Chapter 5 Thread-Level Parallelism

Chapter 5 Thread-Level Parallelism Instructor: Josep Torrellas CS433 Copyright Josep Torrellas 1999, 2001, 2002, 2013 1 Progress Towards Multiprocessors + Rate of speed growth in uniprocessors saturated + Wide-issue processors are very complex + Wide-issue processors consume a lot of power + Steady progress in parallel software : the major obstacle to parallel processing 2 Flynn’s Classification of Parallel Architectures According to the parallelism in I and D stream • Single I stream , single D stream (SISD): uniprocessor • Single I stream , multiple D streams(SIMD) : same I executed by multiple processors using diff D – Each processor has its own data memory – There is a single control processor that sends the same I to all processors – These processors are usually special purpose 3 • Multiple I streams, single D stream (MISD) : no commercial machine • Multiple I streams, multiple D streams (MIMD) – Each processor fetches its own instructions and operates on its own data – Architecture of choice for general purpose mps – Flexible: can be used in single user mode or multiprogrammed – Use of the shelf µprocessors 4 MIMD Machines 1. Centralized shared memory architectures – Small #’s of processors (≈ up to 16-32) – Processors share a centralized memory – Usually connected in a bus – Also called UMA machines ( Uniform Memory Access) 2. Machines w/physically distributed memory – Support many processors – Memory distributed among processors – Scales the mem bandwidth if most of the accesses are to local mem 5 Figure 5.1 6 Figure 5.2 7 2. Machines w/physically distributed memory (cont) – Also reduces the memory latency – Of course interprocessor communication is more costly and complex – Often each node is a cluster (bus based multiprocessor) – 2 types, depending on method used for interprocessor communication: 1. -

Trafficdb: HERE's High Performance Shared-Memory Data Store

TrafficDB: HERE’s High Performance Shared-Memory Data Store Ricardo Fernandes Piotr Zaczkowski Bernd Gottler¨ HERE Global B.V. HERE Global B.V. HERE Global B.V. [email protected] [email protected] [email protected] Conor Ettinoffe Anis Moussa HERE Global B.V. HERE Global B.V. conor.ettinoff[email protected] [email protected] ABSTRACT HERE's traffic-aware services enable route planning and traf- fic visualisation on web, mobile and connected car appli- cations. These services process thousands of requests per second and require efficient ways to access the information needed to provide a timely response to end-users. The char- acteristics of road traffic information and these traffic-aware services require storage solutions with specific performance features. A route planning application utilising traffic con- gestion information to calculate the optimal route from an origin to a destination might hit a database with millions of queries per second. However, existing storage solutions are not prepared to handle such volumes of concurrent read Figure 1: HERE Location Cloud is accessible world- operations, as well as to provide the desired vertical scalabil- wide from web-based applications, mobile devices ity. This paper presents TrafficDB, a shared-memory data and connected cars. store, designed to provide high rates of read operations, en- abling applications to directly access the data from memory. Our evaluation demonstrates that TrafficDB handles mil- HERE is a leader in mapping and location-based services, lions of read operations and provides near-linear scalability providing fresh and highly accurate traffic information to- on multi-core machines, where additional processes can be gether with advanced route planning and navigation tech- spawned to increase the systems' throughput without a no- nologies that helps drivers reach their destination in the ticeable impact on the latency of querying the data store. -

Cache-Aware Roofline Model: Upgrading the Loft

1 Cache-aware Roofline model: Upgrading the loft Aleksandar Ilic, Frederico Pratas, and Leonel Sousa INESC-ID/IST, Technical University of Lisbon, Portugal ilic,fcpp,las @inesc-id.pt f g Abstract—The Roofline model graphically represents the attainable upper bound performance of a computer architecture. This paper analyzes the original Roofline model and proposes a novel approach to provide a more insightful performance modeling of modern architectures by introducing cache-awareness, thus significantly improving the guidelines for application optimization. The proposed model was experimentally verified for different architectures by taking advantage of built-in hardware counters with a curve fitness above 90%. Index Terms—Multicore computer architectures, Performance modeling, Application optimization F 1 INTRODUCTION in Tab. 1. The horizontal part of the Roofline model cor- Driven by the increasing complexity of modern applica- responds to the compute bound region where the Fp can tions, microprocessors provide a huge diversity of compu- be achieved. The slanted part of the model represents the tational characteristics and capabilities. While this diversity memory bound region where the performance is limited is important to fulfill the existing computational needs, it by BD. The ridge point, where the two lines meet, marks imposes challenges to fully exploit architectures’ potential. the minimum I required to achieve Fp. In general, the A model that provides insights into the system performance original Roofline modeling concept [10] ties the Fp and the capabilities is a valuable tool to assist in the development theoretical bandwidth of a single memory level, with the I and optimization of applications and future architectures. -

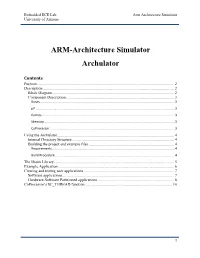

ARM-Architecture Simulator Archulator

Embedded ECE Lab Arm Architecture Simulator University of Arizona ARM-Architecture Simulator Archulator Contents Purpose ............................................................................................................................................ 2 Description ...................................................................................................................................... 2 Block Diagram ............................................................................................................................ 2 Component Description .............................................................................................................. 3 Buses ..................................................................................................................................................... 3 µP .......................................................................................................................................................... 3 Caches ................................................................................................................................................... 3 Memory ................................................................................................................................................. 3 CoProcessor .......................................................................................................................................... 3 Using the Archulator ...................................................................................................................... -

Multi-Tier Caching Technology™

Multi-Tier Caching Technology™ Technology Paper Authored by: How Layering an Application’s Cache Improves Performance Modern data storage needs go far beyond just computing. From creative professional environments to desktop systems, Seagate provides solutions for almost any application that requires large volumes of storage. As a leader in NAND, hybrid, SMR and conventional magnetic recording technologies, Seagate® applies different levels of caching and media optimization to benefit performance and capacity. Multi-Tier Caching (MTC) Technology brings the highest performance and areal density to a multitude of applications. Each Seagate product is uniquely tailored to meet the performance requirements of a specific use case with the right memory, NAND, and media type and size. This paper explains how MTC Technology works to optimize hard drive performance. MTC Technology: Key Advantages Capacity requirements can vary greatly from business to business. While the fastest performance can be achieved using Dynamic Random Access Memory (DRAM) cache, the data in the DRAM is not persistent through power cycles and DRAM is very expensive compared to other media. NAND flash data survives through power cycles but it is still very expensive compared to a magnetic storage medium. Magnetic storage media cache offers good performance at a very low cost. On the downside, media cache takes away overall disk drive capacity from PMR or SMR main store media. MTC Technology solves this dilemma by using these diverse media components in combination to offer different levels of performance and capacity at varying price points. By carefully tuning firmware with appropriate cache types and sizes, the end user can experience excellent overall system performance. -

CUDA 11 and A100 - WHAT’S NEW? Markus Hrywniak, 23Rd June 2020 TOPICS for TODAY

CUDA 11 AND A100 - WHAT’S NEW? Markus Hrywniak, 23rd June 2020 TOPICS FOR TODAY Ampere architecture – A100, powering DGX–A100, HGX-A100... and soon, FZ Jülich‘s JUWELS Booster New CUDA 11 Toolkit release Overview of features Talk next week: Third generation Tensor Cores GTC talks go into much more details. See references! 2 HGX-A100 4-GPU HGX-A100 8-GPU • 4 A100 with NVLINK • 8 A100 with NVSwitch 3 HIERARCHY OF SCALES Multi-System Rack Multi-GPU System Multi-SM GPU Multi-Core SM Unlimited Scale 8 GPUs 108 Multiprocessors 2048 threads 4 AMDAHL’S LAW serial section parallel section serial section Amdahl’s Law parallel section Shortest possible runtime is sum of serial section times Time saved serial section Some Parallelism Increased Parallelism Infinite Parallelism Program time = Parallel sections take less time Parallel sections take no time sum(serial times + parallel times) Serial sections take same time Serial sections take same time 5 OVERCOMING AMDAHL: ASYNCHRONY & LATENCY serial section parallel section serial section Split up serial & parallel components parallel section serial section Some Parallelism Task Parallelism Infinite Parallelism Program time = Parallel sections overlap with serial sections Parallel sections take no time sum(serial times + parallel times) Serial sections take same time 6 OVERCOMING AMDAHL: ASYNCHRONY & LATENCY CUDA Concurrency Mechanisms At Every Scope CUDA Kernel Threads, Warps, Blocks, Barriers Application CUDA Streams, CUDA Graphs Node Multi-Process Service, GPU-Direct System NCCL, CUDA-Aware MPI, NVSHMEM 7 OVERCOMING AMDAHL: ASYNCHRONY & LATENCY Execution Overheads Non-productive latencies (waste) Operation Latency Network latencies Memory read/write File I/O .. -

Scheduling of Shared Memory with Multi - Core Performance in Dynamic Allocator Using Arm Processor

VOL. 11, NO. 9, MAY 2016 ISSN 1819-6608 ARPN Journal of Engineering and Applied Sciences ©2006-2016 Asian Research Publishing Network (ARPN). All rights reserved. www.arpnjournals.com SCHEDULING OF SHARED MEMORY WITH MULTI - CORE PERFORMANCE IN DYNAMIC ALLOCATOR USING ARM PROCESSOR Venkata Siva Prasad Ch. and S. Ravi Department of Electronics and Communication Engineering, Dr. M.G.R. Educational and Research Institute University, Chennai, India E-Mail: [email protected] ABSTRACT In this paper we proposed Shared-memory in Scheduling designed for the Multi-Core Processors, recent trends in Scheduler of Shared Memory environment have gained more importance in multi-core systems with Performance for workloads greatly depends on which processing tasks are scheduled to run on the same or different subset of cores (it’s contention-aware scheduling). The implementation of an optimal multi-core scheduler with improved prediction of consequences between the processor in Large Systems for that we executed applications using allocation generated by our new processor to-core mapping algorithms on used ARM FL-2440 Board hardware architecture with software of Linux in the running state. The results are obtained by dynamic allocation/de-allocation (Reallocated) using lock-free algorithm to reduce the time constraints, and automatically executes in concurrent components in parallel on multi-core systems to allocate free memory. The demonstrated concepts are scalable to multiple kernels and virtual OS environments using the runtime scheduler in a machine and showed an averaged improvement (up to 26%) which is very significant. Keywords: scheduler, shared memory, multi-core processor, linux-2.6-OpenMp, ARM-Fl2440. -

The Impact of Exploiting Instruction-Level Parallelism on Shared-Memory Multiprocessors

218 IEEE TRANSACTIONS ON COMPUTERS, VOL. 48, NO. 2, FEBRUARY 1999 The Impact of Exploiting Instruction-Level Parallelism on Shared-Memory Multiprocessors Vijay S. Pai, Student Member, IEEE, Parthasarathy Ranganathan, Student Member, IEEE, Hazim Abdel-Shafi, Student Member, IEEE, and Sarita Adve, Member, IEEE AbstractÐCurrent microprocessors incorporate techniques to aggressively exploit instruction-level parallelism (ILP). This paper evaluates the impact of such processors on the performance of shared-memory multiprocessors, both without and with the latency- hiding optimization of software prefetching. Our results show that, while ILP techniques substantially reduce CPU time in multiprocessors, they are less effective in removing memory stall time. Consequently, despite the inherent latency tolerance features of ILP processors, we find memory system performance to be a larger bottleneck and parallel efficiencies to be generally poorer in ILP- based multiprocessors than in previous generation multiprocessors. The main reasons for these deficiencies are insufficient opportunities in the applications to overlap multiple load misses and increased contention for resources in the system. We also find that software prefetching does not change the memory bound nature of most of our applications on our ILP multiprocessor, mainly due to a large number of late prefetches and resource contention. Our results suggest the need for additional latency hiding or reducing techniques for ILP systems, such as software clustering of load misses and producer-initiated communication. Index TermsÐShared-memory multiprocessors, instruction-level parallelism, software prefetching, performance evaluation. æ 1INTRODUCTION HARED-MEMORY multiprocessors built from commodity performance improvements from the use of current ILP Smicroprocessors are being increasingly used to provide techniques, but the improvements vary widely. -

Lecture 17: Multiprocessors

Lecture 17: Multiprocessors • Topics: multiprocessor intro and taxonomy, symmetric shared-memory multiprocessors (Sections 4.1-4.2) 1 Taxonomy • SISD: single instruction and single data stream: uniprocessor • MISD: no commercial multiprocessor: imagine data going through a pipeline of execution engines • SIMD: vector architectures: lower flexibility • MIMD: most multiprocessors today: easy to construct with off-the-shelf computers, most flexibility 2 Memory Organization - I • Centralized shared-memory multiprocessor or Symmetric shared-memory multiprocessor (SMP) • Multiple processors connected to a single centralized memory – since all processors see the same memory organization Æ uniform memory access (UMA) • Shared-memory because all processors can access the entire memory address space • Can centralized memory emerge as a bandwidth bottleneck? – not if you have large caches and employ fewer than a dozen processors 3 SMPs or Centralized Shared-Memory Processor Processor Processor Processor Caches Caches Caches Caches Main Memory I/O System 4 Memory Organization - II • For higher scalability, memory is distributed among processors Æ distributed memory multiprocessors • If one processor can directly address the memory local to another processor, the address space is shared Æ distributed shared-memory (DSM) multiprocessor • If memories are strictly local, we need messages to communicate data Æ cluster of computers or multicomputers • Non-uniform memory architecture (NUMA) since local memory has lower latency than remote memory 5 Distributed