ARM Processor Architecture

Total Page:16

File Type:pdf, Size:1020Kb

Recommended publications

-

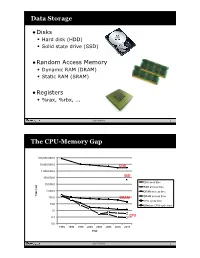

Data Storage the CPU-Memory

Data Storage

• Disks
  • Hard disk (HDD)
  • Solid state drive (SSD)
• Random Access Memory
  • Dynamic RAM (DRAM)
  • Static RAM (SRAM)
• Registers
  • %rax, %rbx, ...

Sean Barker

The CPU-Memory Gap

[Figure: time in ns (log scale, 0.1 to 100,000,000) versus year (1985 to 2015) for disk seek time, SSD access time, DRAM access time, SRAM access time, CPU cycle time and effective CPU cycle time.]

Caching

• Cache: smaller, faster, more expensive memory that caches a subset of the blocks.
• Data is copied in block-sized transfer units.
• Memory: larger, slower, cheaper memory viewed as partitioned into "blocks" (numbered 0 to 15 in the figure).

Cache Hit

Request: 14. The cache holds blocks 8, 9, 14 and 3, so block 14 is found in the cache.

Cache Miss

Request: 12. The cache holds blocks 8, 9, 14 and 3, so block 12 is not found; the request goes to memory and block 12 is copied into the cache.

Locality

• Temporal locality:
• Spatial locality:

Locality Example (1)

    sum = 0;
    for (i = 0; i < n; i++)
        sum += a[i];
    return sum;

Locality Example (2)

    int sum_array_rows(int a[M][N]) {
        int i, j, sum = 0;
        for (i = 0; i < M; i++)
            for (j = 0; j < N; j++)
                sum += a[i][j];
        return sum;
    }

Locality Example (3)

    int sum_array_cols(int a[M][N]) {
        int i, j, sum = 0;
        for (j = 0; j < N; j++)
            for (i = 0; i < M; i++)
                sum += a[i][j];
        return sum;
    }

The Memory Hierarchy

Levels from the top (smaller, faster, costlier per byte) to the bottom (larger, slower, cheaper per byte):

• Registers: 1 cycle to access
• L1, L2 cache(s) (SRAM): ~10's of cycles to access
• Main memory (DRAM): ~100 cycles to access
• Flash SSD / local network, local secondary storage (disk): ~100 M cycles to access
• Remote secondary storage (tapes, Web servers / Internet): slower than local disk to access

The figure also carries the annotations "On CPU chip" and "Storage instrs can directly access" beside the upper levels.
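The Cache Hit and Cache Miss slides above can be traced with a few lines of code. This is only a sketch: the slides do not say how blocks are placed in the cache, so the direct-mapped rule (block number modulo the number of lines) and the names `NUM_LINES` and `access` are assumptions made for illustration. The starting contents (8, 9, 14, 3) and the two requests (14, then 12) come from the slides.

```python
# Hypothetical direct-mapped cache with 4 lines. The placement rule
# "block number mod 4" is an assumption; the slides do not state one.
NUM_LINES = 4


def access(cache, block):
    """Look up a memory block; on a miss, copy it into its cache line."""
    line = block % NUM_LINES
    if cache[line] == block:
        return "hit"
    cache[line] = block  # block-sized transfer from memory, evicting the old block
    return "miss"


cache = [8, 9, 14, 3]  # cache contents shown on the slides
results = [
    access(cache, 14),  # block 14 is already cached
    access(cache, 12),  # block 12 is not cached and is fetched from memory
    access(cache, 12),  # a repeated request now finds block 12
]
print(results, cache)
```

Under this assumed placement rule, block 12 lands in the line that held block 8. The third request illustrates temporal locality: a block that was just fetched is served from the cache the next time it is requested.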

Arm Cortex-R52

Arm Cortex-R52 Product Brief

Overview

The Cortex-R52 is the most advanced processor in the Cortex-R family delivering real-time performance for functional safety. As the first Armv8-R processor, Cortex-R52 introduces support for a hypervisor, simplifying software integration with robust separation to protect safety-critical code, while maintaining real-time deterministic operation required in high dependable control systems.

Cortex-R52 addresses a range of applications such as high performance domain controllers for vehicle powertrain and chassis systems or as a safety island providing protection in complex ADAS and Autonomous Drive systems.

Safety Ready

Arm Cortex-R52 is part of Arm's Safety Ready portfolio, a collection of Arm IP that have been through various and rigorous levels of functional safety systematic flows and development. Learn more at www.arm.com/safety

Benefits

1. Software Separation: Robust hardware-enforced software separation provides confidence that software functions can't interfere with each other. For safety-related tasks, this can mean less code needs to be certified, saving time, cost and effort.
2. Multiple OS Support: Virtualization support gives developers flexibility, readily allowing consolidation of applications using multiple operating systems within a single CPU. This eases the addition of functionality without growing the number of electronic control units.
3. Real-Time Performance: High-performance multicore clusters of Cortex-R52 CPUs deliver real-time responsiveness for deterministic systems with the lowest Cortex-R latency.

Specifications

Architecture: Armv8-R
Instruction Set: Arm and Thumb-2. Supports DSP instructions and a configurable Floating-Point Unit either with single-precision or double precision and Neon.

ARM Architecture

ARM Architecture
Computer Organization and Assembly Languages
Yung-Yu Chuang
with slides by Peng-Sheng Chen, Ville Pietikainen

ARM history
• 1983 developed by Acorn computers
  – To replace 6502 in BBC computers
  – 4-man VLSI design team
  – Its simplicity comes from the inexperienced team
  – Match the needs for generalized SoC for reasonable power, performance and die size
  – The first commercial RISC implementation
• 1990 ARM (Advanced RISC Machine), owned by Acorn, Apple and VLSI

ARM Ltd
Design and license ARM core design but not fabricate

Why ARM?
• One of the most licensed and thus widespread processor cores in the world
  – Used in PDA, cell phones, multimedia players, handheld game console, digital TV and cameras
  – ARM7: GBA, iPod
  – ARM9: NDS, PSP, Sony Ericsson, BenQ
  – ARM11: Apple iPhone, Nokia N93, N800
  – 90% of 32-bit embedded RISC processors till 2009
• Used especially in portable devices due to its low power consumption and reasonable performance

ARM powered products

ARM processors
• A simple but powerful design
• A whole family of designs sharing similar design principles and a common instruction set

Naming ARM
• ARMxyzTDMIEJFS
  – x: series
  – y: MMU
  – z: cache
  – T: Thumb
  – D: debugger
  – M: Multiplier
  – I: EmbeddedICE (built-in debugger hardware)
  – E: Enhanced instruction
  – J: Jazelle (JVM)
  – F: Floating-point
  – S: Synthesizable version (source code version for EDA tools)

Popular ARM architectures
• ARM7TDMI
  – 3 pipeline stages (fetch/decode/execute)
  – High code density/low power consumption
  – One of the most used ARM-version (for low-end systems)
  – All ARM cores after ARM7TDMI include TDMI even if they do not include TDMI in their labels
• ARM9TDMI
  – Compatible with ARM7
  – 5 stages (fetch/decode/execute/memory/write)
  – Separate instruction and data cache
• ARM11

ARM family comparison
[Table: ARM family comparison by year (1995, 1997, 1999, 2003).]

ARM is a RISC
• RISC: simple but powerful instructions that execute within a single cycle at high clock speed.
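The naming scheme in the "Naming ARM" slide can be read mechanically. The sketch below decodes only the suffix letters listed in that slide; the function name `decode_suffix` and the table `SUFFIX_FEATURES` are hypothetical, and the digits (series, MMU, cache) are skipped rather than interpreted because the excerpt does not give their encoding. Letters not listed in the slide are ignored.

```python
# Suffix letters and meanings as listed in the excerpt's naming slide.
SUFFIX_FEATURES = {
    "T": "Thumb",
    "D": "debugger",
    "M": "Multiplier",
    "I": "EmbeddedICE",
    "E": "Enhanced instruction",
    "J": "Jazelle",
    "F": "Floating-point",
    "S": "Synthesizable version",
}


def decode_suffix(name):
    """Return the features named by the letters that follow 'ARM<digits>'."""
    upper = name.upper()
    if not upper.startswith("ARM"):
        raise ValueError("expected a name of the form ARMxyz...")
    suffix = upper[3:].lstrip("0123456789")  # drop the x, y, z digits
    # Hyphens and letters outside the slide's list are skipped.
    return [SUFFIX_FEATURES[c] for c in suffix if c in SUFFIX_FEATURES]


print(decode_suffix("ARM7TDMI"))
print(decode_suffix("ARM926EJ-S"))
```

For example, `ARM7TDMI` decodes to Thumb, debugger, Multiplier and EmbeddedICE, which matches the four letters the slide says later cores include implicitly.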

OMAP-L138 DSP+ARM9™ Development Kit Low-Cost Development Kit to Jump-Start Real-Time Signal Processing Innovation

OMAP-L138 DSP+ARM9™ Development Kit
Low-cost development kit to jump-start real-time signal processing innovation

Texas Instruments' OMAP-L138 development kit is a new, robust low-cost development board designed to spark innovative designs based on the OMAP-L138 processor. Along with TI's new included Linux™ Software Development Kit (SDK), the OMAP-L138 development kit is ideal for power-optimized, networked applications including industrial control, medical diagnostics and communications. It includes the OMAP-L138 baseboard, SD cards with a Linux demo, DSP/BIOS™ kernel and SDK, and Code Composer Studio™ (CCStudio) Integrated Development Environment (IDE), a power supply and cord, VGA cable and USB cable.

Technical details

The OMAP-L138 development kit is based on the OMAP-L138 DSP+ARM9 processor, a low-power applications processor based on an ARM926EJ-S and a TMS320C674x DSP core. It provides significantly lower power than other members of the TMS320C6000™ platform of DSPs. The OMAP-L138 processor enables developers to quickly design and develop devices featuring robust operating systems support and rich user interfaces with a fully integrated mixed-processor solution.

• SATA port (3 Gbps)
• VGA port (15-pin D-SUB)
• LCD port (Beagleboard-XM connectors)
• 3 audio ports
  • 1 line in
  • 1 line out
  • 1 MIC in
• Composite in (RCA jack)
• Leopard Imaging camera sensor input (32-pin ZIP connector)

Key features and benefits

• OMAP-L138 DSP+ARM9 software and development kit to jump-start real-time signal processing innovation
• Reduces design work with downloadable and duplicable board schematics and design files
• Fast and easy development of applications requiring fingerprint recognition and face detection with embedded analytics
• Low-power OMAP-L138 DSP+

Cache-Aware Roofline Model: Upgrading the Loft

Cache-aware Roofline model: Upgrading the loft

Aleksandar Ilic, Frederico Pratas, and Leonel Sousa
INESC-ID/IST, Technical University of Lisbon, Portugal
{ilic,fcpp,las}@inesc-id.pt

Abstract—The Roofline model graphically represents the attainable upper bound performance of a computer architecture. This paper analyzes the original Roofline model and proposes a novel approach to provide a more insightful performance modeling of modern architectures by introducing cache-awareness, thus significantly improving the guidelines for application optimization. The proposed model was experimentally verified for different architectures by taking advantage of built-in hardware counters with a curve fitness above 90%.

Index Terms—Multicore computer architectures, Performance modeling, Application optimization

1 INTRODUCTION

Driven by the increasing complexity of modern applications, microprocessors provide a huge diversity of computational characteristics and capabilities. While this diversity is important to fulfill the existing computational needs, it imposes challenges to fully exploit architectures' potential. A model that provides insights into the system performance capabilities is a valuable tool to assist in the development and optimization of applications and future architectures.

[Text from the facing column of the same page:] in Tab. 1. The horizontal part of the Roofline model corresponds to the compute bound region where the Fp can be achieved. The slanted part of the model represents the memory bound region where the performance is limited by BD. The ridge point, where the two lines meet, marks the minimum I required to achieve Fp. In general, the original Roofline modeling concept [10] ties the Fp and the theoretical bandwidth of a single memory level, with the I
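The description of the horizontal part, the slanted part and the ridge point corresponds to the usual statement of the original Roofline bound, attainable performance = min(Fp, BD × I). The sketch below evaluates that bound; it is not the cache-aware model the paper proposes. The function names and the numeric values for Fp and BD are hypothetical and chosen only to make the arithmetic easy to follow.

```python
def attainable_performance(peak_flops, bandwidth, intensity):
    """Original Roofline bound: min of the compute roof and the memory slope."""
    return min(peak_flops, bandwidth * intensity)


def ridge_point(peak_flops, bandwidth):
    """Minimum operational intensity I at which the peak Fp can be reached."""
    return peak_flops / bandwidth


FP = 100.0  # hypothetical peak performance, GFLOP/s
BD = 25.0   # hypothetical memory bandwidth, GB/s

memory_bound = attainable_performance(FP, BD, 1.0)   # I below the ridge point
compute_bound = attainable_performance(FP, BD, 8.0)  # I above the ridge point
print(memory_bound, compute_bound, ridge_point(FP, BD))
```

With these assumed values the ridge point sits at I = 4 flops/byte: a kernel with I = 1 is limited by BD, while a kernel with I = 8 can reach Fp.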

ARM-Architecture Simulator Archulator

Embedded ECE Lab, Arm Architecture Simulator, University of Arizona

ARM-Architecture Simulator Archulator

Contents

• Purpose
• Description
  • Block Diagram
  • Component Description
    • Buses
    • µP
    • Caches
    • Memory
    • CoProcessor
• Using the Archulator

μC/OS-II™ Real-Time Operating System

μC/OS-II™ Real-Time Operating System

DESCRIPTION

μC/OS-II is a portable, ROMable, scalable, preemptive, real-time deterministic multitasking kernel for microprocessors, microcontrollers and DSPs. Offering unprecedented ease-of-use, μC/OS-II is delivered with complete 100% ANSI C source code and in-depth documentation. μC/OS-II runs on the largest number of processor architectures, with ports available for download from the Micrium Web site.

μC/OS-II manages up to 250 application tasks. μC/OS-II includes: semaphores; event flags; mutual-exclusion semaphores that eliminate unbounded priority inversions; message mailboxes and queues; task, time and timer management; and fixed sized memory block management.

μC/OS-II's footprint can be scaled (between 5 Kbytes to 24 Kbytes) to only contain the features required for a specific application. The execution time for most services provided by μC/OS-II is both constant and deterministic; execution times do not depend on the number of tasks running in the application.

A validation suite provides all documentation necessary to support the use of μC/OS-II in safety-critical systems. Specifically, μC/OS-II is currently implemented in a wide array of high level of safety-critical devices, including:

APPLICATIONS

■ Avionics
■ Medical equipment/devices
■ Data communications equipment
■ White goods (appliances)
■ Mobile Phones, PDAs, MIDs
■ Industrial controls
■ Consumer electronics
■ Automotive
■ A wide-range of embedded applications

FEATURES

■ Unprecedented ease-of-use combined with an extremely short learning curve enables rapid time-to-market advantage.
■ Runs on the largest number of processor architectures with ports easily downloaded.
■ Scalability – Between 5 Kbytes to 24 Kbytes
■ Max interrupt disable time: 200 clock cycles (typical configuration, ARM9, no wait states).

Multi-Tier Caching Technology™

Multi-Tier Caching Technology™
Technology Paper
Authored by:

How Layering an Application's Cache Improves Performance

Modern data storage needs go far beyond just computing. From creative professional environments to desktop systems, Seagate provides solutions for almost any application that requires large volumes of storage. As a leader in NAND, hybrid, SMR and conventional magnetic recording technologies, Seagate® applies different levels of caching and media optimization to benefit performance and capacity. Multi-Tier Caching (MTC) Technology brings the highest performance and areal density to a multitude of applications. Each Seagate product is uniquely tailored to meet the performance requirements of a specific use case with the right memory, NAND, and media type and size. This paper explains how MTC Technology works to optimize hard drive performance.

MTC Technology: Key Advantages

Capacity requirements can vary greatly from business to business. While the fastest performance can be achieved using Dynamic Random Access Memory (DRAM) cache, the data in the DRAM is not persistent through power cycles and DRAM is very expensive compared to other media. NAND flash data survives through power cycles but it is still very expensive compared to a magnetic storage medium. Magnetic storage media cache offers good performance at a very low cost. On the downside, media cache takes away overall disk drive capacity from PMR or SMR main store media. MTC Technology solves this dilemma by using these diverse media components in combination to offer different levels of performance and capacity at varying price points. By carefully tuning firmware with appropriate cache types and sizes, the end user can experience excellent overall system performance.

CUDA 11 and A100 - What's New? Markus Hrywniak, 23rd June 2020

CUDA 11 AND A100 - WHAT'S NEW?
Markus Hrywniak, 23rd June 2020

TOPICS FOR TODAY

Ampere architecture – A100, powering DGX-A100, HGX-A100... and soon, FZ Jülich's JUWELS Booster
New CUDA 11 Toolkit release
Overview of features
Talk next week: Third generation Tensor Cores
GTC talks go into much more details. See references!

HGX-A100

• HGX-A100 4-GPU: 4 A100 with NVLINK
• HGX-A100 8-GPU: 8 A100 with NVSwitch

HIERARCHY OF SCALES

• Multi-System Rack: Unlimited Scale
• Multi-GPU System: 8 GPUs
• Multi-SM GPU: 108 Multiprocessors
• Multi-Core SM: 2048 threads

AMDAHL'S LAW

[Figure: program timeline of alternating serial and parallel sections, with the time saved marked.]

Amdahl's Law: Shortest possible runtime is sum of serial section times.

• Some Parallelism: Program time = sum(serial times + parallel times)
• Increased Parallelism: Parallel sections take less time. Serial sections take same time.
• Infinite Parallelism: Parallel sections take no time. Serial sections take same time.

OVERCOMING AMDAHL: ASYNCHRONY & LATENCY

Split up serial & parallel components

• Some Parallelism: Program time = sum(serial times + parallel times)
• Task Parallelism: Parallel sections overlap with serial sections
• Infinite Parallelism: Parallel sections take no time. Serial sections take same time.

OVERCOMING AMDAHL: ASYNCHRONY & LATENCY

CUDA Concurrency Mechanisms At Every Scope

• CUDA Kernel: Threads, Warps, Blocks, Barriers
• Application: CUDA Streams, CUDA Graphs
• Node: Multi-Process Service, GPU-Direct
• System: NCCL, CUDA-Aware MPI, NVSHMEM

OVERCOMING AMDAHL: ASYNCHRONY & LATENCY

Execution Overheads: Non-productive latencies (waste)
Operation Latency: Network latencies, Memory read/write, File I/O ..
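The Amdahl's Law slide in this excerpt gives the program time as the sum of serial and parallel section times. A minimal sketch of that relation follows. It assumes each parallel section scales perfectly with the number of workers, which the slides do not claim for real hardware; the function name `program_time` and the section durations are hypothetical.

```python
def program_time(serial_times, parallel_times, workers):
    """Program time = serial times + parallel times, the latter split over workers.

    Assumes ideal scaling: a parallel section of length t takes t / workers.
    """
    return sum(serial_times) + sum(t / workers for t in parallel_times)


serial = [1.0, 1.0, 1.0]  # hypothetical serial section durations
parallel = [4.0, 4.0]     # hypothetical parallel section durations

some = program_time(serial, parallel, 1)                  # no speedup yet
increased = program_time(serial, parallel, 4)             # parallel sections take less time
infinite = program_time(serial, parallel, float("inf"))   # parallel sections take no time
print(some, increased, infinite)
```

With unlimited workers the result collapses to the sum of the serial sections, which is the "shortest possible runtime" named on the slide. The later slides address exactly that floor by overlapping parallel work with serial work instead of only shrinking the parallel sections.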

SEGGER — the Embedded Experts It Simply Works!

SEGGER — The Embedded Experts
It simply works!

Buyout licensing for Embedded Studio
No license server, no hardware dongle

Monheim, Germany – November 26th, 2018

It only takes two minutes to install: With unlimited evaluation and the freedom to use the software at no cost for non-commercial purposes, SEGGER has always made it easy to use Embedded Studio. In addition to this and by popular demand from developers in larger corporations, SEGGER introduces a buyout licensing option that makes things even easier.

The new buyout option allows usage by an unlimited number of users, without copy protection, making it very easy to install and use the software anywhere: In the office, on the road, at customer's site or at home. No license server, no hardware dongle. Developers can fully concentrate on what they do and like best and what they are paid for: Develop software rather than deal with copy protection issues.

Being available for Windows, macOS and Linux, it reduces the dependencies on any third party. It is the perfect choice for mid-size to large corporations with strict licensing policies. In addition to that, Embedded Studio's source code is available.

"We are seeing more and more companies adopting Embedded Studio as their Development Environment of choice throughout their entire organization. Listening to our customers, we found that this new option helps to make Embedded Studio even more attractive. Easier is better", says Rolf Segger, Founder of SEGGER.

Get more information on the new SEGGER Embedded Studio at: www.segger.com/embedded-studio.html

###

About Embedded Studio

Embedded Studio is a leading Integrated Development Environment (IDE) made by and for embedded software developers.

ARM Roadmap Spring 2017

ARM Roadmap Spring 2017
Robert Boys [email protected]
Version 9.0

Agenda

• Roadmap
• Architectures
• Issues
• What is NEW!
• big.LITTLE™
• 64 Bit
• Cortex®-A15
• 64 BIT
• DynamIQ

[Image: ARM1™ die]

© ARM 2017

In the Beginning…

• 1985 32 years ago in a barn....
• 12 engineers
• Cash from Apple and VLSI
• IP from Acorn Computers
• Proof of concept
• No patents, no independent customers, product not ready for mass market.
• A barn, some energy, experience and belief: "We're going to be the Global Standard"

The Cortex Processor Roadmap in 2008

[Figure: roadmap with Application, Real-time and Microcontroller tracks. Cores shown: Cortex-A9, Cortex-A8, ARM11, Cortex-R4F, ARM9, Cortex-R4, ARM7TDMI, ARM7, Cortex-M3, SC300, Cortex-M1.]

ARM 2017 Processor Roadmap

[Figure: roadmap, not to scale, with Application, Real-time and Microcontroller tracks and the labels "ARM 7, 9, 11", "MMU", "No MMU", "200+ MHz" and "72 – 150+ MHz". Cores shown: Cortex-A73, Cortex-A35, Cortex-A32, Cortex-A72, Cortex-A57, Cortex-A17, Cortex-A53, Cortex-A15, Cortex-A9 (Dual), Cortex-A9 (MPCore), Cortex-A8, Cortex-A7, ARM11(MP), Cortex-A5, ARM926EJ-S, Cortex-R52, Cortex-R8, Cortex-R7, ARM9, Cortex-R5, Cortex-R4, Cortex-M7, Cortex-M33, ARM7TDMI, Cortex-M4, Cortex-M3, SC300, Cortex-M23, DesignStart™, Cortex-M1, SC000, Cortex-M0+, Cortex-M0.]

Versions, cores and architectures?

| Family | Architecture | Cores |
|---|---|---|
| ARM7TDMI | ARMv4T | ARM7TDMI(S) |
| ARM9, ARM9E | ARMv5TE | ARM926EJ-S, ARM966E-S |
| ARM11 | ARMv6 (T2) | ARM1136(F), 1156T2(F)-S, 1176JZ(F), ARM11 MPCore™ |
| Cortex-A | ARMv7-A | Cortex-A5, A7, A8, A9, A12, A15, A17 |
| Cortex-R | ARMv7-R | Cortex-R4(F), Cortex-R5, R7, R8 … |
| Cortex-M | ARMv7-M | Cortex-M3, M4, M7 (M7 is ARMv7-ME) |
| Cortex-M | ARMv6-M | Cortex-M1, M0, M0+ |
| NEW! | ARMv8-A | 64 Bit: Cortex-A35/A53/57/A72, Cortex-A73, Cortex-A32 |
| NEW! | ARMv8-R | 32 Bit: Cortex-R52 |
| NEW! | ARMv8-M | 32 Bit: Cortex-M23 & M33, TrustZone® |

What is New? DynamIQ!

J-Flash User Guide of the Stand-Alone Flash Programming Software

J-Flash
User guide of the stand-alone flash programming software

Document: UM08003
Software Version: 7.50
Date: July 1, 2021

A product of SEGGER Microcontroller GmbH
www.segger.com

Disclaimer

Specifications written in this document are believed to be accurate, but are not guaranteed to be entirely free of error. The information in this manual is subject to change for functional or performance improvements without notice. Please make sure your manual is the latest edition. While the information herein is assumed to be accurate, SEGGER Microcontroller GmbH (SEGGER) assumes no responsibility for any errors or omissions. SEGGER makes and you receive no warranties or conditions, express, implied, statutory or in any communication with you. SEGGER specifically disclaims any implied warranty of merchantability or fitness for a particular purpose.

Copyright notice

You may not extract portions of this manual or modify the PDF file in any way without the prior written permission of SEGGER. The software described in this document is furnished under a license and may only be used or copied in accordance with the terms of such a license.

© 2004-2018 SEGGER Microcontroller GmbH, Monheim am Rhein / Germany

Trademarks

Names mentioned in this manual may be trademarks of their respective companies. Brand and product names are trademarks or registered trademarks of their respective holders.

Contact address

SEGGER Microcontroller GmbH
Ecolab-Allee 5
D-40789 Monheim am Rhein
Germany
Tel. +49-2173-99312-0
Fax. +49-2173-99312-28
E-mail: [email protected]
Internet: www.segger.com

J-Flash User Guide (UM08003) © 2004-2018 SEGGER Microcontroller GmbH

Manual versions

This manual describes the current software version.