Generations of Microprocessors Area Costs of Caches What Is Exactly

Total Page:16

File Type:pdf, Size:1020Kb

Recommended publications

The Design and Verification of the AlphaStation 600 5-Series Workstation by John H

The Design and Verification of the AlphaStation 600 5-series Workstation by John H. Zurawski, John E. Murray, and Paul J. Lemmon

ABSTRACT

The AlphaStation 600 5-series workstation is a high-performance, uniprocessor design based on the Alpha 21164 microprocessor and on the PCI bus. Six CMOS ASICs provide high-bandwidth, low-latency interconnects between the CPU, the main memory, and the I/O subsystem. The verification effort used directed, pseudorandom testing on a VERILOG software model. A hardware-based verification technique provided a test throughput that resulted in a significant improvement over software tests. This technique currently involves the use of graphics cards to emulate generic DMA devices. A PCI hardware demon is under development to further enhance the capability of the hardware-based verification.

INTRODUCTION

The high-performance AlphaStation 600 5-series workstation is based on the fastest Alpha microprocessor to date -- the Alpha 21164.[1] The I/O subsystem uses the 64-bit version of the Peripheral Component Interconnect (PCI) and the Extended Industry Standard Architecture (EISA) bus. The AlphaStation 600 supports three operating systems: Digital UNIX (formerly DEC OSF/1), OpenVMS, and Microsoft's Windows NT. This workstation series uses the DECchip 21171 chip set designed and built by Digital. These chips provide high-bandwidth, low-latency interconnects between the CPU, the main memory, and the PCI bus. This paper describes the architecture and features of the AlphaStation 600 5-series workstation and the DECchip 21171 chip set. The system overview is first presented, followed by a detailed discussion of the chip set. The paper then describes the cache and memory designs, detailing how the memory design evolved from the workstation's requirements.

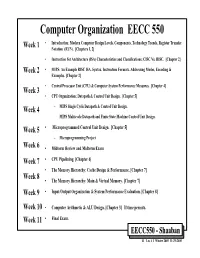

Computer Organization EECC 550 • Introduction: Modern Computer Design Levels, Components, Technology Trends, Register Transfer Notation (RTN)

Computer Organization EECC 550

Week 1
• Introduction: Modern Computer Design Levels, Components, Technology Trends, Register Transfer Notation (RTN). [Chapters 1, 2]
• Instruction Set Architecture (ISA) Characteristics and Classifications: CISC Vs. RISC. [Chapter 2]

Week 2
• MIPS: An Example RISC ISA. Syntax, Instruction Formats, Addressing Modes, Encoding & Examples. [Chapter 2]
• Central Processor Unit (CPU) & Computer System Performance Measures. [Chapter 4]

Week 3
• CPU Organization: Datapath & Control Unit Design. [Chapter 5]

Week 4
- MIPS Single Cycle Datapath & Control Unit Design.
- MIPS Multicycle Datapath and Finite State Machine Control Unit Design.

Week 5
• Microprogrammed Control Unit Design. [Chapter 5]
- Microprogramming Project

Week 6
• Midterm Review and Midterm Exam

Week 7
• CPU Pipelining. [Chapter 6]
• The Memory Hierarchy: Cache Design & Performance. [Chapter 7]

Week 8
• The Memory Hierarchy: Main & Virtual Memory. [Chapter 7]

Week 9
• Input/Output Organization & System Performance Evaluation. [Chapter 8]

Week 10
• Computer Arithmetic & ALU Design. [Chapter 3] If time permits.

Week 11
• Final Exam.

EECC550 - Shaaban, Lec # 1, Winter 2005, 11-29-2005

Computing System History/Trends + Instruction Set Architecture (ISA) Fundamentals
• Computing Element Choices:
- Computing Element Programmability
- Spatial vs. Temporal Computing
- Main Processor Types/Applications
• General Purpose Processor Generations
• The Von Neumann Computer Model
• CPU Organization (Design)
• Recent Trends in Computer Design/performance
• Hierarchy

Computer Architectures: An Overview

Computer Architectures: An Overview

PDF generated using the open source mwlib toolkit. See http://code.pediapress.com/ for more information. PDF generated at: Sat, 25 Feb 2012 22:35:32 UTC

Contents

Articles: Microarchitecture, x86, PowerPC, IBM POWER, MIPS architecture, SPARC, ARM architecture, DEC Alpha, AlphaStation, AlphaServer, Very long instruction word, Instruction-level parallelism, Explicitly parallel instruction computing

References: Article Sources and Contributors; Image Sources, Licenses and Contributors

Article Licenses: License

Microarchitecture

In computer engineering, microarchitecture (sometimes abbreviated to µarch or uarch), also called computer organization, is the way a given instruction set architecture (ISA) is implemented on a processor. A given ISA may be implemented with different microarchitectures.[1] Implementations might vary due to different goals of a given design or due to shifts in technology.[2] Computer architecture is the combination of microarchitecture and instruction set design.

Relation to instruction set architecture

The ISA is roughly the same as the programming model of a processor as seen by an assembly language programmer or compiler writer. The ISA includes the execution model, processor registers, address and data formats among other things. The microarchitecture includes the constituent parts of the processor and how these interconnect and interoperate to implement the ISA.

[Figure: Intel Core microarchitecture]

The microarchitecture of a machine is usually represented as (more or less detailed) diagrams that describe the interconnections of the various microarchitectural elements of the machine, which may be everything from single gates and registers, to complete arithmetic logic units (ALUs) and even larger elements.

VAX VMS at 20

1977–1997... and beyond

Nothing Stops It!

Of all the winning attributes of the OpenVMS operating system, perhaps its key success factor is its evolutionary spirit. Some would say OpenVMS was revolutionary. But I would prefer to call it evolutionary because its transition has been peaceful and constructive. Over a 20-year period, OpenVMS has experienced evolution in five arenas. First, it evolved from a system running on some 20 printed circuit boards to a single chip. Second, it evolved from being proprietary to open. Third, it evolved from running on CISC-based VAX to RISC-based Alpha systems. Fourth, VMS evolved from being primarily a technical operating system, to a commercial operating system, to a high availability mission-critical commercial operating system. And fifth, VMS evolved from time-sharing to a workstation environment, to a client/server computing style environment. The hardware has experienced a similar evolution. Just as the 16-bit PDP systems laid the groundwork for the VAX platform, VAX laid the groundwork for Alpha, the industry’s leading 64-bit systems. While the platforms have grown and changed, the success continues. Today, OpenVMS is the most flexible and adaptable operating system on the planet. What started out as the concept of ‘Starlet’ in 1975 is moving into ‘Galaxy’ for the 21st century. And like the universe, there is no end in sight.

Jesse Lipcon, Vice President of UNIX and OpenVMS Systems Business Unit

TABLE OF CONTENTS

CHAPTER I Changing the Face of Computing
CHAPTER II Setting the Stage
CHAPTER

The AlphaServer 4100 Cached Processor Module Maurice B

Digital Technical Journal

AlphaServer 4100 System. Oracle and Sybase Database Products for VLM. Instruction Execution on Alpha Processors.

Editorial: Jane C. Blake, Managing Editor; Kathleen M. Stetson, Editor; Helen L. Patterson, Editor

Circulation: Catherine M. Phillips, Administrator; Dorothea B. Cassady, Secretary

Production: Christa W. Jessico, Production Editor; Anne S. Katzeff, Typographer; Peter R. Woodbury, Illustrator

The Digital Technical Journal is a refereed journal published quarterly by Digital Equipment Corporation, 50 Nagog Park, AK02-3/B3, Acton, MA 01720-9843. Hard-copy subscriptions can be ordered by sending a check in U.S. funds (made payable to Digital Equipment Corporation) to the published-by address. General subscription rates are $40.00 (non-U.S. $60) for four issues and $75.00 (non-U.S. $115) for eight issues. University and college professors and Ph.D. students in the electrical engineering and computer science fields receive complimentary subscriptions upon request.

The following are trademarks of Digital Equipment Corporation: AlphaServer, AlphaStation, DEC, DECnet, DIGITAL, the DIGITAL logo, VAX, VMS, and ULTRIX. AIM is a trademark of AIM Technology, Inc. CCT is a registered trademark of Cooper and Chyan Technologies, Inc. CHALLENGE and Silicon Graphics are registered trademarks and POWER CHALLENGE is a trademark of Silicon Graphics, Inc. Compaq is a registered trademark and ProLiant is a trademark of Compaq Computer Corporation. HP is a registered trademark of Hewlett-Packard

Data Caches for Superscalar Processors*

Data Caches for Superscalar Processors*

Toni Juan (antonioj@x.upc.es) and Juan J. Navarro (juanjo@ac.upc.es), Dept. Arquitectura de Computadors, Universitat Politecnica de Catalunya, Barcelona, Spain
Olivier Temam ([email protected]), PRiSM, Versailles University, France

Abstract

As the number of instructions executed in parallel increases, superscalar processors will require higher bandwidth from data caches. Because of the high cost of true multi-ported caches, alternative cache designs must be evaluated. The purpose of this study is to examine the data cache bandwidth requirements of high-degree superscalar processors, and investigate alternative solutions. The designs studied range from classic solutions like multi-banked caches to more complex solutions recently proposed in the literature. The performance tradeoffs of these different cache designs are examined in detail. Then, using a chip area cost model, all solutions are compared with respect to both cost and performance.

... are more difficult to design because load/store requests sent in parallel share no obvious locality properties. The difficulty is to propose a design that can cope with increasing degree of instruction parallelism. The solutions presently implemented in processors can be classified as:

• True multi-porting. With respect to performance true multi-porting is clearly an ideal solution, but its chip area cost is high. Cost can be partly reduced by accepting a certain degradation of cache access time that would reduce the performance.
• Multiple Cache Copies. For n accesses, the cache must be replicated n times with no benefit to storage space. Moreover, store requests are sent simultaneously to all cache copies for coherence and thus no other cache request can

Zarka Cvetanovic and R.E. Kessler Compaq Computer Corporation

PERFORMANCE ANALYSIS OF THE ALPHA 21264-BASED COMPAQ ES40 SYSTEM

Zarka Cvetanovic and R.E. Kessler, Compaq Computer Corporation

Abstract

This paper evaluates performance characteristics of the Compaq ES40 shared memory multiprocessor. The ES40 system contains up to four Alpha 21264 CPUs together with a high-performance memory system. We qualitatively describe architectural features included in the 21264 microprocessor and the surrounding system chipset. We further quantitatively show the performance effects of these features using benchmark results and profiling data collected from industry-standard commercial and technical workloads. The profile data includes basic performance information – such as instructions per cycle, branch mispredicts, and cache misses – as well as other data that specifically characterizes the 21264. Wherever possible, we compare and contrast the ES40 to the AlphaServer 4100 – a previous-generation Alpha system containing four Alpha 21164 microprocessors – to highlight the architectural advances in the ES40. We find that the Compaq ES40 often provides 2 to 3 times the performance of the AlphaServer 4100 at similar clock frequencies. We also find that the ES40 memory system has about five times the memory bandwidth of the 4100. These performance improvements come from numerous microprocessor and platform enhancements, including out-of-order execution, branch prediction, functional units, and the memory system.

[Figure 1 - SPEC95 Comparison (1 CPU): SPECint95 and SPECfp95 for Compaq ES40/21264 667MHz, HP PA-8500 440MHz, IBM Power3 375MHz, SUN USPARC-II 450MHz, and Intel Pentium-III 800MHz]

[Figure: SPECfp_rate95 for Compaq ES40/21264 667MHz, HP PA-8500 440MHz, SUN USparc-II 400MHz, and Intel Pentium-III 800MHz]

1. INTRODUCTION

The Compaq ES40 is a shared memory multiprocessor containing up to four third-generation Alpha 21264 microprocessors [1][2].

MICROPROCESSOR EVALUATIONS for SAFETY-CRITICAL, REAL-TIME APPLICATIONS: AUTHORITY for EXPENDITURE NO

DOT/FAA/AR-08/55

Air Traffic Organization Operations Planning, Office of Aviation Research and Development, Washington, DC 20591

Microprocessor Evaluations for Safety-Critical, Real-Time Applications: Authority for Expenditure No. 43 Phase 3 Report

February 2009

Final Report

This document is available to the U.S. public through the National Technical Information Services (NTIS), Springfield, Virginia 22161.

U.S. Department of Transportation, Federal Aviation Administration

NOTICE

This document is disseminated under the sponsorship of the U.S. Department of Transportation in the interest of information exchange. The United States Government assumes no liability for the contents or use thereof. The United States Government does not endorse products or manufacturers. Trade or manufacturer's names appear herein solely because they are considered essential to the objective of this report. This document does not constitute FAA certification policy. Consult your local FAA aircraft certification office as to its use. This report is available at the Federal Aviation Administration William J. Hughes Technical Center’s Full-Text Technical Reports page: actlibrary.act.faa.gov in Adobe Acrobat portable document format (PDF).

Technical Report Documentation Page

1. Report No.: DOT/FAA/AR-08/55
2. Government Accession No.:
3. Recipient's Catalog No.:
4. Title and Subtitle: MICROPROCESSOR EVALUATIONS FOR SAFETY-CRITICAL, REAL-TIME APPLICATIONS: AUTHORITY FOR EXPENDITURE NO. 43 PHASE 3 REPORT
5. Report Date: February 2009
6. Performing Organization Code:
7. Author(s): Rabi N. Mahapatra, Praveen Bhojwani, Jason Lee, and Yoonjin Kim
8. Performing Organization Report No.: TAMU-CS-AVSI-72005
9. Performing Organization Name and Address:
10. Work Unit No.:

The Computer History Simulation Project

The Computer History Simulation Project

The Computer History Simulation Project is a loose Internet-based collective of people interested in restoring historically significant computer hardware and software systems by simulation. The goal of the project is to create highly portable system simulators and to publish them as freeware on the Internet, with freely available copies of significant or representative software.

Simulators

SIMH is a highly portable, multi-system simulator.

● Download the latest sources for SIMH (V3.5-1 updated 15-Oct-2005 - see change log).
● Download a zip file containing Windows executables for all the SIMH simulators. The VAX and PDP-11 are compiled without Ethernet support. Versions with Ethernet support are available here. If you download the executables, you should download the source archive as well, as it contains the documentation and other supporting files.
● If your host system is Alpha/VMS, and you want Ethernet support, you need to download the VMS Pcap library and execlet here.

SIMH implements simulators for:

● Data General Nova, Eclipse
● Digital Equipment Corporation PDP-1, PDP-4, PDP-7, PDP-8, PDP-9, PDP-10, PDP-11, PDP-15, VAX
● GRI Corporation GRI-909
● IBM 1401, 1620, 1130, System 3
● Interdata (Perkin-Elmer) 16b and 32b systems
● Hewlett-Packard 2116, 2100, 21MX
● Honeywell H316/H516
● MITS Altair 8800, with both 8080 and Z80
● Royal-McBee LGP-30, LGP-21
● Scientific Data Systems SDS 940

Also available is a collection of tools for manipulating simulator file formats and for cross-assembling code for the PDP-1, PDP-7, PDP-8, and PDP-11.

Digital Semiconductor Alpha 21164 Microprocessor Product Brief

Digital Semiconductor Alpha 21164 Microprocessor Product Brief

March 1995

Description

The Alpha 21164 microprocessor is a high-performance implementation of Digital’s Alpha architecture designed for application servers and high-performance clients. It has a superscalar design capable of issuing four instructions every clock cycle. The integration of an instruction cache, data cache, and second-level cache offers unrivaled microprocessor performance. The 21164 uses a high-performance interface to access main memory, data buses, and an optional board-level cache.

Features

• Fully pipelined 64-bit advanced RISC (reduced instruction set computing) architecture supports multiple operating systems, including:
- Microsoft Windows NT
- Digital UNIX
- OpenVMS
• Best-in-class performance
- 266 through 300 MHz operation
- 290 through 330 SPECint (est.)
- 440 through 500 SPECfp (est.)
- Superscalar (4-way instruction issue)
- Peak instruction execution rate of over 1200 million instructions per second
- 0.50 µm CMOS technology
• Onchip, 96KB, 3-way, set-associative write-back L2 unified instruction and data cache
• Onchip write buffer with six fully associative 32-byte entries
• High-performance interface
- 128-bit memory data path
- Selectable error correction code (ECC) or parity protection on data
- 40-bit addressing
- Programmable interface timing
- Two outstanding load instructions
- Control for optional offchip L3 cache
- Synchronous/asynchronous RAM support
- Programmable cache block size
• Pipelined (9-stage) floating-point unit
- IEEE

Performance of Various Computers Using Standard Linear Equations Software

CS - 89 - 85

Performance of Various Computers Using Standard Linear Equations Software

Jack J. Dongarra*

Electrical Engineering and Computer Science Department, University of Tennessee, Knoxville, TN 37996-1301
Computer Science and Mathematics Division, Oak Ridge National Laboratory, Oak Ridge, TN 37831
University of Manchester

June 15, 2014

* Electronic mail address: [email protected]. An up-to-date version of this report can be found at http://www.netlib.org/benchmark/performance.ps

This work was supported in part by the Applied Mathematical Sciences subprogram of the Office of Energy Research, U.S. Department of Energy, under Contract DE-AC05-96OR22464, and in part by the Science Alliance, a state supported program at the University of Tennessee.

Abstract

This report compares the performance of different computer systems in solving dense systems of linear equations. The comparison involves approximately a hundred computers, ranging from the Earth Simulator to personal computers.

1. Introduction and Objectives

The timing information presented here should in no way be used to judge the overall performance of a computer system. The results reflect only one problem area: solving dense systems of equations. This report provides performance information on a wide assortment of computers ranging from the home-used PC up to the most powerful supercomputers. The information has been collected over a period of time and will undergo change as new machines are added and as hardware and software systems improve.

Clock Distribution Lecture 25 Clock Distribution Power Distribution

EE141 Digital Integrated Circuits, Spring 2006

Lecture 25: Clock Distribution, Power Distribution

Administrative Stuff
• Homework #9 due this Thursday
• Visit to Intel, April 21 (signup sheet)
• Friday lab - project ph. 4 starts after we get back
• Project phase 4: posted; in lab April 21-April 27
• Hardware lab this week
• Friday section on April 28

Class Material
• Last lecture: Timing
• Today’s lecture: Clock distribution, Power distribution
• Reading: Chapter 10, 9 (pp. 453-462, 469-475, 508-515) [Restle98]

Clock Distribution
• H-tree: clock is distributed in a tree-like fashion
• More realistic H-tree

The Grid System
[Figure: clock grid fed by GCLK drivers on each side]
• No rc-matching
• Large power

Example: DEC Alpha 21164
• Clock Frequency: 300 MHz - 9.3 Million Transistors
• Total Clock Load: 3.75 nF
• Power in Clock Distribution network: 20 W (out of 50)
• Uses Two Level Clock Distribution:
- Single 6-stage driver at center of chip
- Secondary buffers drive left and right side clock grid in Metal3 and Metal4
• Total driver size: 58 cm!

Clock Skew in Alpha Processor

21164 Clocking
• 2 phase single wire clock, distributed globally
• 2 distributed driver channels
• Reduced RC delay/skew
• tcycle = 3.3 ns, trise = 0.35 ns, tskew = 150 ps
[Figure: clock waveform]
• final

EV6 (Alpha 21264) Clocking
• 600 MHz, 0.35 micron CMOS
• tcycle = 1.67 ns, trise = 0.35 ns, tskew = 50 ps
[Figure: global clock waveform]
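
The cycle times quoted in the clock-distribution excerpt follow directly from the clock frequencies (tcycle = 1/f), and the skew budgets can be read as a fraction of that cycle. The sketch below is only an illustration of that arithmetic; the function names are hypothetical and the frequency/skew pairings (300 MHz with 150 ps for the 21164, 600 MHz with 50 ps for the EV6) are taken from the excerpt as reconstructed here.

```python
def cycle_time_ns(freq_mhz: float) -> float:
    """Clock period in nanoseconds for a frequency given in MHz."""
    return 1_000.0 / freq_mhz


def skew_fraction(skew_ps: float, freq_mhz: float) -> float:
    """Clock skew expressed as a fraction of the clock period."""
    return (skew_ps / 1_000.0) / cycle_time_ns(freq_mhz)


# (frequency in MHz, skew in ps), as quoted in the excerpt (assumed pairing)
chips = {
    "Alpha 21164": (300.0, 150.0),
    "Alpha 21264 (EV6)": (600.0, 50.0),
}

for name, (freq_mhz, skew_ps) in chips.items():
    period = cycle_time_ns(freq_mhz)
    share = skew_fraction(skew_ps, freq_mhz)
    print(f"{name}: tcycle = {period:.2f} ns, skew = {share:.1%} of the cycle")
```

Under these figures the skew falls from roughly 4.5% of the cycle on the 21164 to about 3% on the EV6, even though the cycle itself is half as long.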