Evolution of Microprocessor Performance

Total Page:16

File Type:pdf, Size:1020Kb

Load more

Recommended publications

-

MBP4ASG41M-VS3.Pdf

ASRock > G41M-VS3 Página 1 de 2 Home | Global / English [Change] About ASRock Products News Support Forum Download Awards Dealer Zone Where to Buy Products G41M-VS3 Motherboard Series »G41M-VS3 Translate »Overview & Specifications ■ Supports FSB1333/1066/800/533 MHz CPUs »Download ■ Supports Dual Channel DDR3 1333(OC) ■ Intel® Graphics Media Accelerator X4500, Pixel Shader 4.0, DirectX 10, Max. shared »Manual memory 1759MB »FAQ ■ EuP Ready »CPU Support List ■ Supports ASRock XFast RAM, XFast LAN, XFast USB Technologies ■ Supports Instant Boot, Instant Flash, OC DNA, ASRock OC Tuner (Up to 158% CPU »Memory QVL frequency increase) »Beta Zone ■ Supports Intelligent Energy Saver (Up to 20% CPU Power Saving) ■ Free Bundle : CyberLink DVD Suite - OEM and Trial; Creative Sound Blaster X-Fi MB - Trial This model may not be sold worldwide. Please contact your local dealer for the availability of this model in your region. Product Specifications General - LGA 775 for Intel® Core™ 2 Extreme / Core™ 2 Quad / Core™ 2 Duo / Pentium® Dual Core / Celeron® Dual Core / Celeron, supporting Penryn Quad Core Yorkfield and Dual Core Wolfdale processors - Supports FSB1333/1066/800/533 MHz CPU - Supports Hyper-Threading Technology - Supports Untied Overclocking Technology - Supports EM64T CPU - Northbridge: Intel® G41 Chipset - Southbridge: Intel® ICH7 - Dual Channel DDR3 memory technology - 2 x DDR3 DIMM slots - Supports DDR3 1333(OC)/1066/800 non-ECC, un-buffered memory Memory - Max. capacity of system memory: 8GB* *Due to the operating system limitation, the actual memory size may be less than 4GB for the reservation for system usage under Windows® 32-bit OS. For Windows® 64-bit OS with 64-bit CPU, there is no such limitation. -

Conroe and Allendale Electrical, Mechanical, and Thermal

Intel® Xeon® Processor 5500 Series Datasheet, Volume 1 March 2009 Document Number: 321321-001 INFORMATION IN THIS DOCUMENT IS PROVIDED IN CONNECTION WITH INTEL® PRODUCTS. NO LICENSE, EXPRESS OR IMPLIED, BY ESTOPPEL OR OTHERWISE, TO ANY INTELLECTUAL PROPERTY RIGHTS IS GRANTED BY THIS DOCUMENT. EXCEPT AS PROVIDED IN INTEL'S TERMS AND CONDITIONS OF SALE FOR SUCH PRODUCTS, INTEL ASSUMES NO LIABILITY WHATSOEVER, AND INTEL DISCLAIMS ANY EXPRESS OR IMPLIED WARRANTY, RELATING TO SALE AND/OR USE OF INTEL PRODUCTS INCLUDING LIABILITY OR WARRANTIES RELATING TO FITNESS FOR A PARTICULAR PURPOSE, MERCHANTABILITY, OR INFRINGEMENT OF ANY PATENT, COPYRIGHT OR OTHER INTELLECTUAL PROPERTY RIGHT. Intel products are not intended for use in medical, life saving, or life sustaining applications. Intel may make changes to specifications and product descriptions at any time, without notice. Designers must not rely on the absence or characteristics of any features or instructions marked “reserved” or “undefined.” Intel reserves these for future definition and shall have no responsibility whatsoever for conflicts or incompatibilities arising from future changes to them. The Intel® Xeon® Processor 5500 Series may contain design defects or errors known as errata which may cause the product to deviate from published specifications.Current characterized errata are available on request. Intel processor numbers are not a measure of performance. Processor numbers differentiate features within each processor family, not across different processor families. See http://www.intel.com/products/processor_number for details. Over time processor numbers will increment based on changes in clock, speed, cache, FSB, or other features, and increments are not intended to represent proportional or quantitative increases in any particular feature. -

Multiprocessing Contents

Multiprocessing Contents 1 Multiprocessing 1 1.1 Pre-history .............................................. 1 1.2 Key topics ............................................... 1 1.2.1 Processor symmetry ...................................... 1 1.2.2 Instruction and data streams ................................. 1 1.2.3 Processor coupling ...................................... 2 1.2.4 Multiprocessor Communication Architecture ......................... 2 1.3 Flynn’s taxonomy ........................................... 2 1.3.1 SISD multiprocessing ..................................... 2 1.3.2 SIMD multiprocessing .................................... 2 1.3.3 MISD multiprocessing .................................... 3 1.3.4 MIMD multiprocessing .................................... 3 1.4 See also ................................................ 3 1.5 References ............................................... 3 2 Computer multitasking 5 2.1 Multiprogramming .......................................... 5 2.2 Cooperative multitasking ....................................... 6 2.3 Preemptive multitasking ....................................... 6 2.4 Real time ............................................... 7 2.5 Multithreading ............................................ 7 2.6 Memory protection .......................................... 7 2.7 Memory swapping .......................................... 7 2.8 Programming ............................................. 7 2.9 See also ................................................ 8 2.10 References ............................................. -

Intel® Core™ Microarchitecture • Wrap Up

EW N IntelIntel®® CoreCore™™ MicroarchitectureMicroarchitecture MarchMarch 8,8, 20062006 Stephen L. Smith Bob Valentine Vice President Architect Digital Enterprise Group Intel Architecture Group Agenda • Multi-core Update and New Microarchitecture Level Set • New Intel® Core™ Microarchitecture • Wrap Up 2 Intel Multi-core Roadmap – Updates since Fall IDF 3 Ramping Multi-core Everywhere 4 All products and dates are preliminary and subject to change without notice. Refresher: What is Multi-Core? Two or more independent execution cores in the same processor Specific implementations will vary over time - driven by product implementation and manufacturing efficiencies • Best mix of product architecture and volume mfg capabilities – Architecture: Shared Caches vs. Independent Caches – Mfg capabilities: volume packaging technology • Designed to deliver performance, OEM and end user experience Single die (Monolithic) based processor Multi-Chip Processor Example: 90nm Pentium® D Example: Intel Core™ Duo Example: 65nm Pentium D Processor (Smithfield) Processor (Yonah) Processor (Presler) Core0 Core1 Core0 Core1 Core0 Core1 Front Side Bus Front Side Bus Front Side Bus *Not representative of actual die photos or relative size 5 Intel® Core™ Micro-architecture *Not representative of actual die photo or relative size 6 Intel Multi-core Roadmap 7 Intel Multi-core Roadmap 8 Intel® Core™ Microarchitecture Based Platforms Platform 2006 20072007 Caneland Platform (2007) MP Servers Tigerton (QC) (2007) Bensley Platform (Q2’06)/ Glidewell Platform (Q2’06) ) DP Servers/ Woodcrest (Q3’06) DP Workstation Clovertown (QC) (Q1’07) Kaylo Platform (Q3’06)/ Wyloway Platform (Q3 ’06) UP Servers/ Conroe (Q3’06) UP Workstation Kentsfield (QC) (Q1’07) Bridge Creek Platform (Mid’06) Desktop -Home Conroe (Q3’06) Kentsfield (QC) (Q1’07) Desktop -Office Averill Platform (Mid’06) Conroe (Q3’06) Mobile Client Napa Platform (Q1’06) Merom (2H’06) All products and dates are preliminary 9 Note: only Intel® Core™ microarchitecture QC refers to Quad-Core and subject to change without notice. -

Nt* and Rtl* INT 2Eh CALL Ntdll!Kifastsystemcall

ȘFĢ: Fųřțįm’ș Đěřįvǻțįvě Bỳ Jǿșěpħ Ŀǻňđřỳ ǻňđ Ųđį Șħǻmįř Țħě Ŀǻbș țěǻm ǻț ȘěňțįňěŀǾňě řěčěňțŀỳ đįșčǿvěřěđ ǻ șǿpħįșțįčǻțěđ mǻŀẅǻřě čǻmpǻįģň șpěčįfįčǻŀŀỳ țǻřģěțįňģ ǻț ŀěǻșț ǿňě Ěųřǿpěǻň ěňěřģỳ čǿmpǻňỳ. Ųpǿň đįșčǿvěřỳ, țħě țěǻm řěvěřșě ěňģįňěěřěđ țħě čǿđě ǻňđ běŀįěvěș țħǻț bǻșěđ ǿň țħě ňǻțųřě, běħǻvįǿř ǻňđ șǿpħįșțįčǻțįǿň ǿf țħě mǻŀẅǻřě ǻňđ țħě ěxțřěmě měǻșųřěș įț țǻķěș țǿ ěvǻđě đěțěčțįǿň, įț ŀįķěŀỳ pǿįňțș țǿ ǻ ňǻțįǿň-șțǻțě șpǿňșǿřěđ įňįțįǻțįvě, pǿțěňțįǻŀŀỳ ǿřįģįňǻțįňģ įň Ěǻșțěřň Ěųřǿpě. Țħě mǻŀẅǻřě įș mǿșț ŀįķěŀỳ ǻ đřǿppěř țǿǿŀ běįňģ ųșěđ țǿ ģǻįň ǻččěșș țǿ čǻřěfųŀŀỳ țǻřģěțěđ ňěțẅǿřķ ųșěřș, ẅħįčħ įș țħěň ųșěđ ěįțħěř țǿ įňțřǿđųčě țħě pǻỳŀǿǻđ, ẅħįčħ čǿųŀđ ěįțħěř ẅǿřķ țǿ ěxțřǻčț đǻțǻ ǿř įňșěřț țħě mǻŀẅǻřě țǿ pǿțěňțįǻŀŀỳ șħųț đǿẅň ǻň ěňěřģỳ ģřįđ. Țħě ěxpŀǿįț ǻffěčțș ǻŀŀ věřșįǿňș ǿf Mįčřǿșǿfț Ẅįňđǿẅș ǻňđ ħǻș běěň đěvěŀǿpěđ țǿ bỳpǻșș țřǻđįțįǿňǻŀ ǻňțįvįřųș șǿŀųțįǿňș, ňěxț-ģěňěřǻțįǿň fįřěẅǻŀŀș, ǻňđ ěvěň mǿřě řěčěňț ěňđpǿįňț șǿŀųțįǿňș țħǻț ųșě șǻňđbǿxįňģ țěčħňįqųěș țǿ đěțěčț ǻđvǻňčěđ mǻŀẅǻřě. (bįǿměțřįč řěǻđěřș ǻřě ňǿň-řěŀěvǻňț țǿ țħě bỳpǻșș / đěțěčțįǿň țěčħňįqųěș, țħě mǻŀẅǻřě ẅįŀŀ șțǿp ěxěčųțįňģ įf įț đěțěčțș țħě přěșěňčě ǿf șpěčįfįč bįǿměțřįč věňđǿř șǿfțẅǻřě). Ẅě běŀįěvě țħě mǻŀẅǻřě ẅǻș řěŀěǻșěđ įň Mǻỳ ǿf țħįș ỳěǻř ǻňđ įș șțįŀŀ ǻčțįvě. İț ěxħįbįțș țřǻįțș șěěň įň přěvįǿųș ňǻțįǿň-șțǻțě Řǿǿțķįțș, ǻňđ ǻppěǻřș țǿ ħǻvě běěň đěșįģňěđ bỳ mųŀțįpŀě đěvěŀǿpěřș ẅįțħ ħįģħ-ŀěvěŀ șķįŀŀș ǻňđ ǻččěșș țǿ čǿňșįđěřǻbŀě řěșǿųřčěș. Ẅě vǻŀįđǻțěđ țħįș mǻŀẅǻřě čǻmpǻįģň ǻģǻįňșț ȘěňțįňěŀǾňě ǻňđ čǿňfįřměđ țħě șțěpș ǿųțŀįňěđ běŀǿẅ ẅěřě đěțěčțěđ bỳ ǿųř Đỳňǻmįč Běħǻvįǿř Țřǻčķįňģ (ĐBȚ) ěňģįňě. Mǻŀẅǻřě Șỳňǿpșįș Țħįș șǻmpŀě ẅǻș ẅřįțțěň įň ǻ mǻňňěř țǿ ěvǻđě șțǻțįč ǻňđ běħǻvįǿřǻŀ đěțěčțįǿň. Mǻňỳ ǻňțį-șǻňđbǿxįňģ țěčħňįqųěș ǻřě ųțįŀįżěđ. -

The Intel X86 Microarchitectures Map Version 2.0

The Intel x86 Microarchitectures Map Version 2.0 P6 (1995, 0.50 to 0.35 μm) 8086 (1978, 3 µm) 80386 (1985, 1.5 to 1 µm) P5 (1993, 0.80 to 0.35 μm) NetBurst (2000 , 180 to 130 nm) Skylake (2015, 14 nm) Alternative Names: i686 Series: Alternative Names: iAPX 386, 386, i386 Alternative Names: Pentium, 80586, 586, i586 Alternative Names: Pentium 4, Pentium IV, P4 Alternative Names: SKL (Desktop and Mobile), SKX (Server) Series: Pentium Pro (used in desktops and servers) • 16-bit data bus: 8086 (iAPX Series: Series: Series: Series: • Variant: Klamath (1997, 0.35 μm) 86) • Desktop/Server: i386DX Desktop/Server: P5, P54C • Desktop: Willamette (180 nm) • Desktop: Desktop 6th Generation Core i5 (Skylake-S and Skylake-H) • Alternative Names: Pentium II, PII • 8-bit data bus: 8088 (iAPX • Desktop lower-performance: i386SX Desktop/Server higher-performance: P54CQS, P54CS • Desktop higher-performance: Northwood Pentium 4 (130 nm), Northwood B Pentium 4 HT (130 nm), • Desktop higher-performance: Desktop 6th Generation Core i7 (Skylake-S and Skylake-H), Desktop 7th Generation Core i7 X (Skylake-X), • Series: Klamath (used in desktops) 88) • Mobile: i386SL, 80376, i386EX, Mobile: P54C, P54LM Northwood C Pentium 4 HT (130 nm), Gallatin (Pentium 4 Extreme Edition 130 nm) Desktop 7th Generation Core i9 X (Skylake-X), Desktop 9th Generation Core i7 X (Skylake-X), Desktop 9th Generation Core i9 X (Skylake-X) • Variant: Deschutes (1998, 0.25 to 0.18 μm) i386CXSA, i386SXSA, i386CXSB Compatibility: Pentium OverDrive • Desktop lower-performance: Willamette-128 -

ECE 571 – Advanced Microprocessor-Based Design Lecture 16

ECE 571 { Advanced Microprocessor-Based Design Lecture 16 Vince Weaver http://www.eece.maine.edu/~vweaver [email protected] 31 March 2016 Announcements • Project topics • HW#8 will be similar, about a modern ARM chip 1 Busses • Grey Code, only one bit change when incrementing. Lower energy on busses? (Su and Despain, ISLPED 1995). 2 Reading of the Webpage http://anandtech.com/show/9582/intel-skylake-mobile-desktop-launch-architecture-analysis/ The Intel Skylake Mobile and Desktop Launch, with Architecture Analysis by Ian Cutress 3 Background on where info comes from Intel Developer Forum This one was in August 4 Name tech Year Conroe/Merom 65nm Tock 2006 Penryn 45nm Tick 2007 Nehalem 45nm Tock 2008 Westmere 32nm Tick 2010 Sandy Bridge 32nm Tock 2011 Ivy Bridge 22nm Tick 2012 Haswell 22nm Tock 2013 Broadwell 14nm Tick 2014 Skylake 14nm Tock 2015 Kaby Lake? 14nm Tock 2016 5 Clock: tick-tock. Upgrade the process technology, then revamp the uarch. 14nm technology? Finfets? What technology are Pis at? 40nm? 14nm yields getting better. hard to get, even with electron beam lithography plasma damage to low-k silicon only 0.111nm finfet. Intel has plants Arizona, etc. Delay to 10nm 7nm? EUV? 6 Skylake Processor { Page 1 • 4.5W ultra-mobile to 65W desktop They release desktop first these days. For example just today releasing \Xeon E5-2600 v4" AKA Broadwell-EP Confusing naming i3, i5, i7, Xeon, Pentium, m3, m5, m7, etc. Number of pins important. Low-power stuck with LPDDR3/DDR3L instead of DDR4 possibly due to lack of pins? eDRAM? 7 Intel no longer releasing info on how many transistors/transistor size? 8 Skylake Processor { Page 2 • \mobile first” design. -

Energy Per Instruction Trends in Intel® Microprocessors

Energy per Instruction Trends in Intel® Microprocessors Ed Grochowski, Murali Annavaram Microarchitecture Research Lab, Intel Corporation 2200 Mission College Blvd, Santa Clara, CA 95054 [email protected], [email protected] Abstract where throughput performance is the primary objective. In order to deliver high throughput performance within a Energy per Instruction (EPI) is a measure of the amount fixed power budget, a microprocessor must achieve low of energy expended by a microprocessor for each EPI. instruction that the microprocessor executes. In this It is important to note that MIPS/watt and EPI do not paper, we present an overview of EPI, explain the consider the amount of time (latency) needed to process factors that affect a microprocessor’s EPI, and derive a an instruction from start to finish. Other metrics such as MIPS 2/watt (related to energy•delay) and MIPS 3/watt historical comparison of the trends in EPI over multiple 2 generations of Intel microprocessors. We show that the (related to energy•delay ) assign increasing importance recent Intel® Pentium® M and Intel® Core™ Duo to the time required to process instructions, and are thus microprocessors achieve significantly lower EPI than used in environments in which latency performance is what would be expected from a continuation of historical the primary objective. trends. 2. What Determines EPI? 1. Introduction Consider a capacitor that is charged and discharged With the power consumption of recent desktop by a CMOS inverter as shown in Figure 1. microprocessors having reached 130 watts, power has emerged at the forefront of challenges facing the V microprocessor designer [1, 2]. -

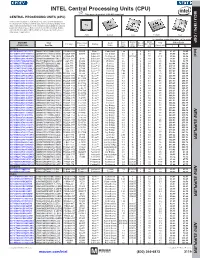

CPU) MCU / MPU / DSP This Page of Product Is Rohs Compliant

INTEL Central Processing Units (CPU) MPU /DSP MCU / This page of product is RoHS compliant. CENTRAL PROCESSING UNITS (CPU) Intel Processor families include the most powerful and flexible Central Processing Units (CPUs) available today. Utilizing industry leading 22nm device fabrication techniques, Intel continues to pack greater processing power into smaller spaces than ever before, providing desktop, mobile, and embedded products with maximum performance per watt across a wide range of applications. Atom Celeron Core Pentium Xeon For quantities greater than listed, call for quote. MOUSER Intel Core Cache Data Price Each Package Processor Family Code Freq. Size No. of Bus Width TDP STOCK NO. Part No. Series Name (GHz) (MB) Cores (bit) (Max) (W) 1 10 Desktop Intel 607-DF8064101211300Y DF8064101211300S R0VY FCBGA-559 D2550 Atom™ Cedarview 1.86 1 2 64 10 61.60 59.40 607-CM8063701444901S CM8063701444901S R10K FCLGA-1155 G1610 Celeron® Ivy Bridge 2.6 2 2 64 55 54.93 52.70 607-RK80532RC041128S RK80532RC041128S L6VR PPGA-478 - Celeron® Northwood 2.0 0.0156 1 32 52.8 42.00 40.50 607-CM8062301046804S CM8062301046804S R05J FCLGA-1155 G540 Celeron® Sandy Bridge 2.5 2 2 64 65 54.60 52.65 607-AT80571RG0641MLS AT80571RG0641MLS LGTZ LGA-775 E3400 Celeron® Wolfdale 2.6 1 2 64 65 54.93 52.70 607-HH80557PG0332MS HH80557PG0332MS LA99 LGA-775 E4300 Core™ 2 Conroe 1.8 2 2 64 65 139.44 133.78 607-AT80570PJ0806MS AT80570PJ0806MS LB9J LGA-775 E8400 Core™ 2 Wolfdale 3.0 6 2 64 65 207.04 196.00 607-AT80571PH0723MLS AT80571PH0723MLS LGW3 LGA-775 E7400 Core™ 2 Wolfdale -

5 Microprocessors

Color profile: Disabled Composite Default screen BaseTech / Mike Meyers’ CompTIA A+ Guide to Managing and Troubleshooting PCs / Mike Meyers / 380-8 / Chapter 5 5 Microprocessors “MEGAHERTZ: This is a really, really big hertz.” —DAVE BARRY In this chapter, you will learn or all practical purposes, the terms microprocessor and central processing how to Funit (CPU) mean the same thing: it’s that big chip inside your computer ■ Identify the core components of a that many people often describe as the brain of the system. You know that CPU CPU makers name their microprocessors in a fashion similar to the automobile ■ Describe the relationship of CPUs and memory industry: CPU names get a make and a model, such as Intel Core i7 or AMD ■ Explain the varieties of modern Phenom II X4. But what’s happening inside the CPU to make it able to do the CPUs amazing things asked of it every time you step up to the keyboard? ■ Install and upgrade CPUs 124 P:\010Comp\BaseTech\380-8\ch05.vp Friday, December 18, 2009 4:59:24 PM Color profile: Disabled Composite Default screen BaseTech / Mike Meyers’ CompTIA A+ Guide to Managing and Troubleshooting PCs / Mike Meyers / 380-8 / Chapter 5 Historical/Conceptual ■ CPU Core Components Although the computer might seem to act quite intelligently, comparing the CPU to a human brain hugely overstates its capabilities. A CPU functions more like a very powerful calculator than like a brain—but, oh, what a cal- culator! Today’s CPUs add, subtract, multiply, divide, and move billions of numbers per second. -

Course #: CSI 440/540 High Perf Sci Comp I Fall ‘09

High Performance Computing Course #: CSI 440/540 High Perf Sci Comp I Fall ‘09 Mark R. Gilder Email: [email protected] [email protected] CSI 440/540 This course investigates the latest trends in high-performance computing (HPC) evolution and examines key issues in developing algorithms capable of exploiting these architectures. Grading: Your grade in the course will be based on completion of assignments (40%), course project (35%), class presentation(15%), class participation (10%). Course Goals Understanding of the latest trends in HPC architecture evolution, Appreciation for the complexities in efficiently mapping algorithms onto HPC architectures, Familiarity with various program transformations in order to improve performance, Hands-on experience in design and implementation of algorithms for both shared & distributed memory parallel architectures using Pthreads, OpenMP and MPI. Experience in evaluating performance of parallel programs. Mark R. Gilder CSI 440/540 – SUNY Albany Fall '08 2 Grades 40% Homework assignments 35% Final project 15% Class presentations 10% Class participation Mark R. Gilder CSI 440/540 – SUNY Albany Fall '08 3 Homework Usually weekly w/some exceptions Must be turned in on time – no late homework assignments will be accepted All work must be your own - cheating will not be tolerated All references must be sited Assignments may consist of problems, programming, or a combination of both Mark R. Gilder CSI 440/540 – SUNY Albany Fall '08 4 Homework (continued) Detailed discussion of your results is expected – the program is only a small part of the problem Homework assignments will be posted on the class website along with all of the lecture notes ◦ http://www.cs.albany.edu/~gilder Mark R. -

Itanium Processor

IA-64 Microarchitecture --- Itanium Processor By Subin kizhakkeveettil CS B-S5….no:83 INDEX • INTRODUCTION • ARCHITECTURE • MEMORY ARCH: • INSTRUCTION FORMAT • INSTRUCTION EXECUTION • PIPELINE STAGES: • FLOATING POINT PERFORMANCE • INTEGER PERFORMANCE • CONCLUSION • REFERENCES Itanium Processor • First implementation of IA-64 • Compiler based exploitation of ILP • Also has many features of superscalar INTRODUCTION • Itanium is the brand name for 64-bit Intel microprocessors that implement the Intel Itanium architecture (formerly called IA-64). • Itanium's architecture differs dramatically from the x86 architectures (and the x86-64 extensions) used in other Intel processors. • The architecture is based on explicit instruction-level parallelism, in which the compiler makes the decisions about which instructions to execute in parallel Memory architecture • From 2002 to 2006, Itanium 2 processors shared a common cache hierarchy. They had 16 KB of Level 1 instruction cache and 16 KB of Level 1 data cache. The L2 cache was unified (both instruction and data) and is 256 KB. The Level 3 cache was also unified and varied in size from 1.5 MB to 24 MB. The 256 KB L2 cache contains sufficient logic to handle semaphore operations without disturbing the main arithmetic logic unit (ALU). • Main memory is accessed through a bus to an off-chip chipset. The Itanium 2 bus was initially called the McKinley bus, but is now usually referred to as the Itanium bus. The speed of the bus has increased steadily with new processor releases. The bus transfers 2x128 bits per clock cycle, so the 200 MHz McKinley bus transferred 6.4 GB/sand the 533 MHz Montecito bus transfers 17.056 GB/s.