Multivariate Normal Distribution Form Normal Density Function (Multivariate)



Recommended publications


Lecture 22: Bivariate Normal Distribution

Lecture 22: Bivariate Normal Distribution. Statistics 104, Colin Rundel, April 11, 2012.

6.5 Conditional Distributions

General Bivariate Normal

Let $Z_1, Z_2 \sim N(0, 1)$, which we will use to build a general bivariate normal distribution.

$$f(z_1, z_2) = \frac{1}{2\pi} \exp\left[-\frac{1}{2}\left(z_1^2 + z_2^2\right)\right]$$

We want to transform these unit normal distributions to have the following arbitrary parameters: $\mu_X, \mu_Y, \sigma_X, \sigma_Y, \rho$.

$$X = \sigma_X Z_1 + \mu_X$$

$$Y = \sigma_Y\left[\rho Z_1 + \sqrt{1 - \rho^2}\, Z_2\right] + \mu_Y$$

General Bivariate Normal - Marginals

First, let's examine the marginal distributions of $X$ and $Y$:

$$X = \sigma_X Z_1 + \mu_X = \sigma_X N(0, 1) + \mu_X = N(\mu_X, \sigma_X^2)$$

$$\begin{aligned}
Y &= \sigma_Y\left[\rho Z_1 + \sqrt{1 - \rho^2}\, Z_2\right] + \mu_Y \\
  &= \sigma_Y\left[\rho N(0, 1) + \sqrt{1 - \rho^2}\, N(0, 1)\right] + \mu_Y \\
  &= \sigma_Y\left[N(0, \rho^2) + N(0, 1 - \rho^2)\right] + \mu_Y \\
  &= \sigma_Y N(0, 1) + \mu_Y \\
  &= N(\mu_Y, \sigma_Y^2)
\end{aligned}$$

General Bivariate Normal - Cov/Corr

Second, we can find $\mathrm{Cov}(X, Y)$ and $\rho(X, Y)$:

$$\begin{aligned}
\mathrm{Cov}(X, Y) &= E\left[(X - E(X))(Y - E(Y))\right] \\
  &= E\left[(\sigma_X Z_1 + \mu_X - \mu_X)\left(\sigma_Y\left[\rho Z_1 + \sqrt{1 - \rho^2}\, Z_2\right] + \mu_Y - \mu_Y\right)\right] \\
  &= E\left[(\sigma_X Z_1)\left(\sigma_Y\left[\rho Z_1 + \sqrt{1 - \rho^2}\, Z_2\right]\right)\right] \\
  &= \sigma_X \sigma_Y E\left[\rho Z_1^2 + \sqrt{1 - \rho^2}\, Z_1 Z_2\right] \\
  &= \sigma_X \sigma_Y \rho\, E[Z_1^2] \\
  &= \sigma_X \sigma_Y \rho
\end{aligned}$$

$$\rho(X, Y) = \frac{\mathrm{Cov}(X, Y)}{\sigma_X \sigma_Y} = \rho$$

General Bivariate Normal - RNG

Consequently, if we want to generate a Bivariate Normal random variable

Multivariate Change of Variables

Let $X_1, \ldots, X_n$ have a continuous joint distribution with pdf $f$ defined of S.
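The construction of X and Y from two unit normals can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function names, the seed, and the parameter values (means 1 and -2, standard deviations 2 and 0.5, ρ = 0.7) are hypothetical and do not come from the lecture, and Z1 and Z2 are drawn independently, as the product form of f(z1, z2) implies.

```python
import math
import random


def bivariate_normal_sample(mu_x, mu_y, sigma_x, sigma_y, rho, rng):
    """Draw one (X, Y) pair from two independent N(0, 1) draws."""
    z1 = rng.gauss(0.0, 1.0)
    z2 = rng.gauss(0.0, 1.0)
    # X = sigma_X * Z1 + mu_X
    x = sigma_x * z1 + mu_x
    # Y = sigma_Y * [rho * Z1 + sqrt(1 - rho^2) * Z2] + mu_Y
    y = sigma_y * (rho * z1 + math.sqrt(1.0 - rho ** 2) * z2) + mu_y
    return x, y


def sample_correlation(pairs):
    """Pearson correlation of a list of (x, y) pairs."""
    n = len(pairs)
    mean_x = sum(x for x, _ in pairs) / n
    mean_y = sum(y for _, y in pairs) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in pairs)
    sxx = sum((x - mean_x) ** 2 for x, _ in pairs)
    syy = sum((y - mean_y) ** 2 for _, y in pairs)
    return sxy / math.sqrt(sxx * syy)


# Hypothetical parameter values chosen only for the demonstration.
rng = random.Random(0)
pairs = [
    bivariate_normal_sample(1.0, -2.0, 2.0, 0.5, 0.7, rng)
    for _ in range(20000)
]
r = sample_correlation(pairs)
print(f"sample correlation: {r:.3f} (target rho = 0.7)")
```

With a sample this large, the sample correlation should land close to the target ρ, which is the empirical counterpart of the Cov/Corr derivation.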

Efficient Estimation of Parameters of the Negative Binomial Distribution

Efficient Estimation of Parameters of the Negative Binomial Distribution. V. SAVANI AND A. A. ZHIGLJAVSKY, Department of Mathematics, Cardiff University, Cardiff, CF24 4AG, U.K. e-mail: SavaniV@cardiff.ac.uk, ZhigljavskyAA@cardiff.ac.uk (Corresponding author)

Abstract: In this paper we investigate a class of moment based estimators, called power method estimators, which can be almost as efficient as maximum likelihood estimators and achieve a lower asymptotic variance than the standard zero term method and method of moments estimators. We investigate different methods of implementing the power method in practice and examine the robustness and efficiency of the power method estimators.

Key Words: Negative binomial distribution; estimating parameters; maximum likelihood method; efficiency of estimators; method of moments.

1. The Negative Binomial Distribution

1.1. Introduction

The negative binomial distribution (NBD) has appeal in the modelling of many practical applications. A large amount of literature exists, for example, on using the NBD to model: animal populations (see e.g. Anscombe (1949), Kendall (1948a)); accident proneness (see e.g. Greenwood and Yule (1920), Arbous and Kerrich (1951)) and consumer buying behaviour (see e.g. Ehrenberg (1988)). The appeal of the NBD lies in the fact that it is a simple two parameter distribution that arises in various different ways (see e.g. Anscombe (1950), Johnson, Kotz, and Kemp (1992), Chapter 5) often allowing the parameters to have a natural interpretation (see Section 1.2). Furthermore, the NBD can be implemented as a distribution within stationary processes (see e.g. Anscombe (1950), Kendall (1948b)) thereby increasing the modelling potential of the distribution.

Applied Biostatistics Mean and Standard Deviation the Mean the Median Is Not the Only Measure of Central Value for a Distribution

Health Sciences M.Sc. Programme, Applied Biostatistics: Mean and Standard Deviation

The mean

The median is not the only measure of central value for a distribution. Another is the arithmetic mean or average, usually referred to simply as the mean. This is found by taking the sum of the observations and dividing by their number. The mean is often denoted by a little bar over the symbol for the variable, e.g. $\bar{x}$. The sample mean has much nicer mathematical properties than the median and is thus more useful for the comparison methods described later. The median is a very useful descriptive statistic, but not much used for other purposes.

Median, mean and skewness

The sum of the 57 FEV1s is 231.51 and hence the mean is 231.51/57 = 4.06. This is very close to the median, 4.1, so the median is within 1% of the mean. This is not so for the triglyceride data. The median triglyceride is 0.46 but the mean is 0.51, which is higher. The median is 10% away from the mean. If the distribution is symmetrical the sample mean and median will be about the same, but in a skew distribution they will not. If the distribution is skew to the right, as for serum triglyceride, the mean will be greater; if it is skew to the left, the median will be greater. This is because the values in the tails affect the mean but not the median. Figure 1 shows the positions of the mean and median on the histogram of triglyceride.
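The arithmetic in this passage is easy to check. The sketch below reproduces the FEV1 mean from the quoted sum and count, then illustrates the skewness point with a small made-up data set. The `right_skewed` values are hypothetical and are not the triglyceride data, which the excerpt does not list.

```python
import statistics

# Figures quoted in the text: sum of the 57 FEV1 values and the median.
fev1_total = 231.51
fev1_count = 57
fev1_median = 4.1

fev1_mean = fev1_total / fev1_count
relative_gap = abs(fev1_median - fev1_mean) / fev1_mean
print(f"FEV1 mean = {fev1_mean:.2f}, median within {relative_gap:.1%} of the mean")

# Hypothetical right-skewed sample: one large value in the upper tail
# pulls the mean upward but leaves the median almost unaffected.
right_skewed = [0.2, 0.3, 0.4, 0.4, 0.5, 0.5, 0.6, 1.9]
skew_mean = statistics.mean(right_skewed)
skew_median = statistics.median(right_skewed)
print(f"skewed sample: mean = {skew_mean:.2f}, median = {skew_median:.2f}")
```

In the made-up sample the mean exceeds the median, matching the direction described for a distribution that is skew to the right.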

1. How Different Is the T Distribution from the Normal?

Statistics 101–106 Lecture 7 (20 October 98) © David Pollard

Read M&M §7.1 and §7.2, ignoring starred parts. Reread M&M §3.2. The effects of estimated variances on normal approximations. t-distributions. Comparison of two means: pooling of estimates of variances, or paired observations.

In Lecture 6, when discussing comparison of two Binomial proportions, I was content to estimate unknown variances when calculating statistics that were to be treated as approximately normally distributed. You might have worried about the effect of variability of the estimate. W. S. Gosset (“Student”) considered a similar problem in a very famous 1908 paper, where the role of Student’s t-distribution was first recognized. Gosset discovered that the effect of estimated variances could be described exactly in a simplified problem where $n$ independent observations $X_1, \ldots, X_n$ are taken from a normal distribution, $N(\mu, \sigma)$. The sample mean, $\bar{X} = (X_1 + \ldots + X_n)/n$, has a $N(\mu, \sigma/\sqrt{n})$ distribution. The random variable

$$Z = \frac{\bar{X} - \mu}{\sigma/\sqrt{n}}$$

has a standard normal distribution. If we estimate $\sigma^2$ by the sample variance, $s^2 = \sum_i (X_i - \bar{X})^2/(n - 1)$, then the resulting statistic,

$$T = \frac{\bar{X} - \mu}{s/\sqrt{n}}$$

no longer has a normal distribution. It has a t-distribution on $n - 1$ degrees of freedom.

Remark. I have written T, instead of the t used by M&M page 505. I find it causes confusion that t refers to both the name of the statistic and the name of its distribution.

As you will soon see, the estimation of the variance has the effect of spreading out the distribution a little beyond what it would be if $\sigma$ were used.
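The spreading-out effect can be seen in a small simulation. The sketch below is an illustration, not part of the lecture: the values μ = 10, σ = 2, n = 5, the seed, and the cutoff 1.96 (the usual two-sided 5% point of the standard normal) are assumptions chosen for the demonstration. It counts how often |Z| and |T| exceed the cutoff over repeated samples.

```python
import math
import random


def z_and_t(sample, mu, sigma):
    """Return (Z, T) for one sample: Z uses the true sigma, T uses s."""
    n = len(sample)
    xbar = sum(sample) / n
    # Sample variance with the n - 1 divisor, as in the text.
    s2 = sum((x - xbar) ** 2 for x in sample) / (n - 1)
    z = (xbar - mu) / (sigma / math.sqrt(n))
    t = (xbar - mu) / (math.sqrt(s2) / math.sqrt(n))
    return z, t


# Hypothetical settings for the demonstration.
rng = random.Random(1)
mu, sigma, n, trials = 10.0, 2.0, 5, 20000
cutoff = 1.96

z_exceed = 0
t_exceed = 0
for _ in range(trials):
    sample = [rng.gauss(mu, sigma) for _ in range(n)]
    z, t = z_and_t(sample, mu, sigma)
    z_exceed += abs(z) > cutoff
    t_exceed += abs(t) > cutoff

z_rate = z_exceed / trials
t_rate = t_exceed / trials
print(f"P(|Z| > {cutoff}) is about {z_rate:.3f}")
print(f"P(|T| > {cutoff}) is about {t_rate:.3f}")
```

With only five observations per sample, T lands beyond the normal cutoff noticeably more often than Z does, which is the heavier-tailed behaviour the t-distribution on n − 1 degrees of freedom describes.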

1 One Parameter Exponential Families

1 One parameter exponential families

The world of exponential families bridges the gap between the Gaussian family and general distributions. Many properties of Gaussians carry through to exponential families in a fairly precise sense.

• In the Gaussian world, there are exact small sample distributional results (i.e. $t$, $F$, $\chi^2$).
• In the exponential family world, there are approximate distributional results (i.e. deviance tests).
• In the general setting, we can only appeal to asymptotics.

A one-parameter exponential family, $\mathcal{F}$, is a one-parameter family of distributions of the form

$$P_\eta(dx) = \exp\left(\eta \cdot t(x) - \Lambda(\eta)\right) P_0(dx)$$

for some probability measure $P_0$. The parameter $\eta$ is called the natural or canonical parameter and the function $\Lambda$ is called the cumulant generating function, and is simply the normalization needed to make

$$f_\eta(x) = \frac{dP_\eta}{dP_0}(x) = \exp\left(\eta \cdot t(x) - \Lambda(\eta)\right)$$

a proper probability density. The random variable $t(X)$ is the sufficient statistic of the exponential family. Note that $P_0$ does not have to be a distribution on $\mathbb{R}$, but these are of course the simplest examples.

1.0.1 A first example: Gaussian with linear sufficient statistic

Consider the standard normal distribution

$$P_0(A) = \int_A \frac{e^{-z^2/2}}{\sqrt{2\pi}}\, dz$$

and let $t(x) = x$. Then, the exponential family is

$$P_\eta(dx) \propto \frac{e^{\eta \cdot x - x^2/2}}{\sqrt{2\pi}}$$

and we see that $\Lambda(\eta) = \eta^2/2$.

```python
import numpy as np
import matplotlib.pyplot as plt

# Plot the cumulant generating function Lambda(eta) = eta^2 / 2 on [-2, 2].
eta = np.linspace(-2, 2, 101)
CGF = eta**2 / 2.
plt.plot(eta, CGF)
A = plt.gca()
A.set_xlabel(r'$\eta$', size=20)
A.set_ylabel(r'$\Lambda(\eta)$', size=20)
f = plt.gcf()
```

Thus, the exponential family in this setting is the collection

$$\mathcal{F} = \{N(\eta, 1) : \eta \in \mathbb{R}\}.$$

1.0.2 Normal with quadratic sufficient statistic on $\mathbb{R}^d$

As a second example, take $P_0 = N(0, I_{d \times d})$, i.e.
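The claim that Λ(η) = η²/2 in the first example can be checked numerically: Λ(η) is the log of the integral of exp(η·x) against the standard normal density. The sketch below uses a plain trapezoid rule from the standard library. The function names, the integration range [-12, 12], and the step count are assumptions made for the illustration.

```python
import math


def standard_normal_pdf(z):
    """Density of P0 = N(0, 1)."""
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)


def cgf_numeric(eta, lower=-12.0, upper=12.0, steps=24000):
    """Approximate Lambda(eta) = log E_0[exp(eta * X)] by the trapezoid rule."""
    h = (upper - lower) / steps
    total = 0.0
    for i in range(steps + 1):
        x = lower + i * h
        weight = 0.5 if i in (0, steps) else 1.0
        total += weight * math.exp(eta * x) * standard_normal_pdf(x)
    return math.log(total * h)


etas = [-2.0, -1.0, 0.0, 1.0, 2.0]
errors = [abs(cgf_numeric(eta) - eta ** 2 / 2.0) for eta in etas]
for eta, err in zip(etas, errors):
    print(f"eta = {eta:+.1f}: |numeric - eta^2/2| = {err:.2e}")
```

The numerical values should agree with η²/2 to many decimal places over this range, consistent with the tilted measure being N(η, 1).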

On the Meaning and Use of Kurtosis

Psychological Methods, 1997, Vol. 2, No. 3, 292-307. Copyright 1997 by the American Psychological Association, Inc. 1082-989X/97/$3.00

On the Meaning and Use of Kurtosis. Lawrence T. DeCarlo, Fordham University

For symmetric unimodal distributions, positive kurtosis indicates heavy tails and peakedness relative to the normal distribution, whereas negative kurtosis indicates light tails and flatness. Many textbooks, however, describe or illustrate kurtosis incompletely or incorrectly. In this article, kurtosis is illustrated with well-known distributions, and aspects of its interpretation and misinterpretation are discussed. The role of kurtosis in testing univariate and multivariate normality; as a measure of departures from normality; in issues of robustness, outliers, and bimodality; in generalized tests and estimators, as well as limitations of and alternatives to the kurtosis measure β₂, are discussed.

It is typically noted in introductory statistics courses that distributions can be characterized in terms of central tendency, variability, and shape. With respect to shape, virtually every textbook defines and illustrates skewness. On the other hand, another aspect of shape, which is kurtosis, is either not discussed or, worse yet, is often described or illustrated incorrectly. Kurtosis is also frequently not reported in research articles, in spite of the fact that virtually every statistical package provides a measure of kurtosis.

[...] standard deviation. The normal distribution has a kurtosis of 3, and β₂ − 3 is often used so that the reference normal distribution has a kurtosis of zero (β₂ − 3 is sometimes denoted as γ₂). A sample counterpart to β₂ can be obtained by replacing the population moments with the sample moments, which gives

$$b_2 = \frac{\sum (X_i - \bar{X})^4 / n}{\left(\sum (X_i - \bar{X})^2 / n\right)^2}.$$
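The sample kurtosis b₂ defined by this ratio of sample moments is straightforward to compute. The sketch below is a minimal illustration. The function name and the five-point data set are hypothetical, chosen so the arithmetic can be followed by hand.

```python
def sample_kurtosis_b2(values):
    """b2 = [sum (x - mean)^4 / n] / [sum (x - mean)^2 / n]^2."""
    n = len(values)
    mean = sum(values) / n
    m2 = sum((x - mean) ** 2 for x in values) / n  # second sample moment
    m4 = sum((x - mean) ** 4 for x in values) / n  # fourth sample moment
    return m4 / m2 ** 2


# Hypothetical data: deviations from the mean are -2, -1, 0, 1, 2,
# so m2 = 10/5 = 2 and m4 = 34/5 = 6.8.
data = [1, 2, 3, 4, 5]
b2 = sample_kurtosis_b2(data)
excess = b2 - 3  # the "b2 - 3" convention, zero for the normal reference
print(f"b2 = {b2:.2f}, b2 - 3 = {excess:.2f}")
```

For this evenly spaced sample b₂ is below 3, so b₂ − 3 is negative, which the abstract associates with light tails and flatness relative to the normal distribution.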

Approaching Mean-Variance Efficiency for Large Portfolios

Approaching Mean-Variance Efficiency for Large Portfolios ∗ Mengmeng Ao† Yingying Li‡ Xinghua Zheng§ First Draft: October 6, 2014. This Draft: November 11, 2017.

Abstract: This paper studies the large dimensional Markowitz optimization problem. Given any risk constraint level, we introduce a new approach for estimating the optimal portfolio, which is developed through a novel unconstrained regression representation of the mean-variance optimization problem, combined with high-dimensional sparse regression methods. Our estimated portfolio, under a mild sparsity assumption, asymptotically achieves mean-variance efficiency and meanwhile effectively controls the risk. To the best of our knowledge, this is the first time that these two goals can be simultaneously achieved for large portfolios. The superior properties of our approach are demonstrated via comprehensive simulation and empirical studies.

Keywords: Markowitz optimization; Large portfolio selection; Unconstrained regression, LASSO; Sharpe ratio

∗Research partially supported by the RGC grants GRF16305315, GRF 16502014 and GRF 16518716 of the HKSAR, and The Fundamental Research Funds for the Central Universities 20720171073. †Wang Yanan Institute for Studies in Economics & Department of Finance, School of Economics, Xiamen University, China. [email protected] ‡Hong Kong University of Science and Technology, HKSAR. [email protected] §Hong Kong University of Science and Technology, HKSAR. [email protected]

1 INTRODUCTION

1.1 Markowitz Optimization Enigma

The groundbreaking mean-variance portfolio theory proposed by Markowitz (1952) continues to play significant roles in research and practice. The optimal mean-variance portfolio has a simple explicit expression that only depends on two population characteristics, the mean and the covariance matrix of asset returns. Under the ideal situation when the underlying mean and covariance matrix are known, mean-variance investors can easily compute the optimal portfolio weights based on their preferred level of risk.

Characteristics and Statistics of Digital Remote Sensing Imagery (1)

Characteristics and statistics of digital remote sensing imagery (1)

Digital Image

• With raster data structure, each image is treated as an array of values of the pixels.
• Image data is organized as rows and columns (or lines and pixels), starting from the upper left corner of the image.
• Each pixel (picture element) is treated as a separate unit.

Statistics of Digital Images Help:

• Look at the frequency of occurrence of individual brightness values in the image displayed;
• View individual pixel brightness values at specific locations or within a geographic area;
• Compute univariate descriptive statistics to determine if there are unusual anomalies in the image data; and
• Compute multivariate statistics to determine the amount of between-band correlation (e.g., to identify redundancy).

Statistics of Digital Images

It is necessary to calculate fundamental univariate and multivariate statistics of the multispectral remote sensor data. This involves identification and calculation of

– maximum and minimum value
– the range, mean, standard deviation
– between-band variance-covariance matrix
– correlation matrix, and
– frequencies of brightness values

The results of the above can be used to produce histograms. Such statistics provide information necessary for processing and analyzing remote sensing data.

A “population” is an infinite or finite set of elements. A “sample” is a subset of the elements taken from a population used to make inferences about certain characteristics of the population (e.g., training signatures).

Large samples drawn randomly from natural populations usually produce a symmetrical frequency distribution. Most values are clustered around the central value, and the frequency of occurrence declines away from this central point.
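The univariate and between-band statistics listed above can be sketched for a toy two-band image. Everything in the example is an assumption made for illustration: the 2×2 brightness values, the band names, and the helper functions are hypothetical, and the sample (n − 1) divisor is one common convention that the slides do not specify.

```python
import math
from collections import Counter


def band_summary(pixels):
    """Univariate statistics for one band given a flat list of brightness values."""
    n = len(pixels)
    mean = sum(pixels) / n
    variance = sum((p - mean) ** 2 for p in pixels) / (n - 1)
    return {
        "min": min(pixels),
        "max": max(pixels),
        "range": max(pixels) - min(pixels),
        "mean": mean,
        "std": math.sqrt(variance),
        "frequencies": Counter(pixels),  # histogram counts per brightness value
    }


def band_covariance(a, b):
    """Sample covariance between two bands of equal length."""
    n = len(a)
    mean_a = sum(a) / n
    mean_b = sum(b) / n
    return sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b)) / (n - 1)


def band_correlation(a, b):
    """Between-band correlation: covariance scaled by both standard deviations."""
    return band_covariance(a, b) / math.sqrt(
        band_covariance(a, a) * band_covariance(b, b)
    )


# Hypothetical 2x2 image, stored row by row from the upper left corner.
band_red = [1, 2, 3, 4]
band_nir = [2, 4, 6, 8]  # exactly twice the red band, so fully redundant

red_stats = band_summary(band_red)
cov_red_nir = band_covariance(band_red, band_nir)
corr_red_nir = band_correlation(band_red, band_nir)
print(red_stats["mean"], red_stats["range"], cov_red_nir, corr_red_nir)
```

Because the second band is an exact multiple of the first, the correlation comes out at 1, the extreme case of the between-band redundancy mentioned above.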

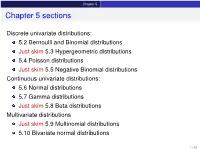

Chapter 5 Sections

Chapter 5 sections

Discrete univariate distributions:
5.2 Bernoulli and Binomial distributions
5.3 Hypergeometric distributions (just skim)
5.4 Poisson distributions
5.5 Negative Binomial distributions (just skim)

Continuous univariate distributions:
5.6 Normal distributions
5.7 Gamma distributions
5.8 Beta distributions (just skim)

Multivariate distributions:
5.9 Multinomial distributions (just skim)
5.10 Bivariate normal distributions

5.1 Introduction

Families of distributions

How:
• Parameter and parameter space
• pf/pdf and cdf; new notation: $f(x \mid \text{parameters})$
• Mean, variance and the m.g.f. $\psi(t)$
• Features, connections to other distributions, approximation
• Reasoning behind a distribution

Why:
• Natural justification for certain experiments
• A model for the uncertainty in an experiment

All models are wrong, but some are useful – George Box

5.2 Bernoulli and Binomial distributions

Bernoulli distributions

Def: Bernoulli distributions – Bernoulli(p). A r.v. $X$ has the Bernoulli distribution with parameter $p$ if $P(X = 1) = p$ and $P(X = 0) = 1 - p$. The pf of $X$ is

$$f(x \mid p) = \begin{cases} p^x (1 - p)^{1 - x} & \text{for } x = 0, 1 \\ 0 & \text{otherwise} \end{cases}$$

Parameter space: $p \in [0, 1]$

In an experiment with only two possible outcomes, “success” and “failure”, let $X$ = number of successes. Then $X \sim \text{Bernoulli}(p)$ where $p$ is the probability of success.

$E(X) = p$, $\mathrm{Var}(X) = p(1 - p)$ and $\psi(t) = E(e^{tX}) = p e^t + (1 - p)$

The cdf is

$$F(x \mid p) = \begin{cases} 0 & \text{for } x < 0 \\ 1 - p & \text{for } 0 \le x < 1 \\ 1 & \text{for } x \ge 1 \end{cases}$$

Binomial distributions

Def: Binomial distributions – Binomial(n, p). A r.v.
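The Bernoulli pf, cdf, and m.g.f. translate directly into code. The sketch below is a minimal illustration with hypothetical function names and an arbitrary p = 0.3. It also recovers E(X) and Var(X) from the pf by summing over the two support points.

```python
import math


def bernoulli_pf(x, p):
    """pf: p^x (1 - p)^(1 - x) for x in {0, 1}, and 0 otherwise."""
    if x in (0, 1):
        return p ** x * (1.0 - p) ** (1 - x)
    return 0.0


def bernoulli_cdf(x, p):
    """cdf: 0 for x < 0, 1 - p for 0 <= x < 1, 1 for x >= 1."""
    if x < 0:
        return 0.0
    if x < 1:
        return 1.0 - p
    return 1.0


def bernoulli_mgf(t, p):
    """m.g.f.: E(e^{tX}) = p e^t + (1 - p)."""
    return p * math.exp(t) + (1.0 - p)


p = 0.3  # arbitrary success probability for the demonstration
mean = sum(x * bernoulli_pf(x, p) for x in (0, 1))
variance = sum((x - mean) ** 2 * bernoulli_pf(x, p) for x in (0, 1))
print(mean, variance, bernoulli_cdf(0.5, p), bernoulli_mgf(0.0, p))
```

Summing over the support reproduces E(X) = p and Var(X) = p(1 − p), and the m.g.f. equals 1 at t = 0, as any m.g.f. must.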

Basic Econometrics / Statistics Statistical Distributions: Normal, T, Chi-Sq, & F

Basic Econometrics / Statistics. Statistical Distributions: Normal, T, Chi-Sq, & F. Course: Basic Econometrics: HC43 / Statistics, B.A. Hons Economics, Semester IV / Semester III, Delhi University. Course Instructor: Siddharth Rathore, Assistant Professor, Economics Department, Gargi College.

APPENDIX C: SOME IMPORTANT PROBABILITY DISTRIBUTIONS

In Appendix B we noted that a random variable (r.v.) can be described by a few characteristics, or moments, of its probability function (PDF or PMF), such as the expected value and variance. This, however, presumes that we know the PDF of that r.v., which is a tall order since there are all kinds of random variables. In practice, however, some random variables occur so frequently that statisticians have determined their PDFs and documented their properties. For our purpose, we will consider only those PDFs that are of direct interest to us. But keep in mind that there are several other PDFs that statisticians have studied which can be found in any standard statistics textbook. In this appendix we will discuss the following four probability distributions:

1. The normal distribution
2. The t distribution
3. The chi-square ($\chi^2$) distribution
4. The F distribution

These probability distributions are important in their own right, but for our purposes they are especially important because they help us to find out the probability distributions of estimators (or statistics), such as the sample mean and sample variance. Recall that estimators are random variables. Equipped with that knowledge, we will be able to draw inferences about their true population values.

Univariate and Multivariate Skewness and Kurtosis 1

Running head: UNIVARIATE AND MULTIVARIATE SKEWNESS AND KURTOSIS

Univariate and Multivariate Skewness and Kurtosis for Measuring Nonnormality: Prevalence, Influence and Estimation. Meghan K. Cain, Zhiyong Zhang, and Ke-Hai Yuan, University of Notre Dame

Author Note: This research is supported by a grant from the U.S. Department of Education (R305D140037). However, the contents of the paper do not necessarily represent the policy of the Department of Education, and you should not assume endorsement by the Federal Government. Correspondence concerning this article can be addressed to Meghan Cain ([email protected]), Ke-Hai Yuan ([email protected]), or Zhiyong Zhang ([email protected]), Department of Psychology, University of Notre Dame, 118 Haggar Hall, Notre Dame, IN 46556.

Abstract: Nonnormality of univariate data has been extensively examined previously (Blanca et al., 2013; Micceri, 1989). However, less is known of the potential nonnormality of multivariate data although multivariate analysis is commonly used in psychological and educational research. Using univariate and multivariate skewness and kurtosis as measures of nonnormality, this study examined 1,567 univariate distributions and 254 multivariate distributions collected from authors of articles published in Psychological Science and the American Education Research Journal. We found that 74% of univariate distributions and 68% of multivariate distributions deviated from normal distributions. In a simulation study using typical values of skewness and kurtosis that we collected, we found that the resulting type I error rates were 17% in a t-test and 30% in a factor analysis under some conditions. Hence, we argue that it is time to routinely report skewness and kurtosis along with other summary statistics such as means and variances.

A Family of Skew-Normal Distributions for Modeling Proportions and Rates with Zeros/Ones Excess

Symmetry, Article. A Family of Skew-Normal Distributions for Modeling Proportions and Rates with Zeros/Ones Excess. Guillermo Martínez-Flórez 1, Víctor Leiva 2,*, Emilio Gómez-Déniz 3 and Carolina Marchant 4

1 Departamento de Matemáticas y Estadística, Facultad de Ciencias Básicas, Universidad de Córdoba, Montería 14014, Colombia; [email protected]
2 Escuela de Ingeniería Industrial, Pontificia Universidad Católica de Valparaíso, 2362807 Valparaíso, Chile
3 Facultad de Economía, Empresa y Turismo, Universidad de Las Palmas de Gran Canaria and TIDES Institute, 35001 Canarias, Spain; [email protected]
4 Facultad de Ciencias Básicas, Universidad Católica del Maule, 3466706 Talca, Chile; [email protected]
* Correspondence: [email protected] or [email protected]

Received: 30 June 2020; Accepted: 19 August 2020; Published: 1 September 2020

Abstract: In this paper, we consider skew-normal distributions for constructing a new distribution which allows us to model proportions and rates with zero/one inflation as an alternative to the inflated beta distributions. The new distribution is a mixture between a Bernoulli distribution for explaining the zero/one excess and a censored skew-normal distribution for the continuous variable. The maximum likelihood method is used for parameter estimation. Observed and expected Fisher information matrices are derived to conduct likelihood-based inference in this new type of skew-normal distribution. Given the flexibility of the new distributions, we are able to show, in real data scenarios, the good performance of our proposal.

Keywords: beta distribution; centered skew-normal distribution; maximum-likelihood methods; Monte Carlo simulations; proportions; R software; rates; zero/one inflated data

1.