Basic Econometrics / Statistics Statistical Distributions: Normal, T, Chi-Sq, & F

Total Page:16

File Type:pdf, Size:1020Kb


Recommended publications


Circularly-Symmetric Complex Normal Ratio Distribution for Scalar Transmissibility Functions. Part II: Probabilistic Model and Validation

Mechanical Systems and Signal Processing 80 (2016) 78–98. Journal homepage: www.elsevier.com/locate/ymssp

Wang-Ji Yan, Wei-Xin Ren. Department of Civil Engineering, Hefei University of Technology, Hefei, Anhui 23009, China

Article history: Received 29 May 2015; received in revised form 3 February 2016; accepted 27 February 2016; available online 23 March 2016.

Keywords: Transmissibility; Uncertainty quantification; Signal processing; Statistics; Structural dynamics; Ambient vibration

Abstract: In Part I of this study, some new theorems, corollaries and lemmas on the circularly-symmetric complex normal ratio distribution have been mathematically proved. This Part II paper is dedicated to providing a rigorous treatment of the statistical properties of raw scalar transmissibility functions at an arbitrary frequency line. On the basis of the statistics of raw FFT coefficients and the circularly-symmetric complex normal ratio distribution, explicit closed-form probabilistic models are established for both multivariate and univariate scalar transmissibility functions. Also, remarks on the independence of transmissibility functions at different frequency lines and the shape of the probability density function (PDF) of the univariate case are presented. The statistical structures of the probabilistic models are concise, compact and easy to implement with a low computational effort. They hold for general stationary vector processes, either Gaussian stochastic processes or non-Gaussian stochastic processes. The accuracy of the proposed models is verified using a numerical example as well as field test data of a high-rise building and a long-span cable-stayed bridge.

1. How Different Is the T Distribution from the Normal?

Statistics 101–106, Lecture 7 (20 October 98), © David Pollard

Read M&M §7.1 and §7.2, ignoring starred parts. Reread M&M §3.2.

The effects of estimated variances on normal approximations. t-distributions. Comparison of two means: pooling of estimates of variances, or paired observations.

In Lecture 6, when discussing comparison of two Binomial proportions, I was content to estimate unknown variances when calculating statistics that were to be treated as approximately normally distributed. You might have worried about the effect of variability of the estimate. W. S. Gosset (“Student”) considered a similar problem in a very famous 1908 paper, where the role of Student’s t-distribution was first recognized.

Gosset discovered that the effect of estimated variances could be described exactly in a simplified problem where $n$ independent observations $X_1, \dots, X_n$ are taken from a normal distribution, $N(\mu, \sigma)$. The sample mean, $\bar{X} = (X_1 + \dots + X_n)/n$, has a $N(\mu, \sigma/\sqrt{n})$ distribution. The random variable

$$Z = \frac{\bar{X} - \mu}{\sigma/\sqrt{n}}$$

has a standard normal distribution. If we estimate $\sigma^2$ by the sample variance, $s^2 = \sum_i (X_i - \bar{X})^2/(n-1)$, then the resulting statistic,

$$T = \frac{\bar{X} - \mu}{s/\sqrt{n}},$$

no longer has a normal distribution. It has a t-distribution on $n-1$ degrees of freedom.

Remark. I have written $T$, instead of the $t$ used by M&M page 505. I find it causes confusion that $t$ refers to both the name of the statistic and the name of its distribution.

As you will soon see, the estimation of the variance has the effect of spreading out the distribution a little beyond what it would be if $\sigma$ were used.
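The spreading-out effect described in this excerpt can be seen in a small simulation. The following is only a sketch under stated assumptions, not part of the lecture: the function name `tail_fractions`, the sample size n = 5, the cutoff 1.96, the seed, and the number of repetitions are all illustrative choices. It draws standard normal samples, computes Z (using the known standard deviation) and T (using the sample standard deviation), and counts how often each exceeds the cutoff in absolute value.

```python
import random
import statistics


def tail_fractions(n=5, reps=20000, cutoff=1.96, seed=0):
    """Estimate P(|Z| > cutoff) and P(|T| > cutoff) by simulation.

    Samples come from N(0, 1), so mu = 0 and sigma = 1 are known here.
    All defaults are illustrative assumptions.
    """
    rng = random.Random(seed)
    z_hits = 0
    t_hits = 0
    for _ in range(reps):
        xs = [rng.gauss(0.0, 1.0) for _ in range(n)]
        xbar = statistics.fmean(xs)
        s = statistics.stdev(xs)          # sample std dev, divisor n - 1
        z = xbar / (1.0 / n ** 0.5)       # uses the true sigma = 1
        t = xbar / (s / n ** 0.5)         # uses the estimated sigma
        z_hits += abs(z) > cutoff
        t_hits += abs(t) > cutoff
    return z_hits / reps, t_hits / reps


z_frac, t_frac = tail_fractions()
print(f"fraction with |Z| > 1.96: {z_frac:.3f}")
print(f"fraction with |T| > 1.96: {t_frac:.3f}")
```

With a small sample size, the fraction for T should come out noticeably larger than the fraction for Z, which is the heavier-tail behaviour the t-distribution accounts for.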

Programming in Stata

Programming and Post-Estimation
• Bootstrapping
• Monte Carlo
• Post-Estimation Simulation (Clarify)
• Extending Clarify to Other Models – Censored Probit Example

What is Bootstrapping?
• A computer-simulated nonparametric technique for making inferences about a population parameter based on sample statistics.
• If the sample is a good approximation of the population, the sampling distribution of interest can be estimated by generating a large number of new samples from the original.
• Useful when no analytic formula for the sampling distribution is available.

How do I do it?
1. Obtain a sample from the population of interest. Call this $x = (x_1, x_2, \dots, x_n)$.
2. Re-sample based on $x$ by randomly sampling with replacement from it.
3. Generate many such samples, $x^1, x^2, \dots, x^B$, each of length $n$.
4. Estimate the desired parameter in each sample, $s(x^1), s(x^2), \dots, s(x^B)$.
5. For instance, the bootstrap estimate of the standard error is the standard deviation of the bootstrap replications:

$$\widehat{SE}_B = \sqrt{\frac{\sum_{b=1}^{B}\left[s(x^b) - \sum_{b'=1}^{B} s(x^{b'})/B\right]^2}{B-1}}$$

Example: Standard Error of a Sample Mean, Canned in Stata
use "I:\general\PRISM Programming\auto.dta", clear
Type: sum mpg
Type: bootstrap "summarize mpg" r(mean), reps(1000)
≈ 21.2973 ± 1.96 × .6790806

Example: Difference of Medians Test
Type: sum length, detail
Type: return list
Type: bootstrap "mymedian" r(diff), reps(1000)
The medians are not very different.
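The five resampling steps in this excerpt can be sketched outside Stata as well. The Python below is a minimal illustration, assuming a made-up sample (the values in `sample` are hypothetical and are not the `mpg` variable from `auto.dta`); `bootstrap_se` is an illustrative helper name, not a Stata or library function.

```python
import random
import statistics


def bootstrap_se(data, stat=statistics.fmean, reps=1000, seed=0):
    """Bootstrap standard error of `stat`.

    Resamples `data` with replacement `reps` times, evaluates `stat` on
    each resample, and returns the standard deviation (divisor reps - 1)
    of those replications.
    """
    rng = random.Random(seed)
    n = len(data)
    replications = [stat(rng.choices(data, k=n)) for _ in range(reps)]
    return statistics.stdev(replications)


# Hypothetical sample, for illustration only.
sample = [21, 22, 19, 30, 25, 18, 24, 17, 28, 20]

se_boot = bootstrap_se(sample)
se_formula = statistics.stdev(sample) / len(sample) ** 0.5  # s / sqrt(n)

print(f"bootstrap SE of the mean: {se_boot:.3f}")
print(f"formula   SE of the mean: {se_formula:.3f}")
```

For the sample mean an analytic formula exists, so the two numbers can be compared as a sanity check; the bootstrap becomes more useful for statistics such as a difference of medians, which can be handled by passing a different function as `stat`.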

The Cascade Bayesian Approach: Prior Transformation for a Controlled Integration of Internal Data, External Data and Scenarios

risks Article The Cascade Bayesian Approach: Prior Transformation for a Controlled Integration of Internal Data, External Data and Scenarios Bertrand K. Hassani 1,2,3,* and Alexis Renaudin 4 1 University College London Computer Science, 66-72 Gower Street, London WC1E 6EA, UK 2 LabEx ReFi, Université Paris 1 Panthéon-Sorbonne, CESCES, 106 bd de l’Hôpital, 75013 Paris, France 3 Capegemini Consulting, Tour Europlaza, 92400 Paris-La Défense, France 4 Aon Benfield, The Aon Centre, 122 Leadenhall Street, London EC3V 4AN, UK; [email protected] * Correspondence: [email protected]; Tel.: +44-(0)753-016-7299 Received: 14 March 2018; Accepted: 23 April 2018; Published: 27 April 2018 Abstract: According to the last proposals of the Basel Committee on Banking Supervision, banks or insurance companies under the advanced measurement approach (AMA) must use four different sources of information to assess their operational risk capital requirement. The fourth includes ’business environment and internal control factors’, i.e., qualitative criteria, whereas the three main quantitative sources available to banks for building the loss distribution are internal loss data, external loss data and scenario analysis. This paper proposes an innovative methodology to bring together these three different sources in the loss distribution approach (LDA) framework through a Bayesian strategy. The integration of the different elements is performed in two different steps to ensure an internal data-driven model is obtained. In the first step, scenarios are used to inform the prior distributions and external data inform the likelihood component of the posterior function. In the second step, the initial posterior function is used as the prior distribution and the internal loss data inform the likelihood component of the second posterior function. -

1 One Parameter Exponential Families

1 One parameter exponential families

The world of exponential families bridges the gap between the Gaussian family and general distributions. Many properties of Gaussians carry through to exponential families in a fairly precise sense.
• In the Gaussian world, there are exact small sample distributional results (i.e. $t$, $F$, $\chi^2$).
• In the exponential family world, there are approximate distributional results (i.e. deviance tests).
• In the general setting, we can only appeal to asymptotics.

A one-parameter exponential family, $\mathcal{F}$, is a one-parameter family of distributions of the form

$$P_\eta(dx) = \exp\left(\eta \cdot t(x) - \Lambda(\eta)\right) P_0(dx)$$

for some probability measure $P_0$. The parameter $\eta$ is called the natural or canonical parameter and the function $\Lambda$ is called the cumulant generating function, and is simply the normalization needed to make

$$f_\eta(x) = \frac{dP_\eta}{dP_0}(x) = \exp\left(\eta \cdot t(x) - \Lambda(\eta)\right)$$

a proper probability density. The random variable $t(X)$ is the sufficient statistic of the exponential family. Note that $P_0$ does not have to be a distribution on $\mathbb{R}$, but these are of course the simplest examples.

1.0.1 A first example: Gaussian with linear sufficient statistic

Consider the standard normal distribution

$$P_0(A) = \int_A \frac{e^{-z^2/2}}{\sqrt{2\pi}}\,dz$$

and let $t(x) = x$. Then, the exponential family is

$$P_\eta(dx) \propto \frac{e^{\eta \cdot x - x^2/2}}{\sqrt{2\pi}}\,dx$$

and we see that $\Lambda(\eta) = \eta^2/2$.

```python
import numpy as np
import matplotlib.pyplot as plt

# Plot the cumulant generating function Lambda(eta) = eta^2 / 2
eta = np.linspace(-2, 2, 101)
CGF = eta**2 / 2.
plt.plot(eta, CGF)
A = plt.gca()
A.set_xlabel(r'$\eta$', size=20)
A.set_ylabel(r'$\Lambda(\eta)$', size=20)
f = plt.gcf()
```

Thus, the exponential family in this setting is the collection $\mathcal{F} = \{N(\eta, 1) : \eta \in \mathbb{R}\}$.

1.0.2 Normal with quadratic sufficient statistic on $\mathbb{R}^d$

As a second example, take $P_0 = N(0, I_{d \times d})$, i.e.

What Is Bayesian Inference? Bayesian Inference Is at the Core of the Bayesian Approach, Which Is an Approach That Allows Us to Represent Uncertainty As a Probability

Learn to Use Bayesian Inference in SPSS With Data From the National Child Measurement Programme (2016–2017). © 2019 SAGE Publications Ltd. All Rights Reserved. This PDF has been generated from SAGE Research Methods Datasets.

Student Guide

Introduction

This example dataset introduces Bayesian Inference. Bayesian statistics (the general name for all Bayesian-related topics, including inference) has become increasingly popular in recent years, due predominantly to the growth of ever more powerful and sophisticated statistical software. However, Bayesian statistics grew from the ideas of an English mathematician, Thomas Bayes, who lived and worked in the first half of the 18th century, and these ideas have been refined and adapted by statisticians and mathematicians ever since. Despite its longevity, the Bayesian approach did not become mainstream: the Frequentist approach was and remains the dominant means to conduct statistical analysis. However, there is a renewed interest in Bayesian statistics, partly prompted by software development and partly by a growing critique of the limitations of the null hypothesis significance testing which dominates the Frequentist approach. This renewed interest can be seen in the incorporation of Bayesian analysis into mainstream statistical software, such as IBM® SPSS®, and in many major statistics textbooks.

Bayesian Inference is at the heart of Bayesian statistics and is different from Frequentist approaches due to how it views probability. In the Frequentist approach, probability is the product of the frequency of random events occurring

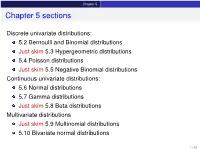

Chapter 5 Sections

Chapter 5 sections

Discrete univariate distributions:
5.2 Bernoulli and Binomial distributions
Just skim: 5.3 Hypergeometric distributions
5.4 Poisson distributions
Just skim: 5.5 Negative Binomial distributions

Continuous univariate distributions:
5.6 Normal distributions
5.7 Gamma distributions
Just skim: 5.8 Beta distributions

Multivariate distributions:
Just skim: 5.9 Multinomial distributions
5.10 Bivariate normal distributions

5.1 Introduction

Families of distributions

How:
• Parameter and parameter space
• pf/pdf and cdf, new notation: $f(x \mid \text{parameters})$
• Mean, variance and the m.g.f. $\psi(t)$
• Features, connections to other distributions, approximation
• Reasoning behind a distribution

Why:
• Natural justification for certain experiments
• A model for the uncertainty in an experiment

"All models are wrong, but some are useful" – George Box

5.2 Bernoulli and Binomial distributions

Bernoulli distributions

Def: Bernoulli distributions – Bernoulli(p). A r.v. $X$ has the Bernoulli distribution with parameter $p$ if $P(X = 1) = p$ and $P(X = 0) = 1 - p$. The pf of $X$ is

$$f(x \mid p) = \begin{cases} p^x (1-p)^{1-x} & \text{for } x = 0, 1 \\ 0 & \text{otherwise} \end{cases}$$

Parameter space: $p \in [0, 1]$

In an experiment with only two possible outcomes, “success” and “failure”, let $X$ = number of successes. Then $X \sim \text{Bernoulli}(p)$ where $p$ is the probability of success.

$E(X) = p$, $\mathrm{Var}(X) = p(1 - p)$ and $\psi(t) = E(e^{tX}) = pe^t + (1 - p)$

The cdf is

$$F(x \mid p) = \begin{cases} 0 & \text{for } x < 0 \\ 1 - p & \text{for } 0 \le x < 1 \\ 1 & \text{for } x \ge 1 \end{cases}$$

Binomial distributions

Def: Binomial distributions – Binomial(n, p). A r.v.
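The Bernoulli definitions in this excerpt translate directly into code. The sketch below is illustrative only: the function names and the choice p = 0.3 are assumptions, not taken from the slides. It implements the pf, the cdf and the m.g.f., and recovers the mean and variance by summing over the two support points.

```python
import math


def bernoulli_pf(x, p):
    """Probability function: p^x (1-p)^(1-x) for x in {0, 1}, else 0."""
    if x in (0, 1):
        return p ** x * (1 - p) ** (1 - x)
    return 0.0


def bernoulli_cdf(x, p):
    """Cdf: 0 for x < 0, 1 - p for 0 <= x < 1, 1 for x >= 1."""
    if x < 0:
        return 0.0
    if x < 1:
        return 1 - p
    return 1.0


def bernoulli_mgf(t, p):
    """Moment generating function E(e^{tX}) = p e^t + (1 - p)."""
    return p * math.exp(t) + (1 - p)


p = 0.3  # illustrative parameter value
mean = sum(x * bernoulli_pf(x, p) for x in (0, 1))
variance = sum((x - mean) ** 2 * bernoulli_pf(x, p) for x in (0, 1))

print(f"E(X)   = {mean:.2f}")
print(f"Var(X) = {variance:.2f}")
print(f"mgf(0) = {bernoulli_mgf(0.0, p):.2f}")
```

The computed mean and variance can be compared with the closed forms p and p(1 − p) stated in the excerpt.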

The Normal Moment Generating Function

MSc. Econ: MATHEMATICAL STATISTICS, 1996

The Moment Generating Function of the Normal Distribution

Recall that the probability density function of a normally distributed random variable $x$ with a mean of $E(x) = \mu$ and a variance of $V(x) = \sigma^2$ is

$$N(x; \mu, \sigma^2) = \frac{1}{\sqrt{2\pi\sigma^2}}\, e^{-\frac{1}{2}(x-\mu)^2/\sigma^2}. \qquad (1)$$

Our object is to find the moment generating function which corresponds to this distribution. To begin, let us consider the case where $\mu = 0$ and $\sigma^2 = 1$. Then we have a standard normal, denoted by $N(z; 0, 1)$, and the corresponding moment generating function is defined by

$$M_z(t) = E(e^{zt}) = \int e^{zt}\, \frac{1}{\sqrt{2\pi}}\, e^{-\frac{1}{2}z^2}\, dz = e^{\frac{1}{2}t^2}. \qquad (2)$$

To demonstrate this result, the exponential terms may be gathered and rearranged to give

$$\exp\{zt\}\exp\{-\tfrac{1}{2}z^2\} = \exp\{-\tfrac{1}{2}z^2 + zt\} = \exp\{-\tfrac{1}{2}(z-t)^2\}\exp\{\tfrac{1}{2}t^2\}. \qquad (3)$$

Then

$$M_z(t) = e^{\frac{1}{2}t^2} \int \frac{1}{\sqrt{2\pi}}\, e^{-\frac{1}{2}(z-t)^2}\, dz = e^{\frac{1}{2}t^2}, \qquad (4)$$

where the final equality follows from the fact that the expression under the integral is the $N(z; \mu = t, \sigma^2 = 1)$ probability density function, which integrates to unity.

Now consider the moment generating function of the Normal $N(x; \mu, \sigma^2)$ distribution when $\mu$ and $\sigma^2$ have arbitrary values. This is given by

$$M_x(t) = E(e^{xt}) = \int e^{xt}\, \frac{1}{\sqrt{2\pi\sigma^2}}\, e^{-\frac{1}{2}(x-\mu)^2/\sigma^2}\, dx. \qquad (5)$$

Define

$$z = \frac{x - \mu}{\sigma}, \quad \text{which implies} \quad x = z\sigma + \mu. \qquad (6)$$

Then, using the change-of-variable technique, we get

$$M_x(t) = e^{\mu t} \int e^{z\sigma t}\, \frac{1}{\sqrt{2\pi\sigma^2}}\, e^{-\frac{1}{2}z^2}\, \frac{dx}{dz}\, dz = e^{\mu t} \int e^{z\sigma t}\, \frac{1}{\sqrt{2\pi}}\, e^{-\frac{1}{2}z^2}\, dz = e^{\mu t}\, e^{\frac{1}{2}\sigma^2 t^2}. \qquad (7)$$

Here, to establish the first equality, we have used $dx/dz = \sigma$.
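The closed form derived in this excerpt, a moment generating function of exp(μt + σ²t²/2), can be checked numerically. The sketch below is an illustration under assumptions: the parameter values, the integration limits (12 standard deviations either side of the mean) and the trapezoidal rule are arbitrary choices made here, not part of the notes.

```python
import math


def normal_pdf(x, mu, sigma):
    """Density of N(mu, sigma^2) evaluated at x."""
    return math.exp(-0.5 * ((x - mu) / sigma) ** 2) / math.sqrt(2 * math.pi * sigma ** 2)


def mgf_numeric(t, mu, sigma, half_width=12.0, steps=20000):
    """Approximate E(e^{xt}) by the trapezoidal rule on a wide interval."""
    a = mu - half_width * sigma
    b = mu + half_width * sigma
    h = (b - a) / steps
    total = 0.0
    for i in range(steps + 1):
        x = a + i * h
        weight = 0.5 if i in (0, steps) else 1.0  # half weight at endpoints
        total += weight * math.exp(x * t) * normal_pdf(x, mu, sigma)
    return total * h


def mgf_closed_form(t, mu, sigma):
    """Closed form: exp(mu t + sigma^2 t^2 / 2)."""
    return math.exp(mu * t + 0.5 * sigma ** 2 * t ** 2)


mu, sigma, t = 1.0, 2.0, 0.5  # illustrative values
numeric = mgf_numeric(t, mu, sigma)
closed = mgf_closed_form(t, mu, sigma)
print(f"numeric integral: {numeric:.6f}")
print(f"closed form:      {closed:.6f}")
```

The two values should agree to several decimal places for moderate t; for large |t| the integration interval would need to be widened, since the integrand's peak shifts to μ + σ²t.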

A Family of Skew-Normal Distributions for Modeling Proportions and Rates with Zeros/Ones Excess

Symmetry, Article. A Family of Skew-Normal Distributions for Modeling Proportions and Rates with Zeros/Ones Excess

Guillermo Martínez-Flórez 1, Víctor Leiva 2,*, Emilio Gómez-Déniz 3 and Carolina Marchant 4

1 Departamento de Matemáticas y Estadística, Facultad de Ciencias Básicas, Universidad de Córdoba, Montería 14014, Colombia; [email protected]
2 Escuela de Ingeniería Industrial, Pontificia Universidad Católica de Valparaíso, 2362807 Valparaíso, Chile
3 Facultad de Economía, Empresa y Turismo, Universidad de Las Palmas de Gran Canaria and TIDES Institute, 35001 Canarias, Spain; [email protected]
4 Facultad de Ciencias Básicas, Universidad Católica del Maule, 3466706 Talca, Chile; [email protected]
* Correspondence: [email protected] or [email protected]

Received: 30 June 2020; Accepted: 19 August 2020; Published: 1 September 2020

Abstract: In this paper, we consider skew-normal distributions for constructing a new distribution which allows us to model proportions and rates with zero/one inflation as an alternative to the inflated beta distributions. The new distribution is a mixture between a Bernoulli distribution for explaining the zero/one excess and a censored skew-normal distribution for the continuous variable. The maximum likelihood method is used for parameter estimation. Observed and expected Fisher information matrices are derived to conduct likelihood-based inference in this new type of skew-normal distribution. Given the flexibility of the new distributions, we are able to show, in real data scenarios, the good performance of our proposal.

Keywords: beta distribution; centered skew-normal distribution; maximum-likelihood methods; Monte Carlo simulations; proportions; R software; rates; zero/one inflated data

1.

Practical Meta-Analysis -- Lipsey & Wilson Overview

Practical Meta-Analysis -- Lipsey & Wilson

Practical Meta-Analysis. David B. Wilson. American Evaluation Association, Orlando, Florida, October 3, 1999

The Great Debate
• 1952: Hans J. Eysenck concluded that there were no favorable effects of psychotherapy, starting a raging debate
• 20 years of evaluation research and hundreds of studies failed to resolve the debate
• 1978: To prove Eysenck wrong, Gene V. Glass statistically aggregated the findings of 375 psychotherapy outcome studies
• Glass (and colleague Smith) concluded that psychotherapy did indeed work
• Glass called his method “meta-analysis”

The Emergence of Meta-Analysis
• Ideas behind meta-analysis predate Glass’ work by several decades
– R. A. Fisher (1944)
• “When a number of quite independent tests of significance have been made, it sometimes happens that although few or none can be claimed individually as significant, yet the aggregate gives an impression that the probabilities are on the whole lower than would often have been obtained by chance” (p. 99).
• Source of the idea of cumulating probability values
– W. G. Cochran (1953)
• Discusses a method of averaging means across independent studies

The Logic of Meta-Analysis
• Traditional methods of review focus on statistical significance testing
• Significance testing is not well suited to this task
– highly dependent on sample size
– null finding does not carry the same “weight” as a significant finding
• Meta-analysis changes the focus to the direction and magnitude of the effects across studies
– Isn’t this what we are

Statistical Power in Meta-Analysis Jin Liu University of South Carolina - Columbia

University of South Carolina Scholar Commons Theses and Dissertations 12-14-2015 Statistical Power in Meta-Analysis Jin Liu University of South Carolina - Columbia Follow this and additional works at: https://scholarcommons.sc.edu/etd Part of the Educational Psychology Commons Recommended Citation Liu, J.(2015). Statistical Power in Meta-Analysis. (Doctoral dissertation). Retrieved from https://scholarcommons.sc.edu/etd/3221 This Open Access Dissertation is brought to you by Scholar Commons. It has been accepted for inclusion in Theses and Dissertations by an authorized administrator of Scholar Commons. For more information, please contact [email protected]. STATISTICAL POWER IN META-ANALYSIS by Jin Liu Bachelor of Arts Chongqing University of Arts and Sciences, 2009 Master of Education University of South Carolina, 2012 Submitted in Partial Fulfillment of the Requirements For the Degree of Doctor of Philosophy in Educational Psychology and Research College of Education University of South Carolina 2015 Accepted by: Xiaofeng Liu, Major Professor Christine DiStefano, Committee Member Robert Johnson, Committee Member Brian Habing, Committee Member Lacy Ford, Senior Vice Provost and Dean of Graduate Studies © Copyright by Jin Liu, 2015 All Rights Reserved. ii ACKNOWLEDGEMENTS I would like to express my thanks to all committee members who continuously supported me in the dissertation work. My dissertation chair, Xiaofeng Liu, always inspired and trusted me during this study. I could not finish my dissertation at this time without his support. Brian Habing helped me with R coding and research design. I could not finish the coding process so quickly without his instruction. I appreciate the encouragement from Christine DiStefano and Robert Johnson. -

Descriptive Statistics Frequency Distributions and Their Graphs

Chapter 2 Descriptive Statistics

§ 2.1 Frequency Distributions and Their Graphs

Larson & Farber, Elementary Statistics: Picturing the World, 3e

Frequency Distributions

A frequency distribution is a table that shows classes or intervals of data with a count of the number in each class. The frequency f of a class is the number of data points in the class.

| Class (lower – upper class limits) | Frequency, f |
|---|---|
| 1 – 4 | 4 |
| 5 – 8 | 5 |
| 9 – 12 | 3 |
| 13 – 16 | 4 |
| 17 – 20 | 2 |

The class width is the distance between lower (or upper) limits of consecutive classes.

| Class | Frequency, f | Difference of consecutive lower limits |
|---|---|---|
| 1 – 4 | 4 | 5 – 1 = 4 |
| 5 – 8 | 5 | 9 – 5 = 4 |
| 9 – 12 | 3 | 13 – 9 = 4 |
| 13 – 16 | 4 | 17 – 13 = 4 |
| 17 – 20 | 2 | |

The class width is 4. The range is the difference between the maximum and minimum data entries.

Constructing a Frequency Distribution

Guidelines
1. Decide on the number of classes to include. The number of classes should be between 5 and 20; otherwise, it may be difficult to detect any patterns.
2. Find the class width as follows. Determine the range of the data, divide the range by the number of classes, and round up to the next convenient number.
3. Find the class limits. You can use the minimum entry as the lower limit of the first class. To find the remaining lower limits, add the class width to the lower limit of the preceding class. Then find the upper class limits.
4.
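Guidelines 1 to 3 can be sketched in a few lines of Python. This is a hedged illustration, not the textbook's procedure verbatim: it assumes integer-valued data, interprets "round up to the next convenient number" as rounding up to the next whole number, and uses a hypothetical data set (`data` below) invented so that the counts match the example table. The function name `frequency_distribution` is likewise an illustrative choice.

```python
import math


def frequency_distribution(data, num_classes):
    """Build (lower limit, upper limit, frequency) rows for integer data.

    Assumption: the class width is range / num_classes rounded up to the
    next whole number (also when the quotient is already whole).
    """
    lowest, highest = min(data), max(data)
    data_range = highest - lowest
    width = math.floor(data_range / num_classes) + 1
    rows = []
    for i in range(num_classes):
        lower = lowest + i * width        # add the width to the previous lower limit
        upper = lower + width - 1         # upper limit for integer data
        count = sum(lower <= value <= upper for value in data)
        rows.append((lower, upper, count))
    return width, rows


# Hypothetical data chosen to reproduce the counts in the example table.
data = [1, 2, 3, 4, 5, 5, 6, 7, 8, 9, 10, 12, 13, 14, 15, 16, 17, 20]

width, table = frequency_distribution(data, num_classes=5)
print(f"class width: {width}")
for lower, upper, count in table:
    print(f"{lower:>2} - {upper:<2}  f = {count}")
```

With this data the range is 19, so 19 / 5 = 3.8 rounds up to a class width of 4, and the resulting classes are 1–4, 5–8, 9–12, 13–16 and 17–20.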