Brice Prelims 1..16

Total pages: 16

File type: PDF, size: 1020 KB

Recommended publications

Viz Verdi Switcher Guide

Viz Verdi Switcher Guide, Version 1.0. Copyright © 2020 Vizrt. All rights reserved. No part of this software, documentation or publication may be reproduced, transcribed, stored in a retrieval system, translated into any language, computer language, or transmitted in any form or by any means, electronically, mechanically, magnetically, optically, chemically, photocopied, manually, or otherwise, without prior written permission from Vizrt. Vizrt specifically retains title to all Vizrt software. This software is supplied under a license agreement and may only be installed, used or copied in accordance to that agreement. Disclaimer: Vizrt provides this publication “as is” without warranty of any kind, either expressed or implied. This publication may contain technical inaccuracies or typographical errors. While every precaution has been taken in the preparation of this document to ensure that it contains accurate and up-to-date information, the publisher and author assume no responsibility for errors or omissions. Nor is any liability assumed for damages resulting from the use of the information contained in this document. Vizrt’s policy is one of continual development, so the content of this document is periodically subject to be modified without notice. These changes will be incorporated in new editions of the publication. Vizrt may make improvements and/or changes in the product(s) and/or the program(s) described in this publication at any time. Vizrt may have patents or pending patent applications covering subject matters in this document. The furnishing of this document does not give you any license to these patents. Technical Support: For technical support and the latest news of upgrades, documentation, and related products, visit the Vizrt web site at www.vizrt.com.

Coding and Modulation for Digital Television Multimedia Systems and Applications Series

CODING AND MODULATION FOR DIGITAL TELEVISION. MULTIMEDIA SYSTEMS AND APPLICATIONS SERIES. Consulting Editor: Borko Furht, Florida Atlantic University. Recently Published Titles: CELLULAR AUTOMATA TRANSFORMS: Theory and Applications in Multimedia Compression, Encryption, and Modeling, by Olu Lafe, ISBN: 0-7923-7857-1; COMPUTED SYNCHRONIZATION FOR MULTIMEDIA APPLICATIONS, by Charles B. Owen and Fillia Makedon, ISBN: 0-7923-8565-9; STILL IMAGE COMPRESSION ON PARALLEL COMPUTER ARCHITECTURES, by Savitri Bevinakoppa, ISBN: 0-7923-8322-2; INTERACTIVE VIDEO-ON-DEMAND SYSTEMS: Resource Management and Scheduling Strategies, by T. P. Jimmy To and Babak Hamidzadeh, ISBN: 0-7923-8320-6; MULTIMEDIA TECHNOLOGIES AND APPLICATIONS FOR THE 21st CENTURY: Visions of World Experts, by Borko Furht, ISBN: 0-7923-8074-6; INTELLIGENT IMAGE DATABASES: Towards Advanced Image Retrieval, by Yihong Gong, ISBN: 0-7923-8015-0; BUFFERING TECHNIQUES FOR DELIVERY OF COMPRESSED VIDEO IN VIDEO-ON-DEMAND SYSTEMS, by Wu-chi Feng, ISBN: 0-7923-9998-6; HUMAN FACE RECOGNITION USING THIRD-ORDER SYNTHETIC NEURAL NETWORKS, by Okechukwu A. Uwechue and Abhijit S. Pandya, ISBN: 0-7923-9957-9; MULTIMEDIA INFORMATION SYSTEMS, by Marios C. Angelides and Schahram Dustdar, ISBN: 0-7923-9915-3; MOTION ESTIMATION ALGORITHMS FOR VIDEO COMPRESSION, by Borko Furht, Joshua Greenberg and Raymond Westwater, ISBN: 0-7923-9793-2; VIDEO DATA COMPRESSION FOR MULTIMEDIA COMPUTING, edited by Hua Harry Li, Shan Sun, Haluk Derin, ISBN: 0-7923-9790-8; REAL-TIME VIDEO COMPRESSION: Techniques and Algorithms, by Raymond Westwater and

Volunteer Handbook FINAL

Contents: Introduction; HTB Vision; Production Values; Team Expectations – Spiritual; Team Expectations - Practical; Venue Call Times; Staff & Volunteer Structure; Job Positions Summary; Training Process; Most Common Live Production Terms. Introduction: Welcome to HTB Production! We’re so excited to have you reading this handbook to learn more about our team and what being a part of it is like. We believe that production lies at the beating heart of the church, and we exist to help everyone see what God is ‘doing in the room’. We

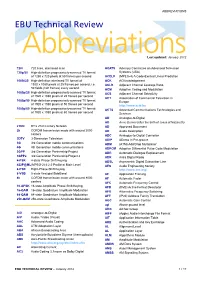

ABBREVIATIONS EBU Technical Review

ABBREVIATIONS, EBU Technical Review. Last updated: January 2012. Numeric entries: 720i: 720 lines, interlaced scan; 720p/50: High-definition progressively-scanned TV format of 1280 x 720 pixels at 50 frames per second; 1080i/25: High-definition interlaced TV format of 1920 x 1080 pixels at 25 frames per second, i.e. 50 fields (half frames) every second; 1080p/25: High-definition progressively-scanned TV format of 1920 x 1080 pixels at 25 frames per second; 1080p/50: High-definition progressively-scanned TV format of 1920 x 1080 pixels at 50 frames per second; 1080p/60: High-definition progressively-scanned TV format of 1920 x 1080 pixels at 60 frames per second; 21CN: BT’s 21st Century Network; 2k: COFDM transmission mode with around 2000 carriers; 3DTV: 3-Dimension Television; 3G: 3rd Generation mobile communications; 4G: 4th Generation mobile communications; 3GPP: 3rd Generation Partnership Project; 3GPP2: 3rd Generation Partnership ... Entries under A: ACATS: Advisory Committee on Advanced Television Systems (USA); ACELP: (MPEG-4) A Code-Excited Linear Prediction; ACK: ACKnowledgement; ACLR: Adjacent Channel Leakage Ratio; ACM: Adaptive Coding and Modulation; ACS: Adjacent Channel Selectivity; ACT: Association of Commercial Television in Europe, http://www.acte.be; ACTS: Advanced Communications Technologies and Services; AD: Analogue-to-Digital; AD: Anno Domini (after the birth of Jesus of Nazareth); AD: Approved Document; AD: Audio Description; ADC: Analogue-to-Digital Converter; ADIP: ADress In Pre-groove; ADM: (ATM) Add/Drop Multiplexer; ADPCM: Adaptive Differential Pulse Code Modulation; ADR: Automatic Dialogue Replacement.

Creating 4K/UHD Content Poster

Creating 4K/UHD Content. Colorimetry: The television color specification is based on standards defined by the CIE (Commission Internationale de L’Éclairage) in 1931. The CIE specified an idealized set of primary XYZ tristimulus values. This set is a group of all-positive values converted from R’G’B’ where Y is proportional to the luminance of the additive mix. This specification is used as the basis for color within 4K/UHDTV1 that supports both ITU-R BT.709 and BT.2020. Figure A2: Using a 100% color bar signal to show conversion of RGB levels from 700 mV (100%) to 0 mV (0%) for each color component with a color bar split field BT.2020 and BT.709 test signal. The WFM8300 was configured for BT.709 colorimetry as shown in the video session display. Table A1: Illuminant (Ill.) Value. Source, X / Y: Illuminant A: Tungsten Filament Lamp, 2854°K, x = 0.4476, y = 0.4075. Image Format / SMPTE Standards: Square Division separates the image into quad links for distribution. UHDTV 1: 3840x2160 (4x1920x1080). Table B1: SMPTE Standards. ST 125: SDTV Component Video Signal Coding for 4:4:4 and 4:2:2 for 13.5 MHz and 18 MHz Systems; ST 240: Television – 1125-Line High-Definition Production Systems – Signal Parameters; ST 259: Television – SDTV Digital Signal/Data – Serial Digital Interface; ST 272: Television – Formatting AES/EBU Audio and Auxiliary Data into Digital Video Ancillary Data Space; ST 274: Television – 1920 x 1080 Image Sample Structure, Digital Representation and Digital Timing Reference Sequences for Multiple Picture Rates; ST 296: 1280 x 720 Progressive Image 4:2:2 and 4:4:4 Sample Structure – Analog & Digital Representation & Analog Interface; ST 299-0/1/2: 24-Bit Digital Audio Format for SMPTE Bit-Serial Interfaces at 1.5 Gb/s and 3 Gb/s – Document Suite.
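
The Square Division idea mentioned in this excerpt (a 3840x2160 image carried as four 1920x1080 sub-images) can be sketched in a few lines. This is only an illustration: the function names and the ordering of the quadrants (top-left first, row by row) are assumptions, not something the poster excerpt states.

```python
def square_division(width=3840, height=2160):
    """Split a frame into four equal quadrants.

    Returns (x, y, w, h) rectangles. The order (top-left, top-right,
    bottom-left, bottom-right) is an assumed convention for this sketch.
    """
    half_w, half_h = width // 2, height // 2
    return [(x, y, half_w, half_h) for y in (0, half_h) for x in (0, half_w)]


def quadrant_of_pixel(x, y, width=3840, height=2160):
    """Return the index (0..3) of the quadrant that contains pixel (x, y)."""
    if not (0 <= x < width and 0 <= y < height):
        raise ValueError("pixel lies outside the frame")
    col = 0 if x < width // 2 else 1
    row = 0 if y < height // 2 else 1
    return row * 2 + col


if __name__ == "__main__":
    for rect in square_division():
        print(rect)
```

Each rectangle is exactly one 1920x1080 image, which is why a UHDTV 1 frame can be distributed over four HD-sized links.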

HD-SDI, HDMI, and Tempus Fugit

TECHNICALLY SPEAKING... By Steve Somers, Vice President of Engineering. HD-SDI, HDMI, and Tempus Fugit. HD-SDI (high definition serial digital interface) and HDMI (high definition multimedia interface) version 1.3 are receiving considerable attention these days. “These days” really moved ahead rapidly now that I recall writing in this column on HD-SDI just one year ago. And, exactly two years ago the topic was DVI and HDMI. To be predictably trite, it seems like just yesterday. As with all things digital, there is much change and much to talk about. HD-SDI Redux: The HD-SDI is the 1.5 Gbps backbone of uncompressed high definition video conveyance within the professional HD production environment. It’s been around since about 1996 and is quite literally the savior of high definition interfacing and delivery at modest cost over medium-haul distances using RG-6 style video coax. ... difference channels suffice with one-half the sample rate at 37.125 MHz, the ‘2s’ in 4:2:2. This format is sufficient for high definition television. But, its robustness and simplicity is pressing it into the higher bandwidth demands of digital cinema and other uses like 12-bit, 4096 level signal formats, refresh rates above 30 frames per ... 372M spreads out the image information between the two channels to distribute the data payload. Odd-numbered lines map to link A and even-numbered lines map to link B. Table 1 indicates the organization of 4:2:2, 4:4:4, and 4:4:4:4 data with respect to the available frame rates.
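
The figures quoted in this excerpt can be checked with a little arithmetic. The sketch below assumes 10-bit samples and a 74.25 MHz luma sample rate (twice the 37.125 MHz colour-difference rate given in the column); neither value appears in the excerpt itself, and the function names are purely illustrative. It also restates the odd/even line mapping for the two links as code.

```python
def serial_rate_mbps(luma_mhz=74.25, chroma_mhz=37.125, bits_per_sample=10):
    """Serial bit rate of a 4:2:2 stream in Mbps.

    One luma channel plus two colour-difference channels at half the
    luma rate. The defaults are assumptions labelled in the text above.
    """
    return (luma_mhz + 2 * chroma_mhz) * bits_per_sample


def dual_link_for_line(line_number):
    """Map a 1-based line number to link 'A' (odd) or 'B' (even),
    following the mapping described in the excerpt."""
    if line_number < 1:
        raise ValueError("line numbers start at 1")
    return "A" if line_number % 2 == 1 else "B"


if __name__ == "__main__":
    print(serial_rate_mbps(), "Mbps")
    print([dual_link_for_line(n) for n in range(1, 7)])
```

Under these assumptions the rate comes to 1485 Mbps, which is the "1.5 Gbps" figure the column rounds to.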

S Guide to VECTORBOX 6000

VECTORBOX 6000 Quick User’s Guide. Introduction: VECTORBOX is a highly flexible broadcast system designed to cover every playout need in today's broadcast facility. The system provides master control room automation with a built-in video server and character generator and handles playout and ingest of content from video server as well as satellite, VTR and studio. VECTORBOX is the ideal solution for all kinds of broadcasters including local, cable, satellite, music, news and barker channels. Like other Vector 3 products, VECTORBOX is constantly undergoing development according to the changing needs of over 500 existing VECTORBOX clients and an ever more demanding broadcast industry. Configurations: VECTORBOX systems are available in a variety of configurations for different applications and formats. VECTORBOX 8000 is a high definition SDI playout system with a maximum of one recorder channel and one playout channel per chassis. VECTORBOX 6000 is SDI only and is available as a recorder with a minimum configuration of 2 ingest channels and as a playout with up to four playout channels per chassis. V-BOX News is an SDI manual playout for live and news environments with the ability to work in A/B roll with or without an external vision mixer, as the system includes a range of transitions. Other features include journalist assignment, text field exportation to a prompter with read rate calculator, group event management and status colour coding of all events. VECTORBOX systems are multi-codec and handle a wide range of compression formats including: DVCAM, DVCPRO, DVCPRO50, DVCPRO & uncompressed as well as MPEG-2 IBP and I-frame.

Playout Delay of TV Broadcasting

Master Thesis: Playout delay of TV broadcasting. Wouter Kooij, 06/03/2014. University of Twente, Faculty of Electrical Engineering, Mathematics and Computer Science. Nederlandse Organisatie voor toegepast-natuurwetenschappelijk onderzoek, TNO. Supervisors UT: Prof. Dr. Ir. Boudewijn R. Haverkort, Dr.ir. Pieter-Tjerk de Boer. Supervisors TNO: Ir. Hans Stokking, Ray van Brandenburg, M.Sc. Date of the graduation: 13/03/2014. Contents: Acknowledgments; Nomenclature; 1. Background; 1.1. Introduction; 1.2. Research questions; 1.3. Outline; 2. Related Work; 3. TV content delivery networks; 3.1. Introduction; 3.2. Overview; 3.2.1. Analog TV; 3.2.2. Terrestrial, Satellite and Cable TV (DVB); 3.2.3. IPTV; 3.3. TV Content delivery chain elements; 4. Delays in TV content delivery networks; 4.1. Introduction; 4.2. Encoding and decoding; 4.2.1. Coding types; 4.2.2. Conclusion; 4.3. Transmission delays; 4.4. IPTV Techniques; 4.5. Delays in the KPN Chain; 5. Design and development of a playout difference measurement system; 5.1. Introduction; 5.2. Content recognition techniques; 5.2.1. Audio fingerprinting; 5.3. Overview; 5.4. Reference time-source; 5.4.1. GPS as time-source; 5.4.2. GPS architecture in Android; 5.4.3. Obtaining GPS time in Android; 5.4.4. NTP as time-source; 5.4.5. NTP implementation in Android; 5.4.6.

ICE Flexible, Scalable, Reliable Integrated Playout Solution

DATASHEET. ICE: Flexible, Scalable, Reliable Integrated Playout Solution. Best-in-class software solution for the entire SDI or IP broadcast chain. In today’s market, you’re likely to be under pressure to reduce the cost and complexity of your operations while finding new ways to increase revenue and protect business continuity. To meet these challenges, you need a flexible, scalable, and highly efficient system that provides automated channel playout, enables rapid deployment of new revenue-generating services, and facilitates disaster recovery. Above all, you need a no-compromise solution that delivers proven reliability, reduces complexity and cost, enables future-proof expansion and is backed up by world-class service and support. The solution is ICE. The integrated playout solution from Grass Valley. Grass Valley understands that broadcast master control and playout is a complex operation where a large number of sophisticated systems must all work together flawlessly. When we designed an integrated playout solution, we focused on what it takes to make a great channel, not just a set of features, so we created ICE. ICE dramatically increases efficiency while reducing complexity. It is a proven system that helps you lower your costs, rapidly launch new revenue-generating services and grow your business. Best of all, Grass Valley designed ICE to deliver “5 nines” reliability, and we back it up with the best customer support in the business. ICE was designed from the ground up to meet the demands of a wide variety of applications: • Single-channel playout • Channel expansion (multichannel playout) • Multiplatform playout: simulcast/delayed (+1, +3, etc.)/OTT • Disaster recovery/back-up • Centralization • Migration to IP playout • Software-defined channels

CHARTS Best Practice Guide to Live Streaming Culture, Heritage and Arts Activities Page 1 of 36

CHARTS Best Practice Guide To Live Streaming Culture, Heritage and Arts Activities. Page 1 of 36. This report has been researched and written by Dougal Perman and Katie McGeary from live streaming specialist company Inner Ear for the benefit of CHARTS members. It draws on almost twenty years of experience in live streaming culture, heritage and arts activities from creative people who have an innate understanding of the process and technology. Advice, tips, techniques and recommendations are offered on how to get the most out of your live streaming experience. Contents: Introduction Examples Highland Dancing at Cowal Highland Gathering Glenfiddich Piping Championship Scottish Ballet’s Digital Season 2019 – Work In A Week Granite Noir 2019 Crime Writing Festival Scottish Opera Highlights from Bowmore, Islay CHARTS Showcase Event, Oban CHARTS Live Link Up Between Jura and Oban CHARTS Live Lab Tour of Dunoon Burgh Hall Ruddington Village Museum Tour Beat The Retreat Objectives What Works Well What Do You Want To Achieve? Why stream live? Engagement Content Ranking Cost Growth Why not? Planning What goes into a live stream? Live Programme Making Framework Crew and Roles Approach Multi-Camera Workflow Smartphone Stream Workflow Live Programme Making Recording Practical Production Tips Rehearsal Vision Mixing Cameras Sound Lighting The Action

Glossary of Digital Video Terms

Glossary of Digital Video Terms 24P: 24 frame per second, progressive scan. This has been the frame rate of motion picture film since talkies arrived. It is also one of the rates allowed for transmission in the DVB and ATSC television standards – so they can handle film without needing any frame-rate change (3:2 pull-down for 60 fields-per-second systems or running film at 25fps for 50 Hz systems). It is now accepted as a part of television production formats – usually associated with high definition 1080 lines, progressive scan. A major attraction is a relatively easy path from this to all major television formats as well as offering direct electronic support for motion picture film and D-cinema. 24Psf: 24 frame per second, progressive segmented frame. A 24P system in which each frame is segmented – recorded as odd lines followed by even lines. Unlike normal television, the odd and even lines are from the same snapshot in time – exactly as film is shown today on 625/50 TV systems. This way the signal is more compatible (than normal progressive) for use with video systems, e.g. VTRs, SDTI or HD-SDI connections, mixers/switchers etc., which may also handle interlaced scans. It can also easily be viewed without the need to process the pictures to reduce 24-frame flicker. 3:2 pull-down: Method used to map the 24 fps of film onto the 30 fps (60 fields) of 525-line TV, so that one film frame occupies three TV fields, the next two, etc. It means the two fields of every other TV frame come from different film frames making operations such as rotoscoping impossible, and requiring care in editing. -
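
The 3:2 pull-down entry above describes a mechanical mapping, so a small sketch may help. It assumes the cadence starts with the three-field repeat and labels fields only by their source film frame; the function names are illustrative and not taken from the glossary.

```python
from itertools import cycle


def pulldown_fields(film_frames, cadence=(3, 2)):
    """Repeat successive film frames for 3, 2, 3, 2, ... TV fields."""
    fields = []
    for frame, repeats in zip(film_frames, cycle(cadence)):
        fields.extend([frame] * repeats)
    return fields


def pair_into_tv_frames(fields):
    """Group consecutive fields into two-field TV frames
    (a trailing unpaired field, if any, is dropped)."""
    return [tuple(fields[i:i + 2]) for i in range(0, len(fields) - 1, 2)]


if __name__ == "__main__":
    fields = pulldown_fields(["A", "B", "C", "D"])
    tv_frames = pair_into_tv_frames(fields)
    mixed = [f for f in tv_frames if f[0] != f[1]]
    print(fields)
    print(tv_frames)
    print("TV frames built from two different film frames:", mixed)
```

Four film frames become ten fields, i.e. five TV frames, which is how 24 fps fills 60 fields per second. The TV frames whose two fields come from different film frames are the ones that make rotoscoping and frame-accurate editing awkward.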

Operating Instructions

Operating Instructions <Operations and Settings>. Live Switcher, Model No. AV-HS410N. How the Operating Instructions are configured. <Basics>: The <Basics> describes the procedure for connection with the required equipment and for installation. Before installing this unit, be sure to take the time to read through <Basics> to ensure that the unit will be installed correctly. <Operations and Settings> (this manual): This <Operations and Settings> describes how to operate the unit and how to establish its settings. For details on how to perform the basic menu operations, refer to “2-2. Basic menu operations” in the <Basics>. ENGLISH M1111TY0 -FJ VQT3U71A(E). Information on software for this product: 1. Included with this product is software licensed under the GNU General Public License (GPL) and GNU Lesser General Public License (LGPL), and users are hereby informed that they have the right to obtain, change and redistribute the source codes of this software. To obtain the source codes, go to the following home page: http://pro-av.panasonic.net/ The manufacturer asks users to refrain from directing inquiries concerning the source codes they have obtained and other details to its representatives. 2. Included with this product is software which is licensed under MIT-License. Details on the above software can be found on the CD provided with the unit. Refer to the folder called “LDOC”. (Details are given in the original (English language) text.) Trademarks and registered trademarks: Microsoft®, Windows® XP, Windows Vista®, Windows® 7 and Internet Explorer® are either registered trademarks or trademarks of Microsoft Corporation in the United States ... Abbreviations: The following abbreviations are used in this manual. Microsoft® Windows® 7 Professional SP1 32/64-bit is abbreviated to “Windows 7”.