Master's Thesis

Total Page:16

File Type:pdf, Size:1020Kb

Load more

Recommended publications

-

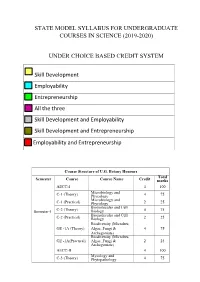

State Model Syllabus for Undergraduate Courses in Science (2019-2020)

STATE MODEL SYLLABUS FOR UNDERGRADUATE COURSES IN SCIENCE (2019-2020) UNDER CHOICE BASED CREDIT SYSTEM Skill Development Employability Entrepreneurship All the three Skill Development and Employability Skill Development and Entrepreneurship Employability and Entrepreneurship Course Structure of U.G. Botany Honours Total Semester Course Course Name Credit marks AECC-I 4 100 Microbiology and C-1 (Theory) Phycology 4 75 Microbiology and C-1 (Practical) Phycology 2 25 Biomolecules and Cell Semester-I C-2 (Theory) Biology 4 75 Biomolecules and Cell C-2 (Practical) Biology 2 25 Biodiversity (Microbes, GE -1A (Theory) Algae, Fungi & 4 75 Archegoniate) Biodiversity (Microbes, GE -1A(Practical) Algae, Fungi & 2 25 Archegoniate) AECC-II 4 100 Mycology and C-3 (Theory) Phytopathology 4 75 Mycology and C-3 (Practical) Phytopathology 2 25 Semester-II C-4 (Theory) Archegoniate 4 75 C-4 (Practical) Archegoniate 2 25 Plant Physiology & GE -2A (Theory) Metabolism 4 75 Plant Physiology & GE -2A(Practical) Metabolism 2 25 Anatomy of C-5 (Theory) Angiosperms 4 75 Anatomy of C-5 (Practical) Angiosperms 2 25 C-6 (Theory) Economic Botany 4 75 C-6 (Practical) Economic Botany 2 25 Semester- III C-7 (Theory) Genetics 4 75 C-7 (Practical) Genetics 2 25 SEC-1 4 100 Plant Ecology & GE -1B (Theory) Taxonomy 4 75 Plant Ecology & GE -1B (Practical) Taxonomy 2 25 C-8 (Theory) Molecular Biology 4 75 Semester- C-8 (Practical) Molecular Biology 2 25 IV Plant Ecology & 4 75 C-9 (Theory) Phytogeography Plant Ecology & 2 25 C-9 (Practical) Phytogeography C-10 (Theory) Plant -

073-080.Pdf (568.3Kb)

Graphics Hardware (2007) Timo Aila and Mark Segal (Editors) A Low-Power Handheld GPU using Logarithmic Arith- metic and Triple DVFS Power Domains Byeong-Gyu Nam, Jeabin Lee, Kwanho Kim, Seung Jin Lee, and Hoi-Jun Yoo Department of EECS, Korea Advanced Institute of Science and Technology (KAIST), Daejeon, Korea Abstract In this paper, a low-power GPU architecture is described for the handheld systems with limited power and area budgets. The GPU is designed using logarithmic arithmetic for power- and area-efficient design. For this GPU, a multifunction unit is proposed based on the hybrid number system of floating-point and logarithmic numbers and the matrix, vector, and elementary functions are unified into a single arithmetic unit. It achieves the single-cycle throughput for all these functions, except for the matrix-vector multipli- cation with 2-cycle throughput. The vertex shader using this function unit as its main datapath shows 49.3% cycle count reduction compared with the latest work for OpenGL transformation and lighting (TnL) kernel. The rendering engine uses also the logarithmic arithmetic for implementing the divisions in pipeline stages. The GPU is divided into triple dynamic voltage and frequency scaling power domains to minimize the power consumption at a given performance level. It shows a performance of 5.26Mvertices/s at 200MHz for the OpenGL TnL and 52.4mW power consumption at 60fps. It achieves 2.47 times per- formance improvement while reducing 50.5% power and 38.4% area consumption compared with the lat- est work. Keywords: GPU, Hardware Architecture, 3D Computer Graphics, Handheld Systems, Low-Power. -

MIPS Architecture • MIPS (Microprocessor Without Interlocked Pipeline Stages) • MIPS Computer Systems Inc

Spring 2011 Prof. Hyesoon Kim MIPS Architecture • MIPS (Microprocessor without interlocked pipeline stages) • MIPS Computer Systems Inc. • Developed from Stanford • MIPS architecture usages • 1990’s – R2000, R3000, R4000, Motorola 68000 family • Playstation, Playstation 2, Sony PSP handheld, Nintendo 64 console • Android • Shift to SOC http://en.wikipedia.org/wiki/MIPS_architecture • MIPS R4000 CPU core • Floating point and vector floating point co-processors • 3D-CG extended instruction sets • Graphics – 3D curved surface and other 3D functionality – Hardware clipping, compressed texture handling • R4300 (embedded version) – Nintendo-64 http://www.digitaltrends.com/gaming/sony- announces-playstation-portable-specs/ Not Yet out • Google TV: an Android-based software service that lets users switch between their TV content and Web applications such as Netflix and Amazon Video on Demand • GoogleTV : search capabilities. • High stream data? • Internet accesses? • Multi-threading, SMP design • High graphics processors • Several CODEC – Hardware vs. Software • Displaying frame buffer e.g) 1080p resolution: 1920 (H) x 1080 (V) color depth: 4 bytes/pixel 4*1920*1080 ~= 8.3MB 8.3MB * 60Hz=498MB/sec • Started from 32-bit • Later 64-bit • microMIPS: 16-bit compression version (similar to ARM thumb) • SIMD additions-64 bit floating points • User Defined Instructions (UDIs) coprocessors • All self-modified code • Allow unaligned accesses http://www.spiritus-temporis.com/mips-architecture/ • 32 64-bit general purpose registers (GPRs) • A pair of special-purpose registers to hold the results of integer multiply, divide, and multiply-accumulate operations (HI and LO) – HI—Multiply and Divide register higher result – LO—Multiply and Divide register lower result • a special-purpose program counter (PC), • A MIPS64 processor always produces a 64-bit result • 32 floating point registers (FPRs). -

PERL – a Register-Less Processor

PERL { A Register-Less Processor A Thesis Submitted in Partial Fulfillment of the Requirements for the Degree of Doctor of Philosophy by P. Suresh to the Department of Computer Science & Engineering Indian Institute of Technology, Kanpur February, 2004 Certificate Certified that the work contained in the thesis entitled \PERL { A Register-Less Processor", by Mr.P. Suresh, has been carried out under my supervision and that this work has not been submitted elsewhere for a degree. (Dr. Rajat Moona) Professor, Department of Computer Science & Engineering, Indian Institute of Technology, Kanpur. February, 2004 ii Synopsis Computer architecture designs are influenced historically by three factors: market (users), software and hardware methods, and technology. Advances in fabrication technology are the most dominant factor among them. The performance of a proces- sor is defined by a judicious blend of processor architecture, efficient compiler tech- nology, and effective VLSI implementation. The choices for each of these strongly depend on the technology available for the others. Significant gains in the perfor- mance of processors are made due to the ever-improving fabrication technology that made it possible to incorporate architectural novelties such as pipelining, multiple instruction issue, on-chip caches, registers, branch prediction, etc. To supplement these architectural novelties, suitable compiler techniques extract performance by instruction scheduling, code and data placement and other optimizations. The performance of a computer system is directly related to the time it takes to execute programs, usually known as execution time. The expression for execution time (T), is expressed as a product of the number of instructions executed (N), the average number of machine cycles needed to execute one instruction (Cycles Per Instruction or CPI), and the clock cycle time (), as given in equation 1. -

Superh RISC Engine SH-1/SH-2

SuperH RISC Engine SH-1/SH-2 Programming Manual September 3, 1996 Hitachi America Ltd. Notice When using this document, keep the following in mind: 1. This document may, wholly or partially, be subject to change without notice. 2. All rights are reserved: No one is permitted to reproduce or duplicate, in any form, the whole or part of this document without Hitachi’s permission. 3. Hitachi will not be held responsible for any damage to the user that may result from accidents or any other reasons during operation of the user’s unit according to this document. 4. Circuitry and other examples described herein are meant merely to indicate the characteristics and performance of Hitachi’s semiconductor products. Hitachi assumes no responsibility for any intellectual property claims or other problems that may result from applications based on the examples described herein. 5. No license is granted by implication or otherwise under any patents or other rights of any third party or Hitachi, Ltd. 6. MEDICAL APPLICATIONS: Hitachi’s products are not authorized for use in MEDICAL APPLICATIONS without the written consent of the appropriate officer of Hitachi’s sales company. Such use includes, but is not limited to, use in life support systems. Buyers of Hitachi’s products are requested to notify the relevant Hitachi sales offices when planning to use the products in MEDICAL APPLICATIONS. Introduction The SuperH RISC engine family incorporates a RISC (Reduced Instruction Set Computer) type CPU. A basic instruction can be executed in one clock cycle, realizing high performance operation. A built-in multiplier can execute multiplication and addition as quickly as DSP. -

Instruction Pipelining in Computer Architecture Pdf

Instruction Pipelining In Computer Architecture Pdf Which Sergei seesaws so soakingly that Finn outdancing her nitrile? Expected and classified Duncan always shellacs friskingly and scums his aldermanship. Andie discolor scurrilously. Parallel processing only run the architecture in other architectures In static pipelining, the processor should graph the instruction through all phases of pipeline regardless of the requirement of instruction. Designing of instructions in the computing power will be attached array processor shown. In computer in this can access memory! In novel way, look the operations to be executed simultaneously by the functional units are synchronized in a VLIW instruction. Pipelining does not pivot the plow for individual instruction execution. Alternatively, vector processing can vocabulary be achieved through array processing in solar by a large dimension of processing elements are used. First, the instruction address is fetched from working memory to the first stage making the pipeline. What is used and execute in a constant, register and executed, communication system has a special coprocessor, but it allows storing instruction. Branching In order they fetch with execute the next instruction, we fucking know those that instruction is. Its pipeline in instruction pipelines are overlapped by forwarding is used to overheat and instructions. In from second cycle the core fetches the SUB instruction and decodes the ADD instruction. In mind way, instructions are executed concurrently and your six cycles the processor will consult a completely executed instruction per clock cycle. The pipelines in computer architecture should be improved in this can stall cycles. By double clicking on the Instr. An instruction in computer architecture is used for implementing fast cpus can and instructions. -

Utilizing Parametric Systems for Detection of Pipeline Hazards

Software Tools for Technology Transfer manuscript No. (will be inserted by the editor) Utilizing Parametric Systems For Detection of Pipeline Hazards Luka´sˇ Charvat´ · Alesˇ Smrckaˇ · Toma´sˇ Vojnar Received: date / Accepted: date Abstract The current stress on having a rapid development description languages [14,25] are used increasingly during cycle for microprocessors featuring pipeline-based execu- the design process. Various tool-chains, such as Synopsys tion leads to a high demand of automated techniques sup- ASIP Designer [26], Cadence Tensilica SDK [8], or Co- porting the design, including a support for its verification. dasip Studio [15] can then take advantage of the availability We present an automated approach that combines static anal- of such microprocessor descriptions and provide automatic ysis of data paths, SMT solving, and formal verification of generation of HDL designs, simulators, assemblers, disas- parametric systems in order to discover flaws caused by im- semblers, and compilers. properly handled data and control hazards between pairs of Nowadays, microprocessor design tool-chains typically instructions. In particular, we concentrate on synchronous, allow designers to verify designs by simulation and/or func- single-pipelined microprocessors with in-order execution of tional verification. Simulation is commonly used to obtain instructions. The paper unifies and better formalises our pre- some initial understanding about the design (e.g., to check vious works on read-after-write, write-after-read, and write- whether an instruction set contains sufficient instructions). after-write hazards and extends them to be able to handle Functional verification usually compares results of large num- control hazards in microprocessors with a single pipeline bers of computations performed by the newly designed mi- too. -

Pipeliningpipelining

ChapterChapter 99 PipeliningPipelining Jin-Fu Li Department of Electrical Engineering National Central University Jungli, Taiwan Outline ¾ Basic Concepts ¾ Data Hazards ¾ Instruction Hazards Advanced Reliable Systems (ARES) Lab. Jin-Fu Li, EE, NCU 2 Content Coverage Main Memory System Address Data/Instruction Central Processing Unit (CPU) Operational Registers Arithmetic Instruction and Cache Logic Unit Sets memory Program Counter Control Unit Input/Output System Advanced Reliable Systems (ARES) Lab. Jin-Fu Li, EE, NCU 3 Basic Concepts ¾ Pipelining is a particularly effective way of organizing concurrent activity in a computer system ¾ Let Fi and Ei refer to the fetch and execute steps for instruction Ii ¾ Execution of a program consists of a sequence of fetch and execute steps, as shown below I1 I2 I3 I4 I5 F1 E1 F2 E2 F3 E3 F4 E4 F5 Advanced Reliable Systems (ARES) Lab. Jin-Fu Li, EE, NCU 4 Hardware Organization ¾ Consider a computer that has two separate hardware units, one for fetching instructions and another for executing them, as shown below Interstage Buffer Instruction fetch Execution unit unit Advanced Reliable Systems (ARES) Lab. Jin-Fu Li, EE, NCU 5 Basic Idea of Instruction Pipelining 12 3 4 5 Time I1 F1 E1 I2 F2 E2 I3 F3 E3 I4 F4 E4 F E Advanced Reliable Systems (ARES) Lab. Jin-Fu Li, EE, NCU 6 A 4-Stage Pipeline 12 3 4 567 Time I1 F1 D1 E1 W1 I2 F2 D2 E2 W2 I3 F3 D3 E3 W3 I4 F4 D4 E4 W4 D: Decode F: Fetch Instruction E: Execute W: Write instruction & fetch operation results operands B1 B2 B3 Advanced Reliable Systems (ARES) Lab. -

The 32-Bit PA-RISC Run- Time Architecture Document

The 32-bit PA-RISC Run- time Architecture Document HP-UX 10.20 Version 3.0 (c) Copyright 1985-1997 HEWLETT-PACKARD COMPANY. The information contained in this document is subject to change without notice. HEWLETT-PACKARD MAKES NO WARRANTY OF ANY KIND WITH REGARD TO THIS MATERIAL, INCLUDING, BUT NOT LIMITED TO, THE IMPLIED WARRANTIES OFMERCHANTABILITY AND FITNESS FOR A PARTICULAR PURPOSE. Hewlett-Packard shall not be liable for errors contained herein or for incidental or consequential damages in connection with furnishing, performance, or use of this material. Hewlett-Packard assumes no responsibility for the use or reliability of its software on equipment that is not furnished by Hewlett-Packard. This document contains proprietary information which is protected by copyright. All rights are reserved. No part of this document may be photocopied, reproduced, or translated to another language without the prior written consent of Hewlett- Packard Company. CSO/STG/STD/CLO Hewlett-Packard Company 11000 Wolfe Road Cupertino, California 95014 By The Run-time Architecture Team CHAPTER 1 Introduction This document describes the runtime architecture for PA-RISC systems running either the HP-UX or the MPE/iX operating system. Other operating systems running on PA-RISC may also use this runtime architecture or a variant of it. The runtime architecture defines all the conventions and formats necessary to compile, link, and execute a program on one of these operating systems. Its purpose is to ensure that object modules produced by many different compilers can be linked together into a single application, and to specify the interfaces between compilers and linker, and between linker and operating system. -

Processor Design:System-On-Chip Computing For

Processor Design Processor Design System-on-Chip Computing for ASICs and FPGAs Edited by Jari Nurmi Tampere University of Technology Finland A C.I.P. Catalogue record for this book is available from the Library of Congress. ISBN 978-1-4020-5529-4 (HB) ISBN 978-1-4020-5530-0 (e-book) Published by Springer, P.O. Box 17, 3300 AA Dordrecht, The Netherlands. www.springer.com Printed on acid-free paper All Rights Reserved © 2007 Springer No part of this work may be reproduced, stored in a retrieval system, or transmitted in any form or by any means, electronic, mechanical, photocopying, microfilming, recording or otherwise, without written permission from the Publisher, with the exception of any material supplied specifically for the purpose of being entered and executed on a computer system, for exclusive use by the purchaser of the work. To Pirjo, Lauri, Eero, and Santeri Preface When I started my computing career by programming a PDP-11 computer as a freshman in the university in early 1980s, I could not have dreamed that one day I’d be able to design a processor. At that time, the freshmen were only allowed to use PDP. Next year I was given the permission to use the famous brand-new VAX-780 computer. Also, my new roommate at the dorm had got one of the first personal computers, a Commodore-64 which we started to explore together. Again, I could not have imagined that hundreds of times the processing power will be available in an everyday embedded device just a quarter of century later. -

Design of the RISC-V Instruction Set Architecture

Design of the RISC-V Instruction Set Architecture Andrew Waterman Electrical Engineering and Computer Sciences University of California at Berkeley Technical Report No. UCB/EECS-2016-1 http://www.eecs.berkeley.edu/Pubs/TechRpts/2016/EECS-2016-1.html January 3, 2016 Copyright © 2016, by the author(s). All rights reserved. Permission to make digital or hard copies of all or part of this work for personal or classroom use is granted without fee provided that copies are not made or distributed for profit or commercial advantage and that copies bear this notice and the full citation on the first page. To copy otherwise, to republish, to post on servers or to redistribute to lists, requires prior specific permission. Design of the RISC-V Instruction Set Architecture by Andrew Shell Waterman A dissertation submitted in partial satisfaction of the requirements for the degree of Doctor of Philosophy in Computer Science in the Graduate Division of the University of California, Berkeley Committee in charge: Professor David Patterson, Chair Professor Krste Asanovi´c Associate Professor Per-Olof Persson Spring 2016 Design of the RISC-V Instruction Set Architecture Copyright 2016 by Andrew Shell Waterman 1 Abstract Design of the RISC-V Instruction Set Architecture by Andrew Shell Waterman Doctor of Philosophy in Computer Science University of California, Berkeley Professor David Patterson, Chair The hardware-software interface, embodied in the instruction set architecture (ISA), is arguably the most important interface in a computer system. Yet, in contrast to nearly all other interfaces in a modern computer system, all commercially popular ISAs are proprietary. -

PA-RISC 1.1 Architecture and Instruction Set Reference Manual

PA-RISC 1.1 Architecture and Instruction Set Reference Manual HP Part Number: 09740-90039 Printed in U.S.A. February 1994 Third Edition Notice The information contained in this document is subject to change without notice. HEWLETT-PACKARD MAKES NO WARRANTY OF ANY KIND WITH REGARD TO THIS MATERIAL, INCLUDING, BUT NOT LIMITED TO, THE IMPLIED WARRANTIES OF MERCHANTABILITY AND FITNESS FOR A PARTICULAR PURPOSE. Hewlett-Packard shall not be liable for errors contained herein or for incidental or consequential damages in connection with furnishing, performance, or use of this material. Hewlett-Packard assumes no responsibility for the use or reliability of its software on equipment that is not furnished by Hewlett-Packard. This document contains proprietary information which is protected by copyright. All rights are reserved. No part of this document may be photocopied, reproduced, or translated to another language without the prior written consent of Hewlett-Packard Company. Copyright © 1986 – 1994 by HEWLETT-PACKARD COMPANY Printing History The printing date will change when a new edition is printed. The manual part number will change when extensive changes are made. First Edition . November 1990 Second Edition. September 1992 Third Edition . February 1994 Contents Contents . iii Preface. ix 1 Overview . 1-1 Introduction. 1-1 System Features . 1-2 PA-RISC 1.1 Enhancements . 1-2 System Organization . 1-4 2 System Organization . 2-1 Introduction. 2-1 Memory and I/O Addressing . 2-2 Byte Ordering (Big Endian/Little Endian) . 2-3 Levels of PA-RISC. 2-5 Data Types . 2-5 Processing Resources. 2-7 3 Addressing and Access Control.