A Shape Transformation-Based Dataset Augmentation Framework for Pedestrian Detection

Total Page:16

File Type:pdf, Size:1020Kb


Recommended publications


Sinitic Languages of Northwest China: Where Did Their Case Marking Come From?* Dan Xu

Sinitic languages of Northwest China: Where did their case marking come from?* Dan Xu. To cite this version: Dan Xu. Sinitic languages of Northwest China: Where did their case marking come from?*. Cao, Djamouri and Peyraube. Languages in contact in Northwestern China, 2015. hal-01386250. HAL Id: hal-01386250, https://hal.archives-ouvertes.fr/hal-01386250. Submitted on 31 Oct 2016. HAL is a multi-disciplinary open access archive for the deposit and dissemination of scientific research documents, whether they are published or not. The documents may come from teaching and research institutions in France or abroad, or from public or private research centers. Copyright. Sinitic languages of Northwest China: Where did their case marking come from?* XU DAN 1. Introduction In the early 1950s, Weinreich (1953) published a monograph on language contact. Although this subject drew the attention of a few scholars, at the time it remained marginal. Over two decades, several scholars including Moravcsik (1978), Thomason and Kaufman (1988), Aikhenvald (2002), Johanson (2002), Heine and Kuteva (2005) and others began to pay more attention to language contact. As Thomason and Kaufman (1988: 23) pointed out, language is a system, or even a system of systems. Perhaps this is why previous studies (Sapir, 1921: 203; Meillet 1921: 87) indicated that grammatical categories are not easily borrowed, since grammar is a system. -

Piggyback GAN: Efficient Lifelong Learning for Image Conditioned Generation

Piggyback GAN: Efficient Lifelong Learning for Image Conditioned Generation. Mengyao Zhai1, Lei Chen1, Jiawei He1, Megha Nawhal1, Frederick Tung2, and Greg Mori1. 1 Simon Fraser University, Burnaby, Canada; 2 Borealis AI, Vancouver, Canada. {mzhai, chenleic, jha203, mnawhal}@sfu.ca, [email protected], [email protected]. [Figure 1 panels, each showing conditional and target or generated images for Task 1, Task 2, and so on: (a) Ground truth; (b) Sequential fine-tuning; (c) Train a separate model for each task; (d) Piggyback GAN (add few additional parameters).] Fig. 1. Lifelong learning of image-conditioned generation. The goal of lifelong learning is to build a model capable of adapting to tasks that are encountered sequentially. Traditional fine-tuning methods are susceptible to catastrophic forgetting: when we add new tasks, the network forgets how to perform previous tasks (Figure 1 (b)). Storing a separate model for each task addresses catastrophic forgetting in an inefficient way as each set of parameters is only useful for a single task (Figure 1 (c)). Our Piggyback GAN achieves image-conditioned generation with high image quality on par with separate models at a lower parameter cost by efficiently utilizing stored parameters (Figure 1 (d)). Abstract. Humans accumulate knowledge in a lifelong fashion. Modern deep neural networks, on the other hand, are susceptible to catastrophic forgetting: when adapted to perform new tasks, they often fail to preserve their performance on previously learned tasks. -

Accelerated WGAN Update Strategy with Loss Change Rate Balancing

Accelerated WGAN update strategy with loss change rate balancing. Xu Ouyang, Ying Chen, Gady Agam. Illinois Institute of Technology. {xouyang3, ychen245}@hawk.iit.edu, [email protected]. Abstract. Optimizing the discriminator in Generative Adversarial Networks (GANs) to completion in the inner training loop is computationally prohibitive, and on finite datasets would result in overfitting. To address this, a common update strategy is to alternate between k optimization steps for the discriminator D and one optimization step for the generator G. This strategy is repeated in various GAN algorithms where k is selected empirically. In this paper, we show that this update strategy is not optimal in terms of accuracy and convergence speed, and propose a new update strategy for networks with Wasserstein GAN (WGAN) group related loss functions (e.g. WGAN, WGAN-GP, Deblur GAN, and Super resolution GAN). The proposed update strategy is based on a loss change ratio comparison of G and D. We demonstrate that the proposed strategy improves both convergence speed and accuracy. Figure 1. Comparison of the Fréchet Inception Distance (FID) for WGAN and the proposed adaptive WGAN with different coefficient λ on the CIFAR10 dataset. A lower FID means better performance. The parameters n_d and n_g show the fixed number of update steps in WGAN for the discriminator and generator respectively. 1. Introduction. GANs [8] provide an effective deep neural network framework that can capture data distribution. GANs are modeled as a min-max two-player game between a discrim- GANs minimize a probabilistic divergence between real and fake (generated by the generator) data distributions [22]. -

Is Shuma the Chinese Analog of Soma/Haoma? a Study of Early Contacts Between Indo-Iranians and Chinese

SINO-PLATONIC PAPERS Number 216 October, 2011 Is Shuma the Chinese Analog of Soma/Haoma? A Study of Early Contacts between Indo-Iranians and Chinese by ZHANG He Victor H. Mair, Editor Sino-Platonic Papers Department of East Asian Languages and Civilizations University of Pennsylvania Philadelphia, PA 19104-6305 USA [email protected] www.sino-platonic.org SINO-PLATONIC PAPERS FOUNDED 1986 Editor-in-Chief VICTOR H. MAIR Associate Editors PAULA ROBERTS MARK SWOFFORD ISSN 2157-9679 (print) 2157-9687 (online) SINO-PLATONIC PAPERS is an occasional series dedicated to making available to specialists and the interested public the results of research that, because of its unconventional or controversial nature, might otherwise go unpublished. The editor-in-chief actively encourages younger, not yet well established, scholars and independent authors to submit manuscripts for consideration. Contributions in any of the major scholarly languages of the world, including romanized modern standard Mandarin (MSM) and Japanese, are acceptable. In special circumstances, papers written in one of the Sinitic topolects (fangyan) may be considered for publication. Although the chief focus of Sino-Platonic Papers is on the intercultural relations of China with other peoples, challenging and creative studies on a wide variety of philological subjects will be entertained. This series is not the place for safe, sober, and stodgy presentations. Sino-Platonic Papers prefers lively work that, while taking reasonable risks to advance the field, capitalizes on brilliant new insights into the development of civilization. Submissions are regularly sent out to be refereed, and extensive editorial suggestions for revision may be offered. Sino-Platonic Papers emphasizes substance over form. -

Appendix A: Soy Adi Kanunu (The Surname Law)

APPENDIX A: SOY ADI KANUNU (THE SURNAME LAW) Republic of Turkey, Law 2525, 6.21.1934 I. Every Turk must carry his surname in addition to his proper name. II. The personal name comes first and the surname comes second in speaking, writing, and signing. III. It is forbidden to use surnames that are related to military rank and civil officialdom, to tribes and foreign races and ethnicities, as well as surnames which are not suited to general customs or which are disgusting or ridiculous. IV. The husband, who is the leader of the marital union, has the duty and right to choose the surname. In the case of the annulment of marriage or in cases of divorce, even if a child is under his mother’s custody, the child shall take the name that his father has chosen or will choose. This right and duty is the wife’s if the husband is dead and his wife is not married to somebody else, or if the husband is under protection because of mental illness or weakness, and the marriage is still continuing. If the wife has married after the husband’s death, or if the husband has been taken into protection because of the reasons in the previous article, and the marriage has also declined, this right and duty belongs to the closest male blood relation on the father’s side, and the oldest of these, and in their absence, to the guardian. © The Author(s) 2018. M. Türköz, Naming and Nation-building in Turkey, https://doi.org/10.1057/978-1-137-56656-0 V. -

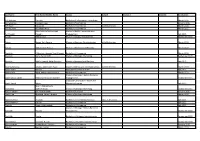

Last Name First Name/Middle Name Course Award Course 2 Award 2 Graduation

Last Name First Name/Middle Name Course Award Course 2 Award 2 Graduation A/L Krishnan Thiinash Bachelor of Information Technology March 2015 A/L Selvaraju Theeban Raju Bachelor of Commerce January 2015 A/P Balan Durgarani Bachelor of Commerce with Distinction March 2015 A/P Rajaram Koushalya Priya Bachelor of Commerce March 2015 Hiba Mohsin Mohammed Master of Health Leadership and Aal-Yaseen Hussein Management July 2015 Aamer Muhammad Master of Quality Management September 2015 Abbas Hanaa Safy Seyam Master of Business Administration with Distinction March 2015 Abbasi Muhammad Hamza Master of International Business March 2015 Abdallah AlMustafa Hussein Saad Elsayed Bachelor of Commerce March 2015 Abdallah Asma Samir Lutfi Master of Strategic Marketing September 2015 Abdallah Moh'd Jawdat Abdel Rahman Master of International Business July 2015 AbdelAaty Mosa Amany Abdelkader Saad Master of Media and Communications with Distinction March 2015 Abdel-Karim Mervat Graduate Diploma in TESOL July 2015 Abdelmalik Mark Maher Abdelmesseh Bachelor of Commerce March 2015 Master of Strategic Human Resource Abdelrahman Abdo Mohammed Talat Abdelziz Management September 2015 Graduate Certificate in Health and Abdel-Sayed Mario Physical Education July 2015 Sherif Ahmed Fathy AbdRabou Abdelmohsen Master of Strategic Marketing September 2015 Abdul Hakeem Siti Fatimah Binte Bachelor of Science January 2015 Abdul Haq Shaddad Yousef Ibrahim Master of Strategic Marketing March 2015 Abdul Rahman Al Jabier Bachelor of Engineering Honours Class II, Division 1 -

Clans at Bukit Pasoh the Clan Associations of Bukit Pasoh Were, and Still Are, Integral to Their Respective Communities

Chinatown Stories | Updated as of August 2019 Clans At Bukit Pasoh The clan associations of Bukit Pasoh were, and still are, integral to their respective communities. In 19th and early 20th century Singapore, social services were sorely lacking. This was where the clan associations played a vital role: providing a community and invaluable support for fellow immigrants of common ancestry, surname or language, or those who were from the same hometown. Beyond social functions, some of these clans founded schools, provided scholarships, and supported local arts and culture. They also assisted members with important life events such as weddings and funerals. These clan associations used to be concentrated along certain streets in Chinatown, such as Bukit Pasoh Road. Even though their numbers are fast dwindling today due to diminishing membership and high operating costs, the few that remain at Bukit Pasoh such as Gan Clan, Tung On Wui Kun, Koh Clan and Chin Kang Huay Kuan have adapted to the times. They continue to pool resources to promote Chinese culture, guard their heritage and benefit the community. Gan Clan This kinship clan’s early years were fraught with obstacles. Dating from 1926, it was established for immigrants with the surname Gan (颜, Yan in Mandarin) and was known as the Lu Guo Tang (鲁国堂) Gan Clan Association. During the Japanese Occupation, it ceased operations, but re-registered itself as an association after 1948. However, it dissolved again as a result of weak organisational structure. Undeterred, founding chairman Gan Yue Cheng (颜有政) suggested reviving the clan during a banquet in 1965. -

Self-Supervised GANs via Auxiliary Rotation Loss

Self-Supervised GANs via Auxiliary Rotation Loss. Ting Chen∗ (University of California, Los Angeles, [email protected]), Xiaohua Zhai (Google Brain, [email protected]), Marvin Ritter (Google Brain, [email protected]), Mario Lucic (Google Brain, [email protected]), Neil Houlsby (Google Brain, [email protected]). Abstract. Conditional GANs are at the forefront of natural image synthesis. The main drawback of such models is the necessity for labeled data. In this work we exploit two popular unsupervised learning techniques, adversarial training and self-supervision, and take a step towards bridging the gap between conditional and unconditional GANs. In particular, we allow the networks to collaborate on the task of representation learning, while being adversarial with respect to the classic GAN game. The role of self-supervision is to encourage the discriminator to learn meaningful feature representations which are not forgotten during training. … proposed [4, 5, 6, 7, 8, 9, 10]. A major contributor to training instability is the fact that the generator and discriminator learn in a non-stationary environment. In particular, the discriminator is a classifier for which the distribution of one class (the fake samples) shifts as the generator changes during training. In non-stationary online environments, neural networks forget previous tasks [11, 12, 13]. If the discriminator forgets previous classification boundaries, training may become unstable or cyclic. This issue is usually addressed either by reusing old samples or by applying continual learning techniques [14, 15, 16, 17, 18, 19]. These issues become more prominent in the context of complex data sets. A key -

Erectile Dysfunction

Chapter 58. Men’s Health: Erectile Dysfunction. Erectile dysfunction (yáng wěi 阳痿) is an inability to achieve and maintain an erection, achieve ejaculation, or both. Men presenting with erectile dysfunction often experience other complaints in addition to difficulty with erections, including loss of libido, ejaculatory failure, inability to achieve orgasm and premature ejaculation. These problems can also be dealt with using the strategies outlined in this chapter. Erectile dysfunction is a complicated issue, often associated with vascular disease and a tangle of social and emotional factors such as overwork and fatigue, anxiety and depression, disinterest in the sexual partner, fear of sexual incompetence, marital discord or guilt about unconventional sexual impulses. In Chinese medicine, the ability to achieve erection (with reasonable frequency, based on the age of the patient) ultimately reflects the state of the Kidneys and the distribution of qi and blood. A number of factors must be in sync in order for erection and reproduction to take place. First, intact Kidney yang is necessary to provide the ‘fire of desire’ and the yang hydraulics to enable erection to occur. Second, Liver qi must be free flowing so that qi and blood can reach the extremity of the Liver channel to inflate the ancestral sinew of the Liver (i.e., the penis). Box 58.1, Patterns. Liver qi constraint: traditional approach; modern strategy. Blood stasis: systemic, mild to moderate; severe, with marked stasis in lower burner; alternative strategies. Damp-heat: chronic, dampness greater than heat; with yin deficiency. Qi and blood deficiency: Heart blood and Spleen qi deficiency; fear damaging Kidney qi; Heart and Kidney axis disruption. Kidney deficiency: yang deficiency (diminished mìng mén fire; with enlarged prostate and poor fluid metabolism); yin deficiency. -

Deciphering the Formulation Secret Underlying Chinese Huo-Clearing Herbal Drink

ORIGINAL RESEARCH published: 22 April 2021 doi: 10.3389/fphar.2021.654699 Deciphering the Formulation Secret Underlying Chinese Huo-Clearing Herbal Drink. Jianan Wang 1,2,3†, Bo Zhou 1,2,3†, Xiangdong Hu 1,2,3, Shuang Dong 4, Ming Hong 1,2,3, Jun Wang 4, Jian Chen 4, Jiuliang Zhang 5, Qiyun Zhang 1,2,3, Xiaohua Li 1,2,3, Alexander N. Shikov 6, Sheng Hu 4* and Xuebo Hu 1,2,3*. 1 Laboratory of Drug Discovery and Molecular Engineering, Department of Medicinal Plants, College of Plant Science and Technology, Huazhong Agricultural University, Wuhan, China; 2 National and Local Joint Engineering Research Center (Hubei) for Medicinal Plant Breeding and Cultivation, Wuhan, China; 3 Hubei Provincial Engineering Research Center for Medicinal Plants, Wuhan, China; 4 Hubei Cancer Hospital, Tongji Medical College, Huazhong University of Science and Technology, Wuhan, China; 5 College of Food Science and Technology, Huazhong Agricultural University, Wuhan, China; 6 Department of Technology of Pharmaceutical Formulations, Saint-Petersburg State Chemical Pharmaceutical University, Saint-Petersburg, Russia. Edited by: Michał Tomczyk, Medical University of Bialystok, Poland. Reviewed by: José Carlos Tavares Carvalho, Herbal teas or herbal drinks are traditional beverages that are prevalent in many cultures around the world. In Traditional Chinese Medicine, an herbal drink infused with different types of medicinal plants is believed to reduce the ‘Shang Huo’, or excessive body heat, a status of sub-optimal health. Although it is widely accepted and has a very large market, the underlying science for herbal drinks remains elusive. By studying a group of herbs for drinks, including ‘Gan’ (Glycyrrhiza uralensis Fisch. -

THE WORST STAGE of COVID-19 WILL BE SYSTEMIC TOXIC HEAT and LUNG FIRE Jake Fratkin, OMD, L.Ac. Author of Essential Chinese Formu

THE WORST STAGE OF COVID-19 WILL BE SYSTEMIC TOXIC HEAT AND LUNG FIRE Jake Fratkin, OMD, L.Ac. Author of Essential Chinese Formulas, 2014 These cases of COVID-19 will exhibit high fever and harsh painful cough. Fevers can reach 104 or 105 degrees, with body ache, headache, chills or shivering, and delirium, lasting 3 to 5 days. This systemic heat will locate in the lungs causing lung fire, and that cough can last many weeks. You can treat your patients without them setting foot in your clinic, by listening to their symptoms, and providing medicines at the door. The key to treatment here is to concentrate on herbs that kill virus, namely herbs found in the category Clear Heat, Resolve Toxins (qīnɡ rè jiě dú, 清热解毒). For classical practitioners who rely on six stages, the classical formulas will not be strong enough, because they do not contain enough herbs that kill viruses. They can be combined with the anti-toxic heat formulas, but alone will not do the job. I am providing a list of herbal products that you should consider stocking up on. This pandemic can go well into July or August. If you cannot obtain the products due to shortages, and if you have a raw herb or extract granule pharmacy, then make the formulas yourself from the formulas as listed on their websites. Or use herbal companies who can make up the formulas for you. My company, Dr Jake Fratkin Herbal Formulas, manufactured two formulas recommended by the China Ministry of Health during the SARS epidemic. -

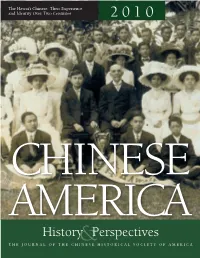

CHSA HP2010.Pdf

The Hawai‘i Chinese: Their Experience and Identity Over Two Centuries. 2010. Chinese America: History & Perspectives, The Journal of the Chinese Historical Society of America. 2010 special issue: The Hawai‘i Chinese. Chinese Historical Society of America with UCLA Asian American Studies Center. Chinese Historical Society of America Museum & Learning Center, 965 Clay Street, San Francisco, California 94108, chsa.org. Copyright © 2010 Chinese Historical Society of America. All rights reserved. Copyright of individual articles remains with the author(s). Design by Side By Side Studios, San Francisco. Permission is granted for reproducing up to fifty copies of any one article for educational use as defined by the Digital Millennium Copyright Act. To order additional copies or inquire about large-order discounts, see order form at back or email [email protected]. Articles appearing in this journal are indexed in Historical Abstracts and America: History and Life. About the cover image: Hawai‘i Chinese Student Alliance. Courtesy of Douglas D. L. Chong. Contents: Preface, Franklin Ng. Introduction: The Hawai‘i Chinese: Their Experience and Identity over Two Centuries, David Y. H. Wu and Harry J. Lamley. Hawai‘i’s Nam Long: Their Background and Identity as a Zhongshan Subgroup, Douglas D.