Exploring Boundaries in Game Processing

Total Page:16

File Type:pdf, Size:1020Kb

Load more

Recommended publications

-

SPIM S20: a MIPS R2000 Simulator∗

SPIM S20: A MIPS R2000 Simulator∗ 1 th “ 25 the performance at none of the cost” James R. Larus [email protected] Computer Sciences Department University of Wisconsin–Madison 1210 West Dayton Street Madison, WI 53706, USA 608-262-9519 Copyright °c 1990–1997 by James R. Larus (This document may be copied without royalties, so long as this copyright notice remains on it.) 1 SPIM SPIM S20 is a simulator that runs programs for the MIPS R2000/R3000 RISC computers.1 SPIM can read and immediately execute files containing assembly language. SPIM is a self- contained system for running these programs and contains a debugger and interface to a few operating system services. The architecture of the MIPS computers is simple and regular, which makes it easy to learn and understand. The processor contains 32 general-purpose 32-bit registers and a well-designed instruction set that make it a propitious target for generating code in a compiler. However, the obvious question is: why use a simulator when many people have workstations that contain a hardware, and hence significantly faster, implementation of this computer? One reason is that these workstations are not generally available. Another reason is that these ma- chine will not persist for many years because of the rapid progress leading to new and faster computers. Unfortunately, the trend is to make computers faster by executing several instruc- tions concurrently, which makes their architecture more difficult to understand and program. The MIPS architecture may be the epitome of a simple, clean RISC machine. In addition, simulators can provide a better environment for low-level programming than an actual machine because they can detect more errors and provide more features than an actual computer. -

MIPS IV Instruction Set

MIPS IV Instruction Set Revision 3.2 September, 1995 Charles Price MIPS Technologies, Inc. All Right Reserved RESTRICTED RIGHTS LEGEND Use, duplication, or disclosure of the technical data contained in this document by the Government is subject to restrictions as set forth in subdivision (c) (1) (ii) of the Rights in Technical Data and Computer Software clause at DFARS 52.227-7013 and / or in similar or successor clauses in the FAR, or in the DOD or NASA FAR Supplement. Unpublished rights reserved under the Copyright Laws of the United States. Contractor / manufacturer is MIPS Technologies, Inc., 2011 N. Shoreline Blvd., Mountain View, CA 94039-7311. R2000, R3000, R6000, R4000, R4400, R4200, R8000, R4300 and R10000 are trademarks of MIPS Technologies, Inc. MIPS and R3000 are registered trademarks of MIPS Technologies, Inc. The information in this document is preliminary and subject to change without notice. MIPS Technologies, Inc. (MTI) reserves the right to change any portion of the product described herein to improve function or design. MTI does not assume liability arising out of the application or use of any product or circuit described herein. Information on MIPS products is available electronically: (a) Through the World Wide Web. Point your WWW client to: http://www.mips.com (b) Through ftp from the internet site “sgigate.sgi.com”. Login as “ftp” or “anonymous” and then cd to the directory “pub/doc”. (c) Through an automated FAX service: Inside the USA toll free: (800) 446-6477 (800-IGO-MIPS) Outside the USA: (415) 688-4321 (call from a FAX machine) MIPS Technologies, Inc. -

Average Memory Access Time: Reducing Misses

Review: Cache performance 332 Miss-oriented Approach to Memory Access: Advanced Computer Architecture ⎛ MemAccess ⎞ CPUtime = IC × ⎜ CPI + × MissRate × MissPenalt y ⎟ × CycleTime Chapter 2 ⎝ Execution Inst ⎠ ⎛ MemMisses ⎞ CPUtime = IC × ⎜ CPI + × MissPenalt y ⎟ × CycleTime Caches and Memory Systems ⎝ Execution Inst ⎠ CPIExecution includes ALU and Memory instructions January 2007 Separating out Memory component entirely Paul H J Kelly AMAT = Average Memory Access Time CPIALUOps does not include memory instructions ⎛ AluOps MemAccess ⎞ These lecture notes are partly based on the course text, Hennessy CPUtime = IC × ⎜ × CPI + × AMAT ⎟ × CycleTime and Patterson’s Computer Architecture, a quantitative approach (3rd ⎝ Inst AluOps Inst ⎠ and 4th eds), and on the lecture slides of David Patterson and John AMAT = HitTime + MissRate × MissPenalt y Kubiatowicz’s Berkeley course = ()HitTime Inst + MissRate Inst × MissPenalt y Inst + ()HitTime Data + MissRate Data × MissPenalt y Data Advanced Computer Architecture Chapter 2.1 Advanced Computer Architecture Chapter 2.2 Average memory access time: Reducing Misses Classifying Misses: 3 Cs AMAT = HitTime + MissRate × MissPenalt y Compulsory—The first access to a block is not in the cache, so the block must be brought into the cache. Also called cold start misses or first reference misses. There are three ways to improve cache (Misses in even an Infinite Cache) Capacity—If the cache cannot contain all the blocks needed during performance: execution of a program, capacity misses will occur due to blocks being discarded and later retrieved. (Misses in Fully Associative Size X Cache) 1. Reduce the miss rate, Conflict—If block-placement strategy is set associative or direct mapped, conflict misses (in addition to compulsory & capacity misses) will 2. -

MIPS Architecture • MIPS (Microprocessor Without Interlocked Pipeline Stages) • MIPS Computer Systems Inc

Spring 2011 Prof. Hyesoon Kim MIPS Architecture • MIPS (Microprocessor without interlocked pipeline stages) • MIPS Computer Systems Inc. • Developed from Stanford • MIPS architecture usages • 1990’s – R2000, R3000, R4000, Motorola 68000 family • Playstation, Playstation 2, Sony PSP handheld, Nintendo 64 console • Android • Shift to SOC http://en.wikipedia.org/wiki/MIPS_architecture • MIPS R4000 CPU core • Floating point and vector floating point co-processors • 3D-CG extended instruction sets • Graphics – 3D curved surface and other 3D functionality – Hardware clipping, compressed texture handling • R4300 (embedded version) – Nintendo-64 http://www.digitaltrends.com/gaming/sony- announces-playstation-portable-specs/ Not Yet out • Google TV: an Android-based software service that lets users switch between their TV content and Web applications such as Netflix and Amazon Video on Demand • GoogleTV : search capabilities. • High stream data? • Internet accesses? • Multi-threading, SMP design • High graphics processors • Several CODEC – Hardware vs. Software • Displaying frame buffer e.g) 1080p resolution: 1920 (H) x 1080 (V) color depth: 4 bytes/pixel 4*1920*1080 ~= 8.3MB 8.3MB * 60Hz=498MB/sec • Started from 32-bit • Later 64-bit • microMIPS: 16-bit compression version (similar to ARM thumb) • SIMD additions-64 bit floating points • User Defined Instructions (UDIs) coprocessors • All self-modified code • Allow unaligned accesses http://www.spiritus-temporis.com/mips-architecture/ • 32 64-bit general purpose registers (GPRs) • A pair of special-purpose registers to hold the results of integer multiply, divide, and multiply-accumulate operations (HI and LO) – HI—Multiply and Divide register higher result – LO—Multiply and Divide register lower result • a special-purpose program counter (PC), • A MIPS64 processor always produces a 64-bit result • 32 floating point registers (FPRs). -

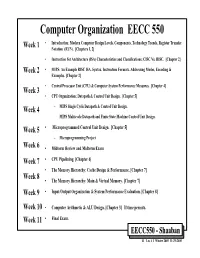

Computer Organization EECC 550 • Introduction: Modern Computer Design Levels, Components, Technology Trends, Register Transfer Week 1 Notation (RTN)

Computer Organization EECC 550 • Introduction: Modern Computer Design Levels, Components, Technology Trends, Register Transfer Week 1 Notation (RTN). [Chapters 1, 2] • Instruction Set Architecture (ISA) Characteristics and Classifications: CISC Vs. RISC. [Chapter 2] Week 2 • MIPS: An Example RISC ISA. Syntax, Instruction Formats, Addressing Modes, Encoding & Examples. [Chapter 2] • Central Processor Unit (CPU) & Computer System Performance Measures. [Chapter 4] Week 3 • CPU Organization: Datapath & Control Unit Design. [Chapter 5] Week 4 – MIPS Single Cycle Datapath & Control Unit Design. – MIPS Multicycle Datapath and Finite State Machine Control Unit Design. Week 5 • Microprogrammed Control Unit Design. [Chapter 5] – Microprogramming Project Week 6 • Midterm Review and Midterm Exam Week 7 • CPU Pipelining. [Chapter 6] • The Memory Hierarchy: Cache Design & Performance. [Chapter 7] Week 8 • The Memory Hierarchy: Main & Virtual Memory. [Chapter 7] Week 9 • Input/Output Organization & System Performance Evaluation. [Chapter 8] Week 10 • Computer Arithmetic & ALU Design. [Chapter 3] If time permits. Week 11 • Final Exam. EECC550 - Shaaban #1 Lec # 1 Winter 2005 11-29-2005 Computing System History/Trends + Instruction Set Architecture (ISA) Fundamentals • Computing Element Choices: – Computing Element Programmability – Spatial vs. Temporal Computing – Main Processor Types/Applications • General Purpose Processor Generations • The Von Neumann Computer Model • CPU Organization (Design) • Recent Trends in Computer Design/performance • Hierarchy -

IDT79R4600 and IDT79R4700 RISC Processor Hardware User's Manual

IDT79R4600™ and IDT79R4700™ RISC Processor Hardware User’s Manual Revision 2.0 April 1995 Integrated Device Technology, Inc. Integrated Device Technology, Inc. reserves the right to make changes to its products or specifications at any time, without notice, in order to improve design or performance and to supply the best possible product. IDT does not assume any respon- sibility for use of any circuitry described other than the circuitry embodied in an IDT product. ITD makes no representations that circuitry described herein is free from patent infringement or other rights of third parties which may result from its use. No license is granted by implication or otherwise under any patent, patent rights, or other rights of Integrated Device Technology, Inc. LIFE SUPPORT POLICY Integrated Device Technology’s products are not authorized for use as critical components in life sup- port devices or systems unless a specific written agreement pertaining to such intended use is executed between the manufacturer and an officer of IDT. 1. Life support devices or systems are devices or systems that (a) are intended for surgical implant into the body, or (b) support or sustain life, and whose failure to perform, when properly used in accor- dance with instructions for use provided in the labeling, can be reasonably expected to result in a sig- nificant injury to the user. 2. A critical component is any component of a life support device or system whose failure to perform can be reasonably expected to cause the failure of the life support device or system, or to affect its safety or effectiveness. -

Mips 16 Bit Instruction Set

Mips 16 bit instruction set Continue Instruction set architecture MIPSDesignerMIPS Technologies, Imagination TechnologiesBits64-bit (32 → 64)Introduced1985; 35 years ago (1985)VersionMIPS32/64 Issue 6 (2014)DesignRISCTypeRegister-RegisterEncodingFixedBranchingCompare and branchEndiannessBiPage size4 KBExtensionsMDMX, MIPS-3DOpenPartly. The R12000 has been on the market for more than 20 years and therefore cannot be subject to patent claims. Thus, the R12000 and old processors are completely open. RegistersGeneral Target32Floating Point32 MIPS (Microprocessor without interconnected pipeline stages) is a reduced setting of the Computer Set (RISC) Instruction Set Architecture (ISA):A-3:19, developed by MIPS Computer Systems, currently based in the United States. There are several versions of MIPS: including MIPS I, II, III, IV and V; and five MIPS32/64 releases (for 32- and 64-bit sales, respectively). The early MIPS architectures were only 32-bit; The 64-bit versions were developed later. As of April 2017, the current version of MIPS is MIPS32/64 Release 6. MiPS32/64 differs primarily from MIPS I-V, defining the system Control Coprocessor kernel preferred mode in addition to the user mode architecture. The MIPS architecture has several additional extensions. MIPS-3D, which is a simple set of floating-point SIMD instructions dedicated to common 3D tasks, MDMX (MaDMaX), which is a more extensive set of SIMD instructions using 64-bit floating current registers, MIPS16e, which adds compression to flow instructions to make programs that take up less space, and MIPS MT, which adds layered potential. Computer architecture courses in universities and technical schools often study MIPS architecture. Architecture has had a major impact on later RISC architectures such as Alpha. -

A Programming Model and Processor Architecture for Heterogeneous Multicore Computers

A PROGRAMMING MODEL AND PROCESSOR ARCHITECTURE FOR HETEROGENEOUS MULTICORE COMPUTERS A DISSERTATION SUBMITTED TO THE DEPARTMENT OF ELECTRICAL ENGINEERING AND THE COMMITTEE ON GRADUATE STUDIES OF STANFORD UNIVERSITY IN PARTIAL FULFILLMENT OF THE REQUIREMENTS FOR THE DEGREE OF DOCTOR OF PHILOSOPHY Michael D. Linderman February 2009 c Copyright by Michael D. Linderman 2009 All Rights Reserved ii I certify that I have read this dissertation and that, in my opinion, it is fully adequate in scope and quality as a dissertation for the degree of Doctor of Philosophy. (Professor Teresa H. Meng) Principal Adviser I certify that I have read this dissertation and that, in my opinion, it is fully adequate in scope and quality as a dissertation for the degree of Doctor of Philosophy. (Professor Mark Horowitz) I certify that I have read this dissertation and that, in my opinion, it is fully adequate in scope and quality as a dissertation for the degree of Doctor of Philosophy. (Professor Krishna V. Shenoy) Approved for the University Committee on Graduate Studies. iii Abstract Heterogeneous multicore computers, those systems that integrate specialized accelerators into and alongside multicore general-purpose processors (GPPs), provide the scalable performance needed by computationally demanding information processing (informatics) applications. However, these systems often feature instruction sets and functionality that significantly differ from GPPs and for which there is often little or no sophisticated compiler support. Consequently developing applica- tions for these systems is difficult and developer productivity is low. This thesis presents Merge, a general-purpose programming model for heterogeneous multicore systems. The Merge programming model enables the programmer to leverage different processor- specific or application domain-specific toolchains to create software modules specialized for differ- ent hardware configurations; and provides language mechanisms to enable the automatic mapping of processor-agnostic applications to these processor-specific modules. -

MOTOROLA 1995 112P

High-Performance Internal Product Portfolio Overview Issue 10 Fourth Quarter, 1995 Motorola reserves the right to make changes without further notice to any products herein. Motorola makes no warranty, representation or guarantee regarding the suitability of its products for any particular purpose, nor does Motorola assume any liability arising out of the application or use of any product or circuit, and specifically disclaims any and all liability, including without limitation consequential or incidental damages. "Typical" parameters can and do vary in different applications. All operating parameters, including "Typicals" must be validated for each customer application by customer's technical experts. Motorola does not convey any license under its patent rights nor the rights of others. Motorola products are not designed, intended, or authorized for use as components in systems intended for surgical implant into the body, or other applications intended to support or sustain life, or for any other application in which the failure of the Motorola product could create a situation where personal injury or death may occur. Should Buyer purchase or use Motorola products for any such unintended or unauthorized application, Buyer shall indemnify and hold Motorola and its officers, employees, subsidiaries, affiliates, and distributors harmless against all claims, costs, damages, and expenses, and reasonable attorney fees arising out of, directly or indirectly, any claim of personal injury or death associated with such unintended or unauthorized use, even if such claim alleges that Motorola was negligent regarding the design or manufacture of the part. Motorola and µ are registered trademarks of Motorola, Inc. Motorola, Inc. is an Equal Opportunity/Affirmative Action Employer. -

Production Cluster Visualization: Experiences and Challenges

Workshop on Parallel Visualization and Graphics Production Cluster Visualization: Experiences and Challenges Randall Frank VIEWS Visualization Project Lead Lawrence Livermore National Laboratory UCRL-PRES-200237 Workshop on Parallel Visualization and Graphics Why COTS Distributed Visualization Clusters? The realities of extreme dataset sizes (10TB+) • Stored with the compute platform • Cannot afford to copy the data • Co-resident visualization Track compute platform trends • Distributed infrastructure • Commodity hardware trends • Cost-effective solutions Migration of graphics leadership • The PC (Gamers) Desktops • Display technologies • HDR, resolution, stereo, tiled, etc Workshop on Parallel Visualization and Graphics 2 Production Visualization Requirements Unique, aggresive I/O requirements • Access patterns/performance Generation of graphical primitives • Graphics computation: primitive extraction/computation • Dataset decomposition (e.g. slabs vs chunks) Rendering of primitives • Aggregation of multiple rendering engines Video displays • Routing of digital or video tiles to displays (over distance) Interactivity (not a render-farm!) • Real-time imagery • Interaction devices, human in the loop (latency, prediction issues) Scheduling • Systems and people Workshop on Parallel Visualization and Graphics 3 Visualization Environment Architecture Archive PowerWall Analog Simulation Video Data Switch GigE Switch Visualization Engine Data Manipulation Engine Offices • Raw data on platform disks/archive systems • Data manipulation -

Introduction to Playstation®2 Architecture

Introduction to PlayStation®2 Architecture James Russell Software Engineer SCEE Technology Group In this presentation ä Company overview ä PlayStation 2 architecture overview ä PS2 Game Development ä Differences between PS2 and PC. Technology Group 1) Sony Computer Entertainment Overview SCE Europe (includes Aus, NZ, Mid East, America Technology Group Japan Southern Africa) Sales ä 40 million sold world-wide since launch ä Since March 2000 in Japan ä Since Nov 2000 in Europe/US ä New markets: Middle East, India, Korea, China ä Long term aim: 100 million within 5 years of launch ä Production facilities can produce 2M/month. Technology Group Design considerations ä Over 5 years, we’ll make 100,000,000 PS2s ä Design is very important ä Must be inexpensive (or should become that way) ä Technology must be ahead of the curve ä Need high performance, low price. Technology Group How to achieve this? ä Processor yield ä High CPU clock speed means lower yields ä Solution? ä Low CPU clock speed, but high parallelism ä Nothing readily available ä SCE designs custom chips. Technology Group 2) Technical Aspects of PlayStation 2 ä 128-bit CPU core “Emotion Engine” ä + 2 independent Vector Units ä + Image Processing Unit (for MPEG) ä GS - “Graphics Synthesizer” GPU ä SPU2 - Sound Processing Unit ä I/O Processor (CD/DVD, USB, i.Link). Technology Group “Emotion Engine” - Specifications ä CPU Core 128 bit CPU ä System Clock 300MHz ä Bus Bandwidth 3.2GB/sec ä Main Memory 32MB (Direct Rambus) ä Floating Point Calculation 6.2 GFLOPS ä 3D Geometry Performance 66 Million polygons/sec. -

Sony's Emotionally Charged Chip

VOLUME 13, NUMBER 5 APRIL 19, 1999 MICROPROCESSOR REPORT THE INSIDERS’ GUIDE TO MICROPROCESSOR HARDWARE Sony’s Emotionally Charged Chip Killer Floating-Point “Emotion Engine” To Power PlayStation 2000 by Keith Diefendorff rate of two million units per month, making it the most suc- cessful single product (in units) Sony has ever built. While Intel and the PC industry stumble around in Although SCE has cornered more than 60% of the search of some need for the processing power they already $6 billion game-console market, it was beginning to feel the have, Sony has been busy trying to figure out how to get more heat from Sega’s Dreamcast (see MPR 6/1/98, p. 8), which has of it—lots more. The company has apparently succeeded: at sold over a million units since its debut last November. With the recent International Solid-State Circuits Conference (see a 200-MHz Hitachi SH-4 and NEC’s PowerVR graphics chip, MPR 4/19/99, p. 20), Sony Computer Entertainment (SCE) Dreamcast delivers 3 to 10 times as many 3D polygons as and Toshiba described a multimedia processor that will be the PlayStation’s 34-MHz MIPS processor (see MPR 7/11/94, heart of the next-generation PlayStation, which—lacking an p. 9). To maintain king-of-the-mountain status, SCE had to official name—we refer to as PlayStation 2000, or PSX2. do something spectacular. And it has: the PSX2 will deliver Called the Emotion Engine (EE), the new chip upsets more than 10 times the polygon throughput of Dreamcast, the traditional notion of a game processor.