TOPICS in ALGEBRAIC COMBINATORICS Richard P

Total Page:16

File Type:pdf, Size:1020Kb

Recommended publications

Algebraic Combinatorics and Finite Geometry

Algebraic Combinatorics and Finite Geometry. Leo Storme, Ghent University, Department of Mathematics: Analysis, Logic and Discrete Mathematics, Krijgslaan 281 - Building S8, 9000 Ghent, Belgium. Francqui Foundation, May 5, 2021.

Acknowledgement: A big thank you to Ferdinand Ihringer for allowing me to use drawings and LaTeX code of his slide presentations of his lectures for Capita Selecta in Geometry (Ghent University).

Outline: 1. An introduction to algebraic graph theory. 2. Erdős-Ko-Rado results. 3. Cameron-Liebler sets in PG(3, q). 4. Cameron-Liebler k-sets in PG(n, q).

Definition: A graph Γ = (X, ∼) consists of a set of vertices X and an anti-reflexive, symmetric adjacency relation ∼ ⊆ X × X.

LINEAR ALGEBRA METHODS in COMBINATORICS László Babai

LINEAR ALGEBRA METHODS IN COMBINATORICS. László Babai and Péter Frankl. Version 2.1 (a slight update of Version 2, 1992), March 2020. © László Babai and Péter Frankl. 1988, 1992, 2020.

Preface. Due perhaps to a recognition of the wide applicability of their elementary concepts and techniques, both combinatorics and linear algebra have gained increased representation in college mathematics curricula in recent decades. The combinatorial nature of the determinant expansion (and the related difficulty in teaching it) may hint at the plausibility of some link between the two areas. A more profound connection, the use of determinants in combinatorial enumeration goes back at least to the work of Kirchhoff in the middle of the 19th century on counting spanning trees in an electrical network. It is much less known, however, that quite apart from the theory of determinants, the elements of the theory of linear spaces has found striking applications to the theory of families of finite sets. With a mere knowledge of the concept of linear independence, unexpected connections can be made between algebra and combinatorics, thus greatly enhancing the impact of each subject on the student's perception of beauty and sense of coherence in mathematics. If these adjectives seem inflated, the reader is kindly invited to open the first chapter of the book, read the first page to the point where the first result is stated ("No more than 32 clubs can be formed in Oddtown"), and try to prove it before reading on. (The effect would, of course, be magnified if the title of this volume did not give away where to look for clues.) What we have said so far may suggest that the best place to present this material is a mathematics enhancement program for motivated high school students.

GL(N, Q)-Analogues of Factorization Problems in the Symmetric Group Joel Brewster Lewis, Alejandro H

GL(n, q)-analogues of factorization problems in the symmetric group. Joel Brewster Lewis, Alejandro H. Morales. To cite this version: Joel Brewster Lewis, Alejandro H. Morales. GL(n, q)-analogues of factorization problems in the symmetric group. 28th International Conference on Formal Power Series and Algebraic Combinatorics, Simon Fraser University, Jul 2016, Vancouver, Canada. hal-02168127. HAL Id: hal-02168127, https://hal.archives-ouvertes.fr/hal-02168127. Submitted on 28 Jun 2019.

HAL is a multi-disciplinary open access archive for the deposit and dissemination of scientific research documents, whether they are published or not. The documents may come from teaching and research institutions in France or abroad, or from public or private research centers.

FPSAC 2016 Vancouver, Canada. DMTCS proc. BC, 2016, 755–766. $\mathrm{GL}_n(\mathbb{F}_q)$-analogues of factorization problems in $S_n$. Joel Brewster Lewis (School of Mathematics, University of Minnesota, Twin Cities) and Alejandro H. Morales (Department of Mathematics, University of California, Los Angeles).

Abstract. We consider $\mathrm{GL}_n(\mathbb{F}_q)$-analogues of certain factorization problems in the symmetric group $S_n$: rather than counting factorizations of the long cycle $(1, 2, \ldots, n)$ given the number of cycles of each factor, we count factorizations of a regular elliptic element given the fixed space dimension of each factor. We show that, as in $S_n$, the generating function counting these factorizations has attractive coefficients after an appropriate change of basis.

Useful Relations in Permutations and Combination 1. Useful Relations

Useful Relations in Permutations and Combination

Useful Relations - Factorial and Permutations
1. $n! = n \cdot (n-1)!$
2. ${}^{n}C_{r} = {}^{n}P_{r} / r!$
3. ${}^{n}P_{r} = n \cdot {}^{n-1}P_{r-1}$

Useful Relations - Combinations
1. ${}^{n}C_{r} = {}^{n}C_{n-r}$. Example: ${}^{8}C_{6} = {}^{8}C_{2} = \frac{8 \times 7}{2 \times 1} = 28$
2. ${}^{n}C_{n} = 1$
3. ${}^{n}C_{0} = 1$
4. ${}^{n}C_{0} + {}^{n}C_{1} + {}^{n}C_{2} + \dots + {}^{n}C_{n} = 2^{n}$. Example: ${}^{4}C_{0} + {}^{4}C_{1} + {}^{4}C_{2} + {}^{4}C_{3} + {}^{4}C_{4} = 1 + 4 + 6 + 4 + 1 = 16 = 2^{4}$

${}^{n}C_{r-1} + {}^{n}C_{r} = {}^{n+1}C_{r}$ (Pascal's Law)

${}^{n}C_{r} / {}^{n}C_{r-1} = (n-r+1)/r$

If ${}^{n}C_{x} = {}^{n}C_{y}$ then either $x = y$ or $(n-x) = y$.

Selection from identical objects: Some Basic Facts. The number of selections of r objects out of n identical objects is 1. The total number of selections of zero or more objects from n identical objects is n+1.

Permutations of Objects when All Objects are Not Distinct. The number of ways in which n things can be arranged taking them all at a time, when $P_1$ of the things are exactly alike of a 1st type, $P_2$ of them are exactly alike of a 2nd type, ..., $P_r$ of them are exactly alike of an r-th type, and the rest are all distinct, is $\frac{n!}{P_1! \, P_2! \cdots P_r!}$.

Example: how many ways can you arrange the letters in the word THESE? $5!/2! = 120/2 = 60$

Example: how many ways can you arrange the letters in the word REFERENCE? $9!/(2! \cdot 4!) = 362880/(2 \times 24) = 7560$

Circular Permutations. Case 1: when clockwise and anticlockwise arrangements are different, the number of circular permutations (arrangements) of n different things is $(n-1)!$.
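The relations above can be spot-checked numerically. The following is a minimal sketch using Python's standard `math` module; the helper name `multiset_permutations` is an assumption introduced here for illustration and does not appear in the source.

```python
from math import comb, factorial, perm


def multiset_permutations(n, repeats):
    """Arrangements of n things where repeats[i] of them are alike: n! / (p1! * p2! * ...)."""
    denominator = 1
    for p in repeats:
        denominator *= factorial(p)
    return factorial(n) // denominator


n, r = 8, 6

# nCr = nPr / r!
assert comb(n, r) == perm(n, r) // factorial(r)
# nPr = n * (n-1)P(r-1)
assert perm(n, r) == n * perm(n - 1, r - 1)
# nCr = nC(n-r), with the worked example 8C6 = 8C2 = 28
assert comb(n, r) == comb(n, n - r) == 28
# nC0 + nC1 + ... + nCn = 2^n, checked for n = 4
assert sum(comb(4, k) for k in range(5)) == 2 ** 4
# Pascal's Law: nC(r-1) + nCr = (n+1)Cr
assert comb(n, r - 1) + comb(n, r) == comb(n + 1, r)
# nCr / nC(r-1) = (n-r+1)/r, cross-multiplied to stay in integers
assert comb(n, r) * r == comb(n, r - 1) * (n - r + 1)

# THESE: 5 letters with E repeated twice.
these = multiset_permutations(5, [2])
# REFERENCE: 9 letters with R repeated twice and E repeated four times.
reference = multiset_permutations(9, [2, 4])
print(these, reference)
```

The ratio identity is checked in cross-multiplied form so that no floating-point division is involved.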

Schaum's Outline of Linear Algebra (4th Edition)

SCHAUM’S outlines. Linear Algebra, Fourth Edition. Seymour Lipschutz, Ph.D., Temple University. Marc Lars Lipson, Ph.D., University of Virginia. Schaum’s Outline Series. New York Chicago San Francisco Lisbon London Madrid Mexico City Milan New Delhi San Juan Seoul Singapore Sydney Toronto.

Copyright © 2009, 2001, 1991, 1968 by The McGraw-Hill Companies, Inc. All rights reserved. Except as permitted under the United States Copyright Act of 1976, no part of this publication may be reproduced or distributed in any form or by any means, or stored in a database or retrieval system, without the prior written permission of the publisher. ISBN: 978-0-07-154353-8 MHID: 0-07-154353-8. The material in this eBook also appears in the print version of this title: ISBN: 978-0-07-154352-1, MHID: 0-07-154352-X.

All trademarks are trademarks of their respective owners. Rather than put a trademark symbol after every occurrence of a trademarked name, we use names in an editorial fashion only, and to the benefit of the trademark owner, with no intention of infringement of the trademark. Where such designations appear in this book, they have been printed with initial caps. McGraw-Hill eBooks are available at special quantity discounts to use as premiums and sales promotions, or for use in corporate training programs. To contact a representative please e-mail us at [email protected].

TERMS OF USE. This is a copyrighted work and The McGraw-Hill Companies, Inc. (“McGraw-Hill”) and its licensors reserve all rights in and to the work.

Problems in Abstract Algebra

STUDENT MATHEMATICAL LIBRARY, Volume 82. Problems in Abstract Algebra. A. R. Wadsworth. 10.1090/stml/082. American Mathematical Society, Providence, Rhode Island.

Editorial Board: Satyan L. Devadoss, John Stillwell (Chair), Erica Flapan, Serge Tabachnikov. 2010 Mathematics Subject Classification. Primary 00A07, 12-01, 13-01, 15-01, 20-01. For additional information and updates on this book, visit www.ams.org/bookpages/stml-82

Library of Congress Cataloging-in-Publication Data. Names: Wadsworth, Adrian R., 1947– Title: Problems in abstract algebra / A. R. Wadsworth. Description: Providence, Rhode Island: American Mathematical Society, [2017] | Series: Student mathematical library; volume 82 | Includes bibliographical references and index. Identifiers: LCCN 2016057500 | ISBN 9781470435837 (alk. paper) Subjects: LCSH: Algebra, Abstract – Textbooks. | AMS: General – General and miscellaneous specific topics – Problem books. msc | Field theory and polynomials – Instructional exposition (textbooks, tutorial papers, etc.). msc | Commutative algebra – Instructional exposition (textbooks, tutorial papers, etc.). msc | Linear and multilinear algebra; matrix theory – Instructional exposition (textbooks, tutorial papers, etc.). msc | Group theory and generalizations – Instructional exposition (textbooks, tutorial papers, etc.). msc Classification: LCC QA162 .W33 2017 | DDC 512/.02–dc23 LC record available at https://lccn.loc.gov/2016057500

Copying and reprinting. Individual readers of this publication, and nonprofit libraries acting for them, are permitted to make fair use of the material, such as to copy select pages for use in teaching or research. Permission is granted to quote brief passages from this publication in reviews, provided the customary acknowledgment of the source is given. Republication, systematic copying, or multiple reproduction of any material in this publication is permitted only under license from the American Mathematical Society.

Combinatorics

Combinatorics. Problem: How to count without counting. How do you figure out how many things there are with a certain property without actually enumerating all of them? Sometimes this requires a lot of cleverness and deep mathematical insights. But there are some standard techniques. That's what we'll be studying.

The Bijection Rule: If $f : A \to B$ is a bijection, then $|A| = |B|$. We used this rule in defining cardinality for infinite sets. Now we'll focus on finite sets. We sometimes use the bijection rule without even realizing it: I count how many people are in favor of something by counting the number of hands raised, and I'm hoping that there's a bijection between the people in favor and the hands raised!

Answer: 26 choices for the first letter, 26 for the second, and 10 choices each for the first number, the second number, and the third number: $26^2 \times 10^3 = 676{,}000$.

Example 2: A traveling salesman wants to do a tour of all 50 state capitals. How many ways can he do this? Answer: 50 choices for the first place to visit, 49 for the second, . . .
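The two answers above follow the product rule: multiply the number of choices at each step. The sketch below checks the first count by brute-force enumeration and computes the second as a factorial. It assumes the first answer refers to strings of two letters followed by three digits, which is what the answer describes but which the excerpt does not state; `count_tours` is a hypothetical helper introduced only for illustration.

```python
from itertools import permutations, product
from math import factorial
from string import ascii_uppercase, digits

# Product rule: 26 * 26 * 10 * 10 * 10 choices in total.
formula = 26 ** 2 * 10 ** 3

# Brute-force check: enumerate every (letter, letter, digit, digit, digit) tuple.
plates = sum(
    1 for _ in product(ascii_uppercase, ascii_uppercase, digits, digits, digits)
)


def count_tours(n):
    """Count orderings of n places by enumeration (only feasible for small n)."""
    return sum(1 for _ in permutations(range(n)))


# 50 choices for the first stop, 49 for the second, and so on: 50! orderings.
tours_50 = factorial(50)

print(plates, formula)
print(count_tours(5), factorial(5))
print(len(str(tours_50)), "digits in 50!")
```

Enumeration is practical for the 676,000 strings but hopeless for the 50 capitals, which is exactly the point of counting without counting.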

Inclusion‒Exclusion Principle

In combinatorics, the inclusion–exclusion principle (also known as the sieve principle) is an equation relating the sizes of two sets and their union. It states that if A and B are two (finite) sets, then

$$|A \cup B| = |A| + |B| - |A \cap B|.$$

The meaning of the statement is that the number of elements in the union of the two sets is the sum of the numbers of elements in each set, minus the number of elements that are in both. Similarly, for three sets A, B and C,

$$|A \cup B \cup C| = |A| + |B| + |C| - |A \cap B| - |A \cap C| - |B \cap C| + |A \cap B \cap C|.$$

This can be seen by counting how many times each region in the figure is included in the right hand side. More generally, for finite sets $A_1, \ldots, A_n$, one has the identity

$$\left|\bigcup_{i=1}^{n} A_i\right| = \sum_{i} |A_i| - \sum_{i<j} |A_i \cap A_j| + \sum_{i<j<k} |A_i \cap A_j \cap A_k| - \cdots + (-1)^{n-1} |A_1 \cap \cdots \cap A_n|.$$

This can be compactly written as

$$\left|\bigcup_{i=1}^{n} A_i\right| = \sum_{k=1}^{n} (-1)^{k+1} \sum_{1 \le i_1 < \cdots < i_k \le n} |A_{i_1} \cap \cdots \cap A_{i_k}|.$$

The name comes from the idea that the principle is based on over-generous inclusion, followed by compensating exclusion. When n > 2 the exclusion of the pairwise intersections is (possibly) too severe, and the correct formula is as shown with alternating signs. This formula is attributed to Abraham de Moivre; it is sometimes also named for Daniel da Silva, Joseph Sylvester or Henri Poincaré.

Inclusion–exclusion illustrated for three sets. For the case of three sets A, B, C the inclusion–exclusion principle is illustrated in the graphic. Figure caption: Each term of the inclusion-exclusion formula gradually corrects the count until finally each portion of the Venn Diagram is counted exactly once.

Proof. Let A denote the union of the sets $A_1, \ldots, A_n$. To prove the inclusion–exclusion principle in general, we first have to verify the identity
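As a numerical illustration of the principle described above, here is a minimal sketch that sums the sizes of all k-fold intersections with alternating signs and compares the result with the size of the union computed directly. The function name and the three example sets are assumptions chosen for illustration, not part of the source.

```python
from itertools import combinations


def union_size_by_inclusion_exclusion(sets):
    """Size of the union of finite sets via the alternating-sign formula."""
    total = 0
    for k in range(1, len(sets) + 1):
        # Odd-sized groups are added, even-sized groups are subtracted.
        sign = 1 if k % 2 == 1 else -1
        for group in combinations(sets, k):
            common = set(group[0]).intersection(*group[1:])
            total += sign * len(common)
    return total


# Hypothetical example: multiples of 2, 3 and 5 among 0..29.
A = set(range(0, 30, 2))
B = set(range(0, 30, 3))
C = set(range(0, 30, 5))

by_formula = union_size_by_inclusion_exclusion([A, B, C])
direct = len(A | B | C)
print(by_formula, direct)
```

The formula visits every non-empty subfamily of the sets, so this direct approach takes on the order of 2^n intersection computations for n sets.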

From Arithmetic to Algebra

From arithmetic to algebra. Slightly edited version of a presentation at the University of Oregon, Eugene, OR, February 20, 2009. H. Wu.

Why can’t our students achieve introductory algebra? This presentation specifically addresses only introductory algebra, which refers roughly to what is called Algebra I in the usual curriculum. Its main focus is on all students’ access to the truly basic part of algebra that an average citizen needs in the high-tech age. The content of the traditional Algebra II course is on the whole more technical and is designed for future STEM students. In place of Algebra II, future non-STEM students would benefit more from a mathematics-culture course devoted, for example, to an understanding of probability and data, recently solved famous problems in mathematics, and history of mathematics.

At least three reasons for students’ failure: (A) Arithmetic is about computation of specific numbers. Algebra is about what is true in general for all numbers, all whole numbers, all integers, etc. Going from the specific to the general is a giant conceptual leap. Students are not prepared by our curriculum for this leap. (B) They don’t get the foundational skills needed for algebra. (C) They are taught incorrect mathematics in algebra classes. Garbage in, garbage out. These are not independent statements. They are inter-related.

Consider (A) and (B): The K–3 school math curriculum is mainly exploratory, and will be ignored in this presentation for simplicity. Grades 5–7 directly prepare students for algebra. Will focus on these grades. Here, abstract mathematics appears in the form of fractions, geometry, and especially negative fractions.

STRUCTURE ENUMERATION and SAMPLING Chemical Structure Enumeration and Sampling Have Been Studied by Mathematicians, Computer

STRUCTURE ENUMERATION AND SAMPLING. MARKUS MERINGER. To appear in Handbook of Chemoinformatics Algorithms.

Chemical structure enumeration and sampling have been studied by mathematicians, computer scientists and chemists for quite a long time. Given a molecular formula plus, optionally, a list of structural constraints, the typical questions are: (1) How many isomers exist? (2) Which are they? And, especially if (2) cannot be answered completely: (3) How to get a sample?

In this chapter we describe algorithms for solving these problems. The techniques are based on the representation of chemical compounds as molecular graphs (see Chapter 2), i.e. they are mainly applied to constitutional isomers. The major problem is that in silico molecular graphs have to be represented as labeled structures, while in chemical compounds, the atoms are not labeled. The mathematical concept for approaching this problem is to consider orbits of labeled molecular graphs under the operation of the symmetric group. We have to solve the so-called isomorphism problem.

According to our introductory questions, we distinguish several disciplines: counting, enumerating and sampling isomers. While counting only delivers the number of isomers, the remaining disciplines refer to constructive methods. Enumeration typically encompasses exhaustive and non-redundant methods, while sampling typically lacks these characteristics. However, sampling methods are sometimes better suited to solve real-world problems.

There is a wide range of applications where counting, enumeration and sampling techniques are helpful or even essential. Some of these applications are closely linked to other chapters of this book. Counting techniques deliver pure chemical information, they can help to estimate or even determine sizes of chemical databases or compound libraries obtained from combinatorial chemistry.
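The idea of counting orbits of labeled graphs under the symmetric group can be made concrete on a toy case. The sketch below is a brute-force illustration, not the chapter's algorithm: it assumes plain simple graphs in place of molecular graphs, and the helpers `canonical_form` and `count_orbits` are hypothetical names. Each labeled graph is relabeled by every vertex permutation, the lexicographically smallest edge list serves as the orbit representative, and the number of distinct representatives is the number of unlabeled graphs.

```python
from itertools import combinations, permutations


def canonical_form(edges, n):
    """Smallest relabeled edge list over all n! vertex permutations."""
    best = None
    for p in permutations(range(n)):
        relabeled = tuple(sorted(tuple(sorted((p[u], p[v]))) for u, v in edges))
        if best is None or relabeled < best:
            best = relabeled
    return best


def count_orbits(n):
    """Return (number of labeled graphs, number of orbits) on n vertices."""
    possible_edges = list(combinations(range(n), 2))
    representatives = set()
    labeled = 0
    # Each bitmask selects one subset of the possible edges, i.e. one labeled graph.
    for mask in range(1 << len(possible_edges)):
        edges = [e for i, e in enumerate(possible_edges) if (mask >> i) & 1]
        labeled += 1
        representatives.add(canonical_form(edges, n))
    return labeled, len(representatives)


labeled_4, unlabeled_4 = count_orbits(4)
print(labeled_4, unlabeled_4)
```

The cost grows as n! times the number of labeled graphs, so this only works for very small n; it is meant to show why labeled representations overcount and why identifying orbits is the core difficulty.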

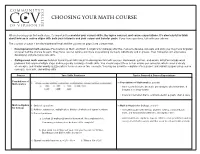

Choosing Your Math Course

CHOOSING YOUR MATH COURSE. When choosing your first math class, it’s important to consider your current skills, the topics covered, and course expectations. It’s also helpful to think about how each course aligns with both your interests and your career and transfer goals. If you have questions, talk with your advisor. The courses on page 1 are developmental-level and the courses on page 2 are college-level.

• Developmental math courses (Foundations of Math and Math & Algebra for College) offer the chance to develop concepts and skills you may have forgotten or never had the chance to learn. They focus less on lecture and more on practicing concepts individually and in groups. Your homework will emphasize developing and practicing new skills.

• College-level math courses build on foundational skills taught in developmental math courses. Homework, quizzes, and exams will often include word problems that require multiple steps and incorporate a variety of math skills. You should expect three to four exams per semester, which cover a variety of concepts, and shorter weekly quizzes which focus on one or two concepts. You may be asked to complete a final project and submit a paper using course concepts, research, and writing skills.

Course: Foundations of Mathematics

Your Skills Readiness: 1. All multiplication facts (through tens, preferably twelves) should be memorized. 2. Whole number addition, subtraction, multiplication, division (without a calculator):

Topics Covered & Course Expectations: In Foundations of Mathematics, you will: • learn to use fractions, decimals, percentages, whole numbers, & integers to solve problems • interpret information that is communicated in a graph, chart & table

Course: Math & Algebra

Your Skills Readiness: 1.

Teaching Strategies for Improving Algebra Knowledge in Middle and High School Students

EDUCATOR’S PRACTICE GUIDE. A set of recommendations to address challenges in classrooms and schools. WHAT WORKS CLEARINGHOUSE™. Teaching Strategies for Improving Algebra Knowledge in Middle and High School Students. NCEE 2015-4010. U.S. DEPARTMENT OF EDUCATION.

About this practice guide. The Institute of Education Sciences (IES) publishes practice guides in education to provide educators with the best available evidence and expertise on current challenges in education. The What Works Clearinghouse (WWC) develops practice guides in conjunction with an expert panel, combining the panel’s expertise with the findings of existing rigorous research to produce specific recommendations for addressing these challenges. The WWC and the panel rate the strength of the research evidence supporting each of their recommendations. See Appendix A for a full description of practice guides. The goal of this practice guide is to offer educators specific, evidence-based recommendations that address the challenges of teaching algebra to students in grades 6 through 12. This guide synthesizes the best available research and shares practices that are supported by evidence. It is intended to be practical and easy for teachers to use. The guide includes many examples in each recommendation to demonstrate the concepts discussed. Practice guides published by IES are available on the What Works Clearinghouse website at http://whatworks.ed.gov.

How to use this guide. This guide provides educators with instructional recommendations that can be implemented in conjunction with existing standards or curricula and does not recommend a particular curriculum. Teachers can use the guide when planning instruction to prepare students for future mathematics and post-secondary success.