Fundamentals of Computer Organization and Architecture

Total Page:16

File Type:pdf, Size:1020Kb

Load more

Recommended publications

-

AMD Athlon™ Processor X86 Code Optimization Guide

AMD AthlonTM Processor x86 Code Optimization Guide © 2000 Advanced Micro Devices, Inc. All rights reserved. The contents of this document are provided in connection with Advanced Micro Devices, Inc. (“AMD”) products. AMD makes no representations or warranties with respect to the accuracy or completeness of the contents of this publication and reserves the right to make changes to specifications and product descriptions at any time without notice. No license, whether express, implied, arising by estoppel or otherwise, to any intellectual property rights is granted by this publication. Except as set forth in AMD’s Standard Terms and Conditions of Sale, AMD assumes no liability whatsoever, and disclaims any express or implied warranty, relating to its products including, but not limited to, the implied warranty of merchantability, fitness for a particular purpose, or infringement of any intellectual property right. AMD’s products are not designed, intended, authorized or warranted for use as components in systems intended for surgical implant into the body, or in other applications intended to support or sustain life, or in any other applica- tion in which the failure of AMD’s product could create a situation where per- sonal injury, death, or severe property or environmental damage may occur. AMD reserves the right to discontinue or make changes to its products at any time without notice. Trademarks AMD, the AMD logo, AMD Athlon, K6, 3DNow!, and combinations thereof, AMD-751, K86, and Super7 are trademarks, and AMD-K6 is a registered trademark of Advanced Micro Devices, Inc. Microsoft, Windows, and Windows NT are registered trademarks of Microsoft Corporation. -

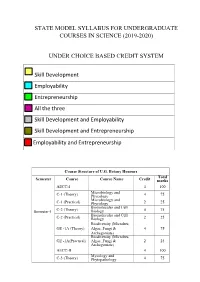

State Model Syllabus for Undergraduate Courses in Science (2019-2020)

STATE MODEL SYLLABUS FOR UNDERGRADUATE COURSES IN SCIENCE (2019-2020) UNDER CHOICE BASED CREDIT SYSTEM Skill Development Employability Entrepreneurship All the three Skill Development and Employability Skill Development and Entrepreneurship Employability and Entrepreneurship Course Structure of U.G. Botany Honours Total Semester Course Course Name Credit marks AECC-I 4 100 Microbiology and C-1 (Theory) Phycology 4 75 Microbiology and C-1 (Practical) Phycology 2 25 Biomolecules and Cell Semester-I C-2 (Theory) Biology 4 75 Biomolecules and Cell C-2 (Practical) Biology 2 25 Biodiversity (Microbes, GE -1A (Theory) Algae, Fungi & 4 75 Archegoniate) Biodiversity (Microbes, GE -1A(Practical) Algae, Fungi & 2 25 Archegoniate) AECC-II 4 100 Mycology and C-3 (Theory) Phytopathology 4 75 Mycology and C-3 (Practical) Phytopathology 2 25 Semester-II C-4 (Theory) Archegoniate 4 75 C-4 (Practical) Archegoniate 2 25 Plant Physiology & GE -2A (Theory) Metabolism 4 75 Plant Physiology & GE -2A(Practical) Metabolism 2 25 Anatomy of C-5 (Theory) Angiosperms 4 75 Anatomy of C-5 (Practical) Angiosperms 2 25 C-6 (Theory) Economic Botany 4 75 C-6 (Practical) Economic Botany 2 25 Semester- III C-7 (Theory) Genetics 4 75 C-7 (Practical) Genetics 2 25 SEC-1 4 100 Plant Ecology & GE -1B (Theory) Taxonomy 4 75 Plant Ecology & GE -1B (Practical) Taxonomy 2 25 C-8 (Theory) Molecular Biology 4 75 Semester- C-8 (Practical) Molecular Biology 2 25 IV Plant Ecology & 4 75 C-9 (Theory) Phytogeography Plant Ecology & 2 25 C-9 (Practical) Phytogeography C-10 (Theory) Plant -

073-080.Pdf (568.3Kb)

Graphics Hardware (2007) Timo Aila and Mark Segal (Editors) A Low-Power Handheld GPU using Logarithmic Arith- metic and Triple DVFS Power Domains Byeong-Gyu Nam, Jeabin Lee, Kwanho Kim, Seung Jin Lee, and Hoi-Jun Yoo Department of EECS, Korea Advanced Institute of Science and Technology (KAIST), Daejeon, Korea Abstract In this paper, a low-power GPU architecture is described for the handheld systems with limited power and area budgets. The GPU is designed using logarithmic arithmetic for power- and area-efficient design. For this GPU, a multifunction unit is proposed based on the hybrid number system of floating-point and logarithmic numbers and the matrix, vector, and elementary functions are unified into a single arithmetic unit. It achieves the single-cycle throughput for all these functions, except for the matrix-vector multipli- cation with 2-cycle throughput. The vertex shader using this function unit as its main datapath shows 49.3% cycle count reduction compared with the latest work for OpenGL transformation and lighting (TnL) kernel. The rendering engine uses also the logarithmic arithmetic for implementing the divisions in pipeline stages. The GPU is divided into triple dynamic voltage and frequency scaling power domains to minimize the power consumption at a given performance level. It shows a performance of 5.26Mvertices/s at 200MHz for the OpenGL TnL and 52.4mW power consumption at 60fps. It achieves 2.47 times per- formance improvement while reducing 50.5% power and 38.4% area consumption compared with the lat- est work. Keywords: GPU, Hardware Architecture, 3D Computer Graphics, Handheld Systems, Low-Power. -

Validated Products List, 1995 No. 3: Programming Languages, Database

NISTIR 5693 (Supersedes NISTIR 5629) VALIDATED PRODUCTS LIST Volume 1 1995 No. 3 Programming Languages Database Language SQL Graphics POSIX Computer Security Judy B. Kailey Product Data - IGES Editor U.S. DEPARTMENT OF COMMERCE Technology Administration National Institute of Standards and Technology Computer Systems Laboratory Software Standards Validation Group Gaithersburg, MD 20899 July 1995 QC 100 NIST .056 NO. 5693 1995 NISTIR 5693 (Supersedes NISTIR 5629) VALIDATED PRODUCTS LIST Volume 1 1995 No. 3 Programming Languages Database Language SQL Graphics POSIX Computer Security Judy B. Kailey Product Data - IGES Editor U.S. DEPARTMENT OF COMMERCE Technology Administration National Institute of Standards and Technology Computer Systems Laboratory Software Standards Validation Group Gaithersburg, MD 20899 July 1995 (Supersedes April 1995 issue) U.S. DEPARTMENT OF COMMERCE Ronald H. Brown, Secretary TECHNOLOGY ADMINISTRATION Mary L. Good, Under Secretary for Technology NATIONAL INSTITUTE OF STANDARDS AND TECHNOLOGY Arati Prabhakar, Director FOREWORD The Validated Products List (VPL) identifies information technology products that have been tested for conformance to Federal Information Processing Standards (FIPS) in accordance with Computer Systems Laboratory (CSL) conformance testing procedures, and have a current validation certificate or registered test report. The VPL also contains information about the organizations, test methods and procedures that support the validation programs for the FIPS identified in this document. The VPL includes computer language processors for programming languages COBOL, Fortran, Ada, Pascal, C, M[UMPS], and database language SQL; computer graphic implementations for GKS, COM, PHIGS, and Raster Graphics; operating system implementations for POSIX; Open Systems Interconnection implementations; and computer security implementations for DES, MAC and Key Management. -

Emerging Technologies Multi/Parallel Processing

Emerging Technologies Multi/Parallel Processing Mary C. Kulas New Computing Structures Strategic Relations Group December 1987 For Internal Use Only Copyright @ 1987 by Digital Equipment Corporation. Printed in U.S.A. The information contained herein is confidential and proprietary. It is the property of Digital Equipment Corporation and shall not be reproduced or' copied in whole or in part without written permission. This is an unpublished work protected under the Federal copyright laws. The following are trademarks of Digital Equipment Corporation, Maynard, MA 01754. DECpage LN03 This report was produced by Educational Services with DECpage and the LN03 laser printer. Contents Acknowledgments. 1 Abstract. .. 3 Executive Summary. .. 5 I. Analysis . .. 7 A. The Players . .. 9 1. Number and Status . .. 9 2. Funding. .. 10 3. Strategic Alliances. .. 11 4. Sales. .. 13 a. Revenue/Units Installed . .. 13 h. European Sales. .. 14 B. The Product. .. 15 1. CPUs. .. 15 2. Chip . .. 15 3. Bus. .. 15 4. Vector Processing . .. 16 5. Operating System . .. 16 6. Languages. .. 17 7. Third-Party Applications . .. 18 8. Pricing. .. 18 C. ~BM and Other Major Computer Companies. .. 19 D. Why Success? Why Failure? . .. 21 E. Future Directions. .. 25 II. Company/Product Profiles. .. 27 A. Multi/Parallel Processors . .. 29 1. Alliant . .. 31 2. Astronautics. .. 35 3. Concurrent . .. 37 4. Cydrome. .. 41 5. Eastman Kodak. .. 45 6. Elxsi . .. 47 Contents iii 7. Encore ............... 51 8. Flexible . ... 55 9. Floating Point Systems - M64line ................... 59 10. International Parallel ........................... 61 11. Loral .................................... 63 12. Masscomp ................................. 65 13. Meiko .................................... 67 14. Multiflow. ~ ................................ 69 15. Sequent................................... 71 B. Massively Parallel . 75 1. Ametek.................................... 77 2. Bolt Beranek & Newman Advanced Computers ........... -

Unit – I Computer Architecture and Operating System – Scs1315

SCHOOL OF ELECTRICAL AND ELECTRONICS DEPARTMENT OF ELECTRONICS AND COMMMUNICATION ENGINEERING UNIT – I COMPUTER ARCHITECTURE AND OPERATING SYSTEM – SCS1315 UNIT.1 INTRODUCTION Central Processing Unit - Introduction - General Register Organization - Stack organization -- Basic computer Organization - Computer Registers - Computer Instructions - Instruction Cycle. Arithmetic, Logic, Shift Microoperations- Arithmetic Logic Shift Unit -Example Architectures: MIPS, Power PC, RISC, CISC Central Processing Unit The part of the computer that performs the bulk of data-processing operations is called the central processing unit CPU. The CPU is made up of three major parts, as shown in Fig.1 Fig 1. Major components of CPU. The register set stores intermediate data used during the execution of the instructions. The arithmetic logic unit (ALU) performs the required microoperations for executing the instructions. The control unit supervises the transfer of information among the registers and instructs the ALU as to which operation to perform. General Register Organization When a large number of registers are included in the CPU, it is most efficient to connect them through a common bus system. The registers communicate with each other not only for direct data transfers, but also while performing various microoperations. Hence it is necessary to provide a common unit that can perform all the arithmetic, logic, and shift microoperations in the processor. A bus organization for seven CPU registers is shown in Fig.2. The output of each register is connected to two multiplexers (MUX) to form the two buses A and B. The selection lines in each multiplexer select one register or the input data for the particular bus. The A and B buses form the inputs to a common arithmetic logic unit (ALU). -

Computer Organization and Architecture Designing for Performance Ninth Edition

COMPUTER ORGANIZATION AND ARCHITECTURE DESIGNING FOR PERFORMANCE NINTH EDITION William Stallings Boston Columbus Indianapolis New York San Francisco Upper Saddle River Amsterdam Cape Town Dubai London Madrid Milan Munich Paris Montréal Toronto Delhi Mexico City São Paulo Sydney Hong Kong Seoul Singapore Taipei Tokyo Editorial Director: Marcia Horton Designer: Bruce Kenselaar Executive Editor: Tracy Dunkelberger Manager, Visual Research: Karen Sanatar Associate Editor: Carole Snyder Manager, Rights and Permissions: Mike Joyce Director of Marketing: Patrice Jones Text Permission Coordinator: Jen Roach Marketing Manager: Yez Alayan Cover Art: Charles Bowman/Robert Harding Marketing Coordinator: Kathryn Ferranti Lead Media Project Manager: Daniel Sandin Marketing Assistant: Emma Snider Full-Service Project Management: Shiny Rajesh/ Director of Production: Vince O’Brien Integra Software Services Pvt. Ltd. Managing Editor: Jeff Holcomb Composition: Integra Software Services Pvt. Ltd. Production Project Manager: Kayla Smith-Tarbox Printer/Binder: Edward Brothers Production Editor: Pat Brown Cover Printer: Lehigh-Phoenix Color/Hagerstown Manufacturing Buyer: Pat Brown Text Font: Times Ten-Roman Creative Director: Jayne Conte Credits: Figure 2.14: reprinted with permission from The Computer Language Company, Inc. Figure 17.10: Buyya, Rajkumar, High-Performance Cluster Computing: Architectures and Systems, Vol I, 1st edition, ©1999. Reprinted and Electronically reproduced by permission of Pearson Education, Inc. Upper Saddle River, New Jersey, Figure 17.11: Reprinted with permission from Ethernet Alliance. Credits and acknowledgments borrowed from other sources and reproduced, with permission, in this textbook appear on the appropriate page within text. Copyright © 2013, 2010, 2006 by Pearson Education, Inc., publishing as Prentice Hall. All rights reserved. Manufactured in the United States of America. -

S.D.M COLLEGE of ENGINEERING and TECHNOLOGY Sridhar Y

VISVESVARAYA TECHNOLOGICAL UNIVERSITY S.D.M COLLEGE OF ENGINEERING AND TECHNOLOGY A seminar report on CUDA Submitted by Sridhar Y 2sd06cs108 8th semester DEPARTMENT OF COMPUTER SCIENCE ENGINEERING 2009-10 Page 1 VISVESVARAYA TECHNOLOGICAL UNIVERSITY S.D.M COLLEGE OF ENGINEERING AND TECHNOLOGY DEPARTMENT OF COMPUTER SCIENCE ENGINEERING CERTIFICATE Certified that the seminar work entitled “CUDA” is a bonafide work presented by Sridhar Y bearing USN 2SD06CS108 in a partial fulfillment for the award of degree of Bachelor of Engineering in Computer Science Engineering of the Visvesvaraya Technological University, Belgaum during the year 2009-10. The seminar report has been approved as it satisfies the academic requirements with respect to seminar work presented for the Bachelor of Engineering Degree. Staff in charge H.O.D CSE Name: Sridhar Y USN: 2SD06CS108 Page 2 Contents 1. Introduction 4 2. Evolution of GPU programming and CUDA 5 3. CUDA Structure for parallel processing 9 4. Programming model of CUDA 10 5. Portability and Security of the code 12 6. Managing Threads with CUDA 14 7. Elimination of Deadlocks in CUDA 17 8. Data distribution among the Thread Processes in CUDA 14 9. Challenges in CUDA for the Developers 19 10. The Pros and Cons of CUDA 19 11. Conclusions 21 Bibliography 21 Page 3 Abstract Parallel processing on multi core processors is the industry’s biggest software challenge, but the real problem is there are too many solutions. One of them is Nvidia’s Compute Unified Device Architecture (CUDA), a software platform for massively parallel high performance computing on the powerful Graphics Processing Units (GPUs). -

Benchmark Evaluations of Modern Multi Processor Vlsi Ds Pm Ps

1220 Session 1220 Benchmark Evaluations of Modern Multi-Processor VLSI DSPµPs Aaron L. Robinson and Fred O. Simons, Jr. High-Performance Computing and Simulation (HCS) Research Laboratory Electrical Engineering Department Florida A&M University and Florida State University Tallahassee, FL 32316-2175 Abstract - The authors continue their tradition of presenting technical reviews and performance comparisons of the newest multi-processor VLSI DSPµPs with the intention of providing concise focused analyses designed to help established or aspiring DSP analysts evaluate the applicability of new DSP technology to their specific applications. As in the past, the Analog Devices SHARCTM and Texas Instruments TMS320C80 families of DSPµPs will be the focus of our presentation because these manufacturers continue to push the envelop of new DSPµPs (Digital Signal Processing microProcessors) development. However, in addition to the standard performance analyses and benchmark evaluations, the authors will present a new image-processing bench marking technique designed specifically for evaluating new DSPµP image processing capabilities. 1. Introduction The combination of constantly evolving DSP algorithm development and continually advancing DSPuP hardware have formed the basis for an exponential growth in DSP applications. The increased market demand for these applications and their required hardware has resulted in the production of DSPuPs with very powerful components capable of performing a wide range of complicated operations. Due to the complicated architectures of these new processors, it would be time consuming for even the experienced DSP analysts to review and evaluate these new DSPuPs. Furthermore, the inexperienced DSP analysts would find it even more time consuming, and possibly very difficult to appreciate the significance and opportunities for these new components. -

Lecture 6: Instruction Set Architecture and the 80X86

Lecture 6: Instruction Set Architecture and the 80x86 Professor Randy H. Katz Computer Science 252 Spring 1996 RHK.S96 1 Review From Last Time • Given sales a function of performance relative to competition, tremendous investment in improving product as reported by performance summary • Good products created when have: – Good benchmarks – Good ways to summarize performance • If not good benchmarks and summary, then choice between improving product for real programs vs. improving product to get more sales=> sales almost always wins • Time is the measure of computer performance! • What about cost? RHK.S96 2 Review: Integrated Circuits Costs IC cost = Die cost + Testing cost + Packaging cost Final test yield Die cost = Wafer cost Dies per Wafer * Die yield Dies per wafer = p * ( Wafer_diam / 2)2 – p * Wafer_diam – Test dies Die Area Ö 2 * Die Area Defects_per_unit_area * Die_Area Die Yield = Wafer yield * { 1 + } 4 Die Cost is goes roughly with area RHK.S96 3 Review From Last Time Price vs. Cost 100% 80% Average Discount 60% Gross Margin 40% Direct Costs 20% Component Costs 0% Mini W/S PC 5 4.7 3.8 4 3.5 Average Discount 3 2.5 Gross Margin 2 1.8 Direct Costs 1.5 1 Component Costs 0 Mini W/S PC RHK.S96 4 Today: Instruction Set Architecture • 1950s to 1960s: Computer Architecture Course Computer Arithmetic • 1970 to mid 1980s: Computer Architecture Course Instruction Set Design, especially ISA appropriate for compilers • 1990s: Computer Architecture Course Design of CPU, memory system, I/O system, Multiprocessors RHK.S96 5 Computer Architecture? . the attributes of a [computing] system as seen by the programmer, i.e. -

(12) Patent Application Publication (10) Pub. No.: US 2011/0231717 A1 HUR Et Al

US 20110231717A1 (19) United States (12) Patent Application Publication (10) Pub. No.: US 2011/0231717 A1 HUR et al. (43) Pub. Date: Sep. 22, 2011 (54) SEMICONDUCTOR MEMORY DEVICE Publication Classification (76) Inventors: Hwang HUR, Kyoungki-do (KR): ( 51) Int. Cl. Chang-Ho Do, Kyoungki-do (KR): C. % CR Jae-Bum Ko, Kyoungki-do (KR); ( .01) Jin-Il Chung, Kyoungki-do (KR) (21) Appl. N 13A149,683 (52) U.S. Cl. ................................. 714/718; 714/E11.145 ppl. No.: 9 (22)22) FileFiled: Mavay 31,51, 2011 (57) ABSTRACT Related U.S. Application Data Semiconductor memory device includes a cell array includ (62) Division of application No. 12/154,870, filed on May ing a plurality of unit cells; and a test circuit configured to 28, 2008, now Pat. No. 7,979,758. perform a built-in self-stress (BISS) test for detecting a defect by performing a plurality of internal operations including a (30) Foreign Application Priority Data write operation through an access to the unit cells using a plurality of patterns during a test procedure carried out at a Feb. 29, 2008 (KR) ............................. 2008-OO18761 wafer-level. O 2 FAD MoDE PE, FRS PWD PADX8 GENERATOR EAELER PEC 3. PD) PWDC CRECKCCES CKCKB RASCASECSCE SISTE CLOCKBUFFER CEE e EST CLK BSI RASCASECSCKE WREFB ESTE s ACTF 32 P 8:W 4. 38t.A 3. se. A(2) FIRST REFRESH AKE13A2) REFIREFA CONTROR ROWANC RSBSAApp.12BS ARS BA255612.ESE E L - ww.i. 33 EE 3. PE PWDA RAE7(3) ECS issi: OLREFB SECOND LAK:2) BSDT DATABUFFERG08 EABLER REFIREFA SECON REFRESH BSE Y ADDRC CONTROLLER BST YEADDK)) PWS Testaposs WKB811-12 BAADDICBAAD BSYBRST (88.11:12) "Of BST CLK 380 3. -

Id Question Microprocessor Is the Example of ___Architecture. A

Id Question Microprocessor is the example of _______ architecture. A Princeton B Von Neumann C Rockwell D Harvard Answer A Marks 1 Unit 1 Id Question _______ bus is unidirectional. A Data B Address C Control D None of these Answer B Marks 1 Unit 1 Id Question Use of _______isolates CPU form frequent accesses to main memory. A Local I/O controller B Expansion bus interface C Cache structure D System bus Answer C Marks 1 Unit 1 Id Question _______ buffers data transfer between system bus and I/O controllers on expansion bus. A Local I/O controller B Expansion bus interface C Cache structure D None of these Answer B Marks 1 Unit 1 Id Question ______ Timing involves a clock line. A Synchronous B Asynchronous C Asymmetric D None of these Answer A Marks 1 Unit 1 Id Question -----timing takes advantage of mixture of slow and fast devices, sharing the same bus A Synchronous B asynchronous C Asymmetric D None of these Answer B Marks 1 Unit 1 Id st Question In 1 generation _____language was used to prepare programs. A Machine B Assembly C High level programming D Pseudo code Answer B Marks 1 Unit 1 Id Question The transistor was invented at ________ laboratories. A AT &T B AT &Y C AM &T D AT &M Answer A Marks 1 Unit 1 Id Question ______is used to control various modules in the system A Control Bus B Data Bus C Address Bus D All of these Answer A Marks 1 Unit 1 Id Question The disadvantage of single bus structure over multi bus structure is _____ A Propagation delay B Bottle neck because of bus capacity C Both A and B D Neither A nor B Answer C Marks 1 Unit 1 Id Question _____ provide path for moving data among system modules A Control Bus B Data Bus C Address Bus D All of these Answer B Marks 1 Unit 1 Id Question Microcontroller is the example of _____ architecture.