Download Doctoral Thesis

Total Page:16

File Type:pdf, Size:1020Kb

Recommended publications

Gesture Keyboard Using Arduino

www.ijcrt.org © 2021 IJCRT | Volume 9, Issue 5 May 2021 | ISSN: 2320-2882. GESTURE KEYBOARD USING ARDUINO. P. R. Rodge (1), Gawali Harshada (2), Chaudhari Pooja (3), More Mayur (4). (1) Professor, Computer Engineering Department, SSJCOE, Maharashtra, India; (2, 3, 4) Students, Computer Engineering Department, SSJCOE, Maharashtra, India.

ABSTRACT Different keyboards are available in the market, with variations in size, layout, programs, and functionality. Virtual keyboards have also come into use recently, but for every keyboard the user must remember the arrangement of keys in order to enter different characters. The remote-based gesture keyboard is a system built on an Internet of Things (IoT) architecture that combines an Arduino, an accelerometer, and a machine learning process to recognize the intended motion. With it, the user can enter text and numbers in a text editor by moving a hand in the air along the shape of each character. The Arduino operates as a remote: gestures made in the air are translated into letters and displayed on a computer screen. Keywords: gesture, Arduino, remote, keyboard.

I. INTRODUCTION The keyboard plays a vital role in the computer system for the entry of data by pressing a number of keys. Today, touch-screen keyboards are the most widely used, while gesture keyboards are used mainly by physically disabled people and for special purposes. There is plenty of variation in the layouts of physical keyboards, such as AZERTY, QWERTY, Dvorak, Colemak, Maltron, and JCUKEN.

An Improved Arabic Keyboard Layout

Sci.Int.(Lahore), 33(1), 5-15, 2021. ISSN 1013-5316; CODEN: SINTE 8. AN IMPROVED ARABIC KEYBOARD LAYOUT. Amjad Qtaish, Jalawi Alshudukhi, Badiea Alshaibani, Yosef Saleh, Salam Bazrawi. College of Computer Science and Engineering, University of Ha'il, Ha'il, Saudi Arabia. ABSTRACT: One of the most important human–machine interaction (HMI) systems is the computer keyboard. The keyboard layout (KL) dictates how a person interacts with a physical keyboard through the way in which the letters, numbers, punctuation marks, and symbols are mapped and arranged on the keyboard. Mapping letters onto the keys of a keyboard is complex because many issues need to be taken into consideration, such as the nature of the language, finger fatigue, hand balance, typing speed, and the distance traveled by the fingers during typing. There are two main kinds of KL: English and Arabic. Although numerous research studies have proposed different layouts for the English keyboard, there is a lack of research studies that focus on the Arabic KL. To address this lack, this study analyzed and clarified the limitations of the standard legacy Arabic KL. Then an efficient Arabic KL was proposed to overcome the limitations of the current KL. The frequency of Arabic letters and bi-gram probabilities were measured on a large Arabic corpus in order to assess the current KL and to design the improved Arabic KL. The improved Arabic KL was then evaluated and compared against the current KL in terms of letter frequency, finger-travel distance, hand and finger balance, bi-gram frequency, row distribution, and most frequent words.

Text Input Methods for Indian Languages Sowmya Vajjala, International Institute of Information Technology

Iowa State University. From the SelectedWorks of Sowmya Vajjala, 2011. Text Input Methods for Indian Languages. Sowmya Vajjala, International Institute of Information Technology. Available at: https://works.bepress.com/sowmya-vajjala/3/ TEXT INPUT METHODS FOR INDIAN LANGUAGES By Sowmya V.B. 200607014 A THESIS SUBMITTED IN PARTIAL FULFILLMENT OF THE REQUIREMENTS FOR THE DEGREE OF Master of Science (by Research) in Computer Science & Engineering. Search and Information Extraction Lab, Language Technologies Research Center, International Institute of Information Technology, Hyderabad, India. September 2008. Copyright © 2008 Sowmya V.B. All Rights Reserved. Dedicated to all those people, living and dead, who are directly or indirectly responsible for the wonderful life that I am living now. INTERNATIONAL INSTITUTE OF INFORMATION TECHNOLOGY, Hyderabad, India. CERTIFICATE It is certified that the work contained in this thesis, titled “Text input methods for Indian Languages” by Sowmya V.B. (200607014), submitted in partial fulfillment for the award of the degree of Master of Science (by Research) in Computer Science & Engineering, has been carried out under my supervision and it is not submitted elsewhere for a degree. Date. Advisor: Dr. Vasudeva Varma, Associate Professor, IIIT, Hyderabad. Acknowledgements I would like to first express my gratitude to my advisor Dr Vasudeva Varma, for being with me and believing in me throughout the duration of this thesis work. His regular suggestions have been greatly useful. I thank Mr Prasad Pingali for his motivation and guidance during the initial phases of my thesis. I thank Mr Bhupal Reddy for giving me the first lessons in my research. I entered IIIT as a novice to Computer Science in general and research in particular.

Layout Analysis: Dvorak/Colemak/MTGAP/Carpalx/Workman/my own (geekhack, Ergonomics)

geekhack Community => Ergonomics => Topic started by: oneproduct on Sun, 11 January 2015, 22:10:05

Title: Layout Analysis: Dvorak/Colemak/MTGAP/Carpalx/Workman/my own

Some interesting statistics based on the layout analyzer I mentioned in this other thread: https://geekhack.org/index.php?topic=67430

The text used for this particular example was a book called "The Wasted Generation" which you can find at the Gutenberg project. I tested these layouts with a few other texts as well and the results are generally the same.

A few comments on how I value some statistics:

1. Same finger is the most critical statistic. Having to type two letters in a row using the same finger (double letters don't count, such as LL in heLLo) is the slowest thing you can do.
2. Alternation is the next most important statistic. It allows your other hand to get ready to type the next key while the first hand is pressing the first key.
3. Inward rolls are very comfortable, something I learned from using Colemak.
4. Outward rolls are generally bad. If possible try to convert them to alternation or inward rolls.
5. I think that the G and H keys (positions given relative to QWERTY) are not "free" homerow positions. In the Workman layout, he talks about the lateral movement from F to G and from J to H being worse than the vertical movement in reaching from S to W or D to E for example.
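The first two statistics in the post (same-finger bigrams and hand alternation) can be sketched in a few lines of Python. This is only an illustration, not the analyzer from the linked thread: the finger assignment is the conventional touch-typing column map for QWERTY letters, and the names `QWERTY_FINGERS`, `KEY_TO_FINGER`, and `bigram_stats` are hypothetical. Rolls are left out because they would also need each key's position within the hand.

```python
from collections import Counter

# Conventional touch-typing finger columns for the QWERTY letter keys.
# This mapping is an assumption of the sketch; the post's analyzer may
# assign keys to fingers differently.
QWERTY_FINGERS = {
    "L-pinky": "qaz", "L-ring": "wsx", "L-middle": "edc", "L-index": "rfvtgb",
    "R-index": "yhnujm", "R-middle": "ik", "R-ring": "ol", "R-pinky": "p",
}
KEY_TO_FINGER = {
    key: finger for finger, keys in QWERTY_FINGERS.items() for key in keys
}


def bigram_stats(text):
    """Return same-finger and alternation rates over the letter bigrams in text."""
    chars = text.lower()
    counts = Counter()
    for first, second in zip(chars, chars[1:]):
        if first not in KEY_TO_FINGER or second not in KEY_TO_FINGER:
            continue  # skip bigrams that contain spaces, digits, or punctuation
        counts["total"] += 1
        finger_a, finger_b = KEY_TO_FINGER[first], KEY_TO_FINGER[second]
        if finger_a[0] != finger_b[0]:
            # The labels start with "L" or "R", so differing first
            # characters mean the two keys are on different hands.
            counts["alternation"] += 1
        elif finger_a == finger_b and first != second:
            # Same finger, different keys; double letters are excluded,
            # as the post specifies.
            counts["same_finger"] += 1
    total = counts["total"] or 1  # avoid division by zero on empty input
    return {
        "bigrams": counts["total"],
        "same_finger": counts["same_finger"] / total,
        "alternation": counts["alternation"] / total,
    }


if __name__ == "__main__":
    print(bigram_stats("hello"))
```

For "hello" on QWERTY, "he" and "el" alternate hands, "ll" is a double letter and is not counted as a same-finger bigram, and "lo" uses the right ring finger twice. Swapping in another layout only requires a different key-to-finger map.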

Exploring Empirical Guidelines for Selecting Computer Assistive Technology for People with Disabilities

Exploring Empirical Guidelines for Selecting Computer Assistive Technology for People with Disabilities A thesis submitted in partial fulfillment of the requirements for the degree of Master of Science By Jennifer Border B.S., Wright State University, 2008 2011 Wright State University WRIGHT STATE UNIVERSITY SCHOOL OF GRADUATE STUDIES DATE MARCH 7, 2011 I HEREBY RECOMMEND THAT THE THESIS PREPARED UNDER MY SUPERVISION BY Jennifer Border ENTITLED Exploring Empirical Guidelines for Selecting Computer Assistive Technology for People with Disabilities BE ACCEPTED IN PARTIAL FULFILLMENT OF THE REQUIREMENTS FOR THE DEGREE OF Master of Science. Wayne Shebilske, Ph.D. Thesis Director Scott Watamaniuk, Ph.D. Graduate Program Director Committee on Final Examination John Flach, Ph.D. Chair, Department of Psychology Wayne Shebilske, Ph.D. John Flach, Ph.D. Clark Shingledecker, Ph.D. Andrew T. Hsu, Ph.D. Dean, School of Graduate Studies ii ABSTRACT Border, Jennifer. M.S., Department of Psychology, Wright State University, 2011. Exploring Empirical Guidelines for Selecting Computer Assistive Technology for People with Disabilities. Assistive technologies (AT) enable people with disabilities (PWD) who are unable to use traditional computer workstations to independently access computers. The selection process of AT is complex due to the numerous AT available and the specific needs of the user. This study examined the process to select new AT for a PWD with Arthrogryposis. In part 1, a series of two different typing sessions (typing test & journal response) were completed by three different AT (voice recognition (VRS), head tracker (HT), & brain computer interface (BCI)). In part 2 only journaling sessions using VRS & the user’s traditional typing method (touch screen) were completed. -

One-Handed Keyboards Quick Guide Created 8/05 Updated 6/06 PN, FB

One-handed Keyboards Quick Guide Created 8/05 Updated 6/06 PN, FB Although standard computer keyboards are designed for two-handed use someone with just one hand can access a computer in several ways. Standard keyboards The most straight-forward technique is simply to use a standard keyboard. This technique means you can sit down at almost any machine and use it effectively. Five Finger Typist (£45) from Inclusive Technology is a program for teaching single-handed touch typing – either left or right hand. Single handed typists may also find it easier to type on a slightly smaller Compact Keyboard, which are also easier to position on a tray or lap because they are physically smaller than standard keyboards. Single-handed Dvorak keyboard layouts The standard QWERTY layout is not designed for single-handed typing, but you can re-map the keys on a standard keyboard to use a different ‘Dvorak’ layout optimised for either left or right- handed use. Once you have re-mapped the keyboard, lever off the keys and re-position them, or use key stickers to re-label the keys. Dvorak Left-handed keyboard Dvorak layouts can be set within the Mac and Windows operating systems, or downloaded and installed for free. On Windows XP, for example, go to Start > Control Panel > Regional and Language > Languages > Details > Add, then tick Keyboard layout and choose ‘United States – Dvorak’ (for both, left or right hands). Look in the Help for your own computer, or do a search for ‘Dvorak keyboard’ on the internet for more information. A disadvantage with this technique is that you have to change the keyboard layout on every computer you use, and also carry your Dvorak keyboard with you. -

Advanced and Natural Interaction System for Motion-Impaired Users

Universitat de les Illes Balears, Departament de Ciències Matemàtiques i Informàtica. Doctoral Thesis: Advanced and natural interaction system for motion-impaired users. Cristina Suemay Manresa Yee. Supervised by: Dr. Francisco Perales López and Dr. Javier Varona Gómez. June 2009.

Dr. Francisco José Perales López, Profesor Titular de Universidad, Department of Mathematics and Computer Science, Universitat de les Illes Balears, and Dr. Javier Varona Gómez, Ramón y Cajal researcher, Department of Mathematics and Computer Science, Universitat de les Illes Balears, CERTIFY: that the dissertation titled Advanced and natural interaction system for motion-impaired users was carried out by Cristina Suemay Manresa Yee under our supervision in the Department of Mathematics and Computer Science of the Universitat de les Illes Balears, and that it constitutes the thesis submitted for the degree of Doctor in Computer Science. Palma de Mallorca, June 2009. Dr. Francisco José Perales López, thesis supervisor. Dr. Javier Varona Gómez, thesis supervisor. Cristina Suemay Manresa Yee, doctoral candidate.

To everyone who made this thesis possible.

Acknowledgements. Now that the thesis is written, I would like to thank the many people whose help and support made this long road a little sweeter. First, my family, above all my parents and my brother, because they have always been there, in everything and for everything. Moncho, he knows why. (63353178343) My friends and Ana, for never ceasing to ask when I would defend the thesis and for helping me switch off. :) Pere, Miquel, Miquel 'El Artista', Toni, Fontanet, Ángel, Manolo, José María, María José, Biel, Llorenç, Arnau, Ricardo, Margaret, Jose Luis Ortego, Simon, Victor, those I always run into in the corridor, those from the other laboratory ...

Fully in the Information and Communication Society

ATLEC Project – WP3. Contract No: 518229-LLP-1-2011-UK-LEONARDO-LMP

ATLEC: Assistive Technology Learning Through A Unified Curriculum. “ATLEC Training course”

- WP number: WP3
- Deliverable number: 3.2
- WP title: Development of curriculum, preparation of training courses and production of training materials
- Status: FINAL
- Project start date: 01/01/2012
- Project duration: 26 months
- Author: PhoenixKM

For further information related to the ATLEC project please visit: the project website (atlec-project.eu); Facebook page (facebook.com/ATLECproject); Twitter page (twitter.com/ATLEC_project/). The ATLEC (Assistive Technology Learning Through A Unified Curriculum – 518229-LLP-1-2011-1-UK-LEONARDO-LMP) project has been partially funded under the Lifelong Learning program. This publication reflects the views only of the author(s), and the Commission cannot be held responsible for any use which may be made of the information contained therein. This work is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License. July, 2013

Introduction

Studies throughout Europe under ACCESSIBLE and AEGIS (FP7 projects), and KA3 project IMPACT highlighted in their pan-European surveys with over 1000 end-users and Assistive Technology (AT) specialists that training is the core barrier towards using assistive technology (AT) by end-users. This lack of training is subsequently also recognised as the main barrier to acquiring gainful employment in a (regular) working environment. A quick assessment of the ATLEC partner countries raised the following issues: Belgium: Although there is some ICT AT training available by suppliers, and special vocational training centres for adults, it is time limited and expensive.

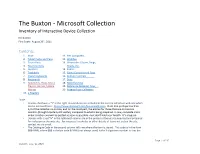

The Buxton - Microsoft Collection Inventory of Interactive Device Collection

The Buxton - Microsoft Collection: Inventory of Interactive Device Collection. Bill Buxton. First Draft: August 29th, 2011. Version: May 12, 2021.

Contents:

1. Mice
2. Tablet Pucks and Pens
3. Touch Pads
4. Touch Screens
5. Joysticks
6. Trackballs
7. Chord Keyboards
8. Keyboards
9. Handhelds, PDAs, Music Players, Games, Mobile Phones
10. e-Readers
11. Pen Computers
12. Watches
13. Wearables: Gloves, Rings, Heads, etc.
14. Pedals
15. Game Controllers & Toys
16. Remote Controls
17. Dials
18. Miscellaneous
19. Reference Material, Toys, …
20. Purging from Collection

Note:

- Devices that have a “Y” in the right-most column are included in the current collection web-site which can be accessed here: https://www.microsoft.com/buxtoncollection. Note that perhaps less than 1/3 of the collection is on-line, and for the most part, the entries for those that are on-line are minimal (though hopefully still useful), compared to what is being prepared. A new, complete site is under curation and will be posted as soon as possible – but don’t hold your breath. It’s a big job.
- Devices with a red “N” in the rightmost column are in the process of being documented and prepared for inclusion on the web site. For requests for photos or other details of items not yet on the site, contact me via email.
- The Catalogue Code in the second column tells me where the item is stored. This code is in the form BBB-NNN, where BBB is the bin code & NNN (not always used) is the 3-digit item number in that bin.

Mice (table columns: Photo, Code, Name, Year, Price, Company, Notes, Pivot?). First entry: Code M-06; Name NRC; Year 1968; Price NFS; Company National ...; Notes: Donated by Nestor Burtnyk.

Download the ISO That We Can Run Directly from the Virtual Machine

1 2 Los 1o Columna 36 productos 90 de software hardware libre y su retro GNU/Linux más ma- entrañables Juegos DATTATIPS 44 con 94 rio Historias PRIMERAS CALENDARIO 56 IMPRESIONES 98 GAMMER DEL PLANETA METRO lo 06 ultimo Carreras ALAN WAKE: 66 informáticas 100 AL FILO a demanda DE LA LOCURA Mobile 14 World Congress 2012 Generación HUMOR 72 digital 106 sin límite de edad LinkedIn: 26 Razones (originales) para sumarse al “Facebook TECLADOS de los 76 ALTERNATIVOS negocios” Tecno 32 Gadgets LEY SOPA: 84 QUÉ DICEN LOS ARTISTAS staff DIRECTORA Y JEFA DE REDACCIÓN Débora Orué COLUMNISTAS Alexis Burgos Ariel Corgatelli Juan Gutmann Javier Richarte Luis Altamiranda Magalí Sinopoli Christian Sochas Natalia Solari Franco Rivero Uriel Bederman HUMOR Daniel Paz DISEÑO EDITORIAL Y CREATIVIDAD www.americanbread.net Arte de tapa; Esteban Paniagua COMERCIALIZACIÓN DATTATEC.COM SRL Córdoba 3753, Rosario, Santa fe www.dattatec.com REDACCIÓN [email protected] DATOS DE CONTACTO Dirección comercial: [email protected] Las opiniones expresadas en los artículos son exclusiva responsabilidad de sus autores y no coinciden necesariamente con la opinión de Dattatec.com SRL. 3 4 / editorial El buscador que te entiende // Débora Orué [email protected] Ese algoritmo revolucionario que ofrece links a sitios de entidades, que vienen a ser personas, lugares relacionados a las palabras clave (o keywords) y cosas que tienen un significado específico ante ingresadas en el recuadro de búsqueda de Google, el ojo humano. Por ejemplo, el algoritmo estará en pronto perderá su frialdad matemática. O, mejor dicho, condiciones de asociar el nombre de una compañía seguirá siendo tan matemático como desde sus inicios, (digamos, Google), con sus fundadores (Larry Page pero contará con una cuota extra de comprensión. -

A Minimum Chord Stenograph Keyboard for Blind and Sighted Users

A Minimum Chord Stenograph Keyboard For Blind And Sighted Users by Zhongzhi Hu, B.A.Sc., Beijing Institute of Technology. A THESIS SUBMITTED IN PARTIAL FULFILLMENT OF THE REQUIREMENTS OF THE DEGREE OF MASTER OF APPLIED SCIENCE in THE FACULTY OF GRADUATE STUDIES, DEPARTMENT OF ELECTRICAL ENGINEERING. We accept this thesis as conforming to the required standard. THE UNIVERSITY OF BRITISH COLUMBIA, 1992. © Zhongzhi Hu, 1992.

Abstract This thesis deals with the design and testing of an experimental stenograph keyboard for blind and sighted users. The new keyboard, called the Minimum Chord Stenograph (MCS), is presented. It comprises two panels of six keys, one for each hand. Relocation of the fingers above the keys is at a minimum. A coding scheme for MCS was developed to allow exact phonemic description of the text or speech to be entered by alternate hand typing. Experiments were carried out with the MCS and with the standard Stenograph-Boswell keyboards. The time between successive movements of hands on the keyboards was precisely measured live with a 386 computer and the statistics have been compiled and analyzed. The results show that the MCS’ performance (WPM) is significantly better than the standard stenograph-Boswell; the problem of training time for the stenograph seems to be solved at least at the level of initial competence. Extrapolating from the published results for QWERTY, MCS has much better performance than QWERTY at the initial stage. In conclusion, MCS keyboard may provide an efficient alternative to the existing standard stenograph keyboard and to the QWERTY keyboard.

Single Button Keyboard for Blind People Using Machine Learning

International Research Journal of Engineering and Technology (IRJET), e-ISSN: 2395-0056, p-ISSN: 2395-0072, Volume: 08 Issue: 04 | Apr 2021, www.irjet.net. SINGLE BUTTON KEYBOARD FOR BLIND PEOPLE USING MACHINE LEARNING. Ms. V. Chinnammal (1), D. Aravindhan (2), GokulRaj (3), S. Surya (4). (1) Assistant Professor (SS), Department of Electronics and Communication Engineering, Rajalakshmi Institute of Technology, Tamil Nadu, India; (2, 3, 4) Department of Electronics and Communication, Rajalakshmi Institute of Technology, Tamil Nadu, India.

Abstract - The KEYBOARD is aimed towards the welfare of visually impaired people. The visually impaired have access to all the newest equipment made especially for them, but none has attempted better research on this issue. Hence, this paper is certain to make a revolution in its own field and ensure complete support from people of various societies. This helps the visually impaired to interact with the PC system at a maximum probability and makes it easier to speak. At the international arena this paper will certainly achieve greater heights and is predicted to be welcomed by communities for helping the blind.

... for using the remote. The user also doesn’t need to use various functional keys for different languages. The essential step for constructing this system is to create the motion tracking device, which is based on 3 major components: an accelerometer, an Arduino, and switches. The Arduino serial monitor is configured and the baud rate is set to 38400 in the Arduino IDE; the overall module then works with scikit-learn, a library that converts signals into letters through the accelerometer, and every single character ...