Real-Time Processing Digital Signal Processing

Total Page:16

File Type:pdf, Size:1020Kb

Load more

Recommended publications

-

DSP56303 Product Brief, Rev

Freescale Semiconductor DSP56303PB Product Brief Rev. 2, 2/2005 DSP56303 24-Bit Digital Signal Processor 16 6 6 3 Memory Expansion Area The DSP56303 is intended Triple X Data HI08 ESSI SCI PrograM Y Data for use in telecommunication Timer RAM RAM RAM 4096 × 24 2048 × 24 2048 × 24 applications, such as multi- bits bits bits line voice/data/ fax (default) (default) (default) processing, video Peripheral Expansion Area conferencing, audio PM_EB XM_EB PIO_EB YAB YM_EB applications, control, and Address External 18 Generation XAB Address general digital signal Unit PAB Bus Address Six-Channel DAB Switch processing. DMA Unit External 24-Bit Bus 13 Bootstrap DSP56300 Interface ROM and Inst. Core Cache Control Control DDB 24 Internal YDB External Data XDB Data Bus Bus PDB Switch Data Switch GDB EXTAL Power Clock Management Data ALU 5 Generator Program Program Program × + → XTAL Interrupt Decode Address 24 24 56 56-bit MAC JTAG PLL Two 56-bit Accumulators Controller Controller Generator 56-bit Barrel Shifter OnCE™ DE 2 MODA/IRQA MODB/IRQB RESET MODC/IRQC PINIT/NMI MODD/IRQD Figure 1. DSP56303 Block Diagram The DSP56303 is a member of the DSP56300 core family of programmable CMOS DSPs. Significant architectural features of the DSP56300 core family include a barrel shifter, 24-bit addressing, instruction cache, and DMA. The DSP56303 offers 100 million multiply-accumulates per second (MMACS) using an internal 100 MHz clock at 3.0–3.6 volts. The DSP56300 core family offers a rich instruction set and low power dissipation, as well as increasing levels of speed and power to enable wireless, telecommunications, and multimedia products. -

Low Power Asynchronous Digital Signal Processing

LOW POWER ASYNCHRONOUS DIGITAL SIGNAL PROCESSING A thesis submitted to the University of Manchester for the degree of Doctor of Philosophy in the Faculty of Science & Engineering October 2000 Michael John George Lewis Department of Computer Science 1 Contents Chapter 1: Introduction ....................................................................................14 Digital Signal Processing ...............................................................................15 Evolution of digital signal processors ....................................................17 Architectural features of modern DSPs .........................................................19 High performance multiplier circuits .....................................................20 Memory architecture ..............................................................................21 Data address generation .........................................................................21 Loop management ..................................................................................23 Numerical precision, overflows and rounding .......................................24 Architecture of the GSM Mobile Phone System ...........................................25 Channel equalization ..............................................................................28 Error correction and Viterbi decoding ...................................................29 Speech transcoding ................................................................................31 Half-rate and enhanced -

Rlsc & DSP Advanced Microprocessor System Design

Purdue University Purdue e-Pubs ECE Technical Reports Electrical and Computer Engineering 3-1-1992 RlSC & DSP Advanced Microprocessor System Design; Sample Projects, Fall 1991 John E. Fredine Purdue University, School of Electrical Engineering Dennis L. Goeckel Purdue University, School of Electrical Engineering David G. Meyer Purdue University, School of Electrical Engineering Stuart E. Sailer Purdue University, School of Electrical Engineering Glenn E. Schmottlach Purdue University, School of Electrical Engineering Follow this and additional works at: http://docs.lib.purdue.edu/ecetr Fredine, John E.; Goeckel, Dennis L.; Meyer, David G.; Sailer, Stuart E.; and Schmottlach, Glenn E., "RlSC & DSP Advanced Microprocessor System Design; Sample Projects, Fall 1991" (1992). ECE Technical Reports. Paper 302. http://docs.lib.purdue.edu/ecetr/302 This document has been made available through Purdue e-Pubs, a service of the Purdue University Libraries. Please contact [email protected] for additional information. RISC & DSP Advanced Microprocessor System Design Sample Projects, Fall 1991 John E. Fredine Dennis L. Goeckel David G. Meyer Stuart E. Sailer Glenn E. Schmottlach TR-EE 92- 11 March 1992 School of Electrical Engineering Purdue University West Lafayette, Indiana 47907 RlSC & DSP Advanced Microprocessor System Design Sample Projects, Fall 1991 John E. Fredine Dennis L. Goeckel David G. Meyer Stuart E. Sailer Glenn E. Schrnottlach School of Electrical Engineering Purdue University West Lafayette, Indiana 47907 Table of Contents Abstract ................................................................................................................... -

Introduction to Digital Signal Processors

INTRODUCTION TO Accumulator architecture DIGITAL SIGNAL PROCESSORS Memory-register architecture Prof. Brian L. Evans in collaboration with Niranjan Damera-Venkata and Magesh Valliappan Load-store architecture Embedded Signal Processing Laboratory The University of Texas at Austin Austin, TX 78712-1084 http://anchovy.ece.utexas.edu/ Outline n Signal processing applications n Conventional DSP architecture n Pipelining in DSP processors n RISC vs. DSP processor architectures n TI TMS320C6x VLIW DSP architecture n Signal and image processing applications n Signal processing on general-purpose processors n Conclusion 2 Signal Processing Applications n Low-cost embedded systems 4 Modems, cellular telephones, disk drives, printers n High-throughput applications 4 Halftoning, radar, high-resolution sonar, tomography n PC based multimedia 4 Compression/decompression of audio, graphics, video n Embedded processor requirements 4 Inexpensive with small area and volume 4 Deterministic interrupt service routine latency 4 Low power: ~50 mW (TMS320C5402/20: 0.32 mA/MIP) 3 Conventional DSP Architecture n High data throughput 4 Harvard architecture n Separate data memory/bus and program memory/bus n Three reads and one or two writes per instruction cycle 4 Short deterministic interrupt service routine latency 4 Multiply-accumulate (MAC) in a single instruction cycle 4 Special addressing modes supported in hardware n Modulo addressing for circular buffers (e.g. FIR filters) n Bit-reversed addressing (e.g. fast Fourier transforms) 4Instructions to keep the -

The DENX U-Boot and Linux Guide (DULG) for Canyonlands

The DENX U-Boot and Linux Guide (DULG) for canyonlands Table of contents: • 1. Abstract • 2. Introduction ♦ 2.1. Copyright ♦ 2.2. Disclaimer ♦ 2.3. Availability ♦ 2.4. Credits ♦ 2.5. Translations ♦ 2.6. Feedback ♦ 2.7. Conventions • 3. Embedded Linux Development Kit ♦ 3.1. ELDK Availability ♦ 3.2. ELDK Getting Help ♦ 3.3. Supported Host Systems ♦ 3.4. Supported Target Architectures ♦ 3.5. Installation ◊ 3.5.1. Product Packaging ◊ 3.5.2. Downloading the ELDK ◊ 3.5.3. Initial Installation ◊ 3.5.4. Installation and Removal of Individual Packages ◊ 3.5.5. Removal of the Entire Installation ♦ 3.6. Working with ELDK ◊ 3.6.1. Switching Between Multiple Installations ♦ 3.7. Mounting Target Components via NFS ♦ 3.8. Rebuilding ELDK Components ◊ 3.8.1. ELDK Source Distribution ◊ 3.8.2. Rebuilding Target Packages ◊ 3.8.3. Rebuilding ELDT Packages ♦ 3.9. ELDK Packages ◊ 3.9.1. List of ELDT Packages ◊ 3.9.2. List of Target Packages ♦ 3.10. Rebuilding the ELDK from Scratch ◊ 3.10.1. ELDK Build Process Overview ◊ 3.10.2. Setting Up ELDK Build Environment ◊ 3.10.3. build.sh Usage ◊ 3.10.4. Format of the cpkgs.lst and tpkgs.lst Files ♦ 3.11. Notes for Solaris 2.x Host Environment • 4. System Setup ♦ 4.1. Serial Console Access ♦ 4.2. Configuring the "cu" command ♦ 4.3. Configuring the "kermit" command ♦ 4.4. Using the "minicom" program ♦ 4.5. Permission Denied Problems ♦ 4.6. Configuration of a TFTP Server ♦ 4.7. Configuration of a BOOTP / DHCP Server ♦ 4.8. Configuring a NFS Server • 5. -

CMU DSP the Carnegie Mellon Synthesizable Digital Signal Processor Core

CMU DSP The Carnegie Mellon Synthesizable Digital Signal Processor Core Alpha Version Documentation 1.12 1999/06/10 Chris Inacio The CMU DSP Team [email protected] June 10, 1999 CONTENTS CONTENTS Contents 1 Introduction 3 1.1 Getting and Installing the Distribution . ................ 4 2 Architecture 5 2.1Overview................................ 5 2.2ArithmeticandDataLogicUnit.................... 6 2.3AddressGenerationUnit........................ 6 2.4OtherUnits............................... 7 2.4.1 Bus Switch ........................... 7 2.4.2 ProgramControlUnit..................... 7 2.5MemoryandExternalInterface..................... 7 3 File Repository 9 3.1 Availability ............................... 9 3.2VerilogSourceFiles........................... 9 3.3Documentation............................. 10 3.4TestScriptsandFiles.......................... 11 3.5MiscellaneousFiles........................... 11 4 Scripts and Tools 13 4.1 Introduction ............................... 13 4.2 Verilog PreProcessor ..................... 13 4.3 reformat ............................... 14 4.4 coff_text_dump .......................... 14 4.5 run-test ............................... 14 4.6 cp2build ............................... 16 4.7BuildingtheTools............................ 16 5 CMU DSP Testing 17 5.1 Introduction ............................... 17 5.2 Writing a new functional test . ................ 17 5.2.1 Overview............................ 17 5.2.2 Writing a new program . ................ 18 5.2.3 Motorola Simulation Traces . ............... -

(DSP) Application Development System (ADS) User's Manual

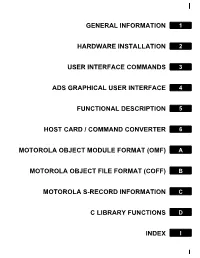

GENERAL INFORMATION 1 HARDWARE INSTALLATION 2 USER INTERFACE COMMANDS 3 ADS GRAPHICAL USER INTERFACE 4 FUNCTIONAL DESCRIPTION 5 HOST CARD / COMMAND CONVERTER 6 MOTOROLA OBJECT MODULE FORMAT (OMF) A MOTOROLA OBJECT FILE FORMAT (COFF) B MOTOROLA S-RECORD INFORMATION C C LIBRARY FUNCTIONS D INDEX I 1 GENERAL INFORMATION 2 HARDWARE INSTALLATION 3 USER INTERFACE COMMANDS 4 ADS GRAPHICAL USER INTERFACE 5 FUNCTIONAL DESCRIPTION 6 HOST CARD / COMMAND CONVERTER A MOTOROLA OBJECT MODULE FORMAT (OMF) B MOTOROLA OBJECT FILE FORMAT (COFF) C MOTOROLA S-RECORD INFORMATION D C LIBRARY FUNCTIONS I INDEX DIGITAL SIGNAL PROCESSOR (DSP) Application Development System (ADS) User’s Manual PRELIMINARY Motorola, Incorporated Semiconductor Products Sector Wireless Signal Processing Division 6501 William Cannon Drive West Austin, TX 78735-8598 This document (and other documents) can be viewed on the World Wide Web at http://www.motorola-dsp.com. OnCE is a trademark of Motorola, Inc. MOTOROLA INC., 1989, 1997 Order this document by DSPADSUM/AD Motorola reserves the right to make changes without further notice to any products herein to improve reliability, function, or design. Motorola does not assume any liability arising out of the application or use of any product or circuit described herein; neither does it convey any license under its patent rights nor the rights of others. Motorola products are not authorized for use as components in life support devices or systems intendedPRELIMINARY for surgical implant into the body or intended to support or sustain life. Buyer agrees to notify Motorola of any such intended end use whereupon Motorola shall determine availability and suitability of its product or products for the use intended. -

Fixed-Point C Compiler for Tms320c50 Digital Signal Processor

FIXED-POINT C COMPILER FOR TMS320C50 DIGITAL SIGNAL PROCESSOR Jiyang liang Wonyong Sung School of Electrical Engineering Seoul National University, KOREA jiyang@hdtv .snu.ac. kr and wysung@dsp, snu. ac. kr ABSTRACT 2. FIXED-POINT ARITHMETIC RULES A fixed-point C compiler is developed for convenient and ef- An integer variable or a constant in C language consists of, ficient programming of TMS320C50 fixed-point digital sig- usually, 16 bits, and the LSB(Least Significant Bit) has the nal processor. This compiler supports the ‘fix’ data type weight of one for the conversion to or from the floating-point that can have an individual integer word-length according data type. This can bring overflows or unacceptable quanti- to the range of a variable. It can add or subtract two data zation errors when a floating-point digital signal processing having different integer word-lengths by automatically in- program is converted to an integer version. Therefore, it is serting shift operations. The accuracy of fixed-point mul- necessary to assign a different weight to the LSB of a vari- tiply operation is significantly increased by storing the up- able or a constant [7] [8]. For this purpose, we employed per part of the multiplied double-precision result instead of a fixed-point data type that can have an individual integer keeping the lower part as conducted in the integer multipli- word-length as follows: cation. Several target specific code optimization techniques are employed to improve the compiler efficiency. The empir- fix(integer-wordlength) variable-name; ical results show that the execution speed of a fixed-point C program is much, about an order of magnitude, faster than that of a floating-point C program in a fixed-point digital Note that the range (R)that a variable can represent and signal processor. -

Debug Interface for Clone of 56000 DSP Andreas Nilsson

Debug Interface for Clone of 56000 DSP Examensarbete utfört i Elektroniksystem av Andreas Nilsson LITH-ISY-EX-ET--07/0319--SE Linköping 2007 Debug Interface for Clone of 56000 DSP Examensarbete utfört i Elektroniksystem vid Linköpings tekniska högskola av Andreas Nilsson LITH-ISY-EX-ET--07/0319--SE Handledare: Thomas Johansson Examinator: Kent Palmkvist Linköping 2007-06-04 Presentationsdatum Institution och avdelning Institutionen för systemteknik 2007-06-04 Elektroniksystem Publiceringsdatum (elektronisk version) Department of Electrical Engineering Electronics Systems 2007-06-11 Språk Typ av publikation ISBN (licentiatavhandling) Svenska Licentiatavhandling ISRN LITH-ISY-EX-ET--07/0319--SE X Annat (ange nedan) X Examensarbete C-uppsats Serietitel (licentiatavhandling) D-uppsats Engelska/English Rapport Antal sidor Annat (ange nedan) Serienummer/ISSN (licentiatavhandling) 42 URL för elektronisk version http://www.ep.liu.se Publikationens titel Debug interface for clone of 56000 DSP Författare Andreas Nilsson Sammanfattning The scope for this thesis was to design a debug interface for a DSP (digital signal processor). The DSP is a research version of a Motorola 56000 that is designed for a project on asynchronous processor and for use in education. The DSP and debug interface are controlled via a standard PC with RS232 interface equipped with Linux operation system. In the project 4 blocks has been designed: The first block can set the DSP core in debug mode or run mode. The second block sends a debug instruction to the DSP core, these debug instructions were prerequisite to the project. The third block enable read and write connection to the memory buses between the DSP core and the three memory blocks. -

Motorola DSP56000 Family Optimizing C Compiler User’S Manual, Release 6.3

Motorola DSP56000 Family Optimizing C Compiler User’s Manual, Release 6.3 DSP56KCCUM/D Document Rev. 2.0, 07/1999 Suite56, OnCe, and MFAX are trademarks of Motorola, Inc. Motorola reserves the right to make changes without further notice to any products herein. Motorola makes no warranty, representation or guarantee regarding the suitability of its products for any particular purpose, nor does Motorola assume any liability arising out of the application or use of any product or circuit, and specifically disclaims any and all liability, including without limitation consequential or incidental damages. “Typical” parameters which may be provided in Motorola data sheets and/or specifications can and do vary in different applications and actual performance may vary over time. All operating parameters, including “Typicals” must be validated for each customer application by customer’s technical experts. Motorola does not convey any license under its patent rights nor the rights of others. Motorola products are not designed, intended, or authorized for use as components in systems intended for surgical implant into the body, or other applications intended to support life, or for any other application in which the failure of the Motorola product could create a situation where personal injury or death may occur. Should Buyer purchase or use Motorola products for any such unintended or unauthorized application, Buyer shall indemnify and hold Motorola and its officers, employees, subsidiaries, affiliates, and distributors harmless against all claims, costs, damages, and expenses, and reasonable attorney fees arising out of, directly or indirectly, any claim of personal injury or death associated with such unintended or unauthorized use, even if such claim alleges that Motorola was negligent regarding the design or manufacture of the part. -

DSP Microprocessor – Advances and Automotive Applications

DSP 1 Subra Ganesan Professor, Computer Science and Engineering Associate Director, Product Development and Manufacturing Center, Oakland University, Rochester, MI 48309 Email: [email protected] Topics Covered: 1. Introduction to DSP Processors 2. Fixed Point DSP- c24x 3. Floating Point DSP- C6711 4. Code Composer Studio 5. DSP/BIOS for C6711 6. External Memory Interface for C6711 7. Interrupt – C6711 8. Applications DSP Microprocessor – Advances and Automotive Applications • Advances in Circuit Technology, Architecture, Algorithms and VLSI design techniques have contributed to high performance Digital Signal Processing(DSP) microprocessors and to multitude of novel applications of DSP chips. • DSP processors are RISC based which have fast arithmetic units, on chip memory, analog interface, serial ports, timers, counters, facilities for inter processor communications and other special features. The Microprocessor overview 1949 Transistors 1958 Integrated Circuits 1961 ICs IN Quality 1964 Small Scale IC(SSI) Gates 1968 Medium Scale IC(MSI) Registers 1971 Large Scale IC(LSI), Memory, CPU 1972 8 BIT MICROPROCESSORS 1973 16 BIT MICROPROCESSORS 1982 32 BIT MICROPROCESSORS 1984 DSP MICROPROCESSORS – I GENERATION 1986 DSP MICROPROCESSORS – II GENERATION 1988 DSP MICROPROCESSORS – III GENERATION 1989 RISC MICROPROCESSORS – II QUALITY 1990 MISC MINIMUM INSTRUSTION SET MICROPROCESSOR MICROPROCESSOR OVERVIEW Microprocessor Number of Performance Number of transistors Instructions 4 Bit Intel 4004 2300 45 1971 68000 70000 0.5 MIPS 80 Different 14 address mode Size B,W,L TMS 320C80 32 2 Billion bit RISC operations per second [BOPs] INTRODUCTION TO DSP MICROPROCESSORS DSP micros are reduced-instruction-set computers optimized for the fastest possible execution of the following instructions • Addition • Subtraction • Multiplication • Shifting Single cycle multiplication and shifting using ARRAY multiplier and barrel (or combination) shifter. -

The Design of a Custom 32-Bit SIMD Enhanced Digital Signal Processor

Rochester Institute of Technology RIT Scholar Works Theses 12-2017 The Design of a Custom 32-Bit SIMD Enhanced Digital Signal Processor Shashank Simha [email protected] Follow this and additional works at: https://scholarworks.rit.edu/theses Recommended Citation Simha, Shashank, "The Design of a Custom 32-Bit SIMD Enhanced Digital Signal Processor" (2017). Thesis. Rochester Institute of Technology. Accessed from This Master's Project is brought to you for free and open access by RIT Scholar Works. It has been accepted for inclusion in Theses by an authorized administrator of RIT Scholar Works. For more information, please contact [email protected]. The Design of a Custom 32-bit SIMD Enhanced Digital Signal Processor by Shashank Simha Graduate Paper Submitted in partial fulfillment of the requirements for the degree of Master of Science in Electrical Engineering Approved by: Mr. Mark A. Indovina, Lecturer Graduate Research Advisor, Department of Electrical and Microelectronic Engineering Dr. Sohail A. Dianat, Professor Department Head, Department of Electrical and Microelectronic Engineering Department of Electrical and Microelectronic Engineering Kate Gleason College of Engineering Rochester Institute of Technology Rochester, New York December 2017 To my family and friends, for all of their endless love, support, and encouragement throughout my career at Rochester Institute of Technology Declaration I hereby declare that except where specific reference is made to the work of others, the contents of this paper are original and have not been submitted in whole or in part for consideration for any other degree or qualification in this, or any other University. This paper is the result of my own work and includes nothing which is the outcome of work done in collaboration, except where specifically indicated in the text.