Unit 21 Student's T Distribution in Hypotheses Testing

Total Page:16

File Type:pdf, Size:1020Kb

Recommended publications

Hypothesis Testing and Likelihood Ratio Tests

Hypothesis testing and likelihood ratio tests. We will adopt the following model for observed data. The distribution of $Y = (Y_1, \ldots, Y_n)$ is considered known except for some parameter $\theta$, which may be a vector $\theta = (\theta_1, \ldots, \theta_k)$; $\theta \in \Theta$, the parameter space. The parameter space will usually be an open set. If $Y$ is a continuous random variable, its probability density function (pdf) will be denoted $f(y;\theta)$. If $Y$ is discrete then $f(y;\theta)$ represents the probability mass function (pmf); $f(y;\theta) = P_\theta(Y = y)$.

A statistical hypothesis is a statement about the value of $\theta$. We are interested in testing the null hypothesis $H_0: \theta \in \Theta_0$ versus the alternative hypothesis $H_1: \theta \in \Theta_1$, where $\Theta_0$ and $\Theta_1 \subset \Theta$. Naturally $\Theta_0 \cap \Theta_1 = \emptyset$, but we need not have $\Theta_0 \cup \Theta_1 = \Theta$. A hypothesis test is a procedure for deciding between $H_0$ and $H_1$ based on the sample data. It is equivalent to a critical region: a critical region is a set $C \subset \mathbb{R}^n$ such that if $y = (y_1, \ldots, y_n) \in C$, $H_0$ is rejected. Typically $C$ is expressed in terms of the value of some test statistic, a function of the sample data. For example, we might have $C = \{(y_1, \ldots, y_n) : \frac{\bar{y} - \mu_0}{s/\sqrt{n}} \ge 3.324\}$. The number 3.324 here is called a critical value of the test statistic $\frac{\bar{Y} - \mu_0}{S/\sqrt{n}}$. If $y \in C$ but $\theta \in \Theta_0$, we have committed a Type I error.
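The critical-region rule in this excerpt can be sketched in a few lines of Python. This is only an illustration: the function names and the sample values are hypothetical, and the critical value 3.324 is taken from the excerpt without knowing which sample size or significance level it belongs to.

```python
import math
import statistics


def t_statistic(sample, mu0):
    """Test statistic (ybar - mu0) / (s / sqrt(n)) from the excerpt."""
    n = len(sample)
    ybar = statistics.mean(sample)
    s = statistics.stdev(sample)  # sample standard deviation (n - 1 divisor)
    return (ybar - mu0) / (s / math.sqrt(n))


def in_critical_region(sample, mu0, critical_value=3.324):
    """True when the sample lies in C, i.e. when H0 is rejected."""
    return t_statistic(sample, mu0) >= critical_value


# Hypothetical data, used only to exercise the rule.
sample = [5.1, 4.9, 5.3, 5.0, 5.2]
print(in_critical_region(sample, mu0=5.0))  # statistic is small: do not reject
print(in_critical_region(sample, mu0=4.0))  # statistic is large: reject
```

If the data really were generated with the mean equal to `mu0` and the function still returned `True`, that rejection would be a Type I error in the sense defined above.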

Lecture 22: Bivariate Normal Distribution Distribution

Lecture 22: Bivariate Normal Distribution. Statistics 104, Colin Rundel, April 11, 2012. 6.5 Conditional Distributions.

General Bivariate Normal. Let $Z_1, Z_2 \sim N(0, 1)$, which we will use to build a general bivariate normal distribution.

$$f(z_1, z_2) = \frac{1}{2\pi} \exp\left[-\frac{1}{2}(z_1^2 + z_2^2)\right]$$

We want to transform these unit normal distributions to have the following arbitrary parameters: $\mu_X, \mu_Y, \sigma_X, \sigma_Y, \rho$.

$$X = \sigma_X Z_1 + \mu_X$$
$$Y = \sigma_Y\left[\rho Z_1 + \sqrt{1 - \rho^2}\, Z_2\right] + \mu_Y$$

General Bivariate Normal - Marginals. First, let's examine the marginal distributions of $X$ and $Y$:

$$X = \sigma_X Z_1 + \mu_X = \sigma_X N(0, 1) + \mu_X = N(\mu_X, \sigma_X^2)$$
$$Y = \sigma_Y\left[\rho Z_1 + \sqrt{1 - \rho^2}\, Z_2\right] + \mu_Y = \sigma_Y\left[\rho N(0, 1) + \sqrt{1 - \rho^2}\, N(0, 1)\right] + \mu_Y = \sigma_Y\left[N(0, \rho^2) + N(0, 1 - \rho^2)\right] + \mu_Y = \sigma_Y N(0, 1) + \mu_Y = N(\mu_Y, \sigma_Y^2)$$

General Bivariate Normal - Cov/Corr. Second, we can find $\mathrm{Cov}(X, Y)$ and $\rho(X, Y)$:

$$\mathrm{Cov}(X, Y) = E[(X - E(X))(Y - E(Y))] = E\left[(\sigma_X Z_1 + \mu_X - \mu_X)(\sigma_Y[\rho Z_1 + \sqrt{1 - \rho^2}\, Z_2] + \mu_Y - \mu_Y)\right] = E\left[(\sigma_X Z_1)(\sigma_Y[\rho Z_1 + \sqrt{1 - \rho^2}\, Z_2])\right] = \sigma_X \sigma_Y E\left[\rho Z_1^2 + \sqrt{1 - \rho^2}\, Z_1 Z_2\right] = \sigma_X \sigma_Y \rho E[Z_1^2] = \sigma_X \sigma_Y \rho$$
$$\rho(X, Y) = \frac{\mathrm{Cov}(X, Y)}{\sigma_X \sigma_Y} = \rho$$

General Bivariate Normal - RNG. Consequently, if we want to generate a Bivariate Normal random variable

Multivariate Change of Variables. Let $X_1, \ldots, X_n$ have a continuous joint distribution with pdf $f$ defined on $S$.
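The transformation from two independent standard normals to a correlated pair can be checked numerically. The sketch below uses only the Python standard library; the function names, the seed and the parameter values are assumptions chosen for illustration, not part of the lecture.

```python
import math
import random


def bivariate_normal(mu_x, mu_y, sigma_x, sigma_y, rho, rng):
    """Build (X, Y) from two independent N(0, 1) draws Z1 and Z2."""
    z1 = rng.gauss(0.0, 1.0)
    z2 = rng.gauss(0.0, 1.0)
    x = sigma_x * z1 + mu_x
    y = sigma_y * (rho * z1 + math.sqrt(1.0 - rho ** 2) * z2) + mu_y
    return x, y


def sample_correlation(pairs):
    """Pearson correlation of a list of (x, y) pairs."""
    n = len(pairs)
    mean_x = sum(x for x, _ in pairs) / n
    mean_y = sum(y for _, y in pairs) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in pairs)
    sxx = sum((x - mean_x) ** 2 for x, _ in pairs)
    syy = sum((y - mean_y) ** 2 for _, y in pairs)
    return sxy / math.sqrt(sxx * syy)


rng = random.Random(0)  # fixed seed so the run is repeatable
pairs = [bivariate_normal(1.0, -2.0, 2.0, 0.5, 0.6, rng) for _ in range(20000)]
r = sample_correlation(pairs)
print(round(r, 2))  # should land close to the requested rho = 0.6
```

With 20,000 draws the sample correlation should sit within a few hundredths of the requested `rho`, which is what the covariance derivation predicts.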

Applied Biostatistics: Mean and Standard Deviation

Health Sciences M.Sc. Programme, Applied Biostatistics: Mean and Standard Deviation.

The mean. The median is not the only measure of central value for a distribution. Another is the arithmetic mean or average, usually referred to simply as the mean. This is found by taking the sum of the observations and dividing by their number. The mean is often denoted by a little bar over the symbol for the variable, e.g. $\bar{x}$. The sample mean has much nicer mathematical properties than the median and is thus more useful for the comparison methods described later. The median is a very useful descriptive statistic, but not much used for other purposes.

Median, mean and skewness. The sum of the 57 FEV1s is 231.51 and hence the mean is 231.51/57 = 4.06. This is very close to the median, 4.1, so the median is within 1% of the mean. This is not so for the triglyceride data. The median triglyceride is 0.46 but the mean is 0.51, which is higher. The median is 10% away from the mean. If the distribution is symmetrical the sample mean and median will be about the same, but in a skew distribution they will not. If the distribution is skew to the right, as for serum triglyceride, the mean will be greater; if it is skew to the left, the median will be greater. This is because the values in the tails affect the mean but not the median. Figure 1 shows the positions of the mean and median on the histogram of triglyceride.
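A short Python sketch of the arithmetic in this excerpt. The FEV1 sum and count are taken from the text; the right-skewed sample is hypothetical, invented only to show the mean being pulled above the median by a long right tail.

```python
import statistics

# Mean of the 57 FEV1 measurements, from the sum quoted in the text.
fev1_mean = 231.51 / 57
print(round(fev1_mean, 2))  # 4.06

# Hypothetical right-skewed sample: one large value stretches the right tail.
skewed = [0.2, 0.3, 0.4, 0.5, 0.6, 0.9, 2.0]
skew_mean = statistics.mean(skewed)
skew_median = statistics.median(skewed)
print(skew_mean > skew_median)  # True: the tail affects the mean, not the median
```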

Data 8 Final Stats Review

Data 8 Final Stats review

I. Hypothesis Testing

Purpose: To answer a question about a process or the world by testing two hypotheses, a null and an alternative. Usually the null hypothesis makes a statement that “the world/process works this way”, and the alternative hypothesis says “the world/process does not work that way”.

Examples: Null: “The customer was not cheating: his chances of winning and losing were like random tosses of a fair coin, with a 50% chance of winning and a 50% chance of losing. Any variation from what we expect is due to chance variation.” Alternative: “The customer was cheating: his chances of winning were something other than 50%”.

Pro tip: You must be very precise about chances in your hypotheses. Hypotheses such as “the customer cheated” or “Their chances of winning were normal” are vague and might be considered incorrect, because you don’t state the exact chances associated with the events.

Pro tip: The null hypothesis should also explain differences in the data. For example, if your hypothesis stated that the coin was fair, then why did you get 70 heads out of 100 flips? Since it’s possible to get that many (though not expected), your null hypothesis should also contain a statement along the lines of “Any difference in outcome from what we expect is due to chance variation”.

Steps:
1) Precisely state your null and alternative hypotheses.
2) Decide on a test statistic (think of it as a general formula) to help you either reject or fail to reject the null hypothesis.
• If your data is categorical, a good test statistic might be the Total Variation Distance (TVD) between your sample and the distribution it was drawn from.
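The coin example and the TVD statistic mentioned in the steps can be sketched as follows. The function names are hypothetical, and the exact binomial calculation is a stand-in chosen here for reproducibility; the course itself may well use simulation instead.

```python
import math


def total_variation_distance(p, q):
    """Half the sum of absolute differences between two categorical distributions."""
    return 0.5 * sum(abs(a - b) for a, b in zip(p, q))


def two_sided_binomial_p_value(heads, flips, p_null=0.5):
    """Chance, under the null, of a head count at least as far from expected as observed."""
    expected = flips * p_null
    observed_deviation = abs(heads - expected)
    total = 0.0
    for k in range(flips + 1):
        if abs(k - expected) >= observed_deviation:
            total += math.comb(flips, k) * p_null ** k * (1 - p_null) ** (flips - k)
    return total


# 70 heads out of 100 flips, tested against a fair coin.
tvd = total_variation_distance([0.7, 0.3], [0.5, 0.5])
p_value = two_sided_binomial_p_value(70, 100)
print(tvd, p_value)
```

A very small p-value here says that 70 heads is possible under a fair coin but rare, which is why the null hypothesis has to attribute any difference to chance variation explicitly.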

Use of Statistical Tables

TUTORIAL | SCOPE: USE OF STATISTICAL TABLES. Lucy Radford, Jenny V Freeman and Stephen J Walters introduce three important statistical distributions: the standard Normal, t and Chi-squared distributions.

Previous tutorials have looked at hypothesis testing [1] and basic statistical tests [2–4]. As part of the process of statistical hypothesis testing, a test statistic is calculated and compared to a hypothesised critical value and this is used to obtain a P-value. This P-value is then used to decide whether the study results are statistically significant or not. This tutorial will explain how statistical tables are used to link test statistics to P-values. It introduces tables for three important statistical distributions (the standard Normal, t and Chi-squared distributions) and explains how to use them with the help of some simple examples.

STANDARD NORMAL DISTRIBUTION. The Normal distribution is widely used in statistics and has been discussed in detail previously [5]. As the mean of a Normally distributed variable can take any value (−∞ to ∞) and the standard deviation any positive value (0 to ∞), there are an infinite number of possible Normal distributions.

TABLE 1. Extract from two-tailed standard Normal table. Values tabulated are P-values corresponding to particular cut-offs and are for z values calculated to two decimal places.

| z | 0.00 | 0.01 | 0.02 | 0.03 | 0.04 | 0.05 | 0.06 | 0.07 | 0.08 | 0.09 |
|---|---|---|---|---|---|---|---|---|---|---|
| 0.00 | 1.0000 | 0.9920 | 0.9840 | 0.9761 | 0.9681 | 0.9601 | 0.9522 | 0.9442 | 0.9362 | 0.9283 |
| 0.10 | 0.9203 | 0.9124 | 0.9045 | 0.8966 | 0.8887 | 0.8808 | 0.8729 | 0.8650 | 0.8572 | 0.8493 |
| 0.20 | 0.8415 | 0.8337 | 0.8259 | 0.8181 | 0.8103 | 0.8026 | 0.7949 | 0.7872 | 0.7795 | 0.7718 |
| 0.30 | 0.7642 | 0.7566 | 0.7490 | 0.7414 | 0.7339 | 0.7263 | 0.7188 | 0.7114 | 0.7039 | 0.6965 |
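The entries of a two-tailed standard Normal table can be reproduced from the complementary error function, since the two-tailed P-value for a cut-off z equals erfc(|z|/√2). The sketch below is an illustration with a hypothetical function name; it is not how the tutorial's authors produced their table.

```python
import math


def two_tailed_p(z):
    """Two-tailed standard Normal P-value: P(|Z| >= |z|)."""
    return math.erfc(abs(z) / math.sqrt(2.0))


# Reproduce a few cells: row 0.10 / column 0.00, row 0.20 / column 0.05,
# and row 0.30 / column 0.00.
for z in (0.10, 0.25, 0.30):
    print(z, round(two_tailed_p(z), 4))
```

To read the table by hand, split z into its first decimal place (the row) and its second decimal place (the column); z = 0.25 is therefore row 0.20, column 0.05.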

1. How Different Is the T Distribution from the Normal?

Statistics 101–106, Lecture 7 (20 October 98), © David Pollard. Read M&M §7.1 and §7.2, ignoring starred parts. Reread M&M §3.2. The effects of estimated variances on normal approximations. t-distributions. Comparison of two means: pooling of estimates of variances, or paired observations.

In Lecture 6, when discussing comparison of two Binomial proportions, I was content to estimate unknown variances when calculating statistics that were to be treated as approximately normally distributed. You might have worried about the effect of variability of the estimate. W. S. Gosset (“Student”) considered a similar problem in a very famous 1908 paper, where the role of Student’s t-distribution was first recognized. Gosset discovered that the effect of estimated variances could be described exactly in a simplified problem where $n$ independent observations $X_1, \ldots, X_n$ are taken from a normal distribution, $N(\mu, \sigma)$. The sample mean, $\bar{X} = (X_1 + \ldots + X_n)/n$, has a $N(\mu, \sigma/\sqrt{n})$ distribution. The random variable

$$Z = \frac{\bar{X} - \mu}{\sigma/\sqrt{n}}$$

has a standard normal distribution. If we estimate $\sigma^2$ by the sample variance, $s^2 = \sum_i (X_i - \bar{X})^2/(n - 1)$, then the resulting statistic,

$$T = \frac{\bar{X} - \mu}{s/\sqrt{n}}$$

no longer has a normal distribution. It has a t-distribution on $n - 1$ degrees of freedom.

Remark. I have written $T$, instead of the $t$ used by M&M page 505. I find it causes confusion that $t$ refers to both the name of the statistic and the name of its distribution. As you will soon see, the estimation of the variance has the effect of spreading out the distribution a little beyond what it would be if $\sigma$ were used.
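The spreading effect described in the remark can be seen in a small simulation. This is a sketch under stated assumptions: the sample size n = 5, the cut-off 1.96, the seed and the function name are all choices made here for illustration, not values from the lecture.

```python
import math
import random
import statistics


def tail_fractions(n, reps, mu=0.0, sigma=1.0, cutoff=1.96, seed=0):
    """Fraction of simulated samples with |Z| > cutoff and with |T| > cutoff."""
    rng = random.Random(seed)
    z_hits = 0
    t_hits = 0
    for _ in range(reps):
        xs = [rng.gauss(mu, sigma) for _ in range(n)]
        xbar = statistics.fmean(xs)
        s = statistics.stdev(xs)  # estimate of sigma with n - 1 divisor
        z = (xbar - mu) / (sigma / math.sqrt(n))  # uses the true sigma
        t = (xbar - mu) / (s / math.sqrt(n))      # uses the estimate s
        z_hits += abs(z) > cutoff
        t_hits += abs(t) > cutoff
    return z_hits / reps, t_hits / reps


z_frac, t_frac = tail_fractions(n=5, reps=10000)
print(z_frac, t_frac)  # T exceeds the cut-off noticeably more often than Z
```

For Z the fraction should be near 5%, while for T with only 4 degrees of freedom it is markedly larger, which is the extra spread caused by estimating the variance.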

8.5 Testing a Claim About a Standard Deviation Or Variance

8.5 Testing a Claim about a Standard Deviation or Variance

Testing Claims about a Population Standard Deviation σ or a Population Variance σ². Uses the chi-squared distribution from section 7-4.

→ Requirements:
1. The sample is a simple random sample
2. The population has a normal distribution

→ Test Statistic for Testing a Claim about σ or σ²: $\chi^2 = \frac{(n - 1)s^2}{\sigma^2}$, where n = sample size, s = sample standard deviation, σ = population standard deviation, s² = sample variance, σ² = population variance

→ P-values and Critical Values: Use table A-4 with df = n – 1 for the number of degrees of freedom
*Remember that table A-4 is based on cumulative areas from the right

→ Properties of the Chi-Square Distribution:
1. All values of χ² are nonnegative and the distribution is not symmetric
2. There is a different χ² distribution for each number of degrees of freedom
3. The critical values are found in table A-4 (based on cumulative areas from the right)
--locate the row corresponding to the appropriate number of degrees of freedom (df = n – 1)
--the significance level is used to determine the correct column
--Right-tailed test: Because the area to the right of the critical value is 0.05, locate 0.05 at the top of table A-4
--Left-tailed test: With a left-tailed area of 0.05, the area to the right of the critical value is 0.95 so locate 0.95 at the top of table A-4
--Two-tailed test: Divide the significance level of 0.05 between the left and right tails, so the areas to the right of the two critical values are 0.975 and 0.025.
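The test statistic and the column-lookup rule for a right-cumulative table can be sketched as below. The function names and the numbers passed to them are hypothetical; the critical values themselves still have to be read from table A-4, which is not reproduced here.

```python
import math


def chi_square_statistic(n, s, sigma0):
    """(n - 1) s^2 / sigma0^2, where sigma0 is the claimed population standard deviation."""
    return (n - 1) * s ** 2 / sigma0 ** 2


def right_tail_areas(alpha, tail):
    """Column headings to look up in a table of cumulative areas from the right."""
    if tail == "right":
        return [alpha]
    if tail == "left":
        return [1 - alpha]
    if tail == "two":
        return [1 - alpha / 2, alpha / 2]
    raise ValueError("tail must be 'right', 'left' or 'two'")


# Hypothetical example: n = 10 observations, s = 2.0, claimed sigma = 1.5.
stat = chi_square_statistic(n=10, s=2.0, sigma0=1.5)
df = 10 - 1
print(stat, df, right_tail_areas(0.05, "two"))
```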

A Study of Non-Central Skew T Distributions and Their Applications in Data Analysis and Change Point Detection

A STUDY OF NON-CENTRAL SKEW T DISTRIBUTIONS AND THEIR APPLICATIONS IN DATA ANALYSIS AND CHANGE POINT DETECTION. Abeer M. Hasan. A Dissertation Submitted to the Graduate College of Bowling Green State University in partial fulfillment of the requirements for the degree of DOCTOR OF PHILOSOPHY, August 2013. Committee: Arjun K. Gupta, Co-advisor; Wei Ning, Advisor; Mark Earley, Graduate Faculty Representative; Junfeng Shang. Copyright © August 2013 Abeer M. Hasan. All rights reserved.

ABSTRACT. Over the past three decades there has been a growing interest in searching for distribution families that are suitable to analyze skewed data with excess kurtosis. The search started with numerous papers on the skew normal distribution. Multivariate t distributions started to catch attention shortly after the development of the multivariate skew normal distribution. Many researchers proposed alternative methods to generalize the univariate t distribution to the multivariate case. Recently, the skew t distribution started to become popular in research. Skew t distributions provide more flexibility and better ability to accommodate long-tailed data than skew normal distributions. In this dissertation, a new non-central skew t distribution is studied and its theoretical properties are explored. Applications of the proposed non-central skew t distribution in data analysis and model comparisons are studied. An extension of our distribution to the multivariate case is presented and properties of the multivariate non-central skew t distribution are discussed. We also discuss the distribution of quadratic forms of the non-central skew t distribution. In the last chapter, the change point problem of the non-central skew t distribution is discussed under different settings.

1 One Parameter Exponential Families

1 One parameter exponential families

The world of exponential families bridges the gap between the Gaussian family and general distributions. Many properties of Gaussians carry through to exponential families in a fairly precise sense.
• In the Gaussian world, there are exact small sample distributional results (i.e. $t$, $F$, $\chi^2$).
• In the exponential family world, there are approximate distributional results (i.e. deviance tests).
• In the general setting, we can only appeal to asymptotics.

A one-parameter exponential family, $\mathcal{F}$, is a one-parameter family of distributions of the form

$$P_\eta(dx) = \exp(\eta \cdot t(x) - \Lambda(\eta))\, P_0(dx)$$

for some probability measure $P_0$. The parameter $\eta$ is called the natural or canonical parameter and the function $\Lambda$ is called the cumulant generating function, and is simply the normalization needed to make

$$f_\eta(x) = \frac{dP_\eta}{dP_0}(x) = \exp(\eta \cdot t(x) - \Lambda(\eta))$$

a proper probability density. The random variable $t(X)$ is the sufficient statistic of the exponential family. Note that $P_0$ does not have to be a distribution on $\mathbb{R}$, but these are of course the simplest examples.

1.0.1 A first example: Gaussian with linear sufficient statistic

Consider the standard normal distribution

$$P_0(A) = \int_A \frac{e^{-z^2/2}}{\sqrt{2\pi}}\, dz$$

and let $t(x) = x$. Then, the exponential family is

$$P_\eta(dx) \propto \frac{e^{\eta \cdot x - x^2/2}}{\sqrt{2\pi}}\, dx$$

and we see that $\Lambda(\eta) = \eta^2/2$.

```python
import numpy as np
import matplotlib.pyplot as plt

# Plot the cumulant generating function Lambda(eta) = eta^2 / 2 on [-2, 2].
eta = np.linspace(-2, 2, 101)
CGF = eta**2 / 2.
plt.plot(eta, CGF)
A = plt.gca()
A.set_xlabel(r'$\eta$', size=20)
A.set_ylabel(r'$\Lambda(\eta)$', size=20)
f = plt.gcf()
```

Thus, the exponential family in this setting is the collection $\mathcal{F} = \{N(\eta, 1) : \eta \in \mathbb{R}\}$.

1.0.2 Normal with quadratic sufficient statistic on $\mathbb{R}^d$

As a second example, take $P_0 = N(0, I_{d \times d})$, i.e.

Two-Sample T-Tests Assuming Equal Variance

PASS Sample Size Software (NCSS.com), Chapter 422: Two-Sample T-Tests Assuming Equal Variance.

Introduction. This procedure provides sample size and power calculations for one- or two-sided two-sample t-tests when the variances of the two groups (populations) are assumed to be equal. This is the traditional two-sample t-test (Fisher, 1925). The assumed difference between means can be specified by entering the means for the two groups and letting the software calculate the difference or by entering the difference directly. The design corresponding to this test procedure is sometimes referred to as a parallel-groups design. This design is used in situations such as the comparison of the income level of two regions, the nitrogen content of two lakes, or the effectiveness of two drugs. There are several statistical tests available for the comparison of the center of two populations. This procedure is specific to the two-sample t-test assuming equal variance. You can examine the sections below to identify whether the assumptions and test statistic you intend to use in your study match those of this procedure, or if one of the other PASS procedures may be more suited to your situation.

Other PASS Procedures for Comparing Two Means or Medians. Procedures in PASS are primarily built upon the testing methods, test statistic, and test assumptions that will be used when the analysis of the data is performed. You should check to identify that the test procedure described below in the Test Procedure section matches your intended procedure. If your assumptions or testing method are different, you may wish to use one of the other two-sample procedures available in PASS.
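For orientation, the equal-variance two-sample t statistic that this chapter plans sample sizes for can be sketched as follows. The excerpt does not show the formula, so the standard pooled-variance form is assumed here; the function name and the two small groups are hypothetical, and none of this uses or imitates the PASS software itself.

```python
import math
import statistics


def pooled_t_statistic(group_a, group_b):
    """Two-sample t statistic with a pooled variance estimate, plus its degrees of freedom."""
    n1, n2 = len(group_a), len(group_b)
    var1 = statistics.variance(group_a)  # sample variances (n - 1 divisor)
    var2 = statistics.variance(group_b)
    df = n1 + n2 - 2
    pooled_var = ((n1 - 1) * var1 + (n2 - 1) * var2) / df
    standard_error = math.sqrt(pooled_var * (1 / n1 + 1 / n2))
    difference = statistics.mean(group_a) - statistics.mean(group_b)
    return difference / standard_error, df


# Hypothetical measurements for two parallel groups.
t_stat, df = pooled_t_statistic([1, 2, 3, 4, 5], [2, 3, 4, 5, 6])
print(t_stat, df)
```

The statistic is compared with a t distribution on `df` degrees of freedom; pooling is only appropriate when the equal-variance assumption named in the chapter title is reasonable.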

Characteristics and Statistics of Digital Remote Sensing Imagery (1)

Characteristics and statistics of digital remote sensing imagery (1)

Digital Images
• With raster data structure, each image is treated as an array of values of the pixels.
• Image data is organized as rows and columns (or lines and pixels), starting from the upper left corner of the image.
• Each pixel (picture element) is treated as a separate unit.

Statistics of Digital Images help:
• Look at the frequency of occurrence of individual brightness values in the image displayed;
• View individual pixel brightness values at specific locations or within a geographic area;
• Compute univariate descriptive statistics to determine if there are unusual anomalies in the image data; and
• Compute multivariate statistics to determine the amount of between-band correlation (e.g., to identify redundancy).

Statistics of Digital Images
It is necessary to calculate fundamental univariate and multivariate statistics of the multispectral remote sensor data. This involves identification and calculation of
– maximum and minimum value
– the range, mean, standard deviation
– between-band variance-covariance matrix
– correlation matrix, and
– frequencies of brightness values
The results of the above can be used to produce histograms. Such statistics provide information necessary for processing and analyzing remote sensing data.

A “population” is an infinite or finite set of elements. A “sample” is a subset of the elements taken from a population used to make inferences about certain characteristics of the population (e.g., training signatures).

Large samples drawn randomly from natural populations usually produce a symmetrical frequency distribution. Most values are clustered around the central value, and the frequency of occurrence declines away from this central point.
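The univariate and between-band statistics listed above can be sketched with the Python standard library. The two short "bands" below are hypothetical brightness values invented for illustration, and the function names are assumptions; real imagery would normally be held in arrays rather than lists.

```python
import math
import statistics
from collections import Counter


def describe_band(values):
    """Minimum, maximum, range, mean and sample standard deviation of one band."""
    return {
        "min": min(values),
        "max": max(values),
        "range": max(values) - min(values),
        "mean": statistics.mean(values),
        "stdev": statistics.stdev(values),
    }


def covariance(band_a, band_b):
    """Sample covariance between two bands measured at the same pixels."""
    mean_a = statistics.mean(band_a)
    mean_b = statistics.mean(band_b)
    products = [(a - mean_a) * (b - mean_b) for a, b in zip(band_a, band_b)]
    return sum(products) / (len(band_a) - 1)


def correlation(band_a, band_b):
    """Between-band correlation: covariance scaled by both standard deviations."""
    return covariance(band_a, band_b) / (
        statistics.stdev(band_a) * statistics.stdev(band_b)
    )


# Hypothetical brightness values for five pixels in two bands.
band1 = [10, 12, 14, 16, 18]
band2 = [20, 25, 27, 33, 35]
print(describe_band(band1))
print(covariance(band1, band2), correlation(band1, band2))
print(Counter(band1))  # frequency of each brightness value (histogram input)
```

A correlation close to 1 between two bands, as in this made-up example, is the kind of redundancy the slide suggests looking for.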

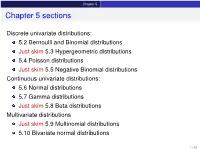

Chapter 5 Sections

Chapter 5 sections

Discrete univariate distributions:
5.2 Bernoulli and Binomial distributions
5.3 Hypergeometric distributions (just skim)
5.4 Poisson distributions
5.5 Negative Binomial distributions (just skim)

Continuous univariate distributions:
5.6 Normal distributions
5.7 Gamma distributions
5.8 Beta distributions (just skim)

Multivariate distributions:
5.9 Multinomial distributions (just skim)
5.10 Bivariate normal distributions

5.1 Introduction: Families of distributions

How:
Parameter and parameter space
pf/pdf and cdf, with new notation: $f(x \mid \text{parameters})$
Mean, variance and the m.g.f. $\psi(t)$
Features, connections to other distributions, approximation

Why (reasoning behind a distribution):
Natural justification for certain experiments
A model for the uncertainty in an experiment

"All models are wrong, but some are useful" (George Box)

5.2 Bernoulli and Binomial distributions: Bernoulli distributions

Def: Bernoulli distributions, Bernoulli(p). A r.v. $X$ has the Bernoulli distribution with parameter $p$ if $P(X = 1) = p$ and $P(X = 0) = 1 - p$. The pf of $X$ is

$$f(x \mid p) = \begin{cases} p^x (1 - p)^{1 - x} & \text{for } x = 0, 1 \\ 0 & \text{otherwise} \end{cases}$$

Parameter space: $p \in [0, 1]$

In an experiment with only two possible outcomes, “success” and “failure”, let $X$ = number of successes. Then $X \sim \text{Bernoulli}(p)$ where $p$ is the probability of success.

$E(X) = p$, $\mathrm{Var}(X) = p(1 - p)$ and $\psi(t) = E(e^{tX}) = pe^t + (1 - p)$

The cdf is

$$F(x \mid p) = \begin{cases} 0 & \text{for } x < 0 \\ 1 - p & \text{for } 0 \le x < 1 \\ 1 & \text{for } x \ge 1 \end{cases}$$

5.2 Bernoulli and Binomial distributions: Binomial distributions

Def: Binomial distributions, Binomial(n, p). A r.v.
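The Bernoulli definitions from section 5.2 above (pf, cdf, mean, variance and m.g.f.) translate directly into code. The function names and the value p = 0.3 are hypothetical, chosen only to make the sketch concrete.

```python
import math


def bernoulli_pf(x, p):
    """Probability function: p^x (1 - p)^(1 - x) for x in {0, 1}, else 0."""
    if x in (0, 1):
        return p ** x * (1 - p) ** (1 - x)
    return 0.0


def bernoulli_cdf(x, p):
    """Cumulative distribution function of Bernoulli(p)."""
    if x < 0:
        return 0.0
    if x < 1:
        return 1 - p
    return 1.0


def bernoulli_mgf(t, p):
    """Moment generating function E(e^{tX}) = p e^t + (1 - p)."""
    return p * math.exp(t) + (1 - p)


p = 0.3
mean = sum(x * bernoulli_pf(x, p) for x in (0, 1))                    # equals p
variance = sum((x - mean) ** 2 * bernoulli_pf(x, p) for x in (0, 1))  # equals p(1 - p)
print(mean, variance, bernoulli_cdf(0.5, p), bernoulli_mgf(0.0, p))
```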