Vertex and Pixel Shader Tips and Tricks


Recommended publications


Scalability of Terrain Visualization in Large Virtual Environments

Faculty of Mathematics and Natural Sciences, Department of Mathematics and Computing Science. Scalability of Terrain Visualization in Large Virtual Environments. Esger G.N. Abbink. Advisors: dr. J.B.T.M. Roerdink, Department of Mathematics and Computing Science, University of Groningen; drs. M.J.B. van Delden, drs. M. Wierda, Virtual Environments, Systems, and Consultancy, Zeegse. June 2000. Graduate specialization High Performance Computing and Imaging. Student number 0831425. Gerkesklooster / Zeegse, April 2000. Rapport vesc WR-2000/3. Table of Contents: 1. Introduction; 2. Virtual Environments; 2.1 Starting points; 2.2 Means-end analysis; 2.3 Man-in-the-loop; 2.4 Hardware; 2.5 Real-world simulators; 2.5.1 Flight Simulators; 2.5.2 Driving Simulators; 2.5.3 Rail vehicle simulators; 2.5.4 Ship Simulator; 2.5.5 VE Walkthrough; 2.5.6 Entertainment Simulators; 2.5.7 Telepresence; 2.5.8 Virtual GIS; 2.6 Summary & practical continuation; 3. Scalable terrain visualization; 3.1 Introduction; 3.2 The sample implementation; 3.2.1 Introduction; 3.2.2 Getting it to work; 3.2.3 Porting the sample implementation to 4Space; 3.3 Design; 3.3.1 Goal; 3.3.2 Requirements; 3.3.3 Terrain representation; 3.3.4 Basic setup; 3.3.5 Tessellation algorithm; 3.3.6 Implementation approach; 3.4 Implementation; 3.4.1 Terrain data generation; 3.4.2 Implementing Central Differencing; 3.4.2 Interfacing 4Space; 3.4.3 Memory management; 3.4.4 Dynamic data storages; 3.4.4.1 Queue; 3.4.4.2 Dynamic List & Pool; 3.4.4.3 Red-Black Tree; 3.4.4.4 Dynamic Storage; 3.4.4.5 Evaluating performance; 4. -

DRAFT - DATED MATERIAL the CONTENTS of THIS REVIEWER’S GUIDE IS INTENDED SOLELY for REFERENCE WHEN REVIEWING Rev a VERSIONS of VOODOO5 5500 REFERENCE BOARDS

Voodoo5™ 5500 for the PC Reviewer’s Guide DRAFT - DATED MATERIAL THE CONTENTS OF THIS REVIEWER’S GUIDE IS INTENDED SOLELY FOR REFERENCE WHEN REVIEWING Rev A VERSIONS OF VOODOO5 5500 REFERENCE BOARDS. THIS INFORMATION WILL BE REGULARLY UPDATED, AND REVIEWERS SHOULD CONTACT THE PERSONS LISTED IN THIS GUIDE FOR UPDATES BEFORE EVALUATING ANY VOODOO5 5500 BOARD. 3dfx Interactive, Inc. 4435 Fortran Dr. San Jose, Ca 95134 408-935-4400 Brian Burke 214-570-2113 [email protected] PT Barnum 214-570-2226 [email protected] Bubba Wolford 281-578-7782 [email protected] www.3dfx.com www.3dfxgamers.com Copyright 2000 3dfx Interactive, Inc. All Rights Reserved. All other trademarks are the property of their respective owners. Visit the 3dfx Virtual Press Room at http://www.3dfx.com/comp/pressweb/index.html. Voodoo5 5500 Reviewers Guide xxxx 2000 Table of Contents Benchmarking the Voodoo5 5500 • Cures for common benchmarking and image quality mistakes • Tips for benchmarking • Overclocking Guide INTRODUCTION • Optimized for DVD Acceleration • Scalable Performance • Trend-setting Image quality Features • Texture Compression • Glide 2.x and 3.x Compatibility: The Most Titles • World-class Windows GUI/video Acceleration SECTION 1: Voodoo5 5500 Board Overview • General Features • 3D Features set • 2D Features set • Voodoo5 Video Subsystem • SLI • Texture compression • Fill Rate vs T&L • T-Buffer introduction • Alternative APIs (Al Reyes on Mac, Linux, BeOS and more) • Pricing & Availability • Warranty • Technical Support • Photos, screenshots, -

Mobile 3D Devices: They're Not Little PCs!

Mobile 3D Devices -- They’re not little PCs! Stephen Wilkinson – Graphics Software Technical Lead, Texas Instruments CSSD/OMAP. Who is this guy? • Involved with simulation and games since 1995 • Worked on SIMNET for the US Army • PC gaming • Titles include WarBirds 1 & 2, Fighter Squadron, AMA SuperBike, NHRA Drag Racing, NHRA Main Event • 30+ Casino Games (yeah, yeah) • Worked on core technology for several console games • D&D Heroes, Mission Impossible: Operation SURMA, and T3: Redemption (NGC, PS2, Xbox) • Former member of the Graphics and Computer Vision group at Nokia Research Center • Now the CSSD graphics software technical lead at Texas Instruments working on OMAP. Introduction • Mobile 3D hardware is not a “Mini-me” of your desktop PC. • To obtain similar features and relatively high levels of performance, mobile hardware has made some tradeoffs compared to the power of your desktop. Introduction… • If you are just learning mobile 3D or have learned about graphics and performance on a more “traditional” platform such as a PC or console, this presentation should help you: • Recognize the most important areas in mobile power and performance for 3D and game development • Take appropriate lessons learned from desktop development whenever possible… • Avoid lessons from the desktop which can make your game slower or more power-hungry than it could be! Mobile performance preview • Some you know, some you may not… Sorted by acronym the main problems are: • CPU • GPU • Memory • Your game. The Mobile CPU • No, it doesn’t have a Hemi. The Mobile GPU • No, there’s not really a tiny 3dfx Voodoo™ card in that handset. Mobile Memory • It’s SLOOOOOOOW…. -

AMD Radeon E8860

Components for AMD’s Embedded Radeon™ E8860 GPU INTRODUCTION The E8860 Embedded Radeon GPU available from CoreAVI is comprised of temperature screened GPUs, safety certifiable OpenGL®-based drivers, and safety certifiable GPU tools which have been pre-integrated and validated together to significantly de-risk the challenges typically faced when integrating hardware and software components. The platform is an off-the-shelf foundation upon which safety certifiable applications can be built with confidence. Figure 1: CoreAVI Support for E8860 GPU EXTENDED TEMPERATURE RANGE CoreAVI provides extended temperature versions of the E8860 GPU to facilitate its use in rugged embedded applications. CoreAVI functionally tests the E8860 over -40C Tj to +105 Tj, increasing the manufacturing yield for hardware suppliers while reducing supply delays to end customers. coreavi.com [email protected] Revision - 13Nov2020 E8860 GPU LONG TERM SUPPLY AND SUPPORT CoreAVI has provided consistent and dedicated support for the supply and use of the AMD embedded GPUs within the rugged Mil/Aero/Avionics market segment for over a decade. With the E8860, CoreAVI will continue that focused support to ensure that the software, hardware and long-life support are provided to meet the needs of customers’ system life cycles. CoreAVI has extensive environmentally controlled storage facilities which are used to store the GPUs supplied to the Mil/Aero/Avionics marketplace, ensuring that a ready supply is available for the duration of any program. CoreAVI also provides the post Last Time Buy storage of GPUs and is often able to provide additional quantities of components when COTS hardware partners receive increased volume for existing products / systems requiring additional inventory. -

John Carmack Archive - .Plan (1998)

John Carmack Archive - .plan (1998). http://www.team5150.com/~andrew/carmack. March 18, 2007. Contents: 1 January; 1.1 Some of the things I have changed recently (Jan 01, 1998); 1.2 Jan 02, 1998; 1.3 New stuff fixed (Jan 03, 1998); 1.4 Version 3.10 patch is now out. (Jan 04, 1998); 1.5 Jan 09, 1998; 1.6 I AM GOING OUT OF TOWN NEXT WEEK, DON’T SEND ME ANY MAIL! (Jan 11, 1998); 2 February; 2.1 Ok, I’m overdue for an update. (Feb 04, 1998); 2.2 Just got back from the Q2 wrap party in vegas that Activision threw for us. (Feb 09, 1998); 2.3 Feb 12, 1998; 2.4 8 mb or 12 mb voodoo 2? (Feb 16, 1998); 2.5 I just read the Wired article about all the Doom spawn. (Feb 17, 1998); 2.6 Feb 22, 1998; 3 March; 3.1 American McGee has been let go from Id. (Mar 12, 1998); 3.2 The Old Plan (Mar 13, 1998); 3.3 Mar 20, 1998; 3.4 I just shut down the last of the NEXTSTEP systems running at id. (Mar 21, 1998); 3.5 Mar 26, 1998; 4 April; 4.1 Drag strip day! (Apr 02, 1998); 4.2 Things are progressing reasonably well on the Quake 3 engine. (Apr 08, 1998); 4.3 Apr 16, 1998 -

Efficient Algorithms for Large-Scale Image Analysis

Efficient Algorithms for Large-Scale Image Analysis. Dissertation approved by the Department of Informatics of the Karlsruhe Institute of Technology for the academic degree of Doctor of Engineering, by Jan Wassenberg from Koblenz. Date of oral examination: 24 October 2011. First reviewer: Prof. Dr. Peter Sanders. Second reviewer: Prof. Dr.-Ing. Jürgen Beyerer. Abstract The past decade has seen major improvements in the capabilities and availability of imaging sensor systems. Commercial satellites routinely provide panchromatic images with sub-meter resolution. Airborne line scanner cameras yield multi-spectral data with a ground sample distance of 5 cm. The resulting overabundance of data brings with it the challenge of timely analysis. Fully automated processing still appears infeasible, but an intermediate step might involve a computer-assisted search for interesting objects. This would reduce the amount of data for an analyst to examine, but remains a challenge in terms of processing speed and working memory. This work begins by discussing the trade-offs among the various hardware architectures that might be brought to bear upon the problem. FPGA and GPU-based solutions are less universal and entail longer development cycles, hence the choice of commodity multi-core CPU architectures. Distributed processing on a cluster is deemed too costly. We will demonstrate the feasibility of processing aerial images of 100 km × 100 km areas at 1 m resolution within 2 hours on a single workstation with two processors and a total of twelve cores. Because existing approaches cannot cope with such amounts of data, each stage of the image processing pipeline – from data access and signal processing to object extraction and feature computation – will have to be designed from the ground up for maximum performance. -

Integrating Mali GPU with the Browser

Integrating Mali GPU with the Browser. Wasim Abbas, Staff Engineer, Mali Ecosystem. What’s Coming? • Introduction • Mali architecture • Optimizing for Mali • Common pitfalls • Questions. Introduction • Technical Lead of Middleware graphics team in Mali Ecosystem • Working with Skia, FireFox (Gecko) and WebKit teams (GPU acceleration for The Web) • Have worked with Mali content optimization and creation tools • Past expertise in the Game industry, engine development • Past expertise teaching Game engine architecture • Who are you? Graphics in the Embedded World • Embedded / mobile graphics fundamentally different from PC • Not just a scaled down version of the desktop world • Completely different power and bandwidth budgets • No dedicated GPU memory or bus – all shared with CPU, video, DSP • Need to last days rather than hours on a single battery charge • Requires dedicated solutions • Must handle “PC use cases” with a mobile phone power budget • Maximize processing efficiency in an embedded memory subsystem. Optimizing for Mali • Understanding Mali architecture • Understanding your browser and underlying renderer • Understanding OpenGL ES API and what happens under the hood • Multi-level optimizations at system and application level • Optimizations at client level. Abstract Rendering Machine: glDraw(1) glDraw(2) glDraw(3) eglSwapBuffers(). Original article from Peter Harris at http://community.arm.com/groups/arm-mali-graphics/blog/2014/02/03/the-mali-gpu-an-abstract-machine-part-1 Abstract Rendering Machine: Synchronous API, asynchronous -

Matrox in the New Millennium: Parhelia Reviewed, by Brian Neal – July 2002

Ace’s Hardware. Matrox in the New Millennium: Parhelia Reviewed. By Brian Neal – July 2002. Introduction For some time following the introduction of the Matrox G400 and later the G450, we heard rumors about a "G800" project. About a year and a month ago, Matrox introduced the world to the G550. But the G550 lacked the performance and features of its competitors, and was relegated mostly to 2D work. The G550 was more an evolution of the G450 than anything else, but this time, things are different. Matrox's new Parhelia is an all-new design, incorporating modern features such as DirectX 8.1-compliant pixel and vertex shaders. The G550's 166 MHz 64-bit DDR SDRAM memory interface has been replaced with a far more robust 275 MHz (250 MHz OEM/bulk) 256-bit DDR SDRAM interface capable of 17.6 GB/s. Combine this with some interesting and innovative features like hardware displacement mapping and triple-head surround gaming, and the prospects for Matrox's next-generation product are looking quite good. The Matrox Parhelia But not everything is looking so bright for Parhelia. Manufactured on a 0.15µ process, the 80 million transistor GPU is quite large and also quite hot. Consequently, it currently clocks in at only 220 MHz. By contrast, the GeForce 4 Ti4400 runs at a 300 MHz clock rate. The low clock rate is compounded by a lack of hardware occlusion culling, which means the quad-pipeline renderer is significantly less efficient than many other contemporary designs when it comes to overdraw. -
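The 17.6 GB/s figure quoted in this excerpt can be checked with simple arithmetic: bus width in bytes, times memory clock, times two transfers per clock for DDR. The following is a minimal sketch of that calculation; the function and parameter names are illustrative assumptions and do not come from the review, and 1 GB is taken as 10^9 bytes.

```python
def peak_bandwidth_gb_s(clock_mhz: float, bus_width_bits: int,
                        transfers_per_clock: int = 2) -> float:
    """Theoretical peak memory bandwidth in GB/s (1 GB = 10**9 bytes).

    transfers_per_clock is 2 for DDR memory and 1 for SDR memory.
    """
    bytes_per_transfer = bus_width_bits / 8
    transfers_per_second = clock_mhz * 1e6 * transfers_per_clock
    return bytes_per_transfer * transfers_per_second / 1e9


# Retail Parhelia as described in the excerpt: 275 MHz, 256-bit DDR.
retail = peak_bandwidth_gb_s(275, 256)
# OEM/bulk variant mentioned in the excerpt: 250 MHz, 256-bit DDR.
oem = peak_bandwidth_gb_s(250, 256)

print(f"retail: {retail:.1f} GB/s, OEM: {oem:.1f} GB/s")
```

This is a theoretical ceiling only; sustained bandwidth in practice depends on access patterns and the memory controller, which the excerpt does not cover.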


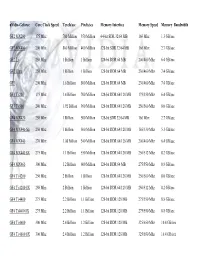

Nvidia-Geforce GF2 MX200 GF2 MX400 GF2 Ti GF2 Ultra

| nVidia-Geforce | Core Clock Speed | Texels/sec | Pixels/sec | Memory Interface | Memory Speed | Memory Bandwidth |
|---|---|---|---|---|---|---|
| GF2 MX200 | 175 MHz | 700 Million | 350 Million | 64-bit SDR 32/64 MB | 166 MHz | 1.3 GB/sec |
| GF2 MX400 | 200 MHz | 800 Million | 400 Million | 128-bit SDR 32/64 MB | 166 MHz | 2.7 GB/sec |
| GF2 Ti | 250 MHz | 1 Billion | 1 Billion | 128-bit DDR 64 MB | 200/400 MHz | 6.4 GB/sec |
| GF2 Ultra | 250 MHz | 1 Billion | 1 Billion | 128-bit DDR 64 MB | 230/460 MHz | 7.4 GB/sec |
| GF3 | 200 MHz | 1.6 Billion | 800 Million | 128-bit DDR 64 MB | 230/460 MHz | 7.4 GB/sec |
| GF3 Ti 200 | 175 MHz | 1.4 Billion | 700 Million | 128-bit DDR 64/128 MB | 175/350 MHz | 6.4 GB/sec |
| GF3 Ti 500 | 240 MHz | 1.92 Billion | 960 Million | 128-bit DDR 64/128 MB | 250/500 MHz | 8.0 GB/sec |
| GF4 MX420 | 250 MHz | 1 Billion | 500 Million | 128-bit SDR 32/64 MB | 166 MHz | 2.7 GB/sec |
| GF4 MX440-SE | 250 MHz | 1 Billion | 500 Million | 128-bit DDR 64/128 MB | 165/330 MHz | 5.3 GB/sec |
| GF4 MX440 | 270 MHz | 1.08 Billion | 540 Million | 128-bit DDR 64/128 MB | 200/400 MHz | 6.4 GB/sec |
| GF4 MX440 8X | 275 MHz | 1.1 Billion | 550 Million | 128-bit DDR 64/128 MB | 256/512 MHz | 8.2 GB/sec |
| GF4 MX460 | 300 MHz | 1.2 Billion | 600 Million | 128-bit DDR 64 MB | 275/550 MHz | 8.8 GB/sec |
| GF4 Ti 4200 | 250 MHz | 2 Billion | 1 Billion | 128-bit DDR 64/128 MB | 250/500 MHz | 8.0 GB/sec |
| GF4 Ti 4200 8X | 250 MHz | 2 Billion | 1 Billion | 128-bit DDR 64/128 MB | 256/512 MHz | 8.2 GB/sec |
| GF4 Ti 4400 | 275 MHz | 2.2 Billion | 1.1 Billion | 128-bit DDR 128 MB | 275/550 MHz | 8.8 GB/sec |
| GF4 Ti4400 8X | 275 MHz | 2.2 Billion | 1.1 Billion | 128-bit DDR 128 MB | 275/550 MHz | 8.8 GB/sec |
| GF4 Ti 4600 | 300 MHz | 2.4 Billion | 1.2 Billion | 128-bit DDR 128 MB | 325/650 MHz | 10.4 GB/sec |
| GF4 Ti 4600 8X | 300 MHz | 2.4 - | | | | |

Gokhan Avkarogullari Richard Schreyer

Gokhan Avkarogullari, iPhone GPU Software. Richard Schreyer, iPhone GPU Software.

Framebuffer object for single sampling: OpenGL ES Resolve Framebuffer

Framebuffer objects for multisampling: OpenGL ES MSAA Framebuffer, OpenGL ES Resolve Framebuffer

Framebuffer objects for multisample and resolve: OpenGL ES MSAA Framebuffer, OpenGL ES Resolve Framebuffer, Resolve

Single sampled framebuffer creation

    //color renderbuffer
    glGenRenderbuffers(1, &colorRenderbuffer);
    glBindRenderbuffer(GL_RENDERBUFFER, colorRenderbuffer);
    [context renderbufferStorage:GL_RENDERBUFFER fromDrawable:layer];
    glFramebufferRenderbuffer(GL_FRAMEBUFFER, GL_COLOR_ATTACHMENT0, ...);
    //depth renderbuffer
    glGenRenderbuffers(1, &depthbuffer);
    glBindRenderbuffer(GL_RENDERBUFFER, depthbuffer);
    glRenderbufferStorage(GL_RENDERBUFFER, ..., );
    glFramebufferRenderbuffer(GL_FRAMEBUFFER, GL_DEPTH_ATTACHMENT, ...);

Multisampled framebuffer creation

    //color renderbuffer
    glGenRenderbuffers(1, &colorRenderbuffer);
    glBindRenderbuffer(GL_RENDERBUFFER, colorRenderbuffer);
    glRenderbufferStorageMultisampleAPPLE(GL_RENDERBUFFER_OES, samplesToUse, ...);
    glFramebufferRenderbuffer(GL_FRAMEBUFFER, GL_COLOR_ATTACHMENT0, ...);
    //depth renderbuffer
    glGenRenderbuffers(1, &depthbuffer);
    glBindRenderbuffer(GL_RENDERBUFFER, depthbuffer);
    glRenderbufferStorageMultisampleAPPLE(GL_RENDERBUFFER, samplesToUse, ...);
    glFramebufferRenderbuffer(GL_FRAMEBUFFER, GL_DEPTH_ATTACHMENT, ...);

Single sampled render and resolve

    glBindFramebuffer(GL_FRAMEBUFFER, defaultFramebuffer);
    glViewport(0, 0, backingWidth, -

Accelerating Real-Time Graphics with High Level Shading Languages

2003:306 CIV. MASTER’S THESIS. Accelerating Real-Time Graphics with High Level Shading Languages. EMIL PERSSON. MASTER OF SCIENCE PROGRAMME, Department of Computer Science and Electrical Engineering, Division of Media Technology. 2003:306 CIV • ISSN: 1402-1617 • ISRN: LTU-EX--03/306--SE. Contents: ABSTRACT; GOALS; ACKNOWLEDGEMENTS; PREFACE; BASIC REAL-TIME GRAPHICS CONCEPTS (Hardware rendering paradigm; Common graphics terms; High level shading languages); INTRODUCTION