EECS 470 Lecture 24 Chip Multiprocessors and Simultaneous Multithreading Fall 2007 Prof


Recommended publications


Implicitly-Multithreaded Processors

Appears in the Proceedings of the 30th Annual International Symposium on Computer Architecture (ISCA)

Implicitly-Multithreaded Processors. Il Park, Babak Falsafi∗ and T. N. Vijaykumar. School of Electrical & Computer Engineering, Purdue University; ∗Computer Architecture Laboratory (CALCM), Carnegie Mellon University. {parki,vijay}@ecn.purdue.edu [email protected] http://www.ece.cmu.edu/~impetus

Abstract: This paper proposes the Implicitly-MultiThreaded (IMT) architecture to execute compiler-specified speculative threads onto a modified Simultaneous Multithreading pipeline. IMT reduces hardware complexity by relying on the compiler to select suitable thread spawning points and orchestrate inter-thread register communication. To enhance IMT’s effectiveness, this paper proposes three novel microarchitectural mechanisms: (1) resource- and dependence-based fetch policy to fetch and execute suitable instructions, (2) context multiplexing to improve utilization and map as many threads to a single context as allowed by availability of resources, and (3) early thread-invocation to hide thread start-up overhead by overlapping one thread’s invocation with other threads’ execution.

In this paper, we propose the Implicitly-MultiThreaded (IMT) processor. IMT executes compiler-specified speculative threads from a sequential program on a wide-issue SMT pipeline. IMT is based on the fundamental observation that Multiscalar’s execution model (i.e., compiler-specified speculative threads [10]) can be decoupled from the processor organization (i.e., distributed processing cores). Multiscalar [10] employs sophisticated specialized hardware, the register ring and address resolution buffer, which are strongly coupled to the distributed core organization. In contrast, IMT proposes to map speculative threads onto generic SMT. IMT differs fundamentally from prior proposals, TME and DMT, for speculative threading on SMT. While TME executes multiple threads only in the uncommon case of

Kaisen Lin and Michael Conley

Kaisen Lin and Michael Conley

Simultaneous Multithreading
◦ Instructions from multiple threads run simultaneously on superscalar processor
◦ More instruction fetching and register state
◦ Commercialized!
   DEC Alpha 21464 [Dean Tullsen et al]
   Intel Hyperthreading (Xeon, P4, Atom, Core i7)

Web applications
◦ Web, DB, file server
◦ Throughput over latency
◦ Service many users
◦ Slight delay acceptable

404 not

Idea?
◦ More functional units and caches
◦ Not just storage state

Instruction fetch
◦ Branch prediction and alignment
   Similar problems to the trace cache
◦ Large programs: inefficient I$ use

Issue and retirement
◦ Complicated OOE logic not scalable
   Need more wires, hardware, ports

Execution
◦ Register file and forwarding logic
   Power-hungry

Replace complicated OOE with more processors
◦ Each with own L1 cache

Use communication crossbar for a shared L2 cache
◦ Communication still fast, same chip

Size details in paper
◦ 6-SS about the same as 4x2-MP
◦ Simpler CPU overall!

SimOS: Simulate hardware env
◦ Can run commercial operating systems on multiple CPUs
◦ IRIX 5.3 tuned for multi-CPU

Applications
◦ 4 integer, 4 floating-point, 1 multiprog
   PARALLELIZED!

Which one is better?
◦ Misses per completed instruction
◦ In general hard to tell what happens

Which one is better?
◦ 6-SS isn’t taking advantage!

Actual speedup metrics
◦ MP beats the pants off SS sometimes
◦ Doesn’t perform so much worse other times

6-SS better than 4x2-MP
◦ Non-parallelizable applications
◦ Fine-grained parallel applications

6-SS worse than 4x2-MP

A Speculative Control Scheme for an Energy-Efficient Banked Register File

IEEE TRANSACTIONS ON COMPUTERS, VOL. 54, NO. 6, JUNE 2005 741

A Speculative Control Scheme for an Energy-Efficient Banked Register File. Jessica H. Tseng, Student Member, IEEE, and Krste Asanović, Member, IEEE

Abstract: Multiported register files are critical components of modern superscalar and simultaneously multithreaded (SMT) processors, but conventional designs consume considerable die area and power as register counts and issue widths grow. Banked multiported register files consisting of multiple interleaved banks of lesser ported cells can be used to reduce area, power, and access time and previous work has shown that such designs can provide sufficient bandwidth for a superscalar machine. These previous banked designs, however, have complex control structures to avoid bank conflicts or to buffer conflicting requests, which add to design complexity and would likely limit cycle time. This paper presents a much simpler and faster control scheme that speculatively issues potentially conflicting instructions, then quickly repairs the pipeline if conflicts occur. We show that, once optimizations to avoid regfile reads are employed, the remaining read accesses observed in detailed simulations are close to randomly distributed and this contributes to the effectiveness of our speculative control scheme. For a four-issue superscalar processor with 64 physical registers, we show that we can reduce area by a factor of three, access time by 25 percent, and energy by 40 percent, while decreasing IPC by less than 5 percent. For an eight-issue SMT processor with 512 physical registers, area is reduced by a factor of seven, access time by 30 percent, and energy by 60 percent, while decreasing IPC by less than 2 percent.
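To make the notion of a bank conflict concrete, here is a minimal Python sketch. It is not the paper's control scheme: the low-order interleaving (`reg % num_banks`), the single read port per bank, and the function names are all assumptions chosen for illustration. It only shows how several register reads issued in the same cycle can collide on one bank.

```python
from collections import Counter


def bank_of(reg: int, num_banks: int) -> int:
    """Assumed low-order interleaving: consecutive registers go to consecutive banks."""
    return reg % num_banks


def conflicting_banks(read_regs, num_banks, ports_per_bank=1):
    """Return {bank: demand} for banks asked for more reads than they have ports.

    Duplicate reads of the same register are counted once, on the assumption
    that a single access can feed both consumers.
    """
    demand = Counter(bank_of(r, num_banks) for r in set(read_regs))
    return {bank: n for bank, n in demand.items() if n > ports_per_bank}


# Hypothetical source registers read in one cycle.
reads = [3, 7, 12, 18]
conflicts = conflicting_banks(reads, num_banks=4)
print(conflicts)  # registers 3 and 7 both map to bank 3
```

A speculative scheme of the kind the abstract describes would issue such a group anyway and repair the pipeline only when a check like this reports a conflict.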

The Microarchitecture of a Low Power Register File

The Microarchitecture of a Low Power Register File. Nam Sung Kim and Trevor Mudge, Advanced Computer Architecture Lab, The University of Michigan, 1301 Beal Ave., Ann Arbor, MI 48109-2122. {kimns, tnm}@eecs.umich.edu

ABSTRACT: The access time, energy and area of the register file are often critical to overall performance in wide-issue microprocessors, because these terms grow superlinearly with the number of read and write ports that are required to support wide-issue. This paper presents two techniques to reduce the number of ports of a register file intended for a wide-issue microprocessor with hardly any impact on IPC. Our results show that it is possible to replace a register file with 16 read and 8 write ports, intended for an eight-issue processor, with a register file with just 8 read and 8 write ports so that the impact on IPC is a few percent.

Alpha 21464, the 512-entry 16-read and 8-write (16-r/8-w) ports register file consumed more power and was larger than the 64 KB primary caches. To reduce the cycle time impact, it was implemented as two 8-r/8-w split register files [9], see Figure 1. Figure 1-(a) shows the 16-r/8-w file implemented directly as a monolithic structure. Figure 1-(b) shows it implemented as the two 8-r/8-w register files. The monolithic register file design is slow because each memory cell in the register file has to drive a large number of bit-lines. In contrast, the split register file is fast, but duplicates the contents of the register file in two memory arrays, resulting in

Benchmarking the Intel FPGA SDK for OpenCL Memory Interface

The Memory Controller Wall: Benchmarking the Intel FPGA SDK for OpenCL Memory Interface. Hamid Reza Zohouri*†1, Satoshi Matsuoka*‡. *Tokyo Institute of Technology, †Edgecortix Inc. Japan, ‡RIKEN Center for Computational Science (R-CCS). {zohour.h.aa@m, matsu@is}.titech.ac.jp

Abstract: Supported by their high power efficiency and recent advancements in High Level Synthesis (HLS), FPGAs are quickly finding their way into HPC and cloud systems. Large amounts of work have been done so far on loop and area optimizations for different applications on FPGAs using HLS. However, a comprehensive analysis of the behavior and efficiency of the memory controller of FPGAs is missing in literature, which becomes even more crucial when the limited memory bandwidth of modern FPGAs compared to their GPU counterparts is taken into account. In this work, we will analyze the memory interface generated by Intel FPGA SDK for OpenCL with different configurations for input/output arrays, vector size, interleaving, kernel programming model, on-chip channels, operating frequency, padding, and multiple types of overlapped blocking. Our results point to multiple shortcomings in the memory controller of Intel FPGAs, especially with respect to memory access alignment, that can hinder the programmer’s

efficiency on Intel FPGAs with different configurations for input/output arrays, vector size, interleaving, kernel programming model, on-chip channels, operating frequency, padding, and multiple types of blocking.
• We outline one performance bug in Intel’s compiler, and multiple deficiencies in the memory controller, leading to significant loss of memory performance for typical applications. In some of these cases, we provide work-arounds to improve the memory performance.

II. METHODOLOGY
A. Memory Benchmark Suite
For our evaluation, we develop an open-source benchmark suite called FPGAMemBench, available at https://github.com/zohourih/FPGAMemBench.

REPORT Compaq Chooses SMT for Alpha Simultaneous Multithreading

VOLUME 13, NUMBER 16, DECEMBER 6, 1999. MICROPROCESSOR REPORT: THE INSIDERS’ GUIDE TO MICROPROCESSOR HARDWARE

Compaq Chooses SMT for Alpha: Simultaneous Multithreading Exploits Instruction- and Thread-Level Parallelism
by Keith Diefendorff

As it climbs rapidly past the 100-million-transistor-per-chip mark, the microprocessor industry is struggling with the question of how to get proportionally more performance out of these new transistors. Speaking at the recent Microprocessor Forum, Joel Emer, a Principal Member of the Technical Staff in Compaq’s Alpha Development Group, described his company’s approach: simultaneous multithreading, or SMT. Emer’s interest in SMT was inspired by the work of Dean Tullsen, who described the technique in 1995 while at the University of Washington. Since that time, Emer has been studying SMT along with other researchers at Washington. Once convinced of its value, he began evangelizing SMT within Compaq.

Given a full complement of on-chip memory, increasing the clock frequency will increase the performance of the core. One way to increase frequency is to deepen the pipeline. But with pipelines already reaching upwards of 12–14 stages, mounting inefficiencies may close this avenue, limiting future frequency improvements to those that can be attained from semiconductor-circuit speedup. Unfortunately this speedup, roughly 20% per year, is well below that required to attain the historical 60% per year performance increase. To prevent bursting this bubble, the only real alternative left is to exploit more and more parallelism. Indeed, the pursuit of parallelism occupies the energy of many processor architects today. There are basically two theories: one is that instruction-level parallelism (ILP) is abundant and remains a viable resource waiting to be tapped;
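The gap between the two growth rates quoted in the article (roughly 20% per year from circuit speedup against a historical 60% per year) compounds quickly. The short Python sketch below works through that arithmetic; the five-year horizon and the variable names are illustrative assumptions, not figures from the article.

```python
CIRCUIT_SPEEDUP = 0.20   # per-year gain from circuit speedup (from the article)
HISTORICAL_GAIN = 0.60   # per-year historical performance growth (from the article)


def cumulative(rate: float, years: int) -> float:
    """Compound a per-year growth rate over a number of years."""
    return (1.0 + rate) ** years


# Factor that must come from elsewhere (e.g. parallelism) each year.
shortfall_per_year = (1.0 + HISTORICAL_GAIN) / (1.0 + CIRCUIT_SPEEDUP)

years = 5  # assumed horizon, for illustration only
needed = cumulative(HISTORICAL_GAIN, years)
from_circuits = cumulative(CIRCUIT_SPEEDUP, years)
print(f"per-year shortfall factor: {shortfall_per_year:.3f}")
print(f"after {years} years: need {needed:.2f}x, circuits give {from_circuits:.2f}x")
```

Under these numbers, about a third more performance per year has to come from something other than faster circuits, which is the role the article assigns to parallelism.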

PERL – a Register-Less Processor

PERL – A Register-Less Processor. A Thesis Submitted in Partial Fulfillment of the Requirements for the Degree of Doctor of Philosophy by P. Suresh to the Department of Computer Science & Engineering, Indian Institute of Technology, Kanpur. February, 2004

Certificate: Certified that the work contained in the thesis entitled “PERL – A Register-Less Processor”, by Mr. P. Suresh, has been carried out under my supervision and that this work has not been submitted elsewhere for a degree. (Dr. Rajat Moona) Professor, Department of Computer Science & Engineering, Indian Institute of Technology, Kanpur. February, 2004

Synopsis: Computer architecture designs are influenced historically by three factors: market (users), software and hardware methods, and technology. Advances in fabrication technology are the most dominant factor among them. The performance of a processor is defined by a judicious blend of processor architecture, efficient compiler technology, and effective VLSI implementation. The choices for each of these strongly depend on the technology available for the others. Significant gains in the performance of processors are made due to the ever-improving fabrication technology that made it possible to incorporate architectural novelties such as pipelining, multiple instruction issue, on-chip caches, registers, branch prediction, etc. To supplement these architectural novelties, suitable compiler techniques extract performance by instruction scheduling, code and data placement and other optimizations. The performance of a computer system is directly related to the time it takes to execute programs, usually known as execution time. The expression for execution time (T), is expressed as a product of the number of instructions executed (N), the average number of machine cycles needed to execute one instruction (Cycles Per Instruction or CPI), and the clock cycle time (), as given in equation 1.
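The product described in the synopsis (execution time as instruction count times CPI times clock cycle time) can be sketched in a few lines of Python. The function name, parameter names, and workload numbers below are made up for illustration; the thesis's own symbol for the clock cycle time did not survive extraction, so it is simply called `cycle_time_s` here.

```python
def execution_time(n_instructions: int, cpi: float, cycle_time_s: float) -> float:
    """Execution time T = N * CPI * clock cycle time, in seconds."""
    return n_instructions * cpi * cycle_time_s


# Hypothetical workload: one billion instructions, 1.5 cycles each, 1 ns clock.
baseline = execution_time(1_000_000_000, cpi=1.5, cycle_time_s=1e-9)

# Halving CPI at the same clock halves the time; so does halving the cycle time.
lower_cpi = execution_time(1_000_000_000, cpi=0.75, cycle_time_s=1e-9)
faster_clock = execution_time(1_000_000_000, cpi=1.5, cycle_time_s=0.5e-9)

print(f"baseline:     {baseline:.3f} s")
print(f"lower CPI:    {lower_cpi:.3f} s")
print(f"faster clock: {faster_clock:.3f} s")
```

Because the three factors multiply, an improvement in one can be cancelled by a regression in another, which is why the synopsis stresses that architecture, compiler, and implementation choices depend on each other.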

A Modern Primer on Processing in Memory

A Modern Primer on Processing in Memory. Onur Mutlu (a,b), Saugata Ghose (b,c), Juan Gómez-Luna (a), Rachata Ausavarungnirun (d). SAFARI Research Group. (a) ETH Zürich, (b) Carnegie Mellon University, (c) University of Illinois at Urbana-Champaign, (d) King Mongkut’s University of Technology North Bangkok

Abstract: Modern computing systems are overwhelmingly designed to move data to computation. This design choice goes directly against at least three key trends in computing that cause performance, scalability and energy bottlenecks: (1) data access is a key bottleneck as many important applications are increasingly data-intensive, and memory bandwidth and energy do not scale well, (2) energy consumption is a key limiter in almost all computing platforms, especially server and mobile systems, (3) data movement, especially off-chip to on-chip, is very expensive in terms of bandwidth, energy and latency, much more so than computation. These trends are especially severely felt in the data-intensive server and energy-constrained mobile systems of today. At the same time, conventional memory technology is facing many technology scaling challenges in terms of reliability, energy, and performance. As a result, memory system architects are open to organizing memory in different ways and making it more intelligent, at the expense of higher cost. The emergence of 3D-stacked memory plus logic, the adoption of error correcting codes inside the latest DRAM chips, proliferation of different main memory standards and chips, specialized for different purposes (e.g., graphics, low-power, high bandwidth, low latency), and the necessity of designing new solutions to serious reliability and security issues, such as the RowHammer phenomenon, are evidence of this trend.

Advanced x86

Advanced x86: BIOS and System Management Mode Internals
Input/Output
Xeno Kovah && Corey Kallenberg, LegbaCore, LLC

All materials are licensed under a Creative Commons “Share Alike” license. http://creativecommons.org/licenses/by-sa/3.0/
Attribution condition: You must indicate that derivative work “Is derived from John Butterworth & Xeno Kovah’s ’Advanced Intel x86: BIOS and SMM’ class posted at http://opensecuritytraining.info/IntroBIOS.html”

Input/Output (I/O)
I/O, I/O, it’s off to work we go…

2 Types of I/O
1. Memory-Mapped I/O (MMIO)
2. Port I/O (PIO)
– Also called Isolated I/O or port-mapped IO (PMIO)
• x86 systems employ both types of I/O
• Both methods map peripheral devices
• Address space of each is accessed using instructions
– typically requires Ring 0 privileges
– Real-Addressing mode has no implementation of rings, so no privilege escalation needed
• I/O ports can be mapped so that they appear in the I/O address space or the physical-memory address space (memory mapped I/O) or both
– Example: PCI configuration space in a PCIe system – both memory-mapped and accessible via port I/O. We’ll learn about that in the next section
• The I/O Controller Hub contains the registers that are located in both the I/O Address Space and the Memory-Mapped address space

Memory-Mapped I/O
• Devices can also be mapped to the physical address space instead of (or in addition to) the I/O address space
• Even though it is a hardware device on the other end of that access request, you can operate on it like it's memory:
– Any of the processor’s instructions

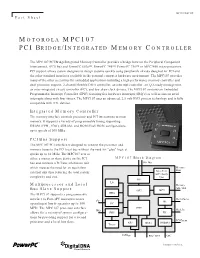

Motorola MPC107 PCI Bridge/Integrated Memory Controller

MPC107FACT/D Fact Sheet. MOTOROLA MPC107 PCI BRIDGE/INTEGRATED MEMORY CONTROLLER

The MPC107 PCI Bridge/Integrated Memory Controller provides a bridge between the Peripheral Component Interconnect (PCI) bus and PowerPC 603e™, PowerPC 740™, PowerPC 750™ or MPC7400 microprocessors. PCI support allows system designers to design systems quickly using peripherals already designed for PCI and the other standard interfaces available in the personal computer hardware environment. The MPC107 provides many of the other necessities for embedded applications including a high-performance memory controller and dual processor support, 2-channel flexible DMA controller, an interrupt controller, an I2O-ready message unit, an inter-integrated circuit controller (I2C), and low skew clock drivers. The MPC107 contains an Embedded Programmable Interrupt Controller (EPIC) featuring five hardware interrupts (IRQs) as well as sixteen serial interrupts along with four timers. The MPC107 uses an advanced, 2.5-volt HiP3 process technology and is fully compatible with TTL devices.

Integrated Memory Controller: The memory interface controls processor and PCI interactions to main memory. It supports a variety of programmable timing supporting DRAM (FPM, EDO), SDRAM, and ROM/Flash ROM configurations, up to speeds of 100 MHz.

PCI Bus Support: The MPC107 PCI interface is designed to connect the processor and memory buses to the PCI local bus without the need for "glue" logic at speeds up to 66 MHz. The MPC107 acts as either a master or slave device on the PCI bus and contains a PCI bus arbitration unit which reduces the need for an equivalent external unit, thus reducing the total system complexity and cost.

[Figure: MPC107 Block Diagram (labels: 60x Bus, Memory Data, Data Path, ECC / Parity)]

The Impulse Memory Controller

IEEE TRANSACTIONS ON COMPUTERS, VOL. 50, NO. 11, NOVEMBER 2001 1

The Impulse Memory Controller. John B. Carter, Member, IEEE, Zhen Fang, Student Member, IEEE, Wilson C. Hsieh, Sally A. McKee, Member, IEEE, and Lixin Zhang, Student Member, IEEE

Abstract: Impulse is a memory system architecture that adds an optional level of address indirection at the memory controller. Applications can use this level of indirection to remap their data structures in memory. As a result, they can control how their data is accessed and cached, which can improve cache and bus utilization. The Impulse design does not require any modification to processor, cache, or bus designs since all the functionality resides at the memory controller. As a result, Impulse can be adopted in conventional systems without major system changes. We describe the design of the Impulse architecture and how an Impulse memory system can be used in a variety of ways to improve the performance of memory-bound applications. Impulse can be used to dynamically create superpages cheaply, to dynamically recolor physical pages, to perform strided fetches, and to perform gathers and scatters through indirection vectors. Our performance results demonstrate the effectiveness of these optimizations in a variety of scenarios. Using Impulse can speed up a range of applications from 20 percent to over a factor of 5. Alternatively, Impulse can be used by the OS for dynamic superpage creation; the best policy for creating superpages using Impulse outperforms previously known superpage creation policies.

Index Terms: Computer architecture, memory systems.

1 INTRODUCTION
SINCE 1987, microprocessor performance has improved at a rate of 55 percent per year; in contrast, DRAM latencies have improved by only 7 percent per year and DRAM

memory. By giving applications control (mediated by the OS) over the use of shadow addresses, Impulse supports application-specific optimizations that restructure data.
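The strided fetches and indirection-vector gathers mentioned in the abstract are easier to picture with a small software analogy. The Python sketch below is only that: Impulse performs the remapping in the memory controller, whereas this code just shows which elements end up packed together. The function names and the toy 4x4 layout are assumptions made for illustration.

```python
def strided_fetch(memory, start, stride, count):
    """Collect `count` elements spaced `stride` apart into a dense list."""
    return [memory[start + k * stride] for k in range(count)]


def gather(memory, indirection):
    """Collect memory[i] for each index i in an indirection vector."""
    return [memory[i] for i in indirection]


# Toy "physical memory": a 4x4 matrix stored row-major, values 100..115.
memory = list(range(100, 116))

# The diagonal of the 4x4 matrix sits at a stride of 5 elements.
diagonal = strided_fetch(memory, start=0, stride=5, count=4)

# A sparse access pattern described by a (made-up) indirection vector.
picked = gather(memory, [7, 2, 9])

print(diagonal)
print(picked)
```

In the dense result, elements that were scattered across many cache lines sit next to each other, which is the effect the abstract credits with better cache and bus utilization.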

Optimizing Thread Throughput for Multithreaded Workloads on Memory Constrained CMPs

Optimizing Thread Throughput for Multithreaded Workloads on Memory Constrained CMPs. Major Bhadauria and Sally A. McKee, Computer Systems Lab, Cornell University, Ithaca, NY, USA. [email protected], [email protected]

ABSTRACT: Multi-core designs have become the industry imperative, replacing our reliance on increasingly complicated micro-architectural designs and VLSI improvements to deliver increased performance at lower power budgets. Performance of these multi-core chips will be limited by the DRAM memory system: we demonstrate this by modeling a cycle-accurate DDR2 memory controller with SPLASH-2 workloads. Surprisingly, benchmarks that appear to scale well with the number of processors fail to do so when memory is accurately modeled. We frequently find that the most efficient configuration is not the one with the most threads. By choosing the most efficient number of threads for each benchmark, average energy delay efficiency improves by a factor of 3.39, and performance improves by 19.7%, on average.

1. INTRODUCTION: Power and thermal constraints have begun to limit the maximum operating frequency of high performance processors. The cubic increase in power from increases in frequency and higher voltages required to attain those frequencies has reached a plateau. By leveraging increasing die space for more processing cores (creating chip multiprocessors, or CMPs) and larger caches, designers hope that multi-threaded programs can exploit shrinking transistor sizes to deliver equal or higher throughput as single-threaded, single-core predecessors. The current software paradigm is based on the assumption that multi-threaded programs with little contention for shared data scale (nearly) linearly with the number of processors, yielding power-efficient data through-