A Modern Primer on Processing in Memory

Total Page:16

File Type:pdf, Size:1020Kb

Load more

Recommended publications

-

ASIC Implementation of DDR SDRAM Memory Controller

© 2016 IJEDR | Volume 4, Issue 4 | ISSN: 2321-9939 ASIC Implementation of DDR SDRAM Memory Controller Ramagiri Ramya, Naganaik M.Tech Scholar, ASST.PROF, HOD of ECE BRIG-IC, Hyderabad ________________________________________________________________________________________________________ Abstract - A Dedicated Memory Controller is of prime importance in applications that do not contain microprocessors (high-end applications). The Memory Controller provides command signals for memory refresh, read and write operation and initialization of SDRAM. Our work will focus on ASIC Design methodology of Double Data Rate (DDR) SDRAM Controller that is located between the DDR SDRAM and Bus Master. The Controller simplifies the SDRAM command interface to standard system read/write interface and also optimizes the access time of read/write cycle. Keywords - DDR SDRAM Controller, Read/Write Data path, Cadence RTL Compiler. ________________________________________________________________________________________________________ I. INTRODUCTION Memory devices are almost found in all systems and nowadays high speed and high performance memories are in great demand. For better throughput and speed, the controllers are to be designed with clock frequency in the range of megahertz. As the clock speed of the controller is increasing, the design challenges are also becoming complex. Therefore the next generation memory devices require very high speed controllers like double data rate and quad data rate memory controllers. In this paper, the double data rate SDRAM Controller is implemented using ASIC methodology. Synchronous DRAM (SDRAM) is preferred in embedded system memory design because of its speed and pipelining capability. In high-end applications, like microprocessors there will be specific built in peripherals to provide the interface to the SDRAM. But for other applications, the system designer must design a specific memory controller to provide command signals for memory refresh, read and write operation and initialization of SDRAM. -

System Design for a Computational-RAM Logic-In-Memory Parailel-Processing Machine

System Design for a Computational-RAM Logic-In-Memory ParaIlel-Processing Machine Peter M. Nyasulu, B .Sc., M.Eng. A thesis submitted to the Faculty of Graduate Studies and Research in partial fulfillment of the requirements for the degree of Doctor of Philosophy Ottaw a-Carleton Ins titute for Eleceical and Computer Engineering, Department of Electronics, Faculty of Engineering, Carleton University, Ottawa, Ontario, Canada May, 1999 O Peter M. Nyasulu, 1999 National Library Biôiiothkque nationale du Canada Acquisitions and Acquisitions et Bibliographie Services services bibliographiques 39S Weiiington Street 395. nie WeUingtm OnawaON KlAW Ottawa ON K1A ON4 Canada Canada The author has granted a non- L'auteur a accordé une licence non exclusive licence allowing the exclusive permettant à la National Library of Canada to Bibliothèque nationale du Canada de reproduce, ban, distribute or seU reproduire, prêter, distribuer ou copies of this thesis in microform, vendre des copies de cette thèse sous paper or electronic formats. la forme de microficbe/nlm, de reproduction sur papier ou sur format électronique. The author retains ownership of the L'auteur conserve la propriété du copyright in this thesis. Neither the droit d'auteur qui protège cette thèse. thesis nor substantial extracts fkom it Ni la thèse ni des extraits substantiels may be printed or otherwise de celle-ci ne doivent être imprimés reproduced without the author's ou autrement reproduits sans son permission. autorisation. Abstract Integrating several 1-bit processing elements at the sense amplifiers of a standard RAM improves the performance of massively-paralle1 applications because of the inherent parallelism and high data bandwidth inside the memory chip. -

Benchmarking the Intel FPGA SDK for Opencl Memory Interface

The Memory Controller Wall: Benchmarking the Intel FPGA SDK for OpenCL Memory Interface Hamid Reza Zohouri*†1, Satoshi Matsuoka*‡ *Tokyo Institute of Technology, †Edgecortix Inc. Japan, ‡RIKEN Center for Computational Science (R-CCS) {zohour.h.aa@m, matsu@is}.titech.ac.jp Abstract—Supported by their high power efficiency and efficiency on Intel FPGAs with different configurations recent advancements in High Level Synthesis (HLS), FPGAs are for input/output arrays, vector size, interleaving, kernel quickly finding their way into HPC and cloud systems. Large programming model, on-chip channels, operating amounts of work have been done so far on loop and area frequency, padding, and multiple types of blocking. optimizations for different applications on FPGAs using HLS. However, a comprehensive analysis of the behavior and • We outline one performance bug in Intel’s compiler, and efficiency of the memory controller of FPGAs is missing in multiple deficiencies in the memory controller, leading literature, which becomes even more crucial when the limited to significant loss of memory performance for typical memory bandwidth of modern FPGAs compared to their GPU applications. In some of these cases, we provide work- counterparts is taken into account. In this work, we will analyze arounds to improve the memory performance. the memory interface generated by Intel FPGA SDK for OpenCL with different configurations for input/output arrays, II. METHODOLOGY vector size, interleaving, kernel programming model, on-chip channels, operating frequency, padding, and multiple types of A. Memory Benchmark Suite overlapped blocking. Our results point to multiple shortcomings For our evaluation, we develop an open-source benchmark in the memory controller of Intel FPGAs, especially with respect suite called FPGAMemBench, available at https://github.com/ to memory access alignment, that can hinder the programmer’s zohourih/FPGAMemBench. -

Load Management and Demand Response in Small and Medium Data Centers

Thiago Lara Vasques Load Management and Demand Response in Small and Medium Data Centers PhD Thesis in Sustainable Energy Systems, supervised by Professor Pedro Manuel Soares Moura, submitted to the Department of Mechanical Engineering, Faculty of Sciences and Technology of the University of Coimbra May 2018 Load Management and Demand Response in Small and Medium Data Centers by Thiago Lara Vasques PhD Thesis in Sustainable Energy Systems in the framework of the Energy for Sustainability Initiative of the University of Coimbra and MIT Portugal Program, submitted to the Department of Mechanical Engineering, Faculty of Sciences and Technology of the University of Coimbra Thesis Supervisor Professor Pedro Manuel Soares Moura Department of Electrical and Computers Engineering, University of Coimbra May 2018 This thesis has been developed under the Energy for Sustainability Initiative of the University of Coimbra and been supported by CAPES (Coordenação de Aperfeiçoamento de Pessoal de Nível Superior – Brazil). ACKNOWLEDGEMENTS First and foremost, I would like to thank God for coming to the conclusion of this work with health, courage, perseverance and above all, with a miraculous amount of love that surrounds and graces me. The work of this thesis has also the direct and indirect contribution of many people, who I feel honored to thank. I would like to express my gratitude to my supervisor, Professor Pedro Manuel Soares Moura, for his generosity in opening the doors of the University of Coimbra by giving me the possibility of his orientation when I was a stranger. Subsequently, by his teachings, guidance and support in difficult times. You, Professor, inspire me with your humbleness given the knowledge you possess. -

Advanced X86

Advanced x86: BIOS and System Management Mode Internals Input/Output Xeno Kovah && Corey Kallenberg LegbaCore, LLC All materials are licensed under a Creative Commons “Share Alike” license. http://creativecommons.org/licenses/by-sa/3.0/ ABribuEon condiEon: You must indicate that derivave work "Is derived from John BuBerworth & Xeno Kovah’s ’Advanced Intel x86: BIOS and SMM’ class posted at hBp://opensecuritytraining.info/IntroBIOS.html” 2 Input/Output (I/O) I/O, I/O, it’s off to work we go… 2 Types of I/O 1. Memory-Mapped I/O (MMIO) 2. Port I/O (PIO) – Also called Isolated I/O or port-mapped IO (PMIO) • X86 systems employ both-types of I/O • Both methods map peripheral devices • Address space of each is accessed using instructions – typically requires Ring 0 privileges – Real-Addressing mode has no implementation of rings, so no privilege escalation needed • I/O ports can be mapped so that they appear in the I/O address space or the physical-memory address space (memory mapped I/O) or both – Example: PCI configuration space in a PCIe system – both memory-mapped and accessible via port I/O. We’ll learn about that in the next section • The I/O Controller Hub contains the registers that are located in both the I/O Address Space and the Memory-Mapped address space 4 Memory-Mapped I/O • Devices can also be mapped to the physical address space instead of (or in addition to) the I/O address space • Even though it is a hardware device on the other end of that access request, you can operate on it like it's memory: – Any of the processor’s instructions -

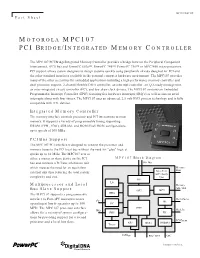

Motorola Mpc107 Pci Bridge/Integrated Memory Controller

MPC107FACT/D Fact Sheet MOTOROLA MPC107 PCI BRIDGE/INTEGRATED MEMORY CONTROLLER The MPC107 PCI Bridge/Integrated Memory Controller provides a bridge between the Peripheral Component Interconnect, (PCI) bus and PowerPC 603e™, PowerPC 740™, PowerPC 750™ or MPC7400 microprocessors. PCI support allows system designers to design systems quickly using peripherals already designed for PCI and the other standard interfaces available in the personal computer hardware environment. The MPC107 provides many of the other necessities for embedded applications including a high-performance memory controller and dual processor support, 2-channel flexible DMA controller, an interrupt controller, an I2O-ready message unit, an inter-integrated circuit controller (I2C), and low skew clock drivers. The MPC107 contains an Embedded Programmable Interrupt Controller (EPIC) featuring five hardware interrupts (IRQ’s) as well as sixteen serial interrupts along with four timers. The MPC107 uses an advanced, 2.5-volt HiP3 process technology and is fully compatible with TTL devices. Integrated Memory Controller The memory interface controls processor and PCI interactions to main memory. It supports a variety of programmable timing supporting DRAM (FPM, EDO), SDRAM, and ROM/Flash ROM configurations, up to speeds of 100 MHz. PCI Bus Support The MPC107 PCI interface is designed to connect the processor and memory buses to the PCI local bus without the need for "glue" logic at speeds up to 66 MHz. The MPC107 acts as either a master or slave device on the PCI MPC107 Block Diagram bus and contains a PCI bus arbitration unit 60x Bus which reduces the need for an equivalent Memory Data external unit thus reducing the total system Data Path ECC / Parity complexity and cost. -

The Impulse Memory Controller

IEEE TRANSACTIONS ON COMPUTERS, VOL. 50, NO. 11, NOVEMBER 2001 1 The Impulse Memory Controller John B. Carter, Member, IEEE, Zhen Fang, Student Member, IEEE, Wilson C. Hsieh, Sally A. McKee, Member, IEEE, and Lixin Zhang, Student Member, IEEE AbstractÐImpulse is a memory system architecture that adds an optional level of address indirection at the memory controller. Applications can use this level of indirection to remap their data structures in memory. As a result, they can control how their data is accessed and cached, which can improve cache and bus utilization. The Impuse design does not require any modification to processor, cache, or bus designs since all the functionality resides at the memory controller. As a result, Impulse can be adopted in conventional systems without major system changes. We describe the design of the Impulse architecture and how an Impulse memory system can be used in a variety of ways to improve the performance of memory-bound applications. Impulse can be used to dynamically create superpages cheaply, to dynamically recolor physical pages, to perform strided fetches, and to perform gathers and scatters through indirection vectors. Our performance results demonstrate the effectiveness of these optimizations in a variety of scenarios. Using Impulse can speed up a range of applications from 20 percent to over a factor of 5. Alternatively, Impulse can be used by the OS for dynamic superpage creation; the best policy for creating superpages using Impulse outperforms previously known superpage creation policies. Index TermsÐComputer architecture, memory systems. æ 1 INTRODUCTION INCE 1987, microprocessor performance has improved at memory. By giving applications control (mediated by the Sa rate of 55 percent per year; in contrast, DRAM latencies OS) over the use of shadow addresses, Impulse supports have improved by only 7 percent per year and DRAM application-specific optimizations that restructure data. -

Optimizing Thread Throughput for Multithreaded Workloads on Memory Constrained Cmps

Optimizing Thread Throughput for Multithreaded Workloads on Memory Constrained CMPs Major Bhadauria and Sally A. Mckee Computer Systems Lab Cornell University Ithaca, NY, USA [email protected], [email protected] ABSTRACT 1. INTRODUCTION Multi-core designs have become the industry imperative, Power and thermal constraints have begun to limit the replacing our reliance on increasingly complicated micro- maximum operating frequency of high performance proces- architectural designs and VLSI improvements to deliver in- sors. The cubic increase in power from increases in fre- creased performance at lower power budgets. Performance quency and higher voltages required to attain those frequen- of these multi-core chips will be limited by the DRAM mem- cies has reached a plateau. By leveraging increasing die ory system: we demonstrate this by modeling a cycle-accurate space for more processing cores (creating chip multiproces- DDR2 memory controller with SPLASH-2 workloads. Sur- sors, or CMPs) and larger caches, designers hope that multi- prisingly, benchmarks that appear to scale well with the threaded programs can exploit shrinking transistor sizes to number of processors fail to do so when memory is accurately deliver equal or higher throughput as single-threaded, single- modeled. We frequently find that the most efficient config- core predecessors. The current software paradigm is based uration is not the one with the most threads. By choosing on the assumption that multi-threaded programs with little the most efficient number of threads for each benchmark, contention for shared data scale (nearly) linearly with the average energy delay efficiency improves by a factor of 3.39, number of processors, yielding power-efficient data through- and performance improves by 19.7%, on average. -

(BEER): Determining DRAM On-Die ECC Functions by Exploiting DRAM Data Retention Characteristics

Bit-Exact ECC Recovery (BEER): Determining DRAM On-Die ECC Functions by Exploiting DRAM Data Retention Characteristics Minesh Pately Jeremie S. Kimzy Taha Shahroodiy Hasan Hassany Onur Mutluyz yETH Zurich¨ zCarnegie Mellon University Increasing single-cell DRAM error rates have pushed DRAM entirely within the DRAM chip [39, 76, 120, 129, 138]. On-die manufacturers to adopt on-die error-correction coding (ECC), ECC is completely invisible outside of the DRAM chip, so ECC which operates entirely within a DRAM chip to improve factory metadata (i.e., parity-check bits, error syndromes) that is used yield. e on-die ECC function and its eects on DRAM relia- to correct errors is hidden from the rest of the system. bility are considered trade secrets, so only the manufacturer Prior works [60, 97, 98, 120, 129, 133, 138, 147] indicate that knows precisely how on-die ECC alters the externally-visible existing on-die ECC codes are 64- or 128-bit single-error cor- reliability characteristics. Consequently, on-die ECC obstructs rection (SEC) Hamming codes [44]. However, each DRAM third-party DRAM customers (e.g., test engineers, experimental manufacturer considers their on-die ECC mechanism’s design researchers), who typically design, test, and validate systems and implementation to be highly proprietary and ensures not to based on these characteristics. reveal its details in any public documentation, including DRAM To give third parties insight into precisely how on-die ECC standards [68,69], DRAM datasheets [63,121,149,158], publi- transforms DRAM error paerns during error correction, we cations [76, 97, 98, 133], and industry whitepapers [120, 147]. -

Computer Architectures

Computer Architectures Processor+Memory Richard Šusta, Pavel Píša Czech Technical University in Prague, Faculty of Electrical Engineering AE0B36APO Computer Architectures Ver.1.00 2019 1 The main instruction cycle of the CPU 1. Initial setup/reset – set initial PC value, PSW, etc. 2. Read the instruction from the memory PC → to the address bus Read the memory contents (machine instruction) and transfer it to the IR PC+l → PC, where l is length of the instruction 3. Decode operation code (opcode) 4. Execute the operation compute effective address, select registers, read operands, pass them through ALU and store result 5. Check for exceptions/interrupts (and service them) 6. Repeat from the step 2 AE0B36APO Computer Architectures 2 Single cycle CPU – implementation of the load instruction lw: type I, rs – base address, imm – offset, rt – register where to store fetched data I opcode(6), 31:26 rs(5), 25:21 rt(5), 20:16 immediate (16), 15:0 ALUControl 25:21 WE3 SrcA Zero WE PC’ PC Instr A1 RD1 A RD ALU A RD Instr. A2 RD2 SrcB AluOut Data ReadData Memory Memory A3 Reg. WD3 File WD 15:0 Sign Ext SignImm B35APO Computer Architectures 3 Single cycle CPU – implementation of the load instruction lw: type I, rs – base address, imm – offset, rt – register where to store fetched data I opcode(6), 31:26 rs(5), 25:21 rt(5), 20:16 immediate (16), 15:0 Write on rising edge of CLK RegWrite = 1 ALUControl 25:21 WE3 SrcA Zero WE PC’ PC Instr A1 RD1 A RD ALU A RD Instr. -

Machxo2 LPDDR SDRAM Controller IP Core User's Guide

MachXO2™ LPDDR SDRAM Controller IP Core User’s Guide February 2014 IPUG92_01.3 Table of Contents Chapter 1. Introduction .......................................................................................................................... 4 Introduction ........................................................................................................................................................... 4 Quick Facts ........................................................................................................................................................... 4 Features ................................................................................................................................................................ 4 Chapter 2. Functional Description ........................................................................................................ 5 Overview ............................................................................................................................................................... 5 Initialization Block......................................................................................................................................... 5 Read Training Block..................................................................................................................................... 6 Data Control Block ....................................................................................................................................... 6 LPDDR I/Os ................................................................................................................................................ -

¡ Semiconductor MSM5718C50/Md5764802this Version: Feb

E2G1059-39-21 ¡ Semiconductor MSM5718C50/MD5764802This version: Feb. 1999 ¡ Semiconductor Previous version: Nov. 1998 MSM5718C50/MD5764802 18Mb (2M ´ 9) & 64Mb (8M ´ 8) Concurrent RDRAM DESCRIPTION The 18/64-Megabit Concurrent Rambus™ DRAMs (RDRAM®) are extremely high-speed CMOS DRAMs organized as 2M or 8M words by 8 or 9 bits. They are capable of bursting unlimited lengths of data at 1.67 ns per byte (13.3 ns per eight bytes). The use of Rambus Signaling Level (RSL) technology permits 600 MHz transfer rates while using conventional system and board design methodologies. Low effective latency is attained by operating the two or four 2KB sense amplifiers as high speed caches, and by using random access mode (page mode) to facilitate large block transfers. Concurrent (simultaneous) bank operations permit high effective bandwidth using interleaved transactions. RDRAMs are general purpose high-performance memory devices suitable for use in a broad range of applications including PC and consumer main memory, graphics, video, and any other application where high-performance at low cost is required. FEATURES • Compatible with Base RDRAMs • 600 MB/s peak transfer rate per RDRAM • Rambus Signaling Level (RSL) interface • Synchronous, concurrent protocol for block-oriented, interleaved (overlapped) transfers • 480 MB/s effective bandwidth for random 32 byte transfers from one RDRAM • 13 active signals require just 32 total pins on the controller interface (including power) • 3.3 V operation • Additional/multiple Rambus Channels each provide an additional 600 MB/s bandwidth • Two or four 2KByte sense amplifiers may be operated as caches for low latency access • Random access mode enables any burst order at full bandwidth within a page • Graphics features include write-per-bit and mask-per-bit operations • Available in horizontal surface mount plastic package (SHP32-P-1125-0.65-K) 1/45 ¡ Semiconductor MSM5718C50/MD5764802 PART NUMBERS The 18- and 64-Megabit RDRAMs are available in horizontal surface mount plastic package (SHP), with 533 and 600 MHz clock rate.