Markov Chain Monte Carlo Methods

Total Page:16

File Type:pdf, Size:1020Kb

Recommended publications

Early Stored Program Computers

Stored Program Computers, Thomas J. Bergin, Computing History Museum, American University, 7/9/2012. Early Thoughts about Stored Programming • January 1944: Moore School team thinks of better ways to do things; leverages delay line memories from War research • September 1944: John von Neumann visits project – Goldstine’s meeting at Aberdeen Train Station • October 1944: Army extends the ENIAC contract: research on EDVAC stored-program concept • Spring 1945: ENIAC working well • June 1945: First Draft of a Report on the EDVAC. First Draft Report (June 1945) • John von Neumann prepares (?) a report on the EDVAC which identifies how the machine could be programmed (unfinished very rough draft) – academic: publish for the good of science – engineers: patents, patents, patents • von Neumann never repudiates the myth that he wrote it; most members of the ENIAC team contribute ideas; Goldstine note about “bashing” summer letters together • 1.0 Definitions – The considerations which follow deal with the structure of a very high speed automatic digital computing system, and in particular with its logical control…. – The instructions which govern this operation must be given to the device in absolutely exhaustive detail. They include all numerical information which is required to solve the problem…. – Once these instructions are given to the device, it must be able to carry them out completely and without any need for further intelligent human intervention…. • 2.0 Main Subdivision of the System – First: since the device is a computor, it will have to perform the elementary operations of arithmetics…. – Second: the logical control of the device is the proper sequencing of its operations (by…a control organ. -

The Convergence of Markov Chain Monte Carlo Methods

The Convergence of Markov chain Monte Carlo Methods: From the Metropolis method to Hamiltonian Monte Carlo. Michael Betancourt. arXiv:1706.01520v2 [stat.ME] 10 Jan 2018. From its inception in the 1950s to the modern frontiers of applied statistics, Markov chain Monte Carlo has been one of the most ubiquitous and successful methods in statistical computing. The development of the method in that time has been fueled by not only increasingly difficult problems but also novel techniques adopted from physics. In this article I will review the history of Markov chain Monte Carlo from its inception with the Metropolis method to the contemporary state-of-the-art in Hamiltonian Monte Carlo. Along the way I will focus on the evolving interplay between the statistical and physical perspectives of the method. This particular conceptual emphasis, not to mention the brevity of the article, requires a necessarily incomplete treatment. A complementary, and entertaining, discussion of the method from the statistical perspective is given in Robert and Casella (2011). Similarly, a more thorough but still very readable review of the mathematics behind Markov chain Monte Carlo and its implementations is given in the excellent survey by Neal (1993). I will begin with a discussion of the mathematical relationship between physical and statistical computation before reviewing the historical introduction of Markov chain Monte Carlo and its first implementations. Then I will continue to the subsequent evolution of the method with increasingly sophisticated implementations, ultimately leading to the advent of Hamiltonian Monte Carlo. 1. FROM PHYSICS TO STATISTICS AND BACK AGAIN At the dawn of the twentieth century, physics became increasingly focused on understanding the equilibrium behavior of thermodynamic systems, especially ensembles of particles. -

Computer Development at the National Bureau of Standards

Computer Development at the National Bureau of Standards. The first fully operational stored-program electronic computer in the United States was built at the National Bureau of Standards. The Standards Electronic Automatic Computer (SEAC) [1] (Fig. 1) began useful computation in May 1950. The stored program was held in the machine’s internal memory where the machine itself could modify it for successive stages of a computation. This made a dramatic increase in the power of programming. Although originally intended as an “interim” computer, SEAC had a productive life of 14 years, with a profound effect on all U.S. Government computing, the extension of the use of computers into previously unknown applications, and the further development of computing machines in industry and academia. In earlier developments, the possibility of doing electronic computation had been demonstrated by Atanasoff at Iowa State University in 1937. … individual computations could be performed at electronic speed, but the instructions (the program) that drove these computations could not be modified and sequenced at the same electronic speed as the computations. Other early computers in academia, government, and industry, such as Edvac, Ordvac, Illiac I, the Von Neumann IAS computer, and the IBM 701, would not begin productive operation until 1952. In 1947, the U.S. Bureau of the Census, in cooperation with the Departments of the Army and Air Force, decided to contract with Eckert and Mauchly, who had developed the ENIAC, to create a computer that would have an internally stored program which could be run at electronic speeds. Because of a demonstrated competence in designing electronic components, the National Bureau of Standards was asked to be technical monitor of the contract that was issued, -

Lecture #8: Monte Carlo Method

Lecture #8: Monte Carlo method. ENGG304: Uncertainty, Reliability and Risk. Edoardo Patelli, Institute for Risk and Uncertainty, University of Liverpool (a member of the Russell Group), 18 March 2019. E: [email protected] W: www.liv.ac.uk/risk-and-uncertainty T: +44 01517944079. Lecture Outline • 1 Introduction • 2 Monte Carlo method – Random Number Generator – Sampling Methods – Buffon’s experiment – Monte Carlo integration – Probability of failure • 3 Summary • 4 Computer based class – Some useful slides – Assignments. Programme ENGG304 • 1 28/01/2019 Introduction • 2 08/02/2019 Human error • 3 11/02/2019 Qualitative risk assessment: Safety analysis • 4 18/02/2019 Qualitative risk assessment: Event Tree and Fault Tree • 5 25/02/2019 Tutorial I • 6 04/03/2019 Model of random phenomena • 7 11/03/2019 Structural reliability • 8 18/03/2019 Monte Carlo simulation I + Hands-on session • 9 25/03/2019 Tutorial II • 10 01/04/2019 Monte Carlo simulation II + Tutorial III (Hands-on session). Summary Lecture #7: Safety Margin • Fundamental problem: performance function defined as “Capacity” - “Demand” • Safety margin: $M = C - D = g(x)$ • For normal and independent random variables: $M \sim N(\mu_M, \sigma_M^2)$, with $\mu_M = \mu_C - \mu_D$ and $\sigma_M = \sqrt{\sigma_C^2 + \sigma_D^2}$ • Reliability index $\beta$: $\beta = \mu_M / \sigma_M$ represents the number of standard deviations by which the mean value of the safety margin $M$ exceeds zero. Introduction -
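
The safety-margin relations quoted in this excerpt lend themselves to a small numerical check. The following is a minimal sketch, not taken from the lecture: the function names and the example means and standard deviations are hypothetical, and it assumes independent normal capacity and demand, in which case the failure probability is P(M < 0) = Φ(-β). The Monte Carlo estimate simply counts sampled margins that fall below zero.

```python
import math
import random
from statistics import NormalDist


def reliability_index(mu_c, sigma_c, mu_d, sigma_d):
    """Return beta = mu_M / sigma_M for the safety margin M = C - D."""
    mu_m = mu_c - mu_d
    sigma_m = math.sqrt(sigma_c ** 2 + sigma_d ** 2)
    return mu_m / sigma_m


def failure_probability_exact(beta):
    """P(M < 0) = Phi(-beta) when M is normally distributed."""
    return NormalDist().cdf(-beta)


def failure_probability_mc(mu_c, sigma_c, mu_d, sigma_d, n=200_000, seed=0):
    """Estimate P(C - D < 0) by sampling independent normal C and D."""
    rng = random.Random(seed)
    failures = 0
    for _ in range(n):
        capacity = rng.gauss(mu_c, sigma_c)
        demand = rng.gauss(mu_d, sigma_d)
        if capacity - demand < 0:
            failures += 1
    return failures / n


# Hypothetical inputs: capacity ~ N(10, 3^2), demand ~ N(4, 4^2).
beta = reliability_index(10.0, 3.0, 4.0, 4.0)
p_exact = failure_probability_exact(beta)
p_mc = failure_probability_mc(10.0, 3.0, 4.0, 4.0)
```

With these made-up inputs the margin has mean 6 and standard deviation 5, so the two estimates should agree to within the sampling error of the simulation.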

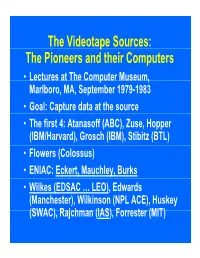

The Pioneers and Their Computers

The Videotape Sources: The Pioneers and their Computers • Lectures at The Computer Museum, Marlboro, MA, September 1979-1983 • Goal: Capture data at the source • The first 4: Atanasoff (ABC), Zuse, Hopper (IBM/Harvard), Grosch (IBM), Stibitz (BTL) • Flowers (Colossus) • ENIAC: Eckert, Mauchly, Burks • Wilkes (EDSAC … LEO), Edwards (Manchester), Wilkinson (NPL ACE), Huskey (SWAC), Rajchman (IAS), Forrester (MIT). What did it feel like then? • What were the computers? • Why did their inventors build them? • What materials (technology) did they build from? • What were their speed and memory size specs? • How did they work? • How were they used or programmed? • What were they used for? • What did each contribute to future computing? • What were the by-products? and alumni/ae? The “classic” five boxes of a stored program digital computer: Memory (M), Input (I), Output (O), Central Control (CC), Central Arithmetic (CA). How was programming done before programming languages and O/Ss? • ENIAC was programmed by routing control pulse cables forming the “program counter” • Clippinger and von Neumann made “function codes” for the tables of ENIAC • Kilburn at Manchester ran the first 17 word program • Wilkes, Wheeler, and Gill wrote the first book on programming, reprinted by Babbage Institute Series • Parallel versus Serial • Pre-programming languages and operating systems • Big idea: compatibility for program investment – EDSAC was transferred to Leo – The IAS Computers built at Universities. [Figure: Time Line of First Computers, 1935-1955; labels: BTL, Zuse, Atanasoff, IBM ASCC/SSEC, CPC, ENIAC, EDVAC, UNIVAC I, IAS, Colossus, Manchester, Ferranti, EDSAC, Leo, ACE, DEUCE, Whirlwind, SEAC & SWAC] ENIAC Project Time Line & Descendants: IBM 701, Philco S2000, ERA.. -

SIMD1 – Illiac IV

Illiac IV History (Fall 2001, Lecture SIMD1) • First massively parallel computer – SIMD (duplicate the PE, not the CU) – First large system with semiconductor-based primary memory • Three earlier designs (vacuum tubes and transistors) culminating in the Illiac IV design, all at the University of Illinois – Logical organization similar to the Solomon (prototyped by Westinghouse) – Sponsored by DARPA, built by various companies, assembled by Burroughs – Plan was for 256 PEs, in 4 quadrants of 64 PEs, but only one quadrant was built – Used at NASA Ames Research Center in mid-1970s. Illiac IV Architectural Overview • One CU (control unit), 64 64-bit PEs (processing elements), each PE has a PEM (PE memory) • CU operates on scalars, PEs on vector-aligned arrays – All PEs execute the instruction broadcast by the CU, if they are in active mode – Each PE can perform various arithmetic and logical instructions – Each PE has a memory with 2048 64-bit words, accessed in less than 188 ns – PEs can operate on data in 64-bit, 32-bit, and 8-bit formats • Data routed between PEs various ways • I/O is handled by a separate Burroughs … Programming Issues • Consider the following FORTRAN code: `DO 10 I = 1, 64` followed by `10 A(I) = B(I) + C(I)` – Put A(1), B(1), C(1) on PU 1, etc. • Each PE loads RGA from base+1, adds base+2, stores into base, where “base” is base of data in PEM • Each PE does this simultaneously, giving a speedup of 64 – For fewer than 64 array elements, some processors will sit idle – For more than 64 array elements, some processors might have to do more work • For some algorithms, it may be desirable to turn off PEs – 64 PEs compute, then one half passes data to other half, then 32 PEs compute, etc. -
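
The lockstep execution described in this excerpt can be imitated in ordinary sequential code. The sketch below is an illustration only, not Illiac IV code: the function names, the three-word memory layout per PE, and the list-based "registers" are hypothetical. `broadcast_add` mimics the load / add / store sequence broadcast to every active PE, and `halving_sum` mimics the pattern in which 64 PEs compute, one half passes data to the other half, then 32 PEs compute, and so on.

```python
NUM_PES = 64  # one quadrant of 64 processing elements, as in the excerpt


def broadcast_add(b, c):
    """Emulate A(I) = B(I) + C(I) with element I placed on PE I."""
    n = len(b)
    if len(c) != n or n > NUM_PES:
        raise ValueError("need two equal-length arrays of at most 64 elements")
    # Hypothetical PEM layout: base+0 holds A, base+1 holds B, base+2 holds C.
    pem = [[0, b[i], c[i]] if i < n else [0, 0, 0] for i in range(NUM_PES)]
    active = [i < n for i in range(NUM_PES)]  # PEs without data sit idle
    rga = [0] * NUM_PES  # one accumulator register per PE

    # Three broadcast instructions; each loop stands for one lockstep step.
    for pe in range(NUM_PES):
        if active[pe]:
            rga[pe] = pem[pe][1]      # load from base+1
    for pe in range(NUM_PES):
        if active[pe]:
            rga[pe] += pem[pe][2]     # add base+2
    for pe in range(NUM_PES):
        if active[pe]:
            pem[pe][0] = rga[pe]      # store into base
    return [pem[pe][0] for pe in range(n)]


def halving_sum(values):
    """Sum 64 values by repeatedly passing the upper half to the lower half."""
    vals = list(values)
    if len(vals) != NUM_PES:
        raise ValueError("expected exactly 64 values, one per PE")
    width, steps = NUM_PES, 0
    while width > 1:
        half = width // 2
        for pe in range(half):        # only `half` PEs stay active this step
            vals[pe] += vals[pe + half]
        width = half
        steps += 1
    return vals[0], steps
```

On real SIMD hardware each inner loop would be a single broadcast instruction, so the reduction would take six steps rather than 63 sequential additions.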

Computer History a Look Back Contents

Computer History: A look back. Contents • 1 Computer • 1.1 Etymology • 1.2 History – 1.2.1 Pre-twentieth century – 1.2.2 First general-purpose computing device – 1.2.3 Later analog computers – 1.2.4 Digital computer development – 1.2.5 Mobile computers become dominant • 1.3 Programs – 1.3.1 Stored program architecture – 1.3.2 Machine code – 1.3.3 Programming language – 1.3.4 Fourth Generation Languages – 1.3.5 Program design – 1.3.6 Bugs • 1.4 Components – 1.4.1 Control unit – 1.4.2 Central processing unit (CPU) – 1.4.3 Arithmetic logic unit (ALU) – 1.4.4 Memory – 1.4.5 Input/output (I/O) – 1.4.6 Multitasking – 1.4.7 Multiprocessing -

RN Random Numbers

Random Numbers (RN). Copyright (C) June … Computational Science Education Project. Remarks • Keywords: random, pseudorandom, linear congruential, lagged Fibonacci • List of prerequisites: some exposure to sequences and series; some ability to work in base … arithmetic helpful; good background in FORTRAN or some other procedural language • List of computational methods: pseudorandom numbers, random numbers, linear congruential generators (LCGs), lagged Fibonacci generators (LFGs) • List of architectures/computers: any computer with a FORTRAN compiler and bitwise logical functions • List of codes supplied: ranlc.for, linear congruential generator; ranlf.for, lagged Fibonacci generator; random.for, portable linear congruential generator after Park and Miller; getseed.for, to generate initial seeds from the time and date, implemented for Unix and IBM PCs • Scope: … lectures, depending upon depth of coverage. Notation Key • a: integer multiplier (… a … m) • c: integer constant (… c … m) • D: length of needle in Buffon's needle experiment • F: fraction of trials needle falls within ruled grid in Buffon's needle experiment • k: lag in lagged Fibonacci generator • …: lag in lagged Fibonacci generator (… k) • M: number of binary bits in m • m: integer modulus (m …) • N: number of trials • N_p: number of parallel processors • P: period of generator • p: a prime integer • R_n: n-th random real number • S: spacing between grid in Buffon's needle experiment • X_n: n-th random integer. Subscripts and Superscripts • … denotes initial value or seed • j denotes j-th value • … denotes …-th value • k denotes k-th value • n denotes n-th value -
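
The two generator families named in this excerpt can be sketched briefly. This is a hedged illustration rather than a port of the FORTRAN codes listed above: the function names are hypothetical, the linear congruential generator uses the Park and Miller "minimal standard" constants (multiplier 16807, modulus 2^31 - 1, constant 0), and the lagged Fibonacci generator is an additive variant whose lags (17, 5) and 32-bit word size are assumptions chosen for the example.

```python
from collections import deque
from itertools import islice


def park_miller(seed):
    """Yield X_{n+1} = (a * X_n) mod m with a = 16807 and m = 2**31 - 1."""
    a, m = 16807, 2 ** 31 - 1
    x = seed % m
    if x == 0:
        raise ValueError("seed must not be a multiple of the modulus")
    while True:
        x = (a * x) % m
        yield x


def lagged_fibonacci(seeds, k=17, l=5, bits=32):
    """Yield X_n = (X_{n-k} + X_{n-l}) mod 2**bits (additive variant)."""
    if not 0 < l < k or len(seeds) != k:
        raise ValueError("need 0 < l < k and exactly k seed values")
    mask = (1 << bits) - 1
    state = deque((s & mask for s in seeds), maxlen=k)  # last k values
    while True:
        x = (state[-k] + state[-l]) & mask
        state.append(x)  # the oldest value drops off the left end
        yield x


def take(generator, n):
    """Return the next n values of a generator as a list."""
    return list(islice(generator, n))


# Uniform reals in (0, 1) are obtained by scaling with the modulus.
uniform_reals = [x / (2 ** 31 - 1) for x in take(park_miller(1), 5)]
```

In practice the lagged Fibonacci seeds would themselves come from another generator, for example `take(park_miller(seed), 17)`.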

Oral History Interview with David J. Wheeler

An Interview with DAVID J. WHEELER, OH 132. Conducted by William Aspray on 14 May 1987, Princeton, NJ. Charles Babbage Institute, The Center for the History of Information Processing, University of Minnesota, Minneapolis. Copyright, Charles Babbage Institute. Abstract: Wheeler, who was a research student at the University Mathematical Laboratory at Cambridge from 1948-51, begins with a discussion of the EDSAC project during his tenure. He compares the research orientation and the programming methods at Cambridge with those at the Institute for Advanced Study. He points out that, while the Cambridge group was motivated to process many smaller projects from the larger university community, the Institute was involved with a smaller number of larger projects. Wheeler mentions some of the projects that were run on the EDSAC, the user-oriented programming methods that developed at the laboratory, and the influence of the EDSAC model on the ILLIAC, the ORDVAC, and the IBM 701. He also discusses the weekly meetings held in conjunction with the National Physical Laboratory, the University of Birmingham, and the Telecommunications Research Establishment. These were attended by visitors from other British institutions as well as from the continent and the United States. Wheeler notes visits by Douglas Hartree (of Cavendish Laboratory), Nelson Blackman (of ONR), Peter Naur, Aad van Wijngaarden, Arthur van der Poel, Friedrich L. Bauer, and Louis Couffignal. In the final part of the interview Wheeler discusses his visit to Illinois where he worked on the ILLIAC and taught from September 1951 to September 1953. DAVID J. -

Parallel Architecture Hardware and General Purpose Operating System

Parallel Architecture Hardware and General Purpose Operating System Co-design. Oskar Schirmer, Göttingen, 2018-07-10. arXiv:1807.03546v1 [cs.DC] 10 Jul 2018. Abstract: Because most optimisations to achieve higher computational performance eventually are limited, parallelism that scales is required. Parallelised hardware alone is not sufficient, but software that matches the architecture is required to gain best performance. For decades now, hardware design has been guided by the basic design of existing software, to avoid the higher cost to redesign the latter. In doing so, however, quite a variety of superior concepts is excluded a priori. Consequently, co-design of both hardware and software is crucial where highest performance is the goal. For special purpose application, this co-design is common practice. For general purpose application, however, a precondition for usability of a computer system is an operating system which is both comprehensive and dynamic. As no such operating system has ever been designed, a sketch for a comprehensive dynamic operating system is presented, based on a straightforward hardware architecture to demonstrate how design decisions regarding software and hardware do coexist and harmonise. Contents • 1 Origin – 1.1 Performance – 1.2 Limits – 1.3 Transparent Structural Optimisation – 1.4 Vector Processing – 1.5 Asymmetric Multiprocessing – 1.6 Symmetric Multicore – 1.7 Multinode Computer • 2 Review – 2.1 Shared Memory – 2.2 Cache – 2.3 Synchronisation – 2.4 Time-Shared Multitasking – 2.5 Interrupts – 2.6 Exceptions – 2.7 Privileged Mode – 2.8 Peripheral I/O -

11/15/20 Engineering Computer Science James E. Robertson

The materials listed in this document are available for research at the University of Illinois Archives. For more information, email [email protected] or search http://www.library.illinois.edu/archives/archon for the record series number. Record Series Number 11/15/20. Engineering, Computer Science. James E. Robertson Papers, 1942-93. TABLE OF CONTENTS (Material: Box) • Biographical: 1 • Publications: 1 • Correspondence: 1-3 • Photography: 3 • Subject Files: 1942-62: 3; 1962-65: 4; 1965-72: 5; 1972-88: 6-7; 1948-53: 11; 1953-58: 12; 1958-70: 13 • Course Material: 8-9, 13 • Research Papers, Publications, and Reports: 9-10, 13 • Computer Logbooks and Manuals: 14-15 • Computer Codes: 16 • Oversize: 10, 16. Box 1: BIOGRAPHICAL: Biographical and vitas, ca. 1950s, 1966, 1981, 1993; Appointments, 1949-72. PUBLICATIONS: 1952-59; 1960-67; 1973-79; 1980-83; 1985-87. CORRESPONDENCE: 1947, 1949 (4 folders), 1950-54 (4 folders), 1955-56 (2 folders), 1957-58 (18 folders), 1959-74. Box 2: Correspondence (9 folders), 1975-79; DCL Correspondence (13 folders), 1980-82. Box 3: DCL Correspondence (7 folders), 1983-July 1984; Correspondence (2 folders), 1985-90. PHOTOGRAPHY, J.E. ROBERTSON: Drum Photos, Sept. 1953; Old Photos (Ordvac and Illiac), 1954; Pacific Travel Photo listing, 1962-63; Computer Panels & U of I photos, ca. 1965-70; Arithmetic Unit Glass Slides; Fast Switching Experiment Glass Slides. SUBJECT FILE, 1942-62: Certificates, 1942-51; Burks, Goldstine & von Neumann, Logical Design of an Electronic Computer, 1946-47; Computer Memory Improvement article in C-U Gazette, Feb. 8, 1949; "Preliminary Design of an Arithmetic Unit for...Binary Digital Computer," by J.E.R., June 23, 1950; Ordvac article in Daily Oklahoman, Jan. -

ILLIAC IV Is the Most Powerful by As Much As a Factor of Four

The ILLIAC IV System represents a fundamentally different approach to data processing. The limitation imposed by the velocity of light, once thought to be an absolute upper bound on computing power, has been stepped over by several approaches to computer architecture, of which the ILLIAC IV is the most powerful by as much as a factor of four. The conquest of the limitations of the velocity of light was foreseen by Herman Kahn and A.J. Wiener in 1967, when they wrote: "...over the past fifteen years this basic criterion of computer performance has increased by a factor of ten every two or three years... While some will argue that we are beginning to reach limits set by basic physical constants, such as the speed of light, this may not be true, especially when one considers new techniques in time sharing, segmentation of programs to add flexibility, and parallel processing computers...(such as)...the ILLIAC IV..." ILLIAC IV represents a significant step forward in computer systems architecture offering - greatly improved performances: 200 MIPS computation speed; 10^9 bits/sec I/O transfer rate; 10^6 bytes of high-speed integrated circuit memories; 2.5 × 10^9 bits of parallel disk storage - contemporary technology: ECL circuits; semiconductor memories; belted cables - and a new approach to the art of computing using parallelism, which offers an opportunity to programmers to utilize the vast power of the system as effectively as possible. [Figure: ILLIAC IV Quadrant] MAJOR SYSTEM ELEMENTS As shown in the accompanying system diagram, the major elements of the ILLIAC IV System are the Array Subsystem, the I/O Subsystem, the Disk File Subsystem, and the B 6700 Control Computer Subsystem.