Modern GPU Architectures

Recommended publications

ATI Radeon™ HD 4870 Computation Highlights

AMD: Entering the Golden Age of Heterogeneous Computing. Michael Mantor, Senior GPU Compute Architect / Fellow, AMD Graphics Product Group.

The 4 pillars of massively parallel compute offload:
• Performance: Moore's Law → 2x in under 18 months; the frequency/power/complexity wall
• Power: parallelism → opportunity for growth
• Price
• Programming models

The GPU is the first successful massively parallel COMMODITY architecture with a programming model that managed to tame thousands of parallel threads in hardware to perform useful work efficiently.

Quick recap of where we are (performance, power, price): ATI Radeon™ HD 4850, 4x performance/W and performance/mm² in a year (chart also covering the ATI Radeon™ X1800 XT, X1900 XTX, X1950 PRO, HD 2900 XT and HD 3850). GigaFLOPS per watt: maximum theoretical performance divided by maximum board power. GigaFLOPS per $: maximum theoretical performance divided by price as reported on www.buy.com as of 9/24/08.

| | ATI Radeon™ HD 4850 | ATI Radeon™ HD 4870 |
|---|---|---|
| Tagline | Designed to perform in a single slot | First graphics card with GDDR5 |
| SP compute power | 1.0 TFLOPS | 1.2 TFLOPS |
| DP compute power | 200 GFLOPS | 240 GFLOPS |
| Core clock speed | 625 MHz | 750 MHz |
| Stream processors | 800 | 800 |
| Memory type | GDDR3 | GDDR5 (3.6 Gbps) |
| Memory capacity | 512 MB | 512 MB |
| Max board power | 110 W | 160 W |
| Memory bandwidth | 64 GB/s | 115.2 GB/s |

ATI Radeon™ HD 4870 X2: incredible balance of performance, power, price.
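The SP and DP figures quoted for the HD 4850 and HD 4870 are consistent with a simple peak-throughput model. A minimal sketch, assuming each stream processor retires one multiply-add (counted as 2 FLOPs) per clock, that DP runs at one fifth of the SP rate (as the 1.0 TFLOPS / 200 GFLOPS pair implies), and a 256-bit memory bus on the HD 4870 (the bus width is an assumption; it is not stated in the slides):

```python
def peak_gflops(stream_processors: int, clock_mhz: float, flops_per_clock: int = 2) -> float:
    """Peak single-precision GFLOPS, assuming one multiply-add (2 FLOPs) per SP per clock."""
    return stream_processors * clock_mhz * flops_per_clock / 1000.0


def bandwidth_gb_s(data_rate_gbps: float, bus_width_bits: int) -> float:
    """Peak memory bandwidth: per-pin data rate times bus width, converted from bits to bytes."""
    return data_rate_gbps * bus_width_bits / 8.0


# Figures from the slides (stream processors, core clock, max board power).
cards = {
    "HD 4850": {"sps": 800, "clock_mhz": 625, "board_w": 110},
    "HD 4870": {"sps": 800, "clock_mhz": 750, "board_w": 160},
}

for name, c in cards.items():
    sp = peak_gflops(c["sps"], c["clock_mhz"])
    dp = sp / 5  # DP rate implied by the slides' SP/DP pairs
    print(f"{name}: SP {sp:.0f} GFLOPS, DP {dp:.0f} GFLOPS, {sp / c['board_w']:.1f} GFLOPS/W")

# Assumed 256-bit bus at the slide's 3.6 Gbps GDDR5 data rate.
print(f"HD 4870 bandwidth: {bandwidth_gb_s(3.6, 256):.1f} GB/s")
```

The GFLOPS/W values follow the slides' own definition (maximum theoretical performance divided by maximum board power), giving about 9.1 GFLOPS/W for the HD 4850 and 7.5 GFLOPS/W for the HD 4870.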

An Evolution of Mobile Graphics

AN EVOLUTION OF MOBILE GRAPHICS. Michael C. Shebanow, Vice President, Advanced Processor Lab, Samsung Electronics, July 20, 2013.

DISCLAIMER
• The views herein are my own
• They do not represent Samsung's vision nor product plans

Outline: • The Mobile Market • Review of GPU Tech • GPU Efficiency • User Experience • Tech Challenges • Summary

A NEW WORLD COMING? The rise of the mobile GPU and connectivity.

DISCRETE GPU MARKET: flattening.

MOBILE GPU MARKET (chart: smartphones, "phablets", tablets)
• In 2012, an estimated 800+ million mobile GPUs shipped: ~123M in tablets and ~712M in smartphones
• Will easily exceed 1B in the coming years
• Trend: discrete GPU relatively flat; mobile growing rapidly

WW INTERNET TRAFFIC (source: Cisco VNI)
• Internet traffic growth rate is staggering
• 2012 total traffic is 13.7 GB per person per month
• 2012 smartphone traffic is 0.342 GB per person per month
• 2017 smartphone traffic is expected to reach 2.7 GB per person per month

| Year | Internet IP traffic (TB/sec) | Growth rate | Mobile traffic (TB/sec) |
|---|---|---|---|
| 2005 | 0.9 | | 0.00 |
| 2006 | 1.5 | 65% | 0.00 |
| 2007 | 2.5 | 61% | 0.01 |
| 2008 | 3.8 | 54% | 0.01 |
| 2009 | 5.6 | 45% | 0.04 |
| 2010 | 7.8 | 40% | 0.10 |
| 2011 | 10.6 | 36% | 0.23 |
| 2012 | 12.4 | 17% | 0.34 |

WHERE ARE WE HEADED?
• Enormous quantity of GPUs
• Large amount of interconnectivity
• Better I/O

A BRIEF REVIEW OF GPU TECH: GPU pipelines

MOBILE GPU PIPELINE ARCHITECTURES
• Tile-based immediate mode rendering (TBIMR): IA → VS → CCV → RS → PS → ROP
• Tile-based deferred scene rendering (TBDR): IA → VS → CCV → RS → PS → ROP

IA = input assembler; VS = vertex shader; CCV = cull, clip, viewport transform; RS = rasterization, …
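Both mobile pipelines listed above are tile-based: the screen is divided into small tiles, and each primitive is first assigned ("binned") to the tiles it may touch, so that rasterization and shading can then proceed one tile at a time. A minimal sketch of that binning step, assuming conservative bounding-box binning and a hypothetical 16x16-pixel tile size (neither detail is specified in the slides):

```python
from collections import defaultdict
from typing import Dict, List, Tuple

Point = Tuple[float, float]
Triangle = Tuple[Point, Point, Point]

TILE = 16  # hypothetical tile size in pixels


def bin_triangles(tris: List[Triangle], width: int, height: int) -> Dict[Tuple[int, int], List[int]]:
    """Map each (tile_x, tile_y) to the indices of triangles whose bounding box overlaps it."""
    tiles_x = (width + TILE - 1) // TILE
    tiles_y = (height + TILE - 1) // TILE
    bins: Dict[Tuple[int, int], List[int]] = defaultdict(list)
    for i, tri in enumerate(tris):
        xs = [p[0] for p in tri]
        ys = [p[1] for p in tri]
        # Convert the bounding box to tile coordinates, clamped to the screen.
        x0 = max(0, int(min(xs)) // TILE)
        x1 = min(tiles_x - 1, int(max(xs)) // TILE)
        y0 = max(0, int(min(ys)) // TILE)
        y1 = min(tiles_y - 1, int(max(ys)) // TILE)
        # Off-screen triangles give empty ranges and are never binned.
        for ty in range(y0, y1 + 1):
            for tx in range(x0, x1 + 1):
                bins[(tx, ty)].append(i)
    return dict(bins)


tris = [((1, 1), (10, 2), (5, 12)), ((20, 5), (40, 5), (30, 30))]
print(bin_triangles(tris, 64, 64))
```

Here the first triangle lands only in tile (0, 0) and the second in the four tiles (1, 0), (2, 0), (1, 1) and (2, 1). A deferred (TBDR) design would additionally resolve visibility within each tile before running the pixel shader; that step is omitted from this sketch.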

NVIDIA Quadro P4000

NVIDIA Quadro P4000: GP104 graphics processor, 1792 cores, 112 TMUs, 64 ROPs, 8192 MB memory, GDDR5 memory type, 256-bit bus width.

The Quadro P4000 is a professional graphics card by NVIDIA, launched in February 2017. Built on the 16 nm process and based on the GP104 graphics processor, the card supports DirectX 12.0. The GP104 graphics processor is a large chip with a die area of 314 mm² and 7,200 million transistors. Unlike the fully unlocked GeForce GTX 1080, which uses the same GPU with all 2560 shaders enabled, the Quadro P4000 has some shading units disabled by NVIDIA to reach the product's target shader count. It features 1792 shading units, 112 texture mapping units and 64 ROPs. NVIDIA has paired 8,192 MB of GDDR5 memory with the GPU, connected through a 256-bit memory interface. The GPU operates at 1227 MHz and can boost up to 1480 MHz; the memory runs at 1502 MHz. We recommend the NVIDIA Quadro P4000 for gaming with highest details at resolutions up to, and including, 5760x1080. The card draws power from a single 6-pin power connector, with power draw rated at 105 W maximum. Display outputs include 4x DisplayPort. The Quadro P4000 connects to the rest of the system using a PCIe 3.0 x16 interface. The card measures 241 mm in length and features a single-slot cooling solution.

Graphics Processor: GPU Name: GP104 · Architecture: Pascal · Process Size: 16 nm · Transistors: 7,200 million
Graphics Card: Released: Feb 6th, 2017 · Production Status: Active · Bus Interface: PCIe 3.0 x16
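The shader and texture-unit counts above can be related through Pascal's streaming-multiprocessor (SM) layout. A minimal sketch, assuming 128 CUDA cores and 8 texture units per GP104 SM and one fused multiply-add (2 FLOPs) per core per clock; these per-SM figures are assumptions not stated in the text above:

```python
CORES_PER_SM = 128  # assumed GP104 SM width
TMUS_PER_SM = 8     # assumed texture units per GP104 SM


def enabled_sms(shading_units: int) -> int:
    """Number of SMs implied by a shader count, assuming whole SMs are enabled or disabled."""
    sms, rem = divmod(shading_units, CORES_PER_SM)
    if rem:
        raise ValueError("shader count is not a whole number of SMs")
    return sms


def peak_fp32_gflops(shading_units: int, clock_mhz: float) -> float:
    """Peak FP32 throughput, counting one FMA (2 FLOPs) per shading unit per clock."""
    return shading_units * 2 * clock_mhz / 1000.0


p4000_sms = enabled_sms(1792)    # Quadro P4000
gtx1080_sms = enabled_sms(2560)  # fully enabled GP104 (GeForce GTX 1080)
print(p4000_sms, gtx1080_sms, p4000_sms * TMUS_PER_SM)
print(f"Base: {peak_fp32_gflops(1792, 1227):.0f} GFLOPS, boost: {peak_fp32_gflops(1792, 1480):.0f} GFLOPS")
```

Under these assumptions the P4000 has 14 of GP104's 20 SMs enabled, which also reproduces its 112 texture units, and its peak FP32 rate is roughly 4.4 TFLOPS at the base clock and 5.3 TFLOPS at the boost clock.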

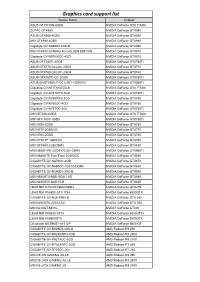

Graphics Card Support List

Graphics card support list

| Device Name | Chipset |
|---|---|
| ASUS GTXTITAN-6GD5 | NVIDIA GeForce GTX TITAN |
| ZOTAC GTX980 | NVIDIA GeForce GTX980 |
| ASUS GTX980-4GD5 | NVIDIA GeForce GTX980 |
| MSI GTX980-4GD5 | NVIDIA GeForce GTX980 |
| Gigabyte GV-N980D5-4GD-B | NVIDIA GeForce GTX980 |
| MSI GTX970 GAMING 4G GOLDEN EDITION | NVIDIA GeForce GTX970 |
| Gigabyte GV-N970IXOC-4GD | NVIDIA GeForce GTX970 |
| ASUS GTX780TI-3GD5 | NVIDIA GeForce GTX780Ti |
| ASUS GTX770-DC2OC-2GD5 | NVIDIA GeForce GTX770 |
| ASUS GTX760-DC2OC-2GD5 | NVIDIA GeForce GTX760 |
| ASUS GTX750TI-OC-2GD5 | NVIDIA GeForce GTX750Ti |
| ASUS ENGTX560-Ti-DCII/2D1-1GD5/1G | NVIDIA GeForce GTX560Ti |
| Gigabyte GV-NTITAN-6GD-B | NVIDIA GeForce GTX TITAN |
| Gigabyte GV-N78TWF3-3GD | NVIDIA GeForce GTX780Ti |
| Gigabyte GV-N780WF3-3GD | NVIDIA GeForce GTX780 |
| Gigabyte GV-N760OC-4GD | NVIDIA GeForce GTX760 |
| Gigabyte GV-N75TOC-2GI | NVIDIA GeForce GTX750Ti |
| MSI NTITAN-6GD5 | NVIDIA GeForce GTX TITAN |
| MSI GTX 780Ti 3GD5 | NVIDIA GeForce GTX780Ti |
| MSI N780-3GD5 | NVIDIA GeForce GTX780 |
| MSI N770-2GD5/OC | NVIDIA GeForce GTX770 |
| MSI N760-2GD5 | NVIDIA GeForce GTX760 |
| MSI N750 TF 1GD5/OC | NVIDIA GeForce GTX750 |
| MSI GTX680-2GB/DDR5 | NVIDIA GeForce GTX680 |
| MSI N660Ti-PE-2GD5-OC/2G-DDR5 | NVIDIA GeForce GTX660Ti |
| MSI N680GTX Twin Frozr 2GD5/OC | NVIDIA GeForce GTX680 |
| GIGABYTE GV-N670OC-2GD | NVIDIA GeForce GTX670 |
| GIGABYTE GV-N650OC-1GI/1G-DDR5 | NVIDIA GeForce GTX650 |
| GIGABYTE GV-N590D5-3GD-B | NVIDIA GeForce GTX590 |
| MSI N580GTX-M2D15D5/1.5G | NVIDIA GeForce GTX580 |
| MSI N465GTX-M2D1G-B | NVIDIA GeForce GTX465 |
| LEADTEK GTX275/896M-DDR3 | NVIDIA GeForce GTX275 |
| LEADTEK PX8800 GTX TDH | NVIDIA GeForce 8800GTX |
| GIGABYTE GV-N26-896H-B | … |

GPUs: The Hype, The Reality, and The Future

Uppsala Programming for Multicore Architectures Research Center. GPUs: The Hype, The Reality, and The Future. David Black-Schaffer, Assistant Professor, Department of Information Technology, Uppsala University, 25/11/2011.

Today:
1. The hype
2. What makes a GPU a GPU?
3. Why are GPUs scaling so well?
4. What are the problems?
5. What's the future?

THE HYPE

How good are GPUs? (chart: 100x vs. 3x)

Real World Software
• Press release, 10 Nov 2011: "NVIDIA today announced that four leading applications… have added support for multiple GPU acceleration, enabling them to cut simulation times from days to hours."
• GROMACS: 2-3x overall; implicit solvers 10x, PME simulations 1x
• LAMMPS: 2-8x for double precision; up to 15x for mixed precision
• QMCPACK: 3x

2x is AWESOME! Most research claims 5-10%.

GPUs for Linear Algebra: 5 CPUs = 75% of 1 GPU (StarPU for MAGMA)

GPUs by the Numbers (Peak and TDP): chart of watts, GFLOPS and bandwidth, normalized to the Intel 3960X (note: Intel 32 nm vs. 40 nm, 36% smaller per transistor)
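One common way to reason about the gap between headline figures like 100x and the 2-3x whole-application gains reported above is Amdahl's law, a standard model that the slides themselves do not invoke. A minimal sketch; the offloaded fractions below are hypothetical inputs, since the slides do not report them for GROMACS or any other code:

```python
def amdahl_speedup(accelerated_fraction: float, kernel_speedup: float) -> float:
    """Overall speedup when only `accelerated_fraction` of the original runtime runs `kernel_speedup` times faster."""
    if not 0.0 <= accelerated_fraction <= 1.0:
        raise ValueError("accelerated_fraction must be in [0, 1]")
    serial = 1.0 - accelerated_fraction  # part of the runtime left on the CPU
    return 1.0 / (serial + accelerated_fraction / kernel_speedup)


# Hypothetical fractions: with 10x kernels, offloading 50-70% of runtime gives roughly 1.8-2.7x overall.
for f in (0.5, 0.7, 0.9):
    print(f"{f:.0%} offloaded at 10x -> {amdahl_speedup(f, 10.0):.2f}x overall")
```

The un-accelerated remainder quickly dominates: even with 90% of the runtime offloaded to a 10x kernel, the whole application speeds up by only about 5.3x.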

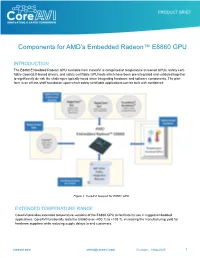

AMD Radeon E8860

Components for AMD's Embedded Radeon™ E8860 GPU

INTRODUCTION
The E8860 Embedded Radeon GPU available from CoreAVI is composed of temperature-screened GPUs, safety-certifiable OpenGL®-based drivers, and safety-certifiable GPU tools, which have been pre-integrated and validated together to significantly de-risk the challenges typically faced when integrating hardware and software components. The platform is an off-the-shelf foundation upon which safety-certifiable applications can be built with confidence.

Figure 1: CoreAVI Support for E8860 GPU

EXTENDED TEMPERATURE RANGE
CoreAVI provides extended-temperature versions of the E8860 GPU to facilitate its use in rugged embedded applications. CoreAVI functionally tests the E8860 over -40 °C Tj to +105 °C Tj, increasing the manufacturing yield for hardware suppliers while reducing supply delays to end customers.

coreavi.com · Revision 13Nov2020

E8860 GPU LONG TERM SUPPLY AND SUPPORT
CoreAVI has provided consistent and dedicated support for the supply and use of AMD embedded GPUs within the rugged Mil/Aero/Avionics market segment for over a decade. With the E8860, CoreAVI will continue that focused support to ensure that the software, hardware and long-life support are provided to meet the needs of customers' system life cycles. CoreAVI has extensive environmentally controlled storage facilities which are used to store the GPUs supplied to the Mil/Aero/Avionics marketplace, ensuring that a ready supply is available for the duration of any program. CoreAVI also provides post-Last Time Buy storage of GPUs and is often able to provide additional quantities of components when COTS hardware partners receive increased volume for existing products / systems requiring additional inventory.

Graphics: Mesa, AMDVLK, Adreno and Protected Xe Path

Published on Tux Machines (http://www.tuxmachines.org). By Roy Schestowitz, submitted on Monday 8th of February 2021, 11:48:11 PM. Filed under Graphics/Benchmarks.

Panfrost Gallium3D Lands Its New Bifrost Scheduler In Mesa 21.1 - Phoronix
Hitting Mesa 21.1 this morning is a scheduler implementation for Panfrost Gallium3D, the open-source Arm Mali graphics driver. Lead Panfrost developer Alyssa Rosenzweig has been working to implement a scheduler in Panfrost for the Arm Bifrost graphics code path. The scheduler has been in the works for a number of months, is passing the relevant conformance tests, and has now been merged.

AMDVLK 2021.Q1.3 Brings Performance Tuning For War Thunder - Phoronix
AMDVLK 2021.Q1.3 is out this morning as the latest snapshot of the official open-source AMD Radeon Vulkan driver for Linux systems, derived from AMD's shared platform driver sources. AMDVLK 2021.Q1.3 is on the lighter side, with AMDVLK 2021.Q1.2 having arrived just over one week ago. Of the two listed driver changes, one is a rebuild against the Vulkan API 1.2.168 headers.

Freedreno's MSM DRM Driver Adds More Adreno Support, Speedbin Capability For Linux 5.12 - Phoronix
The MSM Direct Rendering Manager driver, originally developed as part of the Freedreno effort for open-source Qualcomm Adreno graphics on Linux and now supported by the likes of Google and Qualcomm's Code Aurora engineers, has some notable changes in store for the next Linux kernel cycle.

Accelerating Applications with Pattern-Specific Optimizations on Accelerators and Coprocessors

Accelerating Applications with Pattern-specific Optimizations on Accelerators and Coprocessors. Dissertation Presented in Partial Fulfillment of the Requirements for the Degree Doctor of Philosophy in the Graduate School of The Ohio State University, by Linchuan Chen, B.S., M.S., Graduate Program in Computer Science and Engineering, The Ohio State University, 2015. Dissertation Committee: Dr. Gagan Agrawal, Advisor; Dr. P. Sadayappan; Dr. Feng Qin. © Copyright by Linchuan Chen 2015.

Abstract: Because of the bottleneck in the increase of clock frequency, multi-cores emerged as a way of improving the overall performance of CPUs. In the recent decade, many-cores have begun to play an increasingly important role in scientific computing. The highly cost-effective nature of many-cores makes them extremely suitable for data-intensive computations. Specifically, many-cores take the form of GPUs (e.g., NVIDIA or AMD GPUs) and, more recently, coprocessors (Intel MIC). Even though these highly parallel architectures offer a significant amount of computation power, they are very hard to program, and it is harder still to fully exploit that power. Combining the power of multi-cores and many-cores, i.e., making use of heterogeneous cores, is extremely complicated.

Our efforts focus on optimizing important sets of applications on such parallel systems. We address this issue from the perspective of communication patterns. Scientific applications can be classified based on their properties (communication patterns), as specified in the Berkeley Dwarfs many years ago. By investigating the characteristics of each class, we are able to derive efficient execution strategies across different levels of parallelism.

NVIDIA Corp NVDA (XNAS)

Morningstar Equity Analyst Report | Report as of 14 Sep 2020 04:02, UTC

NVIDIA Corp NVDA (XNAS), data as of 11 Sep 2020
• Last Price: 486.58 USD
• Fair Value Estimate: 250.00 USD (20 Aug 2020)
• Price/Fair Value: 1.95
• Trailing Dividend Yield: 0.13%
• Forward Dividend Yield: 0.13%
• Market Cap: 300.22 USD bil
• Industry: Semiconductors
• Stewardship: Exemplary

Morningstar Pillars (Analyst / Quantitative)
• Economic Moat: Narrow / Wide
• Valuation (Quantitative): Overvalued
• Uncertainty: Very High / High
• Financial Health (Quantitative): Moderate

Nvidia to Buy ARM in $40 Billion Deal with Eyes Set on Data Center Dominance; Maintain FVE (Source: Morningstar Equity Research)

Analyst Note (Abhinav Davuluri, CFA, Analyst, 13 September 2020): On Sept. 13, Nvidia announced it would acquire ARM from the SoftBank Group in a transaction valued at $40 billion.

Business Strategy and Outlook (Abhinav Davuluri, CFA, Analyst, 19 August 2020): Nvidia is the leading designer of graphics processing units that enhance the visual experience on computing platforms. The firm's chips are used in a variety of end markets, including high-end PCs for gaming, data centers, …

Quantitative Valuation (NVDA; scale: Undervalued / Fairly Valued / Overvalued)

| | Current | 5-Yr Avg | Sector | Country |
|---|---|---|---|---|
| Price/Quant Fair Value | 1.67 | 1.43 | 0.77 | 0.83 |
| Price/Earnings | 89.3 | 37.0 | 21.4 | 20.1 |

Important Disclosure: The conduct of Morningstar's analysts is governed by Code of Ethics/Code of Conduct Policy, Personal Security Trading Policy (or an equivalent of), and Investment Research Policy. For information regarding conflicts of interest, please visit http://global.morningstar.com/equitydisclosures

Graphics Processing Units

Graphics Processing Units (GPUs) are coprocessors that traditionally render 2-dimensional and 3-dimensional graphics information for display on a screen. Computer games in particular demand increasingly realistic real-time rendering of graphics data, so GPUs became increasingly powerful, highly parallel specialist computing units. It did not take long for programmers to realize that this computational power could also be used for tasks other than computer graphics. For example, as early as 1990 Lengyel, Reichert, Donald, and Greenberg used GPUs for real-time robot motion planning [43]. In 2003 Harris introduced the term general-purpose computations on GPUs (GPGPU) [28] for such non-graphics applications running on GPUs. At that time, programming general-purpose computations on GPUs meant expressing all algorithms in terms of operations on graphics data: pixels and vectors. This was feasible for speed-critical small programs and for algorithms that operate on vectors of floating-point values in a way similar to how graphics data is typically processed in the rendering pipeline.

The programming paradigm shifted when the two main GPU manufacturers, NVIDIA and AMD, changed the hardware architecture from a dedicated graphics-rendering pipeline to a multi-core computing platform, implemented the shader algorithms of the rendering pipeline in software running on these cores, and explicitly supported general-purpose computations on GPUs by offering programming languages and software-development toolchains.

This chapter first gives an introduction to the architectures of these modern GPUs and the tools and languages to program them. Then it highlights several applications of GPUs related to information security, with a focus on applications in cryptography and cryptanalysis.
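The early GPGPU style described above, where algorithms had to be phrased as operations on pixels, can be illustrated with the classic multi-pass reduction: each rendering pass reads an input texture and writes a texture half as wide and half as tall, in which every pixel combines a 2x2 block of the input. A minimal CPU-side emulation, assuming a square power-of-two "texture" stored as nested lists; the function names are illustrative and not taken from the chapter:

```python
from typing import List, Tuple

Texture = List[List[float]]  # a square "texture" of single-channel float texels


def reduction_pass(tex: Texture) -> Texture:
    """One emulated render pass: each output texel sums a 2x2 block of input texels."""
    n = len(tex) // 2
    return [
        [
            tex[2 * y][2 * x] + tex[2 * y][2 * x + 1]
            + tex[2 * y + 1][2 * x] + tex[2 * y + 1][2 * x + 1]
            for x in range(n)
        ]
        for y in range(n)
    ]


def gpgpu_sum(tex: Texture) -> Tuple[float, int]:
    """Ping-pong passes, halving each side, until one texel holds the total; returns (total, passes)."""
    passes = 0
    while len(tex) > 1:
        tex = reduction_pass(tex)
        passes += 1
    return tex[0][0], passes


data = [[float(4 * y + x) for x in range(4)] for y in range(4)]  # texel values 0..15
total, passes = gpgpu_sum(data)
print(total, passes)
```

On graphics hardware of that era, each pass would typically be a draw call over a screen-sized quad whose fragment shader performs the 2x2 sum; here a Python loop stands in for the draw calls. An n x n texture needs log2(n) passes, so the 4x4 example finishes in 2 passes with a total of 120.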

Radeon GPU Profiler Documentation

Radeon GPU Profiler Documentation, Release 1.11.0, AMD Developer Tools, Jul 21, 2021

Contents
1 Graphics APIs, RDNA and GCN hardware, and operating systems
2 Compute APIs, RDNA and GCN hardware, and operating systems
3 Radeon GPU Profiler - Quick Start
  3.1 How to generate a profile
  3.2 Starting the Radeon GPU Profiler
  3.3 How to load a profile
  3.4 The Radeon GPU Profiler user interface
4 Settings
  4.1 General
  4.2 Themes and colors
  4.3 Keyboard shortcuts
  4.4 UI Navigation
5 Overview Windows
  5.1 Frame summary (DX12 and Vulkan)
  5.2 Profile summary (OpenCL)
  5.3 Barriers
  5.4 Context rolls
  5.5 Most expensive events
  5.6 Render/depth targets
  5.7 Pipelines
  5.8 Device configuration
6 Events Windows
  6.1 Wavefront occupancy
  6.2 Event timing
  6.3 …

Massively Parallel Computation Using Graphics Processors with Application to Optimal Experimentation in Dynamic Control

Munich Personal RePEc Archive. Massively parallel computation using graphics processors with application to optimal experimentation in dynamic control. Morozov, Sergei and Mathur, Sudhanshu, Morgan Stanley, 10 August 2009. Online at https://mpra.ub.uni-muenchen.de/30298/ MPRA Paper No. 30298, posted 03 May 2011 17:05 UTC.

Abstract. The rapid growth in the performance of graphics hardware, coupled with recent improvements in its programmability, has led to its adoption in many non-graphics applications, including a wide variety of scientific computing fields. At the same time, a number of important dynamic optimal policy problems in economics are athirst for computing power to help overcome the dual curses of complexity and dimensionality. We investigate whether computational economics may benefit from these new tools through a case study of an imperfect-information dynamic programming problem with a learning and experimentation trade-off, that is, a choice between controlling the policy target and learning the system parameters. Specifically, we use a model of active learning and control of a linear autoregression with an unknown slope that has appeared in a variety of macroeconomic policy and other contexts. The endogeneity of posterior beliefs makes the problem difficult in that the value function need not be convex and the policy function need not be continuous. This complication makes the problem a suitable target for massively parallel computation using graphics processors (GPUs). Our findings are cautiously optimistic in that the new tools let us easily achieve a factor of 15 performance gain relative to an implementation targeting single-core processors.
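The learning side of this trade-off can be illustrated with the standard conjugate update for an unknown regression slope. A minimal sketch, assuming observations y = beta * x + noise with Gaussian noise of known variance and a Gaussian prior on beta; this is a generic textbook update, not the authors' exact model, which the abstract does not spell out. It shows why beliefs are endogenous: the precision gained from an observation, x**2 / noise_var, depends on the control x the policymaker chooses.

```python
from dataclasses import dataclass


@dataclass
class Belief:
    mean: float       # posterior mean of the unknown slope beta
    precision: float  # posterior precision (1 / variance) of beta; assumed > 0


def update(belief: Belief, x: float, y: float, noise_var: float) -> Belief:
    """Conjugate Gaussian update of beliefs about beta after observing y = beta * x + noise."""
    obs_precision = x * x / noise_var  # information about beta carried by this observation
    precision = belief.precision + obs_precision
    mean = (belief.precision * belief.mean + x * y / noise_var) / precision
    return Belief(mean, precision)


prior = Belief(mean=0.0, precision=1.0)
passive = update(prior, x=0.0, y=0.3, noise_var=1.0)  # x = 0: the observation says nothing about beta
active = update(prior, x=2.0, y=1.0, noise_var=1.0)   # larger |x| sharpens beliefs about beta
print(passive, active)
```

Choosing x = 0 leaves beliefs unchanged, while a larger |x| raises the posterior precision (here from 1.0 to 5.0); this is the sense in which controlling the policy target and learning the system parameter compete, and why the value function depends on beliefs as well as on the state.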