Augmenting Mathematical Formulae for More Effective Querying & Efficient Presentation

Total Pages: 16

File Type: pdf, Size: 1020 KB


Recommended publications


Tractatus Logico-Philosophicus

University of South Florida Scholar Commons Graduate Theses and Dissertations Graduate School 8-6-2008 Three Wittgensteins: Interpreting the Tractatus Logico-Philosophicus Thomas J. Brommage Jr. University of South Florida Follow this and additional works at: https://scholarcommons.usf.edu/etd Part of the American Studies Commons Scholar Commons Citation Brommage, Thomas J. Jr., "Three Wittgensteins: Interpreting the Tractatus Logico-Philosophicus" (2008). Graduate Theses and Dissertations. https://scholarcommons.usf.edu/etd/149 This Dissertation is brought to you for free and open access by the Graduate School at Scholar Commons. It has been accepted for inclusion in Graduate Theses and Dissertations by an authorized administrator of Scholar Commons. For more information, please contact [email protected]. Three Wittgensteins: Interpreting the Tractatus Logico-Philosophicus by Thomas J. Brommage, Jr. A dissertation submitted in partial fulfillment of the requirements for the degree of Doctor of Philosophy Department of Philosophy College of Arts and Sciences University of South Florida Co-Major Professor: Kwasi Wiredu, B.Phil. Co-Major Professor: Stephen P. Turner, Ph.D. Charles B. Guignon, Ph.D. Richard N. Manning, J.D., Ph.D. Joanne B. Waugh, Ph.D. Date of Approval: August 6, 2008 Keywords: Wittgenstein, Tractatus Logico-Philosophicus, logical empiricism, resolute reading, metaphysics © Copyright 2008, Thomas J. Brommage, Jr. Acknowledgments There are many people who have helped me along the way. My most prominent debts are to Ray Langely, Billy Joe Lucas, and Mary T. Clark, who trained me in philosophy at Manhattanville College; and also to Joanne Waugh, Stephen Turner, Kwasi Wiredu and Charles Guignon, all of whom have nurtured my love for the philosophy of language. -

First Order Logic

Artificial Intelligence CS 165A Mar 10, 2020 Instructor: Prof. Yu-Xiang Wang First Order Logic 1 Recap: KB Agents True sentences TELL Knowledge Base Domain specific content; facts ASK Inference engine Domain independent algorithms; can deduce new facts from the KB • Need a formal logic system to work • Need a data structure to represent known facts • Need an algorithm to answer ASK questions 2 Recap: syntax and semantics • Two components of a logic system • Syntax --- How to construct sentences – The symbols – The operators that connect symbols together – A precedence ordering • Semantics --- Rules for assigning truth values to sentences – For every possible world (or “model” in logic jargon) – The truth table is a semantics 3 Recap: Entailment Sentences Sentence ENTAILS Representation Semantics Semantics World Facts Fact FOLLOWS A is entailed by B, if A is true in all possible worlds consistent with B under the semantics. 4 Recap: Inference procedure • Inference procedure – Rules (algorithms) that we apply (often recursively) to derive truth from other truth. – Could be specific to a particular set of semantics, a particular realization of the world. • Soundness and completeness of an inference procedure – Soundness: All truths discovered are valid. – Completeness: All truths that are entailed can be discovered. 5 Recap: Propositional Logic • Syntax: – True, false, propositional symbols – ( ) , ¬ (not), ∧ (and), ∨ (or), ⇒ (implies), ⇔ (equivalent) • Semantics: – Assigning values to the variables. Each row is a “model”. • Inference rules: – Modus Ponens etc. Most important: Resolution 6 Recap: Propositional logic agent • Representation: Conjunctive Normal Forms – Represent them in a data structure: a list, a heap, a tree? – Efficient TELL operation • Inference: Solve ASK question – Using “Resolution” only on CNFs is sound and complete. -

EA-1092; Environmental Assessment and FONSI for Decontamination and Dismantlement June 1995

EA-1092; Environmental Assessment and FONSI for Decontamination and Dismantlement June 1995 TABLE OF CONTENTS 1.0 PURPOSE AND NEED FOR AGENCY ACTION 2.0 PROPOSED ACTION AND ALTERNATIVES 3.0 AFFECTED ENVIRONMENT AND ENVIRONMENTAL CONSEQUENCES OF THE PROPOSED ACTION AND ALTERNATIVES 4.0 AGENCIES, ORGANIZATIONS, AND PERSONS CONSULTED 5.0 REFERENCES 6.0 LIST OF ACRONYMS AND ABBREVIATION 7.0 GLOSSARY LIST OF APPENDICES Appendix A - Letter, Dated September 12, 1991, Florida Department of State Division of Historical Resources Appendix B - 1990 Base Year Pinellas County Transportation Appendix C - Reference Tables from the Nonnuclear Consolidation Environmental Assessment, June 1993, Finding of No Significant Impact, September 1993 Appendix D - Letter, Dated July 25, 1991, United States Department of Interior Fish and Wildlife Service LIST OF FIGURES Figure 1-1: Pinellas Plant Location Figure 1-2: Pinellas Plant Layout Figure 3-1: Pinellas Plant Areas Leased by DOE and Common Use Areas Figure 3-2: Solid Waste Management Units LIST OF TABLES Table 3-1. Pinellas Plant Building Summary Table 3-2. Calendar Years 1992, 1993, and 1994 Nonradiological Air Emissions Table 3-3. Discharge Standards and Average Concentrations for the Pinellas Plant Liquid Effluent Table 3-4. Tritium Released To PCSS In 1991, 1992, 1993, and 1994 Table 3-5. Waste Management Storage Capabilities (Hazardous Waste Operating Permit No. HO52-228925) Table 3-6. Baseline Traffic by Link at Pinellas Plant Table 3-7. 1994 CAP88-PC Dose Calculations Table B-1. 1990 Base Year Pinellas County Transportation Table C-1. Estimated Hourly Traffic Volumes and Traffic Noise Levels Along the Access Routes to Pinellas Table C-2. -

Every Nonsingular C Flow on a Closed Manifold of Dimension Greater Than

View metadata, citation and similar papers at core.ac.uk brought to you by CORE provided by Elsevier - Publisher Connector Topology and its Applications 135 (2004) 131–148 www.elsevier.com/locate/topol Every nonsingular C1 flow on a closed manifold of dimension greater than two has a global transverse disk William Basener Department of Mathematics and Statistics, Rochester Institute of Technology, Rochester, NY 14414, USA Received 18 March 2002; received in revised form 27 May 2003 Abstract We prove three results about global cross sections which are disks, henceforth called global transverse disks. First we prove that every nonsingular (fixed point free) C1 flow on a closed (compact, no boundary) connected manifold of dimension greater than 2 has a global transverse disk. Next we prove that for any such flow, if the directed graph Gh has a loop then the flow does not have a closed manifold which is a global cross section. This property of Gh is easy to read off from the first return map for the global transverse disk. Lastly, we give criteria for an “M-cellwise continuous” (a special case of piecewise continuous) map h : D2 → D2 that determines whether h is the first return map for some global transverse disk of some flow ϕ. In such a case, we call ϕ the suspension of h. 2003 Elsevier B.V. All rights reserved. Keywords: Minimal flow; Global cross section; Suspension 1. Introduction Let M be a closed (compact, no boundary) connected n-dimensional manifold and ϕ : R × M → M a nonsingular (fixed point free) flow on M. -

TUGBOAT Volume 39, Number 2 / 2018 TUG 2018 Conference

TUGBOAT Volume 39, Number 2 / 2018 TUG 2018 Conference Proceedings TUG 2018 98 Conference sponsors, participants, program 100 Joseph Wright / TUG goes to Rio 104 Joseph Wright / TeX Users Group 2018 Annual Meeting notes Graphics 105 Susanne Raab / The tikzducks package LaTeX 107 Frank Mittelbach / A rollback concept for packages and classes 113 Will Robertson / Font loading in LaTeX using the fontspec package: Recent updates 117 Joseph Wright / Supporting color and graphics in expl3 119 Joseph Wright / siunitx: Past, present and future Software & Tools 122 Paulo Cereda / Arara - TeX automation made easy 126 Will Robertson / The Canvas learning management system and LaTeXML 131 Ross Moore / Implementing PDF standards for mathematical publishing Fonts 136 Jaeyoung Choi, Ammar Ul Hassan, Geunho Jeong / FreeType MF Module: A module for using METAFONT directly inside the FreeType rasterizer Methods 143 S.K. Venkatesan / WeTeX and Hegelian contradictions in classical mathematics Abstracts 147 TUG 2018 abstracts (Behrendt, Coriasco et al., Heinze, Hejda, Loretan, Mittelbach, Moore, Ochs, Veytsman, Wright) 149 MAPS: Contents of issue 48 (2018) 150 Die TeXnische Komödie: Contents of issues 2–3/2018 151 Eutypon: Contents of issue 38–39 (October 2017) General Delivery 151 Bart Childs and Rick Furuta / Don Knuth awarded Trotter Prize 152 Barbara Beeton / Hyphenation exception log Book Reviews 153 Boris Veytsman / Book review: W. A. Dwiggins: A Life in Design by Bruce Kennett Hints & Tricks 155 Karl Berry / The treasure chest Cartoon 156 John Atkinson / Hyphe-nation; Clumsy Advertisements 157 TeX consulting and production services TUG Business 158 TUG institutional members 159 TUG 2019 election News 160 Calendar TeX Users Group Board of Directors: Donald Knuth, Grand Wizard of TeX-arcana †. TUGboat (ISSN 0896-3207) is published by the TeX Users Group. -

Semi-Automated Correction Tools for Mathematics-Based Exercises in MOOC Environments

International Journal of Artificial Intelligence and Interactive Multimedia, Vol. 3, Nº 3 Semi-Automated Correction Tools for Mathematics-Based Exercises in MOOC Environments Alberto Corbí and Daniel Burgos Universidad Internacional de La Rioja Abstract: Massive Open Online Courses (MOOCs) allow the participation of hundreds of students who are interested in a wide range of areas. Given the huge attainable enrollment rate, it is almost impossible to suggest complex homework to students and have it carefully corrected and reviewed by a tutor or assistant professor. In this paper, we present a software framework that aims at assisting teachers in MOOCs during correction tasks related to exercises in mathematics and topics with some degree of mathematical content. In this spirit, our proposal might suit not only maths, but also physics and technical subjects. As a test experience, we apply it to 300+ physics homework bulletins from 80+ students. Results show our solution can prove very useful in guiding assistant teachers during correction shifts and is able to mitigate the time devoted ... the accuracy of the result but also in the correctness of the resolution process, which might turn out to be as important as, or sometimes even more important than, the final outcome itself. Corrections performed by a human (a teacher/assistant) can also add value to the teacher’s view on how his/her students learn and progress. The teacher’s feedback on a correction sheet always entails a unique opportunity to improve the learner’s knowledge and build a more robust awareness on the matter they are currently working on. Exercises in physics deepen this reviewing philosophy and student-teacher interaction. Keeping an organized and coherent resolution flow is as relevant to the understanding of the underlying physical phenomena as the final output itself. -

Frege and the Logic of Sense and Reference

FREGE AND THE LOGIC OF SENSE AND REFERENCE Kevin C. Klement Routledge New York & London Published in 2002 by Routledge 29 West 35th Street New York, NY 10001 Published in Great Britain by Routledge 11 New Fetter Lane London EC4P 4EE Routledge is an imprint of the Taylor & Francis Group Printed in the United States of America on acid-free paper. Copyright © 2002 by Kevin C. Klement All rights reserved. No part of this book may be reprinted or reproduced or utilized in any form or by any electronic, mechanical or other means, now known or hereafter invented, including photocopying and recording, or in any information storage or retrieval system, without permission in writing from the publisher. 10 9 8 7 6 5 4 3 2 1 Library of Congress Cataloging-in-Publication Data Klement, Kevin C., 1974– Frege and the logic of sense and reference / by Kevin Klement. p. cm. - (Studies in philosophy) Includes bibliographical references and index ISBN 0-415-93790-6 1. Frege, Gottlob, 1848–1925. 2. Sense (Philosophy) 3. Reference (Philosophy) I. Title II. Studies in philosophy (New York, N. Y.) B3245.F24 K54 2001 12'.68'092-dc21 2001048169 Contents Page Preface ix Abbreviations xiii 1. The Need for a Logical Calculus for the Theory of Sinn and Bedeutung 3 Introduction 3 Frege’s Project: Logicism and the Notion of Begriffsschrift 4 The Theory of Sinn and Bedeutung 8 The Limitations of the Begriffsschrift 14 Filling the Gap 21 2. The Logic of the Grundgesetze 25 Logical Language and the Content of Logic 25 Functionality and Predication 28 Quantifiers and Gothic Letters 32 Roman Letters: An Alternative Notation for Generality 38 Value-Ranges and Extensions of Concepts 42 The Syntactic Rules of the Begriffsschrift 44 The Axiomatization of Frege’s System 49 Responses to the Paradox 56 3. -

Toward a Logic of Everyday Reasoning

Toward a Logic of Everyday Reasoning Pei Wang (Department of Computer and Information Sciences, Temple University, Philadelphia, USA; e-mail: [email protected]) Abstract: Logic should return its focus to valid reasoning in real-world situations. Since classical logic only covers valid reasoning in a highly idealized situation, there is a demand for a new logic for everyday reasoning that is based on more realistic assumptions, while still keeping the general, formal, and normative nature of logic. NAL (Non-Axiomatic Logic) is built for this purpose, and is based on the assumption that the reasoner has insufficient knowledge and resources with respect to the reasoning tasks to be carried out. In this situation, the notion of validity has to be re-established, and the grammar rules and inference rules of the logic need to be designed accordingly. Consequently, NAL has features very different from classical logic and other non-classical logics, and it provides a coherent solution to many problems in logic, artificial intelligence, and cognitive science. Keywords: non-classical logic, uncertainty, openness, relevance, validity 1 Logic and Everyday Reasoning 1.1 The historical changes of logic In a broad sense, the study of logic is concerned with the principles and forms of valid reasoning, inference, and argument in various situations. The first dominating paradigm in logic is Aristotle’s Syllogistic [Aristotle, 1882], now usually referred to as traditional logic. This study was carried on by philosophers and logicians including Descartes, Locke, Leibniz, Kant, Boole, Peirce, Mill, and many others [Bocheński, 1970, Haack, 1978, Kneale and Kneale, 1962]. In this tradition, the focus of the study is to identify and to specify the forms of valid reasoning in general, that is, the rules of logic should be applicable to all domains and situations where reasoning happens, as “laws of thought”. -

Transforming the Arχiv to XML

Transforming the arχiv to XML Heinrich Stamerjohanns and Michael Kohlhase Computer Science, Jacobs University Bremen fh.stamerjohanns,[email protected] Abstract. We describe an experiment of transforming large collections of LaTeX documents to more machine-understandable representations. Concretely, we are translating the collection of scientific publications of the Cornell e-Print Archive (arXiv) using the LaTeX to XML converter which is currently under development. The main technical task of our arXMLiv project is to supply LaTeXML bindings for the (thousands of) LaTeX classes and packages used in the arXiv collection. For this we have developed a distributed build system that reiteratively runs LaTeXML over the arXiv collection and collects statistics about e.g. the most sorely missing LaTeXML bindings and clusters common error events. This creates valuable feedback to both the developers of the LaTeXML package and to binding implementers. We have now processed the complete arXiv collection of more than 400,000 documents from 1993 until 2006 (one run is a processor-year-size undertaking) and have continuously improved our success rate to more than 56% (i.e. over 56% of the documents that are LaTeX have been converted by LaTeXML without noticing an error and are available as XHTML+MathML documents). 1 Introduction The last few years have seen the emergence of various XML-based, content-oriented markup languages for mathematics and natural sciences on the web, e.g. OpenMath, Content MathML, or our own OMDoc. The promise of these content-oriented approaches is that various tasks involved in “doing mathematics” (e.g. -

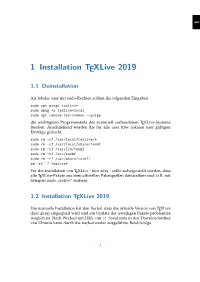

1 Installation Texlive 2019

1 Installation of TeX Live 2019 1.1 Uninstallation As a local user with sudo rights, the following commands should remove the most important program components of any TeX Live system that may already be present: `sudo apt purge texlive*` `sudo dpkg -r texlive-local` `sudo apt remove tex-common --purge` Next, the entries that apply to all users and to the local user are deleted: `sudo rm -rf /usr/local/texlive/*` `sudo rm -rf /usr/local/share/texmf` `sudo rm -rf /var/lib/texmf` `sudo rm -rf /etc/texmf` `sudo rm -rf /usr/share/texmf/` `rm -rf ~/.texlive*` Before installing TeX Live (here 2019), make sure that all TeX Live packages from the official package sources have been uninstalled (e.g. by searching for “texlive” in Synaptic). 1.2 Installation of TeX Live 2019 The manual installation has the advantage that the current version of TeX Live (here 2019) is installed and that the individual packages can be updated without problems. After changing to Ubuntu's download folder with `cd Downloads`, the installation process can be started by running the following commands one after another: `wget http://mirror.ctan.org/systems/texlive/tlnet/install-tl-unx.tar.gz` `tar -zxvf install-tl-unx.tar.gz` `cd install-tl-20190503/` `sudo ./install-tl -gui` Note that the subdirectory entered with `cd install-tl-20190503/` may carry a different number (a date sequence?); typing the incomplete command `cd install-tl-` and then pressing the Tab key completes the name automatically. Accepting the default settings starts the installation, which, depending on the internet connection, can take an estimated half an hour to several hours. -

Real and Imaginary Parts of Decidability-Making Gilbert Giacomoni

On the Origin of Abstraction: Real and Imaginary Parts of Decidability-Making Gilbert Giacomoni To cite this version: Gilbert Giacomoni. On the Origin of Abstraction: Real and Imaginary Parts of Decidability-Making. 11th World Congress of the International Federation of Scholarly Associations of Management (IFSAM), Jun 2012, Limerick, Ireland. pp.145. hal-00750628 HAL Id: hal-00750628 https://hal-mines-paristech.archives-ouvertes.fr/hal-00750628 Submitted on 11 Nov 2012 HAL is a multi-disciplinary open access archive for the deposit and dissemination of scientific research documents, whether they are published or not. The documents may come from teaching and research institutions in France or abroad, or from public or private research centers. On the Origin of Abstraction: Real and Imaginary Parts of Decidability-Making Gilbert Giacomoni Institut de Recherche en Gestion - Université Paris 12 (UPEC) 61 Avenue de Général de Gaulle 94010 Créteil [email protected] Centre de Gestion Scientifique – Chaire TMCI (FIMMM) - Mines ParisTech 60 Boulevard Saint-Michel 75006 Paris [email protected] Abstract: Firms seeking an original standpoint, in strategy or design, need to break with imitation and uniformity. They specifically attempt to understand the cognitive processes by which decision-makers manage to work, individually or collectively, through undecidable situations generated by equivalent possible choices and design innovatively. Although the behavioral tradition has largely anchored on Simon's early conception of bounded rationality, it is important to engage more explicitly with cognitive approaches, particularly ones that might link to the issue of identifying novel competitive positions. -

Formalizing Common Sense Reasoning for Scalable Inconsistency-Robust Information Integration Using Direct Logic™ Reasoning and the Actor Model

Formalizing common sense reasoning for scalable inconsistency-robust information integration using Direct Logic™ Reasoning and the Actor Model Carl Hewitt. http://carlhewitt.info This paper is dedicated to Alonzo Church, Stanisław Jaśkowski, John McCarthy and Ludwig Wittgenstein. ABSTRACT People use common sense in their interactions with large software systems. This common sense needs to be formalized so that it can be used by computer systems. Unfortunately, previous formalizations have been inadequate. For example, because contemporary large software systems are pervasively inconsistent, it is not safe to reason about them using classical logic. Our goal is to develop a standard foundation for reasoning in large-scale Internet applications (including sense making for natural language) by addressing the following issues: inconsistency robustness, classical contrapositive inference bug, and direct argumentation. Inconsistency Robust Direct Logic is a minimal fix to Classical Logic without the rule of Classical Proof by Contradiction [i.e., (Ψ├ (¬))├¬Ψ], the addition of which transforms Inconsistency Robust Direct Logic into Classical Logic. Inconsistency Robust Direct Logic makes the following contributions over previous work: • Direct Inference • Direct Argumentation (argumentation directly expressed) • Inconsistency-robust Natural Deduction that doesn’t require artifices such as indices (labels) on propositions or restrictions on reiteration • Intuitive inferences hold including the following: • Propositional equivalences (except absorption) including Double Negation and De Morgan • ∨-Elimination (Disjunctive Syllogism), i.e., ¬Φ, (Φ∨Ψ)├T Ψ • Reasoning by disjunctive cases, i.e., (), (├T ), (├T Ω)├T Ω • Contrapositive for implication i.e., Ψ⇒ if and only if ¬⇒¬Ψ • Soundness, i.e., ├T ((├T) ⇒ ) •
Inconsistency Robust Proof by Contradiction, i.e., ├T (Ψ⇒ (¬))⇒¬Ψ A fundamental goal of Inconsistency Robust Direct Logic is to effectively reason about large amounts of pervasively inconsistent information using computer information systems.