Supercomputers: the Amazing Race (A History of Supercomputing, 1960-2020)

Total Page:16

File Type:pdf, Size:1020Kb


Recommended publications


Emerging Technologies Multi/Parallel Processing

Emerging Technologies Multi/Parallel Processing. Mary C. Kulas, New Computing Structures, Strategic Relations Group. December 1987. For Internal Use Only.

Copyright © 1987 by Digital Equipment Corporation. Printed in U.S.A. The information contained herein is confidential and proprietary. It is the property of Digital Equipment Corporation and shall not be reproduced or copied in whole or in part without written permission. This is an unpublished work protected under the Federal copyright laws. The following are trademarks of Digital Equipment Corporation, Maynard, MA 01754: DECpage, LN03. This report was produced by Educational Services with DECpage and the LN03 laser printer.

Contents

Acknowledgments
Abstract
Executive Summary
I. Analysis
  A. The Players
    1. Number and Status
    2. Funding
    3. Strategic Alliances
    4. Sales
      a. Revenue/Units Installed
      b. European Sales
  B. The Product
    1. CPUs
    2. Chip
    3. Bus
    4. Vector Processing
    5. Operating System
    6. Languages
    7. Third-Party Applications
    8. Pricing
  C. IBM and Other Major Computer Companies
  D. Why Success? Why Failure?
  E. Future Directions
II. Company/Product Profiles
  A. Multi/Parallel Processors
    1. Alliant
    2. Astronautics
    3. Concurrent
    4. Cydrome
    5. Eastman Kodak
    6. Elxsi
    7. Encore
    8. Flexible
    9. Floating Point Systems - M64line
    10. International Parallel
    11. Loral
    12. Masscomp
    13. Meiko
    14. Multiflow
    15. Sequent
  B. Massively Parallel
    1. Ametek
    2. Bolt Beranek & Newman Advanced Computers

The ASCI Red TOPS Supercomputer

The ASCI Red TOPS Supercomputer (http://www.sandia.gov/ASCI/Red/RedFacts.htm)

Introduction

The ASCI Red TOPS Supercomputer is the first step in the ASCI Platforms Strategy, which is aimed at giving researchers the five-order-of-magnitude increase in computing performance over current technology that is required to support "full-physics," "full-system" simulation by early next century. This supercomputer, being installed at Sandia National Laboratories, is a massively parallel, MIMD computer. It is noteworthy for several reasons. It will be the world's first TOPS supercomputer. I/O, memory, compute nodes, and communication are scalable to an extreme degree. Standard parallel interfaces will make it relatively simple to port parallel applications to this system. The system uses two operating systems to make the computer both familiar to the user (UNIX) and non-intrusive for the scalable application (Cougar). And it makes use of Commercial Commodity Off The Shelf (CCOTS) technology to maintain affordability.

Hardware

The ASCI TOPS system is a distributed memory, MIMD, message-passing supercomputer. All aspects of this system architecture are scalable, including communication bandwidth, main memory, internal disk storage capacity, and I/O.

[Figure: Artist's concept]

The TOPS Supercomputer is organized into four partitions: Compute, Service, System, and I/O. The Service Partition provides an integrated, scalable host that supports interactive users, application development, and system administration. The I/O Partition supports a scalable file system and network services. The System Partition supports system Reliability, Availability, and Serviceability (RAS) capabilities. Finally, the Compute Partition contains nodes optimized for floating point performance and is where parallel applications execute.

The Risks Digest Index to Volume 11

The Risks Digest Index to Volume 11 (http://catless.ncl.ac.uk/Risks/index.11.html)

Forum on Risks to the Public in Computers and Related Systems. ACM Committee on Computers and Public Policy, Peter G. Neumann, moderator. Index to Volume 11, Sunday, 30 June 1991.

Issue 01 (4 February 1991)
- Re: Enterprising Vending Machines (Allan Meers)
- Re: Risks of automatic flight (Henry Spencer)
- Re: Voting by Phone & public-key cryptography (Evan Ravitz)
- Re: Random Voting IDs and Bogus Votes (Vote by Phone) (Mike Beede)
- Re: Patriots ... (Steve Mitchell, Steven Philipson, Michael H. Riddle, Clifford Johnson)
- Re: Man-in-the-loop on SDI (Henry Spencer)
- Re: Broadcast local area networks ... (Curt Sampson, Donald Lindsay, John Stanley, Jerry Leichter)

Issue 02 (5 February 1991)
- Bogus draft notices are computer generated (Jonathan Rice)
- People working at home on important tasks (Mike Albaugh)
- Predicting system reliability (Martyn Thomas)
- Re: Patriots (Steven Markus Woodcock, Mark Levison)
- Hungry copiers (another run-in with technology) (Scott Wilson)
- Re: Enterprising Vending Machines (Dave Curry)
- Broadcast LANs (Peter da Silva, Scott Hinckley)

Issue 03 (6 February 1991)
- Tube Tragedy (Pete Mellor)
- New Zealand Computer Error Holds Up Funds (Gligor Tashkovich)
- "Inquiry into cash machine fraud" (Stella Page)
- Quick n' easy access to Fidelity account info (Carol Springs)
- Re: Enterprising Vending Machines (Mark Jackson)
- RISKS of no escape paths (Geoff Kuenning)
- A risky gas pump (Bob Grumbine)
- Electronic traffic signs endanger motorists... (Rich Snider)
- Re: Predicting system reliability (Richard P. Taylor)
- The new California licenses (Chris Hibbert)
- Phone Voting -- Really a Problem? (Michael Barnett, Dave Smith)
- Re: Electronic cash completely replacing cash (Barry Wright)

Issue 04 (7 February 1991)
- Subway door accidents (Mark Brader)
- "Virus" destroys part of Mass.

Three-Dimensional Integrated Circuit Design: EDA, Design And

Integrated Circuits and Systems. Series Editor: Anantha Chandrakasan, Massachusetts Institute of Technology, Cambridge, Massachusetts. For other titles published in this series, go to http://www.springer.com/series/7236

Yuan Xie · Jason Cong · Sachin Sapatnekar, Editors. Three-Dimensional Integrated Circuit Design: EDA, Design and Microarchitectures.

Editors:
- Yuan Xie, Department of Computer Science and Engineering, Pennsylvania State University, [email protected]
- Jason Cong, Department of Computer Science, University of California, Los Angeles, [email protected]
- Sachin Sapatnekar, Department of Electrical and Computer Engineering, University of Minnesota, [email protected]

ISBN 978-1-4419-0783-7, e-ISBN 978-1-4419-0784-4, DOI 10.1007/978-1-4419-0784-4. Springer New York Dordrecht Heidelberg London. Library of Congress Control Number: 2009939282.

© Springer Science+Business Media, LLC 2010. All rights reserved. This work may not be translated or copied in whole or in part without the written permission of the publisher (Springer Science+Business Media, LLC, 233 Spring Street, New York, NY 10013, USA), except for brief excerpts in connection with reviews or scholarly analysis. Use in connection with any form of information storage and retrieval, electronic adaptation, computer software, or by similar or dissimilar methodology now known or hereafter developed is forbidden. The use in this publication of trade names, trademarks, service marks, and similar terms, even if they are not identified as such, is not to be taken as an expression of opinion as to whether or not they are subject to proprietary rights. Printed on acid-free paper. Springer is part of Springer Science+Business Media (www.springer.com).

Foreword

We live in a time of great change.

An Overview of the Blue Gene/L System Software Organization

An Overview of the Blue Gene/L System Software Organization

George Almási, Ralph Bellofatto, José Brunheroto, Călin Caşcaval, José G. Castaños, Luis Ceze, Paul Crumley, C. Christopher Erway, Joseph Gagliano, Derek Lieber, Xavier Martorell, José E. Moreira, Alda Sanomiya, and Karin Strauss

IBM Thomas J. Watson Research Center, Yorktown Heights, NY 10598-0218, {gheorghe,ralphbel,brunhe,cascaval,castanos,pgc,erway,jgaglia,lieber,xavim,jmoreira,sanomiya}@us.ibm.com

Department of Computer Science, University of Illinois at Urbana-Champaign, Urbana, IL 61801, {luisceze,kstrauss}@uiuc.edu

Abstract. The Blue Gene/L supercomputer will use system-on-a-chip integration and a highly scalable cellular architecture. With 65,536 compute nodes, Blue Gene/L represents a new level of complexity for parallel system software, with specific challenges in the areas of scalability, maintenance and usability. In this paper we present our vision of a software architecture that faces up to these challenges, and the simulation framework that we have used for our experiments.

1 Introduction

In November 2001 IBM announced a partnership with Lawrence Livermore National Laboratory to build the Blue Gene/L (BG/L) supercomputer, a 65,536-node machine designed around embedded PowerPC processors. Through the use of system-on-a-chip integration [10], coupled with a highly scalable cellular architecture, Blue Gene/L will deliver 180 or 360 Teraflops of peak computing power, depending on the utilization mode. Blue Gene/L represents a new level of scalability for parallel systems. Whereas existing large scale systems range in size from hundreds (ASCI White [2], Earth Simulator [4]) to a few thousands (Cplant [3], ASCI Red [1]) of compute nodes, Blue Gene/L makes a jump of almost two orders of magnitude.

Advances in Ultrashort-Pulse Lasers • Modeling Dispersions of Biological and Chemical Agents • Centennial of E

October 2001. U.S. Department of Energy’s Lawrence Livermore National Laboratory.

Also in this issue:
- More Advances in Ultrashort-Pulse Lasers
- Modeling Dispersions of Biological and Chemical Agents
- Centennial of E. O. Lawrence’s Birth

About the Cover

Computing systems leader Greg Tomaschke works at the console of the 680-gigaops Compaq TeraCluster2000 parallel supercomputer, one of the principal machines used to address large-scale scientific simulations at Livermore. The supercomputer is accessible to unclassified program researchers throughout the Laboratory, thanks to the Multiprogrammatic and Institutional Computing (M&IC) Initiative described in the article beginning on p. 4. M&IC makes supercomputers an institutional resource and helps scientists realize the potential of advanced, three-dimensional simulations. Cover design: Amy Henke.

About the Review

Lawrence Livermore National Laboratory is operated by the University of California for the Department of Energy’s National Nuclear Security Administration. At Livermore, we focus science and technology on assuring our nation’s security. We also apply that expertise to solve other important national problems in energy, bioscience, and the environment. Science & Technology Review is published 10 times a year to communicate, to a broad audience, the Laboratory’s scientific and technological accomplishments in fulfilling its primary missions. The publication’s goal is to help readers understand these accomplishments and appreciate their value to the individual citizen, the nation, and the world. Please address any correspondence (including name and address changes) to S&TR, Mail Stop L-664, Lawrence Livermore National Laboratory, P.O. Box 808, Livermore, California 94551, or telephone (925) 423-3432. Our e-mail address is [email protected].

Computer Hardware

Computer Hardware. MJ Rutter, mjr19@cam. Michaelmas 2014. Typeset by FoilTeX. © 2014 MJ Rutter.

Contents

History
The CPU
  instructions
  pipelines
  vector computers
  performance measures
Memory
  DRAM
  caches
Memory Access Patterns in Practice
  matrix multiplication
  matrix transposition
Memory Management
  virtual addressing
  paging to disk
  memory segments
Compilers & Optimisation
  optimisation
  the pitfalls of F90
I/O, Libraries, Disks & Fileservers
  libraries and kernels

Unit 18. Supercomputers: Everything You Need to Know About

GAUTAM SINGH UPSC STUDY MATERIAL – Science & Technology 0 7830294949

Unit 18. Supercomputers: Everything you need to know about

Supercomputers have a high level of computing performance compared to a general-purpose computer. In this post, we cover the history, performance, and applications of supercomputers. We will also look at the top 3 supercomputers and the National Supercomputing Mission.

What is a supercomputer?

A supercomputer is a computer with a much higher level of computing performance than a general-purpose computer. Its performance is measured in FLOPS (floating-point operations per second). Great speed and great memory are the two prerequisites of a supercomputer. Performance is generally quoted in petaflops (10^15 FLOPS, that is, 1 followed by 15 zeros). Memory averages around 250,000 times that of the computers we use on a daily basis. Supercomputers are housed in large clean rooms with high air flow to permit cooling, and are used to solve problems that are too complex and too large for standard computers.

History of Supercomputers in the World

Most of the computers on the market today are smarter and faster than the very first supercomputers, and if the history of innovation repeats itself, today’s supercomputers will become the ordinary computers of the future. The first supercomputer was built in 1957 for the United States Department of Defense by Seymour Cray at Control Data Corporation (CDC). The CDC 1604 was one of the first computers to replace vacuum tubes with transistors. In 1964, Cray’s CDC 6600 replaced Stretch as the fastest computer on earth, with 3 million floating-point operations per second (FLOPS).

2017 HPC Annual Report Team Would Like to Acknowledge the Invaluable Assistance Provided by John Noe

Sandia National Laboratories 2017 High Performance Computing

The 2017 High Performance Computing Annual Report is dedicated to John Noe and Dino Pavlakos.

Editor: Yasmin Dennig. Contributing Writers: Megan Davidson, Mattie Hensley. Contributing Editor: Laura Sowko. Design: Stacey Long.

Building a foundational framework in high performance computing

Sandia National Laboratories has a long history of significant contributions to the high performance computing community and industry. Our innovative computer architectures allowed the United States to become the first to break the teraflop barrier—propelling us to the international spotlight. Our advanced simulation and modeling capabilities have been integral in high consequence US operations such as Operation Burnt Frost. Strong partnerships with industry leaders, such as Cray, Inc. and Goodyear, have enabled them to leverage our high performance computing capabilities to gain a tremendous competitive edge in the marketplace.

As part of our continuing commitment to provide modern computing infrastructure and systems in support of Sandia’s missions, we made a major investment in expanding Building 725 to serve as the new home of high performance computer (HPC) systems at Sandia. Work is expected to be completed in 2018 and will result in a modern facility of approximately 15,000 square feet of computer center space. The facility will be ready to house the newest National Nuclear Security Administration/Advanced Simulation and Computing (NNSA/ASC) prototype platform being acquired by Sandia, with delivery in late 2019 or early 2020. This new system will enable continuing advances by Sandia science and engineering staff in the areas of operating system R&D, operation cost effectiveness (power and innovative cooling technologies), user environment, and application code performance.

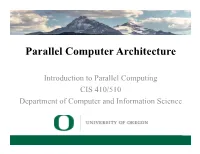

Parallel Computer Architecture

Parallel Computer Architecture. Introduction to Parallel Computing, CIS 410/510, Department of Computer and Information Science, University of Oregon, IPCC. Lecture 2 – Parallel Architecture

Outline
- Parallel architecture types
- Instruction-level parallelism
- Vector processing
- SIMD
- Shared memory
  - Memory organization: UMA, NUMA
  - Coherency: CC-UMA, CC-NUMA
- Interconnection networks
- Distributed memory
- Clusters
- Clusters of SMPs
- Heterogeneous clusters of SMPs

Parallel Architecture Types
- Uniprocessor
  - Scalar processor
  - Vector processor
  - Single Instruction Multiple Data (SIMD)
- Shared Memory Multiprocessor (SMP)
  - Shared memory address space
  - Bus-based memory system
  - Interconnection network

Parallel Architecture Types (2)
- Distributed Memory Multiprocessor
  - Message passing between nodes
  - Massively Parallel Processor (MPP): many, many processors
- Cluster of SMPs
  - Shared memory addressing within SMP node
  - Message passing between SMP nodes
  - Can also be regarded as MPP if processor number is large

An Extensible Administration and Configuration Tool for Linux Clusters

An extensible administration and configuration tool for Linux clusters. John D. Fogarty B.Sc. A dissertation submitted to the University of Dublin, in partial fulfillment of the requirements for the degree of Master of Science in Computer Science, 1999.

Declaration

I declare that the work described in this dissertation is, except where otherwise stated, entirely my own work and has not been submitted as an exercise for a degree at this or any other university. Signed: John D. Fogarty, 15th September, 1999.

Permission to lend and/or copy

I agree that Trinity College Library may lend or copy this dissertation upon request. Signed: John D. Fogarty, 15th September, 1999.

Summary

This project addresses the lack of system administration tools for Linux clusters. The goals of the project were to design and implement an extensible system that would facilitate the administration and configuration of a Linux cluster. Cluster systems are inherently scalable and therefore the cluster administration tool should also scale well to facilitate the addition of new nodes to the cluster. The tool allows the administration and configuration of the entire cluster from a single node. Administration of the cluster is simplified by way of command replication across one, some or all nodes. Configuration of the cluster is made possible through the use of a flexible variable substitution scheme, which allows common configuration files to reflect differences between nodes. The system uses a GUI and is intuitively simple to use. Extensibility is incorporated into the system by allowing the dynamic addition of new commands and output display types.

Musings (Rik Farrow, Opinion)

Musings. Rik Farrow. Opinion.

Rik is the editor of ;login:. [email protected]

While preparing this issue of ;login:, I found myself falling down a rabbit hole, like Alice in Wonderland. And when I hit bottom, all I could do was look around and puzzle about what I discovered there. My adventures started with a casual comment, made by an ex-Cray Research employee, about the design of current supercomputers. He told me that today’s supercomputers cannot perform some of the tasks that they are designed for, and used weather forecasting as his example. I was stunned. Could this be true? Or was I just being dragged down some fictional rabbit hole? I decided to learn more about supercomputer history.

Supercomputers

It is humbling to learn about the early history of computer design. Things we take for granted, such as pipelining instructions and vector processing, were important inventions in the 1970s. The first supercomputers were built from discrete components (that is, transistors soldered to circuit boards) and had clock cycle times in the tens of nanoseconds. To put that in real terms, the Control Data Corporation’s (CDC) 7600 had a clock cycle of 27.5 ns, or in today’s terms, 36.4 MHz. This was CDC’s second supercomputer (the 6600 was first), but included instruction pipelining, an invention of Seymour Cray. The CDC 7600 peaked at 36 MFLOPS, but generally got 10 MFLOPS with carefully tuned code. The other cool thing about the CDC 7600 was that it broke down at least once a day.
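The clock and throughput figures quoted in this excerpt for the CDC 7600 can be checked with a few lines of arithmetic. The sketch below is only an illustration: the function name `period_ns_to_mhz` and the variable names are made up for this example, and the inputs are the excerpt's own numbers (a 27.5 ns cycle, 36 MFLOPS peak, roughly 10 MFLOPS sustained).

```python
def period_ns_to_mhz(period_ns: float) -> float:
    """Convert a clock period in nanoseconds to a frequency in MHz."""
    if period_ns <= 0:
        raise ValueError("clock period must be positive")
    # f [Hz] = 1 / T [s]; with T given in ns this becomes f [MHz] = 1000 / T.
    return 1000.0 / period_ns


# Figures taken from the excerpt (not independently verified here).
cdc7600_period_ns = 27.5
peak_mflops = 36.0
sustained_mflops = 10.0

cdc7600_mhz = period_ns_to_mhz(cdc7600_period_ns)
sustained_fraction = sustained_mflops / peak_mflops

print(f"clock frequency: {cdc7600_mhz:.1f} MHz")        # 36.4 MHz
print(f"sustained / peak: {sustained_fraction:.0%}")    # 28%
```

By these numbers the peak rate works out to about one floating-point result per clock cycle, and tuned code reached a little over a quarter of that peak.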