Fairness, Learning and Efficiency in Markets with Budgeted Agents

Total Page:16

File Type:pdf, Size:1020Kb


Recommended publications


Notices of the American Mathematical Society

ISSN 0002-9920. Notices of the American Mathematical Society, February 2006, Volume 53, Number 2. Math Circles and Olympiads: MSRI Asks: Is the U.S. Coming of Age? page 200. A System of Axioms of Set Theory for the Rationalists, page 206. Durham Meeting, page 299. San Francisco Meeting, page 302. ICM Madrid 2006 (see page 213). [Advertisement screenshot: To make an animated tube plot; Animated Tube Plot.] Scientific Word ... version 5.5. Scientific Word offers the same features as Scientific WorkPlace, without the computer algebra system. INTERNATIONAL MATHEMATICS RESEARCH PAPERS http://www.hindawi.com/journals/imrp/ IMRP provides very fast publication of lengthy research articles of high current interest in all areas of mathematics. All articles are fully refereed and are judged by their contribution to the advancement of the state of the science of mathematics. Issues are published as frequently as necessary. Each issue will contain only one article. IMRP is expected to publish 400± pages in 2006. Articles of at least 50 pages are welcome and all articles are refereed and judged for correctness, interest, originality, depth, and applicability. Submissions are made by e-mail to [email protected]. Editors: Morris Weisfeld (Editor-in-Chief), Enrico Arbarello, Joseph Bernstein, Enrico Bombieri, Richard E. Borcherds, Alexei Borodin, Jean Bourgain, Marc Burger, James W. Cogdell, Tobias Colding, Corrado De Concini, Percy Deift, Robbert Dijkgraaf, S. K. Donaldson, Yakov Eliashberg, Edward Frenkel, Emmanuel Hebey, Dennis Hejhal

Strength in Numbers: the Rising of Academic Statistics Departments In

Alan Agresti · Xiao-Li Meng, Editors. Strength in Numbers: The Rising of Academic Statistics Departments in the U.S. Editors: Alan Agresti, Department of Statistics, University of Florida, Gainesville, FL, USA; Xiao-Li Meng, Department of Statistics, Harvard University, Cambridge, MA, USA. ISBN 978-1-4614-3648-5 ISBN 978-1-4614-3649-2 (eBook) DOI 10.1007/978-1-4614-3649-2 Springer New York Heidelberg Dordrecht London Library of Congress Control Number: 2012942702 © Springer Science+Business Media New York 2013 This work is subject to copyright. All rights are reserved by the Publisher, whether the whole or part of the material is concerned, specifically the rights of translation, reprinting, reuse of illustrations, recitation, broadcasting, reproduction on microfilms or in any other physical way, and transmission or information storage and retrieval, electronic adaptation, computer software, or by similar or dissimilar methodology now known or hereafter developed. Exempted from this legal reservation are brief excerpts in connection with reviews or scholarly analysis or material supplied specifically for the purpose of being entered and executed on a computer system, for exclusive use by the purchaser of the work. Duplication of this publication or parts thereof is permitted only under the provisions of the Copyright Law of the Publisher’s location, in its current version, and permission for use must always be obtained from Springer.

Prizes and Awards Session

PRIZES AND AWARDS SESSION Wednesday, July 12, 2021 9:00 AM EDT 2021 SIAM Annual Meeting July 19 – 23, 2021 Held in Virtual Format. Table of Contents: AWM-SIAM Sonia Kovalevsky Lecture; George B. Dantzig Prize; George Pólya Prize for Mathematical Exposition; George Pólya Prize in Applied Combinatorics; I.E. Block Community Lecture; John von Neumann Prize; Lagrange Prize in Continuous Optimization; Ralph E. Kleinman Prize; SIAM Prize for Distinguished Service to the Profession; SIAM Student Paper Prizes

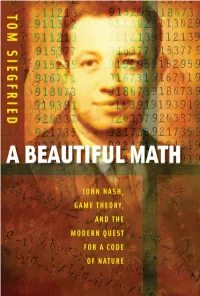

A Beautiful Math : John Nash, Game Theory, and the Modern Quest for a Code of Nature / Tom Siegfried

A BEAUTIFUL MATH: JOHN NASH, GAME THEORY, AND THE MODERN QUEST FOR A CODE OF NATURE TOM SIEGFRIED JOSEPH HENRY PRESS Washington, D.C. Joseph Henry Press • 500 Fifth Street, NW • Washington, DC 20001 The Joseph Henry Press, an imprint of the National Academies Press, was created with the goal of making books on science, technology, and health more widely available to professionals and the public. Joseph Henry was one of the founders of the National Academy of Sciences and a leader in early American science. Any opinions, findings, conclusions, or recommendations expressed in this volume are those of the author and do not necessarily reflect the views of the National Academy of Sciences or its affiliated institutions. Library of Congress Cataloging-in-Publication Data Siegfried, Tom, 1950- A beautiful math : John Nash, game theory, and the modern quest for a code of nature / Tom Siegfried. — 1st ed. p. cm. Includes bibliographical references and index. ISBN 0-309-10192-1 (hardback) — ISBN 0-309-65928-0 (pdfs) 1. Game theory. I. Title. QA269.S574 2006 519.3—dc22 2006012394 Copyright 2006 by Tom Siegfried. All rights reserved. Printed in the United States of America. Preface Shortly after 9/11, a Russian scientist named Dmitri Gusev proposed an explanation for the origin of the name Al Qaeda. He suggested that the terrorist organization took its name from Isaac Asimov’s famous 1950s science fiction novels known as the Foundation Trilogy. After all, he reasoned, the Arabic word “qaeda” means something like “base” or “foundation.” And the first novel in Asimov’s trilogy, Foundation, apparently was titled “al-Qaida” in an Arabic translation.

Brief 2007.Pdf

Brief (1-10-2007) Read Brief on the Web at http://www.umn.edu/umnnews/Publications/Brief/Brief_01102007.html . Vol. XXXVII No. 1; Jan. 10, 2007 Editor: Gayla Marty, [email protected] INSIDE THIS ISSUE --Regents approved UMTC stadium design and revised cost Jan. 3. --CAPA communications survey is now open: P&A staff invited to participate. --Founding director of the new campuswide UMTC honors program is Serge Rudaz. --People: VP for university relations Karen Himle began Jan. 9, and more. Campus Announcements and Events University-wide | Crookston | Duluth | Morris | Rochester | Twin Cities THE BOARD OF REGENTS APPROVED A TCF BANK STADIUM DESIGN for UMTC in a special session Jan. 3. The 50,000-seat stadium will be a blend of brick, stone, and glass in a traditional collegiate horseshoe shape, open to the downtown Minneapolis skyline, with the potential to expand to 80,000 seats. A revised cost of $288.5 million was also approved--an addition of $39.8 million to be financed without added expense to taxpayers, students, or the U's academic mission. Read more at http://www.umn.edu/umnnews/Feature_Stories/Regents_approve_stadium_design.html . COUNCIL OF ACADEMIC PROFESSIONALS AND ADMINISTRATORS (CAPA): The 2006-07 CAPA communications survey is the central way for CAPA to improve communications with U academic professional and administrative (P&A) staff statewide. Committee chair John Borchert urges P&A staff to participate. Read more and link to the survey at http://www.umn.edu/umnnews/Faculty_Staff_Comm/Council_of_Academic_Professionals_and_Administrators/Survey_begins.html . FOUNDING DIRECTOR OF THE NEW UMTC HONORS PROGRAM is Serge Rudaz, professor and director of undergraduate studies, School of Physics and Astronomy.

Jennifer Tour Chayes March 2016 CURRICULUM VITAE Office

Jennifer Tour Chayes March 2016 CURRICULUM VITAE Office Address: Microsoft Research New England 1 Memorial Drive Cambridge, MA 02142 e-mail: [email protected] Personal: Born September 20, 1956, New York, New York Education: 1979 B.A., Physics and Biology, Wesleyan University 1983 Ph.D., Mathematical Physics, Princeton University Positions: 1983–1985 Postdoctoral Fellow in Mathematical Physics, Departments of Mathematics and Physics, Harvard University 1985–1987 Postdoctoral Fellow, Laboratory of Atomic and Solid State Physics and Mathematical Sciences Institute, Cornell University 1987–1990 Associate Professor, Department of Mathematics, UCLA 1990–2001 Professor, Department of Mathematics, UCLA 1997–2005 Senior Researcher and Head, Theory Group, Microsoft Research 1997–2008 Affiliate Professor, Dept. of Physics, U. Washington 1999–2008 Affiliate Professor, Dept. of Mathematics, U. Washington 2005–2008 Principal Researcher and Research Area Manager for Mathematics, Theoretical Computer Science and Cryptography, Microsoft Research 2008– Managing Director, Microsoft Research New England 2010– Distinguished Scientist, Microsoft Corporation 2012– Managing Director, Microsoft Research New York City Long-Term Visiting Positions: 1994-95, 1997 Member, Institute for Advanced Study, Princeton 1995, May–July ETH, Zürich 1996, Sept.–Dec. AT&T Research, New Jersey Awards and Honors: 1977 Johnston Prize in Physics, Wesleyan University 1979 Graham Prize in Natural Sciences & Mathematics, Wesleyan University 1979 Graduated 1st in Class, Summa Cum Laude,

AMS Officers and Committee Members

Officers and Committee Members Numbers to the left of headings are used as points of reference 1.1. Liaison Committee in an index to AMS committees which follows this listing. Primary All members of this committee serve ex officio. and secondary headings are: Chair Felix E. Browder 1. Officers Michael G. Crandall 1.1. Liaison Committee Robert J. Daverman 2. Council John M. Franks 2.1. Executive Committee of the Council 3. Board of Trustees 4. Committees 4.1. Committees of the Council 2. Council 4.2. Editorial Committees 4.3. Committees of the Board of Trustees 2.0.1. Officers of the AMS 4.4. Committees of the Executive Committee and Board of President Felix E. Browder 2000 Trustees Immediate Past President 4.5. Internal Organization of the AMS Arthur M. Jaffe 1999 4.6. Program and Meetings Vice Presidents James G. Arthur 2001 4.7. Status of the Profession Jennifer Tour Chayes 2000 4.8. Prizes and Awards H. Blaine Lawson, Jr. 1999 Secretary Robert J. Daverman 2000 4.9. Institutes and Symposia Former Secretary Robert M. Fossum 2000 4.10. Joint Committees Associate Secretaries* John L. Bryant 2000 5. Representatives Susan J. Friedlander 1999 6. Index Bernard Russo 2001 Terms of members expire on January 31 following the year given Lesley M. Sibner 2000 unless otherwise specified. Treasurer John M. Franks 2000 Associate Treasurer B. A. Taylor 2000 2.0.2. Representatives of Committees Bulletin Donald G. Saari 2001 1. Officers Colloquium Susan J. Friedlander 2001 Executive Committee John B. Conway 2000 President Felix E.

Biographies of Candidates 1997

Biographies of Candidates 1997 Biographical information about the candidates has been verified by the candidates, although in a few instances prior travel arrangements of the candidate at the time of assembly of the information made communication difficult or impossible. A candidate had the opportunity to make a statement of not more than 200 words on any subject matter without restriction and to list up to five of her or his research papers. Abbreviations: American Association for the Advancement of Science (AAAS); American Mathematical Society (AMS); American Statistical Association (ASA); Association for Computing Machinery (ACM); Association for Symbolic Logic (ASL); Association for Women in Mathematics (AWM); Canadian Mathematical Society, Société Mathématique du Canada (CMS); Conference Board of the Mathematical Sciences (CBMS); Institute of Mathematical Statistics (IMS); International Mathematical Union (IMU); London Mathematical Society (LMS); Mathematical Association of America (MAA); National Academy of Sciences President-Elect Srinivasa S.R. Varadhan Professor of Mathematics, Courant Institute of Mathematical Sciences, New York University. Born: January 2, 1940, Madras, India. Ph.D.: Indian Statistical Institute, Calcutta, 1963. AMS Committees: Committee to Select Hour Speakers for Eastern Sectional Meetings, 1985–1986 (chair, 1986); Committee on Special Donations of Publications, 1996– ; Committee on Publications, 1997– ; AMS-SIAM Committee to Select the Winner of the

SIAM Unwrapped – August 2015 This Issue of Unwrapped Brought to You with Partial Support From

SIAM Unwrapped – August 2015 This issue of Unwrapped brought to you with partial support from: Dear SIAM members, It’s that time of year again! SIAM will elect members to its Board and Council, as well as several officers including President-elect, Vice President at Large, and Secretary, starting in September. Be sure to vote and make your voices heard on who will make important decisions for our community. On September 1, look for an e-mail message from the “SIAM Election Coordinator” ([email protected]) with your unique voting credentials and a link to the voting site. Regards, Karthika Swamy Cohen Editor Contents: SIAM HQ UPDATE - SIAM Prizes at ICIAM 2015 - Sign up for SIAM News alerts PUBLIC AWARENESS - Moody’s Mega Math Challenge goes national - What is on SIAM’s YouTube channel? - Math Matters in many different ways PUBLISHING NEWS & NOTES - Do you have undergraduate student research worthy of publishing? - New SIAM Books - Finite Dimensional Linear Systems - The Shapes of Things: A Practical Guide to Differential Geometry and the Shape Derivative - Spline Functions: Computational Methods UPDATES ON CONFERENCES & PRIZES - 2016 Gene Golub SIAM Summer School - SIAM conference registrations & submissions - Prize nomination deadlines SIAM HQ UPDATE SIAM Prizes at ICIAM 2015 Will you be attending the 8th International Congress on Industrial and Applied Mathematics (ICIAM 2015) in Beijing next week? As is customary, SIAM will award several of its major prizes at the Prizes and Awards Luncheon to be held at the Congress. The prize luncheon will take place 12:00-1:30 p.m. on Thursday, August 13, as part of the meeting.

Celis Washington 0250E 10366.Pdf (969.5Kb)

Information Economics in the Age of e-Commerce: Models and Mechanisms for Information-Rich Markets L. Elisa Celis A dissertation submitted in partial fulfillment of the requirements for the degree of Doctor of Philosophy University of Washington 2012 Program Authorized to Offer Degree: Computer Science and Engineering In presenting this dissertation in partial fulfillment of the requirements for the doctoral degree at the University of Washington, I agree that the Library shall make its copies freely available for inspection. I further agree that extensive copying of this dissertation is allowable only for scholarly purposes, consistent with "fair use" as prescribed in the U.S. Copyright Law. Requests for copying or reproduction of this dissertation may be referred to Proquest Information and Learning, 300 North Zeeb Road, Ann Arbor, MI 48106-1346, 1-800-521-0600, to whom the author has granted "the right to reproduce and sell (a) copies of the manuscript in microform and/or (b) printed copies of the manuscript made from microform." Signature Date University of Washington Abstract Information Economics in the Age of e-Commerce: Models and Mechanisms for Information-Rich Markets L. Elisa Celis Co-Chairs of the Supervisory Committee: Professor Anna R. Karlin Computer Science and Engineering Senior Researcher Yuval Peres Microsoft Research The internet has dramatically changed the landscape of both markets and computation with the advent of electronic commerce (e-commerce). It has accelerated transactions, informed buyers, and allowed interactions to be computerized, enabling unprecedented sophistication and complexity. Since the environment consists of multiple owners of a wide variety of resources, it is a distributed problem where participants behave strategically and selfishly.

Report Idea 1

Annual Report 2005: Innovation and the Integrity of Science The American Association for the Advancement of Science (AAAS) is the world’s largest general scientific society, and publisher of the journal, Science (www.sciencemag.org). AAAS was founded in 1848, and serves 262 affiliated societies and academies of science, reaching 10 million individuals. Science has the largest paid circulation of any peer-reviewed general science journal in the world, with an estimated total readership of 1 million. The non-profit AAAS (www.aaas.org) is open to all and fulfills its mission to “advance science and serve society” through initiatives in science policy; international programs; science education; and more. For the latest research news, log onto EurekAlert!, www.eurekalert.org, the premier science-news Web site, a service of AAAS. Table of Contents: Welcome Letter; Evolution — 2005 Chronology; Science Policy and Security; International Impacts; Science Education and Careers; Science Breakthroughs; Engaging the Public; AAAS Awards; Golden Fund Update; AAAS Fellows; Acknowledgement of Contributors; Financial Summary for 2005; AAAS Board of Directors, Officers and Information. Year in Review: 2005 WELCOME FROM THE CHAIR, SHIRLEY ANN JACKSON, AND THE CEO, ALAN I. LESHNER In a global economy, our prosperity, safety, and overall well-being depend more than ever upon our capacity for innovation. Geopolitical tensions, exacerbated by an uncertain energy future, underscore the need to step up the pace of fundamental scientific discovery. In the United States, unfortunately, a quiet crisis confronts us as scientists and engineers are retiring in record numbers and too few students enter the pipeline.

Archn&S Jul 0 8 2013

Dynamic Strategic Interactions: Analysis and Mechanism Design by Utku Ozan Candogan B.S., Electrical and Electronics Engineering, Bilkent University (2007) M.S., Electrical Engineering and Computer Science, Massachusetts Institute of Technology (2009) Submitted to the Department of Electrical Engineering and Computer Science in partial fulfillment of the requirements for the degree of Doctor of Philosophy at the MASSACHUSETTS INSTITUTE OF TECHNOLOGY June 2013 © Massachusetts Institute of Technology 2013. All rights reserved. Author: Department of Electrical Engineering and Computer Science, May 22, 2013. Certified by: Asuman Ozdaglar, Professor, Thesis Supervisor. Certified by: Pablo A. Parrilo, Professor, Thesis Supervisor. Accepted by: Leslie A. Kolodziejski, Professor, Chair, Department Committee on Graduate Students. Dynamic Strategic Interactions: Analysis and Mechanism Design by Utku Ozan Candogan Submitted to the Department of Electrical Engineering and Computer Science on May 22, 2013, in partial fulfillment of the requirements for the degree of Doctor of Philosophy Abstract Modern systems, such as engineering systems with autonomous entities, markets, and financial networks, consist of self-interested agents with potentially conflicting objectives. These agents interact in a dynamic manner, modifying their strategies over time to improve their payoffs. The presence of self-interested agents in such systems necessitates the analysis of the impact of multi-agent decision making on the overall system, and the design of new systems with improved performance guarantees.
Motivated by this observation, in the first part of this thesis we focus on fundamental structural properties of games, and exploit them to provide a new framework for analyzing the limiting behavior of strategy update rules in various game-theoretic settings.