Accelerating Bandwidth-Bound Deep Learning Inference with Main-Memory Accelerators

Total Page:16

File Type:pdf, Size:1020Kb


Recommended publications

AMD Athlon™ Processor X86 Code Optimization Guide

AMD Athlon™ Processor x86 Code Optimization Guide © 2000 Advanced Micro Devices, Inc. All rights reserved. The contents of this document are provided in connection with Advanced Micro Devices, Inc. (“AMD”) products. AMD makes no representations or warranties with respect to the accuracy or completeness of the contents of this publication and reserves the right to make changes to specifications and product descriptions at any time without notice. No license, whether express, implied, arising by estoppel or otherwise, to any intellectual property rights is granted by this publication. Except as set forth in AMD’s Standard Terms and Conditions of Sale, AMD assumes no liability whatsoever, and disclaims any express or implied warranty, relating to its products including, but not limited to, the implied warranty of merchantability, fitness for a particular purpose, or infringement of any intellectual property right. AMD’s products are not designed, intended, authorized or warranted for use as components in systems intended for surgical implant into the body, or in other applications intended to support or sustain life, or in any other application in which the failure of AMD’s product could create a situation where personal injury, death, or severe property or environmental damage may occur. AMD reserves the right to discontinue or make changes to its products at any time without notice. Trademarks: AMD, the AMD logo, AMD Athlon, K6, 3DNow!, and combinations thereof, AMD-751, K86, and Super7 are trademarks, and AMD-K6 is a registered trademark of Advanced Micro Devices, Inc. Microsoft, Windows, and Windows NT are registered trademarks of Microsoft Corporation.

Analyzing the Benefits of Scratchpad Memories for Scientific Matrix

The Pennsylvania State University, The Graduate School, Department of Computer Science and Engineering. ANALYZING THE BENEFITS OF SCRATCHPAD MEMORIES FOR SCIENTIFIC MATRIX COMPUTATIONS. A Thesis in Computer Science and Engineering by Bryan Alan Cover. © 2008 Bryan Alan Cover. Submitted in Partial Fulfillment of the Requirements for the Degree of Master of Science, May 2008. The thesis of Bryan Alan Cover was reviewed and approved* by the following: Mary Jane Irwin, Evan Pugh Professor of Computer Science and Engineering, Thesis Co-Advisor; Padma Raghavan, Professor of Computer Science and Engineering, Thesis Co-Advisor; Raj Acharya, Professor of Computer Science and Engineering, Head of the Department of Computer Science and Engineering. *Signatures are on file in the Graduate School. Abstract: Scratchpad memories (SPMs) have been shown to be more energy efficient, have faster access times, and take up less area than traditional hardware-managed caches. This, coupled with the predictability of data presence and reduced thermal properties, makes SPMs an attractive alternative to cache for many scientific applications. In this work, SPM based systems are considered for a variety of different functions. The first study performed is to analyze SPMs for their thermal and area properties on a conventional RISC processor. Six performance optimized variants of architecture are explored that evaluate the impact of having an SPM in the on-chip memory hierarchy. Increasing the performance and energy efficiency of both dense and sparse matrix-vector multiplication on a chip multi-processor are also looked at. The efficient utilization of the SPM is ensured by profiling the application for the data structures which do not perform well in traditional cache.

Memory Architectures 13 Preeti Ranjan Panda

Memory Architectures (Chapter 13), Preeti Ranjan Panda. P.R. Panda, Department of Computer Science and Engineering, Indian Institute of Technology Delhi, New Delhi, India, e-mail: [email protected]. © Springer Science+Business Media Dordrecht 2017. S. Ha, J. Teich (eds.), Handbook of Hardware/Software Codesign, DOI 10.1007/978-94-017-7267-9_14. Abstract: In this chapter we discuss the topic of memory organization in embedded systems and Systems-on-Chips (SoCs). We start with the simplest hardware-based systems needing registers for storage and proceed to hardware/software codesigned systems with several standard structures such as Static Random-Access Memory (SRAM) and Dynamic Random-Access Memory (DRAM). In the process, we touch upon concepts such as caches and Scratchpad Memories (SPMs). In general, the emphasis is on concepts that are more generally found in SoCs and less on general-purpose computing systems, although this distinction is not very clearly defined with respect to the memory subsystem. We touch upon implementations of these ideas in modern research and commercial scenarios. In this chapter, we also point out issues arising in the context of the memory architectures that become exported as problems to be addressed by the compiler and system designer. Acronyms: ALU, Arithmetic-Logic Unit; ARM, Advanced Risc Machines; CGRA, Coarse Grained Reconfigurable Architecture; CPU, Central Processing Unit; DMA, Direct Memory Access; DRAM, Dynamic Random-Access Memory; GPGPU, General-Purpose computing on Graphics Processing Units; GPU, Graphics Processing Unit; HDL, Hardware Description Language; PCM, Phase Change Memory; SoC, System-on-Chip; SPM, Scratchpad Memory; SRAM, Static Random-Access Memory; STT-RAM, Spin-Transfer Torque Random-Access Memory; SWC, Software Cache. Contents: 13.1 Motivating the Significance of Memory

Benchmark Evaluations of Modern Multi-Processor VLSI DSPµPs

Session 1220. Benchmark Evaluations of Modern Multi-Processor VLSI DSPµPs. Aaron L. Robinson and Fred O. Simons, Jr., High-Performance Computing and Simulation (HCS) Research Laboratory, Electrical Engineering Department, Florida A&M University and Florida State University, Tallahassee, FL 32316-2175. Abstract: The authors continue their tradition of presenting technical reviews and performance comparisons of the newest multi-processor VLSI DSPµPs with the intention of providing concise focused analyses designed to help established or aspiring DSP analysts evaluate the applicability of new DSP technology to their specific applications. As in the past, the Analog Devices SHARC™ and Texas Instruments TMS320C80 families of DSPµPs will be the focus of our presentation because these manufacturers continue to push the envelope of new DSPµP (Digital Signal Processing microProcessor) development. However, in addition to the standard performance analyses and benchmark evaluations, the authors will present a new image-processing benchmarking technique designed specifically for evaluating new DSPµP image processing capabilities. 1. Introduction. The combination of constantly evolving DSP algorithm development and continually advancing DSPµP hardware has formed the basis for an exponential growth in DSP applications. The increased market demand for these applications and their required hardware has resulted in the production of DSPµPs with very powerful components capable of performing a wide range of complicated operations. Due to the complicated architectures of these new processors, it would be time consuming for even experienced DSP analysts to review and evaluate these new DSPµPs. Furthermore, inexperienced DSP analysts would find it even more time consuming, and possibly very difficult, to appreciate the significance of and opportunities for these new components.

(12) Patent Application Publication (10) Pub. No.: US 2011/0231717 A1 HUR Et Al

US 20110231717A1 (19) United States (12) Patent Application Publication (10) Pub. No.: US 2011/0231717 A1, HUR et al. (43) Pub. Date: Sep. 22, 2011. (54) SEMICONDUCTOR MEMORY DEVICE. (76) Inventors: Hwang HUR, Kyoungki-do (KR); Chang-Ho Do, Kyoungki-do (KR); Jae-Bum Ko, Kyoungki-do (KR); Jin-Il Chung, Kyoungki-do (KR). (21) Appl. No.: 13/149,683. (22) Filed: May 31, 2011. Related U.S. Application Data: (62) Division of application No. 12/154,870, filed on May 28, 2008, now Pat. No. 7,979,758. (30) Foreign Application Priority Data: Feb. 29, 2008 (KR) 2008-0018761. Publication Classification: (52) U.S. Cl. 714/718; 714/E11.145. (57) ABSTRACT: Semiconductor memory device includes a cell array including a plurality of unit cells; and a test circuit configured to perform a built-in self-stress (BISS) test for detecting a defect by performing a plurality of internal operations including a write operation through an access to the unit cells using a plurality of patterns during a test procedure carried out at a wafer-level.

Gpgpus: Overview Pedagogically Precursor Concepts UNIVERSITY of ILLINOIS at URBANA-CHAMPAIGN

GPGPUs: Overview. Pedagogically precursor concepts. UNIVERSITY OF ILLINOIS AT URBANA-CHAMPAIGN. © 2018 L. V. Kale at the University of Illinois Urbana-Champaign

Precursor Concepts: Pedagogical
• Architectural elements that, at least pedagogically, are precursors to understanding GPGPUs
• SIMD and vector units (we have already seen those)
• Large scale “Hyperthreading” for latency tolerance
• Scratchpad memories
• High Bandwidth memory

Tera MTA, Sun UltraSPARC T1 (Niagara)
• Tera computers, with Burton Smith as a co-founder (1987)
• Precursor: HEP processor (Denelcor Inc.), 1982
• First machine was MTA (MTA-1, MTA-2, MTA-3)
• Basic idea: support a huge number of hardware threads (128), each with its own registers
• No cache!
• Switch among threads on every cycle, thus tolerating DRAM latency
• These threads could be running different processes
• Such processors are called “barrel processors” in literature
• But they switched to the “next thread” always, so your turn is 127 clocks away, always
• Was especially good for highly irregular accesses

Scratchpad memory
• Caches are complex and can cause unpredictable impact on performance
• Scratchpad memories are made from SRAM on chip
• Separate part of the address space
• As fast or faster than caches, because no tag-matching or associative search
• Need explicit instructions to bring data into scratchpad from memory
• Load/store instructions exist between registers and scratchpad
• Example: IBM/Toshiba/Sony Cell processor used in PS/3 (1 PPE and 8 SPE cores; each SPE core has 256 KiB scratchpad)
• DMA is the mechanism for moving data between scratchpad and external DRAM

High Bandwidth Memory
• As you increase compute capacity of processor chips, the bandwidth to DRAM comes under pressure
• Many past improvements (e.g.
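The barrel-processor behaviour described in the slides above (a fixed set of hardware threads, with a switch to the next thread on every cycle) can be pictured with a short sketch. This is only an illustrative model, not the MTA's actual issue logic: the function names `barrel_schedule` and `cycles_until_next_turn` are hypothetical, and the only figure taken from the slides is the count of 128 hardware threads.

```python
def barrel_schedule(num_threads, num_cycles):
    """Return the hardware-thread id that issues on each cycle.

    Assumption: strict round-robin, one instruction per cycle,
    with no thread ever skipped (a simplification for illustration).
    """
    return [cycle % num_threads for cycle in range(num_cycles)]


def cycles_until_next_turn(num_threads):
    """Number of other issue slots between two turns of the same thread."""
    return num_threads - 1


# 128 hardware threads, as in the slides.
schedule = barrel_schedule(128, 256)
gap = cycles_until_next_turn(128)
print(schedule[:4], schedule[128], gap)
```

Under this model a thread that issues a memory access has 127 other issue slots before it runs again, which is the sense in which the design tolerates DRAM latency without a cache.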

Computer Architecture Out-Of-Order Execution

Computer Architecture: Out-of-order Execution. By Yoav Etsion, with acknowledgement to Dan Tsafrir, Avi Mendelson, Lihu Rappoport, and Adi Yoaz. Computer Architecture 2013 – Out-of-Order Execution

The need for speed: Superscalar
• Remember our goal: minimize CPU Time. CPU Time = duration of clock cycle × CPI × IC
• So far we have learned that in order to: minimize clock cycle ⇒ add more pipe stages; minimize CPI ⇒ utilize pipeline; minimize IC ⇒ change/improve the architecture
• Why not make the pipeline deeper and deeper? Beyond some point, adding more pipe stages doesn’t help, because control/data hazards increase, and become costlier
• (Recall that in a pipelined CPU, CPI=1 only w/o hazards)
• So what can we do next? Reduce the CPI by utilizing ILP (instruction level parallelism). We will need to duplicate HW for this purpose…

A simple superscalar CPU
• Duplicates the pipeline to accommodate ILP (IPC > 1); ILP = instruction-level parallelism
• Note that duplicating HW in just one pipe stage doesn’t help, e.g., when having 2 ALUs, the bottleneck moves to other stages
• Conclusion: Getting IPC > 1 requires to fetch/decode/exe/retire >1 instruction per clock
(Figure: pipeline stages IF, ID, EXE, MEM, WB.)

Example: Pentium Processor
• Pentium fetches & decodes 2 instructions per cycle
• Before register file read, decide on pairing: can the two instructions be executed in parallel? (yes/no)
(Figure: IF, ID, u-pipe, v-pipe.)
• Pairing decision is based… On data
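The relation quoted in the slides above, CPU Time = duration of clock cycle × CPI × IC, can be made concrete with a small worked example. The numbers below (a 1 ns cycle, one million instructions, and an ideal dual-issue machine reaching CPI = 0.5) are hypothetical values chosen for illustration, and `cpu_time` is a helper name introduced here, not something taken from the course material.

```python
def cpu_time(cycle_time_ns, cpi, instruction_count):
    """CPU Time = duration of clock cycle x CPI x IC (result in nanoseconds)."""
    return cycle_time_ns * cpi * instruction_count


IC = 1_000_000  # hypothetical instruction count

# Scalar pipeline without hazards: CPI = 1.
scalar_ns = cpu_time(1.0, 1.0, IC)

# Idealised 2-wide superscalar: IPC = 2, so CPI = 0.5 (assumes perfect pairing).
superscalar_ns = cpu_time(1.0, 0.5, IC)

speedup = scalar_ns / superscalar_ns
print(scalar_ns, superscalar_ns, speedup)
```

With the clock cycle and instruction count held fixed, halving the CPI halves the CPU time, which is the motivation the slides give for exploiting ILP once deeper pipelining stops paying off.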

Hardware and Software Optimizations for GPU Resource Management

Hardware and Software Optimizations for GPU Resource Management. A Thesis Submitted in Partial Fulfillment of the Requirements for the Degree of Doctor of Philosophy by Vishwesh Jatala to the DEPARTMENT OF COMPUTER SCIENCE AND ENGINEERING, INDIAN INSTITUTE OF TECHNOLOGY KANPUR, INDIA, December, 2018. Abstract: Graphics Processing Units (GPUs) are widely adopted across various domains due to their massive thread level parallelism (TLP). The TLP that is present in the GPUs is limited by the number of resident threads, which in turn depends on the available resources in the GPUs, such as registers and scratchpad memory. Recent GPUs aim to improve the TLP, and consequently the throughput, by increasing the number of resources. Further, the improvements in semiconductor fabrication enable smaller feature sizes. However, for smaller feature sizes, the leakage power is a significant part of the total power consumption. In this thesis, we provide hardware and software solutions that aim towards the two problems of GPU design: improving throughput and reducing leakage energy. In the first work of the thesis, we focus on improving the performance of GPUs by effective resource management. In GPUs, resources (registers and scratchpad memory) are allocated at thread block level granularity; as a result, some of the resources may not be used up completely and hence will be wasted. We propose an approach that shares the resources of SM to utilize the wasted resources by launching more thread blocks in each SM. We show the effectiveness of our approach for two resources: registers and scratchpad memory (shared memory). On evaluating our approach experimentally with 19 kernels from several benchmark suites, we observed that kernels that underutilize register resource show an average improvement of 11% with register sharing.

Improving Memory Subsystem Performance Using ViVA: Virtual Vector Architecture

Improving Memory Subsystem Performance using ViVA: Virtual Vector Architecture. Joseph Gebis (1,2), Leonid Oliker (1,2), John Shalf (1), Samuel Williams (1,2), Katherine Yelick (1,2). (1) CRD/NERSC, Lawrence Berkeley National Laboratory, Berkeley, CA 94720. (2) CS Division, University of California at Berkeley, Berkeley, CA 94720. JGebis, LOliker, JShalf, SWWilliams, [email protected]. Abstract. The disparity between microprocessor clock frequencies and memory latency is a primary reason why many demanding applications run well below peak achievable performance. Software controlled scratchpad memories, such as the Cell local store, attempt to ameliorate this discrepancy by enabling precise control over memory movement; however, scratchpad technology confronts the programmer and compiler with an unfamiliar and difficult programming model. In this work, we present the Virtual Vector Architecture (ViVA), which combines the memory semantics of vector computers with a software-controlled scratchpad memory in order to provide a more effective and practical approach to latency hiding. ViVA requires minimal changes to the core design and could thus be easily integrated with conventional processor cores. To validate our approach, we implemented ViVA on the Mambo cycle-accurate full system simulator, which was carefully calibrated to match the performance on our underlying PowerPC Apple G5 architecture. Results show that ViVA is able to deliver significant performance benefits over scalar techniques for a variety of memory access patterns as well as two important memory-bound compact kernels, corner turn and sparse matrix-vector multiplication, achieving 2x–13x improvement compared to the scalar version. Overall, our preliminary ViVA exploration points to a promising approach for improving application performance on leading microprocessors with minimal design and complexity costs, in a power efficient manner.

Thread Scheduling in Multi-Core Operating Systems Redha Gouicem

Thread Scheduling in Multi-core Operating Systems. Redha Gouicem. To cite this version: Redha Gouicem. Thread Scheduling in Multi-core Operating Systems. Computer Science [cs]. Sorbonne Université, 2020. English. tel-02977242. HAL Id: tel-02977242, https://hal.archives-ouvertes.fr/tel-02977242. Submitted on 24 Oct 2020. HAL is a multi-disciplinary open access archive for the deposit and dissemination of scientific research documents, whether they are published or not. The documents may come from teaching and research institutions in France or abroad, or from public or private research centers. Ph.D thesis in Computer Science. Thread Scheduling in Multi-core Operating Systems: How to Understand, Improve and Fix your Scheduler. Redha GOUICEM, Sorbonne Université, Laboratoire d’Informatique de Paris 6, Inria Whisper Team. Ph.D. defense: 23 October 2020, Paris, France. Jury members: Mr. Pascal Felber, Full Professor, Université de Neuchâtel (Reviewer); Mr. Vivien Quéma, Full Professor, Grenoble INP (ENSIMAG) (Reviewer); Mr. Rachid Guerraoui, Full Professor, École Polytechnique Fédérale de Lausanne (Examiner); Ms. Karine Heydemann, Associate Professor, Sorbonne Université (Examiner); Mr. Etienne Rivière, Full Professor, University of Louvain (Examiner); Mr. Gilles Muller, Senior Research Scientist, Inria (Advisor); Mr. Julien Sopena, Associate Professor, Sorbonne Université (Advisor). Abstract: In this thesis, we address the problem of schedulers for multi-core architectures from several perspectives: design (simplicity and correctness), performance improvement and the development of application-specific schedulers.

Reconfigurable Accelerators in the World of General-Purpose Computing

Reconfigurable Accelerators in the World of General-Purpose Computing. Dissertation. A thesis submitted to the Faculty of Electrical Engineering, Computer Science and Mathematics of Paderborn University in partial fulfillment of the requirements for the degree of Dr. rer. nat. by Tobias Kenter. Paderborn, Germany, August 26, 2016. Acknowledgments: First and foremost, I would like to thank Prof. Dr. Christian Plessl for the advice and support during my research. As particularly helpful, I perceived his ability to communicate suggestions depending on the situation, either through open questions that give room to explore and learn, or through concrete recommendations that help to achieve results more directly. Special thanks go also to Prof. Dr. Marco Platzner for his advice and support. I profited especially from his experience and ability to systematically identify the essence of challenges and solutions. Furthermore, I would like to thank: • Prof. Dr. João M. P. Cardoso, for serving as external reviewer for my dissertation. • Prof. Dr. Friedhelm Meyer auf der Heide and Dr. Matthias Fischer for serving on my oral examination committee. • All colleagues with whom I had the pleasure to work at the PC2 and the Computer Engineering Group, researchers, technical and administrative staff. In a variation to one of our coffee kitchen puns, I’d like to state that research without colleagues is possible, but pointless. However, I’m not sure about the first part. • My long-time office mates Lars Schäfers and Alexander Boschmann for particularly extensive discussions on our research and far beyond. • Gavin Vaz, Heinrich Riebler and Achim Lösch for intensive and productive collaboration on joint research interests.

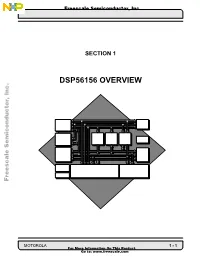

DSP56156 Overview

Freescale Semiconductor, Inc. MOTOROLA. SECTION 1: DSP56156 OVERVIEW. For More Information On This Product, Go to: www.freescale.com. SECTION CONTENTS: 1.1 INTRODUCTION; 1.2 DSP56100 CORE BLOCK DIAGRAM DESCRIPTION; 1.3 MEMORY ORGANIZATION; 1.4 EXTERNAL BUS, I/O, AND ON-CHIP PERIPHERALS; 1.5 OnCE; 1.6 PROGRAMMING MODEL; 1.7 INSTRUCTION SET SUMMARY. 1.1 INTRODUCTION: This manual is intended to be used with the DSP56100 Family Manual (see Figure 1-1). The DSP56100 Family Manual provides a description of the components of the DSP56100 CORE processor (see Figure 1-2) which is common to all DSP56100 family processors (except for the DSP56116) and includes a detailed description of the basic DSP56100 CORE instruction set. The DSP56156 User’s Manual and DSP56166 User’s Manual (see Figure 1-1) provide a brief overview of the core processor and detailed descriptions of the memory and peripherals that are chip specific.