VERSE: Bridging Screen Readers and Voice Assistants for Enhanced

Total Page:16

File Type:pdf, Size:1020Kb

Recommended publications

Accessible Cell Phones

September 2014

It can be challenging for individuals with vision loss to find a cell phone that addresses preferences for functionality and accessibility. Some prefer a simple, easy-to-use cell phone that isn’t expensive or complicated, while others prefer a phone with a variety of applications. It is important to match the user's needs and capabilities to a cell phone with the features and applications they require. In regard to the accessibility of the cell phone, options to consider are:
• access to the status of your phone, such as time, date, signal and battery strength;
• options to access and manage your contacts;
• type of keypad or touch screen features;
• screen reader and magnification options (built in or by installation of additional screen-access software);
• quality of the display (options for font enlargement and backlighting adjustment).

Because cell phone technology changes constantly, new models frequently come on the market. A wide variety of features on today’s cell phones allow them to be used as web browsers, for email, multimedia messaging and much more. Section 255 of the Communications Act, as amended by the Telecommunications Act of 1996, requires that cell phone manufacturers and service providers do all that is “readily achievable” to make each product or service accessible.

iPhone

The iPhone is an Apple product. The VoiceOver screen reader is a standard feature of the phone. Using a touch screen, a virtual keyboard and a voice control feature called Siri, the iPhone can handle email, web browsing, and text messaging, as well as function as a telephone. -

ABC's of iOS: A VoiceOver Manual for Toddlers and Beyond!

ABC’s of iOS: A VoiceOver Manual for Toddlers and Beyond! A collaboration between Diane Brauner, Educational Assistive Technology Consultant, COMS, and CNIB Foundation. Copyright © 2018 CNIB. All rights reserved, including the right to reproduce this manual or portions thereof in any form whatsoever without permission. For information, contact [email protected].

Diane Brauner

Diane is an educational accessibility consultant collaborating with various educational groups and app developers. She splits her time between managing the Perkins eLearning website, Paths to Technology, presenting workshops on a national level and working on accessibility-related projects. Diane’s personal mission is to support developers and educators in creating and teaching accessible educational tools which enable students with visual impairments to flourish in the 21st century classroom. Diane has 25+ years as a Certified Orientation and Mobility Specialist (COMS), working primarily with preschool and school-age students. She also holds a Bachelor of Science in Rehabilitation and Elementary Education with certificates in Deaf and Severely Hard of Hearing and Visual Impairments.

CNIB

Celebrating 100 years in 2018, the CNIB Foundation is a non-profit organization driven to change what it is to be blind today. We work with the sight loss community in a number of ways, providing programs and powerful advocacy that empower people impacted by blindness to live their dreams and tear down barriers to inclusion. Through community consultations and in our day to -

An Explorative Customer Experience Study on Voice Assistant Services of a Swiss Tourism Destination

Athens Journal of Tourism - Volume 7, Issue 4, December 2020 – Pages 191-208 Resistance to Customer-driven Business Model Innovations: An Explorative Customer Experience Study on Voice Assistant Services of a Swiss Tourism Destination By Anna Victoria Rozumowski*, Wolfgang Kotowski± & Michael Klaas‡ For tourism, voice search is a promising tool with a considerable impact on tourist experience. For example, voice search might not only simplify the booking process of flights and hotels but also change local search for tourist information. Against this backdrop, our pilot study analyzes the current state of voice search in a Swiss tourism destination so that providers can benefit from those new opportunities. We conducted interviews with nine experts in Swiss tourism marketing. They agree that voice search offers a significant opportunity as a new and diverse channel in tourism. Moreover, this technology provides new marketing measures and a more efficient use of resources. However, possible threats to this innovation are data protection regulation and providers’ lack of skills and financial resources. Furthermore, the diversity of Swiss dialects pushes voice search to its limits. Finally, our study confirms that tourism destinations should cooperate to implement voice search within their touristic regions. In conclusion, following our initial findings from the sample destination, voice search remains of minor importance for tourist marketing in Switzerland as evident in the given low use of resources. Following this initial investigation of voice search in a Swiss tourism destination, we recommended conducting further qualitative interviews on tourists’ voice search experience in different tourist destinations. 
Keywords: Business model innovation, resistance to innovation, customer experience, tourism marketing, voice search, Swiss destination marketing, destination management Introduction Since innovations like big data and machine learning have already caused lasting changes in the interaction between companies and consumers (Shankar et al. -

The Nao Robot As a Personal Assistant

Ziye Zhou THE NAO ROBOT AS A PERSONAL ASSISTANT Information Technology 2016

FOREWORD I would like to take this opportunity to express my gratitude to everyone who has helped me. Dr. Yang Liu was my supervisor for this thesis. Without his help I could not have come so far or learned about Artificial Intelligence, and I would not understand the gap between me and other intelligent students in the world. Thank you for giving me the chance to go abroad and learn more. I would also like to thank all the teachers and staff at VAMK for their guidance, patience and unselfish dedication. Finally, thanks to my parents and all my friends. Love you all the time.

VAASAN AMMATTIKORKEAKOULU UNIVERSITY OF APPLIED SCIENCES Degree Program in Information Technology

ABSTRACT Author: Ziye Zhou. Title: The NAO Robot as a Personal Assistant. Year: 2016. Language: English. Pages: 55. Name of Supervisor: Yang Liu.

Voice recognition and Artificial Intelligence are popular research topics these days, and robots taking over physical and complicated labour from humans is a likely future trend. This thesis combines voice recognition with a web crawler so that the NAO robot can act as a voice assistant, helping people check information (train tickets and weather) on websites through voice interaction. The main research work is as follows: 1. Voice recognition, resolved by using the Google speech recognition API. 2. Web crawler, resolved by using Selenium to simulate the operation of web pages. 3. The connection and use of the NAO robot. -

VPAT™ for Apple iPad Pro (12.9-Inch)

VPAT™ for Apple iPad Pro (12.9-inch) The following Voluntary Product Accessibility information refers to the Apple iPad Pro (12.9-inch) running iOS 9 or later. For more information on the accessibility features of the iPad Pro and to learn more about iPad Pro features, visit http://www.apple.com/ipad-pro and http://www.apple.com/accessibility. iPad Pro (12.9-inch) referred to as iPad Pro below. VPAT™ Voluntary Product Accessibility Template Summary Table Criteria Supporting Features Remarks and Explanations Section 1194.21 Software Applications and Operating Systems Not applicable Section 1194.22 Web-based Internet Information and Applications Not applicable Does not apply—accessibility features consistent Section 1194.23 Telecommunications Products Please refer to the attached VPAT with standards nonetheless noted below. Section 1194.24 Video and Multi-media Products Not applicable Does not apply—accessibility features consistent Section 1194.25 Self-Contained, Closed Products Please refer to the attached VPAT with standards nonetheless noted below. Section 1194.26 Desktop and Portable Computers Not applicable Section 1194.31 Functional Performance Criteria Please refer to the attached VPAT Section 1194.41 Information, Documentation and Support Please refer to the attached VPAT iPad Pro (12.9-inch) VPAT (10.2015) Page 1 of 9 Section 1194.23 Telecommunications products – Detail Criteria Supporting Features Remarks and Explanations (a) Telecommunications products or systems which Not applicable provide a function allowing voice communication and which do not themselves provide a TTY functionality shall provide a standard non-acoustic connection point for TTYs. Microphones shall be capable of being turned on and off to allow the user to intermix speech with TTY use. -

International Journal of Information Movement

International Journal of Information Movement Vol.2 Issue III (July 2017) Website: www.ijim.in ISSN: 2456-0553 (online) Pages 85-92 INFORMATION RETRIEVAL AND WEB SEARCH Sapna Department of Library and Information Science Central University of Haryana Email: sapnasna121@gmail

Abstract The paper gives an overview of search techniques used for information retrieval on the web. It discusses the features of selected search engines and the search techniques available, with emphasis on advanced search techniques. A historic context is provided to illustrate the evolution of search engines in the semantic web era. The methodology used for the study is a review of literature related to various aspects of search engines and the search techniques available. In this digital era, library and information science professionals should be aware of the various search tools and techniques available so that they can provide relevant information to users in a timely and effective manner and satisfy the fourth law of library science, i.e. “Save the time of the user.”

Keywords: search engine, web search engine, semantic search, resource discovery, advanced search techniques, information retrieval.

1.0 Introduction Retrieval systems in libraries have historically been very efficient and effective, as they are strongly supported by cataloging for description and classification systems for organization of information. The same has continued even in the digital era, where online catalogs are maintained using library standards such as catalog codes, classification schemes, standard subject headings lists, subject thesauri, etc. However, the information resources in a given library are limited. With the rapid advancement of technology, a large amount of information is continuously being made available on the web in various forms such as text, multimedia, and other formats; however, retrieving relevant results from a web search engine is quite difficult. -

Google Search by Voice: a Case Study

Google Search by Voice: A case study Johan Schalkwyk, Doug Beeferman, Françoise Beaufays, Bill Byrne, Ciprian Chelba, Mike Cohen, Maryam Garret, Brian Strope Google, Inc. 1600 Amphitheatre Pkwy Mountain View, CA 94043, USA

1 Introduction Using our voice to access information has been part of science fiction ever since the days of Captain Kirk talking to the Star Trek computer. Today, with powerful smartphones and cloud based computing, science fiction is becoming reality. In this chapter we give an overview of Google Search by Voice and our efforts to make speech input on mobile devices truly ubiquitous. The explosion in recent years of mobile devices, especially web-enabled smartphones, has resulted in new user expectations and needs. Some of these new expectations are about the nature of the services - e.g., new types of up-to-the-minute information ("where's the closest parking spot?") or communications (e.g., "update my facebook status to 'seeking chocolate'"). There is also the growing expectation of ubiquitous availability. Users increasingly expect to have constant access to the information and services of the web. Given the nature of delivery devices (e.g., fit in your pocket or in your ear) and the increased range of usage scenarios (while driving, biking, walking down the street), speech technology has taken on new importance in accommodating user needs for ubiquitous mobile access - any time, any place, any usage scenario, as part of any type of activity. A goal at Google is to make spoken access ubiquitously available. We would like to let the user choose - they should be able to take it for granted that spoken interaction is always an option. -

Adriane-Manual – Wikibooks

Adriane-Manual

Notes to this wikibook

Target group: Users of the ADRIANE (http://knopper.net/knoppix-adriane/) system, as well as people who want to install or configure the system or provide training on it.

Learning: The user should be enabled to use the system independently, without sighted assistance, and to work productively with the installed programs and services. This book is a "reference book" in which the user can look up help for individual tasks. The technician gets instructions for installing and configuring the system so that it can be set up to meet the needs of the user. Trainers should be enabled to understand the system easily and to explain it to users, so that they can learn how to use it in a short time without help.

Contact: Klaus Knopper

Are co-authors currently wanted? Yes, in prior consultation with the contact person, to coordinate the writing of individual chapters.

Guidelines for co-authors: see above. A clear distinction between the 'technical part' and the 'user part' would be desirable.

Topic description: The user part of the book deals with the use of the programs that are included with Adriane, as well as the operation of the screen reader. The technical part explains the installation and configuration of Adriane, especially the connection of Braille displays, set-up of internet access, configuration of the mail program, etc.

Contents 1 Introduction 2 Working with Adriane 2.1 Start and help 2.2 Individual menu system 2.3 Voice output functions 2.4 Programs in Adriane 2.4.1 -

VizLens: A Robust and Interactive Screen Reader for Interfaces in the

VizLens: A Robust and Interactive Screen Reader for Interfaces in the Real World Anhong Guo1, Xiang ‘Anthony’ Chen1, Haoran Qi2, Samuel White1, Suman Ghosh1, Chieko Asakawa3, Jeffrey P. Bigham1 1Human-Computer Interaction Institute, 2Mechanical Engineering Department, 3Robotics Institute Carnegie Mellon University, 5000 Forbes Ave, Pittsburgh, PA 15213 {anhongg, xiangche, chiekoa, jbigham}@cs.cmu.edu, {haoranq, samw, sumang}@andrew.cmu.edu

ABSTRACT The world is full of physical interfaces that are inaccessible to blind people, from microwaves and information kiosks to thermostats and checkout terminals. Blind people cannot independently use such devices without at least first learning their layout, and usually only after labeling them with sighted assistance. We introduce VizLens, an accessible mobile application and supporting backend that can robustly and interactively help blind people use nearly any interface they encounter. VizLens users capture a photo of an inaccessible interface and send it to multiple crowd workers, who work in parallel to quickly label and describe elements of the interface to make subsequent computer vision easier.

INTRODUCTION The world is full of inaccessible physical interfaces. Microwaves, toasters and coffee machines help us prepare food; printers, fax machines, and copiers help us work; and checkout terminals, public kiosks, and remote controls help us live our lives. Despite their importance, few are self-voicing or have tactile labels. As a result, blind people cannot easily use them. Generally, blind people rely on sighted assistance either to use the interface or to label it with tactile markings. Tactile markings often cannot be added to interfaces on public devices, such as those in an office kitchenette or checkout kiosk at the grocery store, and static labels cannot make -

Turning VoiceOver on with Siri 1

Texas School for the Blind and Visually Impaired Outreach Programs
www.tsbvi.edu | 512.454.8631 | 1100 W. 45th St. | Austin, TX 78756

Coffee Hour - August 27, 2020
VoiceOver Basics on iOS
Presented by Carrie Farraje

Introduction:
Question: What is VoiceOver?
Answer: VoiceOver is screen reader software built into iOS devices such as iPads or iPhones. It gives an audio description of the screen that people with vision navigate with their eyes.
Note: There are multiple ways and gestures to access an iOS device with VoiceOver.

Important Terms
• iOS device: iPad, iPhone, Apple Watch
• home button: newer devices do not have a Home button
• settings: location of iOS setup and customization
• orientation: landscape or portrait
• app: application
• app icons: small picture that represents an app
• page: apps are in rows and columns on a page
• gestures: touches that create actions
• focus: what has the attention of VoiceOver
• page adjuster: to navigate to different pages
• dock: area on bottom of screen customized with commonly used apps
• status bar: where features such as time, date, battery are located
• control center: direct access to customizable settings

Turning VoiceOver on in Settings
1. Go to the Settings icon
2. Go to General
3. Go to Accessibility
4. Go to VoiceOver and tap it on
5. Press the Home button to go back to the home screen

Turning VoiceOver on with Accessibility Shortcut
1. Go to the Settings icon
2. Go to Accessibility
3. Go to Accessibility Shortcut (at the bottom of the screen)
4. Tap VoiceOver on
5. Turn VoiceOver on by pressing the Home button or the top side button (on newer devices) three times. -

Slide Rule: Making Mobile Touch Screens Accessible to Blind People Using Multi-Touch Interaction Techniques

Slide Rule: Making Mobile Touch Screens Accessible to Blind People Using Multi-Touch Interaction Techniques Shaun K. Kane,1 Jeffrey P. Bigham2 and Jacob O. Wobbrock1
1 The Information School, DUB Group, University of Washington, Seattle, WA 98195 USA, {skane, wobbrock}@u.washington.edu
2 Computer Science & Engineering, DUB Group, University of Washington, Seattle, WA 98195 USA, [email protected]

ABSTRACT Recent advances in touch screen technology have increased the prevalence of touch screens and have prompted a wave of new touch screen-based devices. However, touch screens are still largely inaccessible to blind users, who must adopt error-prone compensatory strategies to use them or find accessible alternatives. This inaccessibility is due to interaction techniques that require the user to visually locate objects on the screen. To address this problem, we introduce Slide Rule, a set of audio-based multi-touch interaction techniques that enable blind users to access touch screen applications. We describe the design of Slide Rule, our interaction techniques, and a user study in which 10 blind people used Slide Rule and a button-based Pocket PC screen reader. Results show that Slide Rule was significantly faster than the button-based system, and was preferred by 7 of 10 users. However, users made more errors when using Slide Rule than when using the more familiar button-based system.

Categories and Subject Descriptors:

Figure 1. Participant using Slide Rule on a multi-touch smartphone. Slide Rule uses audio output only and does not display information on the screen.

different interfaces on the same surface, such as a scrollable list, a QWERTY keyboard, or a telephone keypad. -

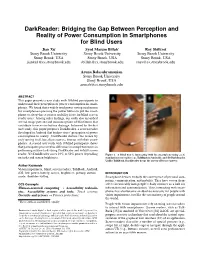

DarkReader: Bridging the Gap Between Perception and Reality of

DarkReader: Bridging the Gap Between Perception and Reality of Power Consumption in Smartphones for Blind Users
Jian Xu*, Stony Brook University, Stony Brook, USA, [email protected]
Syed Masum Billah*, Stony Brook University, Stony Brook, USA, [email protected]
Roy Shilkrot, Stony Brook University, Stony Brook, USA, [email protected]
Aruna Balasubramanian, Stony Brook University, Stony Brook, USA, [email protected]

ABSTRACT This paper presents a user study with 10 blind participants to understand their perception of power consumption in smartphones. We found that a widely used power saving mechanism for smartphones, pressing the power button to put the smartphone to sleep, has a serious usability issue for blind screen reader users. Among other findings, our study also unearthed several usage patterns and misconceptions of blind users that contribute to excessive battery drainage. Informed by the first user study, this paper proposes DarkReader, a screen reader developed in Android that bridges users’ perception of power consumption to reality. DarkReader darkens the screen by truly turning it off, but allows users to interact with their smartphones. A second user study with 10 blind participants shows that participants perceived no difference in completion times in performing routine tasks using DarkReader and default screen reader. Yet DarkReader saves 24% to 52% power depending on tasks and screen brightness.

Figure 1. A blind user is interacting with his smartphone using a (A) standard screen reader (e.g., TalkBack in Android), and (B) DarkReader. Unlike TalkBack, DarkReader keeps the screen off to save power.

Author Keywords Vision impairment, blind; screen readers, TalkBack, Android, iOS; low-power, battery, screen, brightness; privacy, curtain mode, shoulder-surfing.

INTRODUCTION