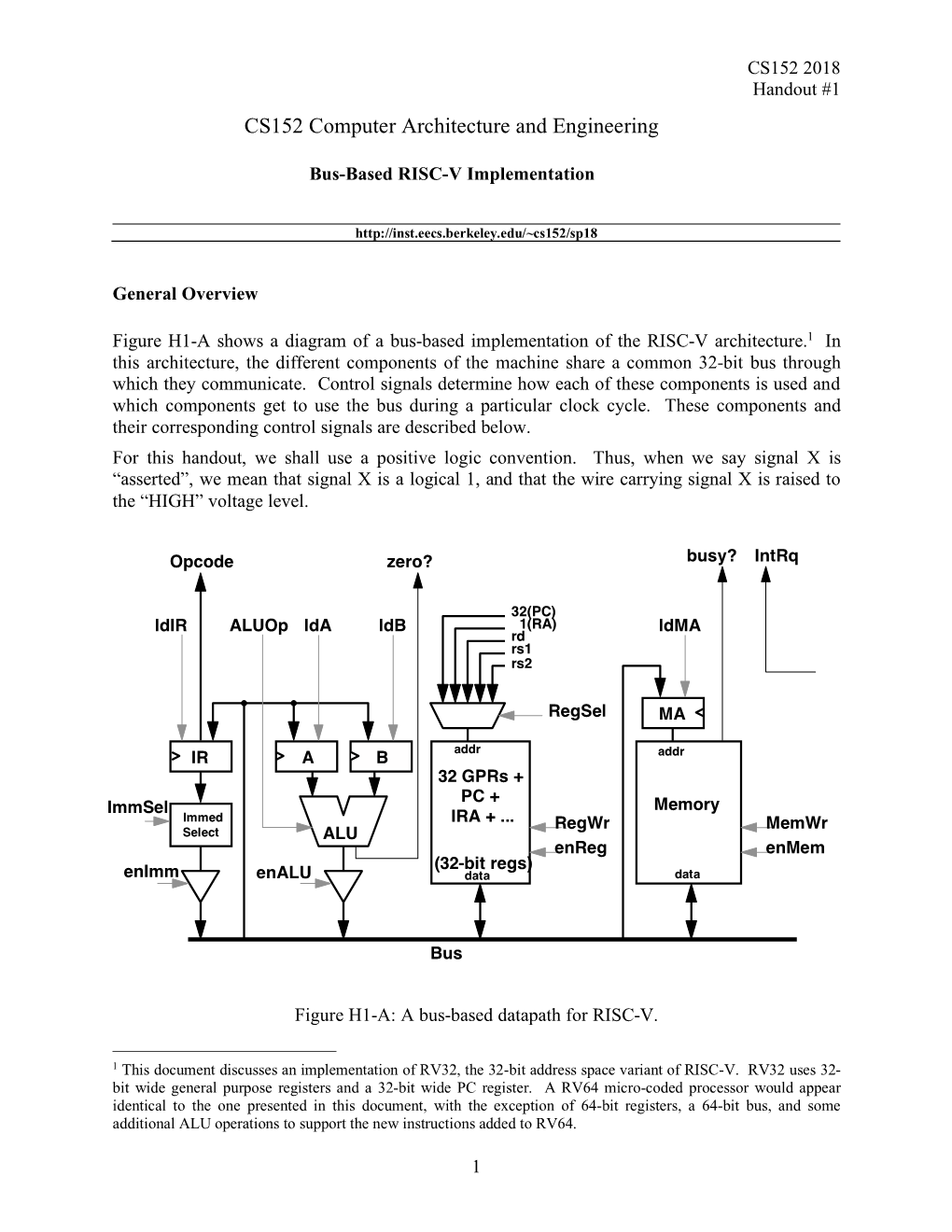

CS152 Computer Architecture and Engineering

Total Page:16

File Type:pdf, Size:1020Kb

Load more

Recommended publications

-

The Central Processing Unit(CPU). the Brain of Any Computer System Is the CPU

Computer Fundamentals 1'stage Lec. (8 ) College of Computer Technology Dept.Information Networks The central processing unit(CPU). The brain of any computer system is the CPU. It controls the functioning of the other units and process the data. The CPU is sometimes called the processor, or in the personal computer field called “microprocessor”. It is a single integrated circuit that contains all the electronics needed to execute a program. The processor calculates (add, multiplies and so on), performs logical operations (compares numbers and make decisions), and controls the transfer of data among devices. The processor acts as the controller of all actions or services provided by the system. Processor actions are synchronized to its clock input. A clock signal consists of clock cycles. The time to complete a clock cycle is called the clock period. Normally, we use the clock frequency, which is the inverse of the clock period, to specify the clock. The clock frequency is measured in Hertz, which represents one cycle/second. Hertz is abbreviated as Hz. Usually, we use mega Hertz (MHz) and giga Hertz (GHz) as in 1.8 GHz Pentium. The processor can be thought of as executing the following cycle forever: 1. Fetch an instruction from the memory, 2. Decode the instruction (i.e., determine the instruction type), 3. Execute the instruction (i.e., perform the action specified by the instruction). Execution of an instruction involves fetching any required operands, performing the specified operation, and writing the results back. This process is often referred to as the fetch- execute cycle, or simply the execution cycle. -

Subchapter 2.4–Hp Server Rp5400 Series

Chapter 2 hp server rp5400 series Subchapter 2.4—hp server rp5400 series hp server rp5470 Table 2.4.1 HP Server rp5470 Specifications Server model number rp5470 Max. Network Interface Cards (cont.)–see supported I/O table Server product number A6144B ATM 155 Mb/s–MMF 10 Number of Processors 1-4 ATM 155 Mb/s–UTP5 10 Supported Processors ATM 622 Mb/s–MMF 10 PA-RISC PA-8700 Processor @ 650 and 750 MHz 802.5 Token Ring 4/16/100 Mb/s 10 Cache–Instr/data per CPU (KB) 750/1500 Dual port X.25/SDLC/FR 10 Floating Point Coprocessor included Yes Quad port X.25/FR 7 FDDI 10 Max. Additional Interface Cards–see supported I/O table 8 port Terminal Multiplexer 4 64 port Terminal Multiplexer 10 PA-RISC PA-8600 Processor @ 550 MHz Graphics/USB kit 1 kit (2 cards) Cache–Instr/data/CPU (KB) 512/1024 Public Key Cryptography 10 Floating Point Coprocessor included Yes HyperFabric 7 Electrical Characteristics TPM estimate (4 CPUs) 34,500 AC Input power 100-240V 50/60 Hz SPECweb99 (4 CPUs) 3,750 Hotswap Power supplies 2 included, 3rd for N+1 Redundant AC power inputs 2 required, 3rd for N+1 Min. memory 256 MB Current requirements at 200V 6.5 A (shared across inputs) Max. memory capacity 16 GB Typical Power dissipation (watts) 1008 W Internal Disks Maximum Power dissipation (watts) 1 1360 W Max. disk mechanisms 4 Power factor at full load .98 Max. disk capacity 292 GB kW rating for UPS loading1 1.3 Standard Integrated I/O Maximum Heat dissipation (BTUs/hour) 1 4380 - (3000 typical) Ultra2 SCSI–LVD Yes Site Preparation 10/100Base-T (RJ-45 connector) Yes Site planning and installation included No RS-232 serial ports (multiplexed from DB-25 port) 3 Depth (mm/inches) 774 mm/30.5 Web Console (including 10Base-T port) Yes Width (mm/inches) 482 mm/19 I/O buses and slots Rack Height (EIA/mm/inches) 7 EIA/311/12.25 Total PCI Slots (supports 66/33 MHz×64/32 bits) 10 Deskside Height (mm/inches) 368 mm/14.5 2 Hot-Plug Twin-Turbo (500 MB/s) and 6 Hot-Plug Turbo slots (250 MB/s) Weight (kg/lbs) Max. -

The Microarchitecture of a Low Power Register File

The Microarchitecture of a Low Power Register File Nam Sung Kim and Trevor Mudge Advanced Computer Architecture Lab The University of Michigan 1301 Beal Ave., Ann Arbor, MI 48109-2122 {kimns, tnm}@eecs.umich.edu ABSTRACT Alpha 21464, the 512-entry 16-read and 8-write (16-r/8-w) ports register file consumed more power and was larger than The access time, energy and area of the register file are often the 64 KB primary caches. To reduce the cycle time impact, it critical to overall performance in wide-issue microprocessors, was implemented as two 8-r/8-w split register files [9], see because these terms grow superlinearly with the number of read Figure 1. Figure 1-(a) shows the 16-r/8-w file implemented and write ports that are required to support wide-issue. This paper directly as a monolithic structure. Figure 1-(b) shows it presents two techniques to reduce the number of ports of a register implemented as the two 8-r/8-w register files. The monolithic file intended for a wide-issue microprocessor without hardly any register file design is slow because each memory cell in the impact on IPC. Our results show that it is possible to replace a register file has to drive a large number of bit-lines. In register file with 16 read and 8 write ports, intended for an eight- contrast, the split register file is fast, but duplicates the issue processor, with a register file with just 8 read and 8 write contents of the register file in two memory arrays, resulting in ports so that the impact on IPC is a few percent. -

Analysis of GPGPU Programs for Data-Race and Barrier Divergence

Analysis of GPGPU Programs for Data-race and Barrier Divergence Santonu Sarkar1, Prateek Kandelwal2, Soumyadip Bandyopadhyay3 and Holger Giese3 1ABB Corporate Research, India 2MathWorks, India 3Hasso Plattner Institute fur¨ Digital Engineering gGmbH, Germany Keywords: Verification, SMT Solver, CUDA, GPGPU, Data Races, Barrier Divergence. Abstract: Todays business and scientific applications have a high computing demand due to the increasing data size and the demand for responsiveness. Many such applications have a high degree of parallelism and GPGPUs emerge as a fit candidate for the demand. GPGPUs can offer an extremely high degree of data parallelism owing to its architecture that has many computing cores. However, unless the programs written to exploit the architecture are correct, the potential gain in performance cannot be achieved. In this paper, we focus on the two important properties of the programs written for GPGPUs, namely i) the data-race conditions and ii) the barrier divergence. We present a technique to identify the existence of these properties in a CUDA program using a static property verification method. The proposed approach can be utilized in tandem with normal application development process to help the programmer to remove the bugs that can have an impact on the performance and improve the safety of a CUDA program. 1 INTRODUCTION ans that the program will never end-up in an errone- ous state, or will never stop functioning in an arbitrary With the order of magnitude increase in computing manner, is a well-known and critical property that an demand in the business and scientific applications, de- operational system should exhibit (Lamport, 1977). -

1.1.2. Register File

國 立 交 通 大 學 資訊科學與工程研究所 碩 士 論 文 同步多執行緒架構中可彈性切割與可延展的暫存 器檔案設計之研究 Design of a Flexibly Splittable and Stretchable Register File for SMT Architectures 研 究 生:鐘立傑 指導教授:單智君 教授 中 華 民 國 九 十 六 年 八 月 I II III IV 同步多執行緒架構中可彈性切割與可延展的暫存 器檔案設計之研究 學生:鐘立傑 指導教授:單智君 博士 國立交通大學資訊科學與工程研究所 碩士班 摘 要 如何利用最少的硬體資源來支援同步多執行緒是一個很重要的研究議題,暫存 器檔案(Register file)在微處理器晶片面積中佔有顯著的比例。而且為了支援同步多 執行緒,每一個執行緒享有自己的一份暫存器檔案,這樣的設計會增加晶片的面積。 在本篇論文中,我們提出了一份可彈性切割與可延展的暫存器檔案設計,在這 個設計裡:1.我們可以在需要的時候彈性切割一份暫存器檔案給兩個執行緒來同時 使用,2.適當的延伸暫存器檔案的大小來增加兩個執行緒共用的機會。 藉由我們設計可以得到的益處有:1.增加硬體資源的使用率,2. 減少對於記憶 體的存取以及 3.提升系統的效能。此外我們設計概念可以任意的滿足不同的應用程 式的需求。 V Design of a Flexibly Splittable and Stretchable Register File for SMT Architectures Student:Li-Jie Jhing Advisor:Dr, Jean Jyh-Jiun Shann Institute of Computer Science and Engineering National Chiao-Tung University Abstract How to support simultaneous multithreading (SMT) with minimum resource hence becomes a critical research issue. The register file in a microprocessor typically occupies a significant portion of the chip area, and in order to support SMT, each thread will have a copy of register file. That will increase the area overhead. In this thesis, we propose a register file design techniques that can 1. Split a copy of physical register file flexibly into two independent register sets when required, simultaneously operable for two independent threads. 2. Stretch the size of the physical register file arbitrarily, to increase probability of sharing by two threads. Benefits of these designs are: 1. Increased hardware resource utilization. 2. Reduced memory -

Evolving GPU Machine Code

Journal of Machine Learning Research 16 (2015) 673-712 Submitted 11/12; Revised 7/14; Published 4/15 Evolving GPU Machine Code Cleomar Pereira da Silva [email protected] Department of Electrical Engineering Pontifical Catholic University of Rio de Janeiro (PUC-Rio) Rio de Janeiro, RJ 22451-900, Brazil Department of Education Development Federal Institute of Education, Science and Technology - Catarinense (IFC) Videira, SC 89560-000, Brazil Douglas Mota Dias [email protected] Department of Electrical Engineering Pontifical Catholic University of Rio de Janeiro (PUC-Rio) Rio de Janeiro, RJ 22451-900, Brazil Cristiana Bentes [email protected] Department of Systems Engineering State University of Rio de Janeiro (UERJ) Rio de Janeiro, RJ 20550-013, Brazil Marco Aur´elioCavalcanti Pacheco [email protected] Department of Electrical Engineering Pontifical Catholic University of Rio de Janeiro (PUC-Rio) Rio de Janeiro, RJ 22451-900, Brazil Leandro Fontoura Cupertino [email protected] Toulouse Institute of Computer Science Research (IRIT) University of Toulouse 118 Route de Narbonne F-31062 Toulouse Cedex 9, France Editor: Una-May O'Reilly Abstract Parallel Graphics Processing Unit (GPU) implementations of GP have appeared in the lit- erature using three main methodologies: (i) compilation, which generates the individuals in GPU code and requires compilation; (ii) pseudo-assembly, which generates the individuals in an intermediary assembly code and also requires compilation; and (iii) interpretation, which interprets the codes. This paper proposes a new methodology that uses the concepts of quantum computing and directly handles the GPU machine code instructions. Our methodology utilizes a probabilistic representation of an individual to improve the global search capability. -

Readingsample

Embedded Robotics Mobile Robot Design and Applications with Embedded Systems Bearbeitet von Thomas Bräunl Neuausgabe 2008. Taschenbuch. xiv, 546 S. Paperback ISBN 978 3 540 70533 8 Format (B x L): 17 x 24,4 cm Gewicht: 1940 g Weitere Fachgebiete > Technik > Elektronik > Robotik Zu Inhaltsverzeichnis schnell und portofrei erhältlich bei Die Online-Fachbuchhandlung beck-shop.de ist spezialisiert auf Fachbücher, insbesondere Recht, Steuern und Wirtschaft. Im Sortiment finden Sie alle Medien (Bücher, Zeitschriften, CDs, eBooks, etc.) aller Verlage. Ergänzt wird das Programm durch Services wie Neuerscheinungsdienst oder Zusammenstellungen von Büchern zu Sonderpreisen. Der Shop führt mehr als 8 Millionen Produkte. CENTRAL PROCESSING UNIT . he CPU (central processing unit) is the heart of every embedded system and every personal computer. It comprises the ALU (arithmetic logic unit), responsible for the number crunching, and the CU (control unit), responsible for instruction sequencing and branching. Modern microprocessors and microcontrollers provide on a single chip the CPU and a varying degree of additional components, such as counters, timing coprocessors, watchdogs, SRAM (static RAM), and Flash-ROM (electrically erasable ROM). Hardware can be described on several different levels, from low-level tran- sistor-level to high-level hardware description languages (HDLs). The so- called register-transfer level is somewhat in-between, describing CPU compo- nents and their interaction on a relatively high level. We will use this level in this chapter to introduce gradually more complex components, which we will then use to construct a complete CPU. With the simulation system Retro [Chansavat Bräunl 1999], [Bräunl 2000], we will be able to actually program, run, and test our CPUs. -

Reverse Engineering X86 Processor Microcode

Reverse Engineering x86 Processor Microcode Philipp Koppe, Benjamin Kollenda, Marc Fyrbiak, Christian Kison, Robert Gawlik, Christof Paar, and Thorsten Holz, Ruhr-University Bochum https://www.usenix.org/conference/usenixsecurity17/technical-sessions/presentation/koppe This paper is included in the Proceedings of the 26th USENIX Security Symposium August 16–18, 2017 • Vancouver, BC, Canada ISBN 978-1-931971-40-9 Open access to the Proceedings of the 26th USENIX Security Symposium is sponsored by USENIX Reverse Engineering x86 Processor Microcode Philipp Koppe, Benjamin Kollenda, Marc Fyrbiak, Christian Kison, Robert Gawlik, Christof Paar, and Thorsten Holz Ruhr-Universitat¨ Bochum Abstract hardware modifications [48]. Dedicated hardware units to counter bugs are imperfect [36, 49] and involve non- Microcode is an abstraction layer on top of the phys- negligible hardware costs [8]. The infamous Pentium fdiv ical components of a CPU and present in most general- bug [62] illustrated a clear economic need for field up- purpose CPUs today. In addition to facilitate complex and dates after deployment in order to turn off defective parts vast instruction sets, it also provides an update mechanism and patch erroneous behavior. Note that the implementa- that allows CPUs to be patched in-place without requiring tion of a modern processor involves millions of lines of any special hardware. While it is well-known that CPUs HDL code [55] and verification of functional correctness are regularly updated with this mechanism, very little is for such processors is still an unsolved problem [4, 29]. known about its inner workings given that microcode and the update mechanism are proprietary and have not been Since the 1970s, x86 processor manufacturers have throughly analyzed yet. -

MT-088: Analog Switches and Multiplexers Basics

MT-088 TUTORIAL Analog Switches and Multiplexers Basics INTRODUCTION Solid-state analog switches and multiplexers have become an essential component in the design of electronic systems which require the ability to control and select a specified transmission path for an analog signal. These devices are used in a wide variety of applications including multi- channel data acquisition systems, process control, instrumentation, video systems, etc. Switches and multiplexers of the late 1960s were designed with discrete MOSFET devices and were manufactured in small PC boards or modules. With the development of CMOS processes (yielding good PMOS and NMOS transistors on the same substrate), switches and multiplexers rapidly gravitated to integrated circuit form in the mid-1970s, with product introductions such as the Analog Devices' popular AD7500-series (introduced in 1973). A dielectrically-isolated family of these parts introduced in 1976 allowed input overvoltages of ± 25 V (beyond the supply rails) and was insensitive to latch-up. These early CMOS switches and multiplexers were typically designed to handle signal levels up to ±10 V while operating on ±15-V supplies. In 1979, Analog Devices introduced the popular ADG200-series of switches and multiplexers, and in 1988 the ADG201-series was introduced which was fabricated on a proprietary linear-compatible CMOS process (LC2MOS). These devices allowed input signals to ±15 V when operating on ±15-V supplies. A large number of switches and multiplexers were introduced in the 1980s and 1990s, with the trend toward lower on-resistance, faster switching, lower supply voltages, lower cost, lower power, and smaller surface-mount packages. Today, analog switches and multiplexers are available in a wide variety of configurations, options, etc., to suit nearly all applications. -

Memory Hierarchy

Carnegie Mellon Introduction to Cloud Computing Systems I 15‐319, spring 2010 4th Lecture, Jan 26th Majd F. Sakr 15-319 Introduction to Cloud Computing Spring 2010 © Carnegie Mellon Lecture Motivation Overview of a Cloud architecture Systems review . ISA . Memory hierarchy . OS . Networks Next time . Impact on cloud performance 15-319 Introduction to Cloud Computing 2 Spring 2010 © Carnegie Mellon Intel Open Cirrus Cloud ‐ Pittsburgh 15-319 Introduction to Cloud Computing Spring 2010 © Carnegie Mellon Blade Performance Consider bandwidth and latency between these layers Quad Core Processor Disk core core core core L1 L2 Quad Core Processor L3 core core core core L1 Memory L2 15-319 Introduction to Cloud Computing Spring 2010 © Carnegie Mellon Cloud Performance Consider bandwidth and latency of all layers 15-319 Introduction to Cloud Computing Spring 2010 © Carnegie Mellon How could these different bandwidths impact the Cloud’s performance? To understand this, we need to go through a summary of the machine’s components? 15-319 Introduction to Cloud Computing Spring 2010 © Carnegie Mellon Single CPU Machine’s Component . ISA (Processor) . Main Memory . Disk . Operating System . Network 15-319 Introduction to Cloud Computing Spring 2010 © Carnegie Mellon A Single‐CPU Computer Components Datapath Control Register PC File Main IR I/O Bridge Memory Control ALU Unit CPU I/O Bus Disk Network 15-319 Introduction to Cloud Computing Spring 2010 © Carnegie Mellon The Von Neuman machine ‐ Completed 1952 Main memory storing programs and data -

Introduction to Cpu

microprocessors and microcontrollers - sadri 1 INTRODUCTION TO CPU Mohammad Sadegh Sadri Session 2 Microprocessor Course Isfahan University of Technology Sep., Oct., 2010 microprocessors and microcontrollers - sadri 2 Agenda • Review of the first session • A tour of silicon world! • Basic definition of CPU • Von Neumann Architecture • Example: Basic ARM7 Architecture • A brief detailed explanation of ARM7 Architecture • Hardvard Architecture • Example: TMS320C25 DSP microprocessors and microcontrollers - sadri 3 Agenda (2) • History of CPUs • 4004 • TMS1000 • 8080 • Z80 • Am2901 • 8051 • PIC16 microprocessors and microcontrollers - sadri 4 Von Neumann Architecture • Same Memory • Program • Data • Single Bus microprocessors and microcontrollers - sadri 5 Sample : ARM7T CPU microprocessors and microcontrollers - sadri 6 Harvard Architecture • Separate memories for program and data microprocessors and microcontrollers - sadri 7 TMS320C25 DSP microprocessors and microcontrollers - sadri 8 Silicon Market Revenue Rank Rank Country of 2009/2008 Company (million Market share 2009 2008 origin changes $ USD) Intel 11 USA 32 410 -4.0% 14.1% Corporation Samsung 22 South Korea 17 496 +3.5% 7.6% Electronics Toshiba 33Semiconduc Japan 10 319 -6.9% 4.5% tors Texas 44 USA 9 617 -12.6% 4.2% Instruments STMicroelec 55 FranceItaly 8 510 -17.6% 3.7% tronics 68Qualcomm USA 6 409 -1.1% 2.8% 79Hynix South Korea 6 246 +3.7% 2.7% 812AMD USA 5 207 -4.6% 2.3% Renesas 96 Japan 5 153 -26.6% 2.2% Technology 10 7 Sony Japan 4 468 -35.7% 1.9% microprocessors and microcontrollers -

Processor-103

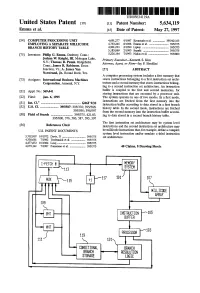

US005634119A United States Patent 19 11 Patent Number: 5,634,119 Emma et al. 45 Date of Patent: May 27, 1997 54) COMPUTER PROCESSING UNIT 4,691.277 9/1987 Kronstadt et al. ................. 395/421.03 EMPLOYING ASEPARATE MDLLCODE 4,763,245 8/1988 Emma et al. ........................... 395/375 BRANCH HISTORY TABLE 4,901.233 2/1990 Liptay ...... ... 395/375 5,185,869 2/1993 Suzuki ................................... 395/375 75) Inventors: Philip G. Emma, Danbury, Conn.; 5,226,164 7/1993 Nadas et al. ............................ 395/800 Joshua W. Knight, III, Mohegan Lake, Primary Examiner-Kenneth S. Kim N.Y.; Thomas R. Puzak. Ridgefield, Attorney, Agent, or Firm-Jay P. Sbrollini Conn.; James R. Robinson, Essex Junction, Vt.; A. James Wan 57 ABSTRACT Norstrand, Jr., Round Rock, Tex. A computer processing system includes a first memory that 73 Assignee: International Business Machines stores instructions belonging to a first instruction set archi Corporation, Armonk, N.Y. tecture and a second memory that stores instructions belong ing to a second instruction set architecture. An instruction (21) Appl. No.: 369,441 buffer is coupled to the first and second memories, for storing instructions that are executed by a processor unit. 22 Fied: Jan. 6, 1995 The system operates in one of two modes. In a first mode, instructions are fetched from the first memory into the 51 Int, C. m. G06F 9/32 instruction buffer according to data stored in a first branch 52 U.S. Cl. ....................... 395/587; 395/376; 395/586; history table. In the second mode, instructions are fetched 395/595; 395/597 from the second memory into the instruction buffer accord 58 Field of Search ...........................