Uniform Manifold Approximation and Projection for Dimension Reduction

Recommended publications

-

4 25B Sfc 20190801 20190801 Abebe Gideon 4 11C Sfc

DEPARTMENT OF THE ARMY U.S. ARMY HUMAN RESOURCES COMMAND 1600 SPEARHEAD DIVISION AVENUE FORT KNOX, KY 40122 ORDER NO: 203-13 22 Jul 2019 The Secretary of the Army has reposed special trust and confidence in the patriotism, valor, fidelity and professional excellence of the following noncommissioned officers. In view of these qualities and their demonstrated leadership potential and dedicated service to the United States Army, they are, therefore, promoted to the grade of rank shown. Promotion is made in the MOS shown in the name line and the MOS is awarded as his or her primary MOS on the effective date of promotion. Promotion is not valid and will be revoked if the Soldier concerned is not in a promotable status on the effective date of promotion. Acceptance of promotion constitutes acceptance of the 2-year service remaining requirement from the effective date of promotion for Soldiers selected on a FY11 or earlier board, or a 3-year service remaining requirement from the effective date of promotion for Soldiers selected on a FY12 or later board. Soldiers with over 12 years AFS are required to reenlist for indefinite status if they do not have sufficient time remaining to meet this requirement, or decline promotion IAW AR 600-8-19, paragraphs 1-26 and 4-8. The authority for this promotion is AR 600-8-19, paragraph 4-7. Special Instructions: Soldiers promoted to Sergeant Major who do not have USASMC credit are promoted conditionally. Those Soldiers who receive a conditional promotion will be reduced and their names removed from the centralized list if they fail to meet the NCOES requirement. -

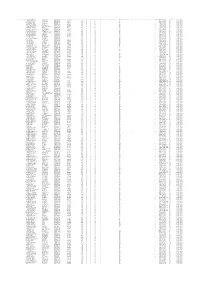

Voter Registration Number Name

VOTER REGISTRATION NUMBER NAME: LAST, FIRST, MIDDLE RESIDENTIAL ADDRESS: FULL STREET RESIDENTIAL ADDRESS: CITY/STATE/ZIP TELEPHONE: FULL NUMBER AGE AT YEAR END PARTY CODE RACE CODE GENDER CODE ETHNICITY CODE STATUS CODE JURISDICTION: MUNICIPAL DISTRICT CODE JURISDICTION: MUNICIPALITY CODE JURISDICTION: SANITATION DISTRICT CODE JURISDICTION: PRECINCT CODE REGISTRATION DATE VOTED METHOD VOTED PARTY CODE ELECTION NAME 141580 ACKISS, FRANCIS PAIGE 2 MULBERRY LN # A NEW BERN, NC 28562 252-671-5911 67 UNA W M NL A RIVE 5 2/25/2015 IN-PERSON UNA 11/07/2017 MUNICIPAL 140387 ADAMS, CHARLES RAYMOND 4100 HOLLY RIDGE RD NEW BERN, NC 28562 910-232-6063 74 DEM W M NL A TREN 3 10/10/2014 IN-PERSON DEM 11/07/2017 MUNICIPAL 20141 ADAMS, KAY RUSSELL 3605 BARONS WAY NEW BERN, NC 28562 252-633-2732 66 REP W F NL A TREN 3 5/24/1976 IN-PERSON REP 11/07/2017 MUNICIPAL 157173 ADAMS, LAUREN PAIGE 204 A ST BRIDGETON, NC 28519 252-474-9712 18 DEM W F NL A BRID FC 11 4/21/2017 IN-PERSON DEM 11/07/2017 MUNICIPAL 20146 ADAMS, LYNN EDWARD JR 3605 BARONS WAY NEW BERN, NC 28562 252-633-2732 69 REP W M NL A TREN 3 3/31/1970 IN-PERSON REP 11/07/2017 MUNICIPAL 66335 ADAMS, TRACY WHITFORD 204 A ST BRIDGETON, NC 28519 252-229-9712 47 REP W F NL A BRID FC 11 4/2/1998 IN-PERSON REP 11/07/2017 MUNICIPAL 49660 ADELSPERGER, ROBERT DEWEY 101 CHADWICK AVE HAVELOCK, NC 28532 252-447-0979 67 REP W M NL A HAVE 2 2/2/1994 IN-PERSON REP 11/07/2017 MUNICIPAL 71341 AGNEW, LYNNE ELIZABETH 31 EASTERN SHORE TOWNHOUSES BRIDGETON, NC 28519 252-638-8302 66 REP W F NL A BRID FC 11 7/27/1999 IN-PERSON -

Esther (Seymour) Atu,Ooa

The Descendants of John G-randerson and Agnes All en (Pulliam,) Se1f11JJ)ur also A Sho-rt Htstory of James Pull tam by Esther (Seymour) Atu,ooa Genealogtcal. Records Commtttee Governor Bradford Chapter Da,u,ghters of Amertcan Bevolutton Danvtlle, Illtnots 1959 - 1960 Mrs. Charles M. Johnson - State Regent Mrs. Richard Thom,pson Jr. - State Chatrman of GenealogtcaJ Records 11rs. Jv!erle s. Randolph - Regent of Governor Bradford Chapter 14rs. Louis Carl Ztllman)Co-Chairmen of Genealogical. Records Corruntttee Mrs. Charles G. Atwood ) .,;.page 1- The Descendants of John Granderson and Agnes Allen (PuJ.liat~) SeymJJur also A Short History of JOJTJSS ...Pall tarn, '1'he beginntng of this genealogy oos compiled by Mrs. Emrra Dora (Mansfield) Lowdermtlk, a great granddaughter of John Granderson ar~ Agnes All en ( Pu.11 iam) SeymJJur.. After the death of }!rs. Lowdermtlk, Hrs. Grace (Roberts) Davenport added, to the recordso With t'he help of vartous JCJ!l,tly heads over a pertod of mcre than three years, I haDe collected and arrari;Jed, the bulk of the data as tt appears in this booklet. Mach credtt ts due 11"'-rs. Guy Martin of Waverly, Illtnots, for long hours spent tn copytn.g record,s for me in. 1°11:organ Oounty Cou,rthou,se 1 and for ,~.,. 1.vorlt in catalogu,tn,g many old country cemetertes where ouP ktn are buried. Esther (SeymDur) Atluooa Typi!IIJ - Courtesy of Charles G. Atwood a,,-.d sec-reta;ry - .l'efrs e El ea?1fJ7' Ann aox. Our earl1est Pulltam ancestora C(]lTl,e from,En,gland to vtrsgtnta, before the middle of the sel)enteenth century. -

Commencement 20-21 Grad List

Northern Essex Community College Commencement 2020-2021 CITY STATE NAME DEGREE Wethersfield CT Sebrina I. Cataldi Associate in Arts General Studies: Individualized Option with High Honors Louisville KY Jessica C. Cunha Associate in Science Business Management Acton MA Michael D. Guarino Associate in Science Computer Information Sciences: Information Technology Option Rafael D. Santos Certificate in Practical Nursing with Honors Agawam MA Natalie Burgos Certificate in Sleep Technologist with Honors Allston MA Hephzibah Frazier Associate in Arts American Sign Language Studies Amesbury MA Melissa R. Barbour Certificate in Peer Recovery Specialist Colette C. Castine Associate in Science Early Childhood Education with High Honors Jennifer D. Collins Certificate in Early Childhood Director with High Honors Noah C. Cotrupi Associate in Arts Liberal Arts with Honors Alexander A. Dannible Associate in Arts Liberal Arts with High Honors Jean M. DesRoches Certificate in Healthcare Technician with Honors Brianna P. Duchemin Associate in Arts General Studies: Art and Design with Honors Graham D. Gannett Associate in Science Business Transfer Glen Gjuraj Associate in Arts General Studies: Art Tyson A. Jackson Associate in Science Business Transfer with Honors Darby J. Jones Associate in Science Biology with Honors Jeanette M. Macomber Associate in Science Human Services with High Honors Sophie H. Rokosz Associate in Science Engineering Science with High Honors Sokunthea Ros Associate in Science Business Transfer with Honors Jonas D. Ruzek Associate in Arts Liberal Arts: Journalism/Communication Option with High Honors Nicole M. Santana- Rivas Associate in Arts Liberal Arts: Psychology Option with Honors Robert G. Schultz Associate in Science Computer Information Sciences: Information Technology Option Dean A. -

WNSC Graduate List Includes Cromwell, Ligonier Wawaka High Schools

WNSC Graduate List includes Cromwell, Ligonier Wawaka High Schools Last Name First Name Middle Name Grad Yr Misc Information School Abbott Rebecca 1993 West Noble High School Abbott William Dale 1999 West Noble High School Abdill Edward E 1879 Ligonier Abdill Zulu M 1881 Ligonier Abdullah Salah Ahmed 1999 West Noble High School Abner Brandie Shantelle 2009 West Noble High School Abner Michele Loraine 1994 West Noble High School Abner Tracy 1992 West Noble High School Abrams Delores 1929 Cromwell Abud Tulio Batista 2000 West Noble High School Ackerman Alfred 1921 Ligonier Ackerman Isaac 1883 Ligonier Ackerman Jennie 1888 Ligonier Ackerman Jerry Michael 1993 West Noble High School Ackerman Kevin 1984 West Noble High School Ackerman Shonda 1988 West Noble High School Acosta Angel Clyde 2007 West Noble High School Acosta Ruby Ann 2000 West Noble High School Acosta Zusy Renee 2008 West Noble High School Acton Carrie Anna 1993 West Noble High School Acton James R 1998 West Noble High School Acton Michael Dane 1991 West Noble High School Adair Alice 1967 Cromwell Adair Angela 1983 West Noble High School Adair Beau GJ 2001 West Noble High School Adair Bill 1960 Cromwell Adair Donald 1937 Cromwell Adair Jerod S 1996 West Noble High School Adair Jerry 1964 Wawaka Adair Judy 1961 Cromwell Adair Larry 1964 Wawaka Adair Linda 1964 Cromwell Adair Lois Ellen 1944 Ligonier Adair Matt 1988 West Noble High School Adair Michelle Renee' 1986 West Noble High School Adair Mike 1984 West Noble High School Adair Pam 1984 West Noble High School Adair Patricia -

Infection and Immunity Volume 43 * March 1984 * Number 3 J

INFECTION AND IMMUNITY VOLUME 43 * MARCH 1984 * NUMBER 3 J. W. Shands, Jr., Editor in Chief (1984) University of Florida, Gainesville Phillip J. Baker, Editor (1985) Stephan E. Mergenhagen, Editor (1984) National Institute ofAllergy and Peter F. Bonventre, Editor (1984) National Institute ofDental Research Infectious Diseases University of Cincinnati Bethesda, Md. Bethesda, Md. Cincinnati, Ohio John H. Schwab, Editor (1985) Edwin H. Beachey, Editor (1988) Arthur G. Johnson, Editor (1986) University of North Carolina, VA Medical Center University ofMinnesota, Duluth Medical School Memphis, Tenn. Chapel Hill EDITORIAL BOARD Leonard C. Altman (1986) John C. Feeley (1985) Julius P. Kreier (1986) Stephen W. Russell (1985) Michael A. Apicella (1985) Robert Finberg (1984) Maurice J. Lefford (1984) Catherine Saelinger (1984) Roland Arnold (1984) John R. Finerty (1984) Thomas Lehner (1986) Edward J. St. Martin (1985) Joel B. Baseman (1985) Robert Fitzgerald (1986) Stephan H. Leppla (1985) Irvin E. Salit (1986) Elmer L. Becker (1984) Samuel B. Formal (1986) Michael Loos (1984) Anthony J. Sbarra (1984) Neil Blacklow (1984) John Gallin (1985) John Mansfield (1985) Charles F. Schachtele (1985) Arnold S. Bleiweis (1984) Peter Gemski (1985) Zell A. McGee (1985) Julius Schachter (1986) William H. Bowen (1985) Robert Genco (1985) Jerry R. McGhee (1985) Jerome L. Schulman (1985) Robert R. Brubaker (1986) Ronald J. Gibbons (1985) Douglas D. McGregor (1985) Alan Sher (1984) Ward Bullock, Jr. (1985) Frances Gillin (1984) Floyd C. McIntire (1985) Gerald D. Shockman (1986) Charles Carpenter (1985) Mayer B. Goren (1985) Monte Meltzer (1986) Phillip Smith (1985) Bruce Chassy (1984) Harry Greenberg (1985) Jiri Mestecky (1986) Ralph Snyderman (1985) John 0. -

ANTHEM PARTICIPATING PRIMARY CARE PROVIDER (PCP) LIST - 2020 This List Includes Pcps Who Participate with Anthem BCBS in New Hampshire

ANTHEM PARTICIPATING PRIMARY CARE PROVIDER (PCP) LIST - 2020 This list includes PCPs who participate with Anthem BCBS in New Hampshire. Please note this is not an all-inclusive list for Access Blue New England, BlueChoice New England or HMO Blue New England plans. To search for participating PCPs or providers in other New England states, visit www.healthtrustnh.org and click on the orange Medical icon, then follow the simple instructions located under Medical Plan Provider Directories in the box on the right side of the page. PCP# LAST NAME FIRST NAME TITLE PROVIDER LOCATION/PRACTICE NAME CITY STATE ZIP PHONE PRIMARY SPECIALTY 770YP22529 AAKRE KIMBERLY J MD WINDSOR HOSPITAL CORP WINDSOR VT 05089 (802) 674-7337 PEDIATRICS 770YP22529 AAKRE KIMBERLY J MD WINDSOR HOSPITAL CORP WOODSTOCK VT 05091 (802) 457-3030 PEDIATRICS 770YP34527 ABBOTT CARA L APRN WENTWORTH DOUGLASS PHYSICIAN CORP LEE NH 03861 (603) 868-3300 FAMILY NURSE PRACTITIONERS 770YP30990 ABBOTT MCCALL M APRN CONCORD HOSPITAL INC CONCORD NH 03301 (603) 226-3400 FAMILY NURSE PRACTITIONERS 770YP16108 ABEL SUSAN E MD DARTMOUTH HITCHCOCK CLINIC NASHUA NH 03063 (603) 577-4400 PEDIATRICS 770YP36640 ABELE MICHAEL J MD PARTNERS COMMUNITY PHYS ORGANIZATION INC HAVERHILL MA 01830 (978) 521-3270 INTERNAL MEDICINE 770YP33636 ABEYSINGHE CHAMPA D MD HEALTH FIRST FAMILY CARE CENTER FRANKLIN NH 03235 (603) 934-0177 INTERNAL MEDICINE 770YP33636 ABEYSINGHE CHAMPA D MD HEALTH FIRST FAMILY CARE CENTER LACONIA NH 03246 (603) 366-1070 INTERNAL MEDICINE 770YP05379 ABRAHAM PHILIP MD PHILIP ABRAHAM MD SANFORD -

Name Index PIERCE COUNTY DISTRICT COURT Year: 2008 Run Date: 3/7/11 Page 1/117

Name Index PIERCE COUNTY DISTRICT COURT Year: 2008 Run date: 3/7/11 Page 1/117 Person Name Party Case Number CT Disp Filed Case Cause 123 CASH INC PLA1 8Y992318C SC DW 05/29/2008 Other 123 CASH INC PLA1 8Y993591C SC DW 11/13/2008 Other 1ST OPTION REALTY GROUP LLC DEF1 8Y993894C SC 12/31/2008 Damage Deposit 48TH STREET PUB & GRILL AKA 48TH STREETDEF1 8Y993579CPUB & EATERYSC DW 11/12/2008 Other 6TH AVE AMP WARNER LLC PLA1 8Y991682C SC DW 02/29/2008 Other 6TH AVE & WARNER LLC PLA1 8Y993585C SC SA 11/12/2008 Other 701 MANA WANA PL, LLC DEF1 8Y992377C SC DW 06/05/2008 Other 701 MANA WANA PL., LLC DEF1 8Y992801C SC CL 08/04/2008 Rent A-1 TOWING SERVICE, INC DEF1 8Y993470C SC CL 10/24/2008 Other A A A ALL CITY CONTRACTING DEF1 8Y992429C SC 06/11/2008 Other AA GLASS NATION INC DEF1 8Y993525C SC TC 11/04/2008 Other AAHL, HEATHER DEF2 8Y992192C SC DW 05/08/2008 Other AAHL, KYLE PLA1 8Y992192C SC DW 05/08/2008 Other AAHL, KYLE PLA1 8Y992588C SC DW 07/08/2008 Other AAL COMPUTER SERVICE INC PLA1 8Y993536C SC 11/06/2008 Other ABARROTES PAQUITO, LLC PLA1 8Y993656C SC DW 11/20/2008 Loan ABDERHALDEN, RICHARD PLA1 8Y992868C SC TC 08/12/2008 Other ABERCROMBIE, SAADIA PLA1 8Y993244C SC SA 10/01/2008 Other ABOUBAKR, CONNIE DEF2 8Y993002C SC DW 09/02/2008 Damage Deposit ABOUBAKR, KARIM DEF1 8Y993002C SC DW 09/02/2008 Damage Deposit ABRA CADABRA-SOLD LLC PLA1 8Y992478C SC 06/17/2008 Other ABRAMSON, MARC A DEF1 8Y993451C SC 11/03/2008 Loan ABRON, KAREN DEF1 8Y992819C SC DW 08/05/2008 Other ACCESS FIRE PROTECTION SVCS INC PLA1 8Y993461C SC DP 10/23/2008 Other -

Better Together 2018 Annual Report Confidence, Independence & Resilience

CAMP CHIEF YMCA of the ROCKIES OURAY BETTER TOGETHER 2018 ANNUAL REPORT CONFIDENCE, INDEPENDENCE & RESILIENCE Youth today have fewer and fewer opportunities to be outside and connect with nature. Confidence, independence, and resilience—critical skills for a successful adulthood—are skills that can be learned through new experiences, problem solving and facing challenges head-on. Camp provides these opportunities and we are forever grateful to be serving youth in this way. We hear from so many campers and their families that camp changed their life. A child who was shy and timid before camp, now willingly tries new things and isn’t afraid. A child who struggled with making friends, is social and outgoing after coming home from camp. We call this phenomenon “Camp Magic”. This year, we embarked on a capital campaign to better serve our growing camp family. As Camp Chief Ouray begins to transform, we thank our past supporters and encourage future donors to join us on our road to an inspired future! In the spirit of camp, Julie Watkins Michael Ohl President/CEO Executive Director YMCA of the Rockies Camp Chief Ouray 2 / / 3 FROM ONE GENERATION TO THE NEXT 4 / / 5 YMCA of the Rockies promotes youth development by cultivating tomorrow’s leaders at our residential camp, Camp Chief CAMP Ouray. Parents know that camp builds confidence, independence and resilience, CHIEF OURAY therefore every year we receive increasing requests for financial assistance. Donations to camp ensure that no child is turned away due to the FRIENDS OF THE FIRE inability -

Notice of Delinquent Taxes on Real Property in Clark County, Nevada For

NOTICE OF DELINQUENT TAXES ON REAL PROPERTY IN CLARK COUNTY, NEVADA FOR FISCAL YEAR 2020-2021 (July 1, 2020 – June 30, 2021) Published in accordance with Nevada Revised Statutes (NRS) 361.565 The following is a list of real properties on which all or a portion of the current fiscal year taxes remained delinquent as of May 5, 2021. The list includes the parcel number, the name of the owner of record, and the amount of taxes, penalties, interest, and costs due. If the amount due is not paid by 5:00 p.m. on June 7, 2021 (the first Monday in June), a certificate will be issued authorizing the county treasurer, as trustee for the State and county, to hold each property, subject to redemption within 2 years after the date of the certificate. However, pursuant to NRS 361.570.3.(b), a property determined to be abandoned may be redeemed within one year after the date of the certificate. Redemption may be made by payment of the taxes and accruing taxes, penalties and costs, together with interest on the taxes at an annual rate of 10 percent, assessed monthly. Such redemption may be made in accordance with the provisions of Chapter 21 of NRS regarding real property sold under execution. Note: This list also contains parcels that have prior year delinquencies and are either in a redemption period or may have been deeded to the county treasurer as trustee for the county and State. Per NRS 361, these parcels will continue to accrue taxes, penalties, interest, and costs until paid in full, or are sold at public auction. -

Baby Girl Names | Registered in 2019

Vital Statistics Baby2019 BabyGirl GirlsNames Names | Registered in 2019 From:Jan 01, 2019 To: Dec 31, 2019 First Name Frequency First Name Frequency First Name Frequency Aadhini 1 Aarnavi 1 Aadhirai 1 Aaro 1 Aadhya 2 Aarohi 2 Aadila 1 Aarora 1 Aadison 1 Aarushi 1 Aadroop 1 Aarya 3 Aadya 3 Aarza 2 Aafiya 1 Aashvee 1 Aaghnya 1 Aasiya 1 Aahana 2 Aasiyah 2 Aaila 1 Aasmi 1 Aaira 3 Aasmine 1 Aaleena 1 Aatmja 1 Aalia 1 Aatri 1 Aaliah 1 Aayah 2 Aalis 1 Aayara 1 Aaliya 1 Aayat 1 Aaliyah 17 Aayla 1 Aaliyah-Lynn 1 Aayna 1 Aalya 1 Aayra 2 Aamina 1 Aazeen 1 Aamna 1 Abagail 1 Aanaya 1 Abbey 4 Aaniya 1 Abbi 1 Aaniyah 1 Abbie 1 Aanya 3 Abbiejean 1 Aara 1 Abbigail 3 Aaradhya 1 Abbiteal 1 Aaradya 1 Abby 10 Aaraya 1 Abbygail 1 Aaria 2 Abdirashid 1 Aariya 1 Abeeha 1 Aariyah 1 Aberlene 1 Aarna 3 Abhideep 1 Abi 1 Abiah 1 10 Jun 2020 1 Abiegail 02:22:21 PM1 Abigael 1 Abigail 141 Abigale 1 1 10 Jun 2020 2 02:22:21 PM Baby Girl Names | Registered in 2019 First Name Frequency First Name Frequency Abigayle 1 Addalyn 4 Abihail 1 Addalynn 1 Abilene 2 Addasyn 1 Abina 1 Addelyn 1 Abisha 2 Addi 1 Ablakat 1 Addie 2 Aboni 1 Addilyn 3 Abrahana 1 Addilynn 2 Abreen 1 Addison 61 Abrielle 2 Addisyn 2 Absalat 1 Addley 1 Abuk 2 Addy 1 Abyan 1 Addyson 2 Abygale 1 Adedoyin 1 Acadia 1 Adeeva 1 Acelynn 1 Adeifeoluwa 1 Achai 1 Adela 1 Achan 1 Adélaïde 1 Achol 1 Adelaide 20 Ackley 1 Adelaine 1 Ada 23 Adelayne 1 Adabpreet 1 Adele 4 Adaeze 1 Adèle 2 Adah 1 Adeleigha 1 Adair 1 Adeleine 1 Adalee 1 Adelheid 1 Adalena 1 Adelia 3 Adaley 1 Adelina 2 Adalina 2 Adeline 40 Adalind 1 Adella -

First Name: Initiation Year Patsy Kjosness Gottschalk 1946

First Name: Initiation Year Patsy Kjosness Gottschalk 1946 Rosermary Harland Pennell 1947 jackie mitchell last 1948 Jody Getty Norman 1948 Becky Barline Boyington 1948 Carm McMahon Johnson 1948 Sheila Janssen Klages 1950 Ernestine Gohrband Oringdulph 1951 Mary Carnoll Heath 1951 Mary Lou Varian Porter 1951 Margery Nobles McIntosh 1951 Gwen Tupper Soderberg 1951 Joann Smith Lefferts 1951 Pat Long Odberg 1951 Carolyn Gale Louthian 1952 Jo Harwood Flynn 1952 June Powels Smith 1953 Janet Austad Parks 1953 June Powels Smith 1953 Marilyn Norseth Will 1954 Carolyn Sanderson Staley 1954 Rosemarie Perrin Bowman 1954 Judy Crookham Krueger 1954 Karen Crozier Smith 1955 Dorothy Bauer Schedler 1956 Rosemary Maule Daubert 1956 Marjie Bradbury Johnson 1956 Eleanor Whitney Fowler 1956 Suzanne Roffler Tarlov 1956 Pat Casey Nelson 1956 Ella Taye Springer Williams 1956 Barbara Tatum Snow 1957 Judy Orcutt Burke-Chelius 1957 Ann Irwin Shively 1958 Karen (Stedtfeld) Offen 1958 Patsy Rogers Mosman 1958 Kay Irwin Rowley 1959 Heather Hill Chrisman 1959 Linda Ensign Dashew 1959 Lynda Herndon Carey 1960 Kathryn Rodell Hunt 1960 Judy Powers Arnt 1960 Jo Ann Tatum Hattner 1960 Ann Rosendahl White 1960 Rowena Eikum Henry 1960 Linda Engle Sande 1960 Janice Rieman Gisler 1961 Mary Casey Ratcliffe 1961 Pat Swan Gustavel 1961 Jeanne Maxey Reese 1961 Alice Fulcher Anderson 1962 Bonnie Johansen Bruce 1962 Barbara Ware Featherstone 1962 Joan Sorensen Sullivan 1962 Judy Frazier Livingston 1962 Leslie Ensign Hall 1963 Nina Jenkins Bartlett 1963 Barbara Doll Keithly 1963 Zena