CPU Architecture Chapter Four


Recommended publications

Inside Intel® Core™ Microarchitecture Setting New Standards for Energy-Efficient Performance

White Paper: Inside Intel® Core™ Microarchitecture, Setting New Standards for Energy-Efficient Performance. Ofri Wechsler, Intel Fellow, Mobility Group; Director, Mobility Microprocessor Architecture, Intel Corporation. Contents: Introduction; Intel® Core™ Microarchitecture Design Goals; Delivering Energy-Efficient Performance; Intel® Core™ Microarchitecture Innovations; Intel® Wide Dynamic Execution; Intel® Intelligent Power Capability; Intel® Advanced Smart Cache; Intel® Smart Memory Access; Intel® Advanced Digital Media Boost; Intel® Core™ Microarchitecture and Software; Summary; Learn More; Author Biographies. Introduction: The Intel® Core™ microarchitecture is a new foundation for Intel® architecture-based desktop, mobile, and mainstream server multi-core processors. This state-of-the-art multi-core optimized and power-efficient microarchitecture is designed to deliver increased performance and performance-per-watt, thus increasing overall energy efficiency. This new microarchitecture extends the energy efficient philosophy first delivered in Intel's mobile microarchitecture found in the Intel® Pentium® M processor, and greatly enhances it with many new and leading edge microarchitectural innovations as well as existing Intel NetBurst® microarchitecture features. What’s more, it incorporates many new and significant innovations designed to optimize the power, performance, and scalability of multi-core processors. The Intel Core microarchitecture shows Intel’s continued innovation by delivering both greater energy efficiency and compute capability required for the new workloads and usage models now making their way across computing. With its higher performance and low power, the new Intel Core microarchitecture will be the basis for many new solutions and form factors.
In the home, these include higher performing, ultra-quiet, sleek and low-power computer designs, and new advances in more sophisticated, user-friendly entertainment systems. -

Pentium II Processor Performance Brief

Pentium® II Processor Performance Brief January 1998 Order Number: 243336-004 Information in this document is provided in connection with Intel products. No license, express or implied, by estoppel or otherwise, to any intellectual property rights is granted by this document. Except as provided in Intel’s Terms and Conditions of Sale for such products, Intel assumes no liability whatsoever, and Intel disclaims any express or implied warranty, relating to sale and/or use of Intel products including liability or warranties relating to fitness for a particular purpose, merchantability, or infringement of any patent, copyright or other intellectual property right. Intel products are not intended for use in medical, life saving, or life sustaining applications. Intel may make changes to specifications and product descriptions at any time, without notice. Designers must not rely on the absence or characteristics of any features or instructions marked "reserved" or "undefined." Intel reserves these for future definition and shall have no responsibility whatsoever for conflicts or incompatibilities arising from future changes to them. The Pentium® II processor may contain design defects or errors known as errata. Current characterized errata are available on request. MPEG is an international standard for video compression/decompression promoted by ISO. Implementations of MPEG CODECs, or MPEG enabled platforms may require licenses from various entities, including Intel Corporation. Contact your local Intel sales office or your distributor to obtain the latest specifications and before placing your product order. Copies of documents which have an ordering number and are referenced in this document, or other Intel literature, may be obtained by calling 1-800-548-4725 or by visiting Intel’s website at http://www.intel.com. -

Intel Architecture Optimization Manual

Intel Architecture Optimization Manual Order Number 242816-003 1997 Information in this document is provided in connection with Intel products. No license, express or implied, by estoppel or otherwise, to any intellectual property rights is granted by this document. Except as provided in Intel's Terms and Conditions of Sale for such products, Intel assumes no liability whatsoever, and Intel disclaims any express or implied warranty, relating to sale and/or use of Intel products including liability or warranties relating to fitness for a particular purpose, merchantability, or infringement of any patent, copyright or other intellectual property right. Intel products are not intended for use in medical, life saving, or life sustaining applications. Intel may make changes to specifications and product descriptions at any time, without notice. Designers must not rely on the absence or characteristics of any features or instructions marked "reserved" or "undefined." Intel reserves these for future definition and shall have no responsibility whatsoever for conflicts or incompatibilities arising from future changes to them. The Pentium®, Pentium Pro and Pentium II processors may contain design defects or errors known as errata which may cause the product to deviate from published specifications. Such errata are not covered by Intel’s warranty. Current characterized errata are available on request. Contact your local Intel sales office or your distributor to obtain the latest specifications before placing your product order. Copies of documents which have an ordering number and are referenced in this document, or other Intel literature, may be obtained from: Intel Corporation P.O. -

DOS Virtualized in the Linux Kernel

DOS Virtualized in the Linux Kernel Robert T. Johnson, III Abstract Due to the heavy dominance of Microsoft Windows® in the desktop market, some members of the software industry believe that new operating systems must be able to run Windows® applications to compete in the marketplace. However, running applications not native to the operating system generally causes their performance to degrade significantly. When the application and the operating system were written to run on the same machine architecture, all of the instructions in the application can still be directly executed under the new operating system. Some will only need to be interpreted differently to provide the required functionality. This paper investigates the feasibility and potential to speed up the performance of such applications by including the support needed to run them directly in the kernel. In order to avoid the impact to the kernel when these applications are not running, the needed support was built as a loadable kernel module. 1 Introduction New operating systems face significant challenges in gaining consumer acceptance in the desktop marketplace. One of the first realizations that must be made is that the majority of this market consists of non-technical users who are unlikely to either understand or have the desire to understand various technical details about why the new operating system is “better” than a competitor’s. This means that such details are extremely unlikely to sway a large amount of users towards the operating system by themselves. The incentive for a consumer to continue using their existing operating system or only upgrade to one that is backwards compatible is also very strong due to the importance of application software. -

Strengthening Diversification Defenses by Means of a Non-Readable Code

Strengthening diversification defenses by means of a non-readable code segment. Sebastian Österlund, Department of Computer Science, Vrije Universiteit, Amsterdam, Netherlands. Supervised by: H. Bos & C. Giuffrida. Abstract: In this paper we present a new defense against Just-In-Time return-oriented-programming attacks. By making program code non-readable, the assembly of Just-In-Time gadgets by scanning the memory is effectively blocked. Using segmentation on Intel x86 hardware, the implementation of execute-only code can be achieved. We discuss two different ways of implementing such a defense for 32-bit Intel architecture: one for position dependent executables, and one for position independent executables. The first implementation works by splitting the address-space into two mirrored segments. The second implementation creates an execute-only memory-section at the top of the address-space, making it possible to still use the whole address-space. By relying on hardware segmentation the run-time performance overhead of these defenses is minimal. Keywords: ROP, segmentation, XnR, buffer overflow, memory ... hardware segmentation, this approach should theoretically have no run-time performance overhead for Intel x86 architecture, once it has been set up. Both position dependent executables and position independent executables are covered. Furthermore an approach to implement a similar defense on x86_64 using the new MPX [7] instructions is presented. The defense mechanism we present in this paper is of interest, mainly, for use in security of network connected applications (such as servers or web-browsers), since these applications are often the main targets of remote code execution exploits. II. RETURN-ORIENTED-PROGRAMMING ATTACKS Remote code execution by means of buffer overflows has been a problem for a long time. -

Chapter 3 Protected-Mode Memory Management

CHAPTER 3 PROTECTED-MODE MEMORY MANAGEMENT This chapter describes the Intel 64 and IA-32 architecture’s protected-mode memory management facilities, including the physical memory requirements, segmentation mechanism, and paging mechanism. See also: Chapter 5, “Protection” (for a description of the processor’s protection mechanism) and Chapter 20, “8086 Emulation” (for a description of memory addressing protection in real-address and virtual-8086 modes). 3.1 MEMORY MANAGEMENT OVERVIEW The memory management facilities of the IA-32 architecture are divided into two parts: segmentation and paging. Segmentation provides a mechanism of isolating individual code, data, and stack modules so that multiple programs (or tasks) can run on the same processor without interfering with one another. Paging provides a mechanism for implementing a conventional demand-paged, virtual-memory system where sections of a program’s execution environment are mapped into physical memory as needed. Paging can also be used to provide isolation between multiple tasks. When operating in protected mode, some form of segmentation must be used. There is no mode bit to disable segmentation. The use of paging, however, is optional. These two mechanisms (segmentation and paging) can be configured to support simple single-program (or single-task) systems, multitasking systems, or multiple-processor systems that use shared memory. As shown in Figure 3-1, segmentation provides a mechanism for dividing the processor’s addressable memory space (called the linear address space) into smaller protected address spaces called segments. Segments can be used to hold the code, data, and stack for a program or to hold system data structures (such as a TSS or LDT). -
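
The segmentation step described in this excerpt can be illustrated with a minimal sketch: a logical address (a segment plus an offset) is checked against the segment's limit and added to the segment's base to give a linear address. The `Segment` class, the `logical_to_linear` function, and the example base and limit values are hypothetical names and numbers chosen for illustration; real descriptors also carry type, privilege, and granularity fields, and paging (when enabled) would translate the linear address further.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Segment:
    """Hypothetical, simplified stand-in for a segment descriptor."""
    base: int   # linear address at which the segment starts
    limit: int  # highest valid offset inside the segment


def logical_to_linear(segment: Segment, offset: int) -> int:
    """Translate a (segment, offset) logical address into a linear address."""
    if offset < 0 or offset > segment.limit:
        # Hardware would raise a protection fault; an exception stands in for it.
        raise ValueError(
            f"offset {offset:#x} is outside segment limit {segment.limit:#x}"
        )
    # IA-32 linear addresses are 32 bits wide, so the sum wraps at 4 GB.
    return (segment.base + offset) & 0xFFFF_FFFF


# Illustrative (made-up) segments: a small 64 KB code segment and a flat one.
code = Segment(base=0x0040_0000, limit=0x0000_FFFF)
flat = Segment(base=0x0000_0000, limit=0xFFFF_FFFF)

print(hex(logical_to_linear(code, 0x1234)))
print(hex(logical_to_linear(flat, 0x1234)))
```

With the flat segment (base 0, limit 4 GB - 1) every offset maps to the same linear address, which is how a system can keep segmentation formally enabled while leaving isolation to paging.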

I386-Based Computer Architecture and Elementary Data Operations

Leonardo Journal of Sciences, Issue 3, July-December 2003, ISSN 1583-0233, p. 9-23. I386-Based Computer Architecture and Elementary Data Operations. Lorentz JÄNTSCHI, Technical University of Cluj-Napoca, Romania, http://lori.academicdirect.ro Abstract: The use of computers in a very wide range of sciences, and not only in the sciences, is now a reality. Research, evidence, automation, entertainment, and communication are carried out by computer. Creating easy-to-use, professional, and efficient applications is not an easy task. Compatibility problems, when data are ported between different applications, are frequently solved using operating system modules (such as ODBC, open database connectivity). The aim of this paper was to describe i386-based computer architecture and to discuss the elementary data operators. Keywords: i386 computer architecture, elementary data operations. Introduction: The use of computers in a very wide range of sciences, and not only in the sciences, is now a reality. Research, evidence, automation, entertainment, and communication are carried out by computer. The people who work with computers fall into two categories. Users want turnkey applications that they can put to use. Perhaps the majority of people working with computers are simply users, who use turnkey applications at the office for a job-specific task. Developers are computer specialists who use their software and hardware knowledge to create, test, and upgrade computers and programs. In the computer industry (and not only there) there are so-called brands. Most people have heard of Microsoft [1] or IBM [2]. These are brands. A brand is generally a corporation, frequently a multinational one, which produces a significant share (a percentage of the total) of specific products for users worldwide. -

Energy Per Instruction Trends in Intel® Microprocessors

Energy per Instruction Trends in Intel® Microprocessors. Ed Grochowski, Murali Annavaram, Microarchitecture Research Lab, Intel Corporation, 2200 Mission College Blvd, Santa Clara, CA 95054, [email protected], [email protected] Abstract: Energy per Instruction (EPI) is a measure of the amount of energy expended by a microprocessor for each instruction that the microprocessor executes. In this paper, we present an overview of EPI, explain the factors that affect a microprocessor’s EPI, and derive a historical comparison of the trends in EPI over multiple generations of Intel microprocessors. We show that the recent Intel® Pentium® M and Intel® Core™ Duo microprocessors achieve significantly lower EPI than what would be expected from a continuation of historical trends. 1. Introduction: With the power consumption of recent desktop microprocessors having reached 130 watts, power has emerged at the forefront of challenges facing the microprocessor designer [1, 2]. ... where throughput performance is the primary objective. In order to deliver high throughput performance within a fixed power budget, a microprocessor must achieve low EPI. It is important to note that MIPS/watt and EPI do not consider the amount of time (latency) needed to process an instruction from start to finish. Other metrics such as MIPS^2/watt (related to energy•delay) and MIPS^3/watt (related to energy•delay^2) assign increasing importance to the time required to process instructions, and are thus used in environments in which latency performance is the primary objective. 2. What Determines EPI? Consider a capacitor that is charged and discharged by a CMOS inverter as shown in Figure 1. -
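
The metrics named in this excerpt can be related with a short sketch. It assumes the usual reading that EPI is average power divided by instruction throughput, so that MIPS/watt is its reciprocal up to a factor of one million. The function names and the 100 W / 10,000 MIPS figures are made up for illustration and are not measurements from the paper.

```python
def energy_per_instruction(power_watts: float, mips: float) -> float:
    """Average energy per instruction in joules: power / instructions per second."""
    if power_watts < 0 or mips <= 0:
        raise ValueError("power must be non-negative and MIPS must be positive")
    return power_watts / (mips * 1e6)


def mips_power_per_watt(power_watts: float, mips: float, exponent: int = 1) -> float:
    """MIPS^exponent per watt; exponents 2 and 3 weight latency more heavily."""
    if power_watts <= 0:
        raise ValueError("power must be positive")
    return mips ** exponent / power_watts


# Hypothetical processor: 100 W at 10,000 MIPS (illustrative numbers only).
epi = energy_per_instruction(100.0, 10_000.0)
print(f"EPI         = {epi * 1e9:.1f} nJ per instruction")
print(f"MIPS/watt   = {mips_power_per_watt(100.0, 10_000.0):.1f}")
print(f"MIPS^2/watt = {mips_power_per_watt(100.0, 10_000.0, 2):.3g}")
print(f"MIPS^3/watt = {mips_power_per_watt(100.0, 10_000.0, 3):.3g}")
```

Doubling throughput at constant power halves EPI and doubles MIPS/watt, but it quadruples MIPS^2/watt, which is why the higher-order metrics favour designs tuned for latency.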

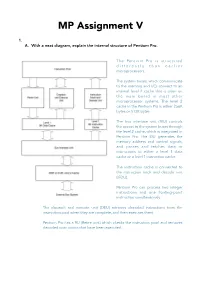

MP Assignment V.Pages

MP Assignment V
1. A. With a neat diagram, explain the internal structure of Pentium Pro. The Pentium Pro is structured differently than earlier microprocessors. The system buses, which communicate to the memory and I/O, connect to an internal level 2 cache that is often on the main board in most other microprocessor systems. The level 2 cache in the Pentium Pro is either 256K bytes or 512K bytes. The bus interface unit (BIU) controls the access to the system buses through the level 2 cache, which is integrated in Pentium Pro. The BIU generates the memory address and control signals, and passes and fetches data or instructions to either a level 1 data cache or a level 1 instruction cache. The instruction cache is connected to the instruction fetch and decode unit (IFDU). Pentium Pro can process two integer instructions and one floating-point instruction simultaneously. The dispatch and execute unit (DEU) retrieves decoded instructions from the instruction pool when they are complete, and then executes them. Pentium Pro has a RU (Retire unit) which checks the instruction pool and removes decoded instructions that have been executed.
B. List the new features added to Pentium Pro when compared with its predecessors with respect to memory system. The memory system for the Pentium Pro microprocessor is 4G bytes in size, similar to 80386DX–Pentium microprocessors, but access to an area between 4G and 64G is made possible by additional address signals A32-35. -

IA-32 Architecture

IA-32 Architecture. Computer Organization & Assembly Language Programming. Dr Adnan Gutub, aagutub ‘at’ uqu.edu.sa [Adapted from slides of Dr. Kip Irvine: Assembly Language for Intel-Based Computers]. Most slide contents have been arranged by Dr Muhamed Mudawar & Dr Aiman El-Maleh from the Computer Engineering Dept. at KFUPM.
Outline: Intel Microprocessors; IA-32 Registers; Instruction Execution Cycle; IA-32 Memory Management.
Intel Microprocessors: Intel introduced the 8086 microprocessor in 1978. The 8086, 8087, 8088, and 80186 are 16-bit processors with 16-bit registers, a 16-bit data bus and a 20-bit address bus, giving a physical address space of 2^20 bytes = 1 MB. The 8087 is a floating-point co-processor. These processors use segmentation and real-address mode to address memory; each segment can address 2^16 bytes = 64 KB. The 8088 is a less expensive version of the 8086 that uses an 8-bit data bus. The 80186 is a faster version of the 8086.
Intel 80286 and 80386 Processors: The 80286 was introduced in 1982, with a 24-bit address bus ⇒ 2^24 bytes = 16 MB address space. It introduced protected mode; segmentation in protected mode is different from real mode. The 80386 was introduced in 1985. It was the first 32-bit processor with 32-bit general-purpose registers and the first processor to define the IA-32 architecture, with a 32-bit data bus and a 32-bit address bus, giving 2^32 bytes ⇒ 4 GB address space. It introduced paging, virtual memory, and the flat memory model; segmentation can be turned off. -
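
The address-space figures on these slides follow directly from the address-bus width, and real-address mode combines a 16-bit segment with a 16-bit offset to reach the 8086's 20-bit physical address. The sketch below works through both. The function names are hypothetical, and the rule that the segment is multiplied by 16 before the offset is added is standard 8086 behaviour rather than something stated on the slides.

```python
def address_space_bytes(address_bits: int) -> int:
    """Number of bytes addressable with the given address-bus width."""
    return 1 << address_bits


def real_mode_physical(segment: int, offset: int) -> int:
    """8086 real-address mode: physical = segment * 16 + offset, kept to 20 bits."""
    if not (0 <= segment <= 0xFFFF and 0 <= offset <= 0xFFFF):
        raise ValueError("segment and offset must each fit in 16 bits")
    # The 8086 has only 20 address lines, so the sum wraps around at 1 MB.
    return ((segment << 4) + offset) & 0xFFFFF


for name, bits in [("8086", 20), ("80286", 24), ("80386", 32)]:
    print(f"{name}: {bits}-bit address bus -> {address_space_bytes(bits):,} bytes")

print(hex(real_mode_physical(0x1234, 0x0010)))
```

Because many segment:offset pairs map to the same physical byte, the 64 KB segments overlap every 16 bytes across the 1 MB space.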

Itanium Processor Microarchitecture

ITANIUM PROCESSOR MICROARCHITECTURE. THE ITANIUM PROCESSOR EMPLOYS THE EPIC DESIGN STYLE TO EXPLOIT INSTRUCTION-LEVEL PARALLELISM. ITS HARDWARE AND SOFTWARE WORK IN CONCERT TO DELIVER HIGHER PERFORMANCE THROUGH A SIMPLER, MORE EFFICIENT DESIGN. Harsh Sharangpani, Ken Arora, Intel. The Itanium processor is the first implementation of the IA-64 instruction set architecture (ISA). The design team optimized the processor to meet a wide range of requirements: high performance on Internet servers and workstations, support for 64-bit addressing, reliability for mission-critical applications, full IA-32 instruction set compatibility in hardware, and scalability across a range of operating systems and platforms. The processor employs EPIC (explicitly parallel instruction computing) design concepts for a tighter coupling between hardware and software. In this design style the hardware-software interface lets the software exploit all available compilation time information and efficiently deliver this information to the hardware. ... runtime optimizations to enable the compiled code schedule to flow through at high throughput. This strategy increases the synergy between hardware and software, and leads to higher overall performance. The processor provides a six-wide and 10-stage deep pipeline, running at 800 MHz on a 0.18-micron process. This combines both abundant resources to exploit ILP and high frequency for minimizing the latency of each instruction. The resources consist of four integer units, four multimedia units, two load/store units, three branch units, two extended-precision floating-point units, and two additional single-precision floating-point units (FPUs). The hardware employs dynamic prefetch, branch prediction, nonblocking ... -

P6: Microarchitecture

The Pentium® II/III Processor: “Compiler on a Chip”. Ronny Ronen, Senior Principal Engineer, Director of Architecture Research, Intel Labs - Haifa, Intel Corporation. Tel Aviv University, January 18, 2005.
Agenda: Goal, Expectations…; General Information; µarchitecture basics; Pentium® Pro Processor µarchitecture; SW aspects.
Technology Profile:
Pentium Pro - 1995: Core @200MHz; 256K L2 on package, @200MHz; Performance: 8.09 SPECint95, 6.70 SPECfp95; 0.35 µm BiCMOS; 5.5M transistors; 195 sq mm (14x14); 3.3V, 11.2A; 28.1W / 35.0W.
Pentium-II - 1998: Core @333MHz; 512KB L2 in SEC @167MHz; Performance: 12.8 SPECint95, 9.14 SPECfp95 (P55C: 7.12/5.21); 0.25 µm CMOS process; 7.5M transistors.
Pentium-III - 1999: Core @600MHz; 512KB L2 @ ???MHz; Performance: 24.0 SPECint95, 15.9 SPECfp95; 0.25 µm CMOS process; ???M transistors.
Technology Profile (cont.):
Pentium-III - 2000 (Coppermine): Core @1000MHz; 256KB L2 on chip @ 1000MHz; Performance: >46 SPECint95, >20 SPECfp95; 0.18 µm CMOS process; ~20M transistors.
Pentium-III - 2002 (Tualatin): Core @1400MHz; 512KB L2 on chip @ 1400MHz; Performance (estimated): >60 SPECint95, >30 SPECfp95; 0.13 µm CMOS process; ~44M transistors.
Pentium M Processor 2003 (Banias): Core @1800MHz.
Pentium M Processor 2004 (Dothan): Core @2000MHz.
1024KB L2 on chip @