Pdf) Is Shown at the Bottom of Fig

Total Page:16

File Type:pdf, Size:1020Kb


Recommended publications


Software Development Career Pathway

Career Exploration Guide Software Development Career Pathway Information Technology Career Cluster For more information about NYC Career and Technical Education, visit: www.cte.nyc Summer 2018 Getting Started What is software? Computers and other smart devices are made up of hardware and software. Hardware includes all of the physical parts of a device, like the power supply, data storage, and microprocessors. Software contains instructions that are stored and run by the hardware. Other names for software are programs or applications. Software includes operating systems—like Windows, Apple, and Google Android—and the applications that run on them—like word processors and games. Software applications can be run directly from a device or through a connection to the Internet. What Types of Software Can You Develop? Web applications are websites that allow users to check email, share documents, and shop online, among other things. Users access them with a connection to the Internet through a web browser like Firefox, Chrome, or Safari. Web browsers are the platforms people use to find, retrieve, and display information online. Web browsers are applications too. Desktop applications are programs that are stored on and accessed from a computer or laptop, like word processors and spreadsheets. Enterprise software are off-the-shelf applications that are customized to the needs of businesses. Popular examples include Salesforce, a customer contact management system, and PeopleSoft, a human resources information system. Mobile applications are programs that can be accessed directly through mobile devices like smart phones and tablets. Many mobile applications have web-based counterparts. What is Software Development? Software development is the design and creation of software and is usually done by a team of people. Quality Testers test the application to make sure -

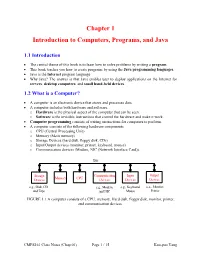

Chapter 1 Introduction to Computers, Programs, and Java

Chapter 1 Introduction to Computers, Programs, and Java 1.1 Introduction • The central theme of this book is to learn how to solve problems by writing a program. • This book teaches you how to create programs by using the Java programming language. • Java is the Internet programming language. • Why Java? The answer is that Java enables users to deploy applications on the Internet for servers, desktop computers, and small hand-held devices. 1.2 What is a Computer? • A computer is an electronic device that stores and processes data. • A computer includes both hardware and software. o Hardware is the physical aspect of the computer that can be seen. o Software is the invisible instructions that control the hardware and make it work. • Computer programming consists of writing instructions for computers to perform. • A computer consists of the following hardware components: o CPU (Central Processing Unit) o Memory (main memory) o Storage devices (hard disk, floppy disk, CDs) o Input/output devices (monitor, printer, keyboard, mouse) o Communication devices (modem, NIC (Network Interface Card)). [Figure: a bus connecting storage devices (e.g., disk, CD, and tape), memory, CPU, communication devices (e.g., modem and NIC), input devices (e.g., keyboard, mouse), and output devices (e.g., monitor, printer).] FIGURE 1.1 A computer consists of a CPU, memory, hard disk, floppy disk, monitor, printer, and communication devices. CMPS161 Class Notes (Chap 01) Page 1 / 15 Kuo-pao Yang 1.2.1 Central Processing Unit (CPU) • The central processing unit (CPU) is the brain of a computer. • It retrieves instructions from memory and executes them. -

Introduction to High Performance Computing

Introduction to High Performance Computing Shaohao Chen Research Computing Services (RCS) Boston University Outline • What is HPC? Why computer clusters? • Basic structure of a computer cluster • Computer performance and the top 500 list • HPC for scientific research and parallel computing • Nationwide HPC resources: XSEDE • BU SCC and RCS tutorials What is HPC? • High Performance Computing (HPC) refers to the practice of aggregating computing power in order to solve large problems in science, engineering, or business. • Purpose of HPC: to accelerate computer programs, and thus accelerate the work process. • Computer cluster: a set of connected computers that work together. They can be viewed as a single system. • Similar terminology: supercomputing, parallel computing. • Parallel computing: many computations are carried out simultaneously, typically on a computer cluster. • Related terminology: grid computing, cloud computing. Computing power of a single CPU chip • Moore's law is the observation that the computing power of a CPU doubles approximately every two years. • Nowadays the multi-core technique is the key to keeping up with Moore's law. Why computer clusters? • Drawbacks of increasing CPU clock frequency: --- Electric power consumption is proportional to the cube of the CPU clock frequency (ν³). --- Generates more heat. • A drawback of increasing the number of cores within one CPU chip: --- Heat dissipation becomes difficult. • Computer cluster: connect many computers with high-speed networks. • Currently the computer cluster is the best solution for scaling up computing power. • Consequently, software/programs need to be designed for parallel computing. Basic structure of a computer cluster • Cluster – a collection of many computers/nodes. • Rack – a closet to hold a bunch of nodes. -

On the Cognitive Prerequisites of Learning Computer Programming

On the Cognitive Prerequisites of Learning Computer Programming Roy D. Pea D. Midian Kurland Technical Report No. 18 ON THE COGNITIVE PREREQUISITES OF LEARNING COMPUTER PROGRAMMING* Roy D. Pea and D. Midian Kurland Introduction Training in computer literacy of some form, much of which will consist of training in computer programming, is likely to involve $3 billion of the $14 billion to be spent on personal computers by 1986 (Harmon, 1983). Who will do the training? "hardware and software manufacturers, management consultants, retailers, independent computer instruction centers, corporations' in-house training programs, public and private schools and universities, and a variety of consultants" (ibid., p. 27). To date, very little is known about what one needs to know in order to learn to program, and the ways in which educators might provide optimal learning conditions. The ultimate success of these vast training programs in programming--especially toward the goal of providing a basic computer programming competency for all individuals--will depend to a great degree on an adequate understanding of the developmental psychology of programming skills, a field currently in its infancy. In the absence of such a theory, training will continue, guided--or to express it more aptly, misguided--by the tacit "folk theories" of programming development that until now have served as the underpinnings of programming instruction. Our paper begins to explore the complex agenda of issues, promise, and problems that building a developmental science of programming entails. Microcomputer Use in Schools The National Center for Education Statistics has recently released figures revealing that the use of micros in schools tripled from Fall 1980 to Spring 1983. -

Software Development a Practical Approach!

Software Development A Practical Approach! Hans-Petter Halvorsen https://www.halvorsen.blog https://halvorsen.blog Copyright © 2020 ISBN: 978-82-691106-0-9 Publisher Identifier: 978-82-691106 Preface The main goal with this document: • To give you an overview of what software engineering is • To take you beyond programming to engineering software What is Software Development? It is a complex process to develop modern and professional software today. This document tries to give a brief overview of Software Development, with a focus on a practical approach. So why do we need System Engineering? Here are some key factors: • Understand Customer Requirements o What does the customer need (because they may not know it!) o Transform customer requirements into working software • Planning o How do we reach our goals? o Will we finish within the deadline? o Resources o What can go wrong? • Implementation o What kind of platforms and architecture should be used? o Split your work into manageable pieces • Quality and Performance o Make sure the software fulfills the customers' needs We will learn how to build good (i.e. high quality) software, which includes: • Requirements Specification • Technical Design • Good User Experience (UX) • Improved Code Quality and Implementation • Testing • System Documentation • User Documentation • etc. You will find additional resources on this web page: http://www.halvorsen.blog/documents/programming/software_engineering/ Information about the author: Hans-Petter Halvorsen The author currently works at the University of South-Eastern Norway. -

Computer Programming and Database Management - Software Engineering Technology Major (SET)

Computer Programming and Database Management - Software Engineering Technology Major (SET) Program Chair: Robert (Bob) Nields, MBA • Email: [email protected] • Phone: (513) 569-1653 Co-Op Coordinator Chair: Noelle Grome, ME, MA • Email: [email protected] • Phone: (513) 569-4693 The Computer Programming and Database Management - Software Engineering Technology Major (SET) focuses on the design, development, implementation, and maintenance of software solutions used in a variety of industries and organizations. Students gain practical knowledge and experience in the software development process and methods using relevant, current programming languages, databases, and database query languages. Students also gain knowledge of core math and science concepts and skills. Graduates earn an Associate of Applied Science degree and are prepared to enter the workforce as skilled software developers/computer programmers. Graduates may continue their education in a bachelor's degree program in computer science, engineering technology, or mathematics. Although some required courses are available through evening and/or online classes, most of the required courses for the Software Engineering Technology Major are scheduled as in-person classes offered on Monday through Friday between 8 a.m. and 5 p.m. Employment Options GRADUATES ARE PREPARED TO: • Design and write computer programs using programming languages .NET, Python, Java, C, and C++ • Develop applications using the Object-Oriented Programming Methodology Education Options STRONG TRANSFER HISTORY: Northern Kentucky University, University of Cincinnati, Western Governors University, Wilmington College, Wright State University GRADUATE STARTING SALARY PROJECTIONS: $40,000 to $60,000 annual salary EMPLOYMENT OUTLOOK: Graduates of the Software Engineering Technology program are in demand by companies locally and nationally. According to the U.S. -

Computer Programming

COMPUTER PROGRAMMING PURPOSE To evaluate each contestant’s preparation for employment and to recognize outstanding students for excellence and professionalism in the field of computer programming. First, download and review the General Regulations at: http://updates.skillsusa.org. ELIGIBILITY Open to active SkillsUSA members enrolled in programs with computer programming as the occupational objective. CLOTHING REQUIREMENTS Class E: Contest specific — Business Casual • Official SkillsUSA white polo shirt • Black dress slacks (accompanied by black dress socks or black or skin-tone seamless hose) or black dress skirt (knee-length, accompanied by black or skin-tone seamless hose) • Black leather closed-toe dress shoes These regulations refer to clothing items that are pictured and described at: […] d. Ballpoint pens or sharpened pencils e. Blank notebook paper f. All competitors must create a one-page résumé and submit a hard copy to the technical committee chair at orientation. Failure to do so will result in a 10-point penalty. Note: Your contest may also require a hard copy of your résumé as part of the actual contest. Check the Contest Guidelines and/or the updates page on the SkillsUSA website: http://updates.skillsusa.org. SCOPE OF THE CONTEST The contest uses competencies identified by the Computing Technology Industry Association. The specific projects chosen for national competition will be determined by the Computer Programming technical committee. Knowledge Performance The contest includes a written knowledge test assessing knowledge of Visual Basic, Java, C++ or RPG or “other approved language.” Check the Contest Guidelines and/or the updates page on the SkillsUSA website: updates.skillsusa.org. Skill Performance The contest includes a computer programming problem consisting of background information and program specifications with accompanying reference materials and description of program output requirements. -

Getting Up to Speed: The Future of Supercomputing

GETTING UP TO SPEED THE FUTURE OF SUPERCOMPUTING Susan L. Graham, Marc Snir, and Cynthia A. Patterson, Editors Committee on the Future of Supercomputing Computer Science and Telecommunications Board Division on Engineering and Physical Sciences THE NATIONAL ACADEMIES PRESS Washington, D.C. www.nap.edu THE NATIONAL ACADEMIES PRESS 500 Fifth Street, N.W. Washington, DC 20001 NOTICE: The project that is the subject of this report was approved by the Governing Board of the National Research Council, whose members are drawn from the councils of the National Academy of Sciences, the National Academy of Engineering, and the Institute of Medicine. The members of the committee responsible for the report were chosen for their special competences and with regard for appropriate balance. Support for this project was provided by the Department of Energy under Sponsor Award No. DE-AT01-03NA00106. Any opinions, findings, conclusions, or recommendations expressed in this publication are those of the authors and do not necessarily reflect the views of the organizations that provided support for the project. International Standard Book Number 0-309-09502-6 (Book) International Standard Book Number 0-309-54679-6 (PDF) Library of Congress Catalog Card Number 2004118086 Cover designed by Jennifer Bishop. Cover images (clockwise from top right, front to back) 1. Exploding star. Scientific Discovery through Advanced Computing (SciDAC) Center for Supernova Research, U.S. Department of Energy, Office of Science. 2. Hurricane Frances, September 5, 2004, taken by GOES-12 satellite, 1 km visible imagery. U.S. National Oceanographic and Atmospheric Administration. 3. Large-eddy simulation of a Rayleigh-Taylor instability run on the Lawrence Livermore National Laboratory MCR Linux cluster in July 2003. -

A Component-Based Software Development Platform for Rapid Prototyping of Embedded Software

A Component-Based Software Development Platform for Rapid Prototyping of Embedded Software Advisor: Prof. Jun Wu Student: Chong-Fa Hsu A thesis submitted to the Department of Computer Science and Information Engineering of the National Pingtung Institute of Commerce in partial fulfillment of the requirements for the Degree of Master of Computer Science Information Engineer Pingtung, Taiwan, R.O.C. July 2009 Acknowledgements During these two years, I received a lot of assistance and encouragement from many people. First of all, I must thank my supervisor Prof. Jun Wu. During the composition of the thesis, he provided me with great suggestions and enlightening viewpoints. Although I met with many setbacks which made me dejected in the process of writing this thesis, he always encouraged me to complete my work. Without his patient guidance, timely correction, and excellent training, I could not have finished the thesis. In particular, I wish to thank my committee members, Dr. Chi-Chou Kao and Dr. Lung-Jen Wang. Their professional corrections and suggestions made the thesis better. Additionally, I would like to express my sincere gratitude to Chia-Kang Lin, Chia-Wei Ko, and all real-time system laboratory members in the National Pingtung Institute of Commerce. They always helped me whenever I had problems or difficulties. Finally, I wish to give my deep appreciation to my family for their endless support and encouragement during these two years. Chong-Fa Hsu Taiwan July 2009 Abstract The development of embedded software is closely tied to the hardware platform. Because the variety of embedded hardware grows by the day and time to market must be shortened, embedded software development is becoming increasingly complex. To shorten the time to market of embedded software, adopting rapid application -

Role of Agent Middleware in Teaching Distributed Systems and Agent Technologies

CIT. Journal of Computing and Information Technology, Vol. 27, No. 1, March 2019, 25–42 doi: 10.20532/cit.2019.1004464 Role of Agent Middleware in Teaching Distributed Systems and Agent Technologies Costin Badica¹, Milan Vidaković², Sorin Ilie¹, Mirjana Ivanović³ and Jovana Vidaković³ ¹University of Craiova, Department of Computers and Information Technology; Romania ²University of Novi Sad, Faculty of Technical Sciences, Department of Computing and Control; Serbia ³University of Novi Sad, Faculty of Sciences, Department of Mathematics and Informatics; Serbia Computer science and information communication technologies are among the fastest changing areas and it is essential to follow this world-wide trend also in education, constantly innovating and adapting curricula. In this paper, we introduce the structure, methodological aspects and educational experiences of teaching two courses on distributed systems and agent technologies at two different universities and countries. The presentation is focused on the role of agent middleware and multi-agent systems in teaching various theoretical and practical aspects of these courses. At the University of Craiova, the conclusion is that the use of agent middleware in general and of JADE platform in particular for teaching the course Distributed Systems certainly brings many advantages, but also […] 1. Introduction Computer Science (CS) and Information Communication Technologies (ICT) curricula must be under constant reevaluation and development as nowadays these two areas have been rapidly changing. Therefore, it is significant to impose and suggest adequate approaches for revitalizing CS and ICT education and curricula. Significant changes in developing modern software have happened in the last two decades. We are now in a world of computing, where basically everything is distributed in the broader sense, i.e. -

SHORT HISTORY of SOFTWARE METHODS by David F. Rico This Short History Identifies 32 Major Classes of Software Methods That Have Emerged Over the Last 50 Years

SHORT HISTORY OF SOFTWARE METHODS by David F. Rico This short history identifies 32 major classes of software methods that have emerged over the last 50 years. There are many variations of each major class of software method, which renders the number of software methods in the hundreds. This short history contains a brief synopsis of each of the 32 major classes of software methods, identifying the decade and year they appeared, their purpose, their major tenets, their strengths, and their weaknesses. The year each software method appeared corresponds to the seminal work that introduced the method based on extensive bibliographic research and the strengths and weaknesses were based on scholarly and empirical works to provide an objective capstone for each method. [Figure: timeline of software methods across five eras: Mainframe Era (1960s), Midrange Era (1970s), Microcomputer Era (1980s), Internet Era (1990s), Personalized Era (2000s). Methods shown: Flowcharting, Structured Design, Formal Specification, Software Estimation, Software Reliability, Iterative/Incremental, Software Inspections, Structured Analysis, Software Testing, Configuration Control, Quality Assurance, Project Management, Defect Prevention, Process Improvement, CASE Tools, Object Oriented, Software Reuse, Rapid Prototyping, Concurrent Lifecycle, Software Factory, Domain Analysis, Quality Management, Risk Management, Software Architecture, Software Metrics, Six Sigma, Buy versus Make, Personal Processes, Product Lines, Synch-n-Stabilize, Team Processes, Agile Methods. Each era has a Market Conditions panel.] Market Conditions • Mainframe computer • Computer -

Course Outline Development

Computer Programming COURSE OUTLINE 1. Course Title: Computer Programming 2. CBEDS Title: Computer Programming 3. CBEDS Number: 4631 4. Job Titles: Computer Operators; Computer Support Specialists; Computer Systems Analysts 5. Course Description: This course is designed as a general introduction to the rapidly expanding field of computer science. Through the use of the Visual Basic programming language, students will perform the basics of computer programming including methodologies, structures, and user interfaces, as well as more advanced programming concepts like searching, sorting, and object-oriented programming. Other programming languages, such as C++ and Java, may be included for individual or group projects. This course is designed for students seeking to further develop their computer skills and requires good math skills. Student Outcomes and Objectives: Students will: 1. Develop the ability to write computer programs in Visual Basic. 2. Use principles of software design to analyze programming problems and develop solutions. 3. Compare values and perform alternative operations based upon the results of the comparison. 4. Identify the proper structure of loops. 5. Demonstrate the use of arrays. 6. Demonstrate the use of strings. 7. Create and test computer programs that incorporate control structures and object-oriented programming methods. Pathway and Recommended Courses Sequence: Introductory: Computer Foundations; Skill Building: Computer Applications in Business 1 or Computer Programming; Advanced Skill: Multi Media & Desktop Publishing or Computer Applications in Business 2 6. Hours: Students receive up to 180 hours of classroom instruction. 7. Prerequisites: Computer Applications in Business 1 8. Date (of creation/revision): July 2010 9. Course Outline Upon successful completion of this course, students will be able to demonstrate the following skills necessary for entry-level employment.