An Automatic Web News Article Contents Extraction System Based on Rss Feeds

Total Page:16

File Type:pdf, Size:1020Kb

Load more

Recommended publications

-

(Nueva) Guia Canales Cable Del Norte

Paquete Paquete Paquete Paquete Paquete Paquete Paquete Paquete Basico Premium Internac. Adultos XTIME HD Musicales PPV ●210‐Guatevision ●325‐NBC Sports ●1661‐History 2 HD Nacionales Variados ●211‐Senal Colombia ●330‐EuroSport Peliculas Educativos Pay Per View ●1671‐Sun Channel HD ●1‐TV Guia ●100‐Telemundo ●212‐Canal UNO ●331‐Baseball ●500‐HBO ●650‐Discovery ●800‐PPV Events ●2‐TeleAntillas ●101‐Telemundo ●213‐TeleCaribe ●332‐Basketball ●501‐HBO2 ●651‐Discovery Turbo ●810‐XTASY ●3‐Costa Norte ●103‐AzMundo ●214‐TRO ●333‐Golf TV ●502‐HBO LA ●652‐Discovery Science ●811‐Canal Adultos ●4‐CERTV ●104‐AzCorazon ●215‐Meridiano ●334‐Gol TV ●510‐CineMax ●653‐Civilization Disc. ●5‐Telemicro ●110‐Estrellas ●215‐Televen ●335‐NHL ●520‐Peliculas ●654‐Travel & Living High Definition ●6‐OLA TV ●111‐DTV ●216‐Tves ●336‐NFL ●530‐Peliculas ●655‐Home & Health ●1008‐El Mazo TV HD ●7‐Antena Latina ●112‐TV Novelas ●217‐Vive ●337‐Tennis Channel ●540‐Peliculas ●656‐Animal Planet ●1100‐Telemundo HD ●8‐El Mazo TV ●113‐Distrito Comedia ●218‐VTV ●338‐Horse Racing TV ●550‐Xtime ●657‐ID ●1103‐AzMundo HD ●9‐Color Vision ●114‐Antiestres TV ●220‐Globovision ●339‐F1 LA ●551‐Xtime 2 ●660‐History ●1104‐AzCorazon HD ●10‐GH TV ●115‐Ve Plus TV ●240‐Arirang TV ●552‐Xtime 3 ●661‐History 2 ●1129‐TeleXitos HD ●11‐Telesistema ●120‐Wapa Entretenimiento ●553‐Xtime Family ●665‐National Geo. ●1256‐NHK HD ●12‐JM TV ●121‐Wapa 2 Noticias ●400‐ABC ●554‐Xtime Comedy ●670‐Mas Chic ●1257‐France 24 HD ●13‐TeleCentro ●122‐Canal i ●250‐CNN ●401‐NBC ●555‐Xtime Action ●672‐Destinos TV ●1265‐RT ESP HD ●14‐OEPM TV ●123‐City TV ●251‐CNN (Es) ●402‐CBS ●556‐Xtime Horror ●673‐TV Agro ●1266‐RT USA HD ●15‐Digital 15 ●124‐PRTV ●252‐CNN Int. -

Reuters Institute Digital News Report 2020

Reuters Institute Digital News Report 2020 Reuters Institute Digital News Report 2020 Nic Newman with Richard Fletcher, Anne Schulz, Simge Andı, and Rasmus Kleis Nielsen Supported by Surveyed by © Reuters Institute for the Study of Journalism Reuters Institute for the Study of Journalism / Digital News Report 2020 4 Contents Foreword by Rasmus Kleis Nielsen 5 3.15 Netherlands 76 Methodology 6 3.16 Norway 77 Authorship and Research Acknowledgements 7 3.17 Poland 78 3.18 Portugal 79 SECTION 1 3.19 Romania 80 Executive Summary and Key Findings by Nic Newman 9 3.20 Slovakia 81 3.21 Spain 82 SECTION 2 3.22 Sweden 83 Further Analysis and International Comparison 33 3.23 Switzerland 84 2.1 How and Why People are Paying for Online News 34 3.24 Turkey 85 2.2 The Resurgence and Importance of Email Newsletters 38 AMERICAS 2.3 How Do People Want the Media to Cover Politics? 42 3.25 United States 88 2.4 Global Turmoil in the Neighbourhood: 3.26 Argentina 89 Problems Mount for Regional and Local News 47 3.27 Brazil 90 2.5 How People Access News about Climate Change 52 3.28 Canada 91 3.29 Chile 92 SECTION 3 3.30 Mexico 93 Country and Market Data 59 ASIA PACIFIC EUROPE 3.31 Australia 96 3.01 United Kingdom 62 3.32 Hong Kong 97 3.02 Austria 63 3.33 Japan 98 3.03 Belgium 64 3.34 Malaysia 99 3.04 Bulgaria 65 3.35 Philippines 100 3.05 Croatia 66 3.36 Singapore 101 3.06 Czech Republic 67 3.37 South Korea 102 3.07 Denmark 68 3.38 Taiwan 103 3.08 Finland 69 AFRICA 3.09 France 70 3.39 Kenya 106 3.10 Germany 71 3.40 South Africa 107 3.11 Greece 72 3.12 Hungary 73 SECTION 4 3.13 Ireland 74 References and Selected Publications 109 3.14 Italy 75 4 / 5 Foreword Professor Rasmus Kleis Nielsen Director, Reuters Institute for the Study of Journalism (RISJ) The coronavirus crisis is having a profound impact not just on Our main survey this year covered respondents in 40 markets, our health and our communities, but also on the news media. -

Download Streaming TV Lineup

TiVo Logo Lockup | 4C Blue RCN STREAMING TV Boston Channel Lineup RCN SIGNATURE CH CH CH CH CH CH 1 RCN On Demand 111 FX HD 306 HLN HD 866 Music Choice Metal 1126-1128 Milton PEG 1144-1146 Stoneham PEG 2 WGBH HD (PBS) 112 SYFY HD 310 CNBC HD 867 Music Choice Alternative 1129-1131 Natick PEG 1147-1149 Wakefield PEG 4 WBZ 4 HD (CBS) 115 E! HD 311 MSNBC HD 868 Music Choice Adult Alternative 1132-1134 Needham PEG 1150-1152 Waltham PEG 5 WCVB HD (ABC) 116 TRU TV HD 312 NBCLX 869 Music Choice Rock Hits 1135-1137 Newton PEG 1153-1155 Woburn PEG 6 WFXT HD (FOX) 117 COMEDY CENTRAL HD 315 FOX NEWS HD 870 Music Choice Classic Rock 1138-1140 Revere PEG 1156-1158 Watertown PEG 7 WHDH HD (NBC) 118 JUSTICE CENTRAL HD 316 FOX BUSN NEWS HD 871 Music Choice Soft Rock 1141-1143 Somerville PEG 1159-1161 Peabody PEG 8 WLVI HD (CW) 120 ANIMAL PLANET HD 318 NECN HD 872 Music Choice Love Songs 9 WGBH CREATE 128 GSN HD 320 WEATHER CH HD-MA 873 Music Choice Pop Hits 10 WBTS HD (NBC) 140 REELZ HD 321 Accuweather 874 Music Choice Party Favorites 11 WSBK MyTV 141 FXM HD 330 NASA 875 Music Choice Teen Beats RCN PREMIERE 12 WBPX HD (ION) 142 AMC HD 333 TRAVEL HD 876 Music Choice Kidz Only 14 WGBX (PBS 44) 143 TCM HD 335 DISCOVERY HD 877 Music Choice Toddler Tunes CH CH 16 WNEU HD (TELEMUNDO 60) 158 IFC HD 336 OWN HD 878 Music Choice Y2K Movies & Entertainment 249 BOOMERANG 17 WUNI HD (UNIVISION) 159 SUNDANCE TV HD 337 ID HD 879 Music Choice 90’s 103 BET HER 252 DISNEY XD HD 18 WWJE Justice 160 MTV HD 340 HISTORY HD 880 Music Choice 80’s 113 OLYMPIC CH 255 DISCOVERY -

Digital News Report 2018 Reuters Institute for the Study of Journalism / Digital News Report 2018 2 2 / 3

1 Reuters Institute Digital News Report 2018 Reuters Institute for the Study of Journalism / Digital News Report 2018 2 2 / 3 Reuters Institute Digital News Report 2018 Nic Newman with Richard Fletcher, Antonis Kalogeropoulos, David A. L. Levy and Rasmus Kleis Nielsen Supported by Surveyed by © Reuters Institute for the Study of Journalism Reuters Institute for the Study of Journalism / Digital News Report 2018 4 Contents Foreword by David A. L. Levy 5 3.12 Hungary 84 Methodology 6 3.13 Ireland 86 Authorship and Research Acknowledgements 7 3.14 Italy 88 3.15 Netherlands 90 SECTION 1 3.16 Norway 92 Executive Summary and Key Findings by Nic Newman 8 3.17 Poland 94 3.18 Portugal 96 SECTION 2 3.19 Romania 98 Further Analysis and International Comparison 32 3.20 Slovakia 100 2.1 The Impact of Greater News Literacy 34 3.21 Spain 102 2.2 Misinformation and Disinformation Unpacked 38 3.22 Sweden 104 2.3 Which Brands do we Trust and Why? 42 3.23 Switzerland 106 2.4 Who Uses Alternative and Partisan News Brands? 45 3.24 Turkey 108 2.5 Donations & Crowdfunding: an Emerging Opportunity? 49 Americas 2.6 The Rise of Messaging Apps for News 52 3.25 United States 112 2.7 Podcasts and New Audio Strategies 55 3.26 Argentina 114 3.27 Brazil 116 SECTION 3 3.28 Canada 118 Analysis by Country 58 3.29 Chile 120 Europe 3.30 Mexico 122 3.01 United Kingdom 62 Asia Pacific 3.02 Austria 64 3.31 Australia 126 3.03 Belgium 66 3.32 Hong Kong 128 3.04 Bulgaria 68 3.33 Japan 130 3.05 Croatia 70 3.34 Malaysia 132 3.06 Czech Republic 72 3.35 Singapore 134 3.07 Denmark 74 3.36 South Korea 136 3.08 Finland 76 3.37 Taiwan 138 3.09 France 78 3.10 Germany 80 SECTION 4 3.11 Greece 82 Postscript and Further Reading 140 4 / 5 Foreword Dr David A. -

CV – June 2021 – 1

SÉVERINE AUTESSERRE * Department of Political Science. 3009 Broadway. New York, NY 10027-6598 ( (1) (212) 854-4877 : [email protected] / http://www.severineautesserre.com CURRENT POSITION: Professor, Department of Political Science, Barnard College, Columbia University, July 2007 - Present Assistant Professor from 2007 to 2015; promoted to Associate Professor with tenure in 2015 and to Professor in 2017; elected Department Chair (2022 - 2025) Research civil and international wars, international interventions, and African politics, and disseminate research findings through seminars, conferences, and scientific articles Main field research site: Democratic Republic of Congo. Other sites: Burundi, Cyprus, Colombia, Israel-Palestine, Northern Ireland, South Sudan, Timor-Leste, Somaliland Teach undergraduate and graduate classes on international relations and African politics Hold a courtesy appointment (since 2009) and a senior research scholar position (since 2015) at the School of International and Public Affairs Affiliated with the Columbia University Saltzman Institute for War and Peace Studies, Columbia University Institute for African Studies, Columbia University Institute for the Study of Human Rights, and Barnard College Africana Studies Program PUBLICATIONS Books The Frontlines of Peace: An Insider’s Guide to Changing the World. • Hardback & eBook: Oxford University Press, 2021 Audiobook: Audible, 2021 • Reviewed to date in: Diplomatie, The Financial Times, Foreign Affairs, International Peacekeeping, Política Exterior, The Economist, -

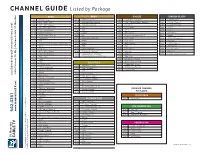

CHANNEL GUIDE Listed by Package

Listed by Package CHANNEL GUIDE BASIC BASIC BRONZE SPANISH SILVER* 104 WGN-Chicago 267 Lifetime Television 142 Fox Business Network 400 Fox Deportes 106 PBS - WPBT-Miami 269 Lifetime Movie Network 152 JNN - Jamaica News Network 402 CNN en Espanol 108 NBC - WTVJ-Miami 273 TV Guide Network 155 EuroNews 404 E! Latin America 110 CW - WPIX-New York 274 TNT 156 CCTV News 406 GLITZ 112 FOX - WSVN-Miami 276 TBS 182 JCTV 410 HTV 114 Bulletin Channel 278 Space 186 Vh1 Classic 411 MuchMusic 115 TV15 - SXM Cable TV 280 BET 190 Reggae Entertainment TV 412 Infinito 116 CaribVision 297 Game Show Network 195 BET Gospel 414 Caracol Television 119 RFO 309 EWTN 208 Fight Now! 416 Pasiones 120 Special Events Channel - SXM Cable TV 311 3ABN 217 Golf Channel 419 EWTN Espanol 121 3SCS 314 TBN 219 TVG 421 TBN Enlace 122 BVN TV 337 DWTV (German) 238 Fashion TV 424 Disney Junior 126 ABC - WPLG-Miami 418 Azteca International 245 Halogen 428 Baby TV 127 CBS - WFOR-Miami 900 - Galaxie Music Channels* 246 Baby TV 430 La Familia Cosmovision (refer to channel category for listing) 130 TeleCuraco 949 256 Teen Nick 136 CNBC 290 Sundance Channel 138 CNN SOLID GOLD 316 The Church Channel 146 Headline News 324 France 24 (French) 123 WWOR-New York 148 CNN International 325 TV5 Monde (French) 150 One Caribbean TV 151 Weather Channel 332 EuroChannel 177 A&E 153 MSNBC 184 MTV Hits USA 157 BBC World 192 CMT Pure Country 160 France 24 (English) 204 Fox Soccer Channel 162 Animal Planet 282 Africa Channel 166 Lifetime Real Women 284 G4 .net 168 TruTV X 286 Sony Entertainment TV 175 National Geographic PREMIUM CHANNEL 292 Warner Channel 179 Discovery Channel PACKAGES 313 Smile of a Child 191 Tempo SPORTSMAX 194 CENTRIC 196 MTV PLATINUM PLUS 200 SportsMax 206 Big Ten Network 131 CBC - Canada innovativeS 209 ESPN Caribbean 140 Fox News Channel w. -

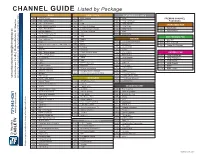

CHANNEL GUIDE Listed by Package

Listed by Package CHANNEL GUIDE BASIC BASIC cont’d PLATINUM PLUS cont’d 104 WGN-Chicago 262 BBC America 288 Spike PREMIUM CHANNEL 106 PBS - WPBT-Miami 264 E! 500 HBO Caribbean PACKAGES 108 NBC - WTVJ-Miami AXN 266 502 HBO Signature SPORTSMAX PAK 110 CW - WPIX-New York 267 Lifetime Television 506 HBO PLUS SportsMax 112 FOX - WSVN-Miami 269 Lifetime Movie Network 512 HBO Family 200 SportsMax2 114 Bulletin Channel 273 TV Guide Network 524 MAX 202 115 TV15 - SXM Cable TV 274 TNT 528 MAX Prime 116 CaribVision 276 TBS ZEE PREMIUM PAK One Caribbean TV BRONZE 117 278 Space 340 Zee TV RFO 142 Fox Business Network 119 280 BET 342 Zee Cinema Special Events Channel - SXM Cable TV 155 EuroNews 120 290 Bravo 346 Alpha Etc Punjabi 121 3SCS 294 UPlifting 156 CCTV News 122 BVN TV 296 Cinemax 182 JCTV 126 ABC - WPLG-Miami 297 Game Show Network 186 Vh1 Classic HBO/MAX PAK 127 CBS - WFOR-Miami 309 EWTN 195 BET Gospel 500 HBO Caribbean 130 TeleCuracao 311 3ABN 208 FOX Sports2 502 HBO Signature 136 CNBC 314 TBN 217 Golf Channel 506 HBO PLUS 138 CNN 337 DWTV (German) 246 Baby TV 512 HBO Family 146 Headline News 408 Univision 256 Teen Nick 524 MAX 148 CNN International 418 Azteca International 258 CalaClassics 528 MAX Prime 151 Weather Channel 900 - Stingray Music Channels 281 Aspire 153 MSNBC 950 (refer to channel category for listing) 316 Hillsong Channel 157 BBC World 324 France 24 (French) [email protected] Join us on Face Book and Like us: http://www.facebook.com/StMaartenCable 160 France 24 (English) SOLID GOLD 325 TV5 Monde (French) 162 -

Comcast Channel Lineup for WCUPA HD Channels Only When HDMI Cable Used to Connect to Set Top Cable Box

Comcast Channel Lineup for WCUPA HD Channels only when HDMI cable used to connect to Set Top Cable Box CH. NETWORK Standard Def CH. NETWORK Standard Def CH. MUSIC STATIONS 2 MeTV (KJWP, Phila.) 263 RT (WYBE, Phila.) 401 Music Choice Hit List 3 CBS 3 (KYW, Phila.) 264 France 24 (WYBE, Phila.) 402 Music Choice Max 4 WACP 265 NHK World (WYBE, Phila.) 403 Dance / EDM 6 6 ABC (WPVI, Phila.) 266 Create (WLVT, Allentown) 404 Music Choice Indie 7 PHL 17 (WPHL, Phila.) 267 V-me (WLVT, Allentown) 405 Hip-Hop and R&B 8 NBC 8 (WGAL, Phila.) 268 Azteca (WZPA, Phila.) 406 Music Choice Rap 9 Fox 29 (WTXF, Phila.) 278 The Works (WTVE, Phila.) 407 Hip-Hop Classics 10 NBC 10 (WCAU, Phila.) 283 EVINE Live 408 Music Choice Throwback Jamz 11 QVC 287 Daystar 409 Music Choice R&B Classics 12 WHYY (PBS, Phila.) 291 EWTN 410 Music Choice R&B Soul 13 CW Philly 57 (WPSG, Phila.) 294 The Word 411 Music Choice Gospel 14 Animal Planet 294 Inspiration 412 Music Choice Reggae 15 WFMZ 500 On Demand Previews 413 Music Choice Rock 16 Univision (WUVP. Phila.) 550 XFINITY Latino 414 Music Choice Metal 17 MSNBC 556 TeleXitos (WWSI, Phila.) 415 Music Choice Alternative 18 TBN (WGTW, Phila.) 558 V-me (WLVT, Allentown) 416 Music Choice Adult Alternative 19 NJTV 561 Univision (WUVP, Phila.) 417 Music Choice Retro Rock 20 HSN 563 UniMás (WFPA, Phila.) 418 Music Choice Classic Rock 21 WMCN 565 Telemundo (WWSI, Phila.) 419 Music Choice Soft Rock 22 EWTN 568 Azteca (WZPA, Phila.) 420 Music Choice Love Songs 23 39 PBS (WLVT, Allentown) 725 FXX 421 Music Choice Pop Hits 24 Telemundo (WWSI, Phila.) 733 NFL Network 422 Music Choice Party Favorites 25 WTVE 965 Chalfont Borough Gov. -

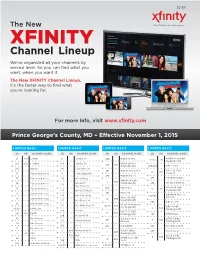

XFINITY Channel Lineup We’Ve Organized All Your Channels by Service Level

2C-307 The New XFINITY Channel Lineup We’ve organized all your channels by service level. So you can find what you want, when you want it. The New XFINITY Channel Lineup. It’s the faster way to find what you’re looking for. For more info, visit www.xfinity.com Prince George’s County, MD – Efective November 1, 2015 LIMITED BASIC LIMITED BASIC LIMITED BASIC LIMITED BASIC SD HD CHANNEL NAME SD HD CHANNEL NAME SD HD CHANNEL NAME SD HD CHANNEL NAME WMDO-47 (uniMàs) 24 941 C-SPAN 23 Jewelry TV 200 WDCA Movies! 15/563 795 Washington DC WDCA-20 (MY) 104 942 C-SPAN2 184 Jewelry TV 20 810 Washington DC 269/559 WMPT V-me 287 Daystar 190 Leased Access WMPT-22 (PBS) 201 WDCW Antenna TV 22 812 72 Educational Access 76 Local Origination Annapolis 206 WDCW This TV 73 Educational Access 279 MHz Arirang 275 WNVC Bon-China WDCW-50 (CW) 3 803 MHz CCTV Washington DC 294 The Word Network 74 Educational Access 276 Documentary WPXW-66 (ION) 75 Educational Access 266 WETA Kids 16 813 273 MHz CCTV News Washington DC 265 WETA UK 77 Educational Access WQAW-20 (Azteca 278 MHz CNC World 198/568 WETA-26 (PBS) America) 78 Educational Access 26 800 277 MHz France 24 Washington DC 208 WRC Cozi TV 96 Educational Access WFDC-14 (Univision) 272 MHz NHK World TV 14/561 794 WRC-4 (NBC) Washington DC 4 804 89/283 EVINE Live Washington DC 274 MHz RT WHUT-32 (PBS) 19 802 291 799 EWTN Washington DC 197 WTTG Buzzr 280 MHz teleSUR 69 Gov’t Access WJLA Live Well WTTG-5 (FOX) 205 5 805 271 MHz Worldview Network Washington DC 70 Gov’t Access 268 MPT2 204 WJLA MeTV 207 WUSA Bounce TV 71 -

Channel Listing Fibe Tv Current As of June 18, 2015

CHANNEL LISTING FIBE TV CURRENT AS OF JUNE 18, 2015. $ 95/MO.1 CTV ...................................................................201 MTV HD ........................................................1573 TSN1 HD .......................................................1400 IN A BUNDLE CTV HD ......................................................... 1201 MUCHMUSIC ..............................................570 TSN RADIO 1050 .......................................977 GOOD FROM 41 CTV NEWS CHANNEL.............................501 MUCHMUSIC HD .................................... 1570 TSN RADIO 1290 WINNIPEG ..............979 A CTV NEWS CHANNEL HD ..................1501 N TSN RADIO 990 MONTREAL ............ 980 ABC - EAST ................................................... 221 CTV TWO ......................................................202 NBC ..................................................................220 TSN3 ........................................................ VARIES ABC HD - EAST ..........................................1221 CTV TWO HD ............................................ 1202 NBC HD ........................................................ 1220 TSN3 HD ................................................ VARIES ABORIGINAL VOICES RADIO ............946 E NTV - ST. JOHN’S ......................................212 TSN4 ........................................................ VARIES AMI-AUDIO ....................................................49 E! .........................................................................621 -

BMJ in the News Is a Weekly Digest of Journal Stories, Plus Any Other News

BMJ in the News is a weekly digest of journal stories, plus any other news about the company that has appeared in the national and a selection of English-speaking international media. A total of 20 journals were picked up in the media last week (11-17 May) - our highlights include: ● A study published in BMJ Global Health, revealing that nearly a quarter of a billion people in Africa will catch coronavirus and up to 190,000 could die, generated excellent global coverage, including The Economist, The Guardian, Voice of America and International Business Times. ● Another BMJ Global Health study finding that more than one in four of the most viewed COVID-19 videos on YouTube in spoken English contains misleading or inaccurate information was covered extensively, including BBC News, NBC News, India TV News and ABC News. ● An analysis published in Annals of the Rheumatic Diseases revealing that there were more than 300 million cases of hip and knee osteoarthritis worldwide in 2017 was picked up by MailOnline, Gibraltar Chronicle and OnMedica. BMJ PRESS RELEASES The BMJ | Annals of the Rheumatic Diseases BMJ Global Health | Occupational & Environmental Medicine EXTERNAL PRESS RELEASES Gut OTHER COVERAGE The BMJ | Archives of Disease in Childhood BMJ Case Reports | BMJ Nutrition, Prevention & Health BMJ Open | BMJ Open Diabetes Research & Care British Journal of Ophthalmology | British Journal of Sports Medicine International Journal of Gynecological Cancer Journal of Clinical Pathology -

Comcast Channel Lineup

Corneast FCC Information and Discovsry Rsqusst MB Docket No.1 0·56 Exhibit 3.3 CABLE MVPDs IN COMCAST FOOTPRINT (BY STATE AND COUNTY) ~ SIllle, CGUnty MVPOName UT JUAB TOWN OF LEVAN UT SALT LAKE QWEST BROADBAND SERVICES INC UT SUMMIT ALL WEST/UTAH INC UT TOOELE CENTRAL TELCOM SERVICES LLC UT UTAH CENTRAL TELCOM SERVICES LLC UT UTAH QWEST BROADBAND SERVICES INC UT UTAH SPANISH FORK CITY UT UTAH THE CITY OF PROVO, UTAH VA AUGUSTA DAVI COMMUNICATIONS INC VA AUGUSTA NTELOS MEDIA INC VA PATRICK CITIZENS CABLEVISION. INC VA SPOTSYLVANIA COXCOMINC VA SPOTSYLVANIA VERIZON VIRGINIA. INC VA MECKLENBURG BUGGS ISLAND TELEPHONE COOPERATIVE VA MECKLENBURG CWACABLE VA MECKLENBURG JETBROADBAND VA, LLC VA MECKLENBURG WINDJAMMER COMMUNICATIONS LLC VA CHESTERFIELD VERIZON VIRGINIA, INC. VA GREENSVILLE CWACABLE VA HENRICO VERIZON VIRGINIA, INC. VA KING WILLIAM COX COMMUNICATIONS HAMPTON ROADS LLC VA NORTHUMBERLAND GANS COMMUNICATIONS LP VA POWHATAN VER1Z0N VIRGINIA, INC. VA CARROLL CITIZENS CABLEVISION, INC VA CARROLL TIME WARNER ENTERTAINMENT - ADVANCE/NEWHOUSE PARTNERSHIP VA PULASKI JETBROADBAND VA, LLC ,VA BEDFORD JET BROADBAND VA, LLC VA BEDFORD JETBROADBAND VA, LLC VA BOTETOURT JETBROADBAND VA, LLC VA BOTETOURT NTELOS MEOlA INC. VA CAMPBELL JET BROADBAND VA, LLC VA MONTGOMERY JETBROADBAND VA, LLC VA PITTSYLVANIA JETBROADBAND VA, LLC VA PITISYLVANIA SHOEMAKER JOHN P VA ROANOKE COXCOMINC VA DICKENSON CABLE PLUS INC VA DICKENSON FLEMING, DENNIS VA DICKENSON JMtES VERMILLION/LEBANON TV VA DICKENSON SCOTT TELECOM & ELECTRONICS INC VA DICKENSON TECHCORE CONSULTANTS III INC VA LEE ZITO MEDIA, LP VA TAZEWELL BISHOP lV CLUB INC VA TAZEWELL JETBROADBAND WV, LLC VA TAZEWELL TIME WARNER NY CABLE LLC VA WASHINGTON CITY OF BRISTOL VA WASHINGTON MARCUS CABLE ASSOCIATES LLC VA WASHINGTON TECHCORE CONSULTANTS 1II1NC VA WASHINGTON ZITO MEDIA, LP.