Proceedings 2014 Download

Total Page:16

File Type:pdf, Size:1020Kb

Recommended publications

Medical Devices

FRAUNHOFER INSTITUTE FOR EXPERIMENTAL SOFTWARE ENGINEERING IESE MEDICAL DEVICES Software is a part of our lives. Embedded into everyday equipment, into living and working environments or modern means of transportation, countless processors and controllers make our lives simpler, safer, and more pleasant. We help organizations to develop software systems that are dependable in every aspect, and empirically validate the necessary processes, methods, and techniques, emphasizing engineering-style principles such as measurability and transparency. The Fraunhofer Institute for Experimental Software Engineering IESE in Kaiserslautern has been one of the world’s leading research institutes in the area of software and systems engineering for more than 20 years. Its researchers have contributed their expertise in the areas of Processes, Architecture, Security, Safety, Requirements Engineering, and User Experience in more than 1,200 projects. Under the leadership of Prof. Peter Liggesmeyer, Fraunhofer IESE is working on innovative topics related to digital ecosystems, such as Industrie 4.0, Big Data, and Cyber-Security. As a technology and innovation partner for the digital transformation in the areas of Autonomous & Cyber-Physical Systems and Digital Services, the institute’s research focuses on the interaction between embedded systems and information systems in digital ecosystems. Fraunhofer IESE is one of 72 institutes and research units of the Fraunhofer-Gesellschaft. Together they have a major impact on shaping applied research in Europe and contribute to Germany’s competitiveness in international markets. Contact: Ralf Kalmar, [email protected], Phone: +49 631 6800-1603, www.iese.fraunhofer.de. Fraunhofer Institute for Experimental Software Engineering IESE, Fraunhofer-Platz 1, 67663 Kaiserslautern, Germany.

ECSO State of the Art Syllabus V1 ABOUT ECSO

STATE OF THE ART SYLLABUS Overview of existing Cybersecurity standards and certification schemes WG1 I Standardisation, certification, labelling and supply chain management JUNE 2017 ECSO State of the Art Syllabus v1 ABOUT ECSO The European Cyber Security Organisation (ECSO) ASBL is a fully self-financed non-for-profit organisation under the Belgian law, established in June 2016. ECSO represents the contractual counterpart to the European Commission for the implementation of the Cyber Security contractual Public-Private Partnership (cPPP). ECSO members include a wide variety of stakeholders across EU Member States, EEA / EFTA Countries and H2020 associated countries, such as large companies, SMEs and Start-ups, research centres, universities, end-users, operators, clusters and association as well as European Member State’s local, regional and national administrations. More information about ECSO and its work can be found at www.ecs-org.eu. Contact For queries in relation to this document, please use [email protected]. For media enquiries about this document, please use [email protected]. Disclaimer The document was intended for reference purposes by ECSO WG1 and was allowed to be distributed outside ECSO. Despite the authors’ best efforts, no guarantee is given that the information in this document is complete and accurate. Readers of this document are encouraged to send any missing information or corrections to the ECSO WG1, please use [email protected]. This document integrates the contributions received from ECSO members until April 2017. Cybersecurity is a very dynamic field. As a result, standards and schemes for assessing Cybersecurity are being developed and updated frequently. -

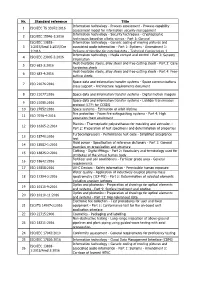

Nr. Standard Reference Title 1 ISO/IEC TS 33072:2016 Information Technology

| Nr. | Standard reference | Title |
|---|---|---|
| 1 | ISO/IEC TS 33072:2016 | Information technology - Process assessment - Process capability assessment model for information security management |
| 2 | ISO/IEC 15946-1:2016 | Information technology - Security techniques - Cryptographic techniques based on elliptic curves - Part 1: General |
| 3 | ISO/IEC 13818-1:2015/Amd 1:2015/Cor 1:2016 | Information technology - Generic coding of moving pictures and associated audio information - Part 1: Systems - Amendment 1: Delivery of timeline for external data - Technical Corrigendum 1 |
| 4 | ISO/IEC 23005-3:2016 | Information technology - Media context and control - Part 3: Sensory information |
| 5 | ISO 683-3:2016 | Heat-treatable steels, alloy steels and free-cutting steels - Part 3: Case-hardening steels |
| 6 | ISO 683-4:2016 | Heat-treatable steels, alloy steels and free-cutting steels - Part 4: Free-cutting steels |
| 7 | ISO 21076:2016 | Space data and information transfer systems - Space communications cross support - Architecture requirements document |
| 8 | ISO 21077:2016 | Space data and information transfer systems - Digital motion imagery |
| 9 | ISO 21080:2016 | Space data and information transfer systems - Licklider transmission protocol (LTP) for CCSDS |
| 10 | ISO 27852:2016 | Space systems - Estimation of orbit lifetime |
| 11 | ISO 7076-4:2016 | Fire protection - Foam fire extinguishing systems - Part 4: High expansion foam equipment |
| 12 | ISO 16365-2:2014 | Plastics - Thermoplastic polyurethanes for moulding and extrusion - Part 2: Preparation of test specimens and determination of properties |
| | | Turbocompressors - Performance test |

Thesis and Dissertation Template

DEVELOPMENT OF A QUALITY MANAGEMENT ASSESSMENT TOOL TO EVALUATE SOFTWARE USING SOFTWARE QUALITY MANAGEMENT BEST PRACTICES Item Type Other Authors Erukulapati, Kishore Publisher Cunningham Memorial library, Terre Haute,Indiana State University Download date 23/09/2021 23:31:43 Link to Item http://hdl.handle.net/10484/12347 DEVELOPMENT OF A QUALITY MANAGEMENT ASSESSMENT TOOL TO EVALUATE SOFTWARE USING SOFTWARE QUALITY MANAGEMENT BEST PRACTICES _______________________ A Dissertation Presented to The College of Graduate and Professional Studies College of Technology Indiana State University Terre Haute, Indiana ______________________ In Partial Fulfillment of the Requirements for the Degree Doctor of Philosophy _______________________ by Kishore Erukulapati December 2017 © Kishore Erukulapati 2017 Keywords: Software Quality, Technology Management, Software Quality Management, Bibliometric techniques, Best Practices VITA Kishore Erukulapati EDUCATION Ph.D. Indiana State University (ISU), Terre Haute, IN. Technology Management, 2017. M.S. California State University (CSU), Dominguez Hills, CA. Quality Assurance, 2011. B.Tech. National Institute of Technology (NIT), Calicut, Kerala, India. Computer Science and Engineering, 1996. INDUSTRIAL EXPERIENCE 2011-Present Hawaii Medical Service Association. Position: Senior IT Consultant. 2008-2010 Telvent. Position: Operations Manager. 2008-2008 ACG Tech Systems. Position: Test Manager. 2000-2008 IBM Global Services. Position: Project Manager. 1996-2000 Tata Consultancy Services. Position: System Analyst. PUBLICATIONS/PRESENTATIONS Erukulapati, K., & Sinn, J. W. (2017). Better Intelligence. Quality Progress, 50 (9), 34-40. Erukulapati, K. (2016). What Can Software Quality Engineering Contribute to Cybersecurity?. Software Quality Professional, 19(1). Erukulapati, K. (2015, Dec 2). Information Technology in Healthcare: Current Status and Future Perspectives. 
Presented at IEEE Hawaii Chapter - Computer Society Meeting, Honolulu, Hawaii. -

Quality Factors for Mobile Medical Apps

Proceedings of the Central European Conference on Information and Intelligent Systems. Quality Factors for Mobile Medical Apps. Nadica Hrgarek Lechner, MED-EL Elektromedizinische Geräte GmbH, Fürstenweg 77, 6020 Innsbruck, Austria, [email protected]; Vjeran Strahonja, Faculty of Organization and Informatics, Pavlinska 2, 42000 Varaždin, Croatia, [email protected]. Abstract. The increased use of mobile phones, smartphones, tablets, and connected wearable devices has fostered the development of mobile medical applications (apps) in the last few years. Mobile medical apps are developed to extend or replace some existing applications that run on a desktop or laptop computer, or on a remote server. If mobile apps are used, for example, to diagnose, mitigate, treat, cure, or prevent human diseases, patient safety is at potential risk if the apps do not function as intended. Therefore, such apps need to be cleared, approved, or otherwise regulated in order to protect public health. There are many papers that have been published about the quality attributes of mobile apps. [...] less in healthcare. According to a report from the business consulting firm Grand Review Research, Inc. (2016), the global connected health and wellness devices market is expected to reach USD 612.0 billion by 2024. The results of the sixth annual study on mobile health app publishing done by research2guidance (2016) are based on 2,600 plus respondents who participated in the online survey. As displayed in Fig. 1, remote monitoring, diagnostic, and medical condition management apps are the top three mHealth app types offering the highest market potential in the next five years. In 2016, 32% of total respondents predicted remote monitoring apps as the app category

Relevant Norms and Standards

Appendix A Relevant norms and standards. A.1 A Short Overview of the Most Relevant Process Standards. There is a huge number of different process standards, and Fig. A.1 contains an overview of the most relevant such standards as covered in this book, showing the topics covered. A more extensive list of the relevant standards will be provided in the following section below. [Fig. A.1 Overview of the most important standards for software processes] © Springer Nature Switzerland AG 2018, R. Kneuper, Software Processes and Life Cycle Models, https://doi.org/10.1007/978-3-319-98845-0. A.2 ISO and IEC Standards. The International Organization for Standardization (ISO) is the main international standard-setting organisation, working with representatives from many national standard-setting organisations. Standards referring to electrical, electronic and related technologies, including software, are often published jointly with its sister organisation, the International Electrotechnical Commission (IEC), but IEC also publishes a number of standards on their own.

FI-STAR Deliverable D1.1 Has Already Discussed Legal Requirements Related to Data Security Breach Notifications

Ref. Ares(2014)706091 - 13/03/2014 Deliverable D2.1 Standardisation and Certification requirements. Editor: Franck Le Gall, easy global markets. Deliverable nature: Report (R). Dissemination level (Confidentiality): Public (PU). Contractual delivery date: Dec. 2013. Actual delivery date: Jan. 2014. Version: 1.1. Total number of pages: 74. Keywords: Standardisation, certification. Abstract: This document details the technical requirements resulting from existing standards and prepares future certification process. FI-STAR FP7-ICT-604691 D1.2 Standardisation and Certification requirements. Disclaimer: This document contains material, which is the copyright of certain FI-STAR consortium parties, and may not be reproduced or copied without permission. All FI-STAR consortium parties have agreed to full publication of this document. The commercial use of any information contained in this document may require a license from the proprietor of that information. Neither the FI-STAR consortium as a whole, nor a certain part of the FI-STAR consortium, warrant that the information contained in this document is capable of use, nor that use of the information is free from risk, accepting no liability for loss or damage suffered by any person using this information. This project has received funding from the European Union’s Seventh Framework Programme for research, technological development and demonstration under grant agreement no 604691. Impressum [Full project title] Future Internet Social and Technological Alignment Research [Short project

Standards Action Layout SAV3645.Fp5

PUBLISHED WEEKLY BY THE AMERICAN NATIONAL STANDARDS INSTITUTE, 25 West 43rd Street, NY, NY 10036. VOL. 36, #45, November 11, 2005. Contents. American National Standards: Call for Comment on Standards Proposals; Call for Comment Contact Information; Initiation of Canvasses; Final Actions; Project Initiation Notification System (PINS). International Standards: ISO Draft Standards; ISO Newly Published Standards. Proposed Foreign Government Regulations. Information Concerning. Standards Action is now available via the World Wide Web. For your convenience Standards Action can now be downloaded from the following web address: http://www.ansi.org/news_publications/periodicals/standards_action/standards_action.aspx?menuid=7 American National Standards. Call for comment on proposals listed. This section solicits public comments on proposed draft new American National Standards, including the national adoption of Ordering Instructions

Open Source Software Security a Research Summary December 2020

Open Source Software Security: A research summary, December 2020. Table of Contents: Executive Summary; Welcome; Introduction; Background; Systems-level Approach; Component-level approach; Research Topics; Research Overview; National Telecoms and Information Administration (NTIA); SAFECode.org; OWASP (https://owasp.org); OASIS Open Standards, Open Source (OASIS)

Best Practices for Dealing with Offshore Software Development

Best Practices for Dealing with Offshore Software Development, by David A. Vogel, Ph.D. and Jill E. Connolly, Intertech Engineering Associates, Inc., as published in Handbook of Business Strategy 2005. Is cost the only benefit to offshore outsourcing, or are there others? Do you give up anything for the cost savings? The purpose of this article is to examine why US companies outsource software development offshore, and to determine whether the benefits of offshore outsourcing outweigh the drawbacks. Offshore outsourcing (offshoring) of software development has been an increasingly popular trend in recent years. The cost benefits are easy to understand when you consider that the average annual salary for an engineer in the USA is $70,000 in 2004, compared with a $13,580 average salary for an engineer in India, according to Electronic Business magazine. Is offshoring worth it? Are the cost savings as large as they seem? Is quality of the software sacrificed [...] Advantages of Offshore Outsourcing. For US companies to endure the disadvantages of offshoring listed below, the advantages need to be substantial. Advantages reported by US companies that have experience with offshoring: • The most powerful attraction is significant cost savings. US companies are finding that offshoring their software development saves them money because of lower labor costs. For example, Otis Elevator Company [...] COMPANY PROFILE: Intertech Engineering Associates, Inc. Address: 100 Lowder Brook Avenue, Suite 2500, Westwood, MA 02090. www.inea.com - (781) 801-1100. Industry: (Electro)Medical

Nr. Standard Reference Title 1 IEC 60317-4:2015

| Nr. | Standard reference | Title |
|---|---|---|
| 1 | IEC 60317-4:2015 | Specifications for particular types of winding wires - Part 4: Solderable polyurethane enamelled round copper wire, class 130 |
| 2 | IEC 60320-1:2015 | Appliance couplers for household and similar general purposes - Part 1: General requirements |
| 3 | IEC 60364-4-44:2007/AMD1:2015 PRV | Amendment 1 - Low-voltage electrical installations – Part 4-44: Protection for safety – Protection against voltage disturbances and electromagnetic disturbances |
| 4 | IEC 60364-5-53:2001/AMD2:2015 PRV | Amendment 2 - Electrical installations of buildings – Part 5-53: Selection and erection of electrical equipment – Isolation, switching and control |
| 5 | IEC 62271-200:2011/COR1:2015 | Corrigendum 1 - High-voltage switchgear and controlgear - Part 200: AC metal-enclosed switchgear and controlgear for rated voltages above 1 kV and up to and including 52 kV |
| 6 | IEC/IEEE 62271-37-013:2015 PRV | High-voltage switchgear and controlgear – Part 37-013: Alternating-current generator circuit-breakers |
| 7 | IEC 62471-5:2015 | Photobiological safety of lamps and lamp systems - Part 5: Image projectors |
| 8 | IEC 62838:2015 PRV | LEDsi lamps for general lighting services with supply voltages not exceeding 50 V a.c. r.m.s. or 120 V ripple free d.c. – Safety specifications |
| 9 | IEC 62560:2011/AMD1:2015/COR1:2015 | Corrigendum 1 - Amendment 1 - Self-ballasted LED-lamps for general lighting services by voltages >50 V - Safety specifications |
| 10 | IEC 62870:2015 PRV | Electrical installations for lighting and beaconing of aerodromes – Safety secondary circuits in series circuits |

Zephyr Overview 091520.Pdf

Zephyr Project: Unlocking Innovation with an Open Source RTOS. Kate Stewart, @_kate_stewart, [email protected]. Copyright © 2020 The Linux Foundation. Made available under Attribution-ShareAlike 4.0 International. Zephyr Project: Open Source, RTOS, Connected, Embedded. Fits where Linux is too big. • Open source real time operating system • Vibrant Community participation • Built with safety and security in mind • Cross-architecture with broad SoC and development board support • Vendor Neutral governance • Permissively licensed - Apache 2.0 • Complete, fully integrated, highly configurable, modular for flexibility • Product development ready using LTS includes security updates • Certification ready with Auditable [Diagram: Zephyr OS layers: 3rd Party Libraries, Application Services, OS Services, Kernel, HAL] Products Running Zephyr Today Grush Gaming hereO Proglove Rigado IoT Gateway Distancer Toothbrush Smartwatch Ellcie-Healthy Smart Intellinium Safety Anicare Reindeer GNARBOX 2.0 SSD Adero Tracking Devices Sentrius Connected Eyewear Shoes Tracker GEPS Point Home Alarm RUUVI Node HereO Core Box Safety Pod Zephyr Supported Hardware Architectures Cortex-M, Cortex-R & Cortex-A X86 & x86_64 32 & 64 bit Xtensa Coming soon: Native Execution on a POSIX-compliant OS • Build Zephyr as native Linux application • Enable large scale simulation of network or Bluetooth tests without involving HW • Improve test coverage of application layers • Use any native tools available for debugging and profiling • Develop GUI applications entirely on the desktop • Optionally connect