ANIMAL-FARM: an Extensible Framework for Algorithm Visualization

Total pages: 16

File type: PDF, size: 1020 Kb

Recommended publications

Ethereal Developer's Guide Draft 0.0.2 (15684) for Ethereal 0.10.11

Ethereal Developer's Guide: Draft 0.0.2 (15684) for Ethereal 0.10.11, by Ulf Lamping. Copyright © 2004-2005 Ulf Lamping. Permission is granted to copy, distribute and/or modify this document under the terms of the GNU General Public License, Version 2 or any later version published by the Free Software Foundation. All logos and trademarks in this document are property of their respective owners. Table of Contents: Preface (1. Foreword; 2. Who should read this document?; 3. Acknowledgements; 4. About this document; 5. Where to get the latest copy of this document?; 6. Providing feedback about this document). I. Ethereal Build Environment (1. Introduction).

Techniques for Visualization and Interaction in Software Architecture Optimization

Institute of Software Technology, Reliable Software Systems, University of Stuttgart, Universitätsstraße 38, D–70569 Stuttgart. Master's thesis: Techniques for Visualization and Interaction in Software Architecture Optimization. Sebastian Frank. Course of Study: Softwaretechnik. Examiner: Dr.-Ing. André van Hoorn. Supervisors: Dr.-Ing. André van Hoorn; Dr. J. Andrés Díaz-Pace, UNICEN, ARG; Dr. Santiago Vidal, UNICEN, ARG. Commenced: March 25, 2019. Completed: September 25, 2019. CR-Classification: D.2.11, H.5.2, H.1.2. Abstract: Software architecture optimization aims at improving the architecture of software systems with regard to a set of quality attributes, e.g., performance, reliability, and modifiability. However, particular tasks in the optimization process are hard to automate. For this reason, architects have to participate in the optimization process, e.g., by making trade-offs and selecting acceptable architectural proposals. The existing software architecture optimization approaches only offer limited support in assisting architects in the necessary tasks by visualizing the architectural proposals. In the best case, these approaches provide very basic visualizations, but often results are only delivered in textual form, which does not allow for an efficient assessment by humans. Hence, this work investigates strategies and techniques to assist architects in specific use cases of software architecture optimization through visualization and interaction. Based on this, an approach to assist architects in these use cases is proposed. A prototype of the proposed approach has been implemented. Domain experts solved tasks based on two case studies. The results show that the approach can assist architects in some of the typical use cases of the domain. Conducted time measurements indicate that several hundred architectural proposals can be handled.

Multi-Touch Table User Interfaces for Co-Located Collaborative Software Visualization

Multi-touch Table User Interfaces for Co-located Collaborative Software Visualization. Craig Anslow, School of Engineering and Computer Science, Victoria University of Wellington, New Zealand. Abstract: Most software visualization systems and tools are designed from a single-user perspective and are bound to the desktop, IDEs, and the web. Few tools are designed with sufficient support for the social aspects of understanding software such as collaboration, communication, and awareness. This research aims at supporting co-located collaborative software analysis using software visualization techniques and multi-touch tables. The research will be conducted via qualitative user experiments which will inform the design of collaborative software visualization applications and further our understanding of how software developers work together with multi-touch user interfaces. ACM Classification: H1.2 [User/Machine Systems]: Human Factors; H5.2 [Information interfaces and presentation]: User Interfaces. Introduction (excerpt): ... Integrated Development Environments (IDE) like Eclipse, and the web. These design decisions do not allow users in a co-located environment (within the same room) to easily collaborate, communicate, or be aware of each other's activities to analyse software [19]. Multi-touch user interfaces are an interactive technique that allows single or multiple users to collaboratively control graphical displays with more than one finger, mouse pointer, or tangible object on various kinds of surfaces and devices. The goal of this research is to investigate whether multi-touch interaction techniques are more effective for co-located collaborative software visualization than existing single user desktop interaction techniques. The research will be conducted by user experiments to observe how users interact and collaborate with a multi-touch software visualization ap...

A.5.1. Linux Programming and the GNU Toolchain

Making the Transition to Linux: A Guide to the Linux Command Line Interface for Students. Joshua Glatt. Copyright © 2008 Joshua Glatt. Revision History: Revision 1.31, 14 Sept 2008, jg: Various small but useful changes, preparing to revise section on vi. Revision 1.30, 10 Sept 2008, jg: Revised further reading and suggestions, other revisions. Revision 1.20, 27 Aug 2008, jg: Revised first chapter, other revisions. Revision 1.10, 20 Aug 2008, jg: First major revision. Revision 1.00, 11 Aug 2008, jg: First official release (w00t). Revision 0.95, 06 Aug 2008, jg: Second beta release. Revision 0.90, 01 Aug 2008, jg: First beta release. License: This document is licensed under a Creative Commons Attribution-Noncommercial-Share Alike 3.0 United States License [http://creativecommons.org/licenses/by-nc-sa/3.0/us/]. Legal Notice: This document is distributed in the hope that it will be useful, but it is provided “as is” without express or implied warranty of any kind; without even the implied warranties of merchantability or fitness for a particular purpose. Although the author makes every effort to make this document as complete and as accurate as possible, the author assumes no responsibility for errors or omissions, nor does the author assume any liability whatsoever for incidental or consequential damages in connection with or arising out of the use of the information contained in this document. The author provides links to external websites for informational purposes only and is not responsible for the content of those websites.

A Systematic Literature Review of Software Visualization Evaluation

The Journal of Systems & Software 144 (2018) 165–180. Contents lists available at ScienceDirect. Journal homepage: www.elsevier.com/locate/jss. A systematic literature review of software visualization evaluation. L. Merino (a,*), M. Ghafari (a), C. Anslow (b), O. Nierstrasz (a). (a) Software Composition Group, University of Bern, Switzerland; (b) School of Engineering and Computer Science, Victoria University of Wellington, New Zealand. Keywords: Software visualisation, Evaluation, Literature review. Abstract. Context: Software visualizations can help developers to analyze multiple aspects of complex software systems, but their effectiveness is often uncertain due to the lack of evaluation guidelines. Objective: We identify common problems in the evaluation of software visualizations with the goal of formulating guidelines to improve future evaluations. Method: We review the complete literature body of 387 full papers published in the SOFTVIS/VISSOFT conferences, and study 181 of those from which we could extract evaluation strategies, data collection methods, and other aspects of the evaluation. Results: Of the proposed software visualization approaches, 62% lack a strong evaluation. We argue that an effective software visualization should not only boost time and correctness but also recollection, usability, engagement, and other emotions. Conclusion: We call on researchers proposing new software visualizations to provide evidence of their effectiveness by conducting thorough (i) case studies for approaches that must be studied in situ, and when variables can be controlled, (ii) experiments with randomly selected participants of the target audience and real-world open source software systems to promote reproducibility and replicability. We present guidelines to increase the evidence of the effectiveness of software visualization approaches, thus improving their adoption rate.

Visualization of the Static Aspects of Software: a Survey Pierre Caserta, Olivier Zendra

Visualization of the Static aspects of Software: a survey. Pierre Caserta, Olivier Zendra. To cite this version: Pierre Caserta, Olivier Zendra. Visualization of the Static aspects of Software: a survey. IEEE Transactions on Visualization and Computer Graphics, Institute of Electrical and Electronics Engineers, 2011, 17 (7), pp.913-933. 10.1109/TVCG.2010.110. inria-00546158v2. HAL Id: inria-00546158, https://hal.inria.fr/inria-00546158v2. Submitted on 28 Oct 2011. HAL is a multi-disciplinary open access archive for the deposit and dissemination of scientific research documents, whether they are published or not. The documents may come from teaching and research institutions in France or abroad, or from public or private research centers. Abstract: Software is usually complex and always intangible. In practice, the development and maintenance processes are time-consuming activities mainly because software complexity is difficult to manage. Graphical visualization of software has the potential to result in a better and faster understanding of its design and functionality, thus saving time and providing valuable information to improve its quality. However, visualizing software is not an easy task because of the huge amount of information comprised in the software.

Hands on #1 Overview

Hands On #1. Overview. Part 1: Starting and familiarizing. Where is your installation? Getting the example programs. Running novice examples: N01, N03, N02 … Part 2: Looking into Geant4, trying it out with exercises (see Wednesday's hands on). Examine cross sections. Simulate depth dose curve. Compute and plot Bragg curve. Addenda: other examples, histogramming. Your Geant4 installation. VMware Player users under Windows or Mac OS: all files downloaded from http://geant4.in2p3.fr/cenbg/vmware.html; in principle, no installation needed; all your peripherals should be operational (WiFi, disks,…). Installation from beginning: CERN link http://geant4.web.cern.ch/geant4/support/download.shtml; SLAC link http://geant4.slac.stanford.edu/installation/; User forum http://geant4-hn.slac.stanford.edu:5090/HyperNews/public/get/installconfig.html; Installation guide http://geant4.web.cern.ch/geant4/UserDocumentation/UsersGuides/InstallationGuide/html/index.html. This Hands On will help you check that your installation of Geant4 is correct. If not, we can try to help during this Hands On… Access your Geant4 installation for VMware users: Start the VMware player software. Start your VMware machine. Log onto the VMware machine (username: local1, password: local1). Open a terminal (right click on desktop with mouse). You are now working under Scientific Linux 4.2 with gcc 3.4.4. By default on your Windows PC, the directory /mnt/hgfs/echanges is a link to C:\. Tips for VMware users (1/2): Geant4 8.3 installation path: /usr/local/geant4; you need root privileges

Source Level Debugging GDB: Gnu Debugger Starting/Exiting GDB Stopping and Continuing the Execution of Your Program In

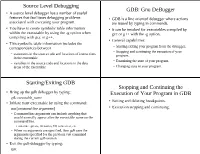

Source Level Debugging
● A source level debugger has a number of useful features that facilitate debugging problems associated with executing your program.
● You have to create symbolic table information within the executable by using the -g option when compiling with gcc or g++.
● This symbolic table information includes the correspondences between
– statements in the source code and locations of instructions in the executable
– variables in the source code and locations in the data areas of the executable
GDB: Gnu DeBugger
● GDB is a line oriented debugger where actions are issued by typing in commands.
● It can be invoked for executables compiled by gcc or g++ with the -g option.
● General capabilities:
– Starting/exiting your program from the debugger.
– Stopping and continuing the execution of your program.
– Examining the state of your program.
– Changing state in your program.
Starting/Exiting GDB
● Bring up the gdb debugger by typing: gdb executable_name
● Initiate your executable by using the command: run [command-line arguments]
– Command line arguments can include anything that would normally appear after the executable name on the command line: run-time options, filenames, I/O redirection, etc.
– When no arguments are specified, then gdb uses the arguments specified for the previous run command during the current gdb session.
● Exit the gdb debugger by typing: quit
Stopping and Continuing the Execution of Your Program in GDB
● Setting and deleting breakpoints.
● Execution stepping and continuing.
Setting and Deleting Breakpoints
● Can set a breakpoint to stop:
– at a particular source line number or function
– when a specified condition occurs
● General form.
Examples of Setting and Deleting Breakpoints
(gdb) break # sets breakpoint at current line number
(gdb) break 74 # sets breakpoint at line 74 in the current file

Evocells - a Treemap Layout Algorithm for Evolving Tree Data

EvoCells - A Treemap Layout Algorithm for Evolving Tree Data. Willy Scheibel, Christopher Weyand and Jürgen Döllner. Hasso Plattner Institute, Faculty of Digital Engineering, University of Potsdam, Potsdam, Germany. Keywords: Treemap Layout Algorithm, Time-varying Tree-structured Data, Treemap Layout Metrics. Abstract: We propose the rectangular treemap layout algorithm EvoCells that maps changes in tree-structured data onto an initial treemap layout. Changes in topology and node weights are mapped to insertion, removal, growth, and shrinkage of the layout rectangles. Thereby, rectangles displace their neighbors and stretch their enclosing rectangles with a run-time complexity of O(n log n). An evaluation using layout stability metrics on the open source ElasticSearch software system suggests EvoCells as a valid alternative for stable treemap layouting. 1 INTRODUCTION: Tree-structured data is subject to constant change. In order to manage change, understanding the evolution is important. An often used tool to communicate structure and characteristics of tree-structured data is the treemap (Shneiderman, 1992). Most layouts are based on a recursive partition of a given initial 2D rectangular area proportional to the summed weights of the nodes. Besides topology and associated weights, additional visual variables can be used (Carpendale, 2003), including extrusion of the 2D layout to 3D cuboids. The restricted use of the third dimension is reflected by the term 2.5D treemaps (Limberger et al., 2017b). When used over time, treemap layouts are faced by their inherent instability regarding even minor changes to the nodes' weights used for the spatial layouting, impeding the creation and use of a mental map (Misue et al., 1995). ... regard to algorithmic complexity and layout stability, together with a case-study in the domain of software analytics based on the software system ElasticSearch. [Figure: treemap panels labeled "Initial State" and "Next State"]
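
The recursive partition mentioned in the excerpt above (splitting a rectangle in proportion to the summed weights of the nodes) can be illustrated with a minimal sketch. This is the classic slice-and-dice scheme, not the EvoCells algorithm itself; the names `Node`, `total_weight`, and `slice_and_dice` are hypothetical and chosen only for this illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """Hypothetical tree node; only leaf weights are used."""
    name: str
    weight: float = 0.0
    children: list = field(default_factory=list)


def total_weight(node):
    """Summed weight of all leaves below (or at) this node."""
    if not node.children:
        return node.weight
    return sum(total_weight(child) for child in node.children)


def slice_and_dice(node, x, y, w, h, depth=0, out=None):
    """Assign each node a rectangle (x, y, width, height).

    Children split their parent's rectangle in proportion to their
    summed weights; the split axis alternates with tree depth.
    """
    if out is None:
        out = {}
    out[node.name] = (x, y, w, h)
    total = total_weight(node)
    if not node.children or total == 0:
        return out
    offset = 0.0  # fraction of the parent already consumed
    for child in node.children:
        share = total_weight(child) / total
        if depth % 2 == 0:
            # even depth: cut along the x axis
            slice_and_dice(child, x + offset * w, y, share * w, h, depth + 1, out)
        else:
            # odd depth: cut along the y axis
            slice_and_dice(child, x, y + offset * h, w, share * h, depth + 1, out)
        offset += share
    return out


tree = Node("root", children=[
    Node("a", weight=4),
    Node("b", children=[Node("b1", weight=3), Node("b2", weight=1)]),
])
for name, rect in slice_and_dice(tree, 0.0, 0.0, 8.0, 4.0).items():
    print(name, rect)
```

In this sketch every leaf's area is proportional to its weight, but a small weight change shifts all later siblings, which is the kind of layout instability the excerpt describes.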

Appendix B Development Tools

Appendix B: Development Tools. Problem Statement: Although more proprietary packages are available, open source packages are so abundant, giving their users unprecedented flexibility and freedom of choice, that most users can find an application that exactly meets their needs. However, it is also this pool of choices that drives individuals to hold a wide range of preferences and biases. As a result, Linux/open source packages (and software in general) are flooded with religious wars over programming languages, editors, licences, etc. Some are worthwhile debates, others are meaningless flamewars. Throughout Appendix B and the next chapter, Appendix C, we intend to summarize a few essential tools (from our point of view) that may help readers learn the aspects and how-tos of (1) development tools including programming, debugging, and maintaining, and (2) network experiment tools including name-addressing, perimeter probing, traffic-monitoring, benchmarking, simulating/emulating, and finally hacking. Section B.1 guides readers to begin the development journey with programming tools. A good first step would be writing the first piece of code using the Vi IMproved (vim) text editor, then compiling it to binary executables with the GNU C compiler (gcc), and furthermore offloading some repetitive compiling steps to the make utility. The old 80/20 rule still applies to programming: 80% of your code comes from 20% of your effort, leaving the other 80% of your effort to go into debugging your program. Therefore you need debugging tools, as discussed in Section 1.2, including source-level debugging with the GNU Debugger (gdb), a GUI-style approach with the Data Display Debugger (ddd), and debugging the kernel itself with the remote Kernel GNU Debugger (kgdb).

Storytelling and Visualization: a Survey

Storytelling and Visualization: A Survey. Chao Tong (1), Richard Roberts (1), Robert S. Laramee (1), Kodzo Wegba (2), Aidong Lu (2), Yun Wang (3), Huamin Qu (3), Qiong Luo (3) and Xiaojuan Ma (3). (1) Visual and Interactive Computing Group, Swansea University; (2) Department of Computer Science, University of North Carolina at Charlotte; (3) Department of Computer Science and Engineering, Hong Kong University of Science and Technology. Keywords: Storytelling, Narrative, Information visualization, Scientific visualization. Abstract: Throughout history, storytelling has been an effective way of conveying information and knowledge. In the field of visualization, storytelling is rapidly gaining momentum and evolving cutting-edge techniques that enhance understanding. Many communities have commented on the importance of storytelling in data visualization. Storytellers tend to be integrating complex visualizations into their narratives in growing numbers. In this paper, we present a survey of storytelling literature in visualization and present an overview of the common and important elements in storytelling visualization. We also describe the challenges in this field as well as a novel classification of the literature on storytelling in visualization. Our classification scheme highlights the open and unsolved problems in this field as well as the more mature storytelling sub-fields. The benefits offer a concise overview and a starting point into this rapidly evolving research trend and provide a deeper understanding of this topic. 1 MOTIVATION: “We believe in the power of science, exploration, and storytelling to change the world” - Susan Goldberg, Editor in Chief of National Geographic Maga... ...cation of the literature on storytelling in visualization. Our classification highlights both mature and unsolved problems in this area. The benefit is a concise overview and valuable starting point into this rapidly growing and evolving research trend.

Analyzing Feature Implementation by Visual Exploration of Architecturally

Analyzing Feature Implementation by Visual Exploration of Architecturally-Embedded Call-Graphs. Johannes Bohnet, Jürgen Döllner. University of Potsdam, Hasso-Plattner-Institute, Prof.-Dr.-Helmert-Str. 2-3, 14482 Potsdam, Germany. ABSTRACT: Maintenance, reengineering, and refactoring of large and complex software systems are commonly based on modifications and enhancements related to features. Before developers can modify feature functionality they have to locate the relevant code components and understand the components’ interaction. In this paper, we present a prototype tool for analyzing feature implementation of large C/C++ software systems by visual exploration of dynamically extracted call relations between code components. The component interaction can be analyzed on various abstraction levels ranging from function interaction up to interaction of the system with shared libraries of the operating system. 1. INTRODUCTION: As stated in [32] “year after year the lion's share of effort goes into modifying and extending preexisting systems, about which we know very little”. Requests for software changes are often expressed by end users in terms of features, i.e. an observable behavior that is triggered by user interaction. Requesting feature changes concerns bug fixes or enhancements of feature functionality and is a key concept for maintaining, reengineering, and refactoring large and complex software systems. To implement these new requirements, software developers have to translate the feature change requests to changes in code components and their interaction behavior. The term component is used here in a more