Creativity in Computer Science. in J

Total Page:16

File Type:pdf, Size:1020Kb


Recommended publications


Historical Perspective and Further Reading 162.E1

2.21 Historical Perspective and Further Reading This section surveys the history of instruction set architectures over time, and we give a short history of programming languages and compilers. ISAs include accumulator architectures, general-purpose register architectures, stack architectures, and a brief history of ARMv7 and the x86. We also review the controversial subjects of high-level-language computer architectures and reduced instruction set computer architectures. The history of programming languages includes Fortran, Lisp, Algol, C, Cobol, Pascal, Simula, Smalltalk, C++, and Java, and the history of compilers includes the key milestones and the pioneers who achieved them. Accumulator Architectures Hardware was precious in the earliest stored-program computers. Consequently, computer pioneers could not afford the number of registers found in today’s architectures. In fact, these architectures had a single register for arithmetic instructions. Since all operations would accumulate in one register, it was called the accumulator, and this style of instruction set is given the same name. For example, EDSAC in 1949 had a single accumulator. The three-operand format of RISC-V suggests that a single register is at least two registers shy of our needs. Having the accumulator as both a source operand and the destination of the operation fills part of the shortfall, but it still leaves us one operand short. That final operand is found in memory. (Margin note: accumulator. Archaic term for register. On-line use of it as a synonym for “register” is a fairly reliable indication that the user has been around quite a
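The excerpt's point, that the accumulator serves as one source and as the destination while the remaining operand comes from memory, can be illustrated with a toy interpreter. This is a minimal sketch only: the opcode names (`LOAD`, `ADD`, `SUB`, `STORE`), the function `run_accumulator`, and the dictionary used as memory are hypothetical illustrations, not EDSAC's actual instruction set.

```python
def run_accumulator(program, memory):
    """Run (opcode, address) pairs on a hypothetical one-register machine.

    Every arithmetic instruction reads the accumulator and one memory
    operand, then writes its result back into the accumulator.
    """
    acc = 0  # the single arithmetic register
    for op, addr in program:
        if op == "LOAD":      # acc <- memory[addr]
            acc = memory[addr]
        elif op == "ADD":     # acc <- acc + memory[addr]
            acc = acc + memory[addr]
        elif op == "SUB":     # acc <- acc - memory[addr]
            acc = acc - memory[addr]
        elif op == "STORE":   # memory[addr] <- acc
            memory[addr] = acc
        else:
            raise ValueError(f"unknown opcode: {op}")
    return acc


# c = a + b takes three one-address instructions here, where a
# three-operand register format would express it as a single add.
memory = {"a": 7, "b": 5, "c": 0}
program = [("LOAD", "a"), ("ADD", "b"), ("STORE", "c")]
result = run_accumulator(program, memory)
```

Each instruction names only one memory address because the accumulator is implied, which is why the one-address format needs a load and a store around the add.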

John McCarthy

JOHN MCCARTHY: the uncommon logician of common sense Excerpt from Out of their Minds: the lives and discoveries of 15 great computer scientists by Dennis Shasha and Cathy Lazere, Copernicus Press August 23, 2004 “If you want the computer to have general intelligence, the outer structure has to be common sense knowledge and reasoning.” (John McCarthy) When a five-year old receives a plastic toy car, she soon pushes it and beeps the horn. She realizes that she shouldn’t roll it on the dining room table or bounce it on the floor or land it on her little brother’s head. When she returns from school, she expects to find her car in more or less the same place she last put it, because she put it outside her baby brother’s reach. The reasoning is so simple that any five-year old child can understand it, yet most computers can’t. Part of the computer’s problem has to do with its lack of knowledge about day-to-day social conventions that the five-year old has learned from her parents, such as don’t scratch the furniture and don’t injure little brothers. Another part of the problem has to do with a computer’s inability to reason as we do daily, a type of reasoning that’s foreign to conventional logic and therefore to the thinking of the average computer programmer. Conventional logic uses a form of reasoning known as deduction. Deduction permits us to conclude from statements such as “All unemployed actors are waiters,” and “Sebastian is an unemployed actor,” the new statement that “Sebastian is a waiter.” The main virtue of deduction is that it is “sound”: if the premises hold, then so will the conclusions.
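The deduction step in the excerpt can be sketched as a tiny forward-chaining loop. This is an illustrative sketch under stated assumptions: the function name `deduce`, the predicate names, and the encoding of facts as `(predicate, subject)` pairs are hypothetical choices, not anything taken from the book.

```python
def deduce(facts, rules):
    """Apply rules of the form "all P are Q" until nothing new follows.

    facts: set of (predicate, subject) pairs taken as premises.
    rules: list of (premise_predicate, conclusion_predicate) pairs.
    """
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for premise, conclusion in rules:
            # Iterate over a snapshot so the set can grow safely.
            for predicate, subject in list(known):
                derived = (conclusion, subject)
                if predicate == premise and derived not in known:
                    known.add(derived)
                    changed = True
    return known


# "All unemployed actors are waiters" and "Sebastian is an unemployed actor".
rules = [("unemployed_actor", "waiter")]
facts = {("unemployed_actor", "Sebastian")}
conclusions = deduce(facts, rules)
```

The loop only ever adds statements that follow from the premises and rules, which mirrors the soundness property the excerpt describes: if the premises hold, so do the conclusions.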

Getting Started Computing at the AI Lab by Christopher C. Stacy Abstract

MASSACHUSETTS INSTITUTE OF TECHNOLOGY ARTIFICIAL INTELLIGENCE LABORATORY WORKING PAPER 235 7 September 1982 Getting Started Computing at the AI Lab by Christopher C. Stacy Abstract This document describes the computing facilities at the M.I.T. Artificial Intelligence Laboratory, and explains how to get started using them. It is intended as an orientation document for newcomers to the lab, and will be updated by the author from time to time. A.I. Laboratory Working Papers are produced for internal circulation, and may contain information that is, for example, too preliminary or too detailed for formal publication. It is not intended that they should be considered papers to which reference can be made in the literature. © MASSACHUSETTS INSTITUTE OF TECHNOLOGY 1982 Table of Contents: 1. Introduction; 1.1. Lisp Machines; 1.2. Timesharing; 1.3. Other Computers; 1.3.1. Field Engineering; 1.3.2. Vision and Robotics; 1.3.3. Music; 1.3.4. Altos; 1.4. Output Peripherals; 1.5. Other Machines; 1.6. Terminals; 2. Networks; 2.1. The ARPAnet; 2.2. The Chaosnet; 2.3. Services; 2.3.1. TELNET/SUPDUP; 2.3.2. FTP; 2.4. Mail; 2.4.1. Processing Mail; 2.4.2. Etiquette; 2.5. Mailing Lists; 2.5.1. BBoards; 2.6. Finger/Inquire; 2.7. TIPs and TACs; 2.7.1. ARPAnet TAC; 2.7.2. Chaosnet TIP; 3.

John McCarthy – Father of Artificial Intelligence

Asia Pacific Mathematics Newsletter John McCarthy – Father of Artificial Intelligence V Rajaraman In this article we summarise the contributions of John McCarthy to Computer Science. Among his contributions are: suggesting that the best method of using computers is in an interactive mode, a mode in which computers become partners of users enabling them to solve problems. This logically led to the idea of time-sharing of large computers by many users and computing becoming a utility, much like a power utility. Introduction I first met John McCarthy when he visited IIT, Kanpur, in 1968. During his visit he saw that our computer centre, which I was heading, had two batch processing second generation computers, an IBM 7044/1401 and an IBM 1620, both of which were being used for “production jobs”. The IBM 1620 was used primarily to teach programming to all students of IIT, and the IBM 7044/1401 was used by research students and faculty besides a large number of guest users from several neighbouring universities and research laboratories. There was no interactive computer available for computer science and electrical engineering students to do hardware and software research. McCarthy was a great believer in the power of time-sharing computers. In fact one of his first important contributions was a memo he wrote in 1957 urging the Director of the MIT Computer Centre to modify the IBM 704 into a time-sharing machine [1]. He later persuaded Digital Equipment Corporation (who made the first mini computers and the PDP series of computers) to design a mini computer with a time-sharing operating system.

FPGAs as Components in Heterogeneous HPC Systems: Raising the Abstraction Level of Heterogeneous Programming

FPGAs as Components in Heterogeneous HPC Systems: Raising the Abstraction Level of Heterogeneous Programming Wim Vanderbauwhede School of Computing Science University of Glasgow A trip down memory lane 80 Years ago: The Theory Turing, Alan Mathison. "On computable numbers, with an application to the Entscheidungsproblem." J. of Math 58, no. 345-363 (1936): 5. 1936: Universal machine (Alan Turing) 1936: Lambda calculus (Alonzo Church) 1936: Stored-program concept (Konrad Zuse) 1937: Church-Turing thesis 1945: The Von Neumann architecture Church, Alonzo. "A set of postulates for the foundation of logic." Annals of mathematics (1932): 346-366. 60-40 Years ago: The Foundations The first working integrated circuit, 1958. © Texas Instruments. 1957: Fortran, John Backus, IBM 1958: First IC, Jack Kilby, Texas Instruments 1965: Moore’s law 1971: First microprocessor, Texas Instruments 1972: C, Dennis Ritchie, Bell Labs 1977: Fortran-77 1977: von Neumann bottleneck, John Backus 30 Years ago: HDLs and FPGAs Algotronix CAL1024 FPGA, 1989. © Algotronix 1984: Verilog 1984: First reprogrammable logic device, Altera 1985: First FPGA, Xilinx 1987: VHDL Standard IEEE 1076-1987 1989: Algotronix CAL1024, the first FPGA to offer random access to its control memory 20 Years ago: High-level Synthesis Page, Ian. "Closing the gap between hardware and software: hardware-software cosynthesis at Oxford." (1996): 2-2. 1996: Handel-C, Oxford University 2001: Mitrion-C, Mitrionics 2003: Bluespec, MIT 2003: MaxJ, Maxeler Technologies 2003: Impulse-C, Impulse Accelerated

Selected Filmography of Digital Culture and New Media Art

Dejan Grba SELECTED FILMOGRAPHY OF DIGITAL CULTURE AND NEW MEDIA ART This filmography comprises feature films, documentaries, TV shows, series and reports about digital culture and new media art. The selected feature films reflect the informatization of society, economy and politics in various ways, primarily on the conceptual and narrative plane. Feature films that directly thematize the digital paradigm can be found in the Film Lists section. Each entry is referenced with basic filmographic data: director’s name, title and production year, and production details are available online at IMDB, FilmWeb, FindAnyFilm, Metacritic etc. The coloured titles are links. Feature films Fritz Lang, Metropolis, 1926. Fritz Lang, M, 1931. William Cameron Menzies, Things to Come, 1936. Fritz Lang, The Thousand Eyes of Dr. Mabuse, 1960. Sidney Lumet, Fail-Safe, 1964. James B. Harris, The Bedford Incident, 1965. Jean-Luc Godard, Alphaville, 1965. Joseph Sargent, Colossus: The Forbin Project, 1970. Henri Verneuil, Le serpent, 1973. Alan J. Pakula, The Parallax View, 1974. Francis Ford Coppola, The Conversation, 1974. Sydney Pollack, Three Days of the Condor, 1975. George P. Cosmatos, The Cassandra Crossing, 1976. Sidney Lumet, Network, 1976. Robert Aldrich, Twilight's Last Gleaming, 1977. Michael Crichton, Coma, 1978. Brian De Palma, Blow Out, 1981. Steven Lisberger, Tron, 1982. Godfrey Reggio, Koyaanisqatsi, 1983. John Badham, WarGames, 1983. Roger Donaldson, No Way Out, 1987. John McTiernan, Die Hard, 1988. Phil Alden Robinson, Sneakers, 1992. Andrew Davis, The Fugitive, 1993. David Fincher, The Game, 1997. F. Gary Gray, The Negotiator, 1998. David Cronenberg, eXistenZ, 1999. Frank Oz, The Score, 2001. Tony Scott, Spy Game, 2001.

The Computational Attitude in Music Theory

The Computational Attitude in Music Theory Eamonn Bell Submitted in partial fulfillment of the requirements for the degree of Doctor of Philosophy in the Graduate School of Arts and Sciences COLUMBIA UNIVERSITY 2019 © 2019 Eamonn Bell All rights reserved ABSTRACT Music studies’s turn to computation during the twentieth century has engendered particular habits of thought about music, habits that remain in operation long after the music scholar has stepped away from the computer. The computational attitude is a way of thinking about music that is learned at the computer but can be applied away from it. It may be manifest in actual computer use, or in invocations of computationalism, a theory of mind whose influence on twentieth-century music theory is palpable. It may also be manifest in more informal discussions about music, which make liberal use of computational metaphors. In Chapter 1, I describe this attitude, the stakes for considering the computer as one of its instruments, and the kinds of historical sources and methodologies we might draw on to chart its ascendance. The remainder of this dissertation considers distinct and varied cases from the mid-twentieth century in which computers or computationalist musical ideas were used to pursue new musical objects, to quantify and classify musical scores as data, and to instantiate a generally music-structuralist mode of analysis. I present an account of the decades-long effort to prepare an exhaustive and accurate catalog of the all-interval twelve-tone series (Chapter 2). This problem was first posed in the 1920s but was not solved until 1959, when the composer Hanns Jelinek collaborated with the computer engineer Heinz Zemanek to jointly develop and run a computer program.

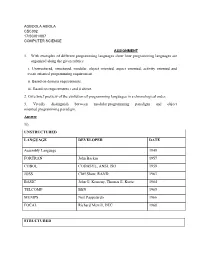

1. with Examples of Different Programming Languages Show How Programming Languages Are Organized Along the Given Rubrics: I

AGBOOLA ABIOLA CSC302 17/SCI01/007 COMPUTER SCIENCE ASSIGNMENT 1. With examples of different programming languages show how programming languages are organized along the given rubrics: i. Unstructured, structured, modular, object oriented, aspect oriented, activity oriented and event oriented programming requirement. ii. Based on domain requirements. iii. Based on requirements i and ii above. 2. Give brief preview of the evolution of programming languages in a chronological order. 3. Vividly distinguish between modular programming paradigm and object oriented programming paradigm. Answer 1i). UNSTRUCTURED (LANGUAGE, DEVELOPER, DATE): Assembly Language, 1949; FORTRAN, John Backus, 1957; COBOL, CODASYL, ANSI, ISO, 1959; JOSS, Cliff Shaw, RAND, 1963; BASIC, John G. Kemeny, Thomas E. Kurtz, 1964; TELCOMP, BBN, 1965; MUMPS, Neil Pappalardo, 1966; FOCAL, Richard Merrill, DEC, 1968. STRUCTURED (LANGUAGE, DEVELOPER, DATE): ALGOL 58, Friedrich L. Bauer, and co., 1958; ALGOL 60, Backus, Bauer and co., 1960; ABC, CWI, 1980; Ada, United States Department of Defence, 1980; Accent R, NIS, 1980; Action!, Optimized Systems Software, 1983; Alef, Phil Winterbottom, 1992; DASL, Sun Microsystems Laboratories, 1999-2003. MODULAR (LANGUAGE, DEVELOPER, DATE): ALGOL W, Niklaus Wirth, Tony Hoare, 1966; APL, Larry Breed, Dick Lathwell and co., 1966; ALGOL 68, A. Van Wijngaarden and co., 1968; AMOS BASIC, François Lionet and Constantin Stiropoulos, 1990; Alice ML, Saarland University, 2000; Agda, Ulf Norell; Catarina Coquand (1.0), 2007; Arc, Paul Graham, Robert Morris and co., 2008; Bosque, Mark Marron, 2019. OBJECT-ORIENTED (LANGUAGE, DEVELOPER, DATE): C*, Thinking Machines, 1987; Actor, Charles Duff, 1988; Aldor, Thomas J. Watson Research Center, 1990; Amiga E, Wouter van Oortmerssen, 1993; ActionScript, Macromedia, 1998; BeanShell, JCP, 1999; AngelScript, Andreas Jönsson, 2003; Boo, Rodrigo B.

Pioneers of Computing

Pioneers of Computing In 1980 the IEEE Computer Society established the “Computer Pioneer” gold (bronze) medal. The founding pioneers were 32 members of the IEEE Computer Society associated with work in informatics and the computational sciences. Pioneers of Computing 1. Howard H. Aiken (Harvard Mark I) 2. John V. Atanasoff 3. Charles Babbage (Analytical Engine) 4. John Backus 5. Gordon Bell (Digital) 6. Vannevar Bush 7. Edsger W. Dijkstra 8. John Presper Eckert 9. Douglas C. Engelbart 10. Andrei P. Ershov (theoretical programming) 11. Tommy Flowers (Colossus engineer) 12. Robert W. Floyd 13. Kurt Gödel 14. William R. Hewlett 15. Herman Hollerith 16. Grace M. Hopper 17. Tom Kilburn (Manchester) Pioneers of Computing 1. Donald E. Knuth (TeX) 2. Sergei A. Lebedev 3. Augusta Ada Lovelace 4. Aleksey A. Lyapunov 5. Benoit Mandelbrot 6. John W. Mauchly 7. David Packard 8. Blaise Pascal 9. P. Georg and Edvard Scheutz (Difference Engine, Sweden) 10. C. E. Shannon (information theory) 11. George R. Stibitz 12. Alan M. Turing (Colossus and code-breaking) 13. John von Neumann 14. Maurice V. Wilkes (EDSAC) 15. J.H. Wilkinson (numerical analysis) 16. Freddie C. Williams 17. Niklaus Wirth 18. Stephen Wolfram (Mathematica) 19. Konrad Zuse Pioneers of Computing - 2 Howard H. Aiken (Harvard Mark I), USA. Creator of the first computer, 1943. Gene M. Amdahl (IBM360 computer architecture, including pipelining, instruction look-ahead, and cache memory), USA (1964). The mainframe philosophy: a system for mass data processing. John W. Backus (Fortran), the first high-level language, 1956. Pioneers of Computing - 3 Robert S. Barton For his outstanding contributions in basing the design of computing systems on the hierarchical nature of programs and their data.

Publications Core Magazine, 2007 Read

CORE: A PUBLICATION OF THE COMPUTER HISTORY MUSEUM // SPRING–SUMMER 2007 REMARKABLE PEOPLE: Focus on Robert Noyce. RESCUED TREASURES: A collection saved by SAP. EXTRAORDINARY IMAGES: Computers through the lens of Mark Richards. THE BEST WAY TO SEE THE FUTURE OF COMPUTING IS TO BROWSE ITS PAST. PUBLISHER & EDITOR-IN-CHIEF: Karen M. Tucker. EXECUTIVE EDITOR: Leonard J. Shustek. MANAGING EDITOR: Robert S. Stetson. ASSOCIATE EDITOR: Kirsten Tashev. TECHNICAL EDITOR: Dag Spicer. EDITOR: Laurie Putnam. CONTRIBUTORS: Leslie Berlin, Chris Garcia, Paula Jabloner, Luanne Johnson, Len Shustek, Dag Spicer, Kirsten Tashev. DESIGN: Kerry Conboy. PRODUCTION MANAGER: Robert S. Stetson. WEBSITE MANAGER: Bob Sanguedolce. WEBSITE DESIGN: Dana Chrisler, Ton Luong. The computer. In all of human history, rarely has one invention done so much to change the world in such a short time. The Computer History Museum is home to the world’s largest collection of computing artifacts and offers a variety of exhibits, programs, and computerhistory.org/core © 2007 Computer History Museum.


Arxiv:2106.11534V1 [Cs.DL] 22 Jun 2021 2 Nanjing University of Science and Technology, Nanjing, China 3 University of Southampton, Southampton, U.K

Turing Award elites revisited: patterns of productivity, collaboration, authorship and impact Yinyu Jin1 · Sha Yuan1∗ · Zhou Shao2, 4 · Wendy Hall3 · Jie Tang4 Abstract The Turing Award is recognized as the most influential and prestigious award in the field of computer science (CS). With the rise of the science of science (SciSci), a large amount of bibliographic data has been analyzed in an attempt to understand the hidden mechanism of scientific evolution. These include the analysis of the Nobel Prize, including physics, chemistry, medicine, etc. In this article, we extract and analyze the data of 72 Turing Award laureates from the complete bibliographic data, fill the gap in the lack of Turing Award analysis, and discover the development characteristics of computer science as an independent discipline. First, we show most Turing Award laureates have long-term and high-quality educational backgrounds, and more than 61% of them have a degree in mathematics, which indicates that mathematics has played a significant role in the development of computer science. Secondly, the data shows that not all scholars have high productivity and high h-index; that is, the number of publications and h-index is not the leading indicator for evaluating the Turing Award. Third, the average age of awardees has increased from 40 to around 70 in recent years. This may be because new breakthroughs take longer, and some new technologies need time to prove their influence. Besides, we have also found that in the past ten years, international collaboration has experienced explosive growth, showing a new paradigm in the form of collaboration.

Introduction to the Literature on Programming Language Design Gary T

Computer Science Technical Reports Computer Science 7-1999 Introduction to the Literature On Programming Language Design Gary T. Leavens Iowa State University Follow this and additional works at: http://lib.dr.iastate.edu/cs_techreports Part of the Programming Languages and Compilers Commons Recommended Citation Leavens, Gary T., "Introduction to the Literature On Programming Language Design" (1999). Computer Science Technical Reports. 59. http://lib.dr.iastate.edu/cs_techreports/59 This Article is brought to you for free and open access by the Computer Science at Iowa State University Digital Repository. It has been accepted for inclusion in Computer Science Technical Reports by an authorized administrator of Iowa State University Digital Repository. For more information, please contact [email protected]. Introduction to the Literature On Programming Language Design Abstract This is an introduction to the literature on programming language design and related topics. It is intended to cite the most important work, and to provide a place for students to start a literature search. Keywords programming languages, semantics, type systems, polymorphism, type theory, data abstraction, functional programming, object-oriented programming, logic programming, declarative programming, parallel and distributed programming languages Disciplines Programming Languages and Compilers This article is available at Iowa State University Digital Repository: http://lib.dr.iastate.edu/cs_techreports/59 Introduction to the Literature On Programming Language Design Gary T. Leavens TR 93-01c Jan. 1993, revised Jan. 1994, Feb. 1996, and July 1999 Keywords: programming languages, semantics, type systems, polymorphism, type theory, data abstraction, functional programming, object-oriented programming, logic programming, declarative programming, parallel and distributed programming languages.