Project 7: Virtual Machine Translator I

Recommended publications

Compilers & Translator Writing Systems

Compilers & Translator Writing Systems. Prof. R. Eigenmann, ECE573, Fall 2005, http://www.ece.purdue.edu/~eigenman/ECE573
Compilers are Translators. [Figure: source languages (Fortran, C, C++, Java, text processing language, command language, natural language) are translated into machine code, virtual machine code, transformed source code, augmented source code, low-level commands, or semantic components.]
Compilers are Increasingly Important. User interfaces for specifying a computer problem/solution are increasingly high level: from assembly languages up through high-level languages to specification languages. Machines are increasingly complex: non-pipelined processors, pipelined processors, speculative processors, worldwide “Grid”. The compiler is the translator between these two diverging ends.
Assembly code and Assemblers. [Figure: Compiler → assembly code → Assembler → machine code.] Assemblers are often used at the compiler back-end. Assemblers are low-level translators. They are machine-specific, and perform mostly 1:1 translation between mnemonics and machine code, except:
– symbolic names for storage locations
• program locations (branch, subroutine calls)
• variable names
– macros
Interpreters. “Execute” the source language directly. Interpreters directly produce the result of a computation, whereas compilers produce executable code that can produce this result. Each language construct executes by invoking a subroutine of the interpreter, rather than a machine instruction. Examples of interpreters?
Properties of Interpreters.
– “execution” is immediate
– elaborate error checking is possible
– bookkeeping is possible, e.g., for garbage collection
– can change program on-the-fly, e.g., switch libraries, dynamic change of data types
– machine independence
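The contrast drawn in the excerpt above (an interpreter produces the result directly, with each construct handled by one of its subroutines, while a compiler produces code that can produce the result later) can be sketched in a few lines of Python. The three-instruction stack language, the handler names, and `compile_to_python` are all hypothetical, invented for this illustration; they are not taken from the ECE573 slides.

```python
# Hypothetical mini-language: a program is a list of (op, arg) pairs.

def op_push(stack, arg):
    stack.append(arg)

def op_add(stack, arg):
    b = stack.pop()
    a = stack.pop()
    stack.append(a + b)

def op_mul(stack, arg):
    b = stack.pop()
    a = stack.pop()
    stack.append(a * b)

# One interpreter subroutine per language construct.
HANDLERS = {"push": op_push, "add": op_add, "mul": op_mul}

def interpret(program):
    """Execute the program directly and return its result."""
    stack = []
    for op, arg in program:
        HANDLERS[op](stack, arg)  # construct -> subroutine call
    return stack.pop()

def compile_to_python(program):
    """Translate the program into Python source that computes the result when run."""
    lines = ["def compiled():", "    stack = []"]
    for op, arg in program:
        if op == "push":
            lines.append(f"    stack.append({arg!r})")
        elif op == "add":
            lines.append("    b = stack.pop(); a = stack.pop(); stack.append(a + b)")
        elif op == "mul":
            lines.append("    b = stack.pop(); a = stack.pop(); stack.append(a * b)")
        else:
            raise ValueError(f"unknown op: {op}")
    lines.append("    return stack.pop()")
    return "\n".join(lines)

# (2 + 3) * 4
PROGRAM = [("push", 2), ("push", 3), ("add", None), ("push", 4), ("mul", None)]
```

Calling `interpret(PROGRAM)` yields the value immediately, whereas `compile_to_python(PROGRAM)` yields only text; that text has to be executed (for example with `exec`) before any result exists.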

Source-To-Source Translation and Software Engineering

Journal of Software Engineering and Applications, 2013, 6, 30-40 http://dx.doi.org/10.4236/jsea.2013.64A005 Published Online April 2013 (http://www.scirp.org/journal/jsea) Source-to-Source Translation and Software Engineering David A. Plaisted Department of Computer Science, University of North Carolina at Chapel Hill, Chapel Hill, USA. Email: [email protected] Received February 5th, 2013; revised March 7th, 2013; accepted March 15th, 2013 Copyright © 2013 David A. Plaisted. This is an open access article distributed under the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited. ABSTRACT Source-to-source translation of programs from one high level language to another has been shown to be an effective aid to programming in many cases. By the use of this approach, it is sometimes possible to produce software more cheaply and reliably. However, the full potential of this technique has not yet been realized. It is proposed to make source-to-source translation more effective by the use of abstract languages, which are imperative languages with a simple syntax and semantics that facilitate their translation into many different languages. By the use of such abstract languages and by translating only often-used fragments of programs rather than whole programs, the need to avoid writing the same program or algorithm over and over again in different languages can be reduced. It is further proposed that programmers be encouraged to write often-used algorithms and program fragments in such abstract languages. Libraries of such abstract programs and program fragments can then be constructed, and programmers can be encouraged to make use of such libraries by translating their abstract programs into application languages and adding code to join things together when coding in various application languages.

Tdb: a Source-Level Debugger for Dynamically Translated Programs

Tdb: A Source-level Debugger for Dynamically Translated Programs. Naveen Kumar†, Bruce R. Childers†, and Mary Lou Soffa‡. †Department of Computer Science, University of Pittsburgh, Pittsburgh, Pennsylvania 15260. ‡Department of Computer Science, University of Virginia, Charlottesville, Virginia 22904. {naveen, childers}@cs.pitt.edu [email protected]
Abstract. Debugging techniques have evolved over the years in response to changes in programming languages, implementation techniques, and user needs. A new type of implementation vehicle for software has emerged that, once again, requires new debugging techniques. Software dynamic translation (SDT) has received much attention due to compelling applications of the technology, including software security checking, binary translation, and dynamic optimization. Using SDT, program code changes dynamically, and thus, debugging techniques developed for statically generated code cannot be used to debug these applications. In this paper, we describe a new debug architecture for applications executing with SDT sys-
single stepping through program execution, watching for particular conditions and requests to add and remove breakpoints. In order to respond, the debugger has to map the values and statements that the user expects using the source program viewpoint, to the actual values and locations of the statements as found in the executable program. As programming languages have evolved, new debugging techniques have been developed. For example, checkpointing and time stamping techniques have been developed for languages with concurrent constructs [6,19,30]. The pervasive use of code optimizations to improve performance has necessitated techniques that can respond to queries even though the optimization may have changed the number of statement instances and the order of execution [15,25,29].

Virtual Machine Part II: Program Control

Virtual Machine Part II: Program Control. Building a Modern Computer From First Principles, www.nand2tetris.org. Elements of Computing Systems, Nisan & Schocken, MIT Press, www.nand2tetris.org, Chapter 8: Virtual Machine, Part II.
Where we are at: [Figure: the abstraction stack, each layer offering an abstract interface to the one above. Software hierarchy: Human Thought → Abstract design (Chapters 9, 12) → H.L. Language & Operating Sys. → Compiler (Chapters 10 - 11) → Virtual Machine → VM Translator (Chapters 7 - 8) → Assembly Language → Assembler (Chapter 6) → Machine Language. Hardware hierarchy: Computer Architecture (Chapters 4 - 5) → Hardware Platform → Gate Logic (Chapters 1 - 3) → Chips & Logic Gates → Electrical Engineering → Physics.]
The big picture: [Figure: Some language, Some Other language, and the Jack language each have a compiler (Chapters 9-13) that targets the VM language. The VM implementations (Projects 7-8, Chapters 7-8) are: VM implementation over CISC platforms, VM implementation over RISC platforms, a VM emulator (a Java-based emulator is included in the course software suite), and VM over the Hack platform. Beneath them (Chapters 1-6): CISC machine language, RISC machine language, a high-level language in which the emulator is written, and Hack machine language, running respectively on a CISC machine, a RISC machine, any computer, and the Hack computer; other digital platforms are each equipped with their own VM implementation.]

A Brief History of Just-In-Time Compilation

A Brief History of Just-In-Time. JOHN AYCOCK, University of Calgary. Software systems have been using “just-in-time” compilation (JIT) techniques since the 1960s. Broadly, JIT compilation includes any translation performed dynamically, after a program has started execution. We examine the motivation behind JIT compilation and constraints imposed on JIT compilation systems, and present a classification scheme for such systems. This classification emerges as we survey forty years of JIT work, from 1960–2000. Categories and Subject Descriptors: D.3.4 [Programming Languages]: Processors; K.2 [History of Computing]: Software. General Terms: Languages, Performance. Additional Key Words and Phrases: Just-in-time compilation, dynamic compilation.
1. INTRODUCTION. “Those who cannot remember the past are condemned to repeat it.” George Santayana, 1863–1952 [Bartlett 1992]. This oft-quoted line is all too applicable in computer science. Ideas are generated, explored, set aside, only to be reinvented years later. Such is the case with what is now called “just-in-time” (JIT) or dynamic compilation, which refers to translation that occurs after a program begins execution. Strictly speaking, JIT compilation systems (“JIT systems” for short) are completely unnecessary.
into a form that is executable on a target platform. What is translated? The scope and nature of programming languages that require translation into executable form covers a wide spectrum. Traditional programming languages like Ada, C, and Java are included, as well as little languages [Bentley 1988] such as regular expressions. Traditionally, there are two approaches to translation: compilation and interpretation. Compilation translates one language into another (C to assembly language, for example) with the implication that the translated form will be more amenable

Language Translators

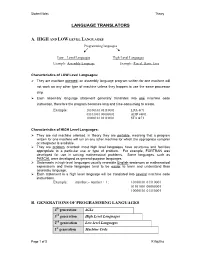

Student Notes Theory (K Aquilina): LANGUAGE TRANSLATORS
A. HIGH AND LOW LEVEL LANGUAGES
Programming languages divide into low-level languages (example: assembly language) and high-level languages (examples: Pascal, Basic, Java).
Characteristics of LOW level languages: They are machine oriented: an assembly language program written for one machine will not work on any other type of machine unless they happen to use the same processor chip. Each assembly language statement generally translates into one machine code instruction, therefore the program becomes long and time-consuming to create. Example (machine code on the left, assembly language on the right):
10100101 01110001   LDA &71
01101001 00000001   ADD #&01
10000101 01110001   STA &71
Characteristics of HIGH level languages: They are not machine oriented: in theory they are portable, meaning that a program written for one machine will run on any other machine for which the appropriate compiler or interpreter is available. They are problem oriented: most high level languages have structures and facilities appropriate to a particular use or type of problem. For example, FORTRAN was developed for use in solving mathematical problems. Some languages, such as PASCAL, were developed as general-purpose languages. Statements in high-level languages usually resemble English sentences or mathematical expressions and these languages tend to be easier to learn and understand than assembly language. Each statement in a high level language will be translated into several machine code instructions. Example: the statement number:= number + 1; becomes 10100101 01110001 01101001 00000001 10000101 01110001.
B. GENERATIONS OF PROGRAMMING LANGUAGES
4th generation: 4GLs
3rd generation: High Level Languages
2nd generation: Low-level Languages
1st generation: Machine Code
1. MACHINE LANGUAGE – 1ST GENERATION
In the early days of computer programming all programs had to be written in machine code.
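The two claims in the notes above (one assembly statement maps to one machine code instruction, while one high-level statement maps to several) can be checked against the notes' own example with a short Python sketch. The opcode table holds only the three bit patterns shown in the notes; the function names `assemble` and `translate_increment` and the operand parsing are assumptions made for this illustration, not a real assembler.

```python
# Opcode bit patterns copied from the example in the notes; nothing else is assumed
# about the instruction set.
OPCODES = {
    "LDA": 0b10100101,  # load the accumulator from a memory address
    "ADD": 0b01101001,  # add an immediate value to the accumulator
    "STA": 0b10000101,  # store the accumulator to a memory address
}

def assemble(line):
    """Translate one assembly statement into one machine code instruction (1:1)."""
    mnemonic, operand = line.split()
    # "&71" is a hexadecimal address, "#&01" a hexadecimal immediate value.
    value = int(operand.lstrip("#&"), 16)
    return f"{OPCODES[mnemonic]:08b} {value:08b}"

def translate_increment(address):
    """Translate the high-level statement `number := number + 1` (1:many).

    `address` is the memory location assumed to hold `number`.
    """
    return [f"LDA &{address:02X}", "ADD #&01", f"STA &{address:02X}"]

assembly = translate_increment(0x71)
machine_code = [assemble(line) for line in assembly]
```

With `number` stored at address &71, `assembly` holds the three statements from the notes and `machine_code` holds the three matching two-byte bit patterns.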

A Language Translator for a Computer Aided Rapid Prototyping System

Calhoun: The NPS Institutional Archive. Theses and Dissertations, Thesis Collection, 1988. A language translator for a computer aided rapid prototyping system. Moffitt, Charlie Robert. Monterey, California. Naval Postgraduate School. http://hdl.handle.net/10945/23284
NAVAL POSTGRADUATE SCHOOL, Monterey, California. A LANGUAGE TRANSLATOR FOR A COMPUTER AIDED RAPID PROTOTYPING SYSTEM by Charlie Robert Moffitt, II. March 1988. Thesis Advisor: Luqi. Approved for public release; distribution is unlimited.
REPORT DOCUMENTATION PAGE. Report Security Classification: Unclassified. Distribution/Availability of Report: Approved for public release; distribution is unlimited. Name of Performing Organization: Naval Postgraduate School (Office Symbol 32). Name of Monitoring Organization: Naval Postgraduate School. Address (both): Monterey, CA 93943-5000. Title: A LANGUAGE TRANSLATOR FOR A COMPUTER AIDED RAPID PROTOTYPING SYSTEM. Personal Author(s): Charlie Robert Moffitt, II. Type of Report: Master's Thesis. Date of Report: March 1988. Supplementary Notation: The views expressed in this thesis are those of the author and do not reflect the official policy or position of the Department of Defense or the U.S.

Development of a Translator from LLVM to ACL2

Development of a Translator from LLVM to ACL2. David Hardin, Jennifer Davis, David Greve, and Jedidiah McClurg. July 2014. © 2014 Rockwell Collins. All rights reserved.
Introduction
• Research objectives:
– Reason about machine code generated from high-level languages
• Eliminate need to trust compiler frontends by reasoning about a compiler intermediate representation
– Reason about small fragments of assembly code
• We wish to create a library of formally verified software component models for layered assurance.
• In our current work, the components are in LLVM intermediate form.
• We wish to translate them to a theorem proving language, such as ACL2.
• Thus, we built an LLVM-to-ACL2 translator.
Motivating Work
• Jianzhou Zhao (U Penn) et al. produced several different formalizations of operational semantics for LLVM in Coq. (2012)
– Intention to produce a verified LLVM compiler
• Magnus Myreen’s (Cambridge) “decompilation into logic” work (2009 - present)
– Imperative machine code (PPC, x86, ARM) -> HOL4
– Extracts functional behavior of imperative code
– Assures decompilation process is sound
• Andrew Appel (Princeton) observed that “SSA is functional programming” (1998)
– Inspired us to build a translator from LLVM to ACL2
LLVM
• LLVM is the intermediate form for many common compilers, including clang.
• LLVM code generation targets exist for a variety of machines.
• LLVM is a register-based intermediate in Static Single Assignment (SSA) form (each variable is assigned exactly once, statically).
• An LLVM program consists of a list of entities:
– There are eight types including:
• function declarations
• function definitions
• Our software component models are created from code that has been compiled into the LLVM intermediate form.

Program Translation and Execution I: Linking Sept. 29, 1998

15-213 Introduction to Computer Systems. Program Translation and Execution I: Linking. Sept. 29, 1998. Topics: • object files • linkers. class11.ppt, CS 213 F’98.
A simplistic program translation scheme. [Figure: p.c (source file) → Translators → p (executable object file, memory image on disk) → Loader → executing program p (memory image).] Problems: • efficiency: small change requires complete recompilation • modularity: hard to share common functionality (e.g. printf)
Separate compilation. [Figure: p1.c, p2.c, and libc.c each pass through a Translator to give the relocatable object files p1.o, p2.o, and libc.o → Static Linker → p (executable object file; contains code and data for all functions defined in libc.c) → Loader.] Improves modularity and efficiency, but still hard to package common functions.
Static libraries. [Figure: p1.c and p2.c each pass through a Translator to give p1.o and p2.o; these and libc.a (static library of relocatable object files) → Static Linker → p (executable object file; only contains code and data for libc functions that are called from p1.c and p2.c) → Loader.] Further improves modularity and efficiency, but duplicates common functions in different processes.
Shared libraries. [Figure: p1.c and p2.c each pass through a Translator to give p1.o and p2.o → Static Linker → p; p and libc.so (shared library of dynamically relocatable object files) → Loader/Dynamic Linker.] libc functions called by p1.c and p2.c are loaded at run-time and shared among processes.
The complete picture. [Figure: p1.c and p2.c each pass through a Translator to give p1.o and p2.o; these and libwhatever.a → Static Linker → p; p, libc.so, and libm.so → Loader/Dynamic Linker → running program.]
Object files. [Figure: a C program (char c[100]; double d; int n=20; main() { int i; i=5; c[i] = ‘z’; }) mapped onto an object file laid out by increasing addresses. bss sections: uninitialized static variables (“Block Started by Symbol”, “Better Save Space”). data sections: initialized static variables. text sections: read-only code and data.] Note: local variables don’t go in object files.

A Retargetable Static Binary Translator for the ARM Architecture

A Retargetable Static Binary Translator for the ARM Architecture. BOR-YEH SHEN, WEI-CHUNG HSU, and WUU YANG, National Chiao-Tung University. Machines designed with new but incompatible Instruction Set Architecture (ISA) may lack proper applications. Binary translation can address this incompatibility by migrating applications from one legacy ISA to a new one, although binary translation has problems such as code discovery for variable-length ISA and code location issues for handling indirect branches. Dynamic Binary Translation (DBT) has been widely adopted for migrating applications since it avoids those problems. Static Binary Translation (SBT) is a less general solution and has not been actively researched. However, SBT performs more aggressive optimizations, which could yield more compact code and better code quality. Applications translated by SBT can consume less memory, processor cycles, and power than DBT and can be started more quickly. These advantages are even more critical for embedded systems than for general systems. In this article, we designed and implemented a new SBT tool, called LLBT, which translates ARM instructions into LLVM IRs and then retargets the LLVM IRs to various ISAs, including x86, x86-64, ARM, and MIPS. LLBT leverages two important functionalities from LLVM: comprehensive optimizations and retargetability. More importantly, LLBT solves the code discovery problem for ARM/Thumb binaries without resorting to interpretation. LLBT also effectively reduced the size of the address mapping table, making SBT a viable solution for embedded systems. Our experiments based on the EEMBC benchmark suite show that the LLBT-generated code can run more than 6× and 2.3× faster on average than emulation with QEMU and HQEMU, respectively.

Automatic Generation of Assembly to IR Translators Using Compilers Niranjan Hasabnis and R

Automatic Generation of Assembly to IR Translators Using Compilers. Niranjan Hasabnis and R. Sekar, Stony Brook University, NY, USA.
Abstract. Translating low-level machine instructions into higher-level intermediate representation (IR) is one of the central steps in many binary translation, analysis and instrumentation systems. Most of these systems manually build the machine instruction to IR mapping table needed for such a translation. As a result, these systems often suffer from two problems: (a) a great deal of manual effort is required to support new architectures, and (b) even for existing architectures, lack of support for recent instruction set extensions, e.g., Valgrind’s lack of support for AVX, FMA4 and SSE4.1 for x86 processors. To overcome these difficulties, we propose a novel approach based on learning the assembly-to-IR mapping automatically. Modern compilers such as GCC and LLVM embed knowledge about these mappings in their code generators.
on manually developed translators from assembly to their IR. Unfortunately, such a manual approach is laborious and error-prone for complex architectures such as x86, where the manuals describing the instruction set semantics run to thousands of pages (for instance, Intel’s 1400-page instruction set reference (version 052) [5]). As a result, these frameworks often support only a limited number of architectures, and even for a single architecture, support only a subset of the instructions. For instance, Valgrind still lacks support for several classes of x86 instructions, including AVX, FMA4, and SSE4.1, even after being in development for 10 years. In this paper, we propose a novel automatic approach that

Decoupling Dynamic Program Analysis from Execution in Virtual Environments

Decoupling dynamic program analysis from execution in virtual environments. Jim Chow, Tal Garfinkel, Peter M. Chen. VMware.
Abstract. Analyzing the behavior of running programs has a wide variety of compelling applications, from intrusion detection and prevention to bug discovery. Unfortunately, the high runtime overheads imposed by complex analysis techniques make their deployment impractical in most settings. We present a virtual machine based architecture called Aftersight that ameliorates this, providing a flexible and practical way to run heavyweight analyses on production workloads. Aftersight decouples analysis from normal execution by logging nondeterministic VM inputs and replaying them on a separate analysis platform. VM output can be gated on the results of an analysis for intrusion prevention, or analysis can run at its own pace for intrusion detection and best effort prevention. Logs can also be stored for later analysis offline for bug finding or forensics, allowing analyses that would otherwise be unusable to be applied ubiquitously.
40x [18, 26], rendering many production workloads unusable. In non-production settings, such as program development or quality assurance, this overhead may dissuade use in longer, more realistic tests. Further, the performance perturbations introduced by these analyses can lead to Heisenberg effects, where the phenomenon under observation is changed or lost due to the measurement itself [25]. We describe a system called Aftersight that overcomes these limitations via an approach called decoupled analysis. Decoupled analysis moves analysis off the computer that is executing the main workload by separating execution and analysis into two tasks: recording, where system execution is recorded in full with minimal interference, and analysis, where the log of the execution is replayed and analyzed. Aftersight is able to record program execution efficiently using virtual machine recording and replay [4, 9,