Propagation Via Kernelization: the Vertex Cover Constraint

Total Pages: 16

File Type: pdf, Size: 1020 KB

Recommended publications

Vertex Cover Might Be Hard to Approximate to Within 2 − ε Subhash Khot, Oded Regev

Vertex Cover Might be Hard to Approximate to within 2 − ε. Subhash Khot, Oded Regev. Abstract: Based on a conjecture regarding the power of unique 2-prover-1-round games presented in [Khot02], we show that vertex cover is hard to approximate within any constant factor better than 2. We actually show a stronger result, namely, based on the same conjecture, vertex cover on k-uniform hypergraphs is hard to approximate within any constant factor better than k. 1 Introduction. Minimum vertex cover is the problem of finding the smallest set of vertices that touches all the edges in a given graph. This is one of the most fundamental NP-complete problems. A simple 2-approximation algorithm exists for this problem: construct a maximal matching by greedily adding edges and then let the vertex cover contain both endpoints of each edge in the matching. It can be seen that the resulting set of vertices indeed touches all the edges and that its size is at most twice the size of the minimum vertex cover. However, despite considerable efforts, state of the art techniques can only achieve an approximation ratio of 2 − o(1) [16, 21]. Given this state of affairs, one might strongly suspect that vertex cover is NP-hard to approximate within 2 − ε for any ε > 0. This is one of the major open questions in the field of approximation algorithms. In [18], Håstad showed that approximating vertex cover within constant factors less than 7/6 is NP-hard. This factor was recently improved by Dinur and Safra [10] to 1.36.
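
The 2-approximation described in this excerpt (greedy maximal matching, then take both endpoints of every matched edge) can be sketched in a few lines of Python. This is only an illustration: the edge-list representation and the function names `vertex_cover_2approx` and `is_vertex_cover` are assumptions made here, not something taken from the paper.

```python
def vertex_cover_2approx(edges):
    """Greedy maximal matching; the cover is both endpoints of each matched edge.

    `edges` is an iterable of (u, v) pairs (an assumed representation).
    Returns (cover, matching).
    """
    cover = set()
    matching = []
    for u, v in edges:
        # An edge joins the matching only if neither endpoint is used yet.
        if u not in cover and v not in cover:
            matching.append((u, v))
            cover.update((u, v))
    return cover, matching


def is_vertex_cover(edges, cover):
    """True if every edge has at least one endpoint in `cover`."""
    return all(u in cover or v in cover for u, v in edges)


if __name__ == "__main__":
    # A star with centre 0: the optimum cover is {0}, the greedy cover has size 2.
    star = [(0, 1), (0, 2), (0, 3), (0, 4)]
    cover, matching = vertex_cover_2approx(star)
    print(sorted(cover), matching, is_vertex_cover(star, cover))
```

On the star the returned cover is twice the optimum, which shows that the factor 2 in the analysis cannot be improved for this particular algorithm.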

Introduction to Parameterized Complexity

Introduction to Parameterized Complexity. Ignasi Sau, CNRS, LIRMM, Montpellier, France. UFMG Belo Horizonte, February 2018. Outline of the talk: 1 Why parameterized complexity? 2 Basic definitions 3 Kernelization 4 Some techniques. Next section is: 1 Why parameterized complexity? Some history of complexity: NP-completeness. Cook-Levin Theorem (1971): the SAT problem is NP-complete. Karp (1972): list of 21 important NP-complete problems. Nowadays, literally thousands of problems are known to be NP-hard: unless P = NP, they cannot be solved in polynomial time. But what does it mean for a problem to be NP-hard? No algorithm solves all instances optimally in polynomial time.

Exploiting c-Closure in Kernelization Algorithms for Graph Problems

Exploiting c-Closure in Kernelization Algorithms for Graph Problems. Tomohiro Koana, Technische Universität Berlin, Algorithmics and Computational Complexity, Germany. Christian Komusiewicz, Philipps-Universität Marburg, Fachbereich Mathematik und Informatik, Marburg, Germany. Frank Sommer, Philipps-Universität Marburg, Fachbereich Mathematik und Informatik, Marburg, Germany. Abstract: A graph is c-closed if every pair of vertices with at least c common neighbors is adjacent. The c-closure of a graph G is the smallest number such that G is c-closed. Fox et al. [ICALP ’18] defined c-closure and investigated it in the context of clique enumeration. We show that c-closure can be applied in kernelization algorithms for several classic graph problems. We show that Dominating Set admits a kernel of size $k^{O(c)}$, that Induced Matching admits a kernel with $O(c^7 k^8)$ vertices, and that Irredundant Set admits a kernel with $O(c^{5/2} k^3)$ vertices. Our kernelization exploits the fact that c-closed graphs have polynomially-bounded Ramsey numbers, as we show. 2012 ACM Subject Classification: Theory of computation → Parameterized complexity and exact algorithms; Theory of computation → Graph algorithms analysis. Keywords and phrases: Fixed-parameter tractability, kernelization, c-closure, Dominating Set, Induced Matching, Irredundant Set, Ramsey numbers. Funding: Frank Sommer: Supported by the Deutsche Forschungsgemeinschaft (DFG), project MAGZ, KO 3669/4-1. 1 Introduction. Parameterized complexity [9, 14] aims at understanding which properties of input data can be used in the design of efficient algorithms for problems that are hard in general. The properties of input data are encapsulated in the notion of a parameter, a numerical value that can be attributed to each input instance I.
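
To make the definition of c-closure concrete, here is a small Python sketch. It assumes the graph is given as a dictionary mapping each vertex to its set of neighbours, and the function name `c_closure` is a hypothetical one chosen for this illustration. Because a graph is c-closed exactly when every non-adjacent pair has fewer than c common neighbours, the c-closure is one more than the largest common neighbourhood of a non-adjacent pair.

```python
from itertools import combinations


def c_closure(adj):
    """Smallest c such that the graph is c-closed.

    `adj` maps each vertex to the set of its neighbours (simple, undirected
    graph; this representation is an assumption of the sketch).
    """
    worst = 0
    for u, v in combinations(adj, 2):
        if v not in adj[u]:
            # Non-adjacent pair: count the neighbours they share.
            worst = max(worst, len(adj[u] & adj[v]))
    return worst + 1


if __name__ == "__main__":
    four_cycle = {0: {1, 3}, 1: {0, 2}, 2: {1, 3}, 3: {0, 2}}
    triangle = {0: {1, 2}, 1: {0, 2}, 2: {0, 1}}
    print(c_closure(four_cycle), c_closure(triangle))
```

The sketch inspects every pair of vertices, so it is quadratic in the number of vertices and meant for small examples only.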

3.1 Matchings and Factors: Matchings and Covers

3.1 Matchings and Factors: Matchings and Covers. This copyrighted material is taken from Introduction to Graph Theory, 2nd Ed., by Doug West; and is not for further distribution beyond this course. These slides will be stored in a limited-access location on an IIT server and are not for distribution or use beyond Math 454/553. Matchings. 3.1.1 Definition: A matching in a graph G is a set of non-loop edges with no shared endpoints. The vertices incident to the edges of a matching M are saturated by M (M-saturated); the others are unsaturated (M-unsaturated). A perfect matching in a graph is a matching that saturates every vertex. [Figure: a matching M with M-saturated and M-unsaturated vertices, and a perfect matching.] Perfect Matchings in Complete Bipartite Graphs: The perfect matchings in a complete X,Y-bigraph with |X| = |Y| correspond exactly to the bijections f: X → Y. Therefore $K_{n,n}$ has n! perfect matchings. [Figure: $K_{n,n}$ with vertices a to g on one side and 1 to 7 on the other.] Perfect Matchings in Complete Graphs: The complete graph $K_n$ has a perfect matching iff n is even. So instead of $K_n$ consider $K_{2n}$. We count the perfect matchings in $K_{2n}$ by: (1) selecting a vertex v (e.g., with the highest label): one choice; (2) selecting a vertex u to match to v: 2n − 1 choices; (3) selecting a perfect matching on the rest of the vertices, which form a $K_{2n-2}$.
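
The three counting steps for the complete graph on 2n vertices amount to the recurrence f(n) = (2n − 1) · f(n − 1) with f(0) = 1. The excerpt stops before stating it, so the following Python sketch is an illustration, not part of the slides. Both function names are hypothetical, and the brute-force routine exists only to cross-check the recurrence on small cases.

```python
def count_by_recurrence(n):
    """Perfect matchings of the complete graph on 2n vertices: (2n-1)(2n-3)...1."""
    count = 1
    for m in range(1, n + 1):
        count *= 2 * m - 1  # 2m - 1 choices for the partner of the chosen vertex
    return count


def count_by_enumeration(vertices):
    """Brute force: match the last vertex with each other vertex, then recurse."""
    if not vertices:
        return 1  # the empty matching is the only matching of the empty graph
    rest = vertices[:-1]
    total = 0
    for i in range(len(rest)):
        # Remove the partner rest[i] and count matchings of what remains.
        total += count_by_enumeration(rest[:i] + rest[i + 1:])
    return total


if __name__ == "__main__":
    for n in range(5):
        print(n, count_by_recurrence(n), count_by_enumeration(list(range(2 * n))))
```

On an odd number of vertices the enumeration returns 0, in line with the statement that a complete graph has a perfect matching only when its number of vertices is even.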

Approximation Algorithms

Lecture 21: Approximation Algorithms. 21.1 Overview. Suppose we are given an NP-complete problem to solve. Even though (assuming P ≠ NP) we can’t hope for a polynomial-time algorithm that always gets the best solution, can we develop polynomial-time algorithms that always produce a “pretty good” solution? In this lecture we consider such approximation algorithms, for several important problems. Specific topics in this lecture include: 2-approximation for vertex cover via greedy matchings; 2-approximation for vertex cover via LP rounding; greedy O(log n) approximation for set cover; approximation algorithms for MAX-SAT. 21.2 Introduction. Suppose we are given a problem for which (perhaps because it is NP-complete) we can’t hope for a fast algorithm that always gets the best solution. Can we hope for a fast algorithm that guarantees to get at least a “pretty good” solution? E.g., can we guarantee to find a solution that’s within 10% of optimal? If not that, then how about within a factor of 2 of optimal? Or, anything non-trivial? As seen in the last two lectures, the NP-complete problems are all equivalent in the sense that a polynomial-time algorithm to solve any one of them would imply a polynomial-time algorithm to solve all of them (and, moreover, to solve any problem in NP). However, the difficulty of getting a good approximation to these problems varies quite a bit. In this lecture we will examine several important NP-complete problems and look at to what extent we can guarantee to get approximately optimal solutions, and by what algorithms.

Complexity and Approximation Results for the Connected Vertex Cover Problem in Graphs and Hypergraphs Bruno Escoffier, Laurent Gourvès, Jérôme Monnot

Complexity and approximation results for the connected vertex cover problem in graphs and hypergraphs. Bruno Escoffier, Laurent Gourvès, Jérôme Monnot. To cite this version: Bruno Escoffier, Laurent Gourvès, Jérôme Monnot. Complexity and approximation results for the connected vertex cover problem in graphs and hypergraphs. 2007. hal-00178912. HAL Id: hal-00178912, https://hal.archives-ouvertes.fr/hal-00178912. Preprint submitted on 12 Oct 2007. Laboratoire d'Analyse et Modélisation de Systèmes pour l'Aide à la Décision, CNRS UMR 7024, Cahier du LAMSADE 262, July 2007. Abstract: We study a variation of the vertex cover problem where it is required that the graph induced by the vertex cover is connected. We prove that this problem is polynomial in chordal graphs, has a PTAS in planar graphs, is APX-hard in bipartite graphs and is 5/3-approximable in any class of graphs where the vertex cover problem is polynomial (in particular in bipartite graphs).

Approximation Algorithms Instructor: Richard Peng Nov 21, 2016

CS 3510 Design & Analysis of Algorithms, Section B, Lecture #34: Approximation Algorithms. Instructor: Richard Peng. Nov 21, 2016. DISCLAIMER: These notes are not necessarily an accurate representation of what I said during the class. They are mostly what I intend to say, and have not been carefully edited. Last week we formalized ways of showing that a problem is hard, specifically the definitions of NP, NP-completeness, and NP-hardness. We now discuss ways of saying something useful about these hard problems. Specifically, we show that the answer produced by our algorithm is within a certain fraction of the optimum. For a maximization problem, suppose now that we have an algorithm A for our problem which, given an instance I, returns a solution with value A(I). The approximation ratio of algorithm A is defined to be $\max_I \mathrm{OPT}(I)/A(I)$. This is not restricted to NP-complete problems: we can apply this to matching to get a 2-approximation. Lemma 0.1. The maximal matching has size at least 1/2 of the optimum. Proof. Let the maximum matching be $M^*$. Consider the process of taking a maximal matching: an edge in $M^*$ becomes ineligible only after one (or both) of its endpoints are picked. Each edge we add to the maximal matching has only 2 endpoints, so it takes at least $|M^*|/2$ edges to make all edges in $M^*$ ineligible. This ratio is indeed close to tight: consider a graph that has many length 3 paths, and the maximal matching keeps on taking the middle edges.
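
The tightness remark at the end of this excerpt can be checked on a single path with three edges. The sketch below is a plain greedy maximal matching written for illustration. The function name `greedy_maximal_matching` and the edge-list input are assumptions, and the order in which edges are offered stands in for the unlucky choices mentioned in the notes.

```python
def greedy_maximal_matching(edges):
    """Scan edges in the given order, keeping an edge if both endpoints are free."""
    matched = set()
    matching = []
    for u, v in edges:
        if u not in matched and v not in matched:
            matching.append((u, v))
            matched.update((u, v))
    return matching


if __name__ == "__main__":
    # Path a - b - c - d (three edges).
    middle_first = [("b", "c"), ("a", "b"), ("c", "d")]
    ends_first = [("a", "b"), ("c", "d"), ("b", "c")]
    print(greedy_maximal_matching(middle_first))  # only the middle edge survives
    print(greedy_maximal_matching(ends_first))    # both outer edges: a maximum matching
```

Taking the middle edge first blocks both outer edges, so the maximal matching has one edge while the maximum matching has two, exactly the factor 1/2 allowed by the lemma.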

What Is the Set Cover Problem

18.434 Seminar in Theoretical Computer Science. Tamara Stern, 2.9.06. Set Cover Problem (Chapter 2.1, 12). What is the set cover problem? Idea: “You must select a minimum number [of any size set] of these sets so that the sets you have picked contain all the elements that are contained in any of the sets in the input (wikipedia).” Additionally, you want to minimize the cost of the sets. Input: ground elements, or universe, $U = \{u_1, u_2, \ldots, u_n\}$; subsets $S_1, S_2, \ldots, S_k \subseteq U$; costs $c_1, c_2, \ldots, c_k$. Goal: find a set $I \subseteq \{1, 2, \ldots, k\}$ that minimizes $\sum_{i \in I} c_i$, such that $\bigcup_{i \in I} S_i = U$. (Note: in the unweighted Set Cover Problem, $c_j = 1$ for all j.) Why is it useful? It was one of Karp’s NP-complete problems, shown to be so in 1972. Other applications: edge covering, vertex cover. Interesting example: IBM finds computer viruses (wikipedia). Elements: 5000 known viruses. Sets: 9000 substrings of 20 or more consecutive bytes from viruses, not found in ‘good’ code. A set cover of 180 was found. It suffices to search for these 180 substrings to verify the existence of known computer viruses. Another example: suppose General Motors needs to buy a certain amount of varied supplies and there are suppliers that offer various deals for different combinations of materials (Supplier A: 2 tons of steel + 500 tiles for $x; Supplier B: 1 ton of steel + 2000 tiles for $y; etc.). You could use set covering to find the best way to get all the materials while minimizing cost.
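
The problem statement above translates directly into code. The sketch below uses the standard greedy heuristic (repeatedly take the set with the lowest cost per newly covered element), which this excerpt does not describe: it is brought in here only to make the input and goal concrete, and it returns a feasible cover, not necessarily an optimal one. The name `greedy_set_cover` and the list-based inputs are assumptions.

```python
def greedy_set_cover(universe, subsets, costs):
    """Return indices of chosen subsets whose union is `universe`.

    Greedy rule: pick the subset minimising cost / (number of new elements).
    This is a heuristic sketch, not an exact solver.
    """
    uncovered = set(universe)
    chosen = []
    while uncovered:
        best, best_ratio = None, None
        for i, s in enumerate(subsets):
            gain = len(uncovered & s)
            if gain == 0:
                continue  # this subset adds nothing new
            ratio = costs[i] / gain
            if best_ratio is None or ratio < best_ratio:
                best, best_ratio = i, ratio
        if best is None:
            raise ValueError("the subsets do not cover the universe")
        chosen.append(best)
        uncovered -= subsets[best]
    return chosen


if __name__ == "__main__":
    U = {1, 2, 3, 4, 5}
    S = [{1, 2, 3}, {2, 4}, {3, 4}, {4, 5}]
    c = [1, 1, 1, 1]  # unweighted case: every cost is 1
    print(greedy_set_cover(U, S, c))
```

With all costs equal to 1, as in the unweighted variant, the rule reduces to picking the set that covers the most uncovered elements.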

Structural Parameterizations of Undirected Feedback Vertex Set: FPT Algorithms and Kernelization

Structural Parameterizations of Undirected Feedback Vertex Set: FPT Algorithms and Kernelization. Diptapriyo Majumdar · Venkatesh Raman. Abstract: A feedback vertex set in an undirected graph is a subset of vertices whose removal results in an acyclic graph. We consider the parameterized and kernelization complexity of feedback vertex set where the parameter is the size of some structure in the input. In particular, we consider parameterizations where the parameter is, instead of the solution size, the distance to a class of graphs where the problem is polynomial time solvable, and sometimes smaller than the solution size. Here, by distance to a class of graphs, we mean the minimum number of vertices whose removal results in a graph in the class. Such a set of vertices is also called the 'deletion set'. In this paper, we show that: (i) FVS is fixed-parameter tractable by an $O(2^k n^{O(1)})$ time algorithm, but is unlikely to have a polynomial kernel, when parameterized by the number of vertices of the graph whose degree is at least 4. This answers a question asked in an earlier paper. We also show that an algorithm with running time $O((\sqrt{2} - \epsilon)^k n^{O(1)})$ is not possible unless SETH fails. (ii) When parameterized by k, the number of vertices whose deletion results in a split graph, we give an $O(3.148^k n^{O(1)})$ time algorithm. (iii) When parameterized by k, the number of vertices whose deletion results in a cluster graph (a disjoint union of cliques), we give an $O(5^k n^{O(1)})$ algorithm.
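
The definition of a feedback vertex set in this abstract can be verified mechanically: delete the candidate vertices and check that what remains is a forest. The Python sketch below does this with a union-find structure. The function name `is_feedback_vertex_set` and the vertex-list and edge-list inputs are assumptions made for illustration, and each undirected edge is expected to appear once in the list.

```python
def is_feedback_vertex_set(vertices, edges, removed):
    """True if deleting `removed` from the graph leaves no cycle.

    Assumes an undirected graph with each edge listed once as a pair (u, v).
    """
    removed = set(removed)
    parent = {v: v for v in vertices if v not in removed}

    def find(x):
        # Union-find lookup with path halving.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    for u, v in edges:
        if u in removed or v in removed:
            continue  # the edge disappears together with a deleted endpoint
        ru, rv = find(u), find(v)
        if ru == rv:
            return False  # u and v were already connected: this edge closes a cycle
        parent[ru] = rv
    return True


if __name__ == "__main__":
    # Two triangles sharing vertex 0.
    V = [0, 1, 2, 3, 4]
    E = [(0, 1), (1, 2), (2, 0), (0, 3), (3, 4), (4, 0)]
    print(is_feedback_vertex_set(V, E, {0}), is_feedback_vertex_set(V, E, {1}))
```

This only checks a given set. Finding a smallest one is the hard problem that the paper's parameterized algorithms address.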

1 Bipartite Matching and Vertex Covers

ORF 523, Lecture 6. Princeton University. Instructor: A.A. Ahmadi. Scribe: G. Hall. In this lecture, we will cover an application of LP strong duality to combinatorial optimization: bipartite matching; vertex covers; König's theorem; totally unimodular matrices and integral polytopes. 1 Bipartite matching and vertex covers. Recall that a bipartite graph G = (V, E) is a graph whose vertices can be divided into two disjoint sets such that every edge connects one node in one set to a node in the other. Definition 1 (Matching, vertex cover). A matching is a disjoint subset of edges, i.e., a subset of edges that do not share a common vertex. A vertex cover is a subset of the nodes that together touch all the edges. [Figure 1: Bipartite graphs, matchings, and vertex covers. (a) An example of a bipartite graph. (b) An example of a matching (dotted lines). (c) An example of a vertex cover (grey nodes).] Lemma 1. The cardinality of any matching is less than or equal to the cardinality of any vertex cover. This is easy to see: consider any matching. Any vertex cover must have nodes that at least touch the edges in the matching. Moreover, a single node can cover at most one edge in the matching because the edges are disjoint. As will become clear shortly, this property can also be seen as an immediate consequence of weak duality in linear programming. Theorem 1 (König). If G is bipartite, the cardinality of the maximum matching is equal to the cardinality of the minimum vertex cover.
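
Lemma 1 and the theorem can be observed on a small instance. The sketch below does not follow the LP-duality route of the lecture. It uses the classical combinatorial argument instead: find a maximum matching with augmenting paths, then read a vertex cover of the same size off an alternating search started at the unmatched left vertices. All names are hypothetical, and left and right vertex labels are assumed to be distinct.

```python
def max_bipartite_matching(left, adj):
    """Augmenting-path matching. `adj[u]` lists the right neighbours of left vertex u.

    Returns a dict mapping each matched right vertex to its left partner.
    """
    match_right = {}

    def try_augment(u, seen):
        for v in adj.get(u, ()):
            if v in seen:
                continue
            seen.add(v)
            # v is free, or its current partner can be re-matched elsewhere.
            if v not in match_right or try_augment(match_right[v], seen):
                match_right[v] = u
                return True
        return False

    for u in left:
        try_augment(u, set())
    return match_right


def cover_from_matching(left, adj, match_right):
    """Vertex cover with as many vertices as the given maximum matching has edges."""
    match_left = {u: v for v, u in match_right.items()}
    # Z = vertices reachable from unmatched left vertices by alternating paths.
    z_left = {u for u in left if u not in match_left}
    z_right = set()
    stack = list(z_left)
    while stack:
        u = stack.pop()
        for v in adj.get(u, ()):
            if v in z_right or match_left.get(u) == v:
                continue  # leave left vertices along non-matching edges only
            z_right.add(v)
            w = match_right.get(v)  # return to the left along the matching edge
            if w is not None and w not in z_left:
                z_left.add(w)
                stack.append(w)
    return (set(left) - z_left) | z_right


if __name__ == "__main__":
    left = ["a", "b", "c"]
    adj = {"a": ["x", "y"], "b": ["x"], "c": ["x"]}
    m = max_bipartite_matching(left, adj)
    print(m, cover_from_matching(left, adj, m))
```

By Lemma 1 no cover can be smaller than a matching, so a cover whose size equals that of the matching certifies that both are optimal.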

1 Vertex Cover on Hypergraphs

15-859FF: Coping with Intractability, CMU, Fall 2019. Lecture #3: Reductions for Problems in FPT, September 11, 2019. Lecturer: Venkatesan Guruswami. Scribe: Anish Sevekari. 1 Vertex Cover on Hypergraphs. We define a d-regular hypergraph $H = (V, \mathcal{F})$ where $\mathcal{F}$ is a family of subsets of V of size d. The vertex cover problem on hypergraphs is to find the smallest set S such that every edge $X \in \mathcal{F}$ intersects S at least once. We parameterize the vertex cover problem by the size of the optimum solution. Then the parameterized version of the problem is: given a hypergraph H and an integer k, does H have a vertex cover of size at most k? We will now give a $d^k \mathrm{poly}(n)$ algorithm that solves the vertex cover problem and an $O(d^2 d!\, k^d)$ kernel for the d-regular Hypergraph Vertex Cover problem. 1.1 An algorithm for Hypergraph Vertex Cover. The algorithm is a simple generalization of the $2^k \mathrm{poly}(n)$ algorithm for vertex cover over graphs. We pick any edge, then branch on the vertex on this edge which is in the vertex cover.

Algorithm 1 VertexCover($H = (V, \mathcal{F})$, k)

    if $\mathcal{F} = \emptyset$ then return $\emptyset$
    if k = 0 and $|\mathcal{F}| > 0$ then return $\bot$
    Pick any edge $e \in \mathcal{F}$.
    for $v \in e$ do
        $H' = (V \setminus \{v\},\ \mathcal{F} \setminus \{f \in \mathcal{F} : v \in f\})$
        if VertexCover($H'$, k − 1) $\neq \bot$ then
            return $\{v\} \cup$ VertexCover($H'$, k − 1)
    return $\bot$

Note that the recursion depth of this algorithm is k, and we branch on at most d points inside each call.
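
The branching algorithm in this excerpt can be written as a short recursive Python function. This is a sketch under stated assumptions: hyperedges are given as a list of sets, `None` plays the role of ⊥, the function name is hypothetical, and the budget is reduced to k − 1 in every recursive call so that the recursion depth is at most k, as the closing note of the excerpt requires.

```python
def hypergraph_vertex_cover(edges, k):
    """Return a vertex cover of size at most k, or None if none exists.

    `edges` is a list of sets of vertices (an assumed representation).
    """
    if not edges:
        return set()   # nothing left to cover
    if k == 0:
        return None    # edges remain but the budget is exhausted
    e = edges[0]       # pick any edge; some vertex of e must be in the cover
    for v in e:
        # Taking v covers every edge containing v; recurse on the rest.
        remaining = [f for f in edges if v not in f]
        sub = hypergraph_vertex_cover(remaining, k - 1)
        if sub is not None:
            return {v} | sub
    return None


if __name__ == "__main__":
    H = [{1, 2, 3}, {3, 4, 5}, {5, 6, 1}]
    print(hypergraph_vertex_cover(H, 1))  # no single vertex hits all three edges
    print(hypergraph_vertex_cover(H, 2))
```

Each call branches over the at most d vertices of one edge and the depth is at most k, which gives the $d^k \mathrm{poly}(n)$ running time quoted in the notes.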

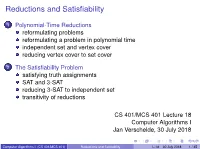

Reductions and Satisfiability

Reductions and Satisfiability. Outline: 1 Polynomial-Time Reductions: reformulating problems; reformulating a problem in polynomial time; independent set and vertex cover; reducing vertex cover to set cover. 2 The Satisfiability Problem: satisfying truth assignments; SAT and 3-SAT; reducing 3-SAT to independent set; transitivity of reductions. CS 401/MCS 401 Lecture 18, Computer Algorithms I, Jan Verschelde, 30 July 2018. Reformulating problems: The Ford-Fulkerson algorithm computes maximum flow. By reduction to a flow problem, we could solve the following problems: bipartite matching, circulation with demands, survey design, and airline scheduling. Because the Ford-Fulkerson algorithm is efficient, all those problems can be solved efficiently as well. Our plan for the remainder of the course is to explore computationally hard problems. Imagine a meeting with your boss ... From Computers and Intractability: A Guide to the Theory of NP-Completeness by Michael R. Garey and David S. Johnson, Bell Laboratories, 1979. What you want to say is