Term Association Modelling in Information Retrieval

Total Pages: 16

File Type: PDF, Size: 1020 KB


Recommended publications

-

Class Descriptions Class Descriptions

class descriptions Class Descriptions SKILL LEVEL KEY SEWING/MACHINE CLASSES: BEGINNER Little or no sewing (i.e. construction, patterns) or machine (i.e. serger, sewing) knowledge. INTERMEDIATE Basic sewing (i.e. construction, patterns) or machine (i.e. serger, sewing) understanding. Can easily adjust stitches, tension, accessory feet, etc. ADVANCED Complete understanding of sewing (i.e. construction, patterns) and machines (i.e. serger, sewing). Able to construct a garment/quilt without guidance/assistance. Can upload, manipulate, edit embroidery designs. Complete understanding of sewing terminology. SOFTWARE: BEGINNER Little or no computer/software knowledge. INTERMEDIATE Legend Basic understanding of computers/software. Familiar with keyboards, mouse, external peripherals and equipment. (i.e. able to: copy/paste, drag/drop, make new file folders.) Hands On ADVANCED Complete understanding of computers/software. Can use multiple features of Digitizer MB. Can install/uninstall software/hardware with ease. (i.e. understands updates, drivers, Kit/Fee Required sewing machine to PC connectivity, PC terminology) Basic Sewing Kit: Sewing scissors, paper scissors, pins, seam ripper, rotary cutter/ruler/ mat, water-soluble fabric marker or chalk, tape measure, all purpose sewing thread, light Laptop Required neutral and dark neutral colors, glue stick or temporary spray adhesive, hand sewing needles, small flat head screwdriver, seam sealant, several medium safety pins. Lecture Basic Serger Kit: Sewing scissors, serger tweezers, thread snips, needle threader, floss threader, Allen screwdriver for 1100D and 1000CPX, needles, small lint brush, seam sealant. Pictures may not represent actual fabrics in the kits. 2 Class Descriptions NEW TOL ROOMS Certification for New TOL Machine for US and Canadian Dealers All US dealers must purchase a TOL machine as part of the certification. -

Atlantic News Courtesy Photo — 44 in CONCERT O Milk Money (1994) Melanie Griffith

This Page © 2004 Connelly Communications, LLC, PO Box 592 Hampton, NH 03843- Contributed items and logos are © and ™ their respective owners Unauthorized reproduction 26 of this page or its contents for republication in whole or in part is strictly prohibited • For permission, call (603) 926-4557 • AN-Mark 9A-EVEN- Rev 12-16-2004 PAGE 26A | ATLANTIC NEWS | NOVEMBER 4, 2005 | VOL 31, NO 44 SEACOAST ENTERTAINMENT &ARTS | ATLANTICNEWS.COM . NOTES ANNUAL CRAFT, 11/9/05 5 PM 5:30 6 PM 6:30 7 PM 7:30 8 PM 8:30 9 PM 9:30 10 PM 10:30 11 PM 11:30 12 AM 12:30 FOOD FAIR AMONG THE BEST WBZ-4 Dr. Phil (N) News CBS The Insid- Ent. Still Yes, Dear Criminal Minds “The CSI: NY “Manhattan News Late Show With Late Late SALEM | The 10th annual New (CBS) (CC) News er (N) Tonight Standing (N) Fox” (N) (CC) Manhunt” (N) (CC) (CC) David Letterman (N) Show England Craft and Specialty Food WCVB-5 News News News ABC Wld Inside Chronicle George Freddie Lost “Abandoned” Invasion “Fish Sto- News (:35) (12:06) Jimmy Kim- (ABC) (CC) (CC) (CC) News Edition (CC) Lopez (N) (N) (CC) (N) ’ (HD) (CC) ry” (N) (CC) (CC) Nightline mel Live (N) ’ Fair will be held indoors at the Rock- WCSH-6 News ’ News ’ News ’ NBC 207 Maga- Seinfeld E-Ring “Cemetery The Apprentice: Law & Order “House News ’ The Tonight Show Late Night ingham Park Racetrack in Salem on (CBS) (CC) (CC) (CC) News zine ’ (CC) Wind” (N) (CC) Martha Stewart (N) of Cards” (CC) With Jay Leno (N) Friday through Sunday, November WHDH-7 News ’ News ’ News ’ NBC Access Extra (N) E-Ring “Cemetery The Apprentice: Law & Order “House News ’ The Tonight Show Late Night 11-13, from 10 a.m. -

Sewing Machines and Sergers

Welcome To Janome Institute 2011- Your Education Connection September 3 7, at The Marriott World Center Resort In Orlando i Expand Your Horizons j How about some good news, for a change? The economic recovery has been a mixed bag for the sewing industry. Customers are buying... but they’re also holding onto their purse strings a little more tightly. They’re taking longer to make that big machine purchase. But, we’ve clearly seen, when they’re excited about a machine, they will gladly make the purchase. And that’s good news. Because at Janome Institute 2011 we’re going to unveil a machine that will cause your customers to be very excited. This top-of-the-line machine is going to redefine Janome sewing the way we did with the MC8000, MC10000, and MC11000. And it’s going to cause quite a stir among your customers. Believe me, people you’ve never seen before are going to stop by your store just to see it in person. You better have your demo ready, because you’ll be doing it a lot. (It wouldn’t be a bad idea, either, to order some extra cash register tape.) On Opening Night, Saturday, September 3rd, this machine will be revealed for the first time ever to attendees of Janome Institute 2011 in Orlando. You’re going to want to be one of them. Because starting the next day, you’ll get to try out the new machine and get the sales training and product knowledge you need to become a certified dealer. -

NYC Garment District Locations by Street 35Th Street

NYC Garment District Locations By Street Shop Address Phone Description Notes: 35th Street Open to Public - Fashion Sew Fast Sew Easy 147 W. 35th St., Suite 807 (212) 268-4321 Patterns, sewing kits Source Book Open to Public - Fashion American Sewing Supply 224 W. 35th St., Suite 500B (212) 239-3695 Professional supplies and equipment for tailors etc Source Book Open to Public - Fashion Safa Fabrics 237 W. 35th St. Ground Floor (212) 239-3415 Laces, tapestry, silk, brocade Source Book No Minimums: Big in stock inventory all colors many skins including: Leathers,Suedes, Metallics, pearlized, Exotics, Embosses, Furs Shearling , Novelty leathers Laser and Open to Public - Fashion Global Leathers 253 W. 35th St., 9th Floor (212) 244-5190 Perforated. Source Book Imported high quality fabric for dress, lining, satin; Open to Public - Fashion Nousha Tex, Sunmaid 253 W. 35th St., Ground Floor (212) 268-7770 Converter and importer of fine fabrics, woven Source Book No sales minimum. Leather and suede skins dealer. Importer, leather dealer. The source for the world's most beautiful leather. Supplier of leather, suede in lambskin, cowhide, pigskin, pearlized, printed, perforated, embossed, Tibet lamb, snakeskins, exotics, leather and fur trim providing garment accessories, home furnishing and suede Open to Public - Fashion Leather Suede Skins, Inc. 261 W. 35th St., 11th Floor (212) 967-6616 skins. Available for immediate delivery from stock. Source Book Jobber of lining, interlining, fusable, fusing fabrics; Open to Public - Fashion Moon Tex 261 W. 35th St., Ground Floor (212) 631-0970 embroidered Source Book NYC_Garment District_Shop List RI Sewing Network NYC Garment District Locations By Street Shop Address Phone Description Notes: 36th Street Open to Public - Fashion Gettinger Feather Corp. -

06 SM 9/21 (TV Guide)

Page 6 THE NORTON TELEGRAM Tuesday, September 21, 2004 Monday Evening September 27, 2004 7:00 7:30 8:00 8:30 9:00 9:30 10:00 10:30 11:00 11:30 KHGI/ABC The Benefactor Monday Night Football:Cowboys @ Redskins Jimmy K KBSH/CBS Still Stand Listen Up Raymond Two Men CSI Miami Local Late Show Late Late KSNK/NBC Fear Factor Las Vegas LAX Local Tonight Show Conan FOX North Shore Renovate My Family Local Local Local Local Local Local Cable Channels A&E Parole Squad Plots Gotti Airline Airline Crossing Jordan Parole Squad AMC The Wrecking Crew Mother, Jugs and Speed Sixteen Candles ANIM Growing Up Lynx Growing Up Rhino Miami Animal Police Growing Up Lynx Growing Up Rhino CNN Paula Zahn Now Larry King Live Newsnight Lou Dobbs Larry King DISC Monster House Monster Garage American Chopper Monster House Monster Garage Norton TV DISN Disney Movie: TBA Raven Sis Bug Juice Lizzie Boy Meets Even E! THS E!ES Dr. 90210 Howard Stern SNL ESPN Monday Night Countdown Hustle EOE Special Sportscenter ESPN2 Hustle WNBA Playoffs Shootarou Dream Job Hustle FAM The Karate Kid Whose Lin The 700 Club Funniest Funniest FX The Animal Fear Factor King of the Hill Cops HGTV Smrt Dsgn Decor Ce Organize Dsgn Chal Dsgn Dim Dsgn Dme To Go Hunters Smrt Dsgn Decor Ce HIST UFO Files Deep Sea Detectives Investigating History Tactical To Practical UFO Files LIFE Too Young to be a Dad Torn Apart How Clea Golden Nanny Nanny MTV MTV Special Road Rules The Osbo Real World Video Clash Listings: NICK SpongeBo Drake Full Hous Full Hous Threes Threes Threes Threes Threes Threes SCI Stargate -

For Men & Women

MAKING Click on any image to go to the related material for Men & Women Keyhole Buttonholes Project-Garment Galleries the DVD Video Demos Printable Patterns All content © 2009 by David Page Coffin RTW & Custom Galleries Sources & Links Click Here to launch DPC’s blog for more info about using this DVD Click this symbol to return to this page Click this symbol to go to next page 1 BONUS CHAPTER Using an Eyelet Plate to Make Keyhole Buttonholes Eyelet Plates and Cutters Keyhole buttonholes are designed to An eyelet plate, shown in photos 1 and 2, covers prevent distortion when the closure is your feed dogs and provides a post on which under strain during wear. Without the ex- to position the precut hole for your keyhole, tra space provided by the keyhole shape, plus a slot that allows the needle to make a zig- 2 an ordinary buttonhole will be distorted zag stitch. The post is slotted, too, so the needle as the shank pulls against the end of the can swing inside it to form the inner edge of the hole. On pants, I’d suggest using key- eyelet (photo 3). The black plastic plate in photo holes for any and all buttonholes, wheth- 2 (sitting on top of my Bernina plate) is from my er on button flies, pocket flaps, or waist dear old Pfaff; notice that its slot is oriented in closures. I only recently got a sewing the opposite direction compared to the Bernina machine that could make keyhole but- plate (this apparently makes no difference), and tonholes, but I had already developed a that it has “toes” designed to snap into the feed- way of making keyhole buttonholes with dog holes on the machine, instead of screwing an eyelet plate. -

Sew Stitch Quick Manual

Sew Stitch Quick Manual If you are searched for the ebook Sew stitch quick manual in pdf form, in that case you come on to faithful site. We present the full variation of this ebook in DjVu, PDF, doc, ePub, txt forms. You may read Sew stitch quick manual online or load. Additionally to this ebook, on our site you may reading the guides and diverse art eBooks online, either downloading them as well. We like to attract your consideration that our website not store the eBook itself, but we provide reference to website wherever you may load or reading online. So that if you have necessity to load Sew stitch quick manual pdf, in that case you come on to the right website. We have Sew stitch quick manual doc, txt, PDF, ePub, DjVu formats. We will be happy if you return again and again. stitch sew quick hand held sewing machine by - SINGER-Stitch Sew Quick Hand Held Sewing Machine. This hand held sewing machine compact and portable; excellent for on-the-spot repairs and is lightweight and powerful. singer simple 23- stitch sewing machine 2263 - - Learning to sew is fun and easy when you start with the Singer Simple 23-Stitch Sewing Machine 2263. This product is designed specifically for first-timer sewers and singer sew quick manual - shopping.com - Features: 60 Built-In Stitches with stitch guide included in manual Automatic Needle Threader - sewing's biggest timesaver! Automatic Stitch Length Width ensures the amazon.com: stitch sew quick - Stitch Sew Quick. by Dyno Merchandise. 320 customer reviews | 23 answered questions Price: Manual Sewing Machine for Easy Repair of Fabric and Clothes. -

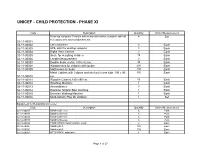

Unicef - Child Protection - Phase Xi

UNICEF - CHILD PROTECTION - PHASE XI Code Description Quantity Unit of Measurement Desk top computer Pentium with coloured monitor, complete with all 4 Set necessary accessories/attachments. 02-11-00001 02-11-00002 LaserJet printer 4 Each 02-11-00003 UPS, 220V for desktop computer 4 Each 02-11-00004 Digital Video Camera 2 Each 02-11-00005 Scale for weighing children 10 Each 02-11-00006 Length Measurement 2 Each 02-11-00007 Double beds, metal, 189 x 82 cm. 40 Each 02-11-00008 Wooden bed for children with locker 200 Each 02-11-00009 Mattresses for beds 200 Each Metal Cabinet with 2 doors and shelves in one side, 190 x 90 155 Each 02-11-00010 cm. 02-11-00011 Wooden Cabinet,180 x 90 cm. 50 Each 02-11-00012 Washing Machine 1 Each 02-11-00013 Airconditioner 3 Each 02-11-00014 Exercise Athletic Bike machine 1 Each 02-11-00015 Exercise Walking Machine 1 Each 02-11-00016 Multi-Activity Play for children 1 Set Equipment for Rehabilitation Center Code Description Quantity Unit of Measurement 02-11-00017 Sanding Sleeves 6 Pack 02-12-00018 Sanding Sleeves 6 Pack 02-11-00018 Sanding Sleeves 6 Pack 02-12-00019 Sanding Sleeves 6 Pack 02-11-00019 HABERMANN Sanding Drum. Long 2 Each 02-12-00020 Sanding Belt 100 Each 02-11-00020 Sanding Belt 100 Each 02-12-00021 OTTO BOCK, adjustable 1 Each Page 1 of 27 Code Description Quantity Unit of Measurement 02-11-00021 Round End Sanding Drum 1 Each 02-12-00022 Sanding Drum 10 Each 02-11-00022 Sanding Sleeve 25 Pack 02-12-00023 Sanding Sleeve 50 Pack 02-11-00023 Hot Air Gun (Triac) 1 Each 02-12-00024 Hole Punch Set -

Your Best Source for Local Entertainment Information

Page 6 THE NORTON TELEGRAM Tuesday, August 24, 2004 Monday Evening August 30, 2004 7:00 7:30 8:00 8:30 9:00 9:30 10:00 10:30 11:00 11:30 KHGI/ABC Titans @ Cowboys: Monday Night Football Local Local Jimmy K KBSH/CBS Still Stand Yes Dear Raymond Two Men CSI Miami Local Late Show Late Late KSNK/NBC Fear Factor Last Comic : Battle of the Comics Local Tonight Show Conan FOX The Complex: Malibu Local Local Local Local Local Local Cable Channels A&E The Lori Hacking Cas Fam Plots Growing U Airline Crossing Jordan The Lori Hacking Cas AMC Airport 1975 Airport '77 The Mask ANIM Growing Up Baboon That's My Baby Animal Cops Detroit Growing Up Baboon That's My Baby CNN Paula Zahn Now Larry King Live Newsnight Lou Dobbs Larry King DISC Monster Garage Monster Garage American Chopper Monster Garage Monster Garage Norton TV DISN You Wish! Raven Sis Bug Juice Lizzie Boy Meets Even E! THS Dr. 90210 Howard Stern SNL ESPN Monday Night Baseball Sportscenter Outside Baseball ESPN2 WPBA Classic Tour Mix Tape Tour FAM Romy & Michele's High School Reunion Whose Lin The 700 Club Funniest Funniest FX Shawshank Redemption Shawshank Redemption HGTV Smrt Dsgn Decor Ce Organize Dsgn Chal Dsgn Dim Dsgn Dme To Go Hunters Smrt Dsgn Decor Ce HIST UFO Files Deep Sea Detectives Investigating History Tactical To Practical UFO Files LIFE Born into Exile Deadly Matrimony MTV The Assistant Road Rule Assistant MTV Special HIp Hop Show Listings: NICK SpongeBo Drake Full Hous Full Hous Threes Threes Threes Threes Threes Threes SCI Srargate SG-1 SPIKE Wildest Police Videos WWE Raw WWE Raw Zone MXC World's Wildest Police TBS Raymond Raymond Movie: TBA Movie: TBA TCM Million Dollar Mermaid Dangerous When Wet On An Island With Yo TLC Trauma Body Work What Not To Wear Trauma Body Work TNT Law & Order Law & Order Law & Order Law & Order X-Files Your best source for local TV LAND Munsters Green Andy Beaver Sanford All Fam Threes Threes Cheers Cheers USA Tennis U.S. -

Owner's Manual

TM TM Owner‘s manual This household sewing machine is designed to comply with IEC/EN 60335-2-28 and UL1594. IMPORTANT SAFETY INSTRUCTIONS When using an electrical appliance, basic safety precautions should always be followed, including the following: Read all instructions before using this household sewing machine. DANGER - To reduce the risk of electric shock: • A sewing machine should never be left unattended when plugged in. Always unplug this sewing machine from the electric outlet immediately after using and before cleaning. WARNING - To reduce the risk of burns, fire, electric shock, or injury to persons: • This sewing machine is not intended for use by persons (including children) with reduced physical, sensory or mental capabilities, or lack of experience and knowledge, unless they have been given supervision or instruction concerning use of the sewing machine by a person responsible for their safety. • Children should be supervised to ensure that they do not play with the sewing machine. • Use this sewing machine only for its intended use as described in this manual. Use only attachments recommended by the manufacturer as contained in this manual. • Never operate this sewing machine if it has a damaged cord or plug, if it is not working properly, if it has been dropped or damaged, or dropped into water. Return the sewing machine to the nearest authorized dealer or service center for examination, repair, electrical or mechanical adjustment. • Never operate the sewing machine with any air openings blocked. Keep ventilation openings of the sewing machine and foot controller free from the accumulation of lint, dust, and loose threads. -

Goodland Star-News

6b The Goodland Star-News / Tuesday, August 23, 2005 abigail have made reference to the incident, — MALE SUPERVISOR, In my opinion, she should go to said was not cute or clever, not a Co-worker’s but neither has apologized.” By re- SEBASTOPOL, CALIF. her supervisor and tell him or her joke, and above all, will not be tol- van buren ferring to the incident at work, the DEAR MALE SUPERVISOR: that she would like to have a meet- erated by her again. In reality it was men HAVE “brought it into the Thank you for pointing it out. She ing with everyone who was in- sexual harassment, and will be crude comment workplace.” Read on: should also make a point of docu- volved. She should ask that the su- handled as such if it recurs. •dear abby DEAR ABBY: “Hurt and Of- menting any further references pervisor be present. The following There are courts of law that take inappropriate fended” has done all that she needed made by those co-workers. issues should be presented: care of such situations. And those DEAR ABBY: Most of your re- he was being funny.) But it can’t be to do. She said she had talked to her DEAR ABBY: If “Hurt” allows (1) The question she was asked clowns need to know that she will go sponses I agree with, but the one you sexual harassment if it happened co-worker, who is the men’s super- those co-workers of hers to get away was rude, and embarrassed both her to one if they pull that stunt again. -

Proximity-Based Approaches to Blog Opinion Retrieval

Proximity-based Approaches to Blog Opinion Retrieval Doctoral Dissertation submitted to the Faculty of Informatics of the Università della Svizzera Italiana in partial fulfillment of the requirements for the degree of Doctor of Philosophy presented by Shima Gerani under the supervision of Fabio Crestani September 2012 Dissertation Committee Marc Langheinrich University of Lugano, Switzerland Fernando Pedone University of Lugano, Switzerland Maarten De Rijke University of Amsterdam, The Netherlands Mohand Boughanem Paul Sabatier University, Toulouse, France Dissertation accepted on 13 September 2012 Research Advisor PhD Program Director Fabio Crestani Antonio Carzaniga pro tempore i I certify that except where due acknowledgement has been given, the work presented in this thesis is that of the author alone; the work has not been sub- mitted previously, in whole or in part, to qualify for any other academic award; and the content of the thesis is the result of work which has been carried out since the official commencement date of the approved research program. Shima Gerani Lugano, 13 September 2012 ii Abstract Recent years have seen the rapid growth of social media platforms that enable people to express their thoughts and perceptions on the web and share them with other users. Many people write their opinion about products, movies, peo- ple or events on blogs, forums or review sites. The so-called User Generated Content is a good source of users’ opinion and mining it can be very useful for a wide variety of applications that require understudying of public opinion about a concept. Blogs are one of the most popular and influential social media.