Digital Light Field Photography

Total Page:16

File Type:pdf, Size:1020Kb


Recommended publications


Still Photography

Still Photography Soumik Mitra, Published by - Jharkhand Rai University Subject: STILL PHOTOGRAPHY Credits: 4 SYLLABUS Introduction to Photography Beginning of Photography; People who shaped up Photography. Camera; Lenses & Accessories - I What a Camera; Types of Camera; TLR; APS & Digital Cameras; Single-Lens Reflex Cameras. Camera; Lenses & Accessories - II Photographic Lenses; Using Different Lenses; Filters. Exposure & Light Understanding Exposure; Exposure in Practical Use. Photogram Introduction; Making Photogram. Darkroom Practice Introduction to Basic Printing; Photographic Papers; Chemicals for Printing. Suggested Readings: 1. Still Photography: the Problematic Model, Lew Thomas, Peter D'Agostino, NFS Press. 2. Images of Information: Still Photography in the Social Sciences, Jon Wagner. 3. Photographic Tools for Teachers: Still Photography, Roy A. Frye. Introduction to Photography STILL PHOTOGRAPHY Course Descriptions The department of Photography at the IFT offers a provocative and experimental curriculum in the setting of a large, diversified university. As one of the pioneer programs of graduate and undergraduate study in photography in India, we aim at providing the best to our students to help them relate practical studies in art & craft in a professional context. The Photography program combines the teaching of craft, history, and contemporary ideas with the critical examination of conventional forms of art making. The curriculum at IFT is designed to give students the technical training and aesthetic awareness to develop a strong individual expression as an artist. The faculty represents a broad range of interests and aesthetics, with course offerings often reflecting their individual passions and concerns. In this fundamental course, students will identify basic photographic tools and their intended purposes, including the proper use of various camera systems, light meters and film selection. -

Photography and Photomontage in Landscape and Visual Impact Assessment

Photography and Photomontage in Landscape and Visual Impact Assessment Landscape Institute Technical Guidance Note Public Consultation Draft 2018-06-01 To the recipient of this draft guidance The Landscape Institute is keen to hear the views of LI members and non-members alike. We are happy to receive your comments in any form (eg annotated PDF, email with paragraph references) via email to [email protected] which will be forwarded to the Chair of the working group. Alternatively, members may make comments on Talking Landscape: Topic “Photography and Photomontage Update”. You may provide any comments you consider would be useful, but may wish to use the following as a guide. 1) Do you expect to be able to use this guidance? If not, why not? 2) Please identify anything you consider to be unclear, or needing further explanation or justification. 3) Please identify anything you disagree with and state why. 4) Could the information be better-organised? If so, how? 5) Are there any important points that should be added? 6) Is there anything in the guidance which is not required? 7) Is there any unnecessary duplication? 8) Any other suggestions? Responses to be returned by 29 June 2018. Incidentally, the ##’s are to aid a final check of cross-references before publication. Contents: 1 Introduction; 2 Background; 3 Photography - equipment and approaches needed to capture suitable images. Appendices: Methodology; App 01 Site equipment; App 02 Camera settings; App 03 Dealing with panoramas; App 04 Technical methodology template -

Chapter 3 (Aberrations)

Chapter 3 Aberrations 3.1 Introduction In Chap. 2 we discussed the image-forming characteristics of optical systems, but we limited our consideration to an infinitesimal thread-like region about the optical axis called the paraxial region. In this chapter we will consider, in general terms, the behavior of lenses with finite apertures and fields of view. It has been pointed out that well-corrected optical systems behave nearly according to the rules of paraxial imagery given in Chap. 2. This is another way of stating that a lens without aberrations forms an image of the size and in the location given by the equations for the paraxial or first-order region. We shall measure the aberrations by the amount by which rays miss the paraxial image point. It can be seen that aberrations may be determined by calculating the location of the paraxial image of an object point and then tracing a large number of rays (by the exact trigonometrical ray-tracing equations of Chap. 10) to determine the amounts by which the rays depart from the paraxial image point. Stated this baldly, the mathematical determination of the aberrations of a lens which covered any reasonable field at a real aperture would seem a formidable task, involving an almost infinite amount of labor. However, by classifying the various types of image faults and by understanding the behavior of each type, the work of determining the aberrations of a lens system can be simplified greatly, since only a few rays need be traced to evaluate each aberration; thus the problem assumes more manageable proportions. -

Invention of Digital Photograph

Invention of Digital Photograph Digital photography uses cameras containing arrays of electronic photodetectors to capture images focused by a lens, as opposed to an exposure on photographic film. The captured images are digitized and stored as a computer file ready for further digital processing, viewing, electronic publishing, or digital printing. Until the advent of such technology, photographs were made by exposing light-sensitive photographic film and paper, which was processed in liquid chemical solutions to develop and stabilize the image. Digital photographs are typically created solely by computer-based photoelectric and mechanical techniques, without wet bath chemical processing. The first consumer digital cameras were marketed in the late 1990s.[1] Professionals gravitated to digital slowly, and were won over when their professional work required using digital files to fulfill the demands of employers and/or clients, for faster turnaround than conventional methods would allow.[2] Starting around 2000, digital cameras were incorporated in cell phones and in the following years, cell phone cameras became widespread, particularly due to their connectivity to social media websites and email. Since 2010, the digital point-and-shoot and DSLR formats have also seen competition from the mirrorless digital camera format, which typically provides better image quality than the point-and-shoot or cell phone formats but comes in a smaller size and shape than the typical DSLR. Many mirrorless cameras accept interchangeable lenses and have advanced features through an electronic viewfinder, which replaces the through-the-lens finder image of the SLR format. While digital photography has only relatively recently become mainstream, the late 20th century saw many small developments leading to its creation. -

Alternative Processes a Few Essentials Introduction

Alternative Processes A Few Essentials Introduction Chapter 1. Capture Techniques From Alternative Photographic Processes: Crafting Handmade Images Chapter 2. Digital Negatives for Gum From Gum Printing: A Step-by-Step Manual, Highlighting Artists and Their Creative Practice Chapter 3. Fugitive and Not-So-Fugitive Printing From Jill Enfield's Guide to Photographic Alternative Processes: Popular Historical and Contemporary Techniques Featured Books on Alternative Process Photography from Routledge | Focal Press Use discount code FLR40 to take 20% off all Routledge titles. Simply visit www.routledge.com/photography to browse and purchase books of interest. Introduction A young art though it may be, photography already has a rich history. As media moves full steam ahead into the digital revolution and beyond, it is a natural instinct to look back at where we've come from. With more artists rediscovering photography's historical processes, the practice of photography continually redefines and re-contextualizes itself. The creative possibilities of these historical processes are endless, spawning a growing arena of practice - alternative processes, which combines past, present and everything in between, in the creation of art. This collection is an introduction to and a sample of these processes and possibilities. With Alternative Photographic Processes, Brady Wilks demonstrates techniques for manipulating photographs, negatives and prints, emphasizing the “hand-made” touch. Bridging the gap between the simplest of processes to the most complex, Wilks' introduction demonstrates image-manipulation pre-capture, allowing the artist to get intimate with his or her images long before development. In the newly-released Gum Printing, leading gum expert Christina Z. -

Depth of Focus (DOF)

Erect Image: An image in which the orientations of left, right, top, bottom and moving directions are the same as those of a workpiece on the workstage. Depth of Focus (DOF), unit: mm: Also known as ‘depth of field’, this is the distance (measured in the direction of the optical axis) between the two planes which define the limits of acceptable image sharpness when the microscope is focused on an object. As the numerical aperture (NA) increases, the depth of focus becomes shallower, as shown by the expression below: DOF = λ / (2·(NA)²), where λ = 0.55µm is often used as the reference wavelength. Example: For an M Plan Apo 100X lens (NA = 0.7), the depth of focus of this objective is 0.55µm / (2 × 0.7²) = 0.6µm. Bright-field Illumination and Dark-field Illumination: In brightfield illumination a full cone of light is focused by the objective on the specimen surface. This is the normal mode of viewing with an optical microscope. With darkfield illumination, the inner area of the light cone is blocked so that the surface is only illuminated by light from an oblique angle. Field number (FN), real field of view, and monitor display magnification, unit: mm: The observation range of the sample surface is determined by the diameter of the eyepiece’s field stop. The value of this diameter in millimeters is called the field number (FN). In contrast, the real field of view is the range on the workpiece surface when actually magnified and observed with the objective lens. The real field of view can be calculated with the following formula: (1) The range of the workpiece that can be observed with the microscope (diameter): Real field of view = FN of eyepiece -
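The depth-of-focus expression in the excerpt above can be checked numerically. The following is a minimal sketch, assuming the formula DOF = λ / (2·NA²) with the 0.55 µm reference wavelength quoted in the excerpt; the function name `depth_of_focus_um` is hypothetical and chosen only for illustration.

```python
def depth_of_focus_um(numerical_aperture, wavelength_um=0.55):
    """Depth of focus in micrometres, using DOF = wavelength / (2 * NA^2).

    The 0.55 um default is the reference wavelength quoted in the excerpt.
    The function name is illustrative, not taken from the source.
    """
    if numerical_aperture <= 0:
        raise ValueError("numerical aperture must be positive")
    return wavelength_um / (2.0 * numerical_aperture ** 2)


# Worked example from the excerpt: M Plan Apo 100X objective, NA = 0.7.
dof = depth_of_focus_um(0.7)
print(f"{dof:.2f} um")  # 0.56 um, which the excerpt rounds to 0.6 um
```

Because NA enters squared in the denominator, a modest increase in numerical aperture shrinks the depth of focus quickly, which matches the excerpt's remark that the depth of focus becomes shallower as NA increases.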

Field of View - Wikipedia, the Free Encyclopedia

Field of view - Wikipedia, the free encyclopedia http://en.wikipedia.org/wiki/Field_of_view The field of view (also field of vision, abbreviated FOV) is the extent of the observable world that is seen at any given moment. In case of optical instruments or sensors it is a solid angle through which a detector is sensitive to electromagnetic radiation. [Figures: Horizontal Field of View; Vertical Field of View. Caption: Angle of view can be measured horizontally, vertically, or diagonally.] In the context of human vision, the term “field of view” is typically used in the sense of a restriction to what is visible by external apparatus, like spectacles[2] or virtual reality goggles. Note that eye movements do not change the field of view. If the analogy of the eye’s retina working as a sensor is drawn upon, the corresponding concept in human (and much of animal) vision is the visual field.[3] It is defined as “the number of degrees of visual angle during stable fixation of the eyes”.[4] Note that eye movements are excluded in the definition. Different animals have different visual fields, depending, among others, on the placement of the eyes. Humans have an almost 180-degree forward-facing horizontal diameter of their visual field, while some birds have a complete or nearly complete 360-degree visual field. The vertical range of the visual field in humans is typically -

A New Era in Optics

A NEW ERA IN OPTICS: EXPERIENCE AN UNPRECEDENTED LEVEL OF PERFORMANCE. NIKKOR Z Lens Category Map. S-Line: NIKKOR Z 58mm f/0.95 S Noct; NIKKOR Z 14-30mm f/4 S; NIKKOR Z 24-70mm f/2.8 S; NIKKOR Z 24-70mm f/4 S; NIKKOR Z 35mm f/1.8 S; NIKKOR Z 50mm f/1.8 S. Other lenses: Lenses other than the S-Line series will be announced at a later date. New-dimensional optical performance realized with the Z mount: The title of the S-Line is reserved only for NIKKOR Z lenses that have cleared newly established standards in design principles and quality control that are even stricter than Nikon’s conventional standards. The “S” can be read as representing words such as “Superior”, “Special” and “Sophisticated.” Whichever model you choose, every S-Line lens achieves richly detailed image expression delivering a sense of reality in both still shooting and movie creation. It offers a new dimension in optical performance, including overwhelming resolution. Top of the S-Line model: the culmination of the NIKKOR quest for groundbreaking optical performance. This lens has the ability to depict subjects in ways that have never been seen before, including by rendering them with an extremely shallow depth of field. Versatile lenses offering a new dimension in optical performance: These lenses bring a higher level of imaging power, achieving superior reproduction with high resolution even at the periphery of the image. Well-balanced, high-performance lenses: These lenses strike an optimum balance between advanced functionality, compactness and cost effectiveness. -

A High Full Well Capacity CMOS Image Sensor for Space Applications

sensors Article A High Full Well Capacity CMOS Image Sensor for Space Applications Woo-Tae Kim 1 , Cheonwi Park 1, Hyunkeun Lee 1 , Ilseop Lee 2 and Byung-Geun Lee 1,* 1 School of Electrical Engineering and Computer Science, Gwangju Institute of Science and Technology, Gwangju 61005, Korea; [email protected] (W.-T.K.); [email protected] (C.P.); [email protected] (H.L.) 2 Korea Aerospace Research Institute, Daejeon 34133, Korea; [email protected] * Correspondence: [email protected]; Tel.: +82-62-715-3231 Received: 24 January 2019; Accepted: 26 March 2019; Published: 28 March 2019 Abstract: This paper presents a high full well capacity (FWC) CMOS image sensor (CIS) for space applications. The proposed pixel design effectively increases the FWC without inducing overflow of photo-generated charge in a limited pixel area. An MOS capacitor is integrated in a pixel and accumulated charges in a photodiode are transferred to the in-pixel capacitor multiple times depending on the maximum incident light intensity. In addition, the modulation transfer function (MTF) and radiation damage effect on the pixel, which are especially important for space applications, are studied and analyzed through fabrication of the CIS. The CIS was fabricated using a 0.11 µm 1-poly 4-metal CIS process to demonstrate the proposed techniques and pixel design. A measured FWC of 103,448 electrons and MTF improvement of 300% are achieved with 6.5 µm pixel pitch. Keywords: CMOS image sensors; wide dynamic range; multiple charge transfer; space applications; radiation damage effects 1. Introduction Imaging devices are essential components in the space environment for a range of applications including earth observation, star trackers on satellites, lander and rover cameras [1]. -

Irix 45Mm F1.4

Explore the magic of medium format photography with the Irix 45mm f/1.4 lens equipped with the native mount for Fujifilm GFX cameras! The Irix Lens brand introduces a standard 45mm wide-angle lens with a dedicated mount that can be used with Fujifilm GFX series cameras equipped with medium format sensors. Digital medium format is a nod to traditional analog photography and a return to the roots that defined the vividness and quality of the image captured in photos. Today, the Irix brand offers creators, who seek iconic image quality combined with mystical vividness, a tool that will allow them to realize their wildest creative visions - the Irix 45mm f/1.4 G-mount lens. It is an innovative product because as a precursor, it paves the way for standard wide-angle lenses with low aperture, which are able to cope with medium format sensors. The maximum aperture value of f/1.4 and the sensor size of Fujifilm GFX series cameras ensure not only a shallow depth of field, but also smooth transitions between individual focus areas and a high dynamic range. The wide f/1.4 aperture enables you to capture a clear background separation and work in low light conditions, and thanks to the excellent optical performance, which consists of high sharpness, negligible amount of chromatic aberration and great microcontrast, this lens can successfully become the most commonly used accessory that will help you create picturesque shots. The Irix 45mm f/1.4 GFX is a professional lens designed for Fujifilm GFX cameras. It has a high-quality construction, based on the knowledge of Irix Lens engineers gained during the design and production of full-frame lenses. -

Lecture Notes 3 Charge-Coupled Devices (CCDs) – Part II • CCD

Lecture Notes 3 Charge-Coupled Devices (CCDs) – Part II • CCD array architectures and pixel layout ◦ One-dimensional CCD array ◦ Two-dimensional CCD array • Smear • Readout circuits • Anti-blooming, electronic shuttering, charge reset operation • Window of interest, pixel binning • Pinned photodiode EE 392B: CCDs–Part II 3-1 One-Dimensional (Linear) CCD Operation A. Theuwissen, “Solid State Imaging with Charge-Coupled Devices,” Kluwer (1995) • A line of photodiodes or photogates is used for photodetection • After integration, charge from the entire row is transferred in parallel to the horizontal CCD (HCCD) through transfer gates • New integration period begins while charge packets are transferred through the HCCD (serial transfer) to the output readout circuit (to be discussed later) • The scene can be mechanically scanned at a speed commensurate with the pixel size in the vertical direction to obtain 2D imaging • Applications: scanners, scan-and-print photocopiers, fax machines, barcode readers, silver halide film digitization, DNA sequencing • Advantages: low cost (small chip size) Two-Dimensional (Area) CCD • Frame transfer CCD (FT-CCD) ◦ Full frame CCD • Interline transfer CCD (IL-CCD) • Frame-interline transfer CCD (FIT-CCD) • Time-delay-and-integration CCD (TDI-CCD) Frame Transfer CCD [Figure: light-sensitive CCD array, frame-store CCD array, horizontal CCD, and output amplifier; operation timeline showing integration, vertical shift, and horizontal shift over time] Pixel Layout – FT-CCD D. N. Nichols, W. Chang, B. C. Burkey, E. G. Stevens, E. A. Trabka, D. -

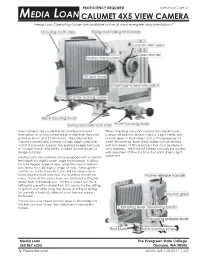

Basic View Camera

PROFICIENCY REQUIRED Operating Guide for MEDIA LOAN CALUMET 4X5 VIEW CAMERA Media Loan Operating Guides are available online at www.evergreen.edu/medialoan/ View cameras are usually tripod mounted and lend themselves to a more contemplative style than the more portable 35mm and 2 1/4 formats. The Calumet 4x5 Standard model view camera is a lightweight, portable tool that produces superior, fine grained images because of its large format and ability to adjust for a minimum of image distortion. When checking out a 4x5 camera from Media Loan, patrons will need to obtain a tripod, a light meter, one or both types of film holders, and a changing bag for sheet film loading. Each sheet holder can be loaded with two sheets of film, a process that must be done in total darkness. The Polaroid holders can only be loaded with one sheet of film at a time, but each sheet is light protected. Media Loan's 4x5 cameras come equipped with a 150mm lens which is a slightly wider angle than normal. It allows for a 44 degree angle of view, while the normal 165mm lens allows for a 40 degree angle of view. Although the controls on each of Media Loan's 4x5 lenses may vary in terms of placement and style, the functions remain the same. Some of the lenses have an additional setting for strobe flash or flashbulb use. On these lenses, use the X setting for use with a strobe flash (It’s crucial for the setting to remain on X while using the studio) and the M setting for use with a flashbulb (Media Loan does not support flashbulbs).
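The 44 and 40 degree figures quoted in the guide are consistent with the standard thin-lens angle-of-view relation, angle = 2·atan(d / (2f)), for a lens focused at infinity. The sketch below is an illustration under stated assumptions, not part of the guide: the relation itself and the 120 mm frame dimension (roughly the long side of the usable image area on 4x5 sheet film) are assumptions, and `angle_of_view_deg` is a hypothetical helper name.

```python
import math

# Assumption: about 120 mm usable image length on the long side of 4x5 film.
# This value does not appear in the guide.
FRAME_LONG_SIDE_MM = 120.0


def angle_of_view_deg(frame_dimension_mm, focal_length_mm):
    """Angle of view in degrees for a lens focused at infinity.

    Uses angle = 2 * atan(d / (2 * f)), where d is the frame dimension
    and f is the focal length, both in millimetres.
    """
    return math.degrees(2.0 * math.atan(frame_dimension_mm / (2.0 * focal_length_mm)))


for focal_length_mm in (150.0, 165.0):
    angle = angle_of_view_deg(FRAME_LONG_SIDE_MM, focal_length_mm)
    print(f"{focal_length_mm:.0f} mm lens: {angle:.1f} degrees")
```

Under these assumptions the 150mm lens comes out near 44 degrees and the 165mm lens near 40 degrees, in line with the guide; a different assumed frame dimension (for example the diagonal) would give larger angles.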