Task Scheduling in Conversationalmulticore Speech

Total Page:16

File Type:pdf, Size:1020Kb

Load more

Recommended publications

-

Embedded Systems

Sri Chandrasekharendra Saraswathi Viswa Maha Vidyalaya Department of Electronics and Communication Engineering STUDY MATERIAL for III rd Year VI th Semester Subject Name: EMBEDDED SYSTEMS Prepared by Dr.P.Venkatesan, Associate Professor, ECE, SCSVMV University Sri Chandrasekharendra Saraswathi Viswa Maha Vidyalaya Department of Electronics and Communication Engineering PRE-REQUISITE: Basic knowledge of Microprocessors, Microcontrollers & Digital System Design OBJECTIVES: The student should be made to – Learn the architecture and programming of ARM processor. Be familiar with the embedded computing platform design and analysis. Be exposed to the basic concepts and overview of real time Operating system. Learn the system design techniques and networks for embedded systems to industrial applications. Sri Chandrasekharendra Saraswathi Viswa Maha Vidyalaya Department of Electronics and Communication Engineering SYLLABUS UNIT – I INTRODUCTION TO EMBEDDED COMPUTING AND ARM PROCESSORS Complex systems and micro processors– Embedded system design process –Design example: Model train controller- Instruction sets preliminaries - ARM Processor – CPU: programming input and output- supervisor mode, exceptions and traps – Co- processors- Memory system mechanisms – CPU performance- CPU power consumption. UNIT – II EMBEDDED COMPUTING PLATFORM DESIGN The CPU Bus-Memory devices and systems–Designing with computing platforms – consumer electronics architecture – platform-level performance analysis - Components for embedded programs- Models of programs- -

1999 Nendo Supercompiler Te

NEDO-PR- 9 90 3 x-A°-=i wu ? '7-z / □ ixomgffi'g. NEDOBIS T93018 #f%4vl/4=- — 'I'Jff&'a fi ISEDQ mb'Sa 3a 0-7 rx-/<-n>/<^7-T^yovOIElS9f%l TR&1 2^3^ A 249H illK t>*^#itafc?iJI+WE$>i=t^<bLfc3>/U7S#r$u:|-^n S & - *%#$............. (3) (5) m #.......... (7) Abstract............ (9) m #?'Hb3>/W3## i 1.1 # i 1.2 g ##Wb3 7 ©&ffitib[p].................................................... 3 1.2.1 ............................................................................... 3 1.2.2 ^ y ........................................................................ 18 1.2.3 OpenMP 43,fctf OpenMP ©x-^fcKlfOttfcifeSi................. 26 1.2.4 #%3 >;W ly —^ 3 .............................................. 39 1.2.5 VLIW ^;i/3£#Hb.................................................................... 48 1.2.6 ........................ 62 1.2.7 3>;W7 <b)## p/i-f-zL —- > py—............... 72 1.2.8 3 >;w 3©##E#m©##mm.............................................. 82 1.2.9 3°3-tr y +1 © lb fp] : Stanford Hydra Chip Multiprocessor 95 1.2.10 s^^Eibrniiis : hpca -6 .............................. 134 1.3 # b#^Hb3>/W7^#m%................. 144 1.3.1 m # 144 1.3.2 145 1.3.3 m 152 1.3.4 152 2$ j£M^>E3 >hajL-T-^ 155 2.1 M E......................................................................................................................... 155 2.2 j£M5>i(3 > b° j-—x 4“ >^"©&Hlib[p] ........................................................... 157 2.2.. 1 j[£WtS(3>h0a.-:r^ >^'©51^............................................................ 157 2.2.2 : Grid Forum99, U.C.B., JavaGrande Portals Group Meeting iZjotf & ti^jSbfp] — 165 2.2.3 : Supercomputing99 iZlotf & ££l/tribip].................... 182 2.2.4 Grid##43Z^^m^m^^7"A Globus ^©^tn1 G: Hit* £ tiWSbfp] ---- 200 (1) 2.3 ><y(D mmtmrn...... -

Thread Scheduling in Multi-Core Operating Systems Redha Gouicem

Thread Scheduling in Multi-core Operating Systems Redha Gouicem To cite this version: Redha Gouicem. Thread Scheduling in Multi-core Operating Systems. Computer Science [cs]. Sor- bonne Université, 2020. English. tel-02977242 HAL Id: tel-02977242 https://hal.archives-ouvertes.fr/tel-02977242 Submitted on 24 Oct 2020 HAL is a multi-disciplinary open access L’archive ouverte pluridisciplinaire HAL, est archive for the deposit and dissemination of sci- destinée au dépôt et à la diffusion de documents entific research documents, whether they are pub- scientifiques de niveau recherche, publiés ou non, lished or not. The documents may come from émanant des établissements d’enseignement et de teaching and research institutions in France or recherche français ou étrangers, des laboratoires abroad, or from public or private research centers. publics ou privés. Ph.D thesis in Computer Science Thread Scheduling in Multi-core Operating Systems How to Understand, Improve and Fix your Scheduler Redha GOUICEM Sorbonne Université Laboratoire d’Informatique de Paris 6 Inria Whisper Team PH.D.DEFENSE: 23 October 2020, Paris, France JURYMEMBERS: Mr. Pascal Felber, Full Professor, Université de Neuchâtel Reviewer Mr. Vivien Quéma, Full Professor, Grenoble INP (ENSIMAG) Reviewer Mr. Rachid Guerraoui, Full Professor, École Polytechnique Fédérale de Lausanne Examiner Ms. Karine Heydemann, Associate Professor, Sorbonne Université Examiner Mr. Etienne Rivière, Full Professor, University of Louvain Examiner Mr. Gilles Muller, Senior Research Scientist, Inria Advisor Mr. Julien Sopena, Associate Professor, Sorbonne Université Advisor ABSTRACT In this thesis, we address the problem of schedulers for multi-core architectures from several perspectives: design (simplicity and correct- ness), performance improvement and the development of application- specific schedulers. -

Linux CPU Schedulers: CFS and Muqss Comparison

Umeå University 2021-06-14 Bachelor’s Thesis In Computing Science Linux CPU Schedulers: CFS and MuQSS Comparison Name David Shakoori Gustafsson Supervisor Anna Jonsson Examinator Ola Ringdahl CFS and MuQSS Comparison Abstract The goal of this thesis is to compare two process schedulers for the Linux operating system. In order to provide a responsive and interactive user ex- perience, an efficient process scheduling algorithm is important. This thesis seeks to explain the potential performance differences by analysing the sched- ulers’ respective designs. The two schedulers that are tested and compared are Con Kolivas’s MuQSS and Linux’s default scheduler, CFS. They are tested with respect to three main aspects: latency, turn-around time and interactivity. Latency is tested by using benchmarking software, the turn- around time by timing software compilation, and interactivity by measuring video frame drop percentages under various background loads. These tests are performed on a desktop PC running Linux OpenSUSE Leap 15.2, using kernel version 5.11.18. The test results show that CFS manages to keep a generally lower latency, while turn-around times differs little between the two. Running the turn-around time test’s compilation using a single process gives MuQSS a small advantage, while dividing the compilation evenly among the available logical cores yields little difference. However, CFS clearly outper- forms MuQSS in the interactivity test, where it manages to keep frame drop percentages considerably lower under each tested background load. As is apparent by the results, Linux’s current default scheduler provides a more responsive and interactive experience within the testing conditions, than the alternative MuQSS. -

Cross-Layer Resource Control and Scheduling for Improving Interactivity in Android

SOFTWARE—PRACTICE AND EXPERIENCE Softw. Pract. Exper. 2014; 00:1–22 Published online in Wiley InterScience (www.interscience.wiley.com). DOI: 10.1002/spe Cross-Layer Resource Control and Scheduling for Improving Interactivity in Android ∗ † Sungju Huh1, Jonghun Yoo2 and Seongsoo Hong1,2,3, , 1Department of Transdisciplinary Studies, Graduate School of Convergence Science and Technology, Seoul National University, Suwon-si, Gyeonggi-do, Republic of Korea 2Department of Electrical and Computer Engineering, Seoul National University, Seoul, Republic of Korea 3Advanced Institutes of Convergence Technology, Suwon-si, Gyeonggi-do, Republic of Korea SUMMARY Android smartphones are often reported to suffer from sluggish user interactions due to poor interactivity. This is partly because Android and its task scheduler, the completely fair scheduler (CFS), may incur perceptibly long response time to user-interactive tasks. Particularly, the Android framework cannot systemically favor user-interactive tasks over other background tasks since it does not distinguish between them. Furthermore, user-interactive tasks can suffer from high dispatch latency due to the non-preemptive nature of CFS. To address these problems, this paper presents framework-assisted task characterization and virtual time-based CFS. The former is a cross-layer resource control mechanism between the Android framework and the underlying Linux kernel. It identifies user-interactive tasks at the framework-level, by using the notion of a user-interactive task chain. It then enables the kernel scheduler to selectively promote the priorities of worker tasks appearing in the task chain to reduce the preemption latency. The latter is a cross-layer refinement of CFS in terms of interactivity. It allows a task to be preempted at every predefined period. -

Abkürzungs-Liste ABKLEX

Abkürzungs-Liste ABKLEX (Informatik, Telekommunikation) W. Alex 1. Juli 2021 Karlsruhe Copyright W. Alex, Karlsruhe, 1994 – 2018. Die Liste darf unentgeltlich benutzt und weitergegeben werden. The list may be used or copied free of any charge. Original Point of Distribution: http://www.abklex.de/abklex/ An authorized Czechian version is published on: http://www.sochorek.cz/archiv/slovniky/abklex.htm Author’s Email address: [email protected] 2 Kapitel 1 Abkürzungen Gehen wir von 30 Zeichen aus, aus denen Abkürzungen gebildet werden, und nehmen wir eine größte Länge von 5 Zeichen an, so lassen sich 25.137.930 verschiedene Abkür- zungen bilden (Kombinationen mit Wiederholung und Berücksichtigung der Reihenfol- ge). Es folgt eine Auswahl von rund 16000 Abkürzungen aus den Bereichen Informatik und Telekommunikation. Die Abkürzungen werden hier durchgehend groß geschrieben, Akzente, Bindestriche und dergleichen wurden weggelassen. Einige Abkürzungen sind geschützte Namen; diese sind nicht gekennzeichnet. Die Liste beschreibt nur den Ge- brauch, sie legt nicht eine Definition fest. 100GE 100 GBit/s Ethernet 16CIF 16 times Common Intermediate Format (Picture Format) 16QAM 16-state Quadrature Amplitude Modulation 1GFC 1 Gigabaud Fiber Channel (2, 4, 8, 10, 20GFC) 1GL 1st Generation Language (Maschinencode) 1TBS One True Brace Style (C) 1TR6 (ISDN-Protokoll D-Kanal, national) 247 24/7: 24 hours per day, 7 days per week 2D 2-dimensional 2FA Zwei-Faktor-Authentifizierung 2GL 2nd Generation Language (Assembler) 2L8 Too Late (Slang) 2MS Strukturierte -

© Groupe Eyrolles, 2012, ISBN : 978-2-212-13382-0 Avant-Propos

© Groupe Eyrolles, 2012, ISBN : 978-2-212-13382-0 Avant-propos La mise au point d’un système temps réel, construit sur des solutions logicielles libres peut sem- bler a priori assez compliquée. Quel noyau choisir ? Quelle version ? Faut-il ajouter des extensions ? Quelles sont les per- formances que l’on peut en espérer ? Quel sera le comportement du système en cas de pics de charge logicielle ? De charge d’interruption ? Toutes ces questions – qui se poseront à un moment ou un autre – peuvent décourager l’architecte système face à la multitude d’offres libres ou commerciales se réclamant de « Linux temps réel ». Dans ce livre, j’ai souhaité aider le concepteur, le chef de projet, le développeur, à bien saisir les éléments à prendre en considération lors de la mise au point d’un système où les performances d’ordonnancement et les temps de réponse aux interruptions sont importants. Le propos s’articu- lera donc sur le fonctionnement et les possibilités temps réel de Linux et de ses extensions libres. Nous n’aborderons ni les spécificités des distributions Linux (Ubuntu, Debian, Fedora, etc.) qui encadrent le noyau standard, ni les solutions commerciales (comme Suse Linux Enterprise Real Time, ou Red Hat Enterprise MRG) construites autour de Linux et de ses extensions temps réel auxquelles elles apportent essentiellement un support technique et une validation sur des plates- formes définies. Dans un premier temps (chapitres 1 et 2), nous examinerons quelques concepts de base concer- nant le multitâche sous Linux et les interactions entre l’espace utilisateur (dans lequel les applications s’exécutent) et l’espace kernel (contenant le code du noyau proprement dit). -

Scheduling of Real- Time Processes, Strategies and Analysis

FYS 4220 / 9220 – 2011 / #7 Real Time and Embedded Data Systems and Computing Scheduling of Real- Time processes, strategies and analysis T.B. Skaali, Department of Physics, University of Oslo) Some definitions to start with • The scheduler is the component of the kernel that selects which process to run next. The scheduler (or process scheduler, as it is sometimes called) can be viewed as the code that divides the finite resource of processor time between the runnable processes on a system. • Multitasking operating systems come in two flavors: cooperative multitasking and preemptive multitasking. Linux, like all Unix variants and most modern operating systems, provides preemptive multitasking. In preemptive multitasking, the scheduler decides when a process is to cease running and a new process is to resume running. The act of involuntarily suspending a running process is called preemption. The time a process runs before it is preempted is predetermined, and is called the timeslice of the process. The timeslice, in effect, gives each process a slice of the processor's time. Managing the timeslice enables the scheduler to make global scheduling decisions for the system. It also prevents any one process from monopolizing the system – Conversely, in cooperative multitasking, a process does not stop running until it voluntary decides to do so. This approach has many shortcomings T.B. Skaali, Department of Physics, University of Oslo FYS 4220/9220 - 2011 - Lecture #7 2 The challenge of scheduling in Real-Time systems • Scheduling is the problem of assigning a set of processes (tasks) to a set of resources subject to a set of constraints. -

A Complete Guide to Linux Process Scheduling

A complete guide to Linux process scheduling Nikita Ishkov University of Tampere School of Information Sciences Computer Science M.Sc. Thesis Supervisor: Martti Juhola February 2015 University of Tampere School of Information Sciences Computer Science Nikita Ishkov M.Sc. Thesis, 59 pages February 2015 The subject of this thesis is process scheduling in wide purpose operating systems. For many years kernel hackers all over the world tried to accomplish the seemingly infeasible task of achieving good interaction on desktop systems and low latencies on heavily loaded server machines. Some progress has been made in this area since the rise of free software, but, in opinion of many, it is still far from perfect. Lots of beginner operating system enthusiasts find the existing solutions too complex to understand and, in light of almost complete lack of documentation along with common hostility of active kernel developers towards rookies, impossible to get hands on. Anyone who has the courage to wade into the dragon infested layer that is the scheduler, should be aware of the ideas behind current implementations before making any contributions. That is what this thesis is about – showing how things work under the hood and how they developed to be like this. Every decision behind each concept in a kernel of an OS has its history and meaning. Here I will guide you through process scheduling mechanisms in currently stable Linux kernel as an object lesson on the matter. The work starts with an overview of the essentials of process abstraction in Linux, and continues with detailed code-level description of scheduling techniques involved in past and present kernels. -

ESW08-Scheduling, Interrupt

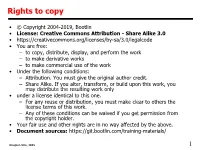

Rights to copy • © Copyright 2004-2019, Bootlin • License: Creative Commons Attribution - Share Alike 3.0 • https://creativecommons.org/licenses/by-sa/3.0/legalcode • You are free: – to copy, distribute, display, and perform the work – to make derivative works – to make commercial use of the work • Under the following conditions: – Attribution. You must give the original author credit. – Share Alike. If you alter, transform, or build upon this work, you may distribute the resulting work only • under a license identical to this one. – For any reuse or distribution, you must make clear to others the license terms of this work. – Any of these conditions can be waived if you get permission from the copyright holder. • Your fair use and other rights are in no way affected by the above. • Document sources: https://git.bootlin.com/training-materials/ Dongkun Shin, SKKU 1 8. Task scheduling Dongkun Shin, SKKU 2 Process, thread? • Confusion about the terms process, thread and task • A process is created using fork() and is composed of – An address space, which contains the program code, data, stack, shared libraries, etc. – One thread, that starts executing the main() function. – Upon creation, a process contains one thread • Additional threads can be created inside an existing process, using pthread_create() – They run in the same address space as the initial thread of the process – They start executing a function passed as argument to pthread_create() • The kernel represents each thread running in the system by a structure of type struct task_struct • From a scheduling point of view, it makes no difference between the initial thread of a process and all additional threads created dynamically using pthread_create() Dongkun Shin, SKKU 3 A thread life Dongkun Shin, SKKU 4 Execution of system calls Dongkun Shin, SKKU 5 Task Schedulers and Load Balancing • The OS scheduler assigns tasks to cores by assessing a number of parameters, such as task priority, how much time the task has had, and how long it was since last run. -

Computer Programming and Cyber Security for Beginners

Computer Programming and Cyber Security for Beginners This Book Includes : Python Machine Learning , SQL , Linux , Hacking with Kali Linux , Ethical Hacking . Coding and Cybersecurity Fundamentals Zach Codings Download the Audio Book Version of This Book for FREE If you love listening to audio books on-the-go, I have great news for you. You can download the audio book version of this book for FREE just by signing up for a FREE 30-day audible trial! See below for more details! Audible Trial Benefits As an audible customer, you will receive the below benefits with your 30- day free trial: FREE audible book copy of this book After the trial, you will get 1 credit each month to use on any audiobook Your credits automatically roll over to the next month if you don’t use them Choose from Audible’s 200,000 + titles Listen anywhere with the Audible app across multiple devices Make easy, no-hassle exchanges of any audiobook you don’t love Keep your audiobooks forever, even if you cancel your membership And much more Click the links below to get started! For Audible US For Audible UK For Audible FR For Audible DE © Copyright 2019 by Zach Codings All rights reserved. The content contained within this book may not be reproduced, duplicated or transmitted without direct written permission from the author or the publisher. Under no circumstances will any blame or legal responsibility be held against the publisher, or author, for any damages, reparation, or monetary loss due to the information contained within this book, either directly or indirectly. -

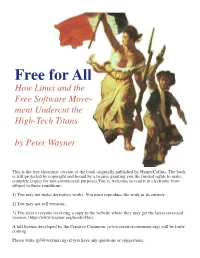

Free for All How Linux and the Free Software Move- Ment Undercut the High-Tech Titans

Free for All How Linux and the Free Software Move- ment Undercut the High-Tech Titans by Peter Wayner This is the free electronic version of the book originally published by HarperCollins. The book is still protected by copyright and bound by a license granting you the limited rights to make complete copies for non-commercial purposes.You’re welcome to read it in electronic form subject to these conditions: 1) You may not make derivative works. You must reproduce the work in its entirety. 2) You may not sell versions. 3) You refer everyone receiving a copy to the website where they may get the latest corrected version. (http://www.wayner.org/books/ffa/). A full license developed by the Creative Commons (www.creativecommons.org) will be forth- coming. Please write ([email protected]) if you have any questions or suggestions. Disappearing Cryptography, 2nd Edition Information Hiding: Steganography & Watermarking by Peter Wayner ISBN 1-55860-769-2 $44.95 To order, visit: http://www.wayner.org/books/discrypt2/ Disappearing Cryptography, Second Edition describes how to take words, sounds, or images and hide them in digital data so they look like other words, sounds, or images. When used properly, this powerful technique makes it almost impossible to trace the author and the recipient of a message. Conversations can be sub- merged in the flow of information through the Internet so that no one can know if a conversation exists at all. This full revision of the best-selling first edition “Disappearing Cryptography is a witty and enter- describes a number of different techniques to hide taining look at the world of information hiding.