Evaluation of the Foveon X3 Sensor for Astronomy

Total Page:16

File Type:pdf, Size:1020Kb

Recommended publications

Management of Large Sets of Image Data Capture, Databases, Image Processing, Storage, Visualization Karol Kozak

Management of large sets of image data: Capture, Databases, Image Processing, Storage, Visualization. Karol Kozak. 1st edition © 2014 Karol Kozak & bookboon.com, ISBN 978-87-403-0726-9.

Contents: 1 Digital image; 2 History of digital imaging; 3 Amount of produced images – is it danger?; 4 Digital image and privacy; 5 Digital cameras; 5.1 Methods of image capture; 6 Image formats; 7 Image Metadata – data about data; 8 Interactive visualization (IV); 9 Basic of image processing; 10 Image Processing software; 11 Image management and image databases; 12 Operating system (os) and images; 13 Graphics processing unit (GPU); 14 Storage and archive; 15 Images in different disciplines; 15.1 Microscopy; 15.2 Medical imaging; 15.3 Astronomical images; 15.4 Industrial imaging; 16 Selection of best digital images; References.

What Resolution Should Your Images Be?

What Resolution Should Your Images Be? The best way to determine the optimum resolution is to think about the final use of your images. For publication you’ll need the highest resolution, for desktop printing lower, and for web or classroom use, lower still. The following table is a general guide; detailed explanations follow.

| Use | Pixel Size | Resolution | Preferred File Format | Approx. File Size |
| --- | --- | --- | --- | --- |
| Projected in class | About 1024 pixels wide for a horizontal image; or 768 pixels high for a vertical one | 102 DPI | JPEG | 300–600 K |
| Web site | About 400–600 pixels wide for a large image; 100–200 for a thumbnail image | 72 DPI | JPEG | 20–200 K |
| Printed in a book or art magazine | Multiply intended print size by resolution; e.g. an image to be printed as 6” W x 4” H would be 1800 x 1200 pixels. | 300 DPI | EPS or TIFF | 6–10 MB |
| Printed on a laserwriter | Multiply intended print size by resolution; e.g. an image to be printed as 6” W x 4” H would be 1200 x 800 pixels. | 200 DPI | EPS or TIFF | 2–3 MB |

Digital Camera Photos. Digital cameras have a range of preset resolutions which vary from camera to camera.

| Designation | Resolution | Max. image size at 300 DPI | Printable size on a color printer |
| --- | --- | --- | --- |
| 4 Megapixels | 2272 x 1704 pixels | 7.5” x 5.7” | 12” x 9” |
| 3 Megapixels | 2048 x 1536 pixels | 6.8” x 5” | 11” x 8.5” |
| 2 Megapixels | 1600 x 1200 pixels | 5.3” x 4” | 6” x 4” |
| 1 Megapixel | 1024 x 768 pixels | 3.5” x 2.5” | 5” x 3 |

If you can, you generally want to shoot larger than you need, then sharpen the image and reduce its size in Photoshop.
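The "multiply intended print size by resolution" rule from the guide above can be sketched in a few lines of Python. The function names `pixels_for_print` and `print_size_at` are hypothetical helpers introduced only for this illustration.

```python
def pixels_for_print(width_in, height_in, dpi):
    """Pixel dimensions needed to print width_in x height_in inches at dpi."""
    return round(width_in * dpi), round(height_in * dpi)


def print_size_at(width_px, height_px, dpi):
    """Largest print size in inches for a given pixel size at dpi."""
    return width_px / dpi, height_px / dpi


# The 6" x 4" examples from the guide: book quality and laserwriter quality.
print(pixels_for_print(6, 4, 300))
print(pixels_for_print(6, 4, 200))

# A 2272 x 1704 camera image printed at 300 DPI.
print(print_size_at(2272, 1704, 300))
```

The inverse helper shows where the "max. image size at 300 DPI" figures for camera presets come from: divide each pixel dimension by the target resolution.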

Foveon FO18-50-F19 4.5 MP X3 Direct Image Sensor

Foveon® FO18-50-F19 4.5 MP X3 Direct Image Sensor

The Foveon FO18-50-F19 is a 1/1.8-inch CMOS direct image sensor that incorporates breakthrough Foveon X3 technology. Foveon X3 direct image sensors capture full-measured color images through a unique stacked pixel sensor design. By capturing full-measured color images, the need for color interpolation and artifact-reducing blur filters is eliminated. The Foveon FO18-50-F19 features the powerful VPS (Variable Pixel Size) capability. VPS provides the on-chip capability of grouping neighboring pixels together to form larger pixels that are optimal for high frame rate, reduced noise, or dual mode still/video applications. Other advanced features include: low fixed pattern noise, ultra-low power consumption, and integrated digital control.

Features

Foveon X3® Technology
• A stack of three pixels captures superior color fidelity by measuring full color at every point in the captured image.
• Images have improved sharpness and immunity to color artifacts (moiré).
• Foveon X3 technology directly converts light of all colors into useful signal information at every point in the captured image; no light absorbing filters are used to block out light.

Variable Pixel Size (VPS) Capability
• Neighboring pixels can be grouped together on-chip to obtain the effect of a larger pixel.
• Enables flexible video capture at a variety of resolutions.
• Enables higher ISO mode at lower resolutions.
• Reduces noise by combining pixels.

On-Chip A/D Conversion
• Integrated 12-bit A/D converter running at up to 40 MHz.

[Block diagram: pixel array of 1440 columns x 1088 rows x 3 layers, with row readout control, row reset control, analog biases, analog supplies, and digital supplies.]
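The effect of grouping neighboring pixels into a larger pixel can be illustrated in software. This is only a sketch of the general binning idea: the datasheet describes VPS as an on-chip capability and does not say how the grouping is implemented, so the summing rule and the helper name `bin_pixels` are assumptions made for illustration.

```python
def bin_pixels(image, factor):
    """Sum each factor x factor block of a 2-D list into one larger pixel.

    Assumption: grouped pixels are combined by summation; the real on-chip
    VPS circuit may combine charge or signal differently.
    """
    rows, cols = len(image), len(image[0])
    if rows % factor or cols % factor:
        raise ValueError("image dimensions must be multiples of factor")
    binned = []
    for r in range(0, rows, factor):
        out_row = []
        for c in range(0, cols, factor):
            total = sum(
                image[r + dr][c + dc]
                for dr in range(factor)
                for dc in range(factor)
            )
            out_row.append(total)
        binned.append(out_row)
    return binned


layer = [
    [1, 2, 3, 4],
    [5, 6, 7, 8],
]
print(bin_pixels(layer, 2))
```

Each output value collects the signal of four input pixels, which is why binning trades resolution for signal level and lower relative noise.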

Single-Pixel Imaging Via Compressive Sampling

Single-Pixel Imaging via Compressive Sampling [Building simpler, smaller, and less-expensive digital cameras]. Marco F. Duarte, Mark A. Davenport, Dharmpal Takhar, Jason N. Laska, Ting Sun, Kevin F. Kelly, and Richard G. Baraniuk. IEEE Signal Processing Magazine, March 2008, p. 83. Digital Object Identifier 10.1109/MSP.2007.914730. © 2008 IEEE.

Humans are visual animals, and imaging sensors that extend our reach (cameras) have improved dramatically in recent times thanks to the introduction of CCD and CMOS digital technology. Consumer digital cameras in the megapixel range are now ubiquitous thanks to the happy coincidence that the semiconductor material of choice for large-scale electronics integration (silicon) also happens to readily convert photons at visual wavelengths into electrons. On the contrary, imaging at wavelengths where silicon is blind is considerably more complicated, bulky, and expensive. Thus, for comparable resolution, a US$500 digital camera for the visible becomes a US$50,000 camera for the infrared.

In this article, we present a new approach to building simpler, smaller, and cheaper digital cameras that can operate efficiently across a much broader spectral range than conventional silicon-based cameras. Our approach fuses a new camera architecture based on a digital micromirror device (DMD; see “Spatial Light Modulators”) with the new mathematical theory and algorithms of compressive sampling (CS; see “CS in a Nutshell”). CS combines sampling and compression into a single non-…

Our “single-pixel” CS camera architecture is basically an optical computer (comprising a DMD, two lenses, a single photon detector, and an analog-to-digital (A/D) converter) that computes random linear measurements of the scene under view.
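The phrase "random linear measurements of the scene" can be made concrete with a small simulation. This is a minimal sketch under stated assumptions: each mirror pattern is modeled as a random 0/1 mask, the detector reading is the sum of the scene pixels the mask lets through, and the helper names `random_masks` and `measure` are hypothetical. The reconstruction step of compressive sampling is not shown.

```python
import random


def random_masks(num_measurements, num_pixels, seed=0):
    """One random 0/1 pattern per measurement (assumed model of DMD states)."""
    rng = random.Random(seed)
    return [
        [rng.randint(0, 1) for _ in range(num_pixels)]
        for _ in range(num_measurements)
    ]


def measure(scene, masks):
    """Each measurement is the inner product of one mask with the scene."""
    return [sum(m * x for m, x in zip(mask, scene)) for mask in masks]


# A flattened 4 x 4 "scene" observed through 6 random patterns.
scene = [0, 0, 9, 9, 0, 0, 9, 9, 1, 1, 0, 0, 1, 1, 0, 0]
masks = random_masks(6, len(scene))
readings = measure(scene, masks)
print(len(readings), "measurements for", len(scene), "pixels")
```

The point of the sketch is the data flow: a single detector produces one number per pattern, and fewer numbers than pixels are recorded.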

Spatial Frequency Response of Color Image Sensors: Bayer Color Filters and Foveon X3 Paul M

Spatial Frequency Response of Color Image Sensors: Bayer Color Filters and Foveon X3. Paul M. Hubel, John Liu and Rudolph J. Guttosch. Foveon, Inc., Santa Clara, California.

Abstract. We compared the Spatial Frequency Response (SFR) of image sensors that use the Bayer color filter pattern and Foveon X3 technology for color image capture. Sensors for both consumer and professional cameras were tested. The results show that the SFR for Foveon X3 sensors is up to 2.4x better. In addition to the standard SFR method, we also applied the SFR method using a red/blue edge. In this case, the X3 SFR was 3–5x higher than that for Bayer filter pattern devices.

Introduction. In their native state, the image sensors used in digital image capture devices are black-and-white. To enable color capture, small color filters are placed on top of each photodiode. The filter pattern most often used is derived in some way from the Bayer pattern [1], a repeating array of red, green, and blue pixels that lie …

Bayer Background. The Bayer pattern, also known as a Color Filter Array (CFA) or a mosaic pattern, is made up of a repeating array of red, green, and blue filter material deposited on top of each spatial location in the array (figure 1). These tiny filters enable what is normally a black-and-white sensor to create color images.

R G R G
G B G B
R G R G
G B G B

Figure 1. Typical Bayer filter pattern showing the alternate sampling of red, green and blue pixels.

By using 2 green filtered pixels for every red or blue, the Bayer pattern is designed to maximize perceived sharpness in the luminance channel, composed mostly of green information.
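The filter layout of Figure 1 and its two-to-one green ratio can be reproduced with a short Python sketch. The function name `bayer_pattern` is a hypothetical helper; the layout follows the R G R G / G B G B arrangement shown in the figure, and other Bayer variants start on a different corner.

```python
from collections import Counter


def bayer_pattern(rows, cols):
    """Filter letters for a Bayer mosaic with red at the top-left corner."""
    pattern = []
    for r in range(rows):
        row = []
        for c in range(cols):
            if r % 2 == 0 and c % 2 == 0:
                row.append("R")   # even row, even column
            elif r % 2 == 1 and c % 2 == 1:
                row.append("B")   # odd row, odd column
            else:
                row.append("G")   # the two remaining sites of each 2 x 2 cell
        pattern.append(row)
    return pattern


grid = bayer_pattern(4, 4)
for row in grid:
    print(" ".join(row))

# Count how many sites carry each filter color.
print(Counter(letter for row in grid for letter in row))
```

Every 2 x 2 cell holds one red, one blue, and two green sites, so green is sampled twice as densely as either other color.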

One Is Enough. A Photograph Taken by the “Single-Pixel Camera” Built by Richard Baraniuk and Kevin Kelly of Rice University

One is Enough. A photograph taken by the “single-pixel camera” built by Richard Baraniuk and Kevin Kelly of Rice University. (a) A photograph of a soccer ball, taken by a conventional digital camera at 64 × 64 resolution. (b) The same soccer ball, photographed by a single-pixel camera. The image is derived mathematically from 1600 separate, randomly selected measurements, using a method called compressed sensing. (Photos courtesy of R. G. Baraniuk, Compressive Sensing [Lecture Notes], Signal Processing Magazine, July 2007. © 2007 IEEE.)

(What’s Happening in the Mathematical Sciences, p. 114)

Compressed Sensing Makes Every Pixel Count

Trash and computer files have one thing in common: compact is beautiful. But if you’ve ever shopped for a digital camera, you might have noticed that camera manufacturers haven’t gotten the message. A few years ago, electronic stores were full of 1- or 2-megapixel cameras. Then along came cameras with 3-megapixel chips, 10 megapixels, and even 60 megapixels.

Unfortunately, these multi-megapixel cameras create enormous computer files. So the first thing most people do, if they plan to send a photo by e-mail or post it on the Web, is to compact it to a more manageable size. Usually it is impossible to discern the difference between the compressed photo and the original with the naked eye (see Figure 1, next page). Thus, a strange dynamic has evolved, in which camera engineers cram more and more data onto a chip, while software engineers design cleverer and cleverer ways to get rid of it.

[Photo: Emmanuel Candes. (Photo courtesy of Emmanuel Candes.)]

In 2004, mathematicians discovered a way to bring this “arms race” to a halt.

Cameras

Cameras. Digital Visual Effects, Spring 2007. Yung-Yu Chuang, 2007/3/6, with slides by Fredo Durand, Brian Curless, Steve Seitz and Alexei Efros.

Outline
• Pinhole camera
• Film camera
• Digital camera
• Video camera

Camera trial #1 [diagram: scene, film]
Put a piece of film in front of an object.

Pinhole camera [diagram: scene, barrier, film]
Add a barrier to block off most of the rays.
• It reduces blurring
• The pinhole is known as the aperture
• The image is inverted

Shrinking the aperture
Why not make the aperture as small as possible?
• Less light gets through
• Diffraction effect

High-end commercial pinhole cameras: $200~$700

Adding a lens [diagram: scene, lens, film, “circle of confusion”]
A lens focuses light onto the film
• There is a specific distance at which objects are “in focus”
• Other points project to a “circle of confusion” in the image

Lenses
Thin lens equation:
• Any object point satisfying this equation is in focus
• Thin lens applet: http://www.phy.ntnu.edu.tw/java/Lens/lens_e.html

Exposure = aperture + shutter speed
• Aperture of diameter D restricts the range of rays (aperture may be on either side of the lens)
• Shutter speed is the amount of time that light is allowed to pass through the aperture

Exposure
• Two main parameters:
– Aperture (in f stop)
– Shutter speed (in fraction of a second)

Effects of shutter speeds
• Slower shutter speed => more light, but more motion blur
• Faster shutter speed freezes motion

Aperture
• Aperture is the diameter of the lens opening, usually specified by f-stop, f/D, a fraction of the focal length.
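The thin lens equation itself did not survive in the slide text above. The sketch below assumes the standard form 1/d_o + 1/d_i = 1/f, where d_o is the object distance, d_i the image distance, and f the focal length; these symbols and the helper names `image_distance` and `f_number` are introduced here for illustration and are not taken from the slides. The f-stop helper uses the f/D ratio the Aperture slide mentions.

```python
def image_distance(focal_length, object_distance):
    """Solve 1/d_o + 1/d_i = 1/f for d_i (all lengths in the same unit)."""
    if object_distance == focal_length:
        raise ValueError("object at the focal point: image forms at infinity")
    return 1.0 / (1.0 / focal_length - 1.0 / object_distance)


def f_number(focal_length, aperture_diameter):
    """f-stop as the ratio of focal length to aperture diameter (f/D)."""
    return focal_length / aperture_diameter


# A 50 mm lens focused on an object 2 m (2000 mm) away.
print(image_distance(50.0, 2000.0))

# The same lens with a 25 mm aperture opening.
print(f_number(50.0, 25.0))
```

Under this form of the equation, only objects at the matching distance are in focus on the film plane; points at other distances spread into the circle of confusion.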

Making the Transition from Film to Digital

TECHNICAL PAPER: Making the Transition from Film to Digital

Table of contents: Making the transition; The difference between grain and pixels; Exposure considerations; This won’t hurt a bit; High-bit images; Why would you want to use a high-bit image?; About raw files; Saving a raw file; Saving a JPEG file; Pros and cons; Reasons to shoot JPEG; Reasons to shoot raw; Raw converters; Reading histograms; About color balance; Noise reduction; Sharpening; It’s in the cards; A matter of black and white; Conclusion.

Photography became a reality in the 1840s. During this time, images were recorded on film that used particles of silver salts embedded in a physical substrate, such as acetate or gelatin. The grains of silver turned dark when exposed to light, and then a chemical fixer made that change more or less permanent. Cameras remained pretty much the same over the years with features such as a lens, a light-tight chamber to hold the film, and an aperture and shutter mechanism to control exposure.

But the early 1990s brought a dramatic change with the advent of digital technology. Instead of using grains of silver embedded in gelatin, digital photography uses silicon to record images as numbers. Computers process the images, rather than optical enlargers and tanks of often toxic chemicals. Chemically-developed wet printing processes have given way to prints made with inkjet printers, which squirt microscopic droplets of ink onto paper to create photographs.

[Photo: Snafellnesjokull Glacier Remnant.]

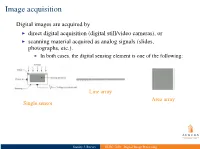

ELEC 7450 - Digital Image Processing Image Acquisition

ELEC 7450 - Digital Image Processing: Image Acquisition. Stanley J. Reeves.

Image acquisition
Digital images are acquired by
• direct digital acquisition (digital still/video cameras), or
• scanning material acquired as analog signals (slides, photographs, etc.).
• In both cases, the digital sensing element is one of the following: line array, area array, single sensor
• indirect imaging techniques, e.g., MRI (Fourier), CT (Backprojection)
– physical quantities other than intensities are measured
– computation leads to 2-D map displayed as intensity

Single sensor acquisition [figure]

Linear array acquisition [figure]

Array sensor acquisition
• Irradiance incident at each photo-site is integrated over time
• Resulting array of intensities is moved out of sensor array and into a buffer
• Quantized intensities are stored as a grayscale image

Two types of quantization:
• spatial: limited number of pixels
• gray-level: limited number of bits to represent intensity at a pixel
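Gray-level quantization, the second of the two types listed above, can be sketched as mapping a continuous intensity to one of 2^bits integer levels. The uniform rounding-down rule, the assumption that intensities are normalized to the range 0 to 1, and the helper name `quantize` are choices made for this illustration; the slides do not specify a particular quantizer.

```python
def quantize(intensity, bits):
    """Map an intensity in [0, 1] to an integer level in [0, 2**bits - 1]."""
    levels = 2 ** bits
    clipped = min(max(intensity, 0.0), 1.0)   # guard against out-of-range input
    # Uniform bins; the top edge (1.0) is folded into the highest level.
    return min(int(clipped * levels), levels - 1)


samples = [0.0, 0.1, 0.5, 0.9, 1.0]
for bits in (1, 2, 8):
    print(bits, "bits:", [quantize(s, bits) for s in samples])
```

With 1 bit every pixel becomes black or white; with 8 bits the same intensities spread over 256 levels, which is the usual grayscale image depth.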

Comparison of Color Demosaicing Methods Olivier Losson, Ludovic Macaire, Yanqin Yang

Comparison of color demosaicing methods. Olivier Losson, Ludovic Macaire, Yanqin Yang.

To cite this version: Olivier Losson, Ludovic Macaire, Yanqin Yang. Comparison of color demosaicing methods. Advances in Imaging and Electron Physics, Elsevier, 2010, 162, pp.173-265. 10.1016/S1076-5670(10)62005-8. hal-00683233

HAL Id: hal-00683233, https://hal.archives-ouvertes.fr/hal-00683233. Submitted on 28 Mar 2012.

O. Losson (corresponding author), L. Macaire, Y. Yang. Laboratoire LAGIS UMR CNRS 8146 – Bâtiment P2, Université Lille1 – Sciences et Technologies, 59655 Villeneuve d’Ascq Cedex, France.

Keywords: Demosaicing, Color image, Quality evaluation, Comparison criteria

1. Introduction

Today, the majority of color cameras are equipped with a single CCD (Charge-Coupled Device) sensor. The surface of such a sensor is covered by a color filter array (CFA), which consists of a mosaic of spectrally selective filters, so that each CCD element samples only one of the three color components Red (R), Green (G) or Blue (B). The Bayer CFA is the most widely used one to provide the CFA image where each pixel is characterized by only one single color component.
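To make the idea of a CFA image concrete, the sketch below samples a full-color image through a Bayer mosaic and then estimates one missing green value by averaging its four neighbors. Several things here are assumptions: the mosaic places red at the top-left corner (other Bayer arrangements exist), the neighbor average is a basic bilinear-style estimate chosen for illustration and not presented as one of the paper's methods, and the helper names `cfa_channel`, `mosaic`, and `estimate_green` are hypothetical.

```python
def cfa_channel(r, c):
    """Index of the sampled component at (r, c): 0 = R, 1 = G, 2 = B."""
    if r % 2 == 0 and c % 2 == 0:
        return 0
    if r % 2 == 1 and c % 2 == 1:
        return 2
    return 1


def mosaic(rgb_image):
    """Keep only the component selected by the CFA at each pixel."""
    return [
        [pixel[cfa_channel(r, c)] for c, pixel in enumerate(row)]
        for r, row in enumerate(rgb_image)
    ]


def estimate_green(cfa, r, c):
    """Green at an interior pixel: measured value, or mean of 4 neighbors."""
    if cfa_channel(r, c) == 1:
        return cfa[r][c]
    if not (0 < r < len(cfa) - 1 and 0 < c < len(cfa[0]) - 1):
        raise ValueError("only interior pixels are handled in this sketch")
    neighbors = [cfa[r - 1][c], cfa[r + 1][c], cfa[r][c - 1], cfa[r][c + 1]]
    return sum(neighbors) / 4


# A flat 4 x 4 color patch: every pixel is (R, G, B) = (10, 20, 30).
patch = [[(10, 20, 30)] * 4 for _ in range(4)]
cfa = mosaic(patch)
print(cfa[0], cfa[1])
print(estimate_green(cfa, 1, 1))
```

In this layout the four direct neighbors of any red or blue site are all green sites, which is what makes the simple average well defined.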

Robust Pixel Classification for 3D Modeling with Structured Light

Robust Pixel Classification for 3D Modeling with Structured Light. Yi Xu, Daniel G. Aliaga. Department of Computer Science, Purdue University. {xu43|aliaga}@cs.purdue.edu

ABSTRACT. Modeling 3D objects and scenes is an important part of computer graphics. One approach to modeling is projecting binary patterns onto the scene in order to obtain correspondences and reconstruct a densely sampled 3D model. In such structured light systems, determining whether a pixel is directly illuminated by the projector is essential to decoding the patterns. In this paper, we introduce a robust, efficient, and easy to implement pixel classification algorithm for this purpose. Our method correctly establishes the lower and upper bounds of the possible intensity values of an illuminated pixel and of a non-illuminated pixel. Based on the two intervals, our method classifies a pixel by determining whether its intensity is within one interval and not in the other. Experiments show that our method improves both the quantity of decoded pixels and the quality of the final reconstruction producing a dense set of 3D points, inclusively for complex scenes with indirect lighting effects. Furthermore, our method does not require newly designed patterns; therefore, it can be easily applied to previously captured data.

CR Categories: I.3 [Computer Graphics], I.3.7 [Three-Dimensional Graphics and Realism], I.4 [Image Processing and Computer Vision], I.4.1 [Digitization and Image Capture].

Keywords: structured light, direct and global separation, 3D

[Figure 1 panels: (a) binary pattern structured light images; (b) our pixel classification algorithm, followed by correspondence and reconstruction; (c) point cloud; (d) our improved point cloud.]
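The decision rule stated in the abstract, "within one interval and not in the other", can be sketched as follows. The abstract does not say how the two intervals are computed, so they are passed in as given numbers here; the helper name `classify_pixel`, the labels, and the example bounds are all hypothetical.

```python
def classify_pixel(intensity, on_interval, off_interval):
    """Label a pixel from two (low, high) intensity intervals.

    on_interval:  assumed bounds for a directly illuminated pixel.
    off_interval: assumed bounds for a non-illuminated pixel.
    """
    in_on = on_interval[0] <= intensity <= on_interval[1]
    in_off = off_interval[0] <= intensity <= off_interval[1]
    if in_on and not in_off:
        return "on"
    if in_off and not in_on:
        return "off"
    # Inside both intervals, or inside neither: no safe decision.
    return "uncertain"


on_bounds = (120, 255)    # illustrative values only
off_bounds = (0, 150)
for value in (200, 50, 130):
    print(value, classify_pixel(value, on_bounds, off_bounds))
```

Intensities that fall in the overlap of the two intervals are left undecided in this sketch, which mirrors the requirement that a pixel lie in one interval and not the other.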

Computer Vision, CS766

Announcement
• A total of 5 (five) late days are allowed for projects.
• Office hours
– Me: 3:50-4:50pm Thursday (or by appointment)
– Jake: 12:30-1:30PM Monday and Wednesday

Image Formation
Digital Camera, Film, The Eye (Alexei Efros’ slide)

Image Formation
• Let’s design a camera
– Idea 1: put a piece of film in front of an object
– Do we get a reasonable image?
(Steve Seitz’s slide)

Pinhole Camera
• Add a barrier to block off most of the rays
– This reduces blurring
– The opening is known as the aperture
– How does this transform the image?
(Steve Seitz’s slide)

Camera Obscura
• The first camera
– 5th century B.C.: Aristotle, Mozi (Chinese: 墨子)
– How does the aperture size affect the image?
http://en.wikipedia.org/wiki/Pinhole_camera

Shrinking the aperture
• Why not make the aperture as small as possible?
– Less light gets through
– Diffraction effects...

Shrinking the aperture
Sharpest image is obtained when:

$$d = 2\sqrt{f\lambda}$$

d is the diameter, f is the distance from hole to film, and λ is the wavelength of light, all given in metres.
Example: If f = 50mm, λ = 600nm (red), d = 0.36mm
(Srinivasa Narasimhan’s slide)

Pinhole cameras are popular
Jerry Vincent's Pinhole Camera

Impressive Images
Jerry Vincent's Pinhole Photos

What’s wrong with Pinhole Cameras?
• Low incoming light => Long exposure time => Tripod

| KODAK Film or Paper | Bright Sun | Cloudy Bright |
| --- | --- | --- |
| TRI-X Pan | 1 or 2 seconds | 4 to 8 seconds |
| T-MAX 100 Film | 2 to 4 seconds | 8 to 16 seconds |
| KODABROMIDE Paper, F2 | 2 minutes | 8 minutes |

http://www.kodak.com/global/en/consumer/education/lessonPlans/pinholeCamera/pinholeCanBox.shtml

What’s wrong with Pinhole Cameras?
People become ghosts!

Pinhole Camera Recap
• Pinhole size (aperture) must be “very small” to obtain a clear image.
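The sharpest-pinhole rule from the "Shrinking the aperture" slide can be checked numerically. The sketch assumes the formula reads d = 2√(fλ), which is the reading most consistent with the surviving fragments and the quoted example; the helper name `optimal_pinhole_diameter` is hypothetical.

```python
import math


def optimal_pinhole_diameter(hole_to_film_m, wavelength_m):
    """Pinhole diameter in metres, assuming d = 2 * sqrt(f * wavelength)."""
    return 2.0 * math.sqrt(hole_to_film_m * wavelength_m)


# The slide's example: f = 50 mm, red light at 600 nm.
d = optimal_pinhole_diameter(0.050, 600e-9)
print(f"{d * 1000:.2f} mm")
```

Under this assumed form the example evaluates to roughly 0.35 mm, close to the 0.36 mm quoted on the slide; the small gap may come from rounding or a slightly different constant in the original formula.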