Logarithmic Number System Theory, Analysis and Design

Recommended publications

Mock In-Class Test COMS10007 Algorithms 2018/2019

Throughout this paper $\log()$ denotes the binary logarithm, i.e., $\log(n) = \log_2(n)$, and $\ln()$ denotes the logarithm to base $e$, i.e., $\ln(n) = \log_e(n)$.

1 O-notation

1. Let $f : \mathbb{N} \to \mathbb{N}$ be a function. Define the set $\Theta(f(n))$.

Proof. $\Theta(f(n)) = \{\, g(n) : \text{there exist positive constants } c_1, c_2 \text{ and } n_0 \text{ s.t. } 0 \le c_1 f(n) \le g(n) \le c_2 f(n) \text{ for all } n \ge n_0 \,\}$

2. Give a formal proof of the statement: $10\sqrt{n} \in O(n)$.

Proof. We need to show that there are positive constants $c, n_0$ such that $10\sqrt{n} \le c \cdot n$ for every $n \ge n_0$. The previous inequality is equivalent to $\frac{10}{c} \le \sqrt{n}$, which in turn gives $\frac{100}{c^2} \le n$. Hence, we can pick $c = 1$ and $n_0 = \frac{100}{1^2} = 100$.

3. Use the racetrack principle to prove the following statement: $n \in O(2^n)$.

Hint: The following facts can be useful:
• The derivative of $2^n$ is $\ln(2)\,2^n$.
• $\frac{1}{2} \le \ln(2) \le 1$ holds.

Proof. We need to show that there are positive constants $c, n_0$ such that $n \le c \cdot 2^n$. We pick $c = 1$ and $n_0 = 1$. Observe that $n \le 2^n$ holds for $n = n_0\,(= 1)$. It remains to show that $n \le 2^n$ also holds for every $n \ge n_0$. To show this, we use the racetrack principle. Observe that the derivative of $n$ is $1$ and the derivative of $2^n$ is $\ln(2)\,2^n$. Hence, by the racetrack principle it is enough to show that $1 \le \ln(2)\,2^n$ holds for every $n \ge n_0$, or $\log\!\left(\frac{1}{\ln 2}\right) \le n$.
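The constants chosen in the two proofs above can be sanity-checked numerically. The following is a minimal sketch, not part of the original test: the helper name `holds_from` and the upper limit `n_max` are assumptions made for illustration, and a finite check like this only supports the proofs, it does not replace them.

```python
import math

def holds_from(f, g, c, n0, n_max=10_000):
    """Return True if f(n) <= c * g(n) for every integer n in [n0, n_max].

    Hypothetical helper: it tests a finite range only, so it is a sanity
    check of the chosen constants, not a proof of the O-statement.
    """
    return all(f(n) <= c * g(n) for n in range(n0, n_max + 1))

# Question 2: 10*sqrt(n) <= 1*n for all n >= 100.
ok_sqrt = holds_from(lambda n: 10 * math.sqrt(n), lambda n: n, c=1, n0=100)

# With c = 1 the inequality fails just below n0 = 100.
fails_below = 10 * math.sqrt(99) > 99

# Question 3: n <= 1 * 2^n for all n >= 1 (checked up to n = 200).
ok_exp = holds_from(lambda n: n, lambda n: 2 ** n, c=1, n0=1, n_max=200)

print(ok_sqrt, fails_below, ok_exp)
```

The check at n = 99 illustrates why the threshold is 100 when c = 1: a larger c would allow a smaller n0.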

Chapter 2 Data Representation in Computer Systems 2.1 Introduction

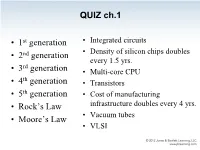

QUIZ ch.1

Items in the first column:
• 1st generation
• 2nd generation
• 3rd generation
• 4th generation
• 5th generation
• Rock's Law
• Moore's Law

Items in the second column:
• Integrated circuits
• Density of silicon chips doubles every 1.5 yrs.
• Multi-core CPU
• Transistors
• Cost of manufacturing infrastructure doubles every 4 yrs.
• Vacuum tubes
• VLSI

QUIZ ch.1

The highest # of transistors in a CPU commercially available today is about:
• 100 million
• 500 million
• 1 billion
• 2 billion
• 2.5 billion
• 5 billion

“As of 2012, the highest transistor count in a commercially available CPU is over 2.5 billion transistors, in Intel's 10-core Xeon Westmere-EX. Xilinx currently holds the "world-record" for an FPGA containing 6.8 billion transistors.” Source: Wikipedia – Transistor_count

Chapter 2 Data Representation in Computer Systems

2.1 Introduction

• A bit is the most basic unit of information in a computer.
  – It is a state of “on” or “off” in a digital circuit.
  – Sometimes these states are “high” or “low” voltage instead of “on” or “off.”
• A byte is a group of eight bits.
  – A byte is the smallest possible addressable unit of computer storage.
  – The term “addressable” means that a particular byte can be retrieved according to its location in memory.
• A word is a contiguous group of bytes.
  – Words can be any number of bits or bytes.
  – Word sizes of 16, 32, or 64 bits are most common.
  – Intel: 16 bits = 1 word, 32 bits = double word, 64 bits = quad word
  – In a word-addressable system, a word is the smallest addressable unit of storage.

Optimizing MPC for Robust and Scalable Integer and Floating-Point Arithmetic

Liisi Kerik¹, Peeter Laud¹, and Jaak Randmets¹,²
¹ Cybernetica AS, Tartu, Estonia
² University of Tartu, Tartu, Estonia
{liisi.kerik, peeter.laud, jaak.randmets}@cyber.ee

Abstract. Secure multiparty computation (SMC) is a rapidly maturing field, but its number of practical applications so far has been small. Most existing applications have been run on small data volumes with the exception of a recent study processing tens of millions of education and tax records. For practical usability, SMC frameworks must be able to work with large collections of data and perform reliably under such conditions. In this work we demonstrate that with the help of our recently developed tools and some optimizations, the Sharemind secure computation framework is capable of executing tens of millions of integer operations or hundreds of thousands of floating-point operations per second. We also demonstrate robustness in handling a billion integer inputs and a million floating-point inputs in parallel. Such capabilities are absolutely necessary for real-world deployments.

Keywords: Secure Multiparty Computation, Floating-point operations, Protocol design

1 Introduction

Secure multiparty computation (SMC) [19] allows a group of mutually distrusting entities to perform computations on data private to various members of the group, without others learning anything about that data or about the intermediate values in the computation. Theory-wise, the field is quite mature; there exist several techniques to achieve privacy and correctness of any computation [19, 28, 15, 16, 21], and the asymptotic overheads of these techniques are known.

CS 270 Algorithms

CS 270 Algorithms, Week 10, Oliver Kullmann

Contents:
1. Binary heaps
2. Heapification
3. Building a heap
4. HEAP-SORT
5. Priority queues
6. QUICK-SORT
7. Analysing QUICK-SORT
8. Tutorial

General remarks

We return to sorting, considering HEAP-SORT and QUICK-SORT.

Reading from CLRS for week 7:
1. Chapter 6, Sections 6.1 - 6.5.
2. Chapter 7, Sections 7.1, 7.2.

Discover the properties of binary heaps

Running example

First property: level-completeness

In week 7 we have seen binary trees:
1. We said they should be as “balanced” as possible.
2. Perfect are the perfect binary trees.
3. Now close to perfect come the level-complete binary trees:
   1. We can partition the nodes of a (binary) tree $T$ into levels, according to their distance from the root.
   2. We have levels $0, 1, \ldots, \mathrm{ht}(T)$.
   3. Level $k$ has from $1$ to $2^k$ nodes.
   4. If all levels $k$, except possibly level $\mathrm{ht}(T)$, are full (have precisely $2^k$ nodes in them), then we call the tree level-complete.

Examples

The binary tree with the levels

level 0: 1
level 1: 2, 3
level 2: 4, 5, 6, 7
level 3: 10, 13, 14, 15

is level-complete (level-sizes are 1, 2, 4, 4), while the binary tree with the levels

level 0: 1
level 1: 2, 3
level 2: 4, 5, 6
level 3: 8, 9, 10, 11, 12, 13

is not (level-sizes are 1, 2, 3, 6).
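The level-completeness condition can be checked directly from the list of level sizes. The sketch below is an illustration only: the function name `is_level_complete` and the choice to describe a tree by its level sizes are assumptions, not something taken from the slides.

```python
def is_level_complete(level_sizes):
    """Check level-completeness from a non-empty list of level sizes.

    level_sizes[k] is the number of nodes at distance k from the root.
    Every level except possibly the last must hold exactly 2**k nodes.
    """
    height = len(level_sizes) - 1
    for k, size in enumerate(level_sizes):
        if not 1 <= size <= 2 ** k:
            # A binary tree cannot have such a level at all.
            raise ValueError(f"level {k} cannot have {size} nodes")
        if k < height and size != 2 ** k:
            return False
    return True

first_example = is_level_complete([1, 2, 4, 4])
second_example = is_level_complete([1, 2, 3, 6])
print(first_example, second_example)
```

Applied to the two example trees, the first list passes because only the last level is partially filled, while the second fails at level 2, which holds 3 nodes instead of 4.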

Data Representation in Computer Systems

© Jones & Bartlett Learning, LLC. NOT FOR SALE OR DISTRIBUTION

“There are 10 kinds of people in the world: those who understand binary and those who don't.” (Anonymous)

CHAPTER 2 Data Representation in Computer Systems

2.1 INTRODUCTION

The organization of any computer depends considerably on how it represents numbers, characters, and control information. The converse is also true: Standards and conventions established over the years have determined certain aspects of computer organization. This chapter describes the various ways in which computers can store and manipulate numbers and characters. The ideas presented in the following sections form the basis for understanding the organization and function of all types of digital systems.

The most basic unit of information in a digital computer is called a bit, which is a contraction of binary digit. In the concrete sense, a bit is nothing more than a state of “on” or “off” (or “high” and “low”) within a computer circuit. In 1964, the designers of the IBM System/360 mainframe computer established a convention of using groups of 8 bits as the basic unit of addressable computer storage.

CS211 ❒ Overview • Code (Number Guessing Example)

CS211 ASYMPTOTIC COMPLEXITY

Overview
• code (number guessing example)
• approximate analysis to motivate math
• machine model
• analysis (space, time)
• machine architecture and time assumptions
• code analysis
• more assumptions
• Big Oh notation
• examples
• extra material (theory, background, math)

1. Number Guessing Example

1.1 Code

```java
import java.io.*;

public class NumberGuess {
    public static void main(String[] args) throws IOException {
        int guess;
        int count;
        // Range bounds come from the command line: java NumberGuess LOW HIGH
        final int LOW = Integer.parseInt(args[0]);
        final int HIGH = Integer.parseInt(args[1]);
        // Maximum number of guesses: one per value in the range
        final int STOP = HIGH - LOW + 1;
        // Random target in [LOW, HIGH]
        int target = (int) (Math.random() * (HIGH - LOW + 1)) + (int) (LOW);
        BufferedReader in = new BufferedReader(new InputStreamReader(System.in));

        System.out.print("\nGuess an integer: ");
        guess = Integer.parseInt(in.readLine());
        count = 1;

        // Keep asking while the guess is wrong, in range, and guesses remain
        while (guess != target && guess >= LOW && guess <= HIGH && count < STOP) {
            if (guess < target) System.out.println("Too low!");
            else if (guess > target) System.out.println("Too high!");
            else System.exit(0);
            System.out.print("\nGuess an integer: ");
            guess = Integer.parseInt(in.readLine());
            count++;
        }

        if (target == guess) System.out.println("\nCongratulations!\n");
    }
}
```

1.2 Solution Algorithms
• random:
  - pick any number
  - worst-case time could be infinite
• linear:
  - guess one number at a time
  - start from bottom and head to top
  - worst-case time could

1.4 More General
• count only comparisons of guess to target (same number as guesses)
• other examples:

| range | linear | binary | pattern |
|---|---|---|---|
| 1 | 1 | 1 | 1→1 |
| 1–10 | 10 | 5 | 5→7→8→9→10 |
| 1–100 | 100 | 8 | 50→75→87→93→96→98→99→100 |
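The binary guess patterns listed above (5→7→8→9→10 and 50→75→…→100) can be reproduced by one particular halving rule. The sketch below is a guess at such a rule, not the strategy the slides define: the function name `halving_guesses` and the update rule (keep the last too-low guess as an exclusive lower bound, and jump to the top when the midpoint stops moving) are assumptions chosen because they match the listed patterns.

```python
def halving_guesses(low, high, target):
    """Return the sequence of guesses a halving strategy makes for `target`.

    Assumed rule: `lo` is the largest value known to be too low (exclusive),
    `hi` the largest value still possible (inclusive).
    """
    if not low <= target <= high:
        raise ValueError("target must lie in [low, high]")
    lo, hi = low - 1, high
    guesses = []
    while True:
        guess = (lo + hi) // 2
        if guess == lo:          # midpoint would repeat a too-low value
            guess = hi
        guesses.append(guess)
        if guess == target:
            return guesses
        if guess < target:
            lo = guess           # "Too low!"
        else:
            hi = guess - 1       # "Too high!"

pattern_10 = halving_guesses(1, 10, 10)
pattern_100 = halving_guesses(1, 100, 100)
print(pattern_10, len(pattern_100))
```

For a range of 100 values this rule needs 8 guesses for the top value, compared with up to 100 guesses for the linear strategy, which is the contrast the table draws.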

Chap02: Data Representation in Computer Systems

CHAPTER 2 Data Representation in Computer Systems

2.1 Introduction
2.2 Positional Numbering Systems
2.3 Converting Between Bases
  2.3.1 Converting Unsigned Whole Numbers
  2.3.2 Converting Fractions
  2.3.3 Converting between Power-of-Two Radices
2.4 Signed Integer Representation
  2.4.1 Signed Magnitude
  2.4.2 Complement Systems
  2.4.3 Excess-M Representation for Signed Numbers
  2.4.4 Unsigned Versus Signed Numbers
  2.4.5 Computers, Arithmetic, and Booth's Algorithm
  2.4.6 Carry Versus Overflow
  2.4.7 Binary Multiplication and Division Using Shifting
2.5 Floating-Point Representation
  2.5.1 A Simple Model
  2.5.2 Floating-Point Arithmetic
  2.5.3 Floating-Point Errors
  2.5.4 The IEEE-754 Floating-Point Standard
  2.5.5 Range, Precision, and Accuracy
  2.5.6 Additional Problems with Floating-Point Numbers
2.6 Character Codes
  2.6.1 Binary-Coded Decimal
  2.6.2 EBCDIC
  2.6.3 ASCII
  2.6.4 Unicode
2.7 Error Detection and Correction
  2.7.1 Cyclic Redundancy Check
  2.7.2 Hamming Codes
  2.7.3 Reed-Solomon
Chapter Summary

CMPS375 Class Notes (Chap02), Dr. Kuo-pao Yang

2.1 Introduction

This chapter describes the various ways in which computers can store and manipulate numbers and characters.

Bit: The most basic unit of information in a digital computer is called a bit, which is a contraction of binary digit.

Binary Search Algorithm Anthony Lin¹* Et Al

WikiJournal of Science, 2019, 2(1):5, doi: 10.15347/wjs/2019.005, Encyclopedic Review Article

Binary search algorithm

Anthony Lin¹* et al.

Abstract

In computer science, binary search, also known as half-interval search,[1] logarithmic search,[2] or binary chop,[3] is a search algorithm that finds a position of a target value within a sorted array.[4] Binary search compares the target value to an element in the middle of the array. If they are not equal, the half in which the target cannot lie is eliminated and the search continues on the remaining half, again taking the middle element to compare to the target value, and repeating this until the target value is found. If the search ends with the remaining half being empty, the target is not in the array. Binary search runs in logarithmic time in the worst case, making $O(\log n)$ comparisons, where $n$ is the number of elements in the array, the $O$ is ‘Big O’ notation, and $\log$ is the logarithm.[5] Binary search is faster than linear search except for small arrays. However, the array must be sorted first to be able to apply binary search. There are specialized data structures designed for fast searching, such as hash tables, that can be searched more efficiently than binary search. However, binary search can be used to solve a wider range of problems, such as finding the next-smallest or next-largest element in the array relative to the target even if it is absent from the array. There are numerous variations of binary search.
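The procedure described in the abstract can be sketched as follows. This is a minimal illustration rather than code from the article: the function name `binary_search` and the convention of returning -1 for an absent target are assumptions.

```python
def binary_search(arr, target):
    """Return an index of `target` in the sorted list `arr`, or -1 if absent."""
    lo, hi = 0, len(arr) - 1
    while lo <= hi:                  # the remaining part is non-empty
        mid = (lo + hi) // 2         # middle element of the remaining part
        if arr[mid] == target:
            return mid
        if arr[mid] < target:
            lo = mid + 1             # target cannot lie in the lower half
        else:
            hi = mid - 1             # target cannot lie in the upper half
    return -1                        # remaining part is empty: not found

data = [1, 3, 5, 7, 9, 11]
found_at = binary_search(data, 7)
missing = binary_search(data, 4)
print(found_at, missing)
```

Each iteration discards about half of the remaining elements, which is where the logarithmic number of comparisons comes from.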

Introduction to Algorithms

CS 5002: Discrete Structures, Fall 2018
Lecture 9: November 8, 2018
Instructors: Adrienne Slaughter, Tamara Bonaci

Disclaimer: These notes have not been subjected to the usual scrutiny reserved for formal publications. They may be distributed outside this class only with the permission of the Instructor.

Introduction to Algorithms

Readings for this week: Rosen, Chapter 2.1, 2.2, 2.5, 2.6 (Sets, Set Operations, Cardinality of Sets, Matrices)

9.1 Overview

Objective: Introduce algorithms.

1. Review logarithms
2. Asymptotic analysis
3. Define Algorithm
4. How to express or describe an algorithm
5. Run time, space (Resource usage)
6. Determining Correctness
7. Introduce representative problems
   1. foo

9.2 Asymptotic Analysis

The goal with asymptotic analysis is to try to find a bound, or an asymptote, of a function. This allows us to come up with an “ordering” of functions, such that one function is definitely bigger than another, in order to compare two functions. We do this by considering the value of the functions as $n$ goes to infinity, so for very large values of $n$, as opposed to small values of $n$ that may be easy to calculate. Once we have this ordering, we can introduce some terminology to describe the relationship of two functions.

[Figure: Growth of Functions, plotting $n^n$, $n!$, $2^n$, $n^2$, $n\log(n)$, $n$, $\sqrt{n}$ and $\log(n)$ for $n$ from 1 to 8.]

From this chart, we see:

$$1 < \log n < \sqrt{n} < n < n\log(n) < n^2 < 2^n < n! < n^n \qquad (9.1)$$

Complexity Terminology

| Complexity | Terminology |
|---|---|
| $\Theta(1)$ | Constant |
| $\Theta(\log n)$ | Logarithmic |
| $\Theta(n)$ | Linear |
| $\Theta(n \log n)$ | Linearithmic |
| $\Theta(n^b)$ | Polynomial |
| $\Theta(b^n)$ (where $b > 1$) | Exponential |
| $\Theta(n!)$ | Factorial |

9.2.1 Big-O: Upper Bound

Definition 9.1 (Big-O: Upper Bound) $f(n) = O(g(n))$ means there exists some constant $c$ such that $f(n) \le c \cdot g(n)$, for large enough $n$ (that is, as $n \to \infty$).
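The ordering of growth rates listed above is a statement about large $n$, which a small numeric experiment can make concrete. The sketch below is an illustration under assumptions: the helper name `is_increasing`, the use of the binary logarithm, and the sample points 8 and 32 are choices made here, not part of the lecture notes.

```python
import math

# The functions of the ordering, from slowest to fastest growing.
GROWTH_FUNCTIONS = [
    lambda n: 1,
    lambda n: math.log2(n),
    lambda n: math.sqrt(n),
    lambda n: n,
    lambda n: n * math.log2(n),
    lambda n: n ** 2,
    lambda n: 2 ** n,
    lambda n: math.factorial(n),
    lambda n: n ** n,
]

def is_increasing(n):
    """True if the functions, evaluated at n, are already in strictly increasing order."""
    values = [f(n) for f in GROWTH_FUNCTIONS]
    return all(a < b for a, b in zip(values, values[1:]))

ordered_at_32 = is_increasing(32)
ordered_at_8 = is_increasing(8)
print(ordered_at_32, ordered_at_8)
```

At $n = 32$ every function is below its successor, but at $n = 8$ the binary logarithm (3) still exceeds $\sqrt{8} \approx 2.83$, which shows why the definition only asks for the bound to hold for large enough $n$.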

Comparison of Parallel Sorting Algorithms

Darko Božidar and Tomaž Dobravec
Faculty of Computer and Information Science, University of Ljubljana, Slovenia
Technical report, November 2015

TABLE OF CONTENTS

1. INTRODUCTION
2. THE ALGORITHMS
   2.1 BITONIC SORT
   2.2 MULTISTEP BITONIC SORT
   2.3 IBR BITONIC SORT
   2.4 MERGE SORT
   2.5 QUICKSORT
   2.6 RADIX SORT
   2.7 SAMPLE SORT
3. TESTING ENVIRONMENT
4. RESULTS

Calculating Logs Manually

And let's start with the logarithm of 2. As a kid I always wanted to know how to calculate log 2 and nobody was able to tell me. Can we guess the logarithm of 19,683? Let's follow the chain. We are looking for 1225, so to account for the difference let's round 3.0876 up to 3.088. How to calculate log 11? Here is a way to do this. We can take the geometric mean of 10 and 12.
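The excerpt stops right after proposing the geometric mean of 10 and 12, so how the original continues is not known. The sketch below only illustrates that first step, under stated assumptions: the tabulated values `LOG_2` and `LOG_3` are taken as already known, and the variable names are hypothetical. Since the geometric mean of 10 and 12 is $\sqrt{120} \approx 10.954$, the logarithm of the mean, which is the average of the two logarithms, lands slightly below $\log 11$.

```python
import math

# Base-10 logarithms assumed to be already known to five decimal places.
LOG_2 = 0.30103
LOG_3 = 0.47712

log_10 = 1.0
log_12 = 2 * LOG_2 + LOG_3            # 12 = 2 * 2 * 3

# log(sqrt(10 * 12)) = (log 10 + log 12) / 2
approx_log_11 = (log_10 + log_12) / 2

exact_log_11 = math.log10(11)         # reference value for comparison only
error = exact_log_11 - approx_log_11

print(round(approx_log_11, 5), round(error, 4))
```

The estimate is about 1.0396, roughly 0.002 below the reference value, so a further correction step would be needed for more digits.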

Representing Information in English Until 1598

In the twenty-first century, digital computers are everywhere. You almost certainly have one with you now, although you may call it a “phone.” You are more likely to be reading this book on a digital device than on paper. We do so many things with our digital devices that we may forget the original purpose: computation. When Dr. Howard Aiken was in charge of the huge, mechanical Harvard Mark I calculator during World War II, he wasn't happy unless the machine was “making numbers,” that is, completing the numerical calculations needed for the war effort.

Figure 1-1: Howard Aiken, center, and Grace Murray Hopper, on Aiken's left, with other members of the Bureau of Ordnance Computation Project, in front of the Harvard Mark I computer at Harvard University, 1944. (U.S. Department of Defense)

We still use computers for purely computational tasks, to make numbers. It may be less obvious, but the other things for which we use computers today are, underneath, also computational tasks. To make that phone call, your phone, really a computer, converts an electrical signal that's an analog of your voice to numbers, encodes and compresses them, and passes them onward to a cellular radio which is itself controlled by numbers. Numbers are fundamental to our digital devices, and so to understand those digital devices, we have to understand the numbers that flow through them.

1.1 Numbers as Abstractions

It is easy to suppose that as soon as humans began to accumulate possessions, they wanted to count them and make a record of the count.