Open Huiwenyu-Dissertation.Pdf

Total pages: 16

File type: PDF, Size: 1020 KB


Recommended publications


Selective Maximum Coverage and Set Packing

Felix J. L. Willamowski (Lehrstuhl für Operations Research, RWTH Aachen University) and Björn F. Tauer (Lehrstuhl für Management Science, RWTH Aachen University). Abstract. In this paper we introduce the selective maximum coverage and the selective maximum set packing problem and variants of them. Both problems are strongly related to well studied problems such as maximum coverage, set packing, and (bipartite) hypergraph matching. The two problems are given by a collection of subsets of a ground set and index subsets of the indices of these subsets. Additionally, there are weights either for each element of the ground set or each subset for each index subset. The goal is to find at most one index per index subset such that the total weight of covered elements or of disjoint subsets is maximum. Applications arise in transportation, e.g., dispatching for ride-sharing services. We prove strong intractability results for the problems and provide almost best possible approximation guarantees. 1 Introduction and Preliminaries. We introduce the selective maximum coverage problem (smc) generalizing the weighted maximum k coverage problem (wmkc), which is given by a finite ground set $X$ with weights $w : X \to \mathbb{Q}_{\ge 0}$, a finite collection $\mathcal{S}$ of subsets of $X$, and an integer $k \in \mathbb{Z}_{\ge 0}$. The goal is to find a subcollection $\mathcal{S}' \subseteq \mathcal{S}$ containing at most $k$ subsets and maximizing the total weight of covered elements $\sum_{x \in X'} w(x)$ with $X' = \bigcup_{S \in \mathcal{S}'} S$. For unit weights, $w \equiv 1$, the problem is called maximum k coverage problem (mkc).
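The wmkc objective defined in this snippet can be made concrete with a small brute-force sketch. This is only an illustration of the problem statement, not an algorithm from the paper; the function name `max_k_coverage` and the toy instance are assumptions introduced here, and the exhaustive search is exponential in the number of subsets.

```python
from itertools import combinations


def max_k_coverage(weights, subsets, k):
    """Brute-force weighted maximum k coverage (illustrative only).

    weights: dict mapping each ground-set element to a nonnegative weight
    subsets: list of sets over the ground set
    k:       maximum number of subsets that may be chosen
    Returns (best total covered weight, tuple of chosen subset indices).
    """
    best_value, best_choice = 0, ()
    # Try every subcollection containing at most k subsets.
    for size in range(min(k, len(subsets)) + 1):
        for choice in combinations(range(len(subsets)), size):
            # X' is the union of the chosen subsets.
            covered = set().union(*(subsets[i] for i in choice))
            value = sum(weights[x] for x in covered)
            if value > best_value:
                best_value, best_choice = value, choice
    return best_value, best_choice


# Hypothetical toy instance.
weights = {"a": 1, "b": 2, "c": 3, "d": 4}
subsets = [{"a", "b"}, {"b", "c"}, {"c", "d"}]
print(max_k_coverage(weights, subsets, 2))  # (10, (0, 2))
```

With unit weights the same function evaluates the unweighted mkc objective.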

Exact Algorithms and APX-Hardness Results for Geometric Packing and Covering Problems∗

Timothy M. Chan, Elyot Grant. March 29, 2012. Abstract. We study several geometric set cover and set packing problems involving configurations of points and geometric objects in Euclidean space. We show that it is APX-hard to compute a minimum cover of a set of points in the plane by a family of axis-aligned fat rectangles, even when each rectangle is an ε-perturbed copy of a single unit square. We extend this result to several other classes of objects including almost-circular ellipses, axis-aligned slabs, downward shadows of line segments, downward shadows of graphs of cubic functions, fat semi-infinite wedges, 3-dimensional unit balls, and axis-aligned cubes, as well as some related hitting set problems. We also prove the APX-hardness of a related family of discrete set packing problems. Our hardness results are all proven by encoding a highly structured minimum vertex cover problem which we believe may be of independent interest. In contrast, we give a polynomial-time dynamic programming algorithm for geometric set cover where the objects are pseudodisks containing the origin or are downward shadows of pairwise 2-intersecting x-monotone curves. Our algorithm extends to the weighted case where a minimum-cost cover is required. We give similar algorithms for several related hitting set and discrete packing problems. 1 Introduction. In a geometric set cover problem, we are given a range space $(X, \mathcal{S})$: a universe $X$ of points in Euclidean space and a pre-specified configuration $\mathcal{S}$ of regions or geometric objects such as rectangles or half-planes.

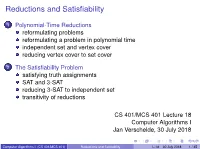

Reductions and Satisfiability

CS 401/MCS 401 Lecture 18, Computer Algorithms I, Jan Verschelde, 30 July 2018. Outline. 1 Polynomial-Time Reductions: reformulating problems; reformulating a problem in polynomial time; independent set and vertex cover; reducing vertex cover to set cover. 2 The Satisfiability Problem: satisfying truth assignments; SAT and 3-SAT; reducing 3-SAT to independent set; transitivity of reductions. Reformulating problems. The Ford-Fulkerson algorithm computes maximum flow. By reduction to a flow problem, we could solve the following problems: bipartite matching, circulation with demands, survey design, and airline scheduling. Because Ford-Fulkerson is an efficient algorithm, all those problems can be solved efficiently as well. Our plan for the remainder of the course is to explore computationally hard problems. Imagine a meeting with your boss ... From Computers and Intractability: A Guide to the Theory of NP-Completeness by Michael R. Garey and David S. Johnson, Bell Laboratories, 1979. What you want to say is ...

8. NP and Computational Intractability

Chapter 8: NP and Computational Intractability. CS 350, Winter 2018.

Algorithm Design Patterns and Anti-Patterns. Algorithm design patterns, with examples: greedy (O(n log n) interval scheduling); divide-and-conquer (O(n log n) FFT); dynamic programming (O(n^2) edit distance); duality (O(n^3) bipartite matching); reductions; local search; randomization. Algorithm design anti-patterns: NP-completeness (O(n^k) algorithm unlikely); PSPACE-completeness (O(n^k) certification algorithm unlikely); undecidability (no algorithm possible).

8.1 Polynomial-Time Reductions. Classify Problems According to Computational Requirements. Q. Which problems will we be able to solve in practice? A working definition. [von Neumann 1953, Gödel 1956, Cobham 1964, Edmonds 1965, Rabin 1966] Those with polynomial-time algorithms.

| Yes | Probably no |
|---|---|
| Shortest path | Longest path |
| Matching | 3D-matching |
| Min cut | Max cut |
| 2-SAT | 3-SAT |
| Planar 4-color | Planar 3-color |
| Bipartite vertex cover | Vertex cover |
| Primality testing | Factoring |

Classify Problems. Desiderata. Classify problems according to those that can be solved in polynomial time and those that cannot. Provably requires exponential time: Given a Turing machine, does it halt in at most k steps? (a bounded variant of the Halting Problem). Given a board position in an n-by-n generalization of chess, can black guarantee a win? Frustrating news. A huge number of fundamental problems have defied classification for decades. This chapter. Show that these fundamental problems are "computationally equivalent" and appear to be different manifestations of one really hard problem.

Polynomial-Time Reduction. Desiderata'. Suppose we could solve X in polynomial time. What else could we solve in polynomial time? (Don't confuse with "reduces from".) Reduction. ...

Generalized Sphere-Packing and Sphere-Covering Bounds on the Size

Generalized sphere-packing and sphere-covering bounds on the size of codes for combinatorial channels. Daniel Cullina, Student Member, IEEE, and Negar Kiyavash, Senior Member, IEEE. Abstract. Many of the classic problems of coding theory are highly symmetric, which makes it easy to derive sphere-packing upper bounds and sphere-covering lower bounds on the size of codes. We discuss the generalizations of sphere-packing and sphere-covering bounds to arbitrary error models. These generalizations become especially important when the sizes of the error spheres are nonuniform. The best possible sphere-packing and sphere-covering bounds are solutions to linear programs. We derive a series of bounds from approximations to packing and covering problems and study the relationships and trade-offs between them. We compare sphere-covering lower bounds with other graph theoretic lower bounds such as Turán's theorem. We show how to obtain upper bounds by optimizing across a family of channels that admit the same codes.

(From the introduction) ... described. Erasure and deletion errors differ from substitution errors in a more fundamental way: the error operation takes an input from one set and produces an output from another. In this paper, we will discuss the generalizations of sphere-packing and sphere-covering bounds to arbitrary error models. These generalizations become especially important when the sizes of the error spheres are nonuniform. Sphere-packing and sphere-covering bounds are fundamentally related to linear programming and the best possible versions of the bounds are solutions to linear programs. In highly symmetric cases, including many classical error models, it is often possible to ...

Packing Triangles in Low Degree Graphs and Indifference Graphs (Gordana Manić, Yoshiko Wakabayashi)

Discrete Mathematics 308 (2008) 1455–1471, www.elsevier.com/locate/disc. Packing triangles in low degree graphs and indifference graphs. Gordana Manić, Yoshiko Wakabayashi. Departamento de Ciência da Computação, Universidade de São Paulo, Rua do Matão, 1010, CEP 05508-090, São Paulo, Brazil. Received 5 November 2005; received in revised form 28 August 2006; accepted 11 July 2007. Available online 6 September 2007. Abstract. We consider the problems of finding the maximum number of vertex-disjoint triangles (VTP) and edge-disjoint triangles (ETP) in a simple graph. Both problems are NP-hard. The algorithm with the best approximation ratio known so far for these problems has ratio 3/2 + ε, a result that follows from a more general algorithm for set packing obtained by Hurkens and Schrijver [On the size of systems of sets every t of which have an SDR, with an application to the worst-case ratio of heuristics for packing problems, SIAM J. Discrete Math. 2(1) (1989) 68–72]. We present improvements on the approximation ratio for restricted cases of VTP and ETP that are known to be APX-hard: we give an approximation algorithm for VTP on graphs with maximum degree 4 with ratio slightly less than 1.2, and for ETP on graphs with maximum degree 5 with ratio 4/3. We also present an exact linear-time algorithm for VTP on the class of indifference graphs. © 2007 Elsevier B.V. All rights reserved. Keywords: Packing triangles; Approximation algorithm; Polynomial algorithm; Low degree graph; Indifference graph. 1. Introduction. For a given family F of sets, a collection of pairwise disjoint sets of F is called a packing of F.

Set Packing, Partitioning, and Covering

Aspects of Set Packing, Partitioning, and Covering. Ralf Borndörfer. Dissertation submitted by Diplom-Wirtschaftsmathematiker Ralf Borndörfer and approved by the Department of Mathematics (Fachbereich Mathematik) of the Technische Universität Berlin for the academic degree of Doktor der Naturwissenschaften. Doctoral committee: chair, Prof. Dr. Kurt Kutzler; reviewers, Prof. Dr. Martin Grötschel and Prof. Dr. Robert Weismantel. Scientific defense held in March. Berlin.

Summary (Zusammenfassung). This dissertation deals with integer programs for set packing, partitioning, and covering problems. The three parts of the dissertation treat polyhedral, algorithmic, and applied aspects of such models. One part discusses polyhedral aspects. It opens with a survey of the literature. We then investigate set packing relaxations of combinatorial optimization problems over acyclic digraphs and linear orderings, cuts and multicuts, coverings of sets, and packings of sets. Families of inequalities for suitable set packing relaxations, together with their associated separation algorithms, carry over to these problems. Another part is devoted to algorithmic and computational aspects. We document the essential components of a branch-and-cut algorithm for solving set partitioning problems. The algorithm implements some of the theoretical results obtained earlier. Computational results ...

NP-Completeness Proofs

NP-Completeness Proofs: Graph Theory, Packing, and Covering. Matt Williamson, Lane Department of Computer Science and Electrical Engineering, West Virginia University. Outline. 1 NP-Complete Problems in Graph Theory: Bisection; Hamilton Path and Circuit; Longest Path and Circuit; TSP (D); 3-Coloring. 2 Sets and Numbers: Tripartite Matching; Set Covering, Set Packing, and Exact Cover by 3-Sets; Integer Programming; Knapsack; Pseudopolynomial Algorithms and Strong NP-Completeness. MAX BISECTION. Problem: Given a graph G = (V, E), we are looking for a cut (S, V − S) of size K or more such that |S| = |V − S|. Note that if |V| = n is odd, then the problem is trivial.
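As a rough illustration of the MAX BISECTION decision problem stated in this snippet, the following brute-force sketch checks every balanced split of the vertex set. The function name `has_bisection` and the example graph are assumptions made here for illustration; the slides do not give code, and the search is exponential in n.

```python
from itertools import combinations


def has_bisection(n, edges, K):
    """Decide MAX BISECTION by exhaustive search (illustrative only).

    n:     number of vertices, labeled 0..n-1
    edges: list of (u, v) pairs
    K:     required cut size
    Returns True if some S with |S| = |V - S| cuts at least K edges.
    """
    if n % 2 == 1:
        # No balanced split exists, so the answer is trivially "no".
        return False
    for side in combinations(range(n), n // 2):
        S = set(side)
        # An edge is cut when exactly one endpoint lies in S.
        cut = sum(1 for u, v in edges if (u in S) != (v in S))
        if cut >= K:
            return True
    return False


# Hypothetical example: a 4-cycle, where S = {0, 2} cuts all four edges.
cycle = [(0, 1), (1, 2), (2, 3), (3, 0)]
print(has_bisection(4, cycle, 4))  # True
print(has_bisection(4, cycle, 5))  # False
```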

Online Set Packing and Competitive Scheduling of Multi-Part Tasks

Online Set Packing. Yuval Emek, Magnús M. Halldórsson, Yishay Mansour, Boaz Patt-Shamir, Jaikumar Radhakrishnan, Dror Rawitz. Abstract. In online set packing (osp), elements arrive online, announcing which sets they belong to, and the algorithm needs to assign each element, upon arrival, to one of its sets. The goal is to maximize the number of sets that are assigned all their elements: a set that misses even a single element is deemed worthless. This is a natural online optimization problem that abstracts allocation of scarce compound resources, e.g., multi-packet data frames in communication networks. We present a randomized competitive online algorithm for the weighted case with general capacity (namely, where sets may have different values, and elements arrive with different multiplicities). We prove a matching lower bound on the competitive ratio for any randomized online algorithm. Our bounds are expressed in terms of the maximum set size and the maximum number of sets an element belongs to. We also present refined bounds that depend on the uniformity of these parameters. Keywords: online set packing, competitive analysis, randomized algorithm, packet fragmentation, multi-packet frames. A preliminary version was presented at the 29th Annual ACM Symposium on Principles of Distributed Computing (PODC), 2010. Research supported in part by the Next Generation Video (NeGeV) Consortium, Israel. Affiliations: Yuval Emek, Computer Engineering and Networks Laboratory, ETH Zurich, 8092 Zurich, Switzerland; Magnús M. Halldórsson, School of Computer Science, Reykjavik University, 103 Reykjavik, Iceland.

On the Complexity of Approximating k-Set Packing

Elad Hazan (Department of Computer Science, Princeton University, Princeton, NJ 08544, USA), Shmuel Safra (School of Computer Science and School of Mathematics, Tel Aviv University, Tel Aviv 69978, Israel), and Oded Schwartz (School of Computer Science, Tel Aviv University, Tel Aviv 69978, Israel). Abstract. We study the complexity of bounded packing problems, mainly the problem of k-Set Packing (k-SP). We prove that k-SP cannot be efficiently approximated to within a factor of $O\left(\frac{k}{\ln k}\right)$ unless P = NP. This improves the previous factor of $\frac{k}{2^{O(\sqrt{\ln k})}}$ by Trevisan [Tre01]. A similar technique yields NP-hardness factors of $\frac{54}{53} - \varepsilon$, $\frac{30}{29} - \varepsilon$ and $\frac{23}{22} - \varepsilon$ for 4-SP, 5-SP and 6-SP respectively. These results extend to the problem of k-Dimensional-Matching and the problem of Maximum Independent-Set in (k + 1)-claw-free graphs. 1 Introduction. Bounded variants of optimization problems are often easier to approximate than the general, unbounded problems. The Independent-Set problem illustrates this well: it cannot be approximated to within $O(N^{1-\varepsilon})$ unless P = NP [Hås99]; nevertheless, once the input graph has a bounded degree d, much better approximations exist (e.g., a $\frac{d \log \log d}{\log d}$ approximation by [Vis96]). We next examine some bounded variants of the set-packing (SP) problem and try to illustrate the connection between the bounded parameters (e.g., sets size, occurrences of elements) and the complexity of the bounded problem.

Solving the Set Cover Problem and the Problem of Exact Cover by 3-Sets In

BioSystems 72 (2003) 263–275. Solving the set cover problem and the problem of exact cover by 3-sets in the Adleman–Lipton model. Weng-Long Chang (Department of Information Management, Southern Taiwan University of Technology, Tainan, Taiwan 701, ROC) and Minyi Guo (Department of Computer Software, The University of Aizu, Aizu-Wakamatsu City, Fukushima 965-8580, Japan). Received 15 April 2003; received in revised form 23 July 2003; accepted 23 July 2003. Abstract. Adleman wrote the first paper in which it is shown that deoxyribonucleic acid (DNA) strands could be employed towards calculating solutions to an instance of the NP-complete Hamiltonian path problem (HPP). Lipton also demonstrated that Adleman's techniques could be used to solve the NP-complete satisfiability (SAT) problem (the first NP-complete problem). In this paper, it is proved how the DNA operations presented by Adleman and Lipton can be used for developing DNA algorithms to resolve the set cover problem and the problem of exact cover by 3-sets. © 2003 Elsevier Ireland Ltd. All rights reserved. Keywords: Biological computing; Molecular computing; DNA-based computing; The NP-complete problem. 1. Introduction. Producing $10^{18}$ DNA strands that fit in a test tube is possible through advances in molecular biology (Sinden, 1994). Adleman wrote the first paper in which it is shown that each DNA strand could be ap...

(Continuing from the adjacent column) ... shows that the set cover problem and the problem of exact cover by 3-sets can be solved with biological operations in the Adleman–Lipton model. Furthermore, this work represents obvious evidence for the ability of DNA-based computing to solve the NP-complete problems.

NP Completeness Part 2

NP completeness and computational tractability, Part II. Some slides by Kevin Wayne. Copyright © 2005 Pearson-Addison Wesley. All rights reserved.

Grand challenge: Classify Problems According to Computational Requirements. Q. Which problems will we be able to solve in practice? A working definition. [Cobham 1964, Edmonds 1965, Rabin 1966] Those with polynomial-time algorithms.

| Yes | Probably no |
|---|---|
| Shortest path | Longest path |
| Matching | 3D-matching |
| Min cut | Max cut |
| 2-SAT | 3-SAT |
| Planar 4-color | Planar 3-color |
| Bipartite vertex cover | Vertex cover |
| Primality testing | Factoring |

Classify Problems. Desiderata. Classify problems according to those that can be solved in polynomial time and those that cannot. For any nice function T(n), there are problems that require more than T(n) time to solve. Frustrating news. A huge number of fundamental problems have defied classification for decades. NP-completeness: Show that these fundamental problems are "computationally equivalent" and appear to be different manifestations of one really hard problem.

Polynomial-Time Reduction. Desiderata'. Suppose we could solve X in polynomial time. What else could we solve in polynomial time? Reduction. Problem X polynomial reduces to problem Y if arbitrary instances of problem X can be solved using a polynomial number of standard computational steps, plus a polynomial number of calls to an oracle that solves problem Y. Notation. $X \le_P Y$.

Polynomial-Time Reduction. Purpose. Classify problems according to relative difficulty. Design algorithms. If $X \le_P Y$ and Y can be solved in polynomial time, then X can also be solved in polynomial time. Establish intractability. ...

Basic Reduction Strategies. Reduction by simple equivalence.
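The oracle-based definition of a polynomial-time reduction can be sketched with a standard textbook pair chosen here for illustration: Independent Set reduces to Vertex Cover by simple equivalence, since a graph on n vertices has an independent set of size at least k exactly when it has a vertex cover of size at most n − k. The function names are hypothetical, and the brute-force `has_vertex_cover` merely stands in for the oracle for Y; it is not itself polynomial.

```python
from itertools import combinations


def has_vertex_cover(n, edges, k):
    """Stand-in oracle for Y: is there a vertex cover of size <= k?"""
    if k < 0:
        return False
    for size in range(min(k, n) + 1):
        for cover in combinations(range(n), size):
            chosen = set(cover)
            # Every edge must have at least one endpoint in the cover.
            if all(u in chosen or v in chosen for u, v in edges):
                return True
    return False


def has_independent_set(n, edges, k):
    """Solve X (Independent Set) with one call to the oracle for Y.

    Uses the equivalence: S is independent iff V - S is a vertex cover.
    """
    if k > n:
        return False
    return has_vertex_cover(n, edges, n - k)


# Hypothetical examples: a triangle and a 3-vertex path.
triangle = [(0, 1), (1, 2), (0, 2)]
path = [(0, 1), (1, 2)]
print(has_independent_set(3, triangle, 2))  # False
print(has_independent_set(3, path, 2))      # True
```

The reduction itself does only constant extra work around a single oracle call, which is what the definition requires of the steps outside the oracle.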