Real-Time Systems Development

Total Pages: 16

File Type: pdf, Size: 1020Kb


Recommended publications


Validated Products List, 1995 No. 3: Programming Languages, Database

NISTIR 5693 (Supersedes NISTIR 5629) VALIDATED PRODUCTS LIST Volume 1 1995 No. 3: Programming Languages, Database Language SQL, Graphics, POSIX, Computer Security, Product Data - IGES. Judy B. Kailey, Editor. U.S. DEPARTMENT OF COMMERCE, Technology Administration, National Institute of Standards and Technology, Computer Systems Laboratory, Software Standards Validation Group, Gaithersburg, MD 20899. July 1995 (Supersedes April 1995 issue). U.S. DEPARTMENT OF COMMERCE: Ronald H. Brown, Secretary. TECHNOLOGY ADMINISTRATION: Mary L. Good, Under Secretary for Technology. NATIONAL INSTITUTE OF STANDARDS AND TECHNOLOGY: Arati Prabhakar, Director. FOREWORD The Validated Products List (VPL) identifies information technology products that have been tested for conformance to Federal Information Processing Standards (FIPS) in accordance with Computer Systems Laboratory (CSL) conformance testing procedures, and have a current validation certificate or registered test report. The VPL also contains information about the organizations, test methods and procedures that support the validation programs for the FIPS identified in this document. The VPL includes computer language processors for programming languages COBOL, Fortran, Ada, Pascal, C, M[UMPS], and database language SQL; computer graphic implementations for GKS, COM, PHIGS, and Raster Graphics; operating system implementations for POSIX; Open Systems Interconnection implementations; and computer security implementations for DES, MAC and Key Management.

TimeSys Linux Install HOWTO

TimeSys Linux Install HOWTO Trevor Harmon <[email protected]> 2005-04-05 Revision History: Revision 1.0, 2005-04-05, revised by TH, first official release. This document is a quick-start guide for installing TimeSys Linux on a typical desktop workstation. Table of Contents: 1. Introduction; 1.1. Background; 1.2. Copyright and License; 1.3. Disclaimer; 1.4. Feedback; 2. Requirements; 3. Install the packages; 4. Prepare the source directories; 5. Configure

Cochran Undersea Technology

Cochran Undersea Technology www.DiveCochran.com Technical Publication ©2013 7Apr13 Task Loading While scuba diving, the diver wants to focus on his Mission whether it be cruising a reef, photographing fish, cave diving, disarming a mine, or just diving with a buddy for the fun of it. The diver doesn’t need or want to be distracted or concerned by equipment tasks that could be easily avoided. Cochran dive computers have the lowest task loading of any unit on the market today. This is one reason why Cochran is the only dive computer used by NATO, the US Navy, and other international militaries. Cochran’s goal is to allow Cochran dive computer owners to maximize their diving experience. Toward this goal, Cochran has addressed the following issues: No Buttons – no Worries Regardless of how well a product is designed, having pushbuttons is always less reliable than not having pushbuttons. Pushbuttons can be troublesome when wearing gloves. Trying to press a pushbutton or combination of pushbuttons while carrying a camera and taking a picture is at best, challenging. Cochran dive computers are fully automatic and have no pushbuttons. If desired to change any settings in a Cochran dive computer while on the surface, the diver uses the three permanent stainless contacts on the side or bottom of the unit. Batteries Cochran dive computers have the longest battery life. By checking for battery warnings just before a dive, the diver can be assured that when he starts a dive there is sufficient battery power to complete it. Constantly checking the battery while in a dive is not necessary with a Cochran dive computer. -

Download This Issue

March 2010. Contents: Editorial (Dru Lavigne, Thomas Kunz, François Lefebvre); Open is the New Closed: How the Mobile Industry uses Open Source to Further Commercial Agendas (Andreas Constantinou); Establishing and Engaging an Active Open Source Ecosystem with the BeagleBoard (Jason Kridner); Low Cost Cellular Networks with OpenBTS (David Burgess); CRC Mobile Broadcasting F/LOSS Projects (François Lefebvre); Experiences From the OSSIE Open Source Software Defined Radio Project (Carl B. Dietrich, Jeffrey H. Reed, Stephen H. Edwards, Frank E. Kragh); The Open Source Mobile Cloud: Delivering Next-Gen Mobile Apps and Systems (Hal Steger); The State of Free Software in Mobile Devices Startups (Bradley M. Kuhn); Recent Reports; Upcoming Events; Contribute. Editorial: Dru Lavigne, Thomas Kunz, and François Lefebvre discuss the editorial theme of Mobile. Open is the New Closed: How the Mobile Industry uses Open Source to Further Commercial Agendas: Andreas Constantinou, Research Director at VisionMobile, examines the many forms that governance models can take and how they are used in the mobile industry to tightly control the roadmap and application of open source projects. Establishing and Engaging an Active Open Source Ecosystem with the BeagleBoard: Jason Kridner, open platforms principal architect at Texas Instruments Inc., introduces the BeagleBoard open source community. Low Cost Cellular Networks with OpenBTS: David Burgess, Co-Founder of The OpenBTS Project, describes how an open source release may have saved the project. PUBLISHER: The Open Source Business Resource is a monthly publication of the Talent First Network. Archives are available at the website: http://www.osbr.ca EDITOR: Dru Lavigne [email protected]

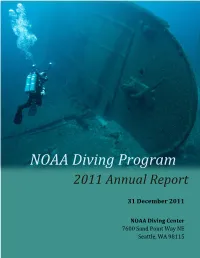

2011 NOAA Diving Program Annual Report

NOAA Diving Program 2011 Annual Report 31 December 2011 NOAA Diving Center 7600 Sand Point Way NE Seattle, WA 98115 Summary For over 40 years, NOAA divers have safely, efficiently, and cost-effectively collected data and performed tasks underwater in support of NOAA goals and objectives. Fiscal year 2011 was no exception. FY11 continued to be a year of change and transition for the NOAA Diving Program (NDP) with the implementation of new standards, policies and procedures designed to increase safety and ensure compliance with federal regulations. Nine new policies were implemented, a new Working Diving standards and safety manual was adopted, and a new Diving Unit Safety Assessment (DUSA) program was initiated. This year, when compared to FY 2010, the Program experienced a 9% (44) decrease in the number of divers, a 1% (130) decrease in the number of dives performed, and a 5% (471) decrease in the total hours of bottom time logged by NOAA divers. These data do not include dives conducted by reciprocity partners which would have significantly impacted the totals in each category. Of the total number of dives recorded (13,859), 71% (9,845) were classified as ‘scientific,’ 14% (1,971) were ‘working,’ and 26% (3,550) were ‘training or proficiency’ (see Chart 1). These data represent almost no change in the number of scientific dives, a 12% decrease in working dives compared to FY10, and a 9% decrease in training and proficiency dives. Should the proposed alternate diving standards, currently under review by OSHA, be approved, the Program may see an increase in the number of working dives performed each year due to the lessening of restrictions on Nitrox breathing mixtures and the ability to conduct working dives without a chamber on site.

Biomechanics of Safe Ascents Workshop

PROCEEDINGS OF BIOMECHANICS OF SAFE ASCENTS WORKSHOP, AMERICAN ACADEMY OF UNDERWATER SCIENCES, September 25 - 27, 1989, WHOI/MBL, Woods Hole, Massachusetts. Proceedings of the AAUS Biomechanics of Safe Ascents Workshop, Michael A. Lang and Glen H. Egstrom (Editors). Copyright © 1990 by AMERICAN ACADEMY OF UNDERWATER SCIENCES, 947 Newhall Street, Costa Mesa, CA 92627. All Rights Reserved. No part of this book may be reproduced in any form by photostat, microfilm, or any other means, without written permission from the publishers. Copies of these Proceedings can be purchased from AAUS at the above address. This workshop was sponsored in part by the National Oceanic and Atmospheric Administration (NOAA), Department of Commerce, under grant number 40AANR902932, through the Office of Undersea Research, and in part by the Diving Equipment Manufacturers Association (DEMA), and in part by the American Academy of Underwater Sciences (AAUS). The U.S. Government is authorized to produce and distribute reprints for governmental purposes notwithstanding the copyright notation that appears above. Opinions presented at the Workshop and in the Proceedings are those of the contributors, and do not necessarily reflect those of the American Academy of Underwater Sciences. An American Academy of Underwater Sciences Diving Safety Publication AAUSDSP-BSA-01-90. CONTENTS: Preface; About AAUS; Executive Summary; Acknowledgments; Session 1: Introductory Session; Welcoming address - Michael A.

RTI Data Distribution Service Platform Notes

RTI Data Distribution Service, The Real-Time Publish-Subscribe Middleware. Platform Notes, Version 4.5c. © 2004-2010 Real-Time Innovations, Inc. All rights reserved. Printed in U.S.A. First printing. June 2010. Trademarks: Real-Time Innovations and RTI are registered trademarks of Real-Time Innovations, Inc. All other trademarks used in this document are the property of their respective owners. Copy and Use Restrictions: No part of this publication may be reproduced, stored in a retrieval system, or transmitted in any form (including electronic, mechanical, photocopy, and facsimile) without the prior written permission of Real-Time Innovations, Inc. The software described in this document is furnished under and subject to the RTI software license agreement. The software may be used or copied only under the terms of the license agreement. Technical Support: Real-Time Innovations, Inc. 385 Moffett Park Drive Sunnyvale, CA 94089 Phone: (408) 990-7444 Email: [email protected] Website: http://www.rti.com/support Contents: 1 Supported Platforms; 2 AIX Platforms; 2.1 Changing Thread Priority; 2.2 Multicast Support

Research Purpose Operating Systems – a Wide Survey

GESJ: Computer Science and Telecommunications 2010|No.3(26) ISSN 1512-1232 RESEARCH PURPOSE OPERATING SYSTEMS – A WIDE SURVEY Pinaki Chakraborty School of Computer and Systems Sciences, Jawaharlal Nehru University, New Delhi – 110067, India. E-mail: [email protected] Abstract Operating systems constitute a class of vital software. A plethora of operating systems, of different types and developed by different manufacturers over the years, are available now. This paper concentrates on research purpose operating systems because many of them have high technological significance and they have been vividly documented in the research literature. Thirty-four academic and research purpose operating systems have been briefly reviewed in this paper. It was observed that the microkernel based architecture is being used widely to design research purpose operating systems. It was also noticed that object oriented operating systems are emerging as a promising option. Hence, the paper concludes by suggesting a study of the scope of microkernel based object oriented operating systems. Keywords: Operating system, research purpose operating system, object oriented operating system, microkernel 1. Introduction An operating system is a software that manages all the resources of a computer, both hardware and software, and provides an environment in which a user can execute programs in a convenient and efficient manner [1]. However, the principles and concepts used in the operating systems were not standardized in a day. In fact, operating systems have been evolving through the years [2]. There were no operating systems in the early computers. In those systems, every program required full hardware specification to execute correctly and perform each trivial task, and its own drivers for peripheral devices like card readers and line printers. -

Dive Medicine Aide-Memoire: Diving Physics (Lt(N) K Brett, reviewed by LCol A Grodecki)

Dive Medicine Aide-Memoire, Lt(N) K Brett, reviewed by LCol A Grodecki. Diving Physics • Air ~78% N2, ~21% O2, ~0.03% CO2 • Atmospheric pressure, absolute pressure, hydrostatic/gauge pressure. Hydrostatic/Gauge Pressure • Hydrostatic/gauge pressure (P) = ~1 atm for every 10 msw/33 fsw • Modification needed if diving at altitude • Atmospheric P (1 atm at 0 msw) • Absolute P = gauge P + atmospheric P • Water virtually incompressible – density remains ~same regardless of depth/pressure • Density salt water 1027 kg/m3 • Density fresh water 1000 kg/m3 • To calculate depth from gauge pressure, divide the pressure by 0.1027 (salt water) or 0.10000 (fresh water). Conversions • 1 bar = 100 kPa = 0.987 atm = ~14.5 psi • 10 msw = 1 bar = 0.987 atm • 33.07 fsw = 1 atm = 1.013 bar • Absolute P (ata) = gauge P + 1 atm • °F = (9/5 x °C) + 32 • °C = 5/9 (°F – 32) • °R (Rankine) = °F + 460 (absolute) • K (Kelvin) = °C + 273 (absolute). Laws & Principles • All calculations require absolute units (K, °R, ATA) • Charles’ Law V1/T1 = V2/T2 • Gay-Lussac’s Law P1/T1 = P2/T2 • Boyle’s Law P1V1 = P2V2 • General Gas Law (P1V1)/T1 = (P2V2)/T2. Henry’s Law • The amount of gas that will dissolve in a liquid is almost directly proportional to the partial press of that gas, & inversely proportional to absolute temp • Partial Pressure (pp) – pressure contributed by a single gas in a mix • To determine the partial pressure of a gas at any depth, multiply the press (ata) x % of that gas. Archimedes' Principle • Any object immersed in liquid is buoyed
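
The pressure and gas-law relations quoted in this excerpt can be illustrated with a short Python sketch. It is only a worked illustration of the stated rules of thumb (about 1 atm of gauge pressure per 10 msw, absolute pressure = gauge + atmospheric, partial pressure = absolute pressure x gas fraction, Boyle's Law); the function names and the sample depth are assumptions made for the example, not part of the aide-memoire.

```python
# Illustrative sketch of the dive-physics relations quoted in the excerpt.
# Function names and sample values are hypothetical, chosen for this example.

def absolute_pressure_ata(depth_msw, surface_atm=1.0):
    """Absolute P = gauge P + atmospheric P, using ~1 atm per 10 msw."""
    gauge_atm = depth_msw / 10.0
    return gauge_atm + surface_atm

def partial_pressure_ata(depth_msw, gas_fraction):
    """Partial pressure = absolute pressure (ata) x fraction of that gas."""
    return absolute_pressure_ata(depth_msw) * gas_fraction

def boyle_volume(v1, p1_ata, p2_ata):
    """Boyle's Law P1V1 = P2V2 (constant temperature), solved for V2."""
    return v1 * p1_ata / p2_ata

def celsius_to_kelvin(temp_c):
    """K = C + 273, the rounded offset used in the excerpt."""
    return temp_c + 273

if __name__ == "__main__":
    depth = 30  # metres of sea water, sample value
    p_abs = absolute_pressure_ata(depth)
    print("absolute pressure (ata):", p_abs)
    print("O2 partial pressure (ata):", partial_pressure_ata(depth, 0.21))
    print("6 L surface volume at depth (L):", boyle_volume(6.0, 1.0, p_abs))
```

The sketch works in absolute units throughout, as the excerpt requires for gas-law calculations; a dive at altitude would need a different `surface_atm` value.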

VxWorks OS Changer Gives Developers the Ability to Reuse VxWorks Applications on Different Operating Systems

VxWorks® OS Changer: Change Your OS - Keep Your Code. The OS Changer family of products gives developers the freedom to switch operating systems while leveraging their existing code and knowledge base to protect their software investment. VxWorks OS Changer gives developers the ability to reuse VxWorks applications on different operating systems. VxWorks OS Changer Highlights: Protect your software investment by re-using your VxWorks code. Reduce time to market by migrating VxWorks code to a standard OS interface architecture. Protect your knowledge base by using familiar APIs and eliminate the learning curve on the new OS platform. Eliminate dependency on a single OS vendor and switch to an OS that meets your performance and memory footprint needs, an OS that offers better tools, middleware/drivers and support, or an OS that supports your next generation silicon. Reduce ongoing development and maintenance cost: develop target-specific code on a host platform; re-use one set of code across multiple host & target OS platforms; break down VxWorks applications into manageable pieces to reduce complexity and add module protection; use the same APIs for inter-task and inter-process communications. OS Changer is highly optimized for each specific OS platform. An Eclipse-based host environment is available to port VxWorks applications using OS Changer in OS PAL (refer to the OS PAL datasheet). OS Changer includes access to the BASE OS Abstractor API features to allow development of highly portable applications (refer to the OS Abstractor datasheet). Additionally, POSIX or open source Linux code can be reused on a new OS platform with POSIX OS Abstractor (refer to the POSIX OS Abstractor datasheet). VxWorks OS Changer is offered royalty-free with source code. Using VxWorks OS Changer: OS Changer is designed for use as a C library.

Group Interaction in High Risk Environments 1st Edition

GROUP INTERACTION IN HIGH RISK ENVIRONMENTS 1ST EDITION, Rainer Dietrich, 9781351932097. Computational methods such as resampling and bootstrapping are also used when data are insufficient for direct statistical methods. There is little point in making highly precise computer calculations on numbers that cannot be estimated accurately. Categories: Underwater diving safety. Thus, the surface-supplied diver is much less likely to have an "out-of-air" emergency than a scuba diver as there are normally two alternative air sources available. Methods for dealing with risks include provision for adequate contingencies (safety factors); budget and schedule contingencies are discussed in Chapter 6. This genetic substudy included Two Sister Study participants and their parents, and some Sister Study participants who developed incident breast cancer (invasive or DCIS) before age 50 during the follow-up period. In terms of generalizability, our sample is predominantly non-Hispanic white women, and the results may not apply to other racial or ethnic groups. This third parameter may give some management support by establishing early warning indicators for specific serious risks, which might not otherwise have been established. ROVs may be used together with divers, or without a diver in the water, in which case the risk to the diver associated with the dive is eliminated altogether. Suitable equipment can be selected, personnel can be trained in its use and support provided to manage the foreseeable contingencies. However, taken together, there is the possibility that many of the estimates of these factors would prove to be too optimistic, leading.

AltaAPI Software User's Manual

AltaAPI™ Software User’s Manual Part Number: 14301-00000-I4 Cage Code: 4RK27 ● NAICS: 334118 Alta Data Technologies LLC 4901 Rockaway Blvd, Building A Rio Rancho, NM 87124 USA (tel) 505-994-3111 ● www.altadt.com CUSTOMER NOTES: Document Information: Alta Software Version: 2.6.5.0 Rev I4 Release Date: November 10, 2014 Note to the Reader and End-User: This document is provided for information only and is copyright by © Alta Data Technologies LLC. While Alta strives to provide the most accurate information, there may be errors and omissions in this document. Alta disclaims all liability in document errors and any product usage. By using an Alta product, the customer or end user agrees (1) to accept Alta’s Standard Terms and Conditions of Sale, Standard Warranty and Software License and (2) to not hold Alta Members, Employees, Contractors or Sales & Support Representatives responsible for any loss or legal liability, tangible or intangible, from any document errors or any product usage. The product described in this document is not US ITAR controlled. Use of Alta products or documentation in violation of local usage, waste discard and export control rules, or in violation of US ITAR regulations, voids product warranty and shall not be supported. This document may be distributed to support government programs and projects. Third party person, company or consultant distribution is not allowed without Alta’s written permission. AltaCore, AltaCore-1553, AltaCore-ARINC, AltaAPI, AltaAPI-LV, AltaView, AltaRTVal, ENET-1553, ENET-A429 & ENET-1553-EBR are Trademarks of Alta Data Technologies LLC, Rio Rancho, New Mexico USA Contact: We welcome comments and suggestions.