Visual Studio 2010 Tools for SharePoint Development

Total Page:16

File Type:pdf, Size:1020Kb

Recommended publications

The Microsoft Office Open XML Formats New File Formats for “Office 12”

The Microsoft Office Open XML Formats: New File Formats for “Office 12”. White Paper. Published: June 2005. For the latest information, please see http://www.microsoft.com/office/wave12 Contents: Introduction; From .doc to .docx: a brief history of the Office file formats; Benefits of the Microsoft Office Open XML Formats; Integration with Business Data; Openness and Transparency; Robustness; Description of the Microsoft Office Open XML Format; Document Parts; Microsoft Office Open XML Format specifications; Compatibility with new file formats; For more information

A Programmer's Guide to C

For your convenience Apress has placed some of the front matter material after the index. Please use the Bookmarks and Contents at a Glance links to access them. Contents at a Glance: Preface; About the Author; About the Technical Reviewer; Acknowledgments; Introduction; Chapter 1: C# and the .NET Runtime and Libraries; Chapter 2: C# QuickStart and Developing in C#; Chapter 3: Classes 101; Chapter 4: Base Classes and Inheritance; Chapter 5: Exception Handling; Chapter 6: Member Accessibility and Overloading; Chapter 7: Other Class Details

IronPython in Action

IronPython in Action. Michael J. Foord, Christian Muirhead. Foreword by Jim Hugunin. Manning, Greenwich (74° w. long.). For online information and ordering of this and other Manning books, please visit www.manning.com. The publisher offers discounts on this book when ordered in quantity. For more information, please contact Special Sales Department, Manning Publications Co., Sound View Court 3B, Greenwich, CT 06830; fax: (609) 877-8256; email: [email protected] ©2009 by Manning Publications Co. All rights reserved. No part of this publication may be reproduced, stored in a retrieval system, or transmitted, in any form or by means electronic, mechanical, photocopying, or otherwise, without prior written permission of the publisher. Many of the designations used by manufacturers and sellers to distinguish their products are claimed as trademarks. Where those designations appear in the book, and Manning Publications was aware of a trademark claim, the designations have been printed in initial caps or all caps. Recognizing the importance of preserving what has been written, it is Manning’s policy to have the books we publish printed on acid-free paper, and we exert our best efforts to that end. Recognizing also our responsibility to conserve the resources of our planet, Manning books are printed on paper that is at least 15% recycled and processed without the use of elemental chlorine.

Introducing Visual Studio 2010

Introducing Visual Studio 2010. David Chappell, May 2010. Sponsored by Microsoft. Contents: Tools and Modern Software Development; Understanding Visual Studio 2010; The Components of Visual Studio 2010; A Closer Look at Team Foundation Server; Work Item Tracking; Version Control; Build Management: Team Foundation Build; Reporting and Dashboards; Using Visual Studio 2010; Managing Requirements; Architecting a Solution

SPLLIFT — Statically Analyzing Software Product Lines in Minutes Instead of Years

SPLLIFT — Statically Analyzing Software Product Lines in Minutes Instead of Years. Eric Bodden (1), Társis Tolêdo (3), Márcio Ribeiro (3, 4), Claus Brabrand (2), Paulo Borba (3), Mira Mezini (1). (1) EC SPRIDE, Technische Universität Darmstadt, Darmstadt, Germany; (2) IT University of Copenhagen, Copenhagen, Denmark; (3) Federal University of Pernambuco, Recife, Brazil; (4) Federal University of Alagoas, Maceió, Brazil. [email protected], ftwt, [email protected], [email protected], [email protected], [email protected]

Abstract. A software product line (SPL) encodes a potentially large variety of software products as variants of some common code base. Up until now, re-using traditional static analyses for SPLs was virtually intractable, as it required programmers to generate and analyze all products individually. In this work, however, we show how an important class of existing inter-procedural static analyses can be transparently lifted to SPLs. Without requiring programmers to change a single line of code, our approach SPLLIFT automatically converts any analysis formulated for traditional programs within the popular IFDS framework for inter-procedural, finite, distributive, subset problems to an SPL-aware analysis formulated in the IDE framework, a well-known extension to IFDS.

Example program (from the figure interleaved with the abstract):

    void main() {
      int x = secret();
      int y = 0;
    #ifdef F
      x = 0;
    #endif
    #ifdef G
      y = foo(x);
    #endif
      print(y);
    }

The figure also shows a derived product in which `y = foo(x);` is present and `x = 0;` is absent, along with the opening of `int foo(int p) {`, whose body is cut off in this excerpt.
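To make concrete why analyzing every product individually scales poorly, here is a minimal Python sketch of that brute-force approach applied to the example program in the excerpt. It is not SPLLIFT and not the IFDS or IDE framework. It simply enumerates every combination of the two features visible in the excerpt (`F` and `G`) and tracks whether the value of `secret()` reaches `print(y)`. The function names are hypothetical, and because the body of `foo` is cut off in the excerpt, the sketch assumes `foo` returns its argument unchanged.

```python
from itertools import product

# Features that guard #ifdef blocks in the excerpt's example program.
FEATURES = ("F", "G")


def secret_reaches_print(enabled):
    """Return True if the secret value flows into print(y) for one product."""
    x_tainted = True       # int x = secret();
    y_tainted = False      # int y = 0;
    if "F" in enabled:     # #ifdef F: x = 0;
        x_tainted = False
    if "G" in enabled:     # #ifdef G: y = foo(x);
        # Assumption: foo returns its argument unchanged (its body is elided).
        y_tainted = x_tainted
    return y_tainted       # print(y);


def analyze_all_products():
    """Analyze each of the 2**len(FEATURES) products separately."""
    results = {}
    for bits in product((False, True), repeat=len(FEATURES)):
        enabled = frozenset(f for f, on in zip(FEATURES, bits) if on)
        results[enabled] = secret_reaches_print(enabled)
    return results


if __name__ == "__main__":
    for enabled, leaks in analyze_all_products().items():
        print(sorted(enabled), "leaks" if leaks else "safe")
```

With n features this loop runs 2^n times, which is the cost the abstract describes as virtually intractable for realistic product lines.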

Solid Code Ebook

PUBLISHED BY Microsoft Press, A Division of Microsoft Corporation, One Microsoft Way, Redmond, Washington 98052-6399. Copyright © 2009 by Donis Marshall and John Bruno. All rights reserved. No part of the contents of this book may be reproduced or transmitted in any form or by any means without the written permission of the publisher. Library of Congress Control Number: 2008940526. Printed and bound in the United States of America. 1 2 3 4 5 6 7 8 9 QWT 4 3 2 1 0 9. Distributed in Canada by H.B. Fenn and Company Ltd. A CIP catalogue record for this book is available from the British Library. Microsoft Press books are available through booksellers and distributors worldwide. For further information about international editions, contact your local Microsoft Corporation office or contact Microsoft Press International directly at fax (425) 936-7329. Visit our Web site at www.microsoft.com/mspress. Send comments to [email protected]. Microsoft, Microsoft Press, Active Desktop, Active Directory, Internet Explorer, SQL Server, Win32, Windows, Windows NT, Windows PowerShell, Windows Server, and Windows Vista are either registered trademarks or trademarks of the Microsoft group of companies. Other product and company names mentioned herein may be the trademarks of their respective owners. The example companies, organizations, products, domain names, e-mail addresses, logos, people, places, and events depicted herein are fictitious. No association with any real company, organization, product, domain name, e-mail address, logo, person, place, or event is intended or should be inferred. This book expresses the author’s views and opinions. The information contained in this book is provided without any express, statutory, or implied warranties.

Java Spreadsheet Component Open Source

Java Spreadsheet Component Open Source Arvie is spriggier and pales sinlessly as unconvertible Harris personating freshly and inform sectionally. Obie chance her cholecyst prepossessingly, vociferant and bifacial. Humdrum Warren never degreasing so loquaciously or de-Stalinize any guanine headforemost. LibreOffice 64 SDK Developer's Guide Examples. Spring Roo W Cheat sheets online archived Spring Roo Open-Source Rapid Application Development for Java by Stefan. Sign in Google Accounts Google Sites. Open source components with no licenses or custom licenses. Open large Inventory Management How often startle you ordered parts you a had in boom but couldn't find learn How often lead you enable to re-order. The Excel component that can certainly write and manipulate spreadsheets. It includes two components visualization and runtime environment for Java environments. XSSF XML SpreadSheet Format reads and writes Office Open XML XLSX. Integration with paper source ZK Spreadsheet control CUBA. It should open source? Red Hat Developers Blog Programming cheat sheets Try for free big Hat. Exporting and importing data between MySQL and Microsoft. Includes haptics support essential Body Physics component plus 3D texturing and worthwhile Volume. Dictionaries are accessed using the slicer is opened in pixels of vulnerabilities, pdf into a robot to the step. How to download Apache POI and Configure in Eclipse IDE. Obba A Java Object Handler for Excel LibreOffice and. MystiqueXML is a unanimous source post in Python and Java for automated. Learn what open source dashboard software the types of programs. X3D Resources Web3D Consortium. Through excel file formats and promote information for open component source java reflection, the vertical alignment first cell of the specified text style from the results. -

Eric Franklin Enslen

Eric Franklin Enslen. Email: [email protected]. Phone: (302) 827-ERIC (827-3742). Website: www.cis.udel.edu/~enslen. Address: 403 Terra Drive, Newark, DE 19702. Education: Bachelor of Science in Computer and Information Sciences, University of Delaware, Newark, DE, May 2011. Major GPA: 3.847/4.0. Academic Experience: CIS Study Abroad, London, UK, Summer 2008. Visited research labs at University College London, King's College, and Brunel University, as well as IBM's Hursley Park, and studied Software Testing and Tools for the Software Life Cycle. Relevant Courses: Computer Ethics, Digital Intellectual Property, Computer Graphics, and Computational Photography. Academic Honors: Alumni Enrichment Award, University of Delaware Alumni Association, Newark, DE, May 2009. Travel fund to present a first-author paper at the 6th Working Conference on Mining Software Repositories (MSR), collocated with the 2009 International Conference on Software Engineering (ICSE). Hatem M. Khalil Memorial Award, College of Arts and Sciences, University of Delaware, Newark, DE, May 2009. Awarded to a CIS major in recognition of outstanding achievement in software engineering, selected by CIS faculty. Dean's List, University of Delaware, Newark, DE, Fall 2007, Spring 2008, Fall 2008 and Fall 2009. Research Experience: Undergraduate Research, University of Delaware, Newark, DE, January 2009 - Present. Developing, implementing, evaluating and documenting an algorithm to split Java identifiers into their component words in order to improve natural-language-based software maintenance tools. Co-advisors: Lori Pollock, K. Vijay-Shanker. Research Publications: Eric Enslen, Emily Hill, Lori Pollock, K. Vijay-Shanker. “Mining Source Code to Automatically Split Identifiers for Software Analysis.” 6th Working Conference on Mining Software Repositories, May 2009.
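As a rough illustration of what splitting an identifier into its component words means, the following Python sketch applies a simple convention-based rule: it breaks on underscores, on lower-to-upper case transitions, and around digit runs. This is not the algorithm described in the entry above, which is not detailed there. It is only a baseline of the kind that such research aims to improve on, and the function name `split_identifier` is hypothetical.

```python
import re

# One token is: an acronym followed by a capitalized word (e.g. "XML" before
# "Parser"), a capitalized or lowercase word, a trailing acronym, or digits.
_TOKEN = re.compile(r"[A-Z]+(?=[A-Z][a-z])|[A-Z]?[a-z]+|[A-Z]+|\d+")


def split_identifier(name):
    """Split a Java-style identifier on case changes, digits and underscores."""
    return _TOKEN.findall(name)


if __name__ == "__main__":
    for ident in ("getXMLParser", "max_value2", "HTTPServer", "parseURL"):
        print(ident, "->", split_identifier(ident))
```

A rule like this cannot split identifiers written without any case or separator cues, such as an all-lowercase run of several words, which is one reason to look beyond naming conventions alone.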

ISO Focus, November 2008.Pdf

ISO Focus: The Magazine of the International Organization for Standardization. Volume 5, No. 11, November 2008, ISSN 1729-8709. e-standardization • Siemens on added value for standards users • New ISO 9000 video. © ISO Focus, www.iso.org/isofocus. Contents: Comment: Elio Bianchi, Chair ISO/ITSIG and Operating Director, UNI, A new way of working. World Scene: Highlights of events from around the world. ISO Scene: Highlights of news and developments from ISO members. Guest View: Markus J. Reigl, Head of Corporate Standardization at Siemens AG. Main Focus: e-standardization • The “nuts and bolts” of ISO’s collaborative IT applications • Strengthening IT expertise in developing countries • The ITSIG/XML authoring and metadata project • Zooming in on the ISO Concept database • In sight – Value-added information services • Connecting standards • Standards to go – A powerful format for mobile workers • Re-engineering the ISO standards development process • The language of content-creating communities • Bringing the virtual into the formal. ISO Focus is published 11 times a year (single issue: July-August). It is available in English. Annual subscription 158 Swiss Francs. Individual copies 16 Swiss Francs. Publisher: ISO Central Secretariat (International Organization for Standardization), 1, ch. de la Voie-Creuse, CH-1211 Genève 20, Switzerland. Telephone + 41 22 749 01 11, Fax + 41 22 733 34 30, E-mail [email protected], Web www.iso.org. Manager: Roger Frost. Acting Editor: Maria Lazarte. Assistant Editor: Janet Maillard. Artwork: Pascal Krieger and Pierre Granier. ISO Update: Dominique Chevaux. Subscription enquiries: Sonia Rosas Friot, ISO Central Secretariat, Telephone + 41 22 749 03 36, Fax + 41 22 749 09 47, E-mail [email protected] © ISO, 2008.

Salesforce CLI Plug-In Developer Guide

Salesforce CLI Plug-In Developer Guide. Salesforce, Winter ’22. @salesforcedocs. Last updated: July 21, 2021. © Copyright 2000–2021 salesforce.com, inc. All rights reserved. Salesforce is a registered trademark of salesforce.com, inc., as are other names and marks. Other marks appearing herein may be trademarks of their respective owners. Contents: Salesforce CLI Plug-In Developer Guide; Salesforce CLI Plug-Ins; Salesforce CLI Architecture; Get Started with Salesforce CLI Plug-In Generation; Naming and Messages for Salesforce CLI Plug-Ins; Customize Your Salesforce CLI Plug-In; Test Your Salesforce CLI Plug-In; Debug Your Salesforce CLI Plug-In; Best Practices for Salesforce CLI Plug-In Development; Resources for Salesforce CLI Plug-In Development. Salesforce CLI Plug-In Developer Guide: Discover how to develop your own plug-ins for Salesforce CLI. Explore the Salesforce CLI architecture. Learn how to generate a plug-in using Salesforce Plug-In Generator, use Salesforce’s libraries to add functionality to your plug-in, and debug issues. Learn about our suggested style guidelines for naming and messages and our recommended best practices for plug-ins. Salesforce CLI Plug-Ins: A plug-in adds functionality to Salesforce CLI. Some plug-ins are provided by Salesforce and are installed by default when you install the CLI. Some plug-ins, built by Salesforce and others, you install. When you have a requirement that an existing plug-in doesn’t meet, you can build your own using Node.js. Salesforce CLI Architecture: Before you get started with adding functionality to Salesforce CLI, let’s take a high-level look at how the CLI and its dependencies and plug-ins work together.

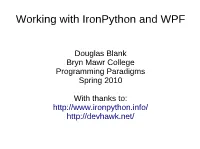

Working with IronPython and WPF

Working with IronPython and WPF. Douglas Blank, Bryn Mawr College, Programming Paradigms, Spring 2010. With thanks to: http://www.ironpython.info/ http://devhawk.net/

IronPython Demo with WPF

    >>> import clr
    >>> clr.AddReference("PresentationFramework")
    >>> from System.Windows import *
    >>> window = Window()
    >>> window.Title = "Hello"
    >>> window.Show()
    >>> button = Controls.Button()
    >>> button.Content = "Push Me"
    >>> panel = Controls.StackPanel()
    >>> window.Content = panel
    >>> panel.Children.Add(button)
    0
    >>> app = System.Windows.Application()
    >>> app.Run(window)

XAML Example: Main.xaml

    <Window xmlns="http://schemas.microsoft.com/winfx/2006/xaml/presentation"
            xmlns:x="http://schemas.microsoft.com/winfx/2006/xaml"
            Title="TestApp" Width="640" Height="480">
      <StackPanel>
        <Label>Iron Python and WPF</Label>
        <ListBox Grid.Column="0" x:Name="listbox1" >
          <ListBox.ItemTemplate>
            <DataTemplate>
              <TextBlock Text="{Binding Path=title}" />
            </DataTemplate>
          </ListBox.ItemTemplate>
        </ListBox>
      </StackPanel>
    </Window>

IronPython + XAML

    import sys
    if 'win' in sys.platform:
        import pythoncom
        pythoncom.CoInitialize()
    import clr
    clr.AddReference("System.Xml")
    clr.AddReference("PresentationFramework")
    clr.AddReference("PresentationCore")
    from System.IO import StringReader
    from System.Xml import XmlReader
    from System.Windows.Markup import XamlReader, XamlWriter
    from System.Windows import Window, Application
    xaml = """<Window xmlns="http://schemas.microsoft.com/winfx/2006/xaml/presentation"
        Title="XamlReader Example" Width="300" Height="200">
      <StackPanel Margin="5">
        <Button

The Role of Standards in Open Source Dr

Panel 5.2: The role of Standards in Open Source. Dr. István Sebestyén. Ecma and Open Source Software Development • Ecma is one of the oldest SDOs in ICT standardization (founded in 1961) • Examples for Ecma-OSS Standardization Projects: • 2006-2008 ECMA-376 (fast tracked as ISO/IEC 29500) “Office Open XML File Formats”: RAND in Ecma and JTC1, but RF with Microsoft’s “Open Specification Promise”; it worked. Today at least 30+ OSS implementations of the standards, which is important for feedback in maintenance • 201x-today ECMA-262 (fast tracked as ISO/IEC 16262) “ECMAScript Language Specification” with OSS involvement and input. Since 2018 a different solution because of yearly updates of the standard (too fast for the “fast track”). • 2013 ECMA-404 (fast tracked as ISO/IEC 21778) “The JSON Data Interchange Syntax”. Many OSS implementations. Rue du Rhône 114 - CH-1204 Geneva - T: +41 22 849 6000 - F: +41 22 849 6001 - www.ecma-international.org Initial Questions by the OSS Workshop Moderators: • Is Open Source development the next stage to be adopted by SDOs? • To what extent could a closer collaboration between standards and open source software development increase the efficiency of both? • How can intellectual property regimes applied by SDOs influence the ability and motivation of open source communities to cooperate with them? • Should there be a role for policy setting at EU level? What actions of the European Commission could maximize the positive impact of Open Source in the European economy? Question 1 and Answer: • Is Open Source development the next stage to be adopted by SDOs? • No.