High Performance Computing: Models, Methods, & Means Benchmarking

Total Page:16

File Type:pdf, Size:1020Kb

Load more

Recommended publications

-

Memory Centric Characterization and Analysis of SPEC CPU2017 Suite

Session 11: Performance Analysis and Simulation ICPE ’19, April 7–11, 2019, Mumbai, India Memory Centric Characterization and Analysis of SPEC CPU2017 Suite Sarabjeet Singh Manu Awasthi [email protected] [email protected] Ashoka University Ashoka University ABSTRACT These benchmarks have become the standard for any researcher or In this paper, we provide a comprehensive, memory-centric charac- commercial entity wishing to benchmark their architecture or for terization of the SPEC CPU2017 benchmark suite, using a number of exploring new designs. mechanisms including dynamic binary instrumentation, measure- The latest offering of SPEC CPU suite, SPEC CPU2017, was re- ments on native hardware using hardware performance counters leased in June 2017 [8]. SPEC CPU2017 retains a number of bench- and operating system based tools. marks from previous iterations but has also added many new ones We present a number of results including working set sizes, mem- to reflect the changing nature of applications. Some recent stud- ory capacity consumption and memory bandwidth utilization of ies [21, 24] have already started characterizing the behavior of various workloads. Our experiments reveal that, on the x86_64 ISA, SPEC CPU2017 applications, looking for potential optimizations to SPEC CPU2017 workloads execute a significant number of mem- system architectures. ory related instructions, with approximately 50% of all dynamic In recent years the memory hierarchy, from the caches, all the instructions requiring memory accesses. We also show that there is way to main memory, has become a first class citizen of computer a large variation in the memory footprint and bandwidth utilization system design. -

Microbenchmarks in Big Data

M Microbenchmark Overview Microbenchmarks constitute the first line of per- Nicolas Poggi formance testing. Through them, we can ensure Databricks Inc., Amsterdam, NL, BarcelonaTech the proper and timely functioning of the different (UPC), Barcelona, Spain individual components that make up our system. The term micro, of course, depends on the prob- lem size. In BigData we broaden the concept Synonyms to cover the testing of large-scale distributed systems and computing frameworks. This chap- Component benchmark; Functional benchmark; ter presents the historical background, evolution, Test central ideas, and current key applications of the field concerning BigData. Definition Historical Background A microbenchmark is either a program or routine to measure and test the performance of a single Origins and CPU-Oriented Benchmarks component or task. Microbenchmarks are used to Microbenchmarks are closer to both hardware measure simple and well-defined quantities such and software testing than to competitive bench- as elapsed time, rate of operations, bandwidth, marking, opposed to application-level – macro or latency. Typically, microbenchmarks were as- – benchmarking. For this reason, we can trace sociated with the testing of individual software microbenchmarking influence to the hardware subroutines or lower-level hardware components testing discipline as can be found in Sumne such as the CPU and for a short period of time. (1974). Furthermore, we can also find influence However, in the BigData scope, the term mi- in the origins of software testing methodology crobenchmarking is broadened to include the during the 1970s, including works such cluster – group of networked computers – acting as Chow (1978). One of the first examples of as a single system, as well as the testing of a microbenchmark clearly distinguishable from frameworks, algorithms, logical and distributed software testing is the Whetstone benchmark components, for a longer period and larger data developed during the late 1960s and published sizes. -

Overview of the SPEC Benchmarks

9 Overview of the SPEC Benchmarks Kaivalya M. Dixit IBM Corporation “The reputation of current benchmarketing claims regarding system performance is on par with the promises made by politicians during elections.” Standard Performance Evaluation Corporation (SPEC) was founded in October, 1988, by Apollo, Hewlett-Packard,MIPS Computer Systems and SUN Microsystems in cooperation with E. E. Times. SPEC is a nonprofit consortium of 22 major computer vendors whose common goals are “to provide the industry with a realistic yardstick to measure the performance of advanced computer systems” and to educate consumers about the performance of vendors’ products. SPEC creates, maintains, distributes, and endorses a standardized set of application-oriented programs to be used as benchmarks. 489 490 CHAPTER 9 Overview of the SPEC Benchmarks 9.1 Historical Perspective Traditional benchmarks have failed to characterize the system performance of modern computer systems. Some of those benchmarks measure component-level performance, and some of the measurements are routinely published as system performance. Historically, vendors have characterized the performances of their systems in a variety of confusing metrics. In part, the confusion is due to a lack of credible performance information, agreement, and leadership among competing vendors. Many vendors characterize system performance in millions of instructions per second (MIPS) and millions of floating-point operations per second (MFLOPS). All instructions, however, are not equal. Since CISC machine instructions usually accomplish a lot more than those of RISC machines, comparing the instructions of a CISC machine and a RISC machine is similar to comparing Latin and Greek. 9.1.1 Simple CPU Benchmarks Truth in benchmarking is an oxymoron because vendors use benchmarks for marketing purposes. -

Hypervisors Vs. Lightweight Virtualization: a Performance Comparison

2015 IEEE International Conference on Cloud Engineering Hypervisors vs. Lightweight Virtualization: a Performance Comparison Roberto Morabito, Jimmy Kjällman, and Miika Komu Ericsson Research, NomadicLab Jorvas, Finland [email protected], [email protected], [email protected] Abstract — Virtualization of operating systems provides a container and alternative solutions. The idea is to quantify the common way to run different services in the cloud. Recently, the level of overhead introduced by these platforms and the lightweight virtualization technologies claim to offer superior existing gap compared to a non-virtualized environment. performance. In this paper, we present a detailed performance The remainder of this paper is structured as follows: in comparison of traditional hypervisor based virtualization and Section II, literature review and a brief description of all the new lightweight solutions. In our measurements, we use several technologies and platforms evaluated is provided. The benchmarks tools in order to understand the strengths, methodology used to realize our performance comparison is weaknesses, and anomalies introduced by these different platforms in terms of processing, storage, memory and network. introduced in Section III. The benchmark results are presented Our results show that containers achieve generally better in Section IV. Finally, some concluding remarks and future performance when compared with traditional virtual machines work are provided in Section V. and other recent solutions. Albeit containers offer clearly more dense deployment of virtual machines, the performance II. BACKGROUND AND RELATED WORK difference with other technologies is in many cases relatively small. In this section, we provide an overview of the different technologies included in the performance comparison. -

Power Measurement Tutorial for the Green500 List

Power Measurement Tutorial for the Green500 List R. Ge, X. Feng, H. Pyla, K. Cameron, W. Feng June 27, 2007 Contents 1 The Metric for Energy-Efficiency Evaluation 1 2 How to Obtain P¯(Rmax)? 2 2.1 The Definition of P¯(Rmax)...................................... 2 2.2 Deriving P¯(Rmax) from Unit Power . 2 2.3 Measuring Unit Power . 3 3 The Measurement Procedure 3 3.1 Equipment Check List . 4 3.2 Software Installation . 4 3.3 Hardware Connection . 4 3.4 Power Measurement Procedure . 5 4 Appendix 6 4.1 Frequently Asked Questions . 6 4.2 Resources . 6 1 The Metric for Energy-Efficiency Evaluation This tutorial serves as a practical guide for measuring the computer system power that is required as part of a Green500 submission. It describes the basic procedures to be followed in order to measure the power consumption of a supercomputer. A supercomputer that appears on The TOP500 List can easily consume megawatts of electric power. This power consumption may lead to operating costs that exceed acquisition costs as well as intolerable system failure rates. In recent years, we have witnessed an increasingly stronger movement towards energy-efficient computing systems in academia, government, and industry. Thus, the purpose of the Green500 List is to provide a ranking of the most energy-efficient supercomputers in the world and serve as a complementary view to the TOP500 List. However, as pointed out in [1, 2], identifying a single objective metric for energy efficiency in supercom- puters is a difficult task. Based on [1, 2] and given the already existing use of the “performance per watt” metric, the Green500 List uses “performance per watt” (PPW) as its metric to rank the energy efficiency of supercomputers. -

3 — Arithmetic for Computers 2 MIPS Arithmetic Logic Unit (ALU) Zero Ovf

Chapter 3 Arithmetic for Computers 1 § 3.1Introduction Arithmetic for Computers Operations on integers Addition and subtraction Multiplication and division Dealing with overflow Floating-point real numbers Representation and operations Rechnerstrukturen 182.092 3 — Arithmetic for Computers 2 MIPS Arithmetic Logic Unit (ALU) zero ovf 1 Must support the Arithmetic/Logic 1 operations of the ISA A 32 add, addi, addiu, addu ALU result sub, subu 32 mult, multu, div, divu B 32 sqrt 4 and, andi, nor, or, ori, xor, xori m (operation) beq, bne, slt, slti, sltiu, sltu With special handling for sign extend – addi, addiu, slti, sltiu zero extend – andi, ori, xori overflow detection – add, addi, sub Rechnerstrukturen 182.092 3 — Arithmetic for Computers 3 (Simplyfied) 1-bit MIPS ALU AND, OR, ADD, SLT Rechnerstrukturen 182.092 3 — Arithmetic for Computers 4 Final 32-bit ALU Rechnerstrukturen 182.092 3 — Arithmetic for Computers 5 Performance issues Critical path of n-bit ripple-carry adder is n*CP CarryIn0 A0 1-bit result0 ALU B0 CarryOut0 CarryIn1 A1 1-bit result1 B ALU 1 CarryOut 1 CarryIn2 A2 1-bit result2 ALU B2 CarryOut CarryIn 2 3 A3 1-bit result3 ALU B3 CarryOut3 Design trick – throw hardware at it (Carry Lookahead) Rechnerstrukturen 182.092 3 — Arithmetic for Computers 6 Carry Lookahead Logic (4 bit adder) LS 283 Rechnerstrukturen 182.092 3 — Arithmetic for Computers 7 § 3.2 Addition and Subtraction 3.2 Integer Addition Example: 7 + 6 Overflow if result out of range Adding +ve and –ve operands, no overflow Adding two +ve operands -

The High Performance Linpack (HPL) Benchmark Evaluation on UTP High Performance Computing Cluster by Wong Chun Shiang 16138 Diss

The High Performance Linpack (HPL) Benchmark Evaluation on UTP High Performance Computing Cluster By Wong Chun Shiang 16138 Dissertation submitted in partial fulfilment of the requirements for the Bachelor of Technology (Hons) (Information and Communications Technology) MAY 2015 Universiti Teknologi PETRONAS Bandar Seri Iskandar 31750 Tronoh Perak Darul Ridzuan CERTIFICATION OF APPROVAL The High Performance Linpack (HPL) Benchmark Evaluation on UTP High Performance Computing Cluster by Wong Chun Shiang 16138 A project dissertation submitted to the Information & Communication Technology Programme Universiti Teknologi PETRONAS In partial fulfillment of the requirement for the BACHELOR OF TECHNOLOGY (Hons) (INFORMATION & COMMUNICATION TECHNOLOGY) Approved by, ____________________________ (DR. IZZATDIN ABDUL AZIZ) UNIVERSITI TEKNOLOGI PETRONAS TRONOH, PERAK MAY 2015 ii CERTIFICATION OF ORIGINALITY This is to certify that I am responsible for the work submitted in this project, that the original work is my own except as specified in the references and acknowledgements, and that the original work contained herein have not been undertaken or done by unspecified sources or persons. ________________ WONG CHUN SHIANG iii ABSTRACT UTP High Performance Computing Cluster (HPCC) is a collection of computing nodes using commercially available hardware interconnected within a network to communicate among the nodes. This campus wide cluster is used by researchers from internal UTP and external parties to compute intensive applications. However, the HPCC has never been benchmarked before. It is imperative to carry out a performance study to measure the true computing ability of this cluster. This project aims to test the performance of a campus wide computing cluster using a selected benchmarking tool, the High Performance Linkpack (HPL). -

A Study on the Evaluation of HPC Microservices in Containerized Environment

ReceivED XXXXXXXX; ReVISED XXXXXXXX; Accepted XXXXXXXX DOI: xxx/XXXX ARTICLE TYPE A Study ON THE Evaluation OF HPC Microservices IN Containerized EnVIRONMENT DeVKI Nandan Jha*1 | SaurABH Garg2 | Prem PrAKASH JaYARAMAN3 | Rajkumar Buyya4 | Zheng Li5 | GrAHAM Morgan1 | Rajiv Ranjan1 1School Of Computing, Newcastle UnivERSITY, UK Summary 2UnivERSITY OF Tasmania, Australia, 3Swinburne UnivERSITY OF TECHNOLOGY, AustrALIA Containers ARE GAINING POPULARITY OVER VIRTUAL MACHINES (VMs) AS THEY PROVIDE THE ADVANTAGES 4 The UnivERSITY OF Melbourne, AustrALIA OF VIRTUALIZATION WITH THE PERFORMANCE OF NEAR bare-metal. The UNIFORMITY OF SUPPORT PROVIDED 5UnivERSITY IN Concepción, Chile BY DockER CONTAINERS ACROSS DIFFERENT CLOUD PROVIDERS MAKES THEM A POPULAR CHOICE FOR DEVel- Correspondence opers. EvOLUTION OF MICROSERVICE ARCHITECTURE ALLOWS COMPLEX APPLICATIONS TO BE STRUCTURED INTO *DeVKI Nandan Jha Email: [email protected] INDEPENDENT MODULAR COMPONENTS MAKING THEM EASIER TO manage. High PERFORMANCE COMPUTING (HPC) APPLICATIONS ARE ONE SUCH APPLICATION TO BE DEPLOYED AS microservices, PLACING SIGNIfiCANT RESOURCE REQUIREMENTS ON THE CONTAINER FRamework. HoweVER, THERE IS A POSSIBILTY OF INTERFERENCE BETWEEN DIFFERENT MICROSERVICES HOSTED WITHIN THE SAME CONTAINER (intra-container) AND DIFFERENT CONTAINERS (inter-container) ON THE SAME PHYSICAL host. In THIS PAPER WE DESCRIBE AN Exten- SIVE EXPERIMENTAL INVESTIGATION TO DETERMINE THE PERFORMANCE EVALUATION OF DockER CONTAINERS EXECUTING HETEROGENEOUS HPC microservices. WE ARE PARTICULARLY CONCERNED WITH HOW INTRa- CONTAINER AND inter-container INTERFERENCE INflUENCES THE performance. MoreoVER, WE INVESTIGATE THE PERFORMANCE VARIATIONS IN DockER CONTAINERS WHEN CONTROL GROUPS (cgroups) ARE USED FOR RESOURCE limitation. FOR EASE OF PRESENTATION AND REPRODUCIBILITY, WE USE Cloud Evaluation Exper- IMENT Methodology (CEEM) TO CONDUCT OUR COMPREHENSIVE SET OF Experiments. -

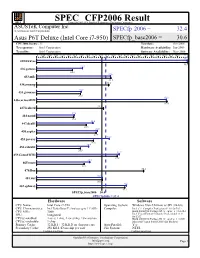

Asustek Computer Inc.: Asus P6T

SPEC CFP2006 Result spec Copyright 2006-2014 Standard Performance Evaluation Corporation ASUSTeK Computer Inc. (Test Sponsor: Intel Corporation) SPECfp 2006 = 32.4 Asus P6T Deluxe (Intel Core i7-950) SPECfp_base2006 = 30.6 CPU2006 license: 13 Test date: Oct-2008 Test sponsor: Intel Corporation Hardware Availability: Jun-2009 Tested by: Intel Corporation Software Availability: Nov-2008 0 3.00 6.00 9.00 12.0 15.0 18.0 21.0 24.0 27.0 30.0 33.0 36.0 39.0 42.0 45.0 48.0 51.0 54.0 57.0 60.0 63.0 66.0 71.0 70.7 410.bwaves 70.8 21.5 416.gamess 16.8 34.6 433.milc 34.8 434.zeusmp 33.8 20.9 435.gromacs 20.6 60.8 436.cactusADM 61.0 437.leslie3d 31.3 17.6 444.namd 17.4 26.4 447.dealII 23.3 28.5 450.soplex 27.9 34.0 453.povray 26.6 24.4 454.calculix 20.5 38.7 459.GemsFDTD 37.8 24.9 465.tonto 22.3 470.lbm 50.2 30.9 481.wrf 30.9 42.1 482.sphinx3 41.3 SPECfp_base2006 = 30.6 SPECfp2006 = 32.4 Hardware Software CPU Name: Intel Core i7-950 Operating System: Windows Vista Ultimate w/ SP1 (64-bit) CPU Characteristics: Intel Turbo Boost Technology up to 3.33 GHz Compiler: Intel C++ Compiler Professional 11.0 for IA32 CPU MHz: 3066 Build 20080930 Package ID: w_cproc_p_11.0.054 Intel Visual Fortran Compiler Professional 11.0 FPU: Integrated for IA32 CPU(s) enabled: 4 cores, 1 chip, 4 cores/chip, 2 threads/core Build 20080930 Package ID: w_cprof_p_11.0.054 CPU(s) orderable: 1 chip Microsoft Visual Studio 2008 (for libraries) Primary Cache: 32 KB I + 32 KB D on chip per core Auto Parallel: Yes Secondary Cache: 256 KB I+D on chip per core File System: NTFS Continued on next page Continued on next page Standard Performance Evaluation Corporation [email protected] Page 1 http://www.spec.org/ SPEC CFP2006 Result spec Copyright 2006-2014 Standard Performance Evaluation Corporation ASUSTeK Computer Inc. -

Fair Benchmarking for Cloud Computing Systems

Fair Benchmarking for Cloud Computing Systems Authors: Lee Gillam Bin Li John O’Loughlin Anuz Pranap Singh Tomar March 2012 Contents 1 Introduction .............................................................................................................................................................. 3 2 Background .............................................................................................................................................................. 4 3 Previous work ........................................................................................................................................................... 5 3.1 Literature ........................................................................................................................................................... 5 3.2 Related Resources ............................................................................................................................................. 6 4 Preparation ............................................................................................................................................................... 9 4.1 Cloud Providers................................................................................................................................................. 9 4.2 Cloud APIs ...................................................................................................................................................... 10 4.3 Benchmark selection ...................................................................................................................................... -

Modeling and Analyzing CPU Power and Performance: Metrics, Methods, and Abstractions

Modeling and Analyzing CPU Power and Performance: Metrics, Methods, and Abstractions Margaret Martonosi David Brooks Pradip Bose VET NOV TES TAM EN TVM DE I VI GE T SV B NV M I NE Moore’s Law & Power Dissipation... Moore’s Law: ❚ The Good News: 2X Transistor counts every 18 months ❚ The Bad News: To get the performance improvements we’re accustomed to, CPU Power consumption will increase exponentially too... (Graphs courtesy of Fred Pollack, Intel) Why worry about power dissipation? Battery life Thermal issues: affect cooling, packaging, reliability, timing Environment Hitting the wall… ❚ Battery technology ❚ ❙ Linear improvements, nowhere Past: near the exponential power ❙ Power important for increases we’ve seen laptops, cell phones ❚ Cooling techniques ❚ Present: ❙ Air-cooled is reaching limits ❙ Power a Critical, Universal ❙ Fans often undesirable (noise, design constraint even for weight, expense) very high-end chips ❙ $1 per chip per Watt when ❚ operating in the >40W realm Circuits and process scaling can no longer solve all power ❙ Water-cooled ?!? problems. ❚ Environment ❙ SYSTEMS must also be ❙ US EPA: 10% of current electricity usage in US is directly due to power-aware desktop computers ❙ Architecture, OS, compilers ❙ Increasing fast. And doesn’t count embedded systems, Printers, UPS backup? Power: The Basics ❚ Dynamic power vs. Static power vs. short-circuit power ❙ “switching” power ❙ “leakage” power ❙ Dynamic power dominates, but static power increasing in importance ❙ Trends in each ❚ Static power: steady, per-cycle energy cost ❚ Dynamic power: power dissipation due to capacitance charging at transitions from 0->1 and 1->0 ❚ Short-circuit power: power due to brief short-circuit current during transitions. -

Continuous Profiling: Where Have All the Cycles Gone?

Continuous Profiling: Where Have All the Cycles Gone? JENNIFER M. ANDERSON, LANCE M. BERC, JEFFREY DEAN, SANJAY GHEMAWAT, MONIKA R. HENZINGER, SHUN-TAK A. LEUNG, RICHARD L. SITES, MARK T. VANDEVOORDE, CARL A. WALDSPURGER, and WILLIAM E. WEIHL Digital Equipment Corporation This article describes the Digital Continuous Profiling Infrastructure, a sampling-based profiling system designed to run continuously on production systems. The system supports multiprocessors, works on unmodified executables, and collects profiles for entire systems, including user programs, shared libraries, and the operating system kernel. Samples are collected at a high rate (over 5200 samples/sec. per 333MHz processor), yet with low overhead (1–3% slowdown for most workloads). Analysis tools supplied with the profiling system use the sample data to produce a precise and accurate accounting, down to the level of pipeline stalls incurred by individual instructions, of where time is being spent. When instructions incur stalls, the tools identify possible reasons, such as cache misses, branch mispredictions, and functional unit contention. The fine-grained instruction-level analysis guides users and automated optimizers to the causes of performance problems and provides important insights for fixing them. Categories and Subject Descriptors: C.4 [Computer Systems Organization]: Performance of Systems; D.2.2 [Software Engineering]: Tools and Techniques—profiling tools; D.2.6 [Pro- gramming Languages]: Programming Environments—performance monitoring; D.4 [Oper- ating Systems]: General; D.4.7 [Operating Systems]: Organization and Design; D.4.8 [Operating Systems]: Performance General Terms: Performance Additional Key Words and Phrases: Profiling, performance understanding, program analysis, performance-monitoring hardware An earlier version of this article appeared at the 16th ACM Symposium on Operating System Principles (SOSP), St.